1. Overview of the pandas library and the importance of data preparation

1.1. pandas: a Python library for data analysis

- Fast and efficient work with indexed table objects (DataFrame);

- Reading and writing data between in-memory structures and files in various formats: CSV, text files, Microsoft Excel, SQL databases, HDF5;

- Handling of missing and unformatted data;

- Reshaping, rearranging, and restructuring data;

- Slicing by index and column name, and subsetting large data sets.

The main data structures in pandas are the Series and DataFrame classes.

A Series is a one-dimensional indexed array of data of a single type.

A DataFrame is a two-dimensional data structure, a table in which each column holds data of a single type.
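As a minimal sketch of the two structures, the snippet below builds a Series and a DataFrame by hand. The names and values (ages, people, the three labels) are made up for illustration and do not come from the workshop data.

```python
import pandas as pd

# A Series: one-dimensional, indexed, a single dtype for all values
ages = pd.Series([25, 31, 47], index=["ann", "bob", "eve"], name="age")

# A DataFrame: a table whose columns each have their own dtype
people = pd.DataFrame(
    {"age": [25, 31, 47], "city": ["Moscow", "London", "Moscow"]},
    index=["ann", "bob", "eve"],
)

print(ages.dtype)            # dtype of the Series
print(people.dtypes)         # one dtype per column
print(type(people["age"]))   # selecting a single column returns a Series
```

Selecting one column of a DataFrame returns a Series, which is why the two classes share most of their methods.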

Data loaded with pandas is most often stored as tables. Popular table formats:

- .csv = Comma Separated Values, .tsv = Tab Separated Values,

- Microsoft Excel: .xls, .xlsx,

- HDF5,

- SQL databases.

Today we will cover reading data from CSV, filtering, and basic preparation of data for analysis.

1.2. Features in data analysis. Categorical and numeric features

The most common data analysis task is supervised learning. There is a set of objects and a set of possible answers. Some dependency exists between the answers and the objects, but it is unknown. Only a finite collection of precedents is known: "object, answer" pairs, called the training set. From this data, the dependency must be recovered, that is, an algorithm must be built that can produce a sufficiently accurate answer for any object. (Based on machinelearning.ru.)

A frequent type of input data is a feature description (an object-feature matrix). Each object is described by a set of its characteristics, called features. Features can be numeric or non-numeric.

Numeric features are characteristics of the data expressed as numbers: age, quantity, part size, price, and so on. Most often such features need no special processing.

Categorical features define membership in some category. Examples: sex, city, season, group number, product category, and so on. To process such features automatically, the original category values (for example, "M", "F" or "Moscow", "London") often need to be converted to numeric form.
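A minimal sketch of two common ways to convert categorical values to numbers in pandas: integer codes and one-hot encoding. The toy table and its column names are hypothetical, and which encoding suits a given model is a separate decision not covered here.

```python
import pandas as pd

# Hypothetical toy data: one numeric and two categorical features
df_cat = pd.DataFrame({
    "age": [23, 35, 41, 29],
    "sex": ["M", "F", "F", "M"],
    "city": ["Moscow", "London", "Moscow", "London"],
})

# Option 1: integer codes (categories are sorted, so F -> 0, M -> 1)
df_cat["sex_code"] = df_cat["sex"].astype("category").cat.codes

# Option 2: one-hot encoding, one indicator column per category
encoded = pd.get_dummies(df_cat, columns=["city"])

print(encoded)
```

Integer codes imply an order between categories, so one-hot encoding is often the safer choice for features such as city, where no order exists.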

More on encoding categorical features: https://habr.com/ru/company/ods/blog/326418/ http://datareview.info/article/universalnyj-podxod-pochti-k-lyuboj-zadache-mashinnogo-obucheniya/

Text features are sometimes singled out as well. Examples: full name, comment, description. Such features may turn out to be irrelevant to the task, in which case they can be dropped; otherwise they too are processed and encoded.

Preparing data for analysis involves many steps: cleaning and correcting invalid data, selecting and encoding features, transforming data, removing features and creating new ones, and so on.

A detailed classification of data preparation procedures: http://www.machinelearning.ru/wiki/images/9/91/PZAD2017_07_datapreprocessing.pdf

# Import the pandas module under its commonly used alias "pd"

import pandas as pd

# Load an Excel file with pandas as an example

excel_file = 'mov.xls'

df = pd.read_excel(excel_file)

# Review the top rows of the data

df.head()

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 127 Hours | 2010 | Adventure\|Biography\|Drama\|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |
| 1 | 3 Backyards | 2010 | Drama | English | USA | R | 88.0 | NaN | 300000.0 | NaN | ... | 795 | 659 | 301 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5.2 |
| 2 | 3 | 2010 | Comedy\|Drama\|Romance | German | Germany | Unrated | 119.0 | 2.35 | NaN | 59774.0 | ... | 24 | 20 | 9 | 69 | 2000 | 0.0 | 4212 | 18.0 | 76.0 | 6.8 |
| 3 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |
| 4 | A Turtle's Tale: Sammy's Adventures | 2010 | Adventure\|Animation\|Family | English | France | PG | 88.0 | 2.35 | NaN | NaN | ... | 783 | 749 | 602 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6.1 |

5 rows × 25 columns

2. Tidy Data

2.1. Principles of tidy data

“Data cleaning is one of the most frequent task in data science. No matter what kind of data you are dealing with or what kind of analysis you are performing, you will have to clean the data at some point.” -- Jean-Nicholas Hould http://www.jeannicholashould.com/tidy-data-in-python.html

"Tidy data" is a term and a set of rules for organizing data in statistical studies, from the article Hadley Wickham, Tidy Data, Vol. 59, Issue 10, Sep 2014, Journal of Statistical Software.

- Variable: a measurement of some attribute. Height, weight, sex, day of the week, size... A variable contains all the values that measure the same attribute across all objects.

- Value: the specific result of measuring an attribute. 152 cm, 80 kg, M/F, XXL. Each value belongs to a variable and an observation.

- Observation: contains all the values of the attributes measured on the same object (such as a person, a day, or a movie).

Three principles of tidy data [http://vita.had.co.nz/papers/tidy-data.pdf]

- Each variable forms a column and holds values.

- Each observation forms a row.

- Each type of observational unit (experiment) forms a table. That is, each fact is expressed in only one place. Example: a student's scores in different subjects and the student's own details should be in separate tables, with no duplicates or discrepancies.

- (When there are several tables, they should include columns that link them: https://en.wikipedia.org/wiki/Tidy_data)
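The last two points can be sketched with two small tables joined through a linking column. The tables, the student_id column, and the scores are hypothetical and serve only to illustrate the idea.

```python
import pandas as pd

# Hypothetical tables: student details are stored once...
students = pd.DataFrame({
    "student_id": [1, 2],
    "name": ["Sally", "Jeff"],
})

# ...and scores refer to them through the linking column student_id
scores = pd.DataFrame({
    "student_id": [1, 1, 2],
    "subject": ["math", "physics", "math"],
    "score": [90, 85, 70],
})

# Join the two tables on the linking column when a combined view is needed
combined = scores.merge(students, on="student_id", how="left")
print(combined)
```

Keeping the name in one place means a correction to a student's details is made once rather than in every score row.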

Illustration of the tidy data principles from https://r4ds.had.co.nz/tidy-data.html

2.2. Common problems with messy data

Problems with how data is organized within a single table:

- Column headers are values, not variable names

- Multiple variables are stored in one column

- Variables are stored in both rows and columns

Problems with how a group of tables is organized:

- Multiple types of observations are stored in the same table

- The same type of observation is stored in multiple tables

This master class focuses on organizing data within a single table.

Example: column headers are values, not variable names

From the Tidy Data article

In this table, the header spreads income across several columns.

| religion | 10k-20k | 20k-30k |

|---|---|---|

| Agnostic | 27 | 34 |

| Atheist | 12 | 25 |

| Buddhist | 20 | 27 |

The variables in this data set are religion, income (a categorical feature), and the count (frequency) of people with that income and religion. To tidy this table, income must become a variable column, and its values, which used to be column headers, must go into rows.

| religion | income | frequency |

|---|---|---|

| Agnostic | 10k-20k | 27 |

| Agnostic | 20k-30k | 34 |

| Atheist | 10k-20k | 12 |

| Atheist | 20k-30k | 25 |

| Buddhist | 10k-20k | 20 |

| Buddhist | 20k-30k | 27 |
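One way to perform this transformation in pandas is DataFrame.melt. The sketch below rebuilds the small messy table by hand; the variable names messy and tidy are illustrative.

```python
import pandas as pd

# The messy table: income brackets are spread across column headers
messy = pd.DataFrame({
    "religion": ["Agnostic", "Atheist", "Buddhist"],
    "10k-20k": [27, 12, 20],
    "20k-30k": [34, 25, 27],
})

# Turn the income headers into values of a new "income" column
tidy = (
    messy.melt(id_vars="religion", var_name="income", value_name="frequency")
         .sort_values(["religion", "income"])
         .reset_index(drop=True)
)
print(tidy)
```

The sort is only there to reproduce the row order of the tidy table above; melt itself lists all rows of the first income column before the second.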

Multiple variables are stored in one column

Example source: tutorial

The key column changes the meaning of the variable held in the neighboring column: the value column contains either the number of cases or the total population, depending on the key. These need to be split into separate columns.

| country | year | key | value |

|---|---|---|---|

| Afghanistan | 1999 | cases | 745 |

| Afghanistan | 1999 | population | 19987071 |

| Afghanistan | 2000 | cases | 2666 |

| Afghanistan | 2000 | population | 20595360 |

| Brazil | 1999 | cases | 37737 |

| Brazil | 1999 | population | 172006362 |

| Brazil | 2000 | cases | 80488 |

| Brazil | 2000 | population | 174504898 |

In such a table it is inconvenient to compute means, maximums, or minimums over the cases and population values. A tidy and more convenient layout is to create separate cases and population columns.

| country | year | cases | population |

|---|---|---|---|

| Afghanistan | 1999 | 745 | 19987071 |

| Afghanistan | 2000 | 2666 | 20595360 |

| Brazil | 1999 | 37737 | 172006362 |

| Brazil | 2000 | 80488 | 174504898 |
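A possible pandas sketch of this step uses DataFrame.pivot. It assumes pandas 1.1 or later, where pivot accepts a list of index columns; long_df and tidy are illustrative names.

```python
import pandas as pd

# The key/value layout from the table above
long_df = pd.DataFrame({
    "country": ["Afghanistan"] * 4 + ["Brazil"] * 4,
    "year": [1999, 1999, 2000, 2000] * 2,
    "key": ["cases", "population"] * 4,
    "value": [745, 19987071, 2666, 20595360,
              37737, 172006362, 80488, 174504898],
})

# Spread the values of "key" into separate columns
tidy = (
    long_df.pivot(index=["country", "year"], columns="key", values="value")
           .reset_index()
)
tidy.columns.name = None  # drop the leftover "key" label on the column axis

print(tidy)
print(tidy["cases"].mean())  # per-variable statistics are now a single call
```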

Variables are stored in both rows and columns

Example 1. An illustration of data that is not fully tidy, from http://garrettgman.github.io/tidying/

Example 2. Based on https://habr.com/ru/post/248741/

Several students took different classes during a term and sat tests in the middle of the term (midterm) and at the end (final). There is a table of the students' grades for all the tests they took.

| name | test | class1 | class2 | class3 |

|---|---|---|---|---|

| Sally | midterm | A | NA | NA |

| Sally | final | C | NA | NA |

| Jeff | midterm | NA | D | A |

| Jeff | final | NA | A | C |

| Roger | midterm | NA | NA | A |

| Roger | final | NA | NA | C |

The columns class1, class2, class3 hold values of a single variable, class. The values of the test column (midterm, final), in turn, should be variables that hold each student's test result.

Let us create a new variable class with values 1-3 and spread the values of the test column into the variables final-score and midterm-score.

| name | class | final-score | midterm-score |

|---|---|---|---|

| Sally | 1 | C | A |

| Jeff | 2 | A | D |

| Jeff | 3 | C | A |

| Roger | 3 | C | A |
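The two steps can be sketched in pandas as a melt followed by a pivot. This is one possible approach, assuming pandas 1.1 or later for the list-valued pivot index; the intermediate names are illustrative, and the rows come out sorted by name rather than in the order of the table above.

```python
import pandas as pd

grades = pd.DataFrame({
    "name": ["Sally", "Sally", "Jeff", "Jeff", "Roger", "Roger"],
    "test": ["midterm", "final"] * 3,
    "class1": ["A", "C", None, None, None, None],
    "class2": [None, None, "D", "A", None, None],
    "class3": [None, None, "A", "C", "A", "C"],
})

# Step 1: the class1..class3 headers become values of one variable, "class"
long_grades = grades.melt(
    id_vars=["name", "test"], var_name="class", value_name="score"
).dropna(subset=["score"])
long_grades["class"] = long_grades["class"].str.replace("class", "").astype(int)

# Step 2: the values of "test" become separate columns
tidy = (
    long_grades.pivot(index=["name", "class"], columns="test", values="score")
               .add_suffix("-score")
               .reset_index()
)
tidy.columns.name = None

print(tidy)
```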

3. Загрузка файлов CSV через Excel и pandas¶

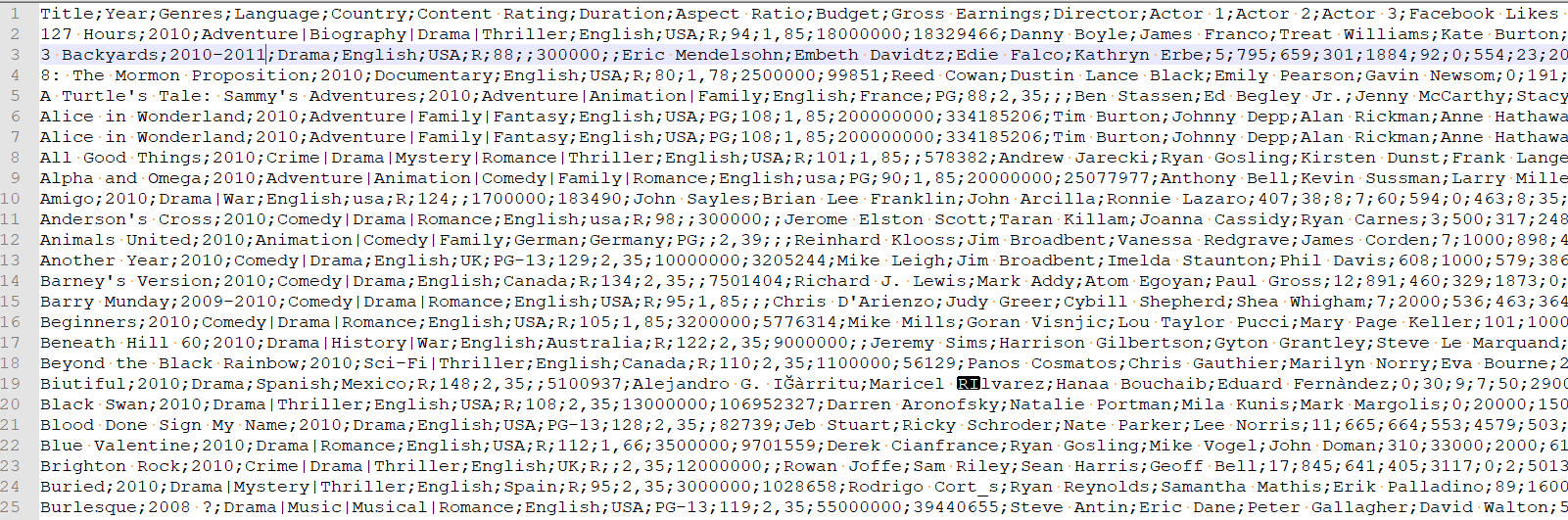

3.1. Просмотр "сырого" файла CSV¶

3.2. Удобный просмотр файла CSV с помощью Excel¶

Метод получения Excel таблицы из CSV - это команда в меню Data > Text to Columns. В диалоге перевода данных в Excel много параметров (тип разделения, разделитель, что делать с дополнительными пробельными символами и т. д.). Результат - читаемая таблица.

3.3. Загрузка CSV через методы pandas. Настройка параметров загрузки¶

# Read data from the file 'winequality-red.csv', located in the same directory as the Python script

# Plain read with no extra parameters

wine_filename = "winequality-red.csv"

data = pd.read_csv(wine_filename)

# Check the top rows of the loaded data: the data is there, but the table does not look right

data.head()

| | This is a dataset of red wine quality with about 10 parameters |
|---|---|
| 0 | fixed acidity;"volatile acidity";"citric acid"... |
| 1 | 7.4;0.7;0;1.9;0.076;11;34;0.9978;3.51;0.56;9.4;5 |
| 2 | 7.8;0.88;0;2.6;0.098;25;67;0.9968;3.2;0.68;9.8;5 |
| 3 | 7.8;0.76;0.04;2.3;0.092;15;54;0.997;3.26;0.65;... |
| 4 | 11.2;0.28;0.56;1.9;0.075;17;60;0.998;3.16;0.58... |

# Load a CSV file from the web (by URL)

# May not work behind a proxy

url_csv = 'https://vincentarelbundock.github.io/Rdatasets/csv/boot/amis.csv'

df2 = pd.read_csv(url_csv)

df2.head()

| | Unnamed: 0 | speed | period | warning | pair |
|---|---|---|---|---|---|
| 0 | 1 | 26 | 1 | 1 | 1 |
| 1 | 2 | 26 | 1 | 1 | 1 |
| 2 | 3 | 26 | 1 | 1 | 1 |
| 3 | 4 | 26 | 1 | 1 | 1 |
| 4 | 5 | 27 | 1 | 1 | 1 |

help(pd.read_csv)

Help on function read_csv in module pandas.io.parsers:

read_csv(filepath_or_buffer, sep=',', delimiter=None, header='infer', names=None, index_col=None, usecols=None, squeeze=False, prefix=None, mangle_dupe_cols=True, dtype=None, engine=None, converters=None, true_values=None, false_values=None, skipinitialspace=False, skiprows=None, nrows=None, na_values=None, keep_default_na=True, na_filter=True, verbose=False, skip_blank_lines=True, parse_dates=False, infer_datetime_format=False, keep_date_col=False, date_parser=None, dayfirst=False, iterator=False, chunksize=None, compression='infer', thousands=None, decimal=b'.', lineterminator=None, quotechar='"', quoting=0, escapechar=None, comment=None, encoding=None, dialect=None, tupleize_cols=None, error_bad_lines=True, warn_bad_lines=True, skipfooter=0, doublequote=True, delim_whitespace=False, low_memory=True, memory_map=False, float_precision=None)

Read CSV (comma-separated) file into DataFrame

Also supports optionally iterating or breaking of the file

into chunks.

Additional help can be found in the `online docs for IO Tools

<http://pandas.pydata.org/pandas-docs/stable/io.html>`_.

Parameters

----------

filepath_or_buffer : str, pathlib.Path, py._path.local.LocalPath or any \

object with a read() method (such as a file handle or StringIO)

The string could be a URL. Valid URL schemes include http, ftp, s3, and

file. For file URLs, a host is expected. For instance, a local file could

be file://localhost/path/to/table.csv

sep : str, default ','

Delimiter to use. If sep is None, the C engine cannot automatically detect

the separator, but the Python parsing engine can, meaning the latter will

be used and automatically detect the separator by Python's builtin sniffer

tool, ``csv.Sniffer``. In addition, separators longer than 1 character and

different from ``'\s+'`` will be interpreted as regular expressions and

will also force the use of the Python parsing engine. Note that regex

delimiters are prone to ignoring quoted data. Regex example: ``'\r\t'``

delimiter : str, default ``None``

Alternative argument name for sep.

delim_whitespace : boolean, default False

Specifies whether or not whitespace (e.g. ``' '`` or ``'\t'``) will be

used as the sep. Equivalent to setting ``sep='\s+'``. If this option

is set to True, nothing should be passed in for the ``delimiter``

parameter.

.. versionadded:: 0.18.1 support for the Python parser.

header : int or list of ints, default 'infer'

Row number(s) to use as the column names, and the start of the

data. Default behavior is to infer the column names: if no names

are passed the behavior is identical to ``header=0`` and column

names are inferred from the first line of the file, if column

names are passed explicitly then the behavior is identical to

``header=None``. Explicitly pass ``header=0`` to be able to

replace existing names. The header can be a list of integers that

specify row locations for a multi-index on the columns

e.g. [0,1,3]. Intervening rows that are not specified will be

skipped (e.g. 2 in this example is skipped). Note that this

parameter ignores commented lines and empty lines if

``skip_blank_lines=True``, so header=0 denotes the first line of

data rather than the first line of the file.

names : array-like, default None

List of column names to use. If file contains no header row, then you

should explicitly pass header=None. Duplicates in this list will cause

a ``UserWarning`` to be issued.

index_col : int or sequence or False, default None

Column to use as the row labels of the DataFrame. If a sequence is given, a

MultiIndex is used. If you have a malformed file with delimiters at the end

of each line, you might consider index_col=False to force pandas to _not_

use the first column as the index (row names)

usecols : list-like or callable, default None

Return a subset of the columns. If list-like, all elements must either

be positional (i.e. integer indices into the document columns) or strings

that correspond to column names provided either by the user in `names` or

inferred from the document header row(s). For example, a valid list-like

`usecols` parameter would be [0, 1, 2] or ['foo', 'bar', 'baz']. Element

order is ignored, so ``usecols=[0, 1]`` is the same as ``[1, 0]``.

To instantiate a DataFrame from ``data`` with element order preserved use

``pd.read_csv(data, usecols=['foo', 'bar'])[['foo', 'bar']]`` for columns

in ``['foo', 'bar']`` order or

``pd.read_csv(data, usecols=['foo', 'bar'])[['bar', 'foo']]``

for ``['bar', 'foo']`` order.

If callable, the callable function will be evaluated against the column

names, returning names where the callable function evaluates to True. An

example of a valid callable argument would be ``lambda x: x.upper() in

['AAA', 'BBB', 'DDD']``. Using this parameter results in much faster

parsing time and lower memory usage.

squeeze : boolean, default False

If the parsed data only contains one column then return a Series

prefix : str, default None

Prefix to add to column numbers when no header, e.g. 'X' for X0, X1, ...

mangle_dupe_cols : boolean, default True

Duplicate columns will be specified as 'X', 'X.1', ...'X.N', rather than

'X'...'X'. Passing in False will cause data to be overwritten if there

are duplicate names in the columns.

dtype : Type name or dict of column -> type, default None

Data type for data or columns. E.g. {'a': np.float64, 'b': np.int32}

Use `str` or `object` together with suitable `na_values` settings

to preserve and not interpret dtype.

If converters are specified, they will be applied INSTEAD

of dtype conversion.

engine : {'c', 'python'}, optional

Parser engine to use. The C engine is faster while the python engine is

currently more feature-complete.

converters : dict, default None

Dict of functions for converting values in certain columns. Keys can either

be integers or column labels

true_values : list, default None

Values to consider as True

false_values : list, default None

Values to consider as False

skipinitialspace : boolean, default False

Skip spaces after delimiter.

skiprows : list-like or integer or callable, default None

Line numbers to skip (0-indexed) or number of lines to skip (int)

at the start of the file.

If callable, the callable function will be evaluated against the row

indices, returning True if the row should be skipped and False otherwise.

An example of a valid callable argument would be ``lambda x: x in [0, 2]``.

skipfooter : int, default 0

Number of lines at bottom of file to skip (Unsupported with engine='c')

nrows : int, default None

Number of rows of file to read. Useful for reading pieces of large files

na_values : scalar, str, list-like, or dict, default None

Additional strings to recognize as NA/NaN. If dict passed, specific

per-column NA values. By default the following values are interpreted as

NaN: '', '#N/A', '#N/A N/A', '#NA', '-1.#IND', '-1.#QNAN', '-NaN', '-nan',

'1.#IND', '1.#QNAN', 'N/A', 'NA', 'NULL', 'NaN', 'n/a', 'nan',

'null'.

keep_default_na : bool, default True

Whether or not to include the default NaN values when parsing the data.

Depending on whether `na_values` is passed in, the behavior is as follows:

* If `keep_default_na` is True, and `na_values` are specified, `na_values`

is appended to the default NaN values used for parsing.

* If `keep_default_na` is True, and `na_values` are not specified, only

the default NaN values are used for parsing.

* If `keep_default_na` is False, and `na_values` are specified, only

the NaN values specified `na_values` are used for parsing.

* If `keep_default_na` is False, and `na_values` are not specified, no

strings will be parsed as NaN.

Note that if `na_filter` is passed in as False, the `keep_default_na` and

`na_values` parameters will be ignored.

na_filter : boolean, default True

Detect missing value markers (empty strings and the value of na_values). In

data without any NAs, passing na_filter=False can improve the performance

of reading a large file

verbose : boolean, default False

Indicate number of NA values placed in non-numeric columns

skip_blank_lines : boolean, default True

If True, skip over blank lines rather than interpreting as NaN values

parse_dates : boolean or list of ints or names or list of lists or dict, default False

* boolean. If True -> try parsing the index.

* list of ints or names. e.g. If [1, 2, 3] -> try parsing columns 1, 2, 3

each as a separate date column.

* list of lists. e.g. If [[1, 3]] -> combine columns 1 and 3 and parse as

a single date column.

* dict, e.g. {'foo' : [1, 3]} -> parse columns 1, 3 as date and call result

'foo'

If a column or index contains an unparseable date, the entire column or

index will be returned unaltered as an object data type. For non-standard

datetime parsing, use ``pd.to_datetime`` after ``pd.read_csv``

Note: A fast-path exists for iso8601-formatted dates.

infer_datetime_format : boolean, default False

If True and `parse_dates` is enabled, pandas will attempt to infer the

format of the datetime strings in the columns, and if it can be inferred,

switch to a faster method of parsing them. In some cases this can increase

the parsing speed by 5-10x.

keep_date_col : boolean, default False

If True and `parse_dates` specifies combining multiple columns then

keep the original columns.

date_parser : function, default None

Function to use for converting a sequence of string columns to an array of

datetime instances. The default uses ``dateutil.parser.parser`` to do the

conversion. Pandas will try to call `date_parser` in three different ways,

advancing to the next if an exception occurs: 1) Pass one or more arrays

(as defined by `parse_dates`) as arguments; 2) concatenate (row-wise) the

string values from the columns defined by `parse_dates` into a single array

and pass that; and 3) call `date_parser` once for each row using one or

more strings (corresponding to the columns defined by `parse_dates`) as

arguments.

dayfirst : boolean, default False

DD/MM format dates, international and European format

iterator : boolean, default False

Return TextFileReader object for iteration or getting chunks with

``get_chunk()``.

chunksize : int, default None

Return TextFileReader object for iteration.

See the `IO Tools docs

<http://pandas.pydata.org/pandas-docs/stable/io.html#io-chunking>`_

for more information on ``iterator`` and ``chunksize``.

compression : {'infer', 'gzip', 'bz2', 'zip', 'xz', None}, default 'infer'

For on-the-fly decompression of on-disk data. If 'infer' and

`filepath_or_buffer` is path-like, then detect compression from the

following extensions: '.gz', '.bz2', '.zip', or '.xz' (otherwise no

decompression). If using 'zip', the ZIP file must contain only one data

file to be read in. Set to None for no decompression.

.. versionadded:: 0.18.1 support for 'zip' and 'xz' compression.

thousands : str, default None

Thousands separator

decimal : str, default '.'

Character to recognize as decimal point (e.g. use ',' for European data).

float_precision : string, default None

Specifies which converter the C engine should use for floating-point

values. The options are `None` for the ordinary converter,

`high` for the high-precision converter, and `round_trip` for the

round-trip converter.

lineterminator : str (length 1), default None

Character to break file into lines. Only valid with C parser.

quotechar : str (length 1), optional

The character used to denote the start and end of a quoted item. Quoted

items can include the delimiter and it will be ignored.

quoting : int or csv.QUOTE_* instance, default 0

Control field quoting behavior per ``csv.QUOTE_*`` constants. Use one of

QUOTE_MINIMAL (0), QUOTE_ALL (1), QUOTE_NONNUMERIC (2) or QUOTE_NONE (3).

doublequote : boolean, default ``True``

When quotechar is specified and quoting is not ``QUOTE_NONE``, indicate

whether or not to interpret two consecutive quotechar elements INSIDE a

field as a single ``quotechar`` element.

escapechar : str (length 1), default None

One-character string used to escape delimiter when quoting is QUOTE_NONE.

comment : str, default None

Indicates remainder of line should not be parsed. If found at the beginning

of a line, the line will be ignored altogether. This parameter must be a

single character. Like empty lines (as long as ``skip_blank_lines=True``),

fully commented lines are ignored by the parameter `header` but not by

`skiprows`. For example, if ``comment='#'``, parsing

``#empty\na,b,c\n1,2,3`` with ``header=0`` will result in 'a,b,c' being

treated as the header.

encoding : str, default None

Encoding to use for UTF when reading/writing (ex. 'utf-8'). `List of Python

standard encodings

<https://docs.python.org/3/library/codecs.html#standard-encodings>`_

dialect : str or csv.Dialect instance, default None

If provided, this parameter will override values (default or not) for the

following parameters: `delimiter`, `doublequote`, `escapechar`,

`skipinitialspace`, `quotechar`, and `quoting`. If it is necessary to

override values, a ParserWarning will be issued. See csv.Dialect

documentation for more details.

tupleize_cols : boolean, default False

.. deprecated:: 0.21.0

This argument will be removed and will always convert to MultiIndex

Leave a list of tuples on columns as is (default is to convert to

a MultiIndex on the columns)

error_bad_lines : boolean, default True

Lines with too many fields (e.g. a csv line with too many commas) will by

default cause an exception to be raised, and no DataFrame will be returned.

If False, then these "bad lines" will dropped from the DataFrame that is

returned.

warn_bad_lines : boolean, default True

If error_bad_lines is False, and warn_bad_lines is True, a warning for each

"bad line" will be output.

low_memory : boolean, default True

Internally process the file in chunks, resulting in lower memory use

while parsing, but possibly mixed type inference. To ensure no mixed

types either set False, or specify the type with the `dtype` parameter.

Note that the entire file is read into a single DataFrame regardless,

use the `chunksize` or `iterator` parameter to return the data in chunks.

(Only valid with C parser)

memory_map : boolean, default False

If a filepath is provided for `filepath_or_buffer`, map the file object

directly onto memory and access the data directly from there. Using this

option can improve performance because there is no longer any I/O overhead.

Returns

-------

result : DataFrame or TextParser

# Basic parameters for loading data from CSV

# delimiter: the value separator used in the CSV file

data = pd.read_csv(wine_filename, delimiter=';')

data.head()

| | | | | | | | | | | | This is a dataset of red wine quality with about 10 parameters |
|---|---|---|---|---|---|---|---|---|---|---|---|
| fixed acidity | volatile acidity | citric acid | residual sugar | chlorides | free sulfur dioxide | total sulfur dioxide | density | pH | sulphates | alcohol | quality |
| 7.4 | 0.7 | 0 | 1.9 | 0.076 | 11 | 34 | 0.9978 | 3.51 | 0.56 | 9.4 | 5 |
| 7.8 | 0.88 | 0 | 2.6 | 0.098 | 25 | 67 | 0.9968 | 3.2 | 0.68 | 9.8 | 5 |
| | 0.76 | 0.04 | 2.3 | 0.092 | 15 | 54 | 0.997 | 3.26 | 0.65 | 9.8 | 5 |
| 11.2 | 0.28 | 0.56 | 1.9 | 0.075 | 17 | 60 | 0.998 | 3.16 | 0.58 | 9.8 | 6 |

# The file has a short description in the first row followed by an empty row; skip both with the skiprows parameter

data = pd.read_csv(wine_filename, delimiter=';', skiprows=2)

data.head()

| | fixed acidity | volatile acidity | citric acid | residual sugar | chlorides | free sulfur dioxide | total sulfur dioxide | density | pH | sulphates | alcohol | quality |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | 7.4 | 0.70 | 0.00 | 1.9 | 0.076 | 11.0 | 34.0 | 0.9978 | 3.51 | 0.56 | 9.4 | 5 |
| 1 | 7.8 | 0.88 | 0.00 | 2.6 | 0.098 | 25.0 | 67.0 | 0.9968 | 3.20 | 0.68 | 9.8 | 5 |
| 2 | 7.8 | 0.76 | 0.04 | 2.3 | 0.092 | 15.0 | 54.0 | 0.9970 | 3.26 | 0.65 | 9.8 | 5 |
| 3 | 11.2 | 0.28 | 0.56 | 1.9 | 0.075 | 17.0 | 60.0 | 0.9980 | 3.16 | 0.58 | 9.8 | 6 |
| 4 | 7.4 | 0.70 | 0.00 | 1.9 | 0.076 | 11.0 | 34.0 | 0.9978 | 3.51 | 0.56 | 9.4 | 5 |

# Choose the columns of interest with the usecols parameter, given as a list of names or positions:

# usecols=['col_name1', 'col_name2'] or usecols=[1, 2, 5]

data = pd.read_csv(wine_filename, delimiter=';',

                   skiprows=2, usecols=['residual sugar', 'density', 'alcohol', 'quality'])

data.head()

| | residual sugar | density | alcohol | quality |
|---|---|---|---|---|
| 0 | 1.9 | 0.9978 | 9.4 | 5 |
| 1 | 2.6 | 0.9968 | 9.8 | 5 |
| 2 | 2.3 | 0.9970 | 9.8 | 5 |
| 3 | 1.9 | 0.9980 | 9.8 | 6 |
| 4 | 1.9 | 0.9978 | 9.4 | 5 |
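The same combination of delimiter, skiprows, and usecols can be tried without the wine file by reading from an in-memory string. The text below is a hypothetical stand-in that only mimics the file's layout (a description line, an empty line, then semicolon-separated data); it is not the real data set.

```python
import io
import pandas as pd

# Hypothetical stand-in for the file: description line, empty line, then data
raw = (
    "This is a toy dataset\n"
    "\n"
    'fixed acidity;"residual sugar";"density";"quality"\n'
    "7.4;1.9;0.9978;5\n"
    "7.8;2.6;0.9968;5\n"
)

sample = pd.read_csv(
    io.StringIO(raw),          # any object with a read() method works as input
    delimiter=";",             # values are separated by semicolons
    skiprows=2,                # skip the description line and the empty line
    usecols=["residual sugar", "quality"],  # keep only two columns
)
print(sample)
```

Reading from io.StringIO is a convenient way to experiment with read_csv parameters on a few lines before applying them to a large file.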

3.5. Data overview: head(), tail(), shape, columns, info(), describe()

# Check the end of the data (the last rows)

print("data.tail()")

print(data.tail())

# Get the dimensions of the data table

print()

print("data.shape")

print(data.shape)

# Print the list of columns in the data

print()

print("data.columns")

print(data.columns)

# Print basic information about the data set

# Note: info() prints its report itself and returns None, so wrapping it in print() also outputs "None"

print()

print("data.info()")

print(data.info())

data.tail()

residual sugar density alcohol quality

1594 2.0 0.99490 10.5 5

1595 2.2 0.99512 11.2 6

1596 2.3 0.99574 11.0 6

1597 2.0 0.99547 10.2 5

1598 3.6 0.99549 11.0 6

data.shape

(1599, 4)

data.columns

Index(['residual sugar', 'density', 'alcohol', 'quality'], dtype='object')

data.info()

<class 'pandas.core.frame.DataFrame'>

RangeIndex: 1599 entries, 0 to 1598

Data columns (total 4 columns):

residual sugar 1599 non-null float64

density 1599 non-null float64

alcohol 1599 non-null float64

quality 1599 non-null int64

dtypes: float64(3), int64(1)

memory usage: 50.0 KB

None

The describe() method shows basic statistics for each numeric feature (int64 and float64 types): the number of non-missing values, the mean, the standard deviation, the range (minimum and maximum), the median, and the 0.25 and 0.75 quartiles.

data.describe()

| | residual sugar | density | alcohol | quality |
|---|---|---|---|---|
| count | 1599.000000 | 1599.000000 | 1599.000000 | 1599.000000 |
| mean | 2.538806 | 0.996747 | 10.422983 | 5.636023 |
| std | 1.409928 | 0.001887 | 1.065668 | 0.807569 |
| min | 0.900000 | 0.990070 | 8.400000 | 3.000000 |
| 25% | 1.900000 | 0.995600 | 9.500000 | 5.000000 |
| 50% | 2.200000 | 0.996750 | 10.200000 | 6.000000 |
| 75% | 2.600000 | 0.997835 | 11.100000 | 6.000000 |
| max | 15.500000 | 1.003690 | 14.900000 | 8.000000 |
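The result of describe() is itself a DataFrame, so individual statistics can be read by label. A minimal sketch on a made-up table (the values and the label column are hypothetical):

```python
import pandas as pd

toy = pd.DataFrame({
    "alcohol": [9.4, 9.8, 9.8, 11.0],
    "quality": [5, 5, 6, 6],
    "label": ["a", "b", "c", "d"],   # non-numeric, skipped by default
})

stats = toy.describe()
print(stats)

# Individual statistics are addressed by row and column label
print(stats.loc["50%", "alcohol"])   # the median of the alcohol column
```

The "50%" row is the median, which is why it matches toy["alcohol"].median().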

4. Поиск некорректных данных с pandas¶

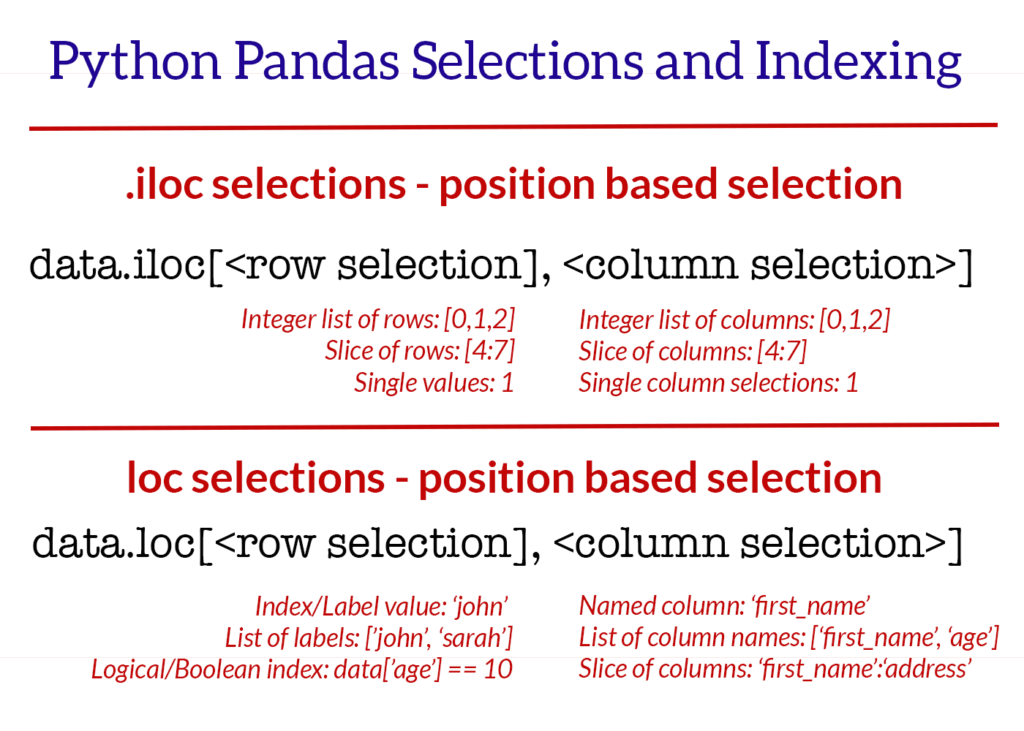

4.1 Просмотр данных, чтение строк и столбцов c методами loc, iloc¶

По материалу https://www.shanelynn.ie/select-pandas-dataframe-rows-and-columns-using-iloc-loc-and-ix/

# Selection of data by row or column position (iloc)

# Print the row at position 3

row3 = df.iloc[3]

print("row3 type")

print(type(row3))

print()

print("row3 contents:")

print(row3)

# Create a sub-DataFrame from a list of row positions and a slice of columns

print()

sub_df1 = df.iloc[[1, 2, 4], 0:2]

print(type(sub_df1))

print(sub_df1)

row3 type

<class 'pandas.core.series.Series'>

row3 contents:

Title 8: The Mormon Proposition

Year 2010

Genres Documentary

Language English

Country USA

Content Rating R

Duration 80

Aspect Ratio 1.78

Budget 2.5e+06

Gross Earnings 99851

Director Reed Cowan

Actor 1 Dustin Lance Black

Actor 2 Emily Pearson

Actor 3 Gavin Newsom

Facebook Likes - Director 0

Facebook Likes - Actor 1 191

Facebook Likes - Actor 2 12

Facebook Likes - Actor 3 5

Facebook Likes - cast Total 210

Facebook likes - Movie 0

Facenumber in posters 0

User Votes 1138

Reviews by Users 30

Reviews by Crtiics 28

IMDB Score 7.1

Name: 3, dtype: object

<class 'pandas.core.frame.DataFrame'>

Title Year

1 3 Backyards 2010

2 3 2010

4 A Turtle's Tale: Sammy's Adventures 2010

# Selecting data by label with loc[rows, columns]

# Example: all rows (:) and two columns given as a list of names

sub_frame1 = df.loc[:, ['Language', 'Country']]

print(sub_frame1.head())

print()

# In this DataFrame the row index is just numbers; rows don't have names

# Example: rows 0, 1, 2 and a range of columns (loc slices include the end label)

sub_frame2 = df.loc[0:2, 'Language':'Budget']

print(sub_frame2.head())

Language Country

0 English USA

1 English USA

2 German Germany

3 English USA

4 English France

Language Country Content Rating Duration Aspect Ratio Budget

0 English USA R 94.0 1.85 18000000.0

1 English USA R 88.0 NaN 300000.0

2 German Germany Unrated 119.0 2.35 NaN
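The difference between position-based and label-based selection is easiest to see when the index is not the default 0, 1, 2, ... The following is a minimal sketch on a small made-up frame (the `films` data and its index values are assumptions for illustration, not taken from the movie dataset).

```python
import pandas as pd

# Toy frame (hypothetical data) with a non-default integer index,
# so that labels and positions differ.
films = pd.DataFrame(
    {"Title": ["A", "B", "C", "D"], "Year": [2010, 2010, 2011, 2008]},
    index=[10, 20, 30, 40],
)

# iloc works with positions; the end of the slice (2) is excluded.
by_position = films.iloc[0:2]

# loc works with labels; the end label (30) is included.
by_label = films.loc[10:30]

print(by_position)
print(by_label)
```

With the default integer index used in the movie table, labels and positions coincide, which is why `df.loc[0:2, ...]` returned three rows while an `iloc` slice `0:2` would return two.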

4.2. Finding missing data¶

In different kinds of tables, missing data can appear as:

- np.nan, a special value from the numpy library (np),

- empty values,

- unsuitable values: whitespace characters, junk.

By default, pandas represents almost all missing data as np.nan.
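Empty strings and whitespace-only strings are not treated as missing by pandas automatically. One possible way to bring them to np.nan is sketched below on a made-up frame; the `"n/a"` marker is an assumed example of a "junk" value, not something found in the movie dataset.

```python
import numpy as np
import pandas as pd

# Hypothetical raw data with several kinds of "missing" values
raw = pd.DataFrame(
    {
        "Country": ["USA", "", "  ", "n/a"],
        "Budget": [1.0, np.nan, 3.0, 4.0],
    }
)

# Replace empty / whitespace-only strings, then the assumed "n/a" marker
cleaned = raw.replace(r"^\s*$", np.nan, regex=True).replace("n/a", np.nan)

# Count missing values per column after normalization
missing_per_column = cleaned.isna().sum()
print(missing_per_column)
```

After this step, `isna()` sees all three kinds of gaps in the same way.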

Exercise: using loc, iloc, and common sense, fix the content rating of the film whose value is "Unrated"¶

# Example in our small movie dataset: one content rating is not set and some values are NaN:

# Content Rating = Unrated and Aspect Ratio = NaN

# How to fix "Unrated":

# the content rating should be assigned according to

# https://en.wikipedia.org/wiki/Motion_Picture_Association_of_America_film_rating_system

# Deciding how to fix "Unrated" is up to the data specialist.

df.head()

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 127 Hours | 2010 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |

| 1 | 3 Backyards | 2010 | Drama | English | USA | R | 88.0 | NaN | 300000.0 | NaN | ... | 795 | 659 | 301 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5.2 |

| 2 | 3 | 2010 | Comedy|Drama|Romance | German | Germany | Unrated | 119.0 | 2.35 | NaN | 59774.0 | ... | 24 | 20 | 9 | 69 | 2000 | 0.0 | 4212 | 18.0 | 76.0 | 6.8 |

| 3 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |

| 4 | A Turtle's Tale: Sammy's Adventures | 2010 | Adventure|Animation|Family | English | France | PG | 88.0 | 2.35 | NaN | NaN | ... | 783 | 749 | 602 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6.1 |

5 rows × 25 columns
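As a hint for the exercise above, here is a minimal sketch of how a value can be overwritten with loc and a boolean mask. It uses a made-up two-row frame, and the replacement value `"R"` is an arbitrary placeholder, not a statement about any real film's rating.

```python
import pandas as pd

# Hypothetical mini-table; titles and ratings are made up
films = pd.DataFrame(
    {"Title": ["Film A", "Film B"], "Content Rating": ["R", "Unrated"]}
)

# Boolean mask that marks the rows to be fixed
is_unrated = films["Content Rating"] == "Unrated"

# Placeholder value chosen only for illustration;
# the real value is a decision for the data specialist.
chosen_rating = "R"

# loc[row_mask, column_label] assigns in the original DataFrame
films.loc[is_unrated, "Content Rating"] = chosen_rating

print(films)
```

The same assignment can be written by position, for example `films.iloc[1, 1] = chosen_rating`, when the row and column numbers are known.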

na_mask = sub_frame2.isna()

print(na_mask)

print()

# Example: count the NaN values in one column of the full table

budget_col = df.loc[:, 'Budget']

budget_col_na = budget_col.isna()

print('Budget column in whole movies dataset has this count of NaN values:')

print(budget_col_na.sum())

Language Country Content Rating Duration Aspect Ratio Budget

0 False False False False False False

1 False False False False True False

2 False False False False False True

Budget column in whole movies dataset has this count of NaN values:

11
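The same mask can be summarized for every column at once. The sketch below uses a small frame whose values mirror the first three rows shown above; the variable names are illustrative.

```python
import numpy as np
import pandas as pd

films = pd.DataFrame(
    {
        "Duration": [94.0, 88.0, 119.0],
        "Aspect Ratio": [1.85, np.nan, 2.35],
        "Budget": [18000000.0, 300000.0, np.nan],
    }
)

# Number of NaN values in each column (True counts as 1)
na_counts = films.isna().sum()

# Fraction of NaN values in each column
na_share = films.isna().mean()

# Rows that contain at least one NaN
rows_with_na = films[films.isna().any(axis=1)]

print(na_counts)
print(na_share)
print(rows_with_na)
```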

4.3. Incorrect data¶

Data can be present in a table and still have problems:

- a wrong format,

- values that do not fit the feature's set of categories,

- duplicates,

- inaccurate information or errors,

- and others :)

# Example 1 in mov.xls: rows 8 and 9 have Country = "usa" instead of "USA"

# Example 2 in mov.xls: row 7 has Year = 2010-2011, which looks incorrect

# Example 3 in mov.xls: rows 5 and 6 are the same movie listed twice

df.head(10)

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 127 Hours | 2010 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |

| 1 | 3 Backyards | 2010 | Drama | English | USA | R | 88.0 | NaN | 300000.0 | NaN | ... | 795 | 659 | 301 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5.2 |

| 2 | 3 | 2010 | Comedy|Drama|Romance | German | Germany | Unrated | 119.0 | 2.35 | NaN | 59774.0 | ... | 24 | 20 | 9 | 69 | 2000 | 0.0 | 4212 | 18.0 | 76.0 | 6.8 |

| 3 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |

| 4 | A Turtle's Tale: Sammy's Adventures | 2010 | Adventure|Animation|Family | English | France | PG | 88.0 | 2.35 | NaN | NaN | ... | 783 | 749 | 602 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6.1 |

| 5 | Alice in Wonderland | 2010 | Adventure|Family|Fantasy | English | USA | PG | 108.0 | 1.85 | 200000000.0 | 334185206.0 | ... | 40000 | 25000 | 11000 | 79957 | 24000 | 0.0 | 306320 | 736.0 | 451.0 | 6.5 |

| 6 | Alice in Wonderland | 2010 | Adventure|Family|Fantasy | English | USA | PG | 108.0 | 1.85 | 200000000.0 | 334185206.0 | ... | 40000 | 25000 | 11000 | 79957 | 24000 | 0.0 | 306336 | 736.0 | 451.0 | 6.5 |

| 7 | All Good Things | 2010-2011 | Crime|Drama|Mystery|Romance|Thriller | English | USA | R | 101.0 | 1.85 | NaN | 578382.0 | ... | 33000 | 4000 | 902 | 39515 | 0 | 2.0 | 41249 | 67.0 | 140.0 | 6.3 |

| 8 | Alpha and Omega | 2010 | Adventure|Animation|Comedy|Family|Romance | English | usa | PG | 90.0 | 1.85 | 20000000.0 | 25077977.0 | ... | 681 | 611 | 518 | 2486 | 0 | 0.0 | 10986 | 84.0 | 84.0 | 5.3 |

| 9 | Amigo | 2010 | Drama|War | English | usa | R | 124.0 | NaN | 1700000.0 | 183490.0 | ... | 38 | 8 | 7 | 60 | 594 | 0.0 | 463 | 8.0 | 35.0 | 5.8 |

10 rows × 25 columns
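Rows 5 and 6 in the table above describe the same film but differ in User Votes, so an exact row comparison would not flag them. A minimal sketch of finding such duplicates by key columns is shown below; treating Title and Year as the key is an assumption made for this example.

```python
import pandas as pd

films = pd.DataFrame(
    {
        "Title": ["Alice in Wonderland", "Alice in Wonderland", "Amigo"],
        "Year": [2010, 2010, 2010],
        "User Votes": [306320, 306336, 463],
    }
)

# Compares all columns: the two "Alice" rows differ in User Votes
exact_dupes = films.duplicated()

# Compares only the assumed key columns
key_dupes = films.duplicated(subset=["Title", "Year"])

# Keep the first occurrence of each (Title, Year) pair
deduplicated = films.drop_duplicates(subset=["Title", "Year"], keep="first")

print(key_dupes)
print(deduplicated)
```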

# Drop rows that contain missing data

df_without_na = df.dropna(how='any')

df_without_na.head()

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 127 Hours | 2010 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |

| 3 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |

| 5 | Alice in Wonderland | 2010 | Adventure|Family|Fantasy | English | USA | PG | 108.0 | 1.85 | 200000000.0 | 334185206.0 | ... | 40000 | 25000 | 11000 | 79957 | 24000 | 0.0 | 306320 | 736.0 | 451.0 | 6.5 |

| 6 | Alice in Wonderland | 2010 | Adventure|Family|Fantasy | English | USA | PG | 108.0 | 1.85 | 200000000.0 | 334185206.0 | ... | 40000 | 25000 | 11000 | 79957 | 24000 | 0.0 | 306336 | 736.0 | 451.0 | 6.5 |

| 8 | Alpha and Omega | 2010 | Adventure|Animation|Comedy|Family|Romance | English | usa | PG | 90.0 | 1.85 | 20000000.0 | 25077977.0 | ... | 681 | 611 | 518 | 2486 | 0 | 0.0 | 10986 | 84.0 | 84.0 | 5.3 |

5 rows × 25 columns
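Dropping every row with any NaN can discard a lot of data. A hedged sketch of more selective variants of dropna follows; the frame is made up, and which columns are required is an assumption that depends on the analysis.

```python
import numpy as np
import pandas as pd

films = pd.DataFrame(
    {
        "Title": ["A", "B", "C"],
        "Aspect Ratio": [1.85, np.nan, 2.35],
        "Budget": [18000000.0, 300000.0, np.nan],
    }
)

# Same call as in the cell above: drop rows with at least one NaN
any_na_dropped = films.dropna(how="any")

# Drop rows only when the Budget value is missing
budget_known = films.dropna(subset=["Budget"])

# Keep rows that have at least 2 non-NaN values
at_least_two = films.dropna(thresh=2)

print(len(any_na_dropped), len(budget_known), len(at_least_two))
```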

# Simple fill: replace missing data with 0

# https://pandas.pydata.org/pandas-docs/stable/generated/pandas.DataFrame.fillna.html

df_filled = df.fillna(value=0)

df_filled.head()

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 127 Hours | 2010 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |

| 1 | 3 Backyards | 2010 | Drama | English | USA | R | 88.0 | 0.00 | 300000.0 | 0.0 | ... | 795 | 659 | 301 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5.2 |

| 2 | 3 | 2010 | Comedy|Drama|Romance | German | Germany | Unrated | 119.0 | 2.35 | 0.0 | 59774.0 | ... | 24 | 20 | 9 | 69 | 2000 | 0.0 | 4212 | 18.0 | 76.0 | 6.8 |

| 3 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |

| 4 | A Turtle's Tale: Sammy's Adventures | 2010 | Adventure|Animation|Family | English | France | PG | 88.0 | 2.35 | 0.0 | 0.0 | ... | 783 | 749 | 602 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6.1 |

5 rows × 25 columns

# Fill NaN budgets with the mean value of the Budget column

mean = df['Budget'].mean()

print('mean film budget is')

print(mean)

print()

# Assign the filled column back so that the original DataFrame is updated;

# calling fillna(..., inplace=True) on a selected column may not modify df in recent pandas versions

df['Budget'] = df['Budget'].fillna(mean)

df.head()

mean film budget is

36309750.0

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 127 Hours | 2010 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1.85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7.6 |

| 1 | 3 Backyards | 2010 | Drama | English | USA | R | 88.0 | NaN | 300000.0 | NaN | ... | 795 | 659 | 301 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5.2 |

| 2 | 3 | 2010 | Comedy|Drama|Romance | German | Germany | Unrated | 119.0 | 2.35 | 36309750.0 | 59774.0 | ... | 24 | 20 | 9 | 69 | 2000 | 0.0 | 4212 | 18.0 | 76.0 | 6.8 |

| 3 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1.78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7.1 |

| 4 | A Turtle's Tale: Sammy's Adventures | 2010 | Adventure|Animation|Family | English | France | PG | 88.0 | 2.35 | 36309750.0 | NaN | ... | 783 | 749 | 602 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6.1 |

5 rows × 25 columns
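Several columns can be filled in one call by passing a dictionary to fillna. The sketch below uses the median instead of the mean, which is a substitution made here for illustration (the median is less sensitive to a few very large budgets); the frame is a small made-up sample.

```python
import numpy as np
import pandas as pd

films = pd.DataFrame(
    {
        "Budget": [18000000.0, 300000.0, np.nan, 200000000.0],
        "Gross Earnings": [18329466.0, np.nan, 59774.0, 334185206.0],
    }
)

# One fill value per column
fill_values = {
    "Budget": films["Budget"].median(),
    "Gross Earnings": films["Gross Earnings"].median(),
}

# Returns a new DataFrame; films itself is left unchanged
filled = films.fillna(value=fill_values)

print(filled)
```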

5.2. String processing for consistent data formatting¶

# Load a CSV file with pandas as an example

# The encoding parameter is set explicitly because the default Unicode decoder raises errors on this content

file = 'mov.csv'

df = pd.read_csv(file, encoding="ISO-8859-1", delimiter=';')

# Review the top rows of the table

df.head()

| | Title | Year | Genres | Language | Country | Content Rating | Duration | Aspect Ratio | Budget | Gross Earnings | ... | Facebook Likes - Actor 1 | Facebook Likes - Actor 2 | Facebook Likes - Actor 3 | Facebook Likes - cast Total | Facebook likes - Movie | Facenumber in posters | User Votes | Reviews by Users | Reviews by Crtiics | IMDB Score |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 127 Hours | 2010 | Adventure|Biography|Drama|Thriller | English | USA | R | 94.0 | 1,85 | 18000000.0 | 18329466.0 | ... | 11000 | 642 | 223 | 11984 | 63000 | 0.0 | 279179 | 440.0 | 450.0 | 7,6 |

| 1 | 3 Backyards | 2010-2011 | Drama | English | USA | R | 88.0 | NaN | 300000.0 | NaN | ... | 795 | 659 | 301 | 1884 | 92 | 0.0 | 554 | 23.0 | 20.0 | 5,2 |

| 2 | 8: The Mormon Proposition | 2010 | Documentary | English | USA | R | 80.0 | 1,78 | 2500000.0 | 99851.0 | ... | 191 | 12 | 5 | 210 | 0 | 0.0 | 1138 | 30.0 | 28.0 | 7,1 |

| 3 | A Turtle's Tale: Sammy's Adventures | 2010 | Adventure|Animation|Family | English | France | PG | 88.0 | 2,35 | NaN | NaN | ... | 783 | 749 | 602 | 3874 | 0 | 2.0 | 5385 | 22.0 | 56.0 | 6,1 |

| 4 | Alice in Wonderland | 2010 | Adventure|Family|Fantasy | English | USA | PG | 108.0 | 1,85 | 200000000.0 | 334185206.0 | ... | 40000 | 25000 | 11000 | 79957 | 24000 | 0.0 | 306320 | 736.0 | 451.0 | 6,5 |

5 rows × 25 columns

# Note that the dtype of "Year" is object, not a number,

# because some values look like 2010-2011

df.dtypes

Title object

Year object

Genres object

Language object

Country object

Content Rating object

Duration float64

Aspect Ratio object

Budget float64

Gross Earnings float64

Director object

Actor 1 object

Actor 2 object

Actor 3 object

Facebook Likes - Director int64

Facebook Likes - Actor 1 int64

Facebook Likes - Actor 2 int64

Facebook Likes - Actor 3 int64

Facebook Likes - cast Total int64

Facebook likes - Movie int64

Facenumber in posters float64

User Votes int64

Reviews by Users float64

Reviews by Crtiics float64

IMDB Score object

dtype: object
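The dtypes above also show Aspect Ratio and IMDB Score as object: in this file they use a decimal comma (1,85 and 7,6). A minimal sketch of two possible ways to get numbers is given below. It reads a tiny inline CSV (made up in the same layout) rather than mov.csv, so the `csv_text` content is an assumption.

```python
import io

import pandas as pd

# Tiny inline CSV in the same style: ';' separator and decimal commas
csv_text = (
    "Title;Aspect Ratio;IMDB Score\n"
    "127 Hours;1,85;7,6\n"
    "3 Backyards;;5,2\n"
)

# Read as-is: columns with decimal commas stay as text
as_text = pd.read_csv(io.StringIO(csv_text), delimiter=";")

# Option 1: tell read_csv which character is the decimal point
as_numbers = pd.read_csv(io.StringIO(csv_text), delimiter=";", decimal=",")

# Option 2: convert an already loaded text column
converted = pd.to_numeric(
    as_text["IMDB Score"].str.replace(",", ".", regex=False)
)

print(as_numbers.dtypes)
print(converted)
```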

df.Year

0 2010

1 2010-2011

2 2010

3 2010

4 2010

5 2010

6 2010

7 2010

8 2010

9 2010

10 2010

11 2010

12 2010

13 2009-2010

14 2010

15 2010

16 2010

17 2010

18 2010

19 2010

20 2010

21 2010

22 2010

23 2008 ?

24 2010

25 2010

26 2010

27 2010

28 2010

29 2010

30 2010

31 2010

32 2010

33 2010

34 2010

35 2010

36 2010

37 2010

38 2010

39 2010

40 2010

41 2010

42 2010

43 2010

44 2010

45 2010

46 2010

47 2010

48 2010

49 2010

Name: Year, dtype: object

# Replace " ?" first and then choose the ending year

df.Year = df.Year.replace("2008 ?", "2008")

df.Year

0 2010

1 2010-2011

2 2010

3 2010

4 2010

5 2010

6 2010

7 2010

8 2010

9 2010

10 2010

11 2010

12 2010

13 2009-2010

14 2010

15 2010

16 2010

17 2010

18 2010

19 2010

20 2010

21 2010

22 2010

23 2008

24 2010

25 2010

26 2010

27 2010

28 2010

29 2010

30 2010

31 2010

32 2010

33 2010

34 2010

35 2010

36 2010

37 2010

38 2010

39 2010

40 2010

41 2010

42 2010

43 2010

44 2010

45 2010

46 2010

47 2010

48 2010

49 2010

Name: Year, dtype: object

# Choose the ending year as the film year

# The parameter is named year_str so that it does not shadow the built-in str

def get_ending_year(year_str):

    return year_str[-4:]

df['Year'] = df['Year'].apply(get_ending_year)

df.Year

0 2010

1 2011

2 2010

3 2010

4 2010

5 2010

6 2010

7 2010

8 2010

9 2010

10 2010

11 2010

12 2010

13 2010

14 2010

15 2010

16 2010

17 2010

18 2010

19 2010

20 2010

21 2010

22 2010

23 2008

24 2010

25 2010

26 2010

27 2010

28 2010

29 2010

30 2010

31 2010

32 2010

33 2010

34 2010

35 2010

36 2010

37 2010

38 2010

39 2010

40 2010

41 2010

42 2010

43 2010

44 2010

45 2010

46 2010

47 2010

48 2010

49 2010

Name: Year, dtype: object

# Now convert the year column to an integer type

df['Year'] = pd.to_numeric(df['Year'])

df.dtypes

Title object

Year int64

Genres object

Language object

Country object

Content Rating object

Duration float64

Aspect Ratio object

Budget float64

Gross Earnings float64

Director object

Actor 1 object

Actor 2 object

Actor 3 object

Facebook Likes - Director int64

Facebook Likes - Actor 1 int64

Facebook Likes - Actor 2 int64

Facebook Likes - Actor 3 int64

Facebook Likes - cast Total int64

Facebook likes - Movie int64

Facenumber in posters float64

User Votes int64

Reviews by Users float64

Reviews by Crtiics float64

IMDB Score object

dtype: object
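The three steps above (replace, take the last four characters, convert) can also be written with the vectorized .str accessor. The following is a sketch of one alternative, not the notebook's method: it uses a regular expression that captures the last four-digit group in each string, applied to a short made-up Series with the same kinds of values.

```python
import pandas as pd

# Sample values of the same kinds as in the Year column
years = pd.Series(["2010", "2010-2011", "2008 ?", "2009-2010"])

# Capture the last group of four digits; anything after it must be non-digits
ending = years.str.extract(r"(\d{4})\D*$")[0]

# Convert the extracted text to integers
as_int = pd.to_numeric(ending)

print(as_int)
```

Because the pattern tolerates trailing non-digit characters, the separate replacement of "2008 ?" is not needed in this variant.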

5.3. Exercise: fix the data problems in the 'Country' column¶

# Hint 1: issues in this column: the value 'Official site', 'canada' vs 'Canada', 'USA' vs 'usa'

# (mixed upper and lower case, and an invalid country name)

# We can use the pandas string functions (the .str accessor):

# dataFrame.Column.str.lower()

# Converts all characters to lowercase.

# dataFrame.Column.str.upper()

# Converts all characters to uppercase.

# Look at the column to spot its data problems visually

df.Country

0 USA

1 USA

2 USA

3 France

4 USA

5 USA

6 USA

7 usa

8 usa

9 usa

10 Germany

11 UK

12 Canada

13 USA

14 USA

15 Australia

16 Canada

17 Mexico

18 USA

19 USA

20 USA

21 UK

22 Spain

23 USA

24 USA

25 canada

26 USA

27 UK

28 USA

29 USA

30 USA

31 USA

32 USA

33 Official site

34 USA

35 USA

36 USA

37 USA

38 USA

39 USA

40 USA

41 USA

42 USA

43 USA

44 USA

45 USA

46 Sweden

47 USA

48 UK

49 USA

Name: Country, dtype: object
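One possible approach to the case problem is to compare values in lowercase and map them to a canonical spelling. The sketch below works on a short made-up Series; the `canonical` dictionary is a hypothetical helper that would have to list every country in the real column. Values that are not in the dictionary become NaN, which makes entries such as 'Official site' easy to spot for manual correction.

```python
import pandas as pd

# Sample values with the same kinds of problems as in the Country column
countries = pd.Series(["USA", "usa", "Canada", "canada", "UK", "Official site"])

# Hypothetical mapping from lowercase spelling to the canonical name
canonical = {"usa": "USA", "canada": "Canada", "uk": "UK"}

# Strip spaces, lowercase, then map; unknown values become NaN
normalized = countries.str.strip().str.lower().map(canonical)

# Original values that could not be recognized
unrecognized = countries[normalized.isna()]

print(normalized)
print(unrecognized)
```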

# Hint 2: print row 33, where Country == 'Official site', to see which film has the wrong country

# The actual country of release for this film can be found here: https://www.imdb.com/title/tt1555064/

df.loc[33, :]

Title Country Strong

Year 2010

Genres Drama|Music

Language English

Country Official site

Content Rating PG-13

Duration 117

Aspect Ratio 2,35

Budget 1.5e+07

Gross Earnings 2.02189e+07

Director Shana Feste

Actor 1 Leighton Meester

Actor 2 Cinda McCain

Actor 3 Tim McGraw

Facebook Likes - Director 19

Facebook Likes - Actor 1 3000

Facebook Likes - Actor 2 646

Facebook Likes - Actor 3 461

Facebook Likes - cast Total 4204

Facebook likes - Movie 0

Facenumber in posters 4

User Votes 14814

Reviews by Users 114

Reviews by Crtiics 135

IMDB Score 6,3

Name: 33, dtype: object

6. Articles for self-study¶

6.1. Loading and processing CSV files with pandas¶

- Python Pandas read_csv – Load Data from CSV Files

- Interactive online tutorial for reading CSV with pandas from DataCamp

6.2. Data preparation with pandas, working with features, creating tidy data¶

- Selecting Subsets of Data in Pandas: Part 1, by Ted Petrou

- Open Machine Learning Course. Topic 1. Exploratory data analysis with Pandas (in Russian)

- Advanced data preparation. Open Machine Learning Course. Topic 6. Feature engineering and feature selection (in Russian)

- Preparing data for analysis in R. Data tidying: preparing datasets for analysis, with concrete examples (in Russian)