ENV/ATM 415: Climate Laboratory¶

Brian E. J. Rose, University at Albany

Lecture 4: Introducing the Community Earth System Model (CESM)¶

What is it?¶

- CESM is one of a handful of complex coupled GCMs that are used as part of the IPCC process.

- Developed and maintained at NCAR (Boulder CO) by a group of climate scientists and software engineers.

- “Community” refers to the fact that the code is open-source, with new pieces contributed by a wide variety of users.

I use CESM in my own research. We are going to be using CESM in this course. Everyone should visit the website and learn about it.

Key components of CESM:¶

see http://www.cesm.ucar.edu/models/cesm1.2/ for more info

- Atmospheric model (AGCM)

- Community Atmosphere Model (CAM)

- Ocean model (OGCM)

- Parallel Ocean Program (POP)

- Land surface model

- Community Land Model (CLM)

- Sea ice model

- Community Ice CodE (CICE)

The software is somewhat modular, so different submodels can be combined together depending on the nature of the scientific problem at hand and the available computer power.

The Slab Ocean Model¶

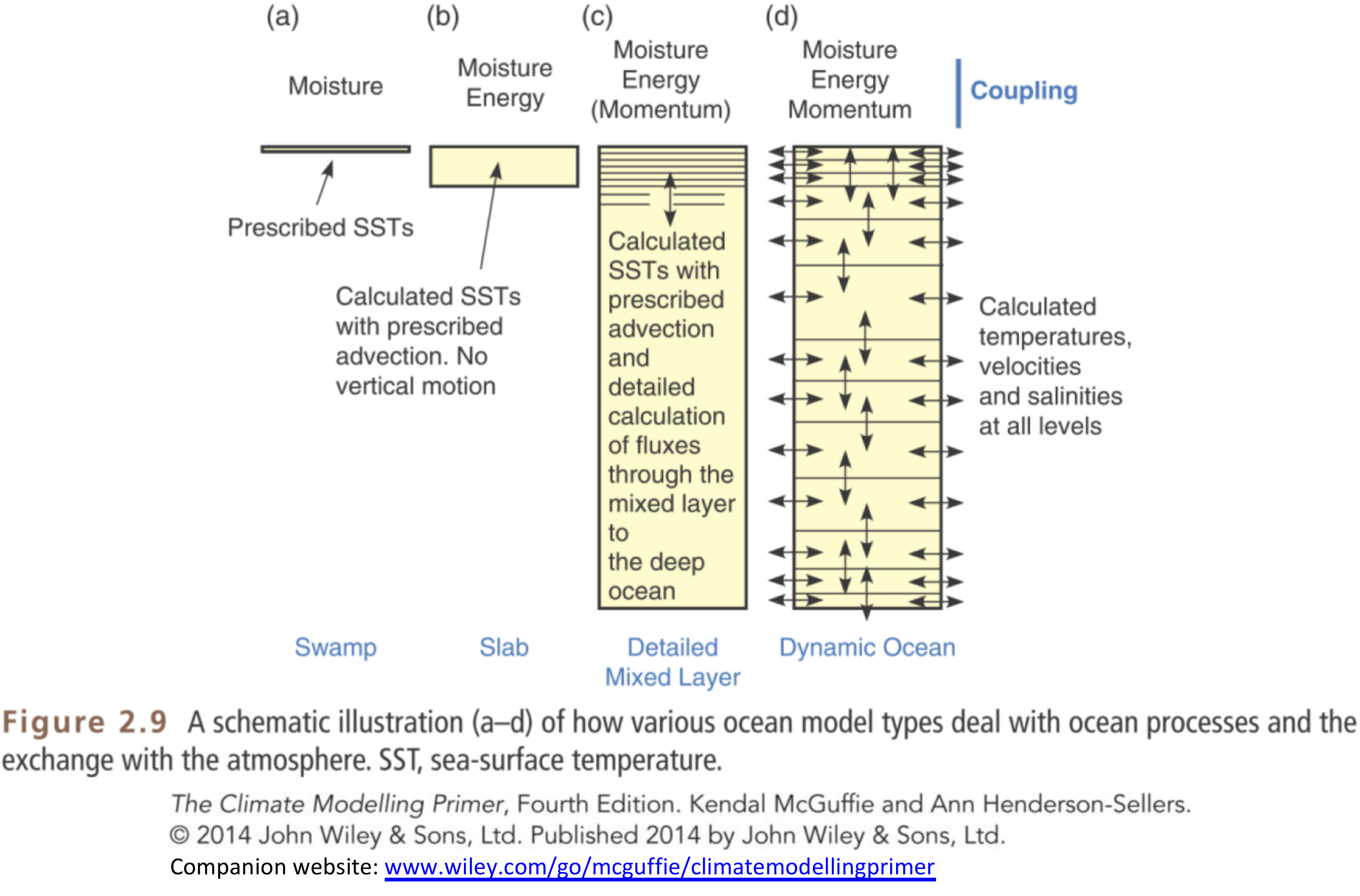

Our experiments will use CESM in the so-called Slab Ocean Model mode, in which the ocean is represented by a static layer of water with some fixed heat capacity but no motion.

Recall that we saw this schematic of different ways to represent the ocean in climate models:

Using the slab ocean greatly reduces the time required for the model to reach equilibrium.

The net effect of heat transport by ocean currents is prescribed through a so-called q-flux, which really just means we prescribe sources and sinks of heat at different locations.

For (lots of) details, see http://www2.cesm.ucar.edu/working-groups/pwg/documentation/cesm1-paleo-toolkit/ocean/som

The key is that we allow the sea surface temperature to change, but we fix (prescribe) the net effect of ocean currents on the transport of energy.

Why do this?

- Because it takes thousands of years for the full ocean model to come into equilibrium!

Why should we believe the results of the slab ocean model?

- We shouldn’t!

But experience with coupled models (meaning interactive ocean circulation) has shown that the circulation does not change radically under $2\times CO_2$.

So the slab ocean model gives us a decent first guess at climate sensitivity. And it makes it possible to do a lot of experimentation that we wouldn’t be able to do otherwise.
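To get a rough feel for why a slab ocean equilibrates so much faster, here is a back-of-the-envelope sketch. It treats the ocean as a single well-mixed layer whose temperature anomaly decays with e-folding time C/λ. The layer depths, the seawater properties, and the feedback parameter λ are all illustrative assumptions, not values taken from the CESM configuration. The real deep ocean adjusts through slow circulation and mixing rather than as one well-mixed layer, so this only hints at the order of magnitude.

```python
# Back-of-the-envelope adjustment time for a well-mixed ocean layer.
# All numbers below are illustrative assumptions, not CESM settings.
RHO_WATER = 1025.0      # seawater density, kg m^-3 (assumed)
CP_WATER = 3990.0       # seawater specific heat, J kg^-1 K^-1 (assumed)
FEEDBACK = 1.5          # climate feedback parameter, W m^-2 K^-1 (assumed)
SECONDS_PER_YEAR = 365.0 * 24.0 * 3600.0


def heat_capacity(depth_m):
    """Heat capacity per unit area (J m^-2 K^-1) of a layer of given depth."""
    return RHO_WATER * CP_WATER * depth_m


def adjustment_time_years(depth_m, feedback=FEEDBACK):
    """E-folding time C / lambda, in years, for C dT/dt = -lambda * T."""
    return heat_capacity(depth_m) / feedback / SECONDS_PER_YEAR


for depth in (50.0, 4000.0):   # a shallow slab vs. a full-depth layer
    print(f"{depth:6.0f} m layer: ~{adjustment_time_years(depth):.0f} years")
```

With these assumed numbers the shallow slab adjusts within a few years, while a full-depth layer would need centuries even if it were perfectly mixed.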

Atmosphere¶

- Horizontal resolution about 2º lat/lon

- AGCM solves the fundamental equations:

- Conservation of momentum, mass, energy, water, equation of state

- At 2º we resolve the synoptic-scale dynamics

- storm tracks and cyclones.

- We do NOT resolve the mesoscale and smaller

- thunderstorms, individual convective events, clouds

- These all must be parameterized.

- Model also solves equations of radiative transfer. This takes account of

- composition of the atmosphere and the absorption properties of different gases

- radiative effects of clouds.

Sea ice¶

- Resolution of 1º.

- Thermodynamics (conservation of energy, water and salt)

- determines freezing and melting

- Dynamics (momentum equations)

- determine ice motion and deformation.

- Complex! Sea ice is sort of a mixture of a fluid and a solid.

Land surface model¶

- Same resolution as atmosphere.

- Determines surface fluxes of heat, water, momentum (friction) based on prescribed vegetation types.

- Don’t actually know much about how it works!

- Great topic for someone to dig in to for their term project.

Ocean¶

- Same grid as sea ice, 1º.

- Sea surface temperature evolves based on:

- heat exchange with atmosphere

- prescribed “q-flux”.

Experimental setup¶

Model is given realistic atmospheric composition, realistic solar radiation, etc.

We perform a control run to get a baseline simulation, and take averages over several years (because the model has internal variability: every year is a little bit different).

We then change something, e.g. $2\times CO_2$!

And allow the model to adjust to a new equilibrium, just as we did with the toy energy balance model.

Once it has gotten close to its new equilibrium, we run it for several more years again to get the new climatology.

Then we can look at the differences in the climatologies before and after the perturbation.
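The procedure described above (control run, perturbation, adjustment, then a difference of climatologies) can be sketched with synthetic numbers. Everything in this example is made up for illustration: the temperatures, the 3 K equilibrium warming, the 5-year adjustment time, and the size of the year-to-year noise are assumptions, not CESM output.

```python
import numpy as np

rng = np.random.default_rng(0)   # fixed seed so the sketch is repeatable

n_years = 30
years = np.arange(n_years)

# Synthetic global-mean surface temperature (K). All numbers are made up.
noise = 0.2                       # year-to-year internal variability (K)
control = 288.0 + noise * rng.standard_normal(n_years)

# Perturbed run: relaxes toward a new equilibrium 3 K warmer (assumed),
# with an assumed 5-year e-folding time, plus internal variability.
perturbed = (288.0 + 3.0 * (1.0 - np.exp(-years / 5.0))
             + noise * rng.standard_normal(n_years))

# Climatologies: average over the last 10 years of each run
n_avg = 10
control_clim = control[-n_avg:].mean()
perturbed_clim = perturbed[-n_avg:].mean()

# The climate change signal is the difference of the two climatologies
warming = perturbed_clim - control_clim
print(f"Estimated warming: {warming:.2f} K")
```

Averaging over several years is what keeps the internal variability from swamping the signal. Try setting n_avg to 1 to see how noisy a single-year difference is.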

Description of input¶

First, let's take a look at some of the ingredients that go into the control run. All of the necessary data will be served up by a special data server sitting in the department, so you should be able to run this code to interact with the data on any computer that is connected to the internet.

You need to be connected to the internet to run the code in this notebook¶

You can browse the available data through a web interface here:

http://ramadda.atmos.albany.edu:8080/repository/entry/show/Top/Users/BrianRose/CESM_runs

Within this folder called CESM runs, you will find another folder called som_input which contains all the input files.

The data are all stored in NetCDF files, a standard file format for self-describing gridded data.

%matplotlib inline

import numpy as np

import matplotlib.pyplot as plt

import xarray as xr

We are going to use a package called xarray (abbreviated here as xr) to work with the datasets.

Boundary conditions: continents and topography¶

Here we are going to load the input topography file and take a look at what's inside.

In this case we are passing it a URL to our online dataserver. We'll put the URL in a string variable called datapath to simplify things later on.

datapath = "http://ramadda.atmos.albany.edu:8080/repository/opendap/latest/Top/Users/BrianRose/CESM_runs/"

endstr = "/entry.das"

# Notice that in Python we can easily concatenate strings together just by `adding` them

fullURL = datapath + 'som_input/USGS-gtopo30_1.9x2.5_remap_c050602.nc' + endstr

print( fullURL)

# Now we actually open the dataset

topo = xr.open_dataset( fullURL )

print(topo)

The Dataset object has several important attributes. Much of this should look familiar if you have worked with netCDF data before. The xarray package gives a very powerful and easy to use interface to the data.

We can access individual variables within the xarray.Dataset object as follows:

topo.PHIS

Plotting the topography¶

We will now read the geopotential and make a plot of the topography of the Earth's surface as represented on the 2º grid. The code below makes a colorful plot of the topography. We also use the land-sea mask in order to plot nothing at grid points that are entirely ocean-covered.

Execute this code exactly as written first, and then play around with it to see how you might customize the graph.

Note that the function pcolormesh does most of the work here. It's a function that makes color 2D plots of an array.

g = 9.8 # gravity in m/s2

meters_per_kilometer = 1E3

height = topo.PHIS / g / meters_per_kilometer # in kilometers

# Note that we have just created a new xarray.DataArray object that preserves the axis labels

# Let's go ahead and give it some useful metadata:

height.attrs['units'] = 'km'

height.name = 'height'

height
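As a quick sanity check of the unit conversion above, here is a small stand-alone sketch using made-up geopotential values (they are not read from the topography file).

```python
import numpy as np

g = 9.8                       # gravity in m/s2 (same value as above)
meters_per_kilometer = 1E3

# Hypothetical surface geopotential values in m2/s2 (illustrative only)
phis = np.array([0.0, 9800.0, 49000.0])

# Geopotential divided by gravity gives height in meters; then convert to km
height_km = phis / g / meters_per_kilometer
print(height_km)
```

So a surface geopotential of 49000 m2/s2 corresponds to an elevation of about 5 km.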

Let's make a plot! xarray is able to automatically generate labeled plots. This is very handy for "quick and dirty" investigation of the data:

height.plot()

If we want more control over the appearance of the plot, we can use features of matplotlib

# A filled contour plot of topography with contours every 500 m

lev = np.arange(0., 6., 0.5)

fig1, ax1 = plt.subplots(figsize=(8,4))

# Here we are masking the data to exclude points where the land fraction is zero (water only)

cax1 = ax1.contourf( height.lon, height.lat,

height.where(topo.LANDFRAC>0), levels=lev)

ax1.set_title('Topography (km) and land-sea mask in CESM')

ax1.set_xlabel('Longitude')

ax1.set_ylabel('Latitude')

cbar1 = fig1.colorbar(cax1)
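The masking step in the plot above may be easier to see on a tiny example. The sketch below uses plain NumPy on a made-up 2 x 3 grid (the values are illustrative, not from the topography file) and mimics what the where method does in xarray: keep values where the condition holds, and put NaN everywhere else.

```python
import numpy as np

# Tiny made-up 2 x 3 grid (illustrative values only)
height_km = np.array([[0.0, 0.3, 1.2],
                      [0.0, 0.0, 2.5]])
landfrac = np.array([[0.0, 0.4, 1.0],
                     [0.0, 0.0, 1.0]])

# Keep heights where there is at least some land; use NaN elsewhere.
# Plotting functions such as contourf leave NaN points blank.
masked = np.where(landfrac > 0, height_km, np.nan)
print(masked)
```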

Note that at 2º resolution we can see many smaller features (e.g. Pacific islands). The model is given a fractional land cover for each grid point.

Here let's plot the land-sea mask itself so we can see where there is at least "some" water:

fig2, ax2 = plt.subplots()

cax2 = ax2.pcolormesh( topo.lon, topo.lat, topo.LANDFRAC )

ax2.set_title('Land fraction in CESM')

ax2.set_xlabel('Longitude'); ax2.set_ylabel('Latitude')

cbar2 = fig2.colorbar(cax2);

Making nicer maps¶

Notice that to make these plots we've just plotted the lat-lon array without using any map projection.

There are nice tools available to make better maps. We'll leave that as a topic for another day. But if you're keen to read ahead, check out:

Ocean boundary conditions¶

Another important input file contains information about the slab ocean. You can see this file in the data catalog here:

Let's load it and take a look.

som_input = xr.open_dataset( datapath + 'som_input/pop_frc.1x1d.090130.nc' + endstr, decode_times=False )

print(som_input)

The ocean / sea ice models exist on different grids than the atmosphere (1º instead of 2º resolution).

Now we are going to look at the annual mean heat flux out of the ocean, which is the prescribed 'q-flux' that we give to the slab ocean model.

It is stored in the field qdp in the input file.

The sign convention in CESM is that qdp > 0 where heat is going IN to the ocean. We will change the sign to plot heat going OUT of the ocean INTO the atmosphere (a more atmosphere-centric viewpoint).

som_input.qdp

Unfortunately, here is a case in which the metadata are not very useful. There is no text description of what variable qdp actually is, or what its units are. (It is actually in units of W/m2)

We can see that there are 12 x 180 x 360 data points. One 180 x 360 grid for each calendar month!

Now we are going to take the average over the year at each point.

We will use the power of xarray here to take the average over the time dimension, leaving us with a single grid on 180 latitude points by 360 longitude points:

(-som_input.qdp.mean(dim='time')).plot()
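The average over the time dimension above gives every month equal weight. Since months differ in length, a slightly more careful annual mean weights each month by its number of days. Here is a minimal NumPy sketch on synthetic data. The array is random noise standing in for a field like qdp, and a 365-day calendar is assumed.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic monthly data on a 180 x 360 grid, standing in for a field like qdp
monthly = rng.standard_normal((12, 180, 360))

# Simple mean over the month axis: every month counts equally
simple_mean = monthly.mean(axis=0)

# Day-weighted mean, assuming a 365-day (no leap year) calendar
days_per_month = np.array([31, 28, 31, 30, 31, 30, 31, 31, 30, 31, 30, 31])
weighted_mean = np.average(monthly, axis=0, weights=days_per_month)

print(simple_mean.shape, weighted_mean.shape)
print(np.abs(simple_mean - weighted_mean).max())
```

The two results are close, which is why the simple mean is fine for a quick look at the data.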

Now make a nice plot of the annual mean q-flux.

# We can always set a non-standard size for our figure window

fig3, ax3 = plt.subplots(figsize=(10, 6))

lev = np.arange(-700., 750., 50.)

cax3 = ax3.contourf(som_input.xc, som_input.yc,

-som_input.qdp.mean(dim='time'),

levels=lev, cmap=plt.cm.bwr)

cbar3 = fig3.colorbar(cax3)

ax3.set_title( 'CESM: Prescribed heat flux out of ocean (W m$^{-2}$), annual mean',

fontsize=14 )

ax3.set_xlabel('Longitude', fontsize=14)

ax3.set_ylabel('Latitude', fontsize=14)

ax3.text(65, 50, 'Annual', fontsize=16 )

ax3.contour(topo.lon, topo.lat, topo.LANDFRAC, levels=[0.5], colors='k');

Notice all the spatial structure here:

- Lots of heat is going in to the oceans at the equator, particularly in the eastern Pacific Ocean.

- The red hot spots show where lots of heat is coming out of the ocean.

- Hot spots include the mid-latitudes off the eastern coasts of Asia and North America

- And also the northern North Atlantic.

All this structure is determined by ocean circulation, which we are not modeling here. Instead, we are prescribing these heat flux patterns as an input to the atmosphere.

This pattern changes throughout the year. Recall that we just averaged over all months to make this plot. We might want to look at just one month:

# select by month index (0 through 11)

som_input.qdp.isel(time=0)

# select by array slicing (but for this you have to know the axis order!)

som_input.qdp[0,:,:]

Here we got just the first month (January) by specifying [0,:,:] after the variable name. This is called slicing or indexing an array. We are saying "give me everything for month number 0". Now make the plot:

fig4, ax4 = plt.subplots(figsize=(10,4))

cax4 = ax4.contourf( som_input.xc, som_input.yc,

-som_input.qdp.isel(time=0),

levels=lev, cmap=plt.cm.bwr)

cbar4 = plt.colorbar(cax4)

ax4.set_title( 'CESM: Prescribed heat flux out of ocean (W m$^{-2}$)',

fontsize=14 )

ax4.set_xlabel('Longitude', fontsize=14)

ax4.set_ylabel('Latitude', fontsize=14)

ax4.text(65, 50, 'January', fontsize=12 );

ax4.contour(topo.lon, topo.lat, topo.LANDFRAC, levels=[0.5], colors='k');

Just for fun: some interactive plotting¶

IPython provides some really neat and easy-to-use tools to set up interactive graphics in your notebook.

Here we're going to create a figure with a slider that lets us step through each month of the q-flux data.

# A list of text labels for each month

months = ['Jan', 'Feb', 'Mar', 'Apr', 'May', 'Jun', 'Jul', 'Aug',

'Sep', 'Oct', 'Nov', 'Dec']

# an example of slicing this list:

months[-2:]

# A function that takes a month index (0 - 11) and creates a plot just like above

def sh(month):
    fig, ax = plt.subplots(figsize=(10,4))
    cax = ax.contourf( som_input.xc, som_input.yc,
                       -som_input.qdp.isel(time=month),
                       levels=lev, cmap=plt.cm.bwr)
    cbar = plt.colorbar(cax)
    ax.set_title( 'CESM: Prescribed heat flux out of ocean (W m$^{-2}$)',
                  fontsize=14 )
    ax.set_xlabel('Longitude', fontsize=14)
    ax.set_ylabel('Latitude', fontsize=14)
    ax.text(65, 50, months[month], fontsize=12 );
    ax.contour(topo.lon, topo.lat, topo.LANDFRAC, levels=[0.5], colors='k');

# Calling this function with a single month index gives us a single plot:

sh(6)

When you execute the next cell, you should get a figure with a slider above it. Go ahead and play with it.

from ipywidgets import interact

interact(sh, month=(0,11,1));

The "pre-industrial" control run¶

Our control run is set up to simulate the climate of the "pre-industrial era", meaning before significant human-induced changes to the composition of the atmosphere, nominally the year 1850.

Output from the control run is available on the same data server as above. Look in the folder called som_1850_f19 (Here som stands for "slab ocean model", 1850 indicates pre-industrial conditions, and f19 is a code for the horizontal grid resolution).

There are climatology files for each active model component:

- atmosphere,

- sea ice

- land surface

I created these files by averaging over the last 10 years of the simulation. Let's take a look at the atmosphere file. The file is called

som_1850_f19.cam.h0.clim.nc

(the file extension .nc is used to indicate NetCDF format).

atm_control = xr.open_dataset( datapath + 'som_1850_f19/som_1850_f19.cam.h0.clim.nc' + endstr )

print(atm_control)

Lots of different stuff! These are all the different quantities that are calculated as part of the model simulation. Every quantity represents a long-term average for a particular month.

Want to get more information about a particular variable?

atm_control.co2vmr

This is the (prescribed) amount of CO2 in the atmosphere (about 285 parts per million by volume).

One nice thing about xarray.DataArray objects is that we can do simple arithmetic with them (we have already seen several examples of this in the notes above). For example, change the units of CO2 amount to ppm:

atm_control.co2vmr * 1E6

Here's another variable:

atm_control.SOLIN

Apparently this is the incoming solar radiation or insolation, with shape (12,96,144) meaning it's got 12 months, 96 latitude points and 144 longitude points.

Exercise¶

Make two well-labeled plots of the insolation:

- The annual mean

- The (June - December) difference

Comparing the control run with the observed energy budget¶

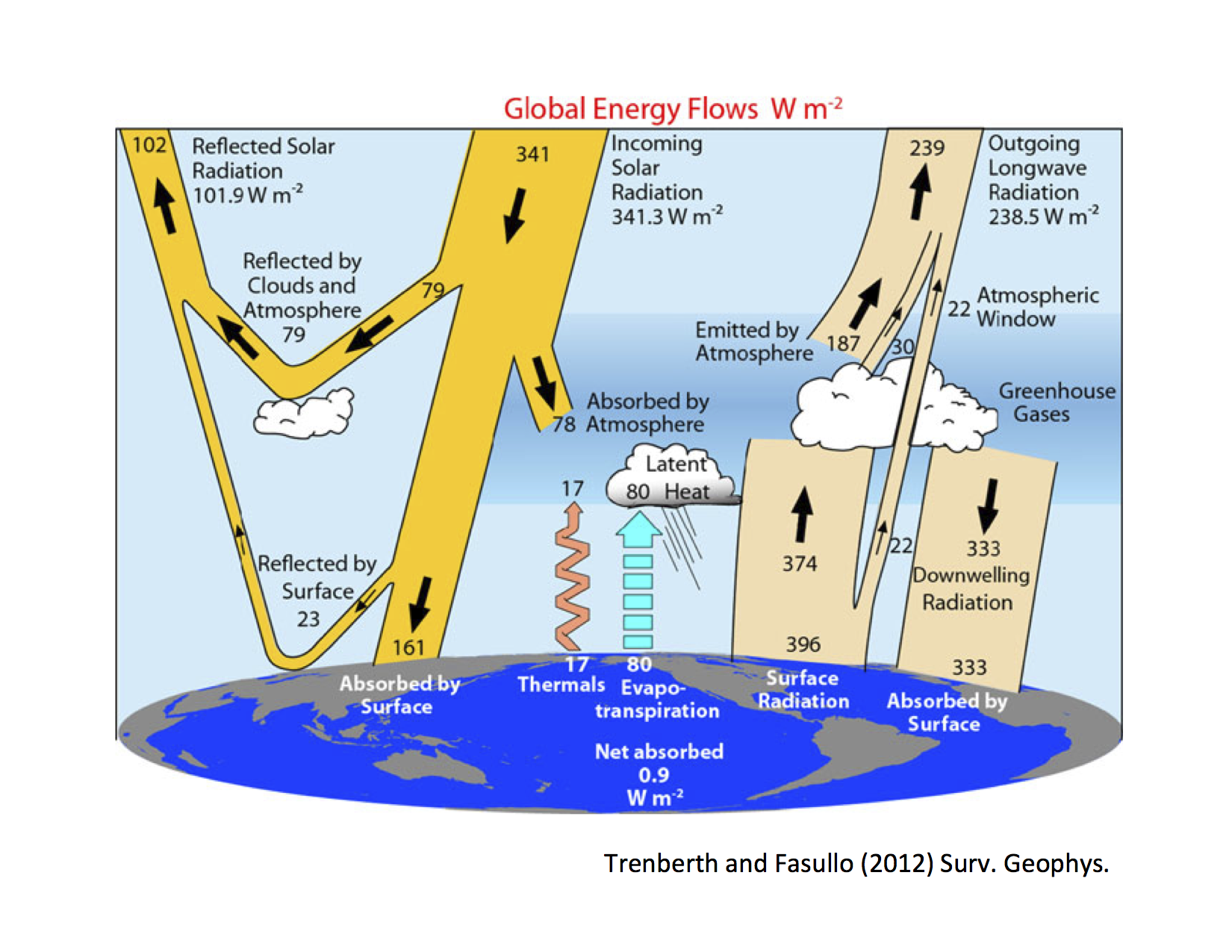

Recall that our investigations so far have been guided by this figure of the observed annual, global mean energy budget:

The global average¶

In order to compare these numbers with the control run, we need to take global averages of the data.

A global average must be weighted by the area of each grid cell. We cannot simply average over each data point on a latitude-longitude grid.

WHY?

Weighting for global average¶

The global average needs to be weighted by the cosine of latitude (do you understand why?)

We can implement this in xarray as follows:

# NumPy's mathematical functions work directly on xarray objects

# (and still preserve DataArray labels and axes)

# Take the cosine of latitude (first converting to radians)

coslat = np.cos(np.deg2rad(atm_control.lat))

# And divide by its mean value

weight_factor = coslat / coslat.mean(dim='lat')

# Want to see what we just created?

weight_factor
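To see how much the weighting matters, here is a sketch with plain NumPy on a synthetic temperature field whose exact global mean is known. The latitude axis (96 evenly spaced points from pole to pole) and the temperature profile are assumptions chosen for illustration, not model output.

```python
import numpy as np

# Latitudes of a regular grid with 96 points from pole to pole
# (an assumed stand-in for the model's latitude axis)
lat = np.linspace(-90.0, 90.0, 96)

# Synthetic zonal-mean temperature (K): warm equator, cold poles.
# Its exact area-weighted global mean is 300 - 60/3 = 280 K.
temperature = 300.0 - 60.0 * np.sin(np.deg2rad(lat))**2

# Naive average: every latitude counts equally, so the small polar
# grid cells are over-represented and the result is biased cold.
naive_mean = temperature.mean()

# Area-weighted average: weights proportional to cos(latitude)
coslat = np.cos(np.deg2rad(lat))
weighted_mean = np.sum(temperature * coslat) / np.sum(coslat)

print(f"naive: {naive_mean:.2f} K, weighted: {weighted_mean:.2f} K")
```

On a latitude-longitude grid the cells shrink toward the poles, so the unweighted average gives the cold polar points far too much influence.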

An alternative: use weights already provided in the dataset¶

You will find that many gridded datasets already provide a field that gives accurate area weighting.

In the case of the CESM output, the field is called gw

weight_factor2 = atm_control.gw / atm_control.gw.mean(dim='lat')

# Compare our two weights

print( (atm_control.FLNT * weight_factor).mean(dim=('time', 'lon', 'lat')))

print( (atm_control.FLNT * weight_factor2).mean(dim=('time', 'lon', 'lat')))

These numbers should be very close to each other.

Exercise¶

Make sure you can take a global average, testing on surface temperature in the control run.

Surface temperature is called 'TS' in the control run data file.

Calculate annual, global average 'TS'

Verify that you get something close to 289.57

If you don't, try to find and fix the errors.

Finding the radiative fluxes in the model output¶

Now that you have a working function to take global averages, we can compare some energy budget values against observations.

The model output contains lots of diagnostics about the radiative fluxes. Here are some CESM naming conventions to help you find the appropriate output fields:

- All variables whose names begin with 'F' are an energy flux of some kind.

- Most have a four-letter code, e.g. 'FLNT'

  - 'FL' means longwave flux (i.e. terrestrial)

  - 'FS' means shortwave flux (i.e. solar)

- The third letter indicates direction of the flux:

  - 'U' = up

  - 'D' = down

  - 'N' = net

- The fourth letter indicates the location of the flux:

  - 'T' = top of atmosphere

  - 'S' = surface

- So 'FLNT' means 'net longwave flux at the top of atmosphere', i.e. the outgoing longwave radiation.
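The naming convention above is regular enough to spell out in a few lines of code. The helper below is hypothetical (it is not part of CESM or xarray) and only handles the plain four-letter codes described in the list. The example codes are built from the convention, so not every combination necessarily exists in the output file.

```python
# Hypothetical helper (not part of CESM or xarray) that spells out
# the four-letter flux codes described above.
FLUX_TYPE = {'FL': 'longwave', 'FS': 'shortwave'}
DIRECTION = {'U': 'upward', 'D': 'downward', 'N': 'net'}
LOCATION = {'T': 'top of atmosphere', 'S': 'surface'}


def describe_flux(name):
    """Return a plain-English description of a code such as 'FLNT'."""
    if len(name) != 4:
        raise ValueError(f"expected a four-letter code, got {name!r}")
    try:
        kind = FLUX_TYPE[name[:2]]
        direction = DIRECTION[name[2]]
        location = LOCATION[name[3]]
    except KeyError:
        raise ValueError(f"unrecognized flux code: {name!r}") from None
    return f"{direction} {kind} flux at the {location}"


# Example codes built from the convention
for code in ('FLNT', 'FSNT', 'FSDS', 'FLUT'):
    print(code, '->', describe_flux(code))
```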

You will see that these are all 12 x 96 x 144 -- i.e. a two-dimensional grid for every calendar month.

atm_control.FLNT

Exercise¶

Compute annual, global averages of the following four quantities.

- Incoming solar radiation (or insolation)

- Absorbed solar radiation

- Planetary albedo

- Outgoing longwave radiation

Compare your results briefly to the observations.

A few more tidbits¶

Feel free to keep exploring the data!

Many other fields are four-dimensional (time, level, latitude, longitude).

For example, here is the shape of the array that holds the air temperature at every point and every month:

atm_control['T'].shape

An important gotcha with xarray¶

Normally we can access a variable with the notation Dataset.variable_name

But in xarray (and also in numpy and other packages), the notation object.T actually represents the transpose operator:

print( atm_control.FLNT.shape)

print( atm_control.FLNT.T.shape)

Here there is a name conflict because the air temperature variable is called T. One solution is to access it through the dictionary method, as I did above:

atm_control['T']

Another solution is just to rename the variable (here going from T to Ta):

# rename returns a new Dataset, so we assign the result back to the same name

atm_control = atm_control.rename({'T': 'Ta'})

atm_control.Ta
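The transpose behaviour mentioned above is easy to check with plain NumPy. The dummy array here just has the same shape as one of the monthly two-dimensional fields.

```python
import numpy as np

# A dummy array with the same shape as a monthly 2D field (time, lat, lon)
field = np.zeros((12, 96, 144))

print(field.shape)     # (12, 96, 144)
print(field.T.shape)   # (144, 96, 12): .T reverses the order of the axes
```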

And here is some code to plot the average sounding (temperature as function of pressure) at a particular point in the month of January.

plt.plot( atm_control.Ta[0,:,70,115], atm_control.lev )

plt.gca().invert_yaxis()

plt.ylabel('Pressure (hPa)')

plt.xlabel('Temperature (K)')

What was the location we just used for that plot? Let's check by indexing the latitude and longitude arrays:

print( atm_control.lat[70].values)

print( atm_control.lon[115].values)

These are actually the coordinates of the Albany area (read longitude in degrees east).
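Going the other way, from a location to grid indices, is a common task. Here is a minimal sketch, assuming a regular grid with 96 latitudes from pole to pole and 144 longitudes spaced every 2.5 degrees. The helper nearest_index and the approximate Albany coordinates are illustrative assumptions. In practice you would pass atm_control.lat and atm_control.lon rather than rebuilding the axes.

```python
import numpy as np

# Assumed grid axes: 96 latitudes from pole to pole and 144 longitudes
# every 2.5 degrees east of Greenwich (stand-ins for the model axes).
lat = np.linspace(-90.0, 90.0, 96)
lon = np.arange(0.0, 360.0, 2.5)


def nearest_index(axis_values, target):
    """Index of the grid value closest to target (first one on ties)."""
    return int(np.argmin(np.abs(axis_values - target)))


# Approximate location of Albany, NY: 42.65 N, 73.75 W (assumed values)
target_lat = 42.65
target_lon = (-73.75) % 360.0    # convert degrees west to degrees east

i_lat = nearest_index(lat, target_lat)
i_lon = nearest_index(lon, target_lon)
print(i_lat, lat[i_lat])
print(i_lon, lon[i_lon])
```

With these assumed numbers the target longitude falls midway between two grid columns, so either neighbor (including the index 115 used above) is a reasonable choice.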

So go ahead and mess around with the model output and see what you can do with it. And have fun.¶

Thanks for playing!