In [1]:

```python
%pylab inline
```

Populating the interactive namespace from numpy and matplotlib

In [2]:

```python
import torch
from torch import nn
from torchmore import flex, layers
```

APPLICATIONS TO OCR

Character Recognition

- assume you have a character segmentation

- extract each character

- feed each character to any of these architectures, as if it were an object recognition problem

Goodfellow, Ian J., et al. "Multi-digit number recognition from street view imagery using deep convolutional neural networks." arXiv preprint arXiv:1312.6082 (2013).

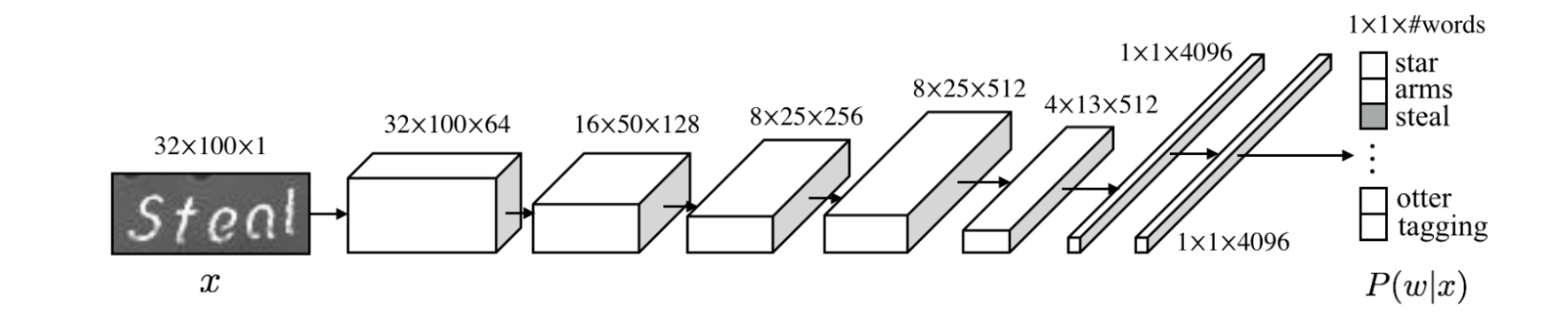

Whole Word Recognition

- perform word localization using Faster R-CNN

- perform whole word recognition as if it were a large object recognition problem

Jaderberg, Max, et al. "Deep structured output learning for unconstrained text recognition." arXiv preprint arXiv:1412.5903 (2014).

Better Techniques

- the above techniques are applications of computer vision localization

- Faster R-CNN and similar techniques are ad hoc and limited

- they often require pre-segmented text for training

- better approaches:

  - use markers for localizing/bounding text (later)

  - use sequence learning techniques and CTC

Convolutional Networks for OCR

Historically, LSTM came first, but we're going to start off with convolutional networks analogous to object recognition networks.

Structure:

- perform 2D convolutions over the entire image

- assume that this has extracted features that correspond to characters

- project those features into a 1D sequence

- classify the projected 1D feature sequence into characters

- perform training using EM alignment (CTC, Viterbi, etc.)
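The structure above can be sketched in plain PyTorch, without torchmore. This is a minimal illustration under assumed settings, not the model used later: the layer sizes, the 53 output classes, and the names `SumOverHeight` and `make_tiny_ocr_model` are hypothetical choices made for the example.

```python
import torch
from torch import nn

class SumOverHeight(nn.Module):
    """Project B x D x H x W feature maps to a B x D x W sequence (hypothetical helper)."""
    def forward(self, x):
        return x.sum(2)

def make_tiny_ocr_model(num_classes=53):
    return nn.Sequential(
        # 2D convolutions over the entire image: B x 1 x H x W -> B x 64 x H/4 x W/4
        nn.Conv2d(1, 32, 3, padding=1), nn.ReLU(), nn.MaxPool2d(2),
        nn.Conv2d(32, 64, 3, padding=1), nn.ReLU(), nn.MaxPool2d(2),
        # project the 2D features into a 1D sequence along the width
        SumOverHeight(),
        # classify each position of the 1D sequence: B x num_classes x W/4
        nn.Conv1d(64, num_classes, 1),
    )

model = make_tiny_ocr_model()
output = model(torch.rand(1, 1, 48, 128))
probs = output.softmax(1)   # P(c|i): a distribution over classes at each position i
print(output.shape)         # torch.Size([1, 53, 32])
```

Training such a model still needs an alignment between the 32 output positions and the ground-truth characters, which is what the EM step in the last bullet provides.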


In [3]:

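```python
def make_model():
    # convolutional_layers() and num_classes are placeholders:
    # any stack of 2D conv layers, and the number of output classes
    return nn.Sequential(
        # BDHW
        *convolutional_layers(),
        # BDHW, now reduce along the vertical
        layers.Fun("lambda x: x.sum(2)"),
        # BDW
        flex.Conv1d(num_classes, 1)
    )
```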

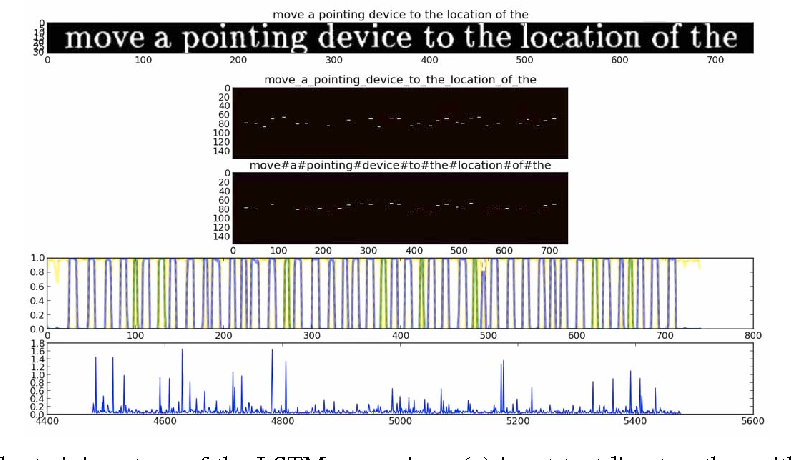

Viterbi Training

- ground truth: text string = sequence of classes

- ground truth `"ABC"` is replaced by the regular expression `/_+A+_+B+_+C+_+/`

- network outputs $P(c|i)$, a probability of each class $c$ at each position $i$

- find the best possible alignment between network outputs and ground truth regex

- that alignment gives a target class for each time step

- treat that alignment as if it were the ground truth and backpropagate

- this is an example of an EM algorithm
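A minimal NumPy sketch of the alignment step, under stated assumptions: the network outputs are already per-frame log-probabilities in a T x C array, the blank `_` is class 0, and every element of the regex (each blank and each character) must occupy at least one frame, as the `+` operators require. `viterbi_align` is a hypothetical helper, not part of any library.

```python
import numpy as np

def viterbi_align(logp, target, blank=0):
    """Best alignment of per-frame log-probs (T x C) to the regex _+c1+_+c2+..._+.

    Returns one class index per frame, to be used as the training target.
    """
    # states of the regex: blank, c1, blank, c2, ..., blank
    states = [blank]
    for c in target:
        states += [c, blank]
    T, S = logp.shape[0], len(states)
    assert T >= S, "each regex element needs at least one frame"
    score = np.full((T, S), -np.inf)    # best log-score ending in state s at frame t
    back = np.zeros((T, S), dtype=int)  # predecessor state, for the traceback
    score[0, 0] = logp[0, states[0]]    # the path must start in the leading blank
    for t in range(1, T):
        for s in range(S):
            stay = score[t - 1, s]
            advance = score[t - 1, s - 1] if s > 0 else -np.inf
            back[t, s] = s if stay >= advance else s - 1
            score[t, s] = max(stay, advance) + logp[t, states[s]]
    # trace back from the trailing blank at the last frame
    path = [S - 1]
    for t in range(T - 1, 0, -1):
        path.append(back[t, path[-1]])
    path.reverse()
    return [states[s] for s in path]

# toy outputs: classes 0 = blank, 1 = A, 2 = B, 3 = C; each frame favors one class
probs = np.full((8, 4), 0.1 / 3)
for t, c in enumerate([0, 1, 1, 0, 2, 0, 3, 0]):
    probs[t, c] = 0.9
print(viterbi_align(np.log(probs), [1, 2, 3]))   # [0, 1, 1, 0, 2, 0, 3, 0]
```

The returned per-frame classes play the role of ground truth for an ordinary per-frame classification loss; recomputing the alignment as the network improves is what makes the procedure EM-like.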

CTC Training

- like Viterbi training, but instead of using only the best alignment, it uses an average over all alignments, weighted by their probability

Identical to traditional HMM training in speech recognition:

- Viterbi training here = Viterbi training of HMMs

- CTC training = the forward-backward (Baum-Welch) algorithm
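The forward-backward computation can be sketched on the chain of states defined by the regex `_+A+_+B+_+C+_+`, assuming blank = class 0 and at least one frame per regex element. It returns, for each frame, the posterior probability of each class: the "average alignment" that replaces the single best path. This is an illustrative sketch (the helper name `forward_backward` is hypothetical), not PyTorch's `CTCLoss` internals; it uses raw probabilities without rescaling, so it only suits short sequences.

```python
import numpy as np

def forward_backward(probs, target, blank=0):
    """Per-frame class posteriors for the regex _+c1+_+c2+..._+ (probs: T x C)."""
    states = [blank]
    for c in target:
        states += [c, blank]
    T, S = probs.shape[0], len(states)
    emit = probs[:, states]          # T x S: probability of each state's class at each frame
    alpha = np.zeros((T, S))         # alpha[t, s]: P(frames 0..t, in state s at frame t)
    beta = np.zeros((T, S))          # beta[t, s]: P(frames t+1..T-1 | state s at frame t)
    alpha[0, 0] = emit[0, 0]         # must start in the leading blank
    for t in range(1, T):
        prev = alpha[t - 1].copy()
        prev[1:] += alpha[t - 1, :-1]    # either stay in a state or advance by one
        alpha[t] = prev * emit[t]
    beta[T - 1, S - 1] = 1.0         # must end in the trailing blank
    for t in range(T - 2, -1, -1):
        nxt = emit[t + 1] * beta[t + 1]
        beta[t] = nxt
        beta[t, :-1] += nxt[1:]
    gamma = alpha * beta / alpha[T - 1, S - 1]   # state posteriors; each row sums to 1
    posterior = np.zeros_like(probs, dtype=float)
    for s, c in enumerate(states):
        posterior[:, c] += gamma[:, s]           # merge all blank states into class 0
    return posterior

# toy outputs: classes 0 = blank, 1 = A, 2 = B, 3 = C
probs = np.full((8, 4), 0.1 / 3)
for t, c in enumerate([0, 1, 1, 0, 2, 0, 3, 0]):
    probs[t, c] = 0.9
post = forward_backward(probs, [1, 2, 3])
print(post.round(2))   # soft per-frame targets
```

Using these soft posteriors as per-frame targets, instead of a single best path, is the difference between Viterbi training and forward-backward (CTC-style) training described above.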

CTC training with cctc2

- with the `cctc2` library, we can make the alignment explicit

In [4]:

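```python
import torch.nn.functional as F
import cctc2

# model and optimizer are assumed to be defined elsewhere
def train_batch(input, target):
    optimizer.zero_grad()
    output = model(input)
    # E step: explicit alignment of the outputs with the target sequence
    # (assumed to return a tensor shaped like output)
    aligned = cctc2.align(output, target)
    # M step: treat the alignment as ground truth and regress the outputs toward it
    loss = F.mse_loss(output, aligned)
    loss.backward()
    optimizer.step()
```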

Explicit CTC

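CTC in PyTorch

- in PyTorch, CTC is implemented as a loss function

- `CTCLoss` in PyTorch obscures what's going on: all you get is the loss output, not the EM alignment

- sequences are packed in a special way into batches: targets are concatenated (or padded), with separate tensors giving the length of each input and each target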
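A small sketch of how `nn.CTCLoss` expects a batch to be laid out, using random stand-in outputs instead of a real model. The sizes `T`, `B`, `C` and the target sequences are made up for the example; class 0 is the blank, which is `CTCLoss`'s default.

```python
import torch
from torch import nn

T, B, C = 50, 2, 53   # output frames, batch size, classes (class 0 = blank)

# stand-in for a network output in BDW layout (B x C x T), as produced by a Conv1d head
logits = torch.randn(B, C, T, requires_grad=True)
# CTCLoss wants log-probabilities laid out as T x B x C
log_probs = logits.log_softmax(1).permute(2, 0, 1)

# two target sequences of different lengths, concatenated into one 1D tensor
targets = [torch.tensor([1, 2, 3]), torch.tensor([4, 5])]
packed = torch.cat(targets)                               # tensor([1, 2, 3, 4, 5])
target_lengths = torch.tensor([len(t) for t in targets])  # tensor([3, 2])
input_lengths = torch.full((B,), T, dtype=torch.long)     # every output uses all T frames

ctc_loss = nn.CTCLoss(blank=0)
loss = ctc_loss(log_probs, packed, input_lengths, target_lengths)
loss.backward()   # gradients reach logits; the alignment itself is never returned
print(loss.item())
```

`CTCLoss` also accepts targets padded into a B x S tensor; either way, `target_lengths` tells it where each sequence ends.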

In [5]:

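```python
ctc_loss = nn.CTCLoss()

def train_batch(input, target, target_lengths):
    # target: all target sequences of the batch concatenated into one 1D tensor
    # target_lengths: length of each target sequence
    optimizer.zero_grad()
    output = model(input)  # B x C x W (BDW)
    # CTCLoss expects log-probabilities laid out as W x B x C
    log_probs = output.log_softmax(1).permute(2, 0, 1)
    input_lengths = torch.full((output.size(0),), output.size(2), dtype=torch.long)
    loss = ctc_loss(log_probs, target, input_lengths, target_lengths)
    loss.backward()
    optimizer.step()
```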

Word / Text Line Recognition

In [6]:

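```python
def make_model():
    return nn.Sequential(
        *convolutional_layers(),
        layers.Fun("lambda x: x.sum(2)"),
        flex.Conv1d(num_classes, 1)
    )

def train_batch(input, target, target_lengths):
    optimizer.zero_grad()
    output = model(input)
    log_probs = output.log_softmax(1).permute(2, 0, 1)
    input_lengths = torch.full((output.size(0),), output.size(2), dtype=torch.long)
    loss = ctc_loss(log_probs, target, input_lengths, target_lengths)
    loss.backward()
    optimizer.step()
```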

VGG-Like Model

In [7]:

```python
def conv2d(d, r=3, stride=1, repeat=1):
    """Generate `repeat` conv layers, each followed by batchnorm and ReLU."""
    result = []
    for _ in range(repeat):
        result += [
            flex.Conv2d(d, r, padding=(r//2, r//2), stride=stride),
            flex.BatchNorm2d(),
            nn.ReLU()
        ]
    return result

def conv2mp(d, r=3, mp=2, repeat=1):
    """Generate conv layers with batchnorm and an optional maxpool."""
    result = conv2d(d, r, repeat=repeat)
    if mp is not None:
        result += [nn.MaxPool2d(mp)]
    return result

def project_and_conv1d(d, noutput, r=5):
    """Max-project BDHW features over the height, then classify with 1D convolutions."""
    return [
        layers.Fun("lambda x: x.max(2)[0]"),
        flex.Conv1d(d, r, padding=r//2),
        flex.BatchNorm1d(),
        nn.ReLU(),
        flex.Conv1d(noutput, 1),
        layers.Reorder("BDL", "BLD")
    ]

class Additive(nn.Module):
    """Sum the outputs of several submodules applied to the same input."""

    def __init__(self, *args, post=None):
        super().__init__()
        self.sub = nn.ModuleList(args)
        self.post = post

    def forward(self, x):
        y = self.sub[0](x)
        for f in self.sub[1:]:
            y = y + f(x)
        if self.post is not None:
            y = self.post(y)
        return y
```

In [8]:

```python
def make_vgg_model(noutput=53):
    return nn.Sequential(
        layers.Input("BDHW", sizes=[None, 1, None, None]),
        *conv2mp(100, 3, 2, repeat=2),
        *conv2mp(200, 3, 2, repeat=2),
        *conv2mp(300, 3, 2, repeat=2),
        *conv2d(400, 3, repeat=2),
        *project_and_conv1d(800, noutput)
    )

make_vgg_model()(torch.rand(1, 1, 60, 400)).shape
```

Out[8]:

torch.Size([1, 50, 53])

Resnet-Block

- NB: we can easily define Resnet etc. in an object-oriented fashion

In [9]:

```python
def ResnetBlock(d, r=3):
    return Additive(
        nn.Identity(),
        nn.Sequential(
            nn.Conv2d(d, d, r, padding=r//2), nn.BatchNorm2d(d), nn.ReLU(),
            nn.Conv2d(d, d, r, padding=r//2), nn.BatchNorm2d(d)
        )
    )

def resnet_blocks(n, d, r=3):
    return [ResnetBlock(d, r) for _ in range(n)]
```

Resnet-like Model

In [10]:

```python
def make_resnet_model(noutput=53):
    return nn.Sequential(
        layers.Input("BDHW", sizes=[None, 1, None, None]),
        *conv2mp(64, 3, (2, 1)),
        *resnet_blocks(5, 64), *conv2mp(128, 3, 2),
        *resnet_blocks(5, 128), *conv2mp(256, 3, 2),
        *resnet_blocks(5, 256), *conv2mp(512, 3, 2),
        *resnet_blocks(5, 512),
        *project_and_conv1d(800, noutput)
    )

make_resnet_model()(torch.rand(1, 1, 60, 400)).shape
```

Out[10]:

torch.Size([1, 50, 53])

Footprints

- even with projection/1D convolution, a character is first recognized in 2D by the 2D convolutional network

- character recognition with 2D convolutional networks is really a kind of deformable template matching

- in order to recognize a character, each pixel at the output of the 2D convolutional network needs a footprint (receptive field) large enough to cover the character to be recognized

- footprint calculation:

  - a 3x3 convolution after three 2x2 maxpool operations covers 3 × 2³ = 24 input pixels, i.e., a 24x24 footprint
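The footprint estimate can be checked with the standard receptive-field recurrence: each layer widens the footprint by (kernel size − 1) times the current spacing of output pixels, and each stride multiplies that spacing. The helper below is a hypothetical sketch for one dimension; padding is ignored because it does not change the footprint size.

```python
def receptive_field(layers):
    """Footprint of one output pixel, for layers given as (kernel_size, stride) pairs."""
    size, jump = 1, 1   # footprint in input pixels; spacing of output pixels in input pixels
    for kernel, stride in layers:
        size += (kernel - 1) * jump
        jump *= stride
    return size, jump

conv3, maxpool2 = (3, 1), (2, 2)
print(receptive_field([maxpool2] * 3 + [conv3]))          # (24, 8)
print(receptive_field([conv3, maxpool2] * 3 + [conv3]))   # (38, 8)
# the 2D part of make_vgg_model: two 3x3 convs before each of three pools, then two more
print(receptive_field([conv3, conv3, maxpool2] * 3 + [conv3, conv3]))   # (68, 8)
```

The convolutions placed before each pool enlarge the footprint beyond the 24-pixel estimate, while the output spacing stays at 8 pixels.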

Conv-Only Training

Problems with VGG/Resnet+Conv1d

Problem:

- reduces output to H/8, W/8

- CTC alignment needs two output pixels for each character

- result: these models have trouble with narrow characters

Solutions:

- use fractional max pooling

- use upscaling

- use transposed convolutions
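A back-of-the-envelope check of the problem, assuming the two-frames-per-character rule stated above (a character frame plus a separating blank, as in the `_+A+_+B+_+` alignment). With W/8 downscaling, each character then needs about 8 × 2 = 16 input pixels of width on average. For comparison, standard CTC, where blanks are optional, needs only one frame per character plus a blank between repeated characters. Both function names are hypothetical.

```python
def max_characters(width, downscale=8, frames_per_char=2):
    """Upper bound on the characters that fit in a line image of `width` pixels."""
    frames = width // downscale       # output positions after downscaling
    return frames // frames_per_char

def min_ctc_frames(target):
    """Standard CTC bound: one frame per label plus a blank between repeated labels."""
    repeats = sum(a == b for a, b in zip(target, target[1:]))
    return len(target) + repeats

print(max_characters(400))               # 25: a 400-pixel line with a W/8 model
print(max_characters(400, downscale=1))  # 200: after upscaling back to full width
print(min_ctc_frames("hello"))           # 6: the repeated "l" needs a blank in between
```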

Less Downscaling using FractionalMaxPool2d

- permits more max pooling steps without making the image too small

- can be performed anisotropically (different ratios for height and width)

- the non-uniform spacing it requires may have additional benefits
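A quick arithmetic sketch of why fractional pooling permits more pooling steps. It assumes that, as in PyTorch's functional implementation, an `output_ratio` becomes an output size of `int(size * ratio)` at each layer; under that assumption six width reductions with ratio 0.9 reproduce the width of 210 produced by `make_fmp_model` below, whereas six 2x2 max pools would leave only 6 positions. `pooled_sizes` is a hypothetical helper.

```python
def pooled_sizes(size, ratio, steps):
    """Sizes after `steps` pooling layers, each computing int(size * ratio)."""
    sizes = [size]
    for _ in range(steps):
        sizes.append(int(sizes[-1] * ratio))
    return sizes

print(pooled_sizes(400, 0.9, 6))  # fractional, width: [400, 360, 324, 291, 261, 234, 210]
print(pooled_sizes(400, 0.5, 6))  # 2x2 max pooling:   [400, 200, 100, 50, 25, 12, 6]
```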

In [11]:

```python
def conv2fmp(d, r=3, fmp=(0.7, 0.85), repeat=1):
    result = conv2d(d, r, repeat=repeat)
    if fmp is not None:
        result += [nn.FractionalMaxPool2d(3, output_ratio=fmp)]
    return result

def make_fmp_model(noutput=53):
    return nn.Sequential(
        layers.Input("BDHW", sizes=[None, 1, None, None]),
        *[l for d in [50, 100, 150, 200, 250, 300] for l in conv2fmp(d, 3, (0.7, 0.9))],
        *project_and_conv1d(800, noutput)
    )

make_fmp_model()(torch.rand(1, 1, 60, 400)).shape
```

Out[11]:

torch.Size([1, 210, 53])

Upscaling using interpolate

- `interpolate` scales an image and has a `backward()`, so it can be used inside a trained network

- `MaxPool2d` ... `interpolate` is a simple multiscale analysis

- can be combined with loss functions at each level
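A minimal check of both properties of `F.interpolate`, using a random tensor shaped like the feature map that four 2x2 max pools leave from a 60 x 400 input (200 x 3 x 25, as in the model below). With the default `mode='nearest'` and `scale_factor=16`, every input value is copied into a 16 x 16 block, so the gradient of the sum is 256 at each input position.

```python
import torch
import torch.nn.functional as F

x = torch.rand(1, 200, 3, 25, requires_grad=True)  # B x D x H x W after four 2x2 max pools
y = F.interpolate(x, scale_factor=16)              # default mode='nearest'
print(y.shape)                                     # torch.Size([1, 200, 48, 400])
y.sum().backward()                                 # gradients flow back through the upscaling
print(x.grad.unique())                             # tensor([256.])
```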

In [12]:

```python
import torch.nn.functional as F

def make_interpolating_model(noutput=53):
    return nn.Sequential(
        layers.Input("BDHW", sizes=[None, 1, None, None]),
        *conv2mp(50, 3), *conv2mp(100, 3), *conv2mp(150, 3), *conv2mp(200, 3),
        layers.Fun_(lambda x: F.interpolate(x, scale_factor=16)),
        *project_and_conv1d(800, noutput)
    )

make_interpolating_model()(torch.rand(1, 1, 60, 400)).shape
```

Out[12]:

torch.Size([1, 400, 53])

Upscaling with interpolate

Upscaling using ConvTranspose1d

- transposed convolutions (`ConvTranspose2d`, and `ConvTranspose1d` in the model below) fill in higher resolutions with "templates"

- commonly used in image segmentation and superresolution
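The output length of a transposed convolution follows the formula in the PyTorch `ConvTranspose1d` documentation. The sketch below checks it against the module, with sizes chosen to match the 200-channel, 25-position sequence that `make_ct_model` produces after projection; `conv_transpose1d_length` is a hypothetical helper.

```python
import torch
from torch import nn

def conv_transpose1d_length(length, kernel_size, stride=1, padding=0,
                            output_padding=0, dilation=1):
    """L_out = (L_in - 1) * stride - 2 * padding + dilation * (kernel_size - 1) + output_padding + 1"""
    return ((length - 1) * stride - 2 * padding
            + dilation * (kernel_size - 1) + output_padding + 1)

up = nn.ConvTranspose1d(200, 800, kernel_size=1, stride=2)
x = torch.rand(1, 200, 25)                         # B x D x W after projection
print(up(x).shape)                                 # torch.Size([1, 800, 49])
print(conv_transpose1d_length(25, 1, stride=2))    # 49
print(conv_transpose1d_length(25, 2, stride=2))    # 50
```

With `kernel_size=1` and `stride=2`, the length becomes 2L − 1 and every other output position receives only the bias, leaving the following `Conv1d` to fill in; `kernel_size=2` would exactly double the length.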

In [13]:

```python
def make_ct_model(noutput=53, ct=1):
    return nn.Sequential(
        layers.Input("BDHW", sizes=[None, 1, None, None]),
        *conv2mp(50, 3),
        *conv2mp(100, 3),
        *conv2mp(150, 3),
        *conv2mp(200, 3),
        layers.Fun("lambda x: x.sum(2)"),  # BDHW -> BDW
        # ct separate upscaling layers; [layer] * ct would reuse one module (and its weights)
        *[flex.ConvTranspose1d(800, 1, stride=2) for _ in range(ct)],
        flex.Conv1d(noutput, 7, padding=3)
    )

print(make_ct_model()(torch.rand(1, 1, 60, 400)).shape)
print(make_ct_model(ct=0)(torch.rand(1, 1, 60, 400)).shape)
```

torch.Size([1, 53, 49])
torch.Size([1, 53, 25])

How well do these work?

- Works for word or text line recognition.

- All these models only require that characters are arranged left to right.

- Input images can be rotated up to around 30 degrees and scaled.

- Input images can be grayscale.

- Great for scene text and degraded documents.

But:

- You pay a price for translation/scale invariance: lower performance.

- These don't use any recurrent networks, so all information flow is strictly limited to the footprint.