SYDE 556/750: Simulating Neurobiological Systems

Terry Stewart

Action Selection and the Basal Ganglia

- When we did the Nengo Tutorial, we ended up building a simple neural system that could do one of two things:

- move in the direction it was told to move in

- or go back to where it started from

- Which one of those things it did would depend on a separate input (which we called being 'scared')

import nef

net = nef.Network('Creature')

net.make_input('command_input', [0,0])

net.make('command', neurons=100, dimensions=2)

net.make('motor', neurons=100, dimensions=2)

net.make('position', neurons=1000, dimensions=2, radius=5)

net.make('scared_direction', neurons=100, dimensions=2)

def negative(x):
    return -x[0], -x[1]

net.connect('position', 'scared_direction', func=negative)

net.connect('position', 'position')

net.make('plan', neurons=500, dimensions=5)

net.connect('command', 'plan', index_post=[0,1])

net.connect('scared_direction', 'plan', index_post=[2,3])

net.make('scared', neurons=50, dimensions=1)

net.make_input('scared_input', [0])

net.connect('scared_input', 'scared')

net.connect('scared', 'plan', index_post=[4])

def plan_function(x):
    c_x, c_y, s_x, s_y, s = x
    return s*(s_x)+(1-s)*c_x, s*(s_y)+(1-s)*c_y

net.connect('plan', 'motor', func=plan_function)

def rescale(x):
    return x[0]*0.1, x[1]*0.1

net.connect('motor', 'position', func=rescale)

net.connect('command_input', 'command')

net.add_to_nengo()

This sort of system comes up a lot in cognitive models

- A bunch of different possible actions

- Pick one of them to do

How can we do this?

In the above example, we did it like this:

- $m = (s)(s_x, s_y) + (1-s)(c_x, c_y)$

This required a 5-dimensional ensemble

- We can simplify to two 3-dimensional ensembles:

- $m_x = (s)s_x + (1-s)c_x$

- $m_y = (s)s_y + (1-s)c_y$

What about more complex situations?

- What if there are three possible actions?

- What if the actions involve different outputs?
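The blending rule above can be written out as ordinary Python, with no neurons involved, to see what the `plan` ensemble is being asked to compute. This is only an illustrative sketch; the function name `blend` and its argument names are made up for this example and are not part of Nengo.

```python
def blend(s, scared_dir, command_dir):
    """Mix two 2-D directions: s=1 follows scared_dir, s=0 follows command_dir."""
    return tuple(s * sd + (1 - s) * cd
                 for sd, cd in zip(scared_dir, command_dir))

# Halfway between the two behaviours
print(blend(0.5, (2.0, 0.0), (0.0, 2.0)))
```

Adding a third behaviour to this rule means another weight and another pair of inputs in the same ensemble, which is why the approach does not scale well.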

Action Selection and Execution

- This is known as action selection

- Often divided into two parts:

- Action selection (identifying the action to perform)

- Action execution (actually performing the action)

- Actions can be many different things

- physical movements

- moving attention

- changing contents of working memory ("1, 2, 3, 4, ...")

- recalling items from long-term memory

Action Selection

- How can we do this?

- We have a bunch of different possible actions

- go to where we started from

- go in the direction we are told to go in

- move randomly

- go towards food

- go away from predators

- Which one do we pick?

- Ideas?

Reinforcement Learning

- Let's steal an idea from reinforcement learning

- Lots of different actions, learn to pick one

- Each action has a utility $Q$ that depends on the current state $s$

- $Q(s, a)$

- Pick the action that has the largest $Q$

- Note

- Lots of different variations on this

- $V(s)$

- softmax: $p(a_i) = e^{Q(s, a_i)/T} / \sum_j e^{Q(s, a_j)/T}$

- In Reinforcement Learning research, people come up with learning algorithms for adjusting $Q$ based on rewards

- Let's not worry about that for now and just use the basic idea

- There's some sort of state $s$

- For each action $a_i$, compute $Q(s, a_i)$ which is a function that we can define

- Take the biggest
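As a point of comparison for the neural versions that follow, here is a minimal non-neural sketch of the two selection rules mentioned above: take the biggest $Q$, or turn the $Q$ values into softmax probabilities. The function names `greedy` and `softmax` and the `temperature` argument (the $T$ in the formula) are names chosen for this example.

```python
import math

def greedy(q_values):
    """Index of the action with the largest utility."""
    return max(range(len(q_values)), key=lambda i: q_values[i])

def softmax(q_values, temperature=1.0):
    """Selection probabilities p(a_i) proportional to exp(Q_i / T)."""
    top = max(q_values)  # subtracting the max avoids overflow; probabilities are unchanged
    weights = [math.exp((q - top) / temperature) for q in q_values]
    total = sum(weights)
    return [w / total for w in weights]

q = [0.2, 0.9, 0.5]
print(greedy(q))
print(softmax(q, temperature=0.05))
```

As $T$ gets small the softmax probabilities concentrate on the action that `greedy` picks, so "take the biggest" is the low-temperature limit.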

Implementation

One group of neurons to represent the state $s$

One group of neurons for each action's utility $Q(s, a_i)$

- Or one large group of neurons for all the $Q$ values

What should the output be?

- We could have $index$, which is the index $i$ of the action with the largest $Q$ value

- Or we could have something like $[0,0,1,0]$, indicating which action is selected

- Advantages and disadvantages?

The second option seems easier if we consider that we have to do action execution next...

A Simple Example

- State $s$ is 2-dimensional

- Four actions (A, B, C, D)

- Do action A if $s$ is near [1,0], B if near [-1,0], C if near [0,1], D if near [0,-1]

- $Q(s, a_A)=s \cdot [1,0]$

- $Q(s, a_B)=s \cdot [-1,0]$

- $Q(s, a_C)=s \cdot [0,1]$

- $Q(s, a_D)=s \cdot [0,-1]$
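Before building this with neurons, it may help to see the ideal answer. The sketch below computes the four dot products and the one-hot output form directly; `PREFERRED`, `utilities` and `one_hot` are names invented for this illustration.

```python
# Preferred state for each action, as listed above
PREFERRED = {"A": (1, 0), "B": (-1, 0), "C": (0, 1), "D": (0, -1)}

def utilities(s):
    """Q(s, a) = s . preferred_vector for each action."""
    return {name: s[0] * v[0] + s[1] * v[1] for name, v in PREFERRED.items()}

def one_hot(q):
    """Output in the [0,0,1,0] form: 1 for the largest Q, 0 elsewhere."""
    best = max(q, key=q.get)
    return [1 if name == best else 0 for name in q]

q = utilities((0.2, 0.9))
print(q)
print(one_hot(q))
```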

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make('Q_A', neurons=50, dimensions=1)

net.make('Q_B', neurons=50, dimensions=1)

net.make('Q_C', neurons=50, dimensions=1)

net.make('Q_D', neurons=50, dimensions=1)

net.connect('s', 'Q_A', transform=[1,0])

net.connect('s', 'Q_B', transform=[-1,0])

net.connect('s', 'Q_C', transform=[0,1])

net.connect('s', 'Q_D', transform=[0,-1])

net.make_input('input', [0,0])

net.connect('input', 's')

net.add_to_nengo()

net.view()

- It's annoying to have all those separate $Q$ neurons

- Nengo has an `array` capability to help with this

- Doesn't change the model at all

- It just groups things together for you

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_input('input', [0,0])

net.connect('input', 's')

net.add_to_nengo()

net.view()

- How do we implement the $max$ function?

- Well, it's just a function, so let's implement it

- Need to combine all the $Q$ values into one 4-dimensional ensemble

- Why?

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.make('Qall', neurons=200, dimensions=4)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

def maximum(x):
    max_x = max(x)
    result = [0,0,0,0]
    result[x.index(max_x)] = 1
    return result

net.make('Action', neurons=200, dimensions=4)

net.connect('Q', 'Qall')

net.connect('Qall', 'Action', func=maximum)

net.make_input('input', [0,0])

net.connect('input', 's')

net.add_to_nengo()

net.view()

- Hmm, that's not as good as we'd hoped

- Very nonlinear function, so neurons are not able to approximate it well

- Other options?

The Standard Neural Network Approach (modified)

- If you give this problem to a standard neural networks person, what would they do?

- They'll say this is exactly what neural networks are great at

- Implement this with mutual inhibition and self-excitation

- Neural competition

- 4 "neurons"

- have excitation from each neuron back to themselves

- have inhibition from each neuron to all the others

- Now just put in the input and wait for a while and it'll stabilize to one option

- Can we do that?

- Sure! Just replace each "neuron" with a group of neurons, and compute the desired function on those connections

- note that this is a very general method of converting any non-realistic neural model into a biologically realistic spiking neuron model

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_input('input', [0,0])

net.connect('input', 's')

e = 0.1

i = -1

transform = [[e, i, i, i], [i, e, i, i], [i, i, e, i], [i, i, i, e]]

net.connect('Q', 'Q', transform=transform)

net.add_to_nengo()

net.view()

- Oops, that's not quite right

- Why is it selecting more than one action?

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_array('Action', neurons=50, length=4, dimensions=1)

net.connect('Q', 'Action')

net.make_input('input', [0,0])

net.connect('input', 's')

e = 0.5

i = -1

transform = [[e, i, i, i], [i, e, i, i], [i, i, e, i], [i, i, i, e]]

# Let's force the feedback connection to only consider positive values

def positive(x):
    if x[0]<0: return [0]
    else: return x

net.connect('Action', 'Action', func=positive, transform=transform)

net.add_to_nengo()

net.view()

- Now we only influence other Actions when we have a positive value

- Note: Is there a more neurally efficient way to do this?

- Much better

- Selects one action reliably

- But still gives values smaller than 1.0 for the output a lot

- Can we fix that?

- What if we adjust `e`?

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_array('Action', neurons=50, length=4, dimensions=1)

net.connect('Q', 'Action')

net.make_input('input', [0,0])

net.connect('input', 's')

e = 1

i = -1

transform = [[e, i, i, i], [i, e, i, i], [i, i, e, i], [i, i, i, e]]

def positive(x):
    if x[0]<0: return [0]
    else: return x

net.connect('Action', 'Action', func=positive, transform=transform)

net.add_to_nengo()

net.view()

- Hmm, that seems to introduce a new problem

- The self-excitation is so strong that it can't respond to changes in the input

- Indeed, any method like this is going to have some form of memory effects

- Notice that what has been implemented is an integrator (sort of)

- Could we do anything to help without increasing `e` too much?

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_array('Action', neurons=50, length=4, dimensions=1)

net.connect('Q', 'Action')

net.make_input('input', [0,0])

net.connect('input', 's')

e = 0.5

i = -1

transform = [[e, i, i, i], [i, e, i, i], [i, i, e, i], [i, i, i, e]]

def positive(x):
    if x[0]<0: return [0]
    else: return x

net.connect('Action', 'Action', func=positive, transform=transform)

# Apply this function on the output

def select(x):
    if x[0]<=0: return [0]
    else: return [1]

net.make_array('ActionValue', neurons=50, length=4, dimensions=1)

net.connect('Action', 'ActionValue', func=select)

net.add_to_nengo()

net.view()

- Better behaviour

- But there are still situations where there's too much memory

- We can reduce this by reducing `e`

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_array('Action', neurons=50, length=4, dimensions=1)

net.connect('Q', 'Action')

net.make_input('input', [0,0])

net.connect('input', 's')

e = 0.2

i = -1

transform = [[e, i, i, i], [i, e, i, i], [i, i, e, i], [i, i, i, e]]

def positive(x):
    if x[0]<0: return [0]
    else: return x

net.connect('Action', 'Action', func=positive, transform=transform)

# Apply this function on the output

def select(x):
    if x[0]<=0: return [0]
    else: return [1]

net.make_array('ActionValue', neurons=50, length=4, dimensions=1)

net.connect('Action', 'ActionValue', func=select)

net.add_to_nengo()

net.view()

Much less memory, but it's still there

And much slower to respond to changes

Note that this speed is dependent on $e$, $i$, and the time constant of the neurotransmitter used

Can be hard to find good values

And this gets harder to balance as the number of actions increases

- Also hard to balance for a wide range of $Q$ values

- (Does it work for $Q$=[0.9, 0.9, 0.95, 0.9] and $Q$=[0.2, 0.2, 0.25, 0.2]?)

But this is still a pretty standard approach

- Nice and easy to get working for special cases

- Don't really need the NEF (if you're willing to assume non-realistic non-spiking neurons)

- (Although really, if you're not looking for biological realism, why not just compute the max function?)

They tend to use a "kWTA" (k-Winners Take All) approach in their models

- Set up inhibition so that only $k$ neurons will be active

- But since that's complex to do, just do the math instead of doing the inhibition

- We think that doing it their way means that the dynamics of the model will be wrong (i.e. all the effects we saw above are being ignored).

Any other options?
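The memory effect seen in the mutual-inhibition networks above can be reproduced in a much simpler, idealized rate model with no spiking neurons. The sketch below is not the Nengo model: it assumes each action is a single rectified-linear unit obeying $\tau \dot{x} = -x + Q + W\,\mathrm{relu}(x)$, where $W$ has `e` on the diagonal and `i` everywhere else, and integrates it with Euler steps. The names `settle` and `winner_after_switch`, and the values of `tau`, `dt` and `steps`, are assumptions made for this example.

```python
def relu(v):
    return v if v > 0 else 0.0

def settle(q, x, e, i=-1.0, tau=0.01, dt=0.001, steps=3000):
    """Euler-integrate tau*dx/dt = -x + q + W*relu(x), W = e on diagonal, i elsewhere."""
    x = list(x)
    for _ in range(steps):
        r = [relu(v) for v in x]
        total = sum(r)
        x = [v + dt / tau * (-v + qk + e * rk + i * (total - rk))
             for v, qk, rk in zip(x, q, r)]
    return x

def winner_after_switch(e):
    """Let action 0 win, then swap the utilities so that action 1 should win."""
    x = settle([1.0, 0.6, 0.0, 0.0], [0.0] * 4, e)
    x = settle([0.6, 1.0, 0.0, 0.0], x, e)
    return max(range(4), key=lambda k: x[k])

print(winner_after_switch(0.5))  # strong self-excitation
print(winner_after_switch(0.2))  # weak self-excitation
```

In this idealized model the old winner settles at $Q_{old}/(1-e)$, and a new action can only become active if its own $Q$ exceeds that value. With `e = 0.5` the old winner holds on even though its utility is now lower; with `e = 0.2` the network switches. The spiking versions add noise and synaptic filtering on top of this, so the exact thresholds will differ.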

Biology

- Let's look at the biology

- Where is this action selection in the brain?

- General consensus: the basal ganglia

- Pretty much all of cortex connects in to this area (via the striatum)

- Output goes to the thalamus, the central routing system of the brain

- Disorders of this area of the brain cause problems controlling actions:

- Parkinson's disease

- Neurons in the substantia nigra die off

- Extremely difficult to trigger actions to start

- Usually physical actions; as disease progresses and more of the SNc is gone, can get cognitive effects too

- Huntington's disease

- Neurons in the striatum die off

- Actions are triggered inappropriately (disinhibition)

- Small uncontrollable movements

- Trouble sequencing cognitive actions too

- Also heavily implicated in reinforcement learning

- The dopamine levels seem to map onto reward prediction error

- High levels when get an unexpected reward, low levels when didn't get a reward that was expected

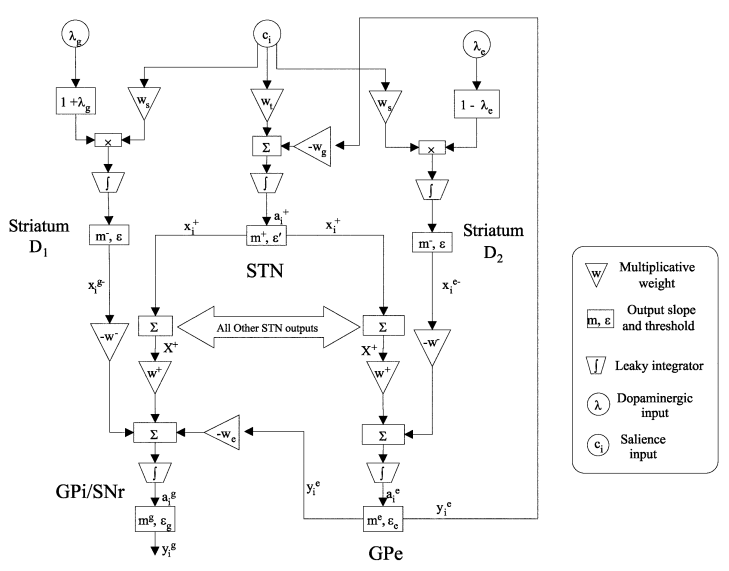

- Connectivity diagram:

Old terminology:

- "direct" pathway: cortex -> striatum -> GPi -> thalamus

- "indirect" pathway: cortex -> striatum -> GPe -> STN -> GPi -> thalamus

Then they found:

- "hyperdirect" pathway: cortex -> STN -> GPi -> thalamus

- and lots of other connections

Activity in the GPi (output)

- generally always active

- neurons stop firing when corresponding action is chosen

- representing [1, 1, 0, 1] instead of [0, 0, 1, 0]

Leabra approach

- Each action has two groups of neurons in the striatum representing $Q(s, a_i)$ and $1-Q(s, a_i)$ ("go" and "no go")

- Mutual inhibition causes only one of the "go" and one of the "no go" groups to fire

- GPi neurons get connections from "go" neurons, with value multiplied by -1 (direct pathway)

- GPi also gets connections from "no go" neurons, but multiplied by -1 (striatum->GPe), then -1 again (GPe->STN), then +1 (STN->GPi)

- Result in GPi is close to [1, 1, 0, 1] form

Seems to match onto the biology okay

- But why the weird double-inverting thing? Why not skip the GPe and STN entirely?

- And why split into "go" and "no-go"? Just the direct pathway on its own would be fine

- Maybe it's useful for some aspect of the learning...

- What about all those other connections?

An alternate model of the Basal Ganglia

Maybe the weird structure of the basal ganglia is an attempt to do action selection without doing mutual inhibition

Needs to select from a large number of actions

Needs to do so quickly, and without the memory effects

Let's start with a very simple version

Sort of like an "unrolled" version of one step of mutual inhibition

Now let's map that onto the basal ganglia

- But that's only going to work for very specific $Q$ values.

- Need to dynamically adjust the amount of +ve and -ve weighting

This turns out to work surprisingly well

But extremely hard to analyze its behaviour

They showed that it qualitatively matches pretty well

So what happens if we convert this into realistic spiking neurons?

Use the same approach where one "neuron" in their model is a pool of neurons in the NEF

The "neuron model" they used was rectified linear

- That becomes the function the decoders are computing

Neurotransmitter time constants are all known

$Q$ values are between 0 and 1

Firing rates max out around 50-100Hz

Encoders are all positive and thresholds are chosen for efficiency

mm=1

mp=1

me=1

mg=1

ws=1

wt=1

wm=1

wg=1

wp=0.9

we=0.3

e=0.2

ep=-0.25

ee=-0.2

eg=-0.2

le=0.2

lg=0.2

D = 5

tau_ampa=0.002

tau_gaba=0.008

N = 50

radius = 1.5

import nef

net = nef.Network('Basal Ganglia')

net.make_input('input', [0]*D)

net.make_array('StrD1',N, D,intercept=(e,1),encoders=[[1]],radius=radius)

net.make_array('StrD2',N, D,intercept=(e,1),encoders=[[1]],radius=radius)

net.make_array('STN',N, D,intercept=(ep,1),encoders=[[1]],radius=radius)

net.make_array('GPi',N, D,intercept=(eg,1),encoders=[[1]],radius=radius)

net.make_array('GPe',N, D,intercept=(ee,1),encoders=[[1]],radius=radius)

net.connect('input','StrD1',weight=ws*(1+lg),pstc=tau_ampa)

net.connect('input','StrD2',weight=ws*(1-le),pstc=tau_ampa)

net.connect('input','STN',weight=wt,pstc=tau_ampa)

def func_str(x):
    if x[0]<e: return 0
    return mm*(x[0]-e)

net.connect('StrD1','GPi',func=func_str,weight=-wm,pstc=tau_gaba)

net.connect('StrD2','GPe',func=func_str,weight=-wm,pstc=tau_gaba)

def func_stn(x):
    if x[0]<ep: return 0
    return mp*(x[0]-ep)

tr=[[wp]*D for i in range(D)]

net.connect('STN','GPi',func=func_stn,transform=tr,pstc=tau_ampa)

net.connect('STN','GPe',func=func_stn,transform=tr,pstc=tau_ampa)

def func_gpe(x):
    if x[0]<ee: return 0
    return me*(x[0]-ee)

net.connect('GPe','GPi',func=func_gpe,weight=-we,pstc=tau_gaba)

net.connect('GPe','STN',func=func_gpe,weight=-wg,pstc=tau_gaba)

net.make_array('Action',N, D,intercept=(0.2,1),encoders=[[1]])

net.make_input('bias', [1]*D)

net.connect('bias', 'Action')

import numeric as np

net.connect('Action', 'Action', (np.eye(D)-1), pstc=tau_gaba)

def func_gpi(x):
    if x[0]<eg: return 0
    return mg*(x[0]-eg)

net.connect('GPi','Action',func=func_gpi,pstc=tau_gaba,weight=-3)

net.add_to_nengo()

net.view()

Notice that we are also flipping the output from [1, 1, 0, 1] to [0, 0, 1, 0]

- Mostly for our convenience, but we can also add some mutual inhibition there

Works pretty well

Scales up to many actions

Selects quickly

Gets behavioural match to empirical data, including timing predictions (!)

- Also shows interesting oscillations not seen in the original GPR model

- But these are seen in the real basal ganglia

Dynamic Behaviour of a Spiking Model of Action Selection in the Basal Ganglia
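To see what the basal ganglia script above is aiming for, the steady state of the underlying rate-level equations can be computed directly. The sketch below is an idealization read off the connection list in that script: it uses the same parameter values and rectified-linear functions, but ignores spiking, synaptic dynamics and the ensemble radius. The helper names `ramp` and `gpi_output` are invented here, and solving for the total STN output by bisection is a choice made for this example, not something the original model does.

```python
# Parameter values copied from the script above
mm = mp = me = mg = 1.0
ws = wt = wm = wg = 1.0
wp, we = 0.9, 0.3
e, ep, ee, eg = 0.2, -0.25, -0.2, -0.2
le = lg = 0.2

def ramp(x, threshold, slope):
    """Rectified-linear response: zero below threshold, linear above it."""
    return slope * (x - threshold) if x > threshold else 0.0

def gpi_output(q, iterations=60):
    d1 = [ramp(ws * (1 + lg) * v, e, mm) for v in q]  # StrD1 output (to GPi)
    d2 = [ramp(ws * (1 - le) * v, e, mm) for v in q]  # StrD2 output (to GPe)

    def stn_total(s):
        # Summed STN output implied by an assumed summed STN output s
        gpe = [ramp(wp * s - wm * b, ee, me) for b in d2]
        return sum(ramp(wt * v - wg * g, ep, mp) for v, g in zip(q, gpe))

    # s - stn_total(s) never decreases with s, so bisection finds the fixed point
    lo, hi = 0.0, sum(ramp(wt * v, ep, mp) for v in q)
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if mid < stn_total(mid):
            lo = mid
        else:
            hi = mid
    s = 0.5 * (lo + hi)
    gpe = [ramp(wp * s - wm * b, ee, me) for b in d2]
    return [ramp(wp * s - wm * a - we * g, eg, mg) for a, g in zip(d1, gpe)]

print(gpi_output([0.3, 0.8, 0.4, 0.5, 0.2]))
```

For this input the GPi channel for the largest $Q$ (the second one) goes to zero while the others stay active, which is the inverted [1, 1, 0, 1] style of output described earlier.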

Let's make sure this works with our original system

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_input('input', [0,0])

net.connect('input', 's')

D = 4

net.make_array('Action', neurons=50, length=D, dimensions=1, encoders=[[1]], intercept=(0.2,1))

net.make_input('bias', [1]*D)

net.connect('bias', 'Action')

import numeric as np

net.connect('Action', 'Action', (np.eye(D)-1), pstc=0.008)

import nps

nps.basalganglia.make_basal_ganglia(net, 'Q', 'Action', D, same_neurons=False, output_weight=-3)

net.add_to_nengo()

net.view()

- This system seems to work well

- Still not perfect

- Matches biology nicely

- Some notes on the basal ganglia implementation:

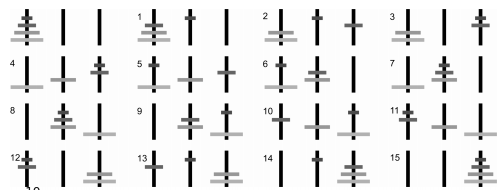

- Each action has a single "neuron" in each area that responds like this:

- These need to get turned into groups of neurons

- What is the best way to do this?

- encoders are all +1

- intercepts are chosen to be $> \epsilon$

Action Execution

- Now that we can select an action, how do we perform it?

- Depends on what the action is

- Let's start with simple actions

- Move in a given direction

- Remember a specific vector

- Send a particular value as input into a particular cognitive system

- Example:

- State $s$ is 2-dimensional

- Four actions (A, B, C, D)

- Do action A if $s$ is near [1,0], B if near [-1,0], C if near [0,1], D if near [0,-1]

- $Q(s, a_A)=s \cdot [1,0]$

- $Q(s, a_B)=s \cdot [-1,0]$

- $Q(s, a_C)=s \cdot [0,1]$

- $Q(s, a_D)=s \cdot [0,-1]$

- To do Action A, set $m=[1,0]$

- To do Action B, set $m=[-1,0]$

- To do Action C, set $m=[0,1]$

- To do Action D, set $m=[0,-1]$
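For these simple actions, execution is just a weighted sum: each selected action contributes its fixed vector to $m$. A minimal non-neural sketch of that mapping follows, where `ACTION_VECTORS` and `motor_output` are names made up for this example.

```python
# Motor vector produced by each action, in the order A, B, C, D
ACTION_VECTORS = [(1, 0), (-1, 0), (0, 1), (0, -1)]

def motor_output(action):
    """m = sum_i action[i] * vector_i, with action in the [0,0,1,0] form."""
    mx = sum(a * v[0] for a, v in zip(action, ACTION_VECTORS))
    my = sum(a * v[1] for a, v in zip(action, ACTION_VECTORS))
    return (mx, my)

print(motor_output([0, 0, 1, 0]))
```

This is what the four `transform` connections from the action ensembles to `motor` compute: each one is a column of the same linear map.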

import nef

net = nef.Network('Selection')

net.make('s', neurons=200, dimensions=2)

net.make_array('Q', neurons=50, length=4, dimensions=1)

net.connect('s', 'Q', transform=[[1,0],[-1,0],[0,1],[0,-1]])

net.make_input('input', [0,0])

net.connect('input', 's')

D = 4

net.make_array('Action', neurons=50, length=D, dimensions=1, encoders=[[1]], intercept=(0.2,1))

net.make_input('bias', [1]*D)

net.connect('bias', 'Action')

import numeric as np

net.connect('Action', 'Action', (np.eye(D)-1), pstc=0.008)

import nps

nps.basalganglia.make_basal_ganglia(net, 'Q', 'Action', D, same_neurons=False, output_weight=-3)

net.make('motor', neurons=100, dimensions=2)

net.connect('Action.0', 'motor', transform=[1,0])

net.connect('Action.1', 'motor', transform=[-1,0])

net.connect('Action.2', 'motor', transform=[0,1])

net.connect('Action.3', 'motor', transform=[0,-1])

net.add_to_nengo()

net.view()

- What about more complex actions?

- Consider the creature we were making in class

- Action 1: set $m$ to the direction we're told to go in

- Action 2: set $m$ to the direction back to where we started from

- Need to pass information from one group of neurons to another

- But only do this when the action is chosen

- How?

- Well, let's use a function

- $m = a*d$

- where $a$ is the action selection (0 for not selected, 1 for selected)

- Let's try that with the creature

import nef

net = nef.Network('Creature')

net.make_input('command_input', [0,0])

net.make('command', neurons=100, dimensions=2)

net.make('motor', neurons=100, dimensions=2)

net.make('position', neurons=1000, dimensions=2, radius=5)

net.make('scared_direction', neurons=100, dimensions=2)

def negative(x):
    return -x[0], -x[1]

net.connect('position', 'scared_direction', func=negative)

net.connect('position', 'position')

def rescale(x):
    return x[0]*0.1, x[1]*0.1

net.connect('motor', 'position', func=rescale)

net.connect('command_input', 'command')

D = 4

net.make_input('Q_input', [0]*D)

net.make_array('Q', neurons=50, length=D)

net.connect('Q_input', 'Q')

net.make_array('Action', neurons=50, length=D, dimensions=1, encoders=[[1]], intercept=(0.2,1))

net.make_input('bias', [1]*D)

net.connect('bias', 'Action')

import numeric as np

net.connect('Action', 'Action', (np.eye(D)-1), pstc=0.008)

import nps

nps.basalganglia.make_basal_ganglia(net, 'Q', 'Action', D, same_neurons=False, output_weight=-3)

net.make('do_command', 300, 3)

net.connect('command', 'do_command', index_post=[0,1])

net.connect('Action.0', 'do_command', index_post=[2])

def command(x):
    return x[2]*x[0], x[2]*x[1]

net.connect('do_command', 'motor', func=command)

net.make('do_scared', 300, 3)

net.connect('scared_direction', 'do_scared', index_post=[0,1])

net.connect('Action.1', 'do_scared', index_post=[2])

def command(x):
    return x[2]*x[0], x[2]*x[1]

net.connect('do_scared', 'motor', func=command)

net.add_to_nengo()
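The gating idea $m = a \cdot d$ used in the script above can be checked outside of Nengo. In this sketch, `gate` plays the role of the function computed by the `do_command` and `do_scared` ensembles, and `motor` sums the two gated signals the way the `motor` ensemble sums its inputs. Both function names and their argument names are invented for this example.

```python
def gate(a, d):
    """Pass the vector d through when a is 1, block it when a is 0."""
    return tuple(a * component for component in d)

def motor(a_command, command, a_scared, scared_direction):
    """Sum of the gated signals, as received by the motor ensemble."""
    routed = [gate(a_command, command), gate(a_scared, scared_direction)]
    return tuple(sum(parts) for parts in zip(*routed))

print(motor(1, (0.5, -0.5), 0, (1.0, 1.0)))  # command action selected
print(motor(0, (0.5, -0.5), 1, (1.0, 1.0)))  # scared action selected
```

Because the selection values from the spiking model are rarely exactly 0 or 1, the neural version passes a scaled copy of the vector rather than a perfect one.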

- There's also another way to do this

- A special case for forcing a function to go to zero when a particular group of neurons is active

import nef

net = nef.Network('Creature')

net.make_input('command_input', [0,0])

net.make('command', neurons=100, dimensions=2)

net.make('motor', neurons=100, dimensions=2)

net.make('position', neurons=1000, dimensions=2, radius=5)

net.make('scared_direction', neurons=100, dimensions=2)

def negative(x):
    return -x[0], -x[1]

net.connect('position', 'scared_direction', func=negative)

net.connect('position', 'position')

def rescale(x):
    return x[0]*0.1, x[1]*0.1

net.connect('motor', 'position', func=rescale)

net.connect('command_input', 'command')

D = 4

net.make_input('Q_input', [0]*D)

net.make_array('Q', neurons=50, length=D)

net.connect('Q_input', 'Q')

net.make_array('Action', neurons=50, length=D, dimensions=1, encoders=[[1]], intercept=(0.2,1))

net.make_input('bias', [1]*D)

net.connect('bias', 'Action')

import numeric as np

net.connect('Action', 'Action', (np.eye(D)-1), pstc=0.008)

import nps

nps.basalganglia.make_basal_ganglia(net, 'Q', 'Action', D, same_neurons=False, output_weight=-3)

net.make('do_command', 200, 2)

net.connect('command', 'do_command')

net.connect('do_command', 'motor')

net.connect('GPi.0', 'do_command', encoders=-10)

net.make('do_scared', 300, 2)

net.connect('scared_direction', 'do_scared')

net.connect('do_scared', 'motor')

net.connect('GPi.1', 'do_scared', encoders=-10)

net.add_to_nengo()

- This is a situation where it makes sense to ignore the NEF!

- All we want to do is shut down the neural activity

- So just do a very inhibitory connection

- We can also think of this as changing the encoders for the target pool of neurons

The Cortex-Basal Ganglia-Thalamus loop

We now have everything we need for a model of one of the primary structures in the mammalian brain

- Basal ganglia: action selection

- Thalamus: action execution

- Cortex: everything else

We build systems in cortex that give some input-output functionality

- We set up the basal ganglia and thalamus to make use of that functionality appropriately

Example

- Cortex stores some state (integrator)

- Add some state transition rules

- If in state A, go to state B

- If in state B, go to state C

- If in state C, go to state D

- ...

- For now, let's just have states A, B, C, D, etc be some randomly chosen vectors

- $Q(s, a_i) = s \cdot a_i$

- The effect of each action is to input the corresponding vector into the integrator

- This sort of model is going to get complicated, so we've added a wrapper to help build everything:

from spa import *

D=16

# Define the rules by specifying the start state and the desired next state
class Rules:
    def A(state='A'):           # e.g. If in state A
        set(state='B')          # then go to state B
    def B(state='B'):
        set(state='C')
    def C(state='C'):
        set(state='D')
    def D(state='D'):
        set(state='E')
    def E(state='E'):
        set(state='A')

# Define an SPA model (cortex, basal ganglia, thalamus)
class Sequence(SPA):
    dimensions=16
    state=Buffer()              # Create a working memory (recurrent network) object: i.e. a Buffer
    BG=BasalGanglia(Rules())    # Create a basal ganglia with the prespecified set of rules
    thal=Thalamus(BG)           # Create a thalamus for that basal ganglia (so it uses the same rules)

seq=Sequence()
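Stripped of neurons, the rule set above amounts to a lookup over randomly chosen vectors: compute $Q(s, a_i) = s \cdot a_i$ for every rule, pick the largest, and load that rule's target vector into the state. The following sketch shows that idealized loop in plain Python. It is not how the `spa` module is implemented; `random_unit_vector`, `run_sequence`, the vocabulary construction and the `seed` argument are all assumptions made for illustration.

```python
import math
import random

def random_unit_vector(d, rng):
    v = [rng.gauss(0, 1) for _ in range(d)]
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def run_sequence(start, steps, dimensions=16, seed=0):
    rng = random.Random(seed)
    names = ["A", "B", "C", "D", "E"]
    vocab = {n: random_unit_vector(dimensions, rng) for n in names}
    next_state = {"A": "B", "B": "C", "C": "D", "D": "E", "E": "A"}
    state = vocab[start]
    visited = [start]
    for _ in range(steps):
        q = {n: dot(state, vocab[n]) for n in names}  # utility of each rule
        chosen = max(q, key=q.get)                    # action selection
        state = vocab[next_state[chosen]]             # action execution
        visited.append(next_state[chosen])
    return visited

print(run_sequence("D", 5))
```

The neural model differs in that the state changes gradually through the integrator, so for a while the utilities of the current and next rule overlap.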

- Can also provide some sort of input to start things off

from spa import *

D=16

# Define the rules by specifying the start state and the desired next state
class Rules:
    def A(state='A'):           # e.g. If in state A
        set(state='B')          # then go to state B
    def B(state='B'):
        set(state='C')
    def C(state='C'):
        set(state='D')
    def D(state='D'):
        set(state='E')
    def E(state='E'):
        set(state='A')

# Define an SPA model (cortex, basal ganglia, thalamus)
class Sequence(SPA):
    dimensions=16
    state=Buffer()              # Create a working memory (recurrent network) object: i.e. a Buffer
    BG=BasalGanglia(Rules())    # Create a basal ganglia with the prespecified set of rules
    thal=Thalamus(BG)           # Create a thalamus for that basal ganglia (so it uses the same rules)
    input=Input(0.1,state='D')  # Define an input; set the input to state D for 100 ms

seq=Sequence()

- But that's all using the simple actions

- What about an action that involves taking information from one neural system and sending it to another?

- Let's have a separate visual state and use it to put information into the changing state

from spa import *

D=16

# Define the rules by specifying the start state and the desired next state
class Rules:
    def start(vision='(A+B+C+D+E)*2'):
        set(state=vision)
    def A(state='A'):           # e.g. If in state A
        set(state='B')          # then go to state B
    def B(state='B'):
        set(state='C')
    def C(state='C'):
        set(state='D')
    def D(state='D'):
        set(state='E')
    def E(state='E'):
        set(state='A')

# Define an SPA model (cortex, basal ganglia, thalamus)
class Routing(SPA):
    dimensions=16
    state=Buffer()              # Create a working memory (recurrent network) object: i.e. a Buffer
    vision=Buffer(feedback=0)   # Create a cortical network object with no recurrence
                                # (so no memory properties, just transient states)
    BG=BasalGanglia(Rules())    # Create a basal ganglia with the prespecified set of rules
    thal=Thalamus(BG)           # Create a thalamus for that basal ganglia (so it uses the same rules)
    input=Input(0.1,vision='D')

model=Routing()

Behavioural Evidence

- So this lets us build more complex models

- Is there any evidence that this is the way it works in brains?

- Consistent with anatomy/connectivity

- What about behavioural?

- Sort of

- Timing data

- How long does it take to do an action?

- There are lots of existing computational (non-neural) cognitive models that have something like this action selection loop

- Usually all-symbolic

- A set of IF-THEN rules

- e.g. ACT-R

- Used to model mental arithmetic, driving a car, using a GUI, air-traffic control, staffing a battleship, etc etc

- Best fit across all these situations is to set the loop time to 50ms

- how long does this model take?

- Notice that all the timing is based on neural properties, not the algorithm

- Dominated by the longer neurotransmitter time constants in the basal ganglia

- This is in the right ballpark

- But what about this distinction between the two types of actions?

- Not a distinction made in the literature

- But once we start looking for it, lots of evidence

- Resolves an outstanding weirdness where some actions seem to take twice as long as others

- Starting to be lots of citations for 40ms for simple tasks

- This is a nice example of the usefulness of making neural models!

- This distinction wasn't obvious from computational implementations

More complex tasks

- Lots of complex tasks can be modelled this way

- Some basic cognitive components (cortex)

- action selection system (basal ganglia and thalamus)

- The tricky part is figuring out the actions

- Example: the Tower of Hanoi task

- 3 pegs

- N disks of different sizes on the pegs

- move from one configuration to another

- can only move one disk at a time

- no larger disk can be on a smaller disk

- can we build rules to do this?

- How do people do this task?

- Studied extensively by cognitive scientists

- Simon (1975):

1. Find the largest disk not in its goal position and make the goal to get it in that position. This is the initial “goal move” for purposes of the next two steps. If all disks are in their goal positions, the problem is solved.

2. If there are any disks blocking the goal move, find the largest blocking disk (either on top of the disk to be moved or at the destination peg) and make the new goal move to move this blocking disk to the other peg (i.e., the peg that is neither the source nor destination of this disk). The previous goal move is stored as the parent goal of the new goal move. Repeat this step with the new goal move.

3. If there are no disks blocking the goal move, perform the goal move and (a) if the goal move had a parent goal, retrieve that parent goal, make it the goal move, and go back to step 2; (b) if the goal had no parent goal, go back to step 1.
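The strategy attributed to Simon (1975) above can be written as a short symbolic program, which is useful as a reference when designing the neural action rules. This is a plain-Python sketch of those three steps, not the neural model: parent goals are kept on an explicit stack, and the names `PEGS` and `simon_solve` and the dictionary representation of the puzzle are choices made for this example.

```python
PEGS = ("A", "B", "C")

def simon_solve(position, goal):
    """position and goal map disk number (0 = smallest) to a peg name."""
    position = dict(position)
    moves = []
    while True:
        # Step 1: the largest misplaced disk defines the top-level goal move.
        misplaced = [d for d in position if position[d] != goal[d]]
        if not misplaced:
            return moves
        top = max(misplaced)
        stack = [(top, goal[top])]  # parent goals sit lower in the stack
        while stack:
            disk, dest = stack[-1]
            src = position[disk]
            # Step 2: smaller disks on the source or destination peg block the move.
            blockers = [d for d in position
                        if d < disk and position[d] in (src, dest)]
            if blockers:
                spare = [p for p in PEGS if p not in (src, dest)][0]
                stack.append((max(blockers), spare))
            else:
                # Step 3: nothing in the way, so move and return to the parent goal.
                position[disk] = dest
                moves.append((disk, src, dest))
                stack.pop()

start = {0: "A", 1: "A", 2: "A"}
target = {0: "C", 1: "C", 2: "C"}
for move in simon_solve(start, target):
    print(move)
```

For three disks stacked on peg A with peg C as the goal, this produces the familiar seven-move solution. The stack is the part that has no obvious counterpart in the state variables listed below, which is where remembering parent goals becomes a modelling question.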

What do the actions look like?

State:

- `goal`: what disk am I trying to move (D0, D1, D2)

- `focus`: what disk am I looking at (D0, D1, D2)

- `goal_peg`: where is the disk I am trying to move (A, B, C)

- `focus_peg`: where is the disk I am looking at (A, B, C)

- `target_peg`: where am I trying to move a disk to (A, B, C)

- `goal_final`: what is the overall final desired location of the disk I'm trying to move (A, B, C)

Note: we're not yet modelling all the sensory and memory stuff here, so we manually set things like `goal_final`.

Action effects: when an action is selected, it could do the following

- set `focus`

- set `goal`

- set `goal_peg`

- actually try to move a disk to a given location by setting `move` and `move_peg`

- Note: we're also not modelling the full motor system, so we fake this too

Is this sufficient to implement the algorithm described above?

- What do the action rules look like?

- if `focus`=NONE then `focus`=D2, `goal`=D2, `goal_peg`=`goal_final`

- $Q$=`focus` $\cdot$ NONE

- if `focus`=D2 and `goal`=D2 and `goal_peg`!=`target_peg` then `focus`=D1

- $Q$=`focus` $\cdot$ D2 + `goal` $\cdot$ D2 - `goal_peg` $\cdot$ `target_peg`

- if `focus`=D2 and `goal`=D2 and `goal_peg`==`target_peg` then `focus`=D1, `goal`=D1, `goal_peg`=`goal_final`

- if `focus`=D1 and `goal`=D1 and `goal_peg`!=`target_peg` then `focus`=D0

- if `focus`=D1 and `goal`=D1 and `goal_peg`==`target_peg` then `focus`=D0, `goal`=D0, `goal_peg`=`goal_final`

- if `focus`=D0 and `goal_peg`==`target_peg` then `focus`=NONE

- if `focus`=D0 and `goal`=D0 and `goal_peg`!=`target_peg` then `focus`=NONE, `move`=D0, `move_peg`=`target_peg`

- if `focus`!=`goal` and `focus_peg`==`goal_peg` and `target_peg`!=`focus_peg` then `goal`=`focus`, `goal_peg`=A+B+C-`target_peg`-`focus_peg`

- trying to move something, but smaller disk is on top of this one

- if `focus`!=`goal` and `focus_peg`!=`goal_peg` and `target_peg`==`focus_peg` then `goal`=`focus`, `goal_peg`=A+B+C-`target_peg`-`goal_peg`

- trying to move something, but smaller disk is on top of target peg

- if `focus`=D0 and `goal`!=D0 and `target_peg`!=`focus_peg` and `target_peg`!=`goal_peg` and `focus_peg`!=`goal_peg` then `move`=`goal`, `move_peg`=`target_peg`

- move the disk, since there's nothing in the way

- if `focus`=D1 and `goal`!=D1 and `target_peg`!=`focus_peg` and `target_peg`!=`goal_peg` and `focus_peg`!=`goal_peg` then `focus`=D0

- check the next disk
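To make the utility expressions concrete, here is how the $Q$ for the rule "focus=D2 and goal=D2 and goal_peg!=target_peg" could be evaluated when every symbol is a vector. For simplicity this sketch assumes the symbols are exactly orthogonal unit vectors (one-hot), whereas the model uses randomly chosen vectors that are only approximately orthogonal. `SYMBOLS`, `VOCAB` and `q_focus_next` are names invented for the example.

```python
SYMBOLS = ["NONE", "D0", "D1", "D2", "A", "B", "C"]
# Assumption for this sketch: each symbol is a distinct one-hot vector
VOCAB = {name: [1.0 if k == j else 0.0 for k in range(len(SYMBOLS))]
         for j, name in enumerate(SYMBOLS)}

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def q_focus_next(state):
    """Q = focus.D2 + goal.D2 - goal_peg.target_peg"""
    return (dot(state["focus"], VOCAB["D2"])
            + dot(state["goal"], VOCAB["D2"])
            - dot(state["goal_peg"], state["target_peg"]))

not_yet_there = {"focus": VOCAB["D2"], "goal": VOCAB["D2"],
                 "goal_peg": VOCAB["A"], "target_peg": VOCAB["C"]}
already_there = dict(not_yet_there, goal_peg=VOCAB["C"])
print(q_focus_next(not_yet_there), q_focus_next(already_there))
```

Equality tests add a dot product and inequality tests subtract one, so the rule scores highest when all of its conditions hold and drops when the pegs match.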

- Sufficient to solve any version of the problem

- Is it what people do?

- How can we tell?

Do science

- What predictions does the theory make

- Errors?

- Reaction times?

- Neural activity?

- fMRI?

Timing:

- not quite

- much longer pauses in some situations than there should be

- At those stages, it is recomputing plans that it has made previously

- People probably remember those, and don't restart from scratch

- Need to add that into the model

- Neural Cognitive Modelling: A Biologically Constrained Spiking Neuron Model of the Tower of Hanoi Task