Data Driven Modeling¶

### PhD seminar series at Chair for Computer Aided Architectural Design (CAAD), ETH Zurich

Topics to be discussed¶

- Markov Chains

In [1]:

import warnings

warnings.filterwarnings("ignore")

import pandas as pd

import numpy as np

from matplotlib import pyplot as plt

pd.__version__

import sys

from scipy import stats

import time

import pysparse

from scipy.linalg import norm

%matplotlib inline

Markov Chains¶

Introduced by Andrei Markov in 1906¶

His original work was on the modeling of stochastic systems, where we are interested to study the sequence of random events.¶

famously he did his work on the sequence of charachters in a language.¶

His work is one of the earilest data driven models of the langugae.¶

Nevertheless, he didn't succeed as his model is data and computation intensive.¶

Later it was used extensively to study dynamic (stochastic) systems.¶

Recently, it was used and inspired a lot of data driven representation works.¶

We discuss Markov Chains from the following aspects:¶

- From the point of view of dynamical systems

- From the point of view of object representation

- Properties and applications

- Extensions to machine learning applications

Random walk¶

In [83]:

direction

state=0

all_states= [0]

all_walks= []

n_time = 1000

for i in range(n_time):

walk = np.random.choice([-1,1],size=1)[0]

state = state + walk

all_states.append(state)

all_walks.append(walk)

plt.plot(range(n_time+1),all_states,'o-',color='gray')

Out[83]:

[<matplotlib.lines.Line2D at 0x164b94690>]

here, each state is only possible from only two other states (states+-1).¶

Therefore, the current state as a random variable depends only on the previous state. more formally:¶

This is called Markov property¶

Now in principle, if we approximate all the one-to-one transition probabilites, we can represent it in a directed graph or in a Matrix format.¶

Markov Chians¶

Usually we have a matrix, called transition matrix, M¶

Where M has certain conditions:¶

- Matrix M has equal rows and columns: M is nxn

- ** n is the number of states in the markov chain**

- Row (column) Stochastic: Sum of rows (columns) are equal to one

- ** non-negative elements**

- ** There is always a directed graph associated to M**

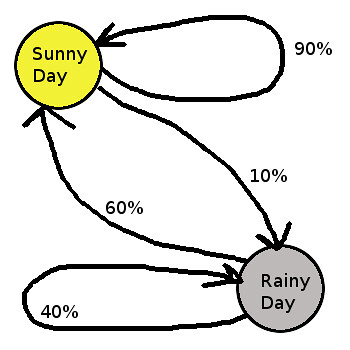

A very basic example, with two states¶

In [85]:

# state space: Rainy, sunny

M =np.asarray([[.9,.1],[.6,.4]])

print(np.matrix(M))

print

# any arbitrary initial point

x = np.asarray([0,1])

print "next probable state:"

print x.dot(M)

[[ 0.9 0.1] [ 0.6 0.4]] next probable state: [ 0.6 0.4]

In [86]:

print x

for i in range(20):

x = x.dot(M)

print x

print x.shape

[0 1] [ 0.6 0.4] [ 0.78 0.22] [ 0.834 0.166] [ 0.8502 0.1498] [ 0.85506 0.14494] [ 0.856518 0.143482] [ 0.8569554 0.1430446] [ 0.85708662 0.14291338] [ 0.85712599 0.14287401] [ 0.8571378 0.1428622] [ 0.85714134 0.14285866] [ 0.8571424 0.1428576] [ 0.85714272 0.14285728] [ 0.85714282 0.14285718] [ 0.85714284 0.14285716] [ 0.85714285 0.14285715] [ 0.85714286 0.14285714] [ 0.85714286 0.14285714] [ 0.85714286 0.14285714] [ 0.85714286 0.14285714] (2,)

¶

How to look at Markov chain: Probabilistic model or a deterministic model?¶

Cell values as conditional probabilities:¶

Cell values as the the rates of a linear equation system¶

$$x_i(t) = x_i(t-1).M_{1,i} + ... +x_i(t-1).M_{n,i} $$¶

Imagine now our states are the numbers of people in two countries, who are migrating to the other country.¶

In this sense, Markov chain is a system of linear equations.¶

This is the case, in many applications: flow of money in an economic network, flow of cars, flow of energy, flow of water, flow of web surfers, ...¶

Why it is called a linear operator:¶

Because it freezes the dynamical system with a probabilistic matrix.¶

On macro level it is deterministic but at the Micro (individual) level is pure probabilistic¶

¶

Usual Assumptions:¶

- ** Time homogeneous transition matrix **

- ** Finite states **

- ** Discrete-time**

¶

Now the data driven part:¶

Of course, if we have the underlying rules (i.e. transition probabilites), we can use markov chain properties, but...¶

The beauty of Markov chain is that it is super easy to be constructed from data

We only need sequential observations¶

Only basic operations such as addition and multiplication are required.¶

And it gets better with more data!!!¶

how to construct the Markov Chain?¶

In [77]:

def buildTM_from_sequential_data(data,states,irreducible=True):

# each row is a sequence of observation

n = len(states)

M = np.zeros((n,n))

for d in data:

for k in range(1,len(d)):

i = d[k-1]

j = d[k]

M[i,j]= M[i,j] + 1

eps = .001

for i in range(M.shape[0]):

s= sum(M[i])

if s==0:

if irreducible==True:

M[i]=eps

M[i,i]=1.

s= sum(M[i])

M[i]=np.divide(M[i],s)

else:

M[i,i]=1.

else:

M[i]=np.divide(M[i],s)

return M

# Power iteration Method

def simulate_markov(TM,verbose='on'):

e1 = time.time()

states_n = TM.shape[0]

pi = np.ones(states_n); pi1 = np.zeros(states_n);

pi = np.random.rand(states_n)

pi = pi/pi.sum()

n = norm(pi - pi1); i = 0;

diff = []

while n > 1e-6 and i <1*1e4 :

pi1 = TM.T.dot(pi).copy()

n = norm(pi - pi1); i += 1

diff.append(n)

pi = pi1.copy()

if verbose=='on':

print "Iterating {} times in {}".format(i, time.time() - e1)

mixing_ = i

return pi1,mixing_

In [89]:

# M =np.asarray([[1,.0],[.8,.2]])

sz = 4

n_state = 6

data = np.random.randint(0,high=n_state,size=sz).reshape(1,sz)

states = np.arange(0,n_state)

# If it is irreducible, it will have a steady state alway, other wise, it might oscilate

M = buildTM_from_sequential_data(data,states,irreducible=False)

print(np.matrix(M))

print

# any arbitrary initial point

x = np.asarray([0,1])

x = np.random.rand(len(states))

x = x/x.sum()

all_x = []

all_x.append(x)

for i in range(20):

x = x.dot(M)

all_x.append(x)

print x

all_x = np.asarray(all_x)

for i in range(all_x.shape[1]):

plt.plot(range(len(all_x)),all_x[:,i])

[[ 0. 0. 0. 0. 0. 1.] [ 0. 1. 0. 0. 0. 0.] [ 0. 0. 1. 0. 0. 0.] [ 0. 0. 0. 1. 0. 0.] [ 1. 0. 0. 0. 0. 0.] [ 0. 0. 0. 0. 1. 0.]] [ 0.20000934 0.18171897 0.17723441 0.30698769 0.08627169 0.04777791]

In [9]:

import numpy as np

from scipy import integrate

from matplotlib import pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

from matplotlib.colors import cnames

from matplotlib import animation

%matplotlib inline

N_trajectories = 1000

def lorentz_deriv((x, y, z), t0, sigma=10., beta=8./3, rho=28.0):

"""Compute the time-derivative of a Lorentz system."""

return [sigma * (y - x), x * (rho - z) - y, x * y - beta * z]

# Choose random starting points, uniformly distributed

x0 = -10 + 30 * np.random.random((N_trajectories, 3))

# Solve for the trajectories

t = np.linspace(0, 5, 500)

x_t = np.asarray([integrate.odeint(lorentz_deriv, x0i, t)

for x0i in x0])

# this is just one dimension

for i in range(100):

plt.plot(x_t[i,:,0])

State Space explosion¶

One important problem of Markov chains is the so called state space explosion.¶

One possible solution would be to learn the high dimensional manifold using methods such as SOM and index that space with low dimensional indices. Then, compute Markov Chain with the new indexes.¶

Some early tries I did:¶

Vahid, Moosavi, Computing With Contextual Numbers. arXiv preprint arXiv:1408.0889. (2014).

In [90]:

# Here, we assume one dimensional state space

# Coarse graining and descretizing the state space

data = x_t[:,:,0]/1

data = data.astype(int)

print np.unique(data).shape

data = data-np.min(data)

data = data[:,range(0,data.shape[1],5)]

print np.unique(data)

print data.shape

for i in range(100):

plt.plot(data[i,:])

(50,) [ 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49] (1000, 100)

In [91]:

# frequency of visited states

f_data = data.flatten()

plt.hist(f_data,normed=True,bins=51);

In [93]:

# Now let's buid a markov chain out of this data

n_state = np.unique(data).shape[0]

states = np.arange(0,n_state)

M = buildTM_from_sequential_data(data,states,irreducible=False)

print(np.matrix(M))

[[ 0. 0. 0. ..., 0. 0. 0. ] [ 0. 0. 0. ..., 0. 0. 0. ] [ 0. 0.03448276 0.10344828 ..., 0. 0. 0. ] ..., [ 0. 0. 0. ..., 0. 0.03846154 0. ] [ 0. 0. 0. ..., 0. 0. 0. ] [ 0. 0. 0. ..., 0. 0. 0. ]]

In [94]:

# The meaning of steady state distribution

# How to interpret this?

pi, mixing_t = simulate_markov(M,verbose='on')

plt.plot(pi)

Iterating 379 times in 0.00762104988098

Out[94]:

[<matplotlib.lines.Line2D at 0x1649bbad0>]

But now if we want to see the dynamics of a single trajectory¶

In [95]:

# any arbitrary initial point: here, it means a one-hot vector. initially only one state is one and the rest is one

x = np.asarray([0,1])

ind_initial = np.random.randint(0,n_state,size=1)

print ind_initial

x = np.zeros((1,n_state))

x[0,ind_initial] = 1

# print x

x = x/x.sum()

all_x = []

all_x.append(x)

for i in range(100):

x = x.dot(M)

all_x.append(x)

all_x = np.asarray(all_x)

[15]

Because of the probabilistic nature of Markov Chain, it calculates all the possible combinations together.¶

In [96]:

state_pic = all_x[:,0,:].copy()

plt.imshow(state_pic.T[::-1]);

In [99]:

all_inds = []

ind_initial = np.random.randint(0,n_state,size=1)

ind = ind_initial[0]

all_inds.append(ind)

for i in range(100):

ind = np.random.choice(range(M.shape[0]),size=1,p=M[ind])[0]

all_inds.append(ind)

In [100]:

plt.plot(all_inds)

plt.ylim((0,n_state))

Out[100]:

(0, 50)

Important points:¶

If we look at the problem of single trajectory, we have the problem of time series prediction.¶

In fact, this Markov chain is providing a very naive time series model, where the next step is provided only based on the current state.¶

For this purpose, there are much better methods¶

Therefore, it is not recommended to use a Markov chain for the prediction of a single path.¶

On the other hand, Markov chain has very interesting features, if one is interested in the overal macroscopic properties of dynamical systems, for example in:¶

- Water flow

- ** Traffic Dynamics**

- Economic networks

- ** WWW**

- ** Electricity Network**

- Flight dynamics

Some of the very interesting properties of Markov chains:¶

- Steady state probabilities

- Clustering the state space

- Mixing times

- Recurrence time

- Mean first passage time

- Kemeny Constant

- Sensitivity analysis of the transition matrix

First Example:¶

Transportation Dynamics¶

Vahid, Moosavi and Ludger Hovestadt. “Modeling urban traffic dynamics in coexistence with urban data streams.” Proceedings of the 2nd ACM SIGKDD International Workshop on Urban Computing. ACM, 2013. https://vahidmoosavi.files.wordpress.com/2013/09/modeling-urban-traffic-dynamics-in-coexistence-with-urban-data-streams.pdf

the basic idea is that we start with a collection of traces of cars across a city.¶

This is the only data we use for modeling the dynamics of traffic in a city¶

In [22]:

from IPython.display import YouTubeVideo

YouTubeVideo('0aQxJgHknGs',width=700, height=600)

Out[22]:

In [23]:

from IPython.display import YouTubeVideo

YouTubeVideo('VQ1f312SVqg',width=700, height=600)

Out[23]:

In [24]:

from IPython.display import YouTubeVideo

YouTubeVideo('D6XTyLbO13w',width=700, height=600)

Out[24]:

The proposed Markov model from input-output table¶

Here, we assume two economies each with one industry¶

However, considering the changes in the values of Input-output models, we have a kind of time varying markov chain. Similarly, we assume¶

we have a time series of stationary markov chains, where for each year we fit one markov chain¶

In this experiment, we have focused on 41 economies (countries) with 35 industries within each economy interacting with other industries and economies¶

Robert Solow 1952 had talked about the relationships between Leontief input-output models and markov chains as their algebraic formalism¶

In [25]:

economy_n = 41

industry_n = 35

header = pd.read_csv('./Data/WIOD/header.csv',header=None)

economy_names =header.values[1,range(0,1435,35)]

industry_names =header.values[0,range(0,35)]

Countries =pd.read_csv("./Data/WIOD/Economies_Names.csv")

VA = pd.read_csv('./Data/WIOD/VAs.csv',index_col=[0])

In [26]:

WIO = list()

for i in range(1995,2012):

d = pd.read_csv('./Data/WIOD/wiot'+str(i)+'_row_apr12.csv',header=[0,1,2])

WIO.append(d.values[:])

print i, d.shape

1995 (1435, 1640) 1996 (1435, 1640) 1997 (1435, 1640) 1998 (1435, 1640) 1999 (1435, 1640) 2000 (1435, 1640) 2001 (1435, 1640) 2002 (1435, 1640) 2003 (1435, 1640) 2004 (1435, 1640) 2005 (1435, 1640) 2006 (1435, 1640) 2007 (1435, 1640) 2008 (1435, 1640) 2009 (1435, 1640) 2010 (1435, 1640) 2011 (1435, 1640)

Using Power Iteration to calculate the steady state probabilities, Pi¶

In [27]:

def aggregate_economy_int_consumptions(WIOT,VA_y):

economy_n = 41

industry_n = 35

economy_int_consumptions_n =1

economy_produsction_costs_n=1

states_n = economy_n*industry_n+economy_n*1+industry_n*1

economy_income = np.zeros((economy_n*industry_n,economy_n))

economy_int_consumptions = np.zeros((economy_n*industry_n,economy_n))

ind = economy_n*industry_n

for i in range(economy_n):

col = i*5+ind

economy_int_consumptions[:,i]=WIOT[:,col:col+5].sum(axis=1)

#I don't know why but sometimes it gets a bit negative?!!! due to change in inventory (5th column of economy consum)

economy_int_consumptions[economy_int_consumptions<0]=0

industry_produsction_costs = np.around(WIOT[:].sum(axis=1)-WIOT[:,:economy_n*industry_n].sum(axis=0)-VA_y,decimals=3)

return economy_int_consumptions,industry_produsction_costs

def build_markov_chain_Dense(Q,WIOT,economy_n,industry_n,economy_int_consumptions,industry_produsction_costs):

e0 = time.time()

eps = .001

# economy_n = 41

# industry_n = 35

# economy_int_consumptions_n =1

# economy_produsction_costs_n=1

for i in range(0,economy_n*industry_n):

#For industry interactions

for j in range(0,economy_n*industry_n):

Q[i,j]=WIOT[j,i]

#For payments of industry to its economy: This is the source of income for the economy

economy_ind_industry = i/industry_n

Q[i,economy_n*industry_n+economy_ind_industry]=industry_produsction_costs[i]

#For economy's costs (i.e. consumptions)

ind_economy_inTM = economy_n*industry_n

for j in range(economy_n):

Q[j+ind_economy_inTM,i]=economy_int_consumptions[i,j]

singular_industries = []

for i in range(Q.shape[0]):

s= sum(Q[i])

if s==0:

singular_industries.append(i%economy_n)

Q[i]=eps

Q[i,i]=1.

s= sum(Q[i])

Q[i]=np.divide(Q[i],s)

else:

Q[i]=np.divide(Q[i],s)

print "Making the TM in {} second".format(time.time() - e0)

# print np.unique(singular_industries)

# print "Transition Matrix is ready!"

return np.around(Q,decimals=10),list(np.unique(singular_industries))

def simulate_markov(TM,verbose='on'):

e1 = time.time()

states_n = TM.shape[0]

pi = np.ones(states_n); pi1 = np.zeros(states_n);

pi = np.random.rand(states_n)

# pi[np.random.randint(1,high=size-1, size=1000)] = 1;

# pi[np.random.randint(1,high=states_n, size=int(.1*states_n))] = np.random.rand(int(.1*states_n));

pi = pi/pi.sum()

# print pi.shape

# print pi.sum()

# pi[range(int(.1*size))] = 1;

n = norm(pi - pi1); i = 0;

diff = []

while n > 1e-6 and i <1*1e4 :

pi1 = TM.T.dot(pi).copy()

n = norm(pi - pi1); i += 1

diff.append(n)

pi = pi1.copy()

if verbose=='on':

print "Iterating {} times in {}".format(i, time.time() - e1)

mixing_ = i

return pi1,mixing_

In [28]:

Pi =[]

TMs = []

Mixing_times =[]

singular_ids = []

fig3 = plt.figure(figsize=(15,7))

for i,WIOT in enumerate(WIO):

VA_y = VA.values[i]

VA_y = np.zeros(VA.values[0].shape[0])

economy_int_consumptions,industry_produsction_costs = aggregate_economy_int_consumptions(WIOT,VA_y)

# economy_int_consumptions,industry_produsction_costs = aggregate_economy_int_consumptions(WIOT)

economy_n = 41

industry_n = 35

states_n = economy_n*industry_n+economy_n

# Q = pysparse.spmatrix.ll_mat(states_n,states_n)

TM = np.zeros((states_n,states_n))

TM, singular_id = build_markov_chain_Dense(TM,WIOT,economy_n,industry_n,economy_int_consumptions,industry_produsction_costs)

TMs.append(TM)

singular_ids.extend(singular_id)

t,mixing_ = simulate_markov(TM)

Pi.append(t)

Mixing_times.append(mixing_)

singular_ids = np.unique(singular_ids)

# pl = plt.plot(t)

# pl = plt.plot(t[economy_n*industry_n:])

# p =plt.xticks(range(economy_n),economy_names,rotation=45)

Making the TM in 0.859048843384 second Iterating 289 times in 0.165277957916 Making the TM in 0.868260860443 second Iterating 257 times in 0.144299030304 Making the TM in 0.852555036545 second Iterating 251 times in 0.139472007751 Making the TM in 0.857586860657 second Iterating 259 times in 0.146198034286 Making the TM in 0.861138820648 second Iterating 262 times in 0.148849010468 Making the TM in 0.867842912674 second Iterating 240 times in 0.133607149124 Making the TM in 0.876348972321 second Iterating 228 times in 0.131730079651 Making the TM in 0.848108053207 second Iterating 229 times in 0.130686044693 Making the TM in 0.880218029022 second Iterating 222 times in 0.123178958893 Making the TM in 0.846520900726 second Iterating 208 times in 0.11580991745 Making the TM in 0.85072183609 second Iterating 190 times in 0.115315914154 Making the TM in 0.870454072952 second Iterating 172 times in 0.0955309867859 Making the TM in 0.870944023132 second Iterating 166 times in 0.0922381877899 Making the TM in 0.849496126175 second Iterating 157 times in 0.0873749256134 Making the TM in 0.855029821396 second Iterating 191 times in 0.109220027924 Making the TM in 0.882319927216 second Iterating 173 times in 0.097767829895 Making the TM in 0.84793305397 second Iterating 158 times in 0.0903940200806

<matplotlib.figure.Figure at 0x11d619090>

In [29]:

#MIXING TIME: TIME OR ITERATIONS REQUIRED TO REACH TO STEADY STATE. THIS IS A DIRECT MEASURE TO SHOW HOW CONNECTED THE

#NETWORK IS. MORE MODULAR NETWORK MORE ITERATIONS REQUIRED TO REACH TO STEADY STATE

fig = plt.figure(figsize=(10,7))

plt.plot(Mixing_times,"*-")

label = range(1995,2012)

# plt.title('Mixing Times')

plt.xticks(range(17),label,rotation=90)

plt.xlabel('Year')

plt.ylabel('Mixing Time of the Corresponding Markov Chain')

plt.grid()

#Why every year the TM reaches to steady state faster?

4- Kemeny Constant¶

Kemeny Constant is indicating a similar aspect to what mixing time offers. Kemeny constant in the context of economic network shows the inverse of average speed of monetary flow (i.e. the average number of steps that one unit of money is taking to start from any given state to any other state in a stationary condition). Astonishingly, this value is fixed for a markov chain with fixed transition matrix.¶

If Kemeny constant is high this says we have less connected network, since there are less chances to go from one node in a community to another node in another community. Therefore, the average time will increase.¶

In [30]:

def Kemeny_constant(MC):

from scipy.linalg import eig

eigval, vl, vr = eig(MC, left=True)

eigval = np.real(eigval)

vl = np.real(vl)

eigvec_1 = vl[:,np.argmax(np.abs(eigval))]/vl[:,np.argmax(np.abs(eigval))].sum()

ind = np.around(eigval,decimals=8)!=1

# print ind

# print eigval

return np.divide(1,(1-eigval[ind])).sum(),eigvec_1

In [31]:

Kemenys = []

i = 0

for TM in TMs:

K, pi = Kemeny_constant(TM)

Kemenys.append(K)

i+=1

print i

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17

In [32]:

fig = plt.figure(figsize=(10,7))

plt.plot(Kemenys,'o-')

label = range(1995,2012)

plt.xticks(range(17),label,rotation=90)

plt.grid()

plt.xlabel('Year')

plt.ylabel('Kemeny Constant of the Corresponding Markov Chain')

Out[32]:

<matplotlib.text.Text at 0x102b782d0>

In [33]:

names = []

for i,eco in enumerate(Countries.Country):

for j,ind in enumerate(industry_names):

names.append(eco+"-"+ind)

for i,eco in enumerate(Countries.Country):

names.append(eco+"-"+"Government")

print len(names)

Data_all = np.asarray(Pi).T

what_to_plot = Data_all

# fig = plt.figure(figsize=(15,7))

# np.argsort(what_to_plot[:industry_n*economy_n][:,2])[::-1]

DF = pd.DataFrame(data=what_to_plot[:economy_n*industry_n],columns=range(1995,2012),index=names[:economy_n*industry_n])

DF = DF.sort_values([2011],ascending=False)

DF.head()

ax = DF.T.plot(DF.T.index,DF.T.columns[:20],xticks=range(1995,2012),rot=45)

fig = ax.get_figure()

ax.legend(bbox_to_anchor=(1.77,1.021))

fig.set_size_inches(15,7)

plt.tight_layout()

font = {'size' : 12}

plt.rc('font', **font)

1476

In [34]:

plt.title('world total GDP')

# plt.plot(range(1995,2012),GDP_USD_Selected_Countries.ix[:,5:22].sum(axis=0),'o-r')

plt.plot(range(1995,2012),VA.sum(axis=1),'o-r')

plt.grid()

GDP trends of Economies¶

In [35]:

Data_all = np.asarray(Pi).T

# Data_all = Data_all[:economy_n*industry_n]

fig = plt.figure(figsize=(15,15))

GDP_shares = np.zeros((economy_n,17))

Economies_shares = np.zeros((economy_n,17))

for i in range(economy_n):

ind_indusrty = range(i*industry_n,(i+1)*industry_n)

GDP_shares[i]=VA.values[:,ind_indusrty].sum(axis=1)

plt.subplot(7,6,i+1)

plt.title(Countries.Country[i])

plt.plot(range(17),GDP_shares[i],'-r',linewidth=1.2,label='GDP of Economy')

label = range(1995,2012)

plt.xticks(range(17),label,rotation=90)

plt.tight_layout()

plt.grid()

plt.legend(bbox_to_anchor=(3.19,1.022))

# fig.savefig(path,dpi=100)

Out[35]:

<matplotlib.legend.Legend at 0x11e95a410>

GDP share trends¶

In [36]:

fig = plt.figure(figsize=(15,15))

GDP_shares = np.zeros((economy_n,17))

Economies_shares = np.zeros((economy_n,17))

for i in range(economy_n):

ind_indusrty = range(i*industry_n,(i+1)*industry_n)

GDP_shares[i]=VA.values[:,ind_indusrty].sum(axis=1)/VA.values[:].sum(axis=1)

plt.subplot(7,6,i+1)

plt.title(Countries.Country[i])

plt.plot(range(17),GDP_shares[i],'-r',linewidth=1.2,label='GDP Share of Economy')

label = range(1995,2012)

plt.xticks(range(17),label,rotation=90)

plt.tight_layout()

plt.grid()

plt.legend(bbox_to_anchor=(3.19,1.022))

# fig.savefig(path,dpi=100)

Out[36]:

<matplotlib.legend.Legend at 0x11b6dbdd0>

In [37]:

Data_all = np.asarray(Pi).T

# Data_all = Data_all[:economy_n*industry_n]

fig = plt.figure(figsize=(15,15))

Economies_shares = np.zeros((economy_n,17))

for i in range(economy_n):

plt.subplot(7,6,i+1)

plt.title(Countries.Country[i])

ind_indusrty = range(i*industry_n,(i+1)*industry_n)+ [industry_n*economy_n+i]

Economies_shares[i]=Data_all[ind_indusrty].T.sum(axis=1)

plt.plot(range(17),Economies_shares[i],linewidth=1.2,label='Aggregated Structural Power of Economy')

# plt.plot(range(17),GDP_shares[i],'-r',linewidth=1.2,label='GDP Share of Economy')

label = range(1995,2012)

plt.xticks(range(17),label,rotation=90)

plt.tight_layout()

plt.grid()

plt.legend(bbox_to_anchor=(3.19,1.022))

Out[37]:

<matplotlib.legend.Legend at 0x154930490>

In [38]:

#GPD share and Pi share

Data_all = np.asarray(Pi).T

# Data_all = Data_all[:economy_n*industry_n]

fig = plt.figure(figsize=(15,15))

#To remove Taiwan from the list, since its GDP is not known based on UN DB

ind_economies_UN = range(38)+[39]

GDP_shares = np.zeros((economy_n,17))

Economies_shares = np.zeros((economy_n,17))

Counties_names_in_UN = []

for i in range(economy_n):

ind_indusrty = range(i*industry_n,(i+1)*industry_n)

GDP_shares[i]=VA.values[:,ind_indusrty].sum(axis=1)/VA.values[:].sum(axis=1)

plt.subplot(7,6,i+1)

plt.title(Countries.Country[i])

ind_indusrty = range(i*industry_n,(i+1)*industry_n)+ [industry_n*economy_n+i]

Economies_shares[i]=Data_all[ind_indusrty].T.sum(axis=1)

plt.plot(range(17),Economies_shares[i],linewidth=1.2,label='Aggregated Structural Power of Economy')

plt.plot(range(17),GDP_shares[i],'-r',linewidth=1.2,label='GDP Share of Economy')

y1 = Economies_shares[i]

y2 = GDP_shares[i]

x = range(17)

plt.fill_between(x, y1, y2, where=y2 >= y1, facecolor='red',alpha=.5, interpolate=True)

plt.fill_between(x, y1, y2, where= y2 <= y1, facecolor='blue', alpha=.5,interpolate=True)

label = range(1995,2012)

plt.xticks(range(17),label,rotation=90)

plt.tight_layout()

plt.grid()

plt.legend(bbox_to_anchor=(3.19,1.022))

Out[38]:

<matplotlib.legend.Legend at 0x1575d1990>

In [39]:

fig = plt.figure(figsize=(18,18))

for c in range(economy_n):

plt.subplot(7,6,c+1)

plt.title(Countries.Country[c])

y = Economies_shares[c,:].copy()

x = GDP_shares[c,:].copy()

color = y/x

min_x = np.min(x)

min_y = np.min(y)

min_min = np.min([min_x,min_y])

max_x = np.max(x)

max_y = np.max(y)

max_max = np.max([max_x,max_y])

eps = .02

plt.plot(x,y,linewidth=.2)

plt.scatter(x,y,vmin=.5,vmax=1.8,c=color,s=40,marker='o',edgecolor='None', cmap='RdYlBu' ,alpha=1)

plt.annotate(1995, size=10,xy = (x[0],y[0]), xytext = (0, 0),

textcoords = 'offset points', ha = 'right', va = 'bottom')

plt.annotate(2011, size=10,xy = (x[16],y[16]), xytext = (0, 0),

textcoords = 'offset points', ha = 'right', va = 'bottom')

# #Linear line

plt.plot([min_min,max_max],[min_min,max_max],'-b',linewidth=.2)

plt.ylim([min_min*(1-eps),max_max*(1+eps)])

plt.xlim([min_min*(1-eps),max_max*(1+eps)])

plt.xlabel('GDP Share of Economy')

plt.ylabel('Aggregated Structural Power of Economy')

plt.grid()

plt.tight_layout()

font = {'size' : 8}

plt.rc('font', **font)

In [40]:

def update_by_momentum(series,span,pred_period):

result = [] # first value is same as series

vals = series[-span-1:]

for n in range(pred_period):

momentum_avg = np.mean(np.diff(vals))

result.append(vals[-1] +momentum_avg )

vals[-1]= result[-1]

return result

fig = plt.figure(figsize=(12,8))

min_x = np.min(Economies_shares[:,:]/GDP_shares[:,:])

min_y = np.min(GDP_shares[:,:])

max_x = np.max(Economies_shares[:,:]/GDP_shares[:,:])

max_y = np.max(GDP_shares[:,:])

eps = .02

for c in range(economy_n):

# plt.subplot(7,6,c+1)

# plt.title(Counties_names_in_UN[c])

x = Economies_shares[c,:]

color = Economies_shares[c,:]/GDP_shares[c,:]

x = range(17)

# color = range(17)

y = GDP_shares[c,:]

y = Economies_shares[c,:]

y = Economies_shares[c,:]/GDP_shares[c,:]

min_x = np.min(Economies_shares[c,:])

min_y = np.min(GDP_shares[c,:])

min_min = np.min([min_x,min_y])

max_x = np.max(Economies_shares[c,:])

max_y = np.max(GDP_shares[c,:])

max_max = np.max([max_x,max_y])

eps = .02

# lim = max(max(y),max(x))

# lim_min = min(min(y),min(x))

# eps = .05*lim

ax = plt.gca()

pred_period = 5

col = 0

if Countries.Country[c] in ["Brazil","Russia","China","India"]:

ax.plot(x,y,'-',linewidth=1.2)

all_preds = []

for span in range(3,7):

series = y.copy()

y_pred =update_by_momentum(series,span,pred_period)

all_preds.append(y_pred)

y_pred = list(y[-1:]) + y_pred

x_pred = x[-1:]+ range(17,17+pred_period)

ax.plot(x_pred,y_pred,'-r',linewidth=0.2)

y_pred = list(y[-1:]) + list(np.median(all_preds,axis=0))

x_pred = x[-1:]+ range(17,17+pred_period)

ax.plot(x_pred,y_pred,'--r',linewidth=1.2)

ax.scatter(x,y,vmax=1.7,vmin=0.5,c=color,s=20,marker='.',edgecolor='None', cmap='RdYlBu' ,alpha=1)

ax.annotate(Countries.Country[c], size=10,xy = (x[16],y[-1]+.002), xytext = (0, 0),

textcoords = 'offset points', ha = 'right', va = 'bottom')

else:

ax.plot(x,y,'gray',linewidth=.3)

# ax.set_yscale('log')

# ax.set_xscale('log')

# #Linear line

# ax.plot([min_min,max_max],[min_min,max_max],'-b',linewidth=.2)

ax.plot([0,16+pred_period],[1,1],'--k',linewidth=.2)

plt.ylim([.25,2])

plt.xlim([0,16+pred_period])

plt.ylabel('Aggregated Structural Power of Economy Over GDP Share of Economy\n (Structural Potential of Economy)')

plt.xlabel('Year')

plt.xticks(range(17+pred_period),range(1995,2012+pred_period), rotation='vertical')

# plt.xticks([])

# plt.yticks([])

plt.grid()

plt.tight_layout()

font = {'size' : 10}

plt.rc('font', **font)

Sensitivity Analysis and Systemic Risks¶

What if we slightly change the values of the Markov chains?¶

We defined two measures as "Systemic Influence" and "Systemic Fragility", which measure how many times economies influence and get influenced by (from) the changes of the economies activities change.¶

For example, we slow down the activity of one inducstry by changing the corresponding values in the row and columns of the Markov chain and then, compare the steady state probabilities before and after change¶

In [41]:

# We have precomputed them before

whichyearnetwork = 2011

How_much_change = -99

Kemeny_Change_PCTpath = "./Data/WIOD/Kemeny_Change_PCT_How_much_change_"+str(How_much_change)+"_year_"+str(whichyearnetwork)+".csv"

Kemeny_Change_PCT = pd.read_csv(Kemeny_Change_PCTpath,index_col=0)

pi_perturbedpath = "./Data/WIOD/pi_perturbed_How_much_change_"+str(How_much_change)+"_year_"+str(whichyearnetwork)+".csv"

pi_perturbed = pd.read_csv(pi_perturbedpath,index_col=0)

pi_normalpath = "./Data/WIOD/pi_normal_How_much_change_"+str(How_much_change)+"_year_"+str(whichyearnetwork)+".csv"

pi_normal = pd.read_csv(pi_normalpath,index_col=0)

pi_diff_path ="./Data/WIOD/PerturbationOnIndustries_Change_PCT_How_much_change_"+str(How_much_change)+"_year_"+str(whichyearnetwork)+".csv"

pi_diff = pd.read_csv(pi_diff_path,index_col=0)

In [42]:

#Systemic Influence Vs. Systemice Fragility

#Threshold of change in pct

thresh = .5

#Systemic Influence

no_of_pos_affecting = (pi_diff.values[:,:]>thresh).sum(axis=1)

no_of_neg_affecting = (pi_diff.values[:,:]<-1*thresh).sum(axis=1)

s = float(industry_n*economy_n)

ind_pos_affecting = pi_diff.values[:,:]>thresh

ind_neg_affecting = pi_diff.values[:,:]<-1*thresh

pos_affecting = np.zeros(pi_diff.shape[0])

neg_affecting = np.zeros(pi_diff.shape[0])

for i in range(pi_diff.shape[0]):

pos_affecting[i] = pi_diff.values[i,ind_pos_affecting[i]].dot(pi_normal.values[0,:][ind_pos_affecting[i]])

neg_affecting[i] = pi_diff.values[i,ind_neg_affecting[i]].dot(pi_normal.values[0,:][ind_neg_affecting[i]])

#We want to take out the effect each node on itself

self_change = np.diag(pi_diff.values[:,:industry_n*economy_n])*pi_normal.values[0,:economy_n*industry_n]

# - np.abs(self_change)

sum_of_affecting = np.abs(neg_affecting) + pos_affecting - np.abs(self_change)

pi_norm = pi_normal.values[0,:industry_n*economy_n]

systemic_influence = (no_of_pos_affecting+no_of_neg_affecting)/s

#Systemic Fragility

no_of_pos_affected = (pi_diff.values[:,:industry_n*economy_n]>thresh).sum(axis=0)

no_of_neg_affected = (pi_diff.values[:,:industry_n*economy_n]<-1*thresh).sum(axis=0)

# s = float(pi_diff.values[:,:].shape[0])

ind_pos_affected = pi_diff.values[:,:]>thresh

ind_neg_affected = pi_diff.values[:,:]<-1*thresh

pos_affected = np.zeros(industry_n*economy_n)

neg_affected = np.zeros(industry_n*economy_n)

for i in range(industry_n*economy_n):

pos_affected[i] = pi_diff.values[ind_pos_affected[:,i],i].sum()*pi_normal.values[0,i]

neg_affected[i] = pi_diff.values[ind_neg_affected[:,i],i].sum()*pi_normal.values[0,i]

######################

systemic_fragility = (no_of_pos_affected+no_of_neg_affected)/s

sum_of_affected = np.abs(neg_affected) + pos_affected - np.abs(self_change)

fig = plt.figure(figsize=(10,7))

plt.subplot(1,1,1)

ax = plt.gca()

x = systemic_fragility

y = systemic_influence

p= ax.scatter(x,y,c=pi_norm,s=pi_norm*15000,marker='o',edgecolor='gray', cmap=plt.cm.RdYlBu_r ,alpha=1)

ax.set_xscale('log')

plt.plot(x,y,'ob',markersize=1.3,alpha=.99)

plt.ylabel('systemic influence (absolute change > {}%)'.format(thresh),color='darkblue')

plt.xlabel('systemic fragility (absolute change > {}%)'.format(thresh),color='darkblue')

cb =plt.colorbar(p,shrink=.6,pad=.02)

cb.set_label('Eigen Centrality')

eps= .022

xmin = x.min() - eps*.1

xmax = x.max() + eps*.05

ymin = y.min() - eps*.4

ymax = y.max() + eps

plt.xlim(xmin,xmax)

plt.ylim(ymin,ymax)

font = {'size' : 14.}

plt.rc('font', **font)

plt.legend(bbox_to_anchor=(.91,1.0))

plt.tight_layout()

In [43]:

fig = plt.figure(figsize=(14,12))

plt.subplot(2,2,1)

ax = plt.gca()

y = systemic_influence

x = pi_norm

p= ax.scatter(x,y,c=pi_norm,s=pi_norm*15000,marker='o',edgecolor='gray', cmap=plt.cm.RdYlBu_r ,alpha=1)

plt.plot(x,y,'ob',markersize=1.3,alpha=.99)

plt.xlabel('log scale-Eigen Centrality',color='darkblue')

plt.ylabel('systemic influence (absolute change > {}%)'.format(thresh),color='darkblue')

cb =plt.colorbar(p,shrink=.6,pad=.02)

cb.set_label('Eigen Centrality')

eps= .022

xmin = x.min() - eps*.1

xmax = x.max() + eps*.05

ymin = y.min() - eps*.4

ymax = y.max() + eps

plt.xlim(xmin,xmax)

plt.ylim(ymin,ymax)

plt.subplot(2,2,2)

ax = plt.gca()

y = systemic_fragility

x = pi_norm

p= ax.scatter(x,y,c=pi_norm,s=pi_norm*15000,marker='o',edgecolor='gray', cmap=plt.cm.RdYlBu_r ,alpha=1)

plt.plot(x,y,'ob',markersize=1.3,alpha=.99)

plt.ylabel('systemic fragility (absolute change > {}%)'.format(thresh),color='darkblue')

plt.xlabel('log scale-Eigen Centrality',color='darkblue')

cb =plt.colorbar(p,shrink=.6,pad=.02)

cb.set_label('Eigen Centrality')

eps= .022

xmin = x.min() - eps*.1

xmax = x.max() + eps*.05

ymin = y.min() - eps*.4

ymax = y.max() + eps

plt.xlim(xmin,xmax)

plt.ylim(ymin,ymax)

font = {'size' : 8.}

plt.rc('font', **font)

plt.legend(bbox_to_anchor=(.91,1.0))

plt.tight_layout()

In [44]:

fig = plt.figure(figsize=(10,7))

plt.subplot(1,1,1)

ax = plt.gca()

y = Kemeny_Change_PCT.values[:]

x = pi_norm

p= ax.scatter(x,y,c=pi_norm,s=pi_norm*15000,marker='o',edgecolor='gray', cmap=plt.cm.RdYlBu_r ,alpha=1)

ax.set_xscale('log')

plt.plot(x,y,'ob',markersize=1.3,alpha=.99)

plt.xlabel('log scale-Eigen Centrality',color='darkblue')

plt.ylabel('Percent of Change in Kemeny Constant',color='darkblue')

cb =plt.colorbar(p,shrink=.6,pad=.02)

cb.set_label('log scale-Eigen Centrality')

eps= .022

xmin = x.min() - eps*.1

xmax = x.max() + eps*.05

ymin = y.min() - eps*.4

ymax = y.max() + eps

plt.xlim(xmin,xmax)

plt.ylim(ymin,ymax)

font = {'size' : 14.}

plt.rc('font', **font)

plt.legend(bbox_to_anchor=(.91,1.0))

plt.tight_layout()

In [101]:

names = []

for i,eco in enumerate(Countries.Country):

for j,ind in enumerate(industry_names):

names.append(eco+"-"+ind)

# for i,eco in enumerate(Countries.Country):

# names.append(eco+"-"+"Government")

Data = np.concatenate((pi_norm[:,np.newaxis],systemic_influence[:,np.newaxis],systemic_fragility[:,np.newaxis],Kemeny_Change_PCT.values[:]),axis=1)

cols = [['Eigen Centrality','Systemic Influence','Systemic Fragility','Pct of chng Kemeny']]

DF = pd.DataFrame(data=Data,index = names, columns=cols)

DF = DF.sort_values('Systemic Fragility',ascending=True)

# DF = DF.sort_values('Pct of chng Kemeny',ascending=True)

DF.ix[DF.index[:10]]

Out[101]:

| Eigen Centrality | Systemic Influence | Systemic Fragility | Pct of chng Kemeny | |

|---|---|---|---|---|

| Finland-Electrical and Optical Equipment | 0.000138 | 0.077352 | 0.043902 | 0.027277 |

| Rest Of World-Chemicals and Chemical Products | 0.001948 | 0.793728 | 0.045993 | 0.352296 |

| Taiwan-Manufacturing, Nec; Recycling | 0.000034 | 0.022300 | 0.046690 | 0.008769 |

| Rest Of World-Coke, Refined Petroleum and Nuclear Fuel | 0.001756 | 0.712892 | 0.047387 | 0.330850 |

| USA-Electrical and Optical Equipment | 0.002326 | 0.971429 | 0.049477 | 0.164367 |

| Germany-Chemicals and Chemical Products | 0.001110 | 0.708711 | 0.049477 | 0.208808 |

| Germany-Machinery, Nec | 0.001831 | 0.716376 | 0.049477 | 0.254888 |

| UK-Electrical and Optical Equipment | 0.000292 | 0.080139 | 0.049477 | 0.050286 |

| Germany-Electrical and Optical Equipment | 0.001540 | 0.776307 | 0.050174 | 0.229635 |

| Ireland-Machinery, Nec | 0.000013 | 0.015331 | 0.050871 | 0.002057 |

Clustering the network¶

Web application demo