1. LU for band matrices (5 pts)¶

The complexity to find an LU decomposition of a dense $n\times n$ matrix is $\mathcal{O}(n^3)$. Significant reduction in complexity can be achieved if the matrix has a certain structure, e.g. it is sparse. In the following task we consider an important example of $LU$ for a special type of sparse matrices –– tridiagonal matrices.

- Find the number of operations to compute an $LU$ decomposition of a tridiagonal matrix taking into account only non-zero elements. How many nonzero elements are in factors $L$, $U$ and where are they located? Conclude what is the complexity to solve a linear system with tridiagonal matrix in terms of $n$.

2. Completing the proof of existence of LU (10 pts)¶

Some details in lecture proofs about $LU$ were omitted. Let us complete them.

- Prove that if $LU$ decomposition exists, then matrix is strictly regular.

- Prove that if $A$ is strictly regular, then $A_1 = D - \frac 1a b c^T$ (see lectures for notations) is also strictly regular.

3. Stability of LU (10 pts)¶

Let $A = \begin{pmatrix} a & 1 & 0\\ 1 & 1 & 1 \\ 0 & 1 & 1 \end{pmatrix}.$

- Find analytically an $LU$ decomposition of the matrix $A$.

- For what values of $a$ does the LU decomposition of $A$ exist?

- Explain, why can the LU decomposition fail to approximate factors $L$ and $U$ for $|a|\ll 1$ in computer arithmetic?

How can this problem be solved?

4. Block LU (5 pts)¶

Let $A = \begin{bmatrix} A_{11} & A_{12} \\ A_{21} & A_{22} \end{bmatrix}$ be a block matrix. The goal is to solve the linear system $$ \begin{bmatrix} A_{11} & A_{12} \\ A_{21} & A_{22} \end{bmatrix} \begin{bmatrix} u_1 \\ u_2 \end{bmatrix} = \begin{bmatrix} f_1 \\ f_2 \end{bmatrix}. $$

- Using block elimination find matrix $S$ and right-hand side $f_2$ so that $u_2$ can be found from $S u_2 = f_2$. Note that the matrix $S$ is called Schur complement of the block $A_{11}$.

Problem 2 (QR decomposition)¶

20 pts¶

1. Standard Gram-Schmidt algorithm (10 pts)¶

Our goal now is to orthogonalize a system of linearly independent vectors $v_1,\dots,v_n$. The standard algorithm for the task is the Gram-Schmidt algorithm:

$$ \begin{split} u_1 &= v_1, \\ u_2 &= v_2 - \frac{(v_2, u_1)}{(u_1, u_1)} u_1, \\ \dots \\ u_n &= v_n - \frac{(v_n, u_1)}{(u_1, u_1)} u_1 - \frac{(v_n, u_2)}{(u_2, u_2)} u_2 - \dots - \frac{(v_n, u_{n-1})}{(u_{n-1}, u_{n-1})} u_{n-1}. \end{split} $$Now $u_1, \dots, u_n$ are orthogonal vectors in exact arithmetics. Then to get orthonormal system you should divide each of the vectors by its norm: $u_i := u_i/\|u_i\|$. The Gram-Schidt process can be viewed as a QR decomposition. Let us show that.

Write out what is $Q$ and $R$ obtained in the process described.

Implement the described Gram-Schmidt algorithm as a function

gram_schmidt(A), which outputsQ,Rand check it on a random $100\times 100$ matrix $B.$ Print out the error.

Note: To check orthogonality calculate the matrix of scalar products $G_{ij} = (u_i, u_j)$ (called Gram matrix of set of vectors $u_1,\dots, u_n$) which should be equal to the identity matrix $I.$ Error $\|G - I\|_2$ will show you how far is the system $u_i$ from orthonormal.

- Create a Hilbert matrix $A$ of size $100\times 100$ without using loops.

Othogonalize its columns by the described Gram-Schmidt algorithm. Is the Gram matrix close to the identity matrix in this case? Why?

The observed loss of orthogonality is a problem of this particular algorithm. To avoid it modified Gram-Schmidt algorithm, QR via Householder reflections or Givens rotations can be used.

# INPUT : rectangular matrix A

# OUTPUT: matrices Q - orthogonal and R - upper triangular such that A=QR

def gram_schmidt(A): # 5 pts

# enter your code here

return Q, R

2. Householder QR (10 pts)¶

Implement Householder QR decomposition as a function

householder_qr(A)which outputsQ,R. Apply it to the matrix $B$ created above. Print out the error.Apply it to the Hilbert matrix $A$ created in the first part of the problem and print out the error. Consider how stable is Householder compared to Gram-Schmidt.

# INPUT : rectangular matrix A

# OUTPUT: matrices Q - orthogonal and R - upper triangular such that A=QR

def householder_qr(A): # 7 pts

# enter your code here

return Q, R

Problem 3 (Low-rank decompositions)¶

45 pts¶

1. Theoretical tasks (15 pts)¶

Prove that for any Hermitian matrix, singular values equal to absolute value of eigenvalues. Does this hold for a general matrix? Prove or provide a counterexample.

Find analytically a skeleton decomposition of the matrix of size $n\times m$ with elements $a_{ij} = \sin i + \sin j$.

Let $A\in\mathbb{C}^{n\times m}$ be of rank $r$ and let $A = U\Sigma V^*$ be its SVD. Prove that $\mathrm{im}(A^*) = \mathrm{span}\{v_1,\dots, v_r\}$, where $V = [v_1, \dots, v_n]$.

2. Recommender system using SVD (30 pts)¶

In this task you are asked to build a simple movie recommender system based on collaborative filtering approach and SVD. Collaborative filtering implies that you build recommendations based on the feedback of other users given in a matrix $\mathbf{M}$ of users vs. movies. If a user $i$ watched a movie $j$ and rated it, say, as $3$ out of $5$, then the value $3$ is the corresponding matrix entry, i.e. $\mathbf{M}_{i,j}=3$. If a user did not watch a movie, then we put $0$ as a matrix element, i.e. $\mathbf{M}=0$. Hence, the matrix is sparse.

Task 1. Building the core of recommender (15 pts)¶

Build representation of users and movies in the latent factors space with the help of SVD.

We test the SVD model on a Movielens 10M dataset. Download the dataset using python functions provided in the following Jupyter notebook.

Is it possible to use

np.linalg.svdfunction to calculate SVD of the downloaded matrices on your laptop? Provide an estimate.Implement function

tr_svdso that it computes truncated SVD usingscipy.linalg.svds:- Be aware that

scipyreturns singular values in ascending order (see the docs). - Sort all your svd data in descending (by singular values) order without breaking the result.

- Be aware that

Fix the rank of approximation and compute truncated SVD with

tr_svdof the training set of the dataset. Plot the obtained singular values. Can you tell from the plot whether the data has a low-rank structure? Give your intuition, why it happens?Write the function

top_nwhich takes user as a row of his/her ratings (including non-rated films, i.e. just a row from the train\test set), integer number $N$ and returns array of indices which correspond to $N$ highest ratings. Use functionnp.argsort().Pick several users at random from the training set. Compare their top-10 films and top-10, suggested by your model ($A_k = U_k \Sigma_k V_k^T$). Comment on the result. Note: you can run all tests in this task with $k=25$.

# INPUT: A: scipy.sparse.csr_matrix (N_train x N_films), k - integer

# OUTPUT: U - np.array (N_train x k), S - np.array (k x k), Vh - np.array (k x N_films)

def tr_svd(A, k): # 5 pts

# enter your code here

return U, S, Vh

# INPUT: user - np.array (N_films,), N - integer

# OUTPUT: np.array (N,)

def top_n(user, N): # 2 pts

# enter your code here

return top_n_list

Task 2. Evaluating performance of the recommender (15 pts)¶

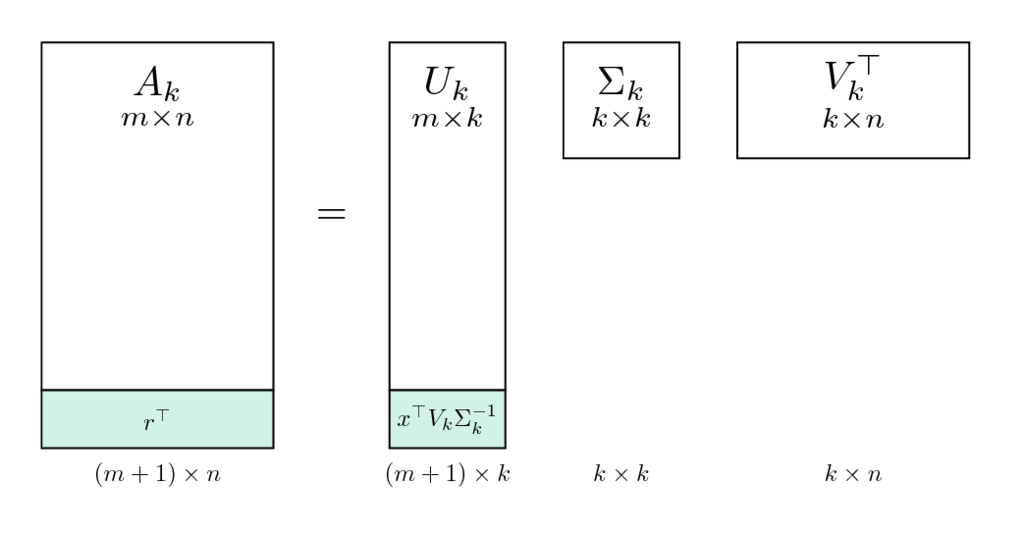

Suppose, we trained our model (obtain $U_k, \Sigma_k, V^T_k$ from $A_{train}$). Let's evaluate it! For this purpose we have $A_{test}$ (recall the function split_data). And our goal is to obtain vectors of recommendation $r$ for each user (row) in the test set ($A_{test}$). But there is no need to recompute the whole SVD for each user. We have the tool, which is called folding-in for recommender systems.

Folding-in technique¶

A new user can be considered as an update to the original matrix (appending new row). Appending a row in the original matrix corresponds to appending a row into the users latent factors matrix $U_k$ in the SVD decomposition. Since we do not want to recompute the SVD, we project the new user on the space of found latent factors $V_k$, which spans the row space of matrix $A_k = U_k \Sigma_k V^T_k$ (recall the problem from the theoretical tasks). The orthoprojection on this space is $P = V_kV_k^T$ (check that it satisfies definition of orthoprojection, i.e. $P^2=P$, $P^T = P$).

Thus, the recommendation vector $r$ for a new user $x$ (considered as a column vector) can be written as

$$ r = V_kV_k^T x. $$Computing the total score¶

You have to iterate over all users in the test set and make the following steps:

- obtain vector $x$, which is the same as user row, but the last $N = 3$ rated films should be filled with zeroes. Example:

- compute the folding-in prediction $r$:

- Obtain top-3 from $user$ (truth) and top-3 from $r$ (prediction). The number of films appearing simultaneously in both top-3's should be added to the

total_score. Write the corresponding functiontotal_score_folding, which takes the sparse test matrix $A_{test}$, $V_k$ from truncated SVD of $A_{train}$ and compute the total score.

Example:

| $user$ | $recommendation$ |

|---|---|

| (1,2,3) | (10,2,1) |

| (34, 27, 69) | (69, 5, 9) |

| (7,6,4) | (8,9,2) |

total_score = 2 + 1 + 0 = 3.

# INPUT: V - np.array(N_films, k), test_data - scipy.sparse.csr_matrix (N_train x N_films)

# OTPUT: total_score - integer

def total_score_folding(V, test_data): # 8 pts

# enter you code here

return total_score

Task 3 (bonus) Fine-tuning your model¶

- Try to find the rank that produces the best evaluation score.

- Plot the dependency of evaluation score on the rank of SVD for all your trials in one graph.

- Report the best result and the corresponding SVD rank.

- Compare your model with the non-personalized recommender which simply recommends top-3 movies with highest average ratings.

Note, that you don't have to recompute SVD to evaluate your model. You might compute once relatively large ($k =500$) truncated SVD and then just use submatrices of it.

Optionally: You may want to test your parameters with different data splittings in order to minimize the risk of local effects. You're also free to add modifications to your code for producing better results. Report what modificatons you've done and what effect it had if any.

Problem 4 (eigenvalues)¶

55 pts¶

1. Theoretical tasks (10 pts)¶

Prove that normal matrix is Hermitian iff its eigenvalues are real. Prove that normal matrix is unitary iff its eigenvalues satisfy $|\lambda| = 1$.

The following problem illustrates instability of the Jordan form. Find theoretically the eigenvalues of the perturbed Jordan block:

Comment how eigenvalues of $J(0)$ are perturbed for large $n$.

2. PageRank (30 pts)¶

Damping factor importance¶

Write the function

pagerank_matrix(G)that takes an adjacency matrix $G$ as an input and outputs the corresponding PageRank matrix $A$.Find PageRank matrix $A$ that corresponds to the following graph:

What is its largest eigenvalue? What multiplicity does it have?

Implement the power method for a given matrix $A$, an initial guess $x_0$ and a number of iterations

num_iter. It should be organized as a functionpower_method(A, x0, num_iter)that outputs approximation to eigenvector $x$, eigenvalue $\lambda$ and history of residuals $\{\|Ax_k - \lambda_k x_k\|_2\}$. Make sure that the method conveges to the correct solution on a matrix $\begin{bmatrix} 2 & -1 \\ -1 & 2 \end{bmatrix}$ which is known to have the largest eigenvalue equal to $3$.Run the power method for the graph presented above and plot residuals $\|Ax_k - \lambda_k x_k\|_2$ as a function of $k$ for

num_iter=100and random initial guessx0. Explain the absence of convergence.Consider the same graph, but with the directed edge that goes from the node 3 to the node 4 being removed. Plot residuals as in the previous task and discuss the convergence. Now, run the power method with

num_iter=100for 10 different initial guesses and print/plot the resulting approximated eigenvectors. Why do they depend on the initial guess?

In order to avoid this problem Larry Page and Sergey Brin proposed to use the following regularization technique:

$$ A_d = dA + \frac{1-d}{N} \begin{pmatrix} 1 & \dots & 1 \\ \vdots & & \vdots \\ 1 & \dots & 1 \end{pmatrix}, $$where $d$ is a small parameter in $[0,1]$ (typically $d=0.85$), which is called damping factor, $A$ is of size $N\times N$. Now $A_d$ is the matrix with multiplicity of the largest eigenvalue equal to 1. Recall that computing the eigenvector of the PageRank matrix, which corresponds to the largest eigenvalue, has the following interpretation. Consider a person who stays in a random node of a graph (i.e. opens a random web page); at each step s/he follows one of the outcoming edges uniformly at random (i.e. opens one of the links). So the person randomly walks through the graph and the eigenvector we are looking for is exactly his/her stationary distribution — for each node it tells you the probability of visiting this particular node. Therefore, if the person has started from a part of the graph which is not connected with the other part, he will never get there. In the regularized model, the person at each step follows one of the outcoming links with probability $d$ OR visits a random node from the whole graph with probability $(1-d)$.

- Now, run the power method with $A_d$ and plot residuals $\|A_d x_k - \lambda_k x_k\|_2$ as a function of $k$ for $d=0.99$,

num_iter=100and a random initial guessx0.

Usually, graphs that arise in various areas are sparse (social, web, road networks, etc.) and, thus, computation of a matrix-vector product for corresponding PageRank matrix $A$ is much cheaper than $\mathcal{O}(N^2)$. However, if $A_d$ is calculated directly, it becomes dense and, therefore, $\mathcal{O}(N^2)$ cost grows prohibitively large for big $N$.

- Implement fast matrix-vector product for $A_d$ as a function

pagerank_matvec(A, d, x), which takes a PageRank matrix $A$ (in sparse format, e.g.,csr_matrix), damping factor $d$ and a vector $x$ as an input and returns $A_dx$ as an output. Generate a random adjacency matrix of size $10000 \times 10000$ with only 100 non-zero elements and comparepagerank_matvecperformance with direct evaluation of $A_dx$.

# INPUT: G - np.ndarray

# OUTPUT: A - np.ndarray (of size G.shape)

def pagerank_matrix(G): # 5 pts

# enter your code here

return A

# INPUT: A - np.ndarray (2D), x0 - np.ndarray (1D), num_iter - integer (positive)

# OUTPUT: x - np.ndarray (of size x0), l - float, res - np.ndarray (of size num_iter + 1 [include initial guess])

def power_method(A, x0, num_iter): # 5 pts

# enter your code here

return x, l, res

# INPUT: A - np.ndarray (2D), d - float (from 0.0 to 1.0), x - np.ndarray (1D, size of A.shape[0/1])

# OUTPUT: y - np.ndarray (1D, size of x)

def pagerank_matvec(A, d, x): # 2 pts

# enter your code here

return y

DBLP: computer science bibliography¶

Download the dataset from here, unzip it and put dblp_authors.npz and dblp_graph.npz in the same folder with this notebook. Each value (author name) from dblp_authors.npz corresponds to the row/column of the matrix from dblp_graph.npz. Value at row i and column j of the matrix from dblp_graph.npz corresponds to the number of times author i cited papers of the author j. Let us now find the most significant scientists according to PageRank model over DBLP data.

Load the weighted adjacency matrix and the authors list into Python using

load_dblp(...)function. Print its density (fraction of nonzero elements). Find top-10 most cited authors from the weighted adjacency matrix. Now, make all the weights of the adjacency matrix equal to 1 for simplicity (consider only existence of connection between authors, not its weight). Obtain the PageRank matrix $A$ from the adjacency matrix and verify that it is stochastic.In order to provide

pagerank_matvecto yourpower_method(without rewriting it) for fast calculation of $A_dx$, you can create aLinearOperator:

L = scipy.sparse.linalg.LinearOperator(A.shape, matvec=lambda x, A=A, d=d: pagerank_matvec(A, d, x))

Calling L@x or L.dot(x) will result in calculation of pagerank_matvec(A, d, x) and, thus, you can plug $L$ instead of the matrix $A$ in the power_method directly. Note: though in the previous subtask graph was very small (so you could disparage fast matvec implementation), here it is very large (but sparse), so that direct evaluation of $A_dx$ will require $\sim 10^{12}$ matrix elements to store - good luck with that (^_<)≡☆

Run the power method starting from the vector of all ones and plot residuals $\|A_dx_k - \lambda_k x_k\|_2$ as a function of $k$ for $d=0.85$.

Print names of the top-10 authors according to PageRank over DBLP when $d=0.85$.

(Bonus) Does it look suspicious? Why? Discuss what could cause such results.

from scipy.sparse import load_npz

import numpy as np

def load_dblp(path_auth, path_graph):

G = load_npz(path_graph).astype(float)

with np.load(path_auth) as data: authors = data['authors']

return G, authors

G, authors = load_dblp('dblp_authors.npz', 'dblp_graph.npz')

3. QR algorithm (10 pts)¶

- Implement QR-algorithm without shifting. Prototype of the function is given below

# INPUT:

# A_init - square matrix,

# num_iter - number of iterations for QR algorithm

# OUTPUT:

# Ak - transformed matrix A_init given by QR algorithm,

# convergence - numpy array of shape (num_iter, ),

# where we store the maximal number from the Chebyshev norm

# of triangular part of the Ak for every iteration

def qr_algorithm(A_init, num_iter): # 3 pts

# enter your code here

return Ak, convergence

Symmetric case¶

- Create symmetric tridiagonal $11 \times 11$ matrix with elements $-1, 2, -1$ on sub-, main- and upper diagonal respectively without using loops.

- Run $300$ iterations of the QR algorithm for this matrix.

- Plot the output matrix with function

plt.spy(Ak, precision=1e-7). - Plot convergence of QR-algorithm.

*Photo comment*: professor Gilbert Strang (MIT) "These are 121 cupcakes with my favorite -1, 2, -1 matrix. It was the day before Thanksgiving and two days before my birthday. A happy surprise."

*Photo comment*: professor Gilbert Strang (MIT) "These are 121 cupcakes with my favorite -1, 2, -1 matrix. It was the day before Thanksgiving and two days before my birthday. A happy surprise."

Nonsymmetric case¶

- Create nonsymmetric tridiagonal $11 \times 11$ matrix with elements $5, 3, -2$ on sub-, main- and upper diagonal respectively without using loops.

- Run $200$ iterations of the QR algorithm for this matrix.

- Plot the result matrix with function

plt.spy(Ak, precision=1e-7). Is this matrix lower triangular? How does this correspond to the claim about convergence of the QR algorithm?