# Environment setup

const DEPENDENCIES = ["GraphViz", "CairoMakie", "BenchmarkTools"];

import Pkg

Pkg.activate(temp=true)

Pkg.add(name="DataFlowTasks", rev="0e708676eedca1e3ec825246f68b2de4c0906d86")

foreach(Pkg.add, DEPENDENCIES)

Activating new project at `/tmp/jl_3eoSJI`

Updating git-repo `https://github.com/maltezfaria/DataFlowTasks.jl.git`

Resolving package versions...

Updating `/tmp/jl_3eoSJI/Project.toml`

[d1549cb6] + DataFlowTasks v0.2.0 `https://github.com/maltezfaria/DataFlowTasks.jl.git#0e70867`

Updating `/tmp/jl_3eoSJI/Manifest.toml`

[34da2185] + Compat v4.12.0

[d1549cb6] + DataFlowTasks v0.2.0 `https://github.com/maltezfaria/DataFlowTasks.jl.git#0e70867`

[bac558e1] + OrderedCollections v1.6.3

[6c6a2e73] + Scratch v1.2.1

[0dad84c5] + ArgTools v1.1.1

[56f22d72] + Artifacts

[2a0f44e3] + Base64

[ade2ca70] + Dates

[f43a241f] + Downloads v1.6.0

[7b1f6079] + FileWatching

[b77e0a4c] + InteractiveUtils

[b27032c2] + LibCURL v0.6.4

[76f85450] + LibGit2

[8f399da3] + Libdl

[37e2e46d] + LinearAlgebra

[56ddb016] + Logging

[d6f4376e] + Markdown

[ca575930] + NetworkOptions v1.2.0

[44cfe95a] + Pkg v1.10.0

[de0858da] + Printf

[3fa0cd96] + REPL

[9a3f8284] + Random

[ea8e919c] + SHA v0.7.0

[9e88b42a] + Serialization

[6462fe0b] + Sockets

[fa267f1f] + TOML v1.0.3

[a4e569a6] + Tar v1.10.0

[cf7118a7] + UUIDs

[4ec0a83e] + Unicode

[e66e0078] + CompilerSupportLibraries_jll v1.0.5+1

[deac9b47] + LibCURL_jll v8.4.0+0

[e37daf67] + LibGit2_jll v1.6.4+0

[29816b5a] + LibSSH2_jll v1.11.0+1

[c8ffd9c3] + MbedTLS_jll v2.28.2+1

[14a3606d] + MozillaCACerts_jll v2023.1.10

[4536629a] + OpenBLAS_jll v0.3.23+2

[83775a58] + Zlib_jll v1.2.13+1

[8e850b90] + libblastrampoline_jll v5.8.0+1

[8e850ede] + nghttp2_jll v1.52.0+1

[3f19e933] + p7zip_jll v17.4.0+2

Precompiling project...

✓ DataFlowTasks

1 dependency successfully precompiled in 2 seconds. 6 already precompiled.

1 dependency precompiled but a different version is currently loaded. Restart julia to access the new version

Resolving package versions...

Updating `/tmp/jl_3eoSJI/Project.toml`

[f526b714] + GraphViz v0.2.0

Updating `/tmp/jl_3eoSJI/Manifest.toml`

[5789e2e9] + FileIO v1.16.2

[f526b714] + GraphViz v0.2.0

[692b3bcd] + JLLWrappers v1.5.0

[21216c6a] + Preferences v1.4.1

[ae029012] + Requires v1.3.0

[6e34b625] + Bzip2_jll v1.0.8+1

[83423d85] + Cairo_jll v1.16.1+1

[2e619515] + Expat_jll v2.5.0+0

[a3f928ae] + Fontconfig_jll v2.13.93+0

[d7e528f0] + FreeType2_jll v2.13.1+0

[559328eb] + FriBidi_jll v1.0.10+0

[78b55507] + Gettext_jll v0.21.0+0

[7746bdde] + Glib_jll v2.76.5+0

[3b182d85] + Graphite2_jll v1.3.14+0

[3c863552] + Graphviz_jll v2.50.0+1

[2e76f6c2] + HarfBuzz_jll v2.8.1+1

[1d63c593] + LLVMOpenMP_jll v15.0.7+0

[dd4b983a] + LZO_jll v2.10.1+0

⌅ [e9f186c6] + Libffi_jll v3.2.2+1

[d4300ac3] + Libgcrypt_jll v1.8.7+0

[7add5ba3] + Libgpg_error_jll v1.42.0+0

[94ce4f54] + Libiconv_jll v1.17.0+0

[4b2f31a3] + Libmount_jll v2.35.0+0

[38a345b3] + Libuuid_jll v2.36.0+0

[36c8627f] + Pango_jll v1.50.14+0

[30392449] + Pixman_jll v0.42.2+0

[02c8fc9c] + XML2_jll v2.12.2+0

[aed1982a] + XSLT_jll v1.1.34+0

[4f6342f7] + Xorg_libX11_jll v1.8.6+0

[0c0b7dd1] + Xorg_libXau_jll v1.0.11+0

[a3789734] + Xorg_libXdmcp_jll v1.1.4+0

[1082639a] + Xorg_libXext_jll v1.3.4+4

[ea2f1a96] + Xorg_libXrender_jll v0.9.10+4

[14d82f49] + Xorg_libpthread_stubs_jll v0.1.1+0

[c7cfdc94] + Xorg_libxcb_jll v1.15.0+0

[c5fb5394] + Xorg_xtrans_jll v1.5.0+0

[b53b4c65] + libpng_jll v1.6.40+0

[efcefdf7] + PCRE2_jll v10.42.0+1

Info Packages marked with ⌅ have new versions available but compatibility constraints restrict them from upgrading. To see why use `status --outdated -m`

Precompiling project...

✓ DataFlowTasks → DataFlowTasks_GraphViz_Ext

1 dependency successfully precompiled in 1 seconds. 45 already precompiled.

1 dependency precompiled but a different version is currently loaded. Restart julia to access the new version

Resolving package versions...

Updating `/tmp/jl_3eoSJI/Project.toml`

[13f3f980] + CairoMakie v0.11.8

Updating `/tmp/jl_3eoSJI/Manifest.toml`

[621f4979] + AbstractFFTs v1.5.0

[398f06c4] + AbstractLattices v0.3.0

[1520ce14] + AbstractTrees v0.4.4

[79e6a3ab] + Adapt v4.0.1

[27a7e980] + Animations v0.4.1

[4fba245c] + ArrayInterface v7.7.0

[67c07d97] + Automa v1.0.3

[13072b0f] + AxisAlgorithms v1.1.0

[39de3d68] + AxisArrays v0.4.7

[6e4b80f9] + BenchmarkTools v1.4.0

[fa961155] + CEnum v0.5.0

[96374032] + CRlibm v1.0.1

[159f3aea] + Cairo v1.0.5

[13f3f980] + CairoMakie v0.11.8

[49dc2e85] + Calculus v0.5.1

[d360d2e6] + ChainRulesCore v1.21.0

[523fee87] + CodecBzip2 v0.8.2

[944b1d66] + CodecZlib v0.7.4

[a2cac450] + ColorBrewer v0.4.0

[35d6a980] + ColorSchemes v3.24.0

[3da002f7] + ColorTypes v0.11.4

[c3611d14] + ColorVectorSpace v0.10.0

[5ae59095] + Colors v0.12.10

[861a8166] + Combinatorics v1.0.2

[bbf7d656] + CommonSubexpressions v0.3.0

[187b0558] + ConstructionBase v1.5.4

[d38c429a] + Contour v0.6.2

[9a962f9c] + DataAPI v1.16.0

[864edb3b] + DataStructures v0.18.16

[e2d170a0] + DataValueInterfaces v1.0.0

[927a84f5] + DelaunayTriangulation v0.8.12

[163ba53b] + DiffResults v1.1.0

[b552c78f] + DiffRules v1.15.1

[31c24e10] + Distributions v0.25.107

[ffbed154] + DocStringExtensions v0.9.3

[fa6b7ba4] + DualNumbers v0.6.8

[4e289a0a] + EnumX v1.0.4

[429591f6] + ExactPredicates v2.2.8

[411431e0] + Extents v0.1.2

[7a1cc6ca] + FFTW v1.8.0

[8fc22ac5] + FilePaths v0.8.3

[48062228] + FilePathsBase v0.9.21

[1a297f60] + FillArrays v1.9.3

[6a86dc24] + FiniteDiff v2.22.0

[53c48c17] + FixedPointNumbers v0.8.4

[59287772] + Formatting v0.4.2

[f6369f11] + ForwardDiff v0.10.36

[b38be410] + FreeType v4.1.1

[663a7486] + FreeTypeAbstraction v0.10.1

[46192b85] + GPUArraysCore v0.1.6

[cf35fbd7] + GeoInterface v1.3.3

[5c1252a2] + GeometryBasics v0.4.10

[a2bd30eb] + Graphics v1.1.2

[3955a311] + GridLayoutBase v0.10.0

[42e2da0e] + Grisu v1.0.2

[34004b35] + HypergeometricFunctions v0.3.23

[2803e5a7] + ImageAxes v0.6.11

[c817782e] + ImageBase v0.1.7

[a09fc81d] + ImageCore v0.10.2

[82e4d734] + ImageIO v0.6.7

[bc367c6b] + ImageMetadata v0.9.9

[9b13fd28] + IndirectArrays v1.0.0

[d25df0c9] + Inflate v0.1.4

[18e54dd8] + IntegerMathUtils v0.1.2

[a98d9a8b] + Interpolations v0.15.1

⌅ [d1acc4aa] + IntervalArithmetic v0.22.5

[8197267c] + IntervalSets v0.7.9

[92d709cd] + IrrationalConstants v0.2.2

[f1662d9f] + Isoband v0.1.1

[c8e1da08] + IterTools v1.10.0

[82899510] + IteratorInterfaceExtensions v1.0.0

[682c06a0] + JSON v0.21.4

[b835a17e] + JpegTurbo v0.1.5

[5ab0869b] + KernelDensity v0.6.8

[b964fa9f] + LaTeXStrings v1.3.1

[8cdb02fc] + LazyModules v0.3.1

[9c8b4983] + LightXML v0.9.1

[d3d80556] + LineSearches v7.2.0

[9b3f67b0] + LinearAlgebraX v0.2.7

[2ab3a3ac] + LogExpFunctions v0.3.26

[1914dd2f] + MacroTools v0.5.13

[ee78f7c6] + Makie v0.20.7

[20f20a25] + MakieCore v0.7.3

[dbb5928d] + MappedArrays v0.4.2

[b8f27783] + MathOptInterface v1.25.2

[0a4f8689] + MathTeXEngine v0.5.7

[e1d29d7a] + Missings v1.1.0

[7475f97c] + Mods v2.2.4

[e94cdb99] + MosaicViews v0.3.4

[3b2b4ff1] + Multisets v0.4.4

[d8a4904e] + MutableArithmetics v1.4.0

[d41bc354] + NLSolversBase v7.8.3

[77ba4419] + NaNMath v1.0.2

[f09324ee] + Netpbm v1.1.1

[510215fc] + Observables v0.5.5

[6fe1bfb0] + OffsetArrays v1.13.0

[52e1d378] + OpenEXR v0.3.2

[429524aa] + Optim v1.9.2

[90014a1f] + PDMats v0.11.31

[f57f5aa1] + PNGFiles v0.4.3

[19eb6ba3] + Packing v0.5.0

[5432bcbf] + PaddedViews v0.5.12

[d96e819e] + Parameters v0.12.3

[69de0a69] + Parsers v2.8.1

[2ae35dd2] + Permutations v0.4.20

[3bbf5609] + PikaParser v0.6.1

[eebad327] + PkgVersion v0.3.3

[995b91a9] + PlotUtils v1.4.0

[647866c9] + PolygonOps v0.1.2

[f27b6e38] + Polynomials v4.0.6

[85a6dd25] + PositiveFactorizations v0.2.4

[aea7be01] + PrecompileTools v1.2.0

[27ebfcd6] + Primes v0.5.5

[92933f4c] + ProgressMeter v1.9.0

[4b34888f] + QOI v1.0.0

[1fd47b50] + QuadGK v2.9.4

[b3c3ace0] + RangeArrays v0.3.2

[c84ed2f1] + Ratios v0.4.5

[3cdcf5f2] + RecipesBase v1.3.4

[189a3867] + Reexport v1.2.2

[05181044] + RelocatableFolders v1.0.1

[286e9d63] + RingLists v0.2.8

[79098fc4] + Rmath v0.7.1

[5eaf0fd0] + RoundingEmulator v0.2.1

[efcf1570] + Setfield v1.1.1

[65257c39] + ShaderAbstractions v0.4.1

[992d4aef] + Showoff v1.0.3

[73760f76] + SignedDistanceFields v0.4.0

[55797a34] + SimpleGraphs v0.8.6

[ec83eff0] + SimplePartitions v0.3.1

[cc47b68c] + SimplePolynomials v0.2.17

[a6525b86] + SimpleRandom v0.3.1

[699a6c99] + SimpleTraits v0.9.4

[45858cf5] + Sixel v0.1.3

[a2af1166] + SortingAlgorithms v1.2.1

[276daf66] + SpecialFunctions v2.3.1

[c5dd0088] + StableHashTraits v1.1.6

[cae243ae] + StackViews v0.1.1

[90137ffa] + StaticArrays v1.9.2

[1e83bf80] + StaticArraysCore v1.4.2

[82ae8749] + StatsAPI v1.7.0

[2913bbd2] + StatsBase v0.34.2

[4c63d2b9] + StatsFuns v1.3.0

[09ab397b] + StructArrays v0.6.17

[3783bdb8] + TableTraits v1.0.1

[bd369af6] + Tables v1.11.1

[62fd8b95] + TensorCore v0.1.1

⌅ [731e570b] + TiffImages v0.6.8

[3bb67fe8] + TranscodingStreams v0.10.3

[981d1d27] + TriplotBase v0.1.0

[9d95972d] + TupleTools v1.4.3

[3a884ed6] + UnPack v1.0.2

[1cfade01] + UnicodeFun v0.4.1

[efce3f68] + WoodburyMatrices v1.0.0

[4e9b3aee] + CRlibm_jll v1.0.1+0

[5ae413db] + EarCut_jll v2.2.4+0

[b22a6f82] + FFMPEG_jll v4.4.4+1

[f5851436] + FFTW_jll v3.3.10+0

[905a6f67] + Imath_jll v3.1.7+0

[1d5cc7b8] + IntelOpenMP_jll v2024.0.2+0

[aacddb02] + JpegTurbo_jll v3.0.1+0

[c1c5ebd0] + LAME_jll v3.100.1+0

[856f044c] + MKL_jll v2024.0.0+0

[e7412a2a] + Ogg_jll v1.3.5+1

[18a262bb] + OpenEXR_jll v3.1.4+0

[458c3c95] + OpenSSL_jll v3.0.13+0

[efe28fd5] + OpenSpecFun_jll v0.5.5+0

[91d4177d] + Opus_jll v1.3.2+0

[f50d1b31] + Rmath_jll v0.4.0+0

[9a68df92] + isoband_jll v0.2.3+0

[a4ae2306] + libaom_jll v3.4.0+0

[0ac62f75] + libass_jll v0.15.1+0

[f638f0a6] + libfdk_aac_jll v2.0.2+0

[075b6546] + libsixel_jll v1.10.3+0

[f27f6e37] + libvorbis_jll v1.3.7+1

[1270edf5] + x264_jll v2021.5.5+0

[dfaa095f] + x265_jll v3.5.0+0

[8bf52ea8] + CRC32c

[8ba89e20] + Distributed

[9fa8497b] + Future

[4af54fe1] + LazyArtifacts

[a63ad114] + Mmap

[9abbd945] + Profile

[1a1011a3] + SharedArrays

[2f01184e] + SparseArrays v1.10.0

[10745b16] + Statistics v1.10.0

[4607b0f0] + SuiteSparse

[8dfed614] + Test

[05823500] + OpenLibm_jll v0.8.1+2

[bea87d4a] + SuiteSparse_jll v7.2.1+1

Info Packages marked with ⌅ have new versions available but compatibility constraints restrict them from upgrading. To see why use `status --outdated -m`

Precompiling project...

✓ DataFlowTasks → DataFlowTasks_Makie_Ext

1 dependency successfully precompiled in 6 seconds. 265 already precompiled.

1 dependency precompiled but a different version is currently loaded. Restart julia to access the new version

Resolving package versions...

Updating `/tmp/jl_3eoSJI/Project.toml`

[6e4b80f9] + BenchmarkTools v1.4.0

No Changes to `/tmp/jl_3eoSJI/Manifest.toml`

Tiled Cholesky Factorization¶

We illustrate here the use of DataFlowTasks to parallelize a tiled Cholesky

factorization. The implementation shown here is delibarately made as simple

and self-contained as possible; yet, as we shall see when comparing to

OpenBLAS, it is already quite performant!

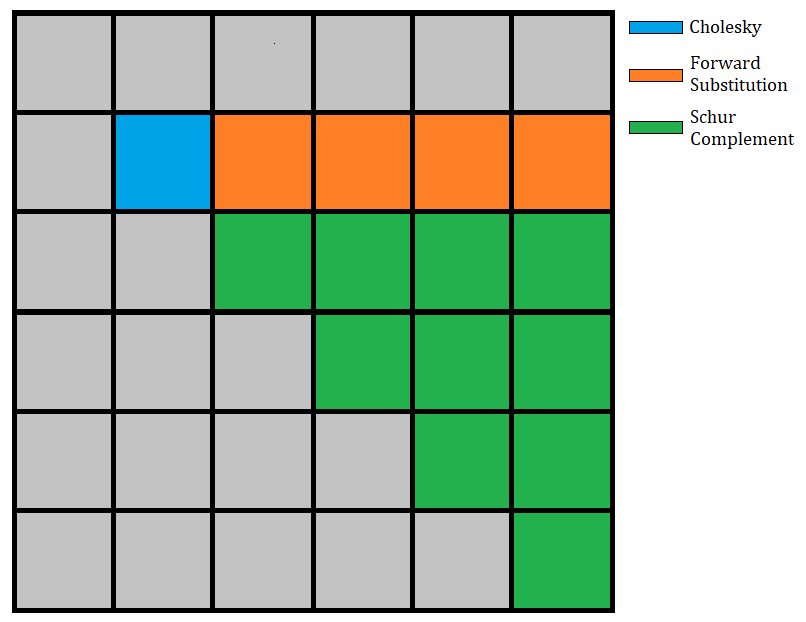

The Cholesky factorization algorithm takes a symmetric positive definite matrix $A$ and finds a lower triangular matrix $L$ such that $A = LLᵀ$. The tiled version of this algorithm decomposes the matrix $A$ into tiles (of even sizes, in this simplified version). At each step of the algorithm, we do a Cholesky factorization on the diagonal tile, use a triangular solve to update all of the tiles at the right of the diagonal tile, and finally update all the tiles of the submatrix with a schur complement.

If we have a matrix $A$ decomposed in $n \times n$ tiles, then the algorithm will have $n$ steps. The $i$-th step (with $i \in [1:n]$) performs:

- $1$ cholesky factorization of the $(i,i)$ tile,

- $(i-1)$ triangular solves (one for each tile in the $i$-th row of the upper triangular matrix),

- $i(i-1)/2$ matrix multiplications to update the submatrix.

These are the basic operations on tiles, which we are going to spawn in separate tasks in the parallel implementation. Accounting for all iterations, this makes a total of $\mathcal{O}(n^3)$ such tasks, decomposed as:

- $\mathcal{O}(n)$ cholesky factorizations,

- $\mathcal{O}(n^2)$ triangular solves,

- $\mathcal{O}(n^3)$ matrix multiplications.

The following image illustrates the 2nd step of the algorithm:

Sequential implementation¶

A sequential tiled factorization algorithm can be implemented as:

using LinearAlgebra

tilerange(ti, ts) = (ti-1)*ts+1:ti*ts

function cholesky_tiled!(A, ts)

m = size(A, 1); @assert m==size(A, 2)

m%ts != 0 && error("Tilesize doesn't fit the matrix")

n = m÷ts # number of tiles in each dimension

T = [view(A, tilerange(i, ts), tilerange(j, ts)) for i in 1:n, j in 1:n]

for i in 1:n

# Diagonal cholesky serial factorization

cholesky!(T[i,i])

# Left tiles update

U = UpperTriangular(T[i,i])

for j in i+1:n

ldiv!(U', T[i,j])

end

# Submatrix update

for j in i+1:n

for k in j:n

mul!(T[j,k], T[i,j]', T[i,k], -1, 1)

end

end

end

# Construct the factorized object

return Cholesky(A, 'U', zero(LinearAlgebra.BlasInt))

end

cholesky_tiled! (generic function with 1 method)

Let us build a small test case to check the correctness of the factorization. Here we divide a matrix of size 4096×4096 in 8×8 tiles of size 512×512:

n = 4096

ts = 512

A = rand(n, n)

A = (A + adjoint(A))/2

A = A + n*I;

and the results seem to be correct:

F = cholesky_tiled!(copy(A), ts)

# Check results

err = norm(F.L*F.U-A,Inf)/max(norm(A),norm(F.L*F.U))

@show err

@assert err < eps(Float64)

err = 1.3875605786879431e-17

Parallel implementation¶

In order to parallelize the code with DataFlowTasks.jl, function calls

acting on tiles are wrapped within @dspawn, along with annotations

describing data access modes. We also give meaningful labels to the tasks,

which will help debug and profile the code.

using DataFlowTasks

function cholesky_dft!(A, ts)

m = size(A, 1); @assert m==size(A, 2)

m%ts != 0 && error("Tilesize doesn't fit the matrix")

n = m÷ts # number of tiles in each dimension

T = [view(A, tilerange(i, ts), tilerange(j, ts)) for i in 1:n, j in 1:n]

for i in 1:n

# Diagonal cholesky serial factorization

@dspawn cholesky!(@RW(T[i,i])) label="chol ($i,$i)"

# Left tiles update

U = UpperTriangular(T[i,i])

for j in i+1:n

@dspawn ldiv!(@R(U)', @RW(T[i,j])) label="ldiv ($i,$j)"

end

# Submatrix update

for j in i+1:n

for k in j:n

@dspawn schur_complement!(@RW(T[j,k]), @R(T[i,j])', @R(T[i,k])) label="schur ($j,$k)"

end

end

end

# Construct the factorized object

r = @dspawn Cholesky(@R(A), 'U', zero(LinearAlgebra.BlasInt)) label="result"

return fetch(r)

end

schur_complement!(C, A, B) = mul!(C, A, B, -1, 1)

schur_complement! (generic function with 1 method)

Again, let us check the correctness of the result:

F = cholesky_dft!(copy(A), ts)

# Check results

err = norm(F.L*F.U-A,Inf)/max(norm(A),norm(F.L*F.U))

@show err

@assert err < eps(Float64)

err = 1.3875605786879431e-17

Debugging and Profiling¶

Let us now check what happens during a parallel run of our cholesky factorization. Thanks to the test above, the code is now compiled. Let's re-run it and collect meaningful profiling information:

# Clean profiling environment

GC.gc()

# Real workload to be analysed

Ac = copy(A)

log_info = DataFlowTasks.@log cholesky_dft!(Ac, ts)

LogInfo with 121 logged tasks

The number of tasks being $\mathcal{O}(n^3)$, we can see how quickly the DAG complexity increases (even though the test case only has 8×8 tiles here):

DataFlowTasks.stack_weakdeps_env!()

using GraphViz

dag = GraphViz.Graph(log_info)

Status `~/.julia/scratchspaces/d1549cb6-e9f4-42f8-98cc-ffc8d067ff5b/weakdeps-1.10/Project.toml` ⌃ [13f3f980] CairoMakie v0.11.5 ⌃ [e9467ef8] GLMakie v0.9.5 [f526b714] GraphViz v0.2.0 ⌅ [ee78f7c6] Makie v0.20.4 Info Packages marked with ⌃ and ⌅ have new versions available. Those with ⌃ may be upgradable, but those with ⌅ are restricted by compatibility constraints from upgrading. To see why use `status --outdated`

The critical path, highlighted in red, includes all cholesky factorizations of diagonal tiles, as well as the required tasks in between them.

We can also readily get more details about the performance limiting factors:

DataFlowTasks.describe(log_info; categories=["chol", "ldiv", "schur"])

• Elapsed time : 0.243 ├─ Critical Path : 0.216 ╰─ No-Wait : 0.184 • Run time : 1.948 ├─ Computing : 1.470 │ ├─ chol : 0.085 │ ├─ ldiv : 0.208 │ ├─ schur : 1.177 │ ╰─ unlabeled : 0.000 ├─ Task Insertion : 0.006 ╰─ Other (idle) : 0.472

and, at the price of loading Makie, display these in a more convenient

profile plot:

using CairoMakie # or GLMakie in order to have more interactivity

trace = plot(log_info; categories=["chol", "ldiv", "schur"])

┌ Warning: Found `resolution` in the theme when creating a `Scene`. The `resolution` keyword for `Scene`s and `Figure`s has been deprecated. Use `Figure(; size = ...` or `Scene(; size = ...)` instead, which better reflects that this is a unitless size and not a pixel resolution. The key could also come from `set_theme!` calls or related theming functions. └ @ Makie ~/.julia/packages/Makie/z2T2o/src/scenes.jl:220 [ Info: Computing : 1.4704559240000001 [ Info: Inserting : 0.005555413000000003 [ Info: Other : 0.47156888131512414

The overhead incurred by DataFlowTasks seems relatively small here: the time

taken inserting tasks is barely measurable, and the scheduling did not lead to

threads waiting idly for too long. This is confirmed by the bottom middle

plot, showing a measured wall clock time not too much longer than the lower

bound obtained when suppressing idle time.

The "Computing time: breakdown by category" plot seems to indicate that the

matrix multiplications performed in the "Schur" tasks account for the majority

of the computing time. This routine is probably already quite fast since it

simply calls our BLAS library for a matrix-matrix product; it would be

interesting, however, to see how

LoopVectorization.jl

fares here!

Performances¶

To benchmark the performance, we will compare our implementation to the one provided by our system's BLAS library. We will use OpenBlas here because it is the default BLAS library shipped with Julia, but if you have access to Intel's MKL, you should probably give it a try! Here is the benchmark:

using BenchmarkTools

BenchmarkTools.DEFAULT_PARAMETERS.seconds = 1

# n × n symmetric positive definite matrix

function spd_matrix(n)

A = rand(n, n)

A = (A + adjoint(A))/2

return A + n*I

end

function bench_blas(n)

nt = Threads.nthreads()

BLAS.set_num_threads(nt)

return @belapsed cholesky!(A) setup=(A=spd_matrix($n)) evals=1

end

function bench_tiled(n;tilesize=256)

BLAS.set_num_threads(1)

return @belapsed cholesky_tiled!(A, $tilesize) setup=(A=spd_matrix($n)) evals=1

end

function bench_dft(n;tilesize=256)

BLAS.set_num_threads(1)

return @belapsed cholesky_dft!(A, $tilesize) setup=(A=spd_matrix($n)) evals=1

end

bench_dft (generic function with 1 method)

Let us compare the performances of the default BLAS library and ours for various matrix sizes, and plot the results:

BLAS.get_config()

LinearAlgebra.BLAS.LBTConfig Libraries: └ [ILP64] libopenblas64_.so

nsizes = 512 .* (1:1:8)

tblas = map(bench_blas, nsizes)

tdft = map(bench_dft, nsizes)

Gflops = map(n->(1/3*n^3 + 1/2*n^2)/10^9, nsizes)

fig = Figure()

ax = Axis(fig[1,1], xlabel="Matrix size", ylabel="Time (s)")

scatterlines!(ax, nsizes, tblas, label= "OpenBLAS", linewidth=2)

scatterlines!(ax, nsizes, tdft, label="DFT", linewidth=2)

axislegend(position=:lt)

ax = Axis(fig[1,2], xlabel="Matrix size", ylabel="GFlops/second")

scatterlines!(ax, nsizes, Gflops ./ tblas, label= "OpenBLAS", linewidth=2)

scatterlines!(ax, nsizes, Gflops ./ tdft, label="DFT", linewidth=2)

axislegend(position=:lt)

Label(fig[0,:], "Cholesky factorization on $(Threads.nthreads()) threads", fontsize=20)

fig

We see that, despite the simplicity of the implementation, the parallel version performs in par with the default BLAS library for the matrix sizes considered! For very large matrices, further optmizations are probably necessary to take into account the memory hierarchy of the machine. Finally, here is the observed speedup compared to a sequential tiled implementation and a matrix of size $n=4096$:

(;

threads = Threads.nthreads(),

speedup = bench_tiled(4096) / bench_dft(4096),

)

(threads = 8, speedup = 5.410474310871607)

This notebook was generated using Literate.jl.