pyBumpHunter : A BumpHunting tool in python¶

presenter : Louis VASLIN - Université Clermont Auvergne

LPC/IN2P3

import numpy as np

import pyBumpHunter as BH

import matplotlib.pyplot as plt

from matplotlib import colors as mcl

The BumpHunter algorithm¶

Principle¶

- Look for an excess (or deficit) in a data distribution w.r.t. a reference background.

- Compute the local and global p-value of the localized deviation found in data.

The "bump hunt" is done using histograms

This package is inspired by the original BumpHunter algorithm described in arXiv:1101.0390

Global p-value¶

BumpHunter generates toys from the reference and scans them like data.

This will give a background-only local p-value distribution.

The global p-value is then the p-value of the local p-values.

t = -ln(local p-value) <- BumpHunter test statistic

Why do we need pyBumpHunter ?¶

Model agnostic bump hunt

Don't need any signal model

Possibility to use data driven reference background

See Ioan's talk

Pure python based (don't need any ROOT setup to work)

Code available on github (see links above)

The toolwss presented at PyHEP last July (link)

It has also been integrated in Scikit-HEP

A paper presenting the tool and some test of all the features is in preparation is in preparation.

Features proposed in pyBumpHunter¶

Extension of the algorithm to 2D histograms

Signal injection test (1D)¶

To test sensitivity to a given signal model

Side-band normalization¶

To scale the reference background down to the data

Multi-channel combination (coming soon)¶

To get a combined local and global p-value over several channels

1D scan¶

Follow the basic BumpHunter algorithm

Give the position and width of the bump, as well as the local and global p-value.

Also give a rough evaluation of the signal content of the bump (data - ref).

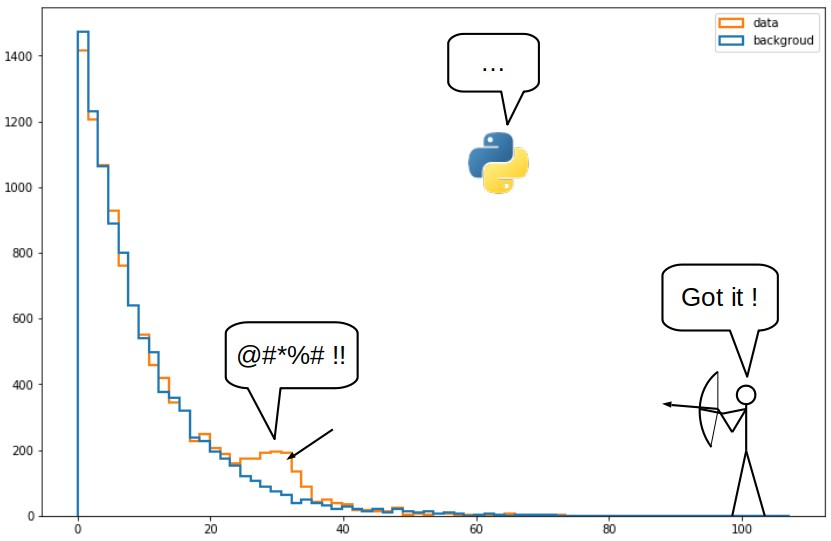

# Generate some background and data

np.random.seed(42)

bkg = np.random.exponential(scale=8.0, size=1_000_000)

data = np.random.exponential(scale=8.0, size=500_000)

data = np.append(

data,

np.random.normal(loc=20, scale=2.5, size=900)

)

rng = [0,70]

# Make histograms

bkg_hist, bins = np.histogram(bkg, bins=50, range=rng)

bkg_hist = bkg_hist * 1/2

data_hist, _ = np.histogram(data, bins=bins)

# Plot the distributions

F = plt.figure(figsize=(12,8))

plt.title('Example distributions', size='xx-large')

plt.hist(bins[:-1], bins=bins, weights=bkg_hist, histtype='step', lw=2, label='reference background')

plt.hist(bins[:-1], bins=bins, weights=data_hist, histtype='step', lw=2, label='observed data')

plt.legend(fontsize='x-large')

plt.xticks(fontsize='x-large')

plt.yticks(fontsize='x-large')

plt.xlabel('variable',size='x-large')

plt.ylabel('Event count',size='x-large')

plt.yscale('log')

plt.show()

# Create a BumpHunter1D instance

bh1 = BH.BumpHunter1D(

width_min=2,

width_max=5,

width_step=1,

scan_step=1,

bins=bins,

rang=rng,

npe=10_000,

nworker=1,

seed=666

)

# Call the scan method

bh1.bump_scan(data_hist, bkg_hist, is_hist=True)

# Display results

print('##.print_bump_info() call##')

bh1.print_bump_info()

print('##.print_bump_true() call##')

bh1.print_bump_true(data_hist, bkg_hist, is_hist=True)

bh1.plot_tomography(data_hist, is_hist=True)

bh1.plot_bump(data_hist, bkg_hist, is_hist=True)

bh1.plot_stat(show_Pval=True)

Generating histograms 4 values of width will be tested SCAN Global p-value : 0.0045 (45 / 10000) Significance = 2.61205 ##.print_bump_info() call## BUMP WINDOW loc = 13 width = 2 local p-value | t = 0.00004 | 10.12585 ##.print_bump_true() call## BUMP POSITION min : 18.200 max : 21.000 mean : 19.600 width : 2.800 number of signal events : 486.0 global p-value : 0.00450 significance = 2.61205

Signal injection¶

Builds data based on a reference background and a signal model

Performs BumpHunter scans on the injected data with increasing signal strength

Stops the process once the required global significance is reached

Signal strength is computed w.r.t the expected number of signal event (medel dependent)

# Generate some signal (normal distribution centered on 20)

sig = np.random.normal(loc=20, scale=3, size=10_000)

sig_hist, _ = np.histogram(sig, bins=bins)

# Set the expected number of signal event

bh1.signal_exp = 1000

# Set the injection parameters

bh1.str_min = 0.1

bh1.str_step = 0.1

bh1.str_scale = 'lin'

bh1.sigma_limit = 3 # Injection stops when we reach 3 sigma global

# Call the injection method

bh1.signal_inject(sig_hist, bkg_hist, is_hist=True)

# Display results

bh1.plot_inject()

Generating background only histograms 4 values of width will be tested BACKGROUND ONLY SCAN STARTING INJECTION STEP 1 : signal strength = 0.1 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0457 (457 / 10000) 0.0811 (811) 0.0146 (146) Significance = 1.68806 (1.39771 2.18078) STEP 2 : signal strength = 0.2 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0396 (396 / 10000) 0.0743 (743) 0.0111 (111) Significance = 1.75535 (1.44449 2.28693) STEP 3 : signal strength = 0.30000000000000004 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0317 (317 / 10000) 0.0694 (694) 0.0067 (67) Significance = 1.85638 (1.48027 2.47296) STEP 4 : signal strength = 0.4 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0232 (232 / 10000) 0.0616 (616) 0.0049 (49) Significance = 1.99174 (1.54148 2.58281) STEP 5 : signal strength = 0.5 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0153 (153 / 10000) 0.0511 (511) 0.0021 (21) Significance = 2.16224 (1.63428 2.86274) STEP 6 : signal strength = 0.6 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0075 (75 / 10000) 0.0376 (376) 0.0006 (6) Significance = 2.43238 (1.77924 3.23888) STEP 7 : signal strength = 0.7 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0044 (44 / 10000) 0.0265 (265) 0.0002 (2) Significance = 2.61973 (1.93492 3.54008) STEP 8 : signal strength = 0.7999999999999999 Generating background+signal histograms BACKGROUND+SIGNAL SCAN Global p-value : 0.0012 (12 / 10000) 0.0143 (143) 0.0000 (0) Significance = 3.03567 (2.18896 7.94144) REACHED SIGMA LIMIT Number of signal event injected : 799.9999999999999 Signal strength : 0.8000

Error bars obtained with background+signal toys

Points represent the medians and error bars the 1st and 3rd quartiles

2D scan¶

Works the same way as in 1D, but with 2D hitograms

Be carfull with the statistics ... in 2D the stat per bin is lower

# Generate some background and data

np.random.seed(42)

bkg = np.random.exponential(scale=8.0, size=(900_000,2))

data = np.random.exponential(scale=8.0, size=(500_000,2))

data = np.append(

data,

np.random.normal(loc=20, scale=1.5, size=(150,2)),

axis=0

)

rng = [[0,40], [0,40]]

# Make histograms

bkg_hist, binx, biny = np.histogram2d(bkg[:,0],bkg[:,1], bins=20, range=rng)

bkg_hist = bkg_hist * 5/9

data_hist, _, _ = np.histogram2d(data[:,0], data[:,1], bins=[binx,biny])

# Plot the data distribution

F = plt.figure(figsize=(12,10))

plt.title('Data 2D distribution', size='xx-large')

plt.hist2d(data[:,0], data[:,1], bins=[binx,biny], norm=mcl.LogNorm())

plt.plot([20], [20], 'rx', ms=10, mew=3)

plt.colorbar()

plt.xticks(fontsize='x-large')

plt.yticks(fontsize='x-large')

plt.show()

# Create a BumpHunter2D instance

bh2 = BH.BumpHunter2D(

width_min=[2,2],

width_max=[3,3],

width_step=[1,1],

scan_step=[1,1],

bins=[binx,biny],

rang=rng,

npe=6_000,

nworker=1,

seed=666

)

# Call the scan method

bh2.bump_scan(data_hist, bkg_hist, is_hist=True)

# Display results

# NOTE : There is no tomography plot in 2D

print('##.print_bump_info() call##')

bh2.print_bump_info()

print('##.print_bump_true() call##')

bh2.print_bump_true(data_hist, bkg_hist, is_hist=True)

bh2.plot_bump(data_hist, bkg_hist, is_hist=True)

bh2.plot_stat(show_Pval=True)

Generating histograms 4 values of width will be tested SCAN Global p-value : 0.0015 (9 / 6000) Significance = 2.96774 ##.print_bump_info() call## BUMP WINDOW loc = [9, 9] width = [3, 2] local p-value | t = 0.00000 | 13.14360 ##.print_bump_true() call## BUMP POSITION min : [18.000, 18.000] max : [24.000, 22.000] mean : [21.000, 20.000] width : [6.000, 4.000] number of signal events : 157.77777777777783 global p-value : 0.00150 significance = 2.96774

Side-band normalization¶

Principle¶

For each tested interval, compute a scale factor

$$ scale = \frac{Ndata_{tot}-Ndata_{inter}}{Nref_{tot}-Nref_{inter}} $$Scale factor applied to the number of background event when computing the local p-value

Objective¶

Normalize the reference to data

without any prior knowledge on the expected background

# Generating some background and data

np.random.seed(44)

bkg = np.random.exponential(scale=8.0, size=800_000)

data = np.random.exponential(scale=8.0, size=500_000)

data = np.append(

data,

np.random.normal(loc=20, scale=3, size=1200)

)

rng = [0,70]

# Make histograms

bkg_hist, bins = np.histogram(bkg, bins=50, range=rng)

data_hist, _ = np.histogram(data, bins=bins)

bkg_hist = np.array(bkg_hist, dtype=float)

# Plot the distributions

F = plt.figure(figsize=(12,8))

plt.title('Example distributions', size='xx-large')

plt.hist(bkg, bins=bins, histtype='step', lw=2, label='reference background')

plt.hist(data, bins=bins, histtype='step', lw=2, label='observed data')

plt.legend(fontsize='x-large')

plt.xticks(fontsize='x-large')

plt.yticks(fontsize='x-large')

plt.xlabel('variable',size='x-large')

plt.ylabel('Event count',size='x-large')

plt.yscale('log')

plt.show()

# Create a BumpHunter1D instance

bh1 = BH.BumpHunter1D(

width_min=2,

width_max=6,

width_step=1,

scan_step=1,

bins=bins,

rang=rng,

npe=10_000,

nworker=1,

use_sideband=True, # Enable side-band normalization

seed=666

)

# Call the scan method

bh1.bump_scan(data_hist, bkg_hist, is_hist=True)

# Display results

print('##.print_bump_info() call##')

bh1.print_bump_info()

print('##.print_bump_true() call##')

bh1.print_bump_true(data_hist, bkg_hist, is_hist=True)

bh1.plot_bump(data_hist, bkg_hist, is_hist=True)

bh1.plot_stat(show_Pval=True)

Generating histograms 5 values of width will be tested SCAN Global p-value : 0.0016 (16 / 10000) Significance = 2.94784 ##.print_bump_info() call## BUMP WINDOW loc = 12 width = 6 local p-value | t = 0.00000 | 16.71846 ##.print_bump_true() call## BUMP POSITION min : 16.800 max : 25.200 mean : 21.000 width : 8.400 number of signal events : 1064.7465312775894 global p-value : 0.00160 significance = 2.94784

Multi-channel combination (Coming soon)¶

The Combined bump window is the intersection of each channel bump windows

Work with channels with different binning

Combine local p-value = product of all individual p-values

The feature will be added in the next release

Conclusion¶

pyBumpHunter is ready to use¶

pip installable and recently integarted to Scikit-HEP

Many features are already available

Presentation at PyHEP (with video recording available)

Development is still ongoing¶

New features and improvements will keep coming

Multi-channel combination alomst ready for the next release

New features planed :

API refactoring (more efficient, both for developers and users

Add treatment of systematic uncertainties

Anyting else that might be useful ( your ideas are welcome )