General Information¶

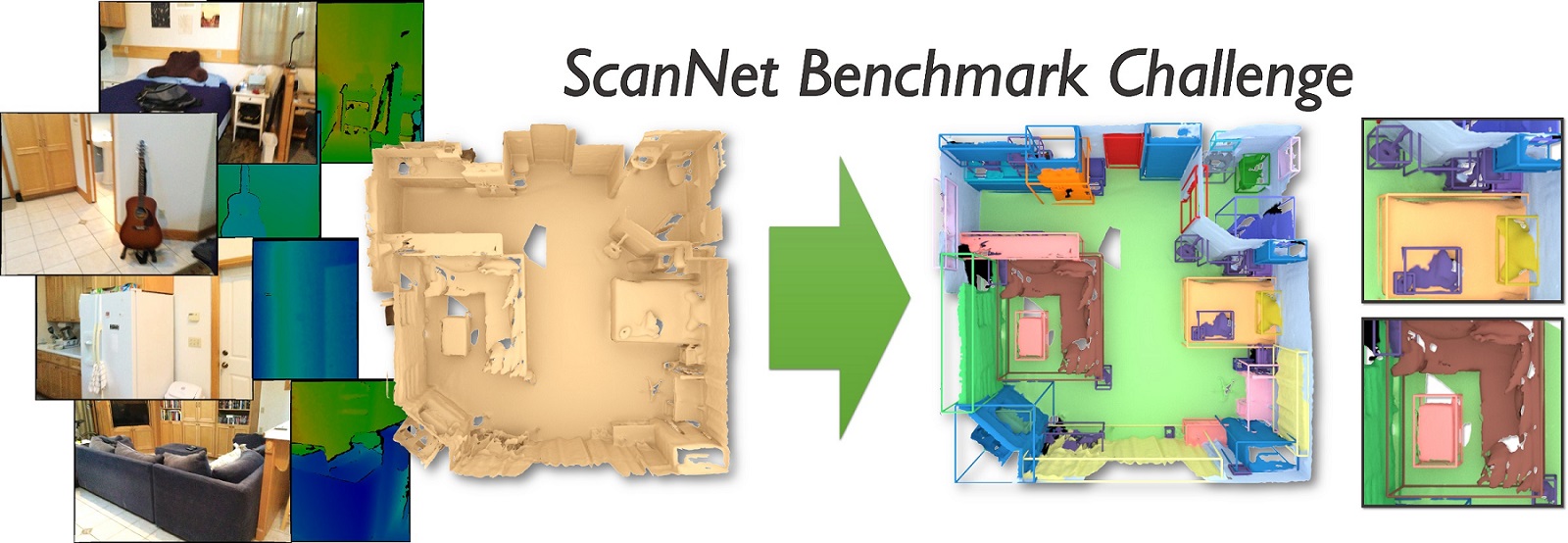

This notebook demonstrates how the fastai_sparse library can be used in semantic segmentation tasks using the example of the ScanNet 3D semantic segmentation solution presented in SparseConvNet example.

Initial data are indoor scenes with RGB and 3D-geometric information obtained as a result of three-dimensional scanning (100...500K vertices in ~1500 internal scenes). Format: 3D geometry mesh in format PLY. The purpose - to predict a label of each vertices (20 furniture classes).

Evaluation metric: IoU (intersection over union)

Firstly, it is necessary to upload and prepare the initial data. See examples/scannet/data

import os

os.environ['CUDA_VISIBLE_DEVICES'] = '1'

Imports¶

import os, sys

import time

import numpy as np

import pandas as pd

import glob

from os.path import join, exists, basename, splitext

from pathlib import Path

from matplotlib import pyplot as plt

from matplotlib import cm

import shutil

from functools import partial

from tqdm import tqdm

from joblib import Parallel, delayed, cpu_count

from IPython.display import display, HTML, FileLink

import torch

import torch.nn as nn

import torch.optim as optim

import sparseconvnet as scn

from fastai_sparse import utils, visualize

from fastai_sparse.utils import log

from fastai_sparse.data import SparseDataBunch

from fastai_sparse.datasets import DataSourceConfig, MeshesDataset

from fastai_sparse.learner import SparseModelConfig, Learner

from fastai_sparse.callbacks import TimeLogger, SaveModelCallback, CSVLogger

# autoreload python modules on the fly when its source is changed

%load_ext autoreload

%autoreload 2

Importing modules specific to this task (standard 3D semantic markers, ScanNet)

from fastai_sparse.metrics import IouMean

from metrics import IouMeanFiltred

from callbacks import CSVLoggerIouByClass

from data import merge_fn

import transforms as T

Experiment environment / system metrics¶

experiment_name = 'scannet_unet_detailed'

utils.watermark(pandas=True)

virtualenv: (fastai_sparse) python: 3.6.8 nvidia driver: b'384.130' nvidia cuda: 9.0, V9.0.176 cudnn: 7.1.4 torch: 1.0.1.post2 pandas: 0.24.2 fastai: 1.0.48 fastai_sparse: 0.0.3.dev0

#!git log1 -n3

# TODO: write to file versions, and so on

fn_commit = Path('results', experiment_name, 'commit.txt')

fn_commit.parent.mkdir(parents=True, exist_ok=True)

!git log -n1 --stat > {str(fn_commit)}

!nvidia-smi

Fri Apr 12 00:27:45 2019

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 384.130 Driver Version: 384.130 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 GeForce GTX 108... Off | 00000000:28:00.0 On | N/A |

| 0% 54C P0 68W / 250W | 820MiB / 11163MiB | 2% Default |

+-------------------------------+----------------------+----------------------+

| 1 GeForce GTX 108... Off | 00000000:29:00.0 Off | N/A |

| 0% 40C P8 9W / 250W | 11MiB / 11172MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|=============================================================================|

| 0 1451 G /usr/lib/xorg/Xorg 502MiB |

| 0 2216 G compiz 223MiB |

| 0 2995 G /usr/lib/firefox/firefox 2MiB |

| 0 3109 G /usr/lib/firefox/firefox 2MiB |

| 0 4815 G ...uest-channel-token=10830730894666800651 86MiB |

+-----------------------------------------------------------------------------+

Notebook options¶

utils.wide_notebook()

# uncomment this lines if you want switch off interactive and save visaulisation as screenshoots:

# For rendering run command in terminal: `chromium-browser --remote-debugging-port=9222`

if True:

visualize.options.interactive = False

visualize.options.save_images = True

visualize.options.verbose = True

visualize.options.filename_pattern_image = Path('images', experiment_name, 'fig_{fig_number}')

Source¶

SOURCE_DIR = Path('data', 'scannet_merged_ply')

assert SOURCE_DIR.exists(), "Download data and run prepare_data.ipynb"

definition_of_spliting_dir = Path('data', 'ScanNet_Tasks_Benchmark')

assert definition_of_spliting_dir.exists()

os.listdir(SOURCE_DIR / 'scene0000_01')

['scene0000_01.merged.ply']

Train / Valid / Test lists¶

MeshesDataset uses the pandas DataFrame as a datasource to determine the splitting into testing/valid as well as files locations.

def find_files(path, ext='merged.ply'):

pattern = str(path / '*' / ('*' + ext))

fnames = glob.glob(pattern)

return fnames

def get_df_list(verbose=0):

# train /valid / test splits

fn_lists = {}

fn_lists['train'] = definition_of_spliting_dir / 'scannetv1_train.txt'

fn_lists['valid'] = definition_of_spliting_dir / 'scannetv1_val.txt'

fn_lists['test'] = definition_of_spliting_dir / 'scannetv1_test.txt'

for datatype in ['train', 'valid', 'test']:

assert fn_lists[datatype].exists(), datatype

dfs = {}

total = 0

for datatype in ['train', 'valid', 'test']:

df = pd.read_csv(fn_lists[datatype], header=None, names=['example_id'])

df = df.assign(datatype=datatype)

df = df.assign(subdir=df.example_id)

df = df.sort_values('example_id')

dfs[datatype] = df

if verbose:

print(f"{datatype:5} counts: {len(df):>4}")

total += len(df)

if verbose:

print(f"total: {total}")

return dfs

df_list = get_df_list(verbose=1)

train counts: 1045 valid counts: 156 test counts: 312 total: 1513

df_list['valid'].head(10)

| example_id | datatype | subdir | |

|---|---|---|---|

| 7 | scene0003_00 | valid | scene0003_00 |

| 8 | scene0003_01 | valid | scene0003_01 |

| 9 | scene0003_02 | valid | scene0003_02 |

| 111 | scene0033_00 | valid | scene0033_00 |

| 130 | scene0034_00 | valid | scene0034_00 |

| 131 | scene0034_01 | valid | scene0034_01 |

| 132 | scene0034_02 | valid | scene0034_02 |

| 45 | scene0054_00 | valid | scene0054_00 |

| 101 | scene0056_00 | valid | scene0056_00 |

| 102 | scene0056_01 | valid | scene0056_01 |

DataSets config¶

You can create MeshDataset using the configuration.

train_source_config = DataSourceConfig(root_dir=SOURCE_DIR,

df=df_list['train'],

batch_size=32,

num_workers=8,

ply_label_name='label',

file_ext='.merged.ply',

)

train_source_config

DataSourceConfig; root_dir: data/scannet_merged_ply batch_size: 32 num_workers: 8 file_ext: .merged.ply ply_label_name: label init_numpy_random_seed: True Items count: 1045

valid_source_config = DataSourceConfig(root_dir=SOURCE_DIR,

df=df_list['valid'],

batch_size=2,

num_workers=2,

ply_label_name='label',

file_ext='.merged.ply',

#init_numpy_random_seed=False,

)

valid_source_config

DataSourceConfig; root_dir: data/scannet_merged_ply batch_size: 2 num_workers: 2 file_ext: .merged.ply ply_label_name: label init_numpy_random_seed: True Items count: 156

Datasets¶

train_items = MeshesDataset.from_source_config(train_source_config)

valid_items = MeshesDataset.from_source_config(valid_source_config)

train_items.check()

valid_items.check()

Load file names: 100%|██████████| 1045/1045 [00:00<00:00, 6015.17it/s] Load file names: 100%|██████████| 156/156 [00:00<00:00, 6766.27it/s] Check files exist: 100%|██████████| 1045/1045 [00:00<00:00, 75496.89it/s] Check files exist: 100%|██████████| 156/156 [00:00<00:00, 49659.34it/s]

Let's see what we've done with one example.

In interactive mode, you can switch the color parameters (labels, rgb) to display labels or scan colors.

o = train_items[0]

o.describe()

o.show()

MeshItem (scene0000_00.merged.ply) vertices: shape: (81369, 3) dtype: float64 min: -0.01657, max: 8.74040, mean: 3.19051 faces: shape: (153587, 3) dtype: int64 min: 0, max: 81368, mean: 40549.68796 colors: shape: (81369, 4) dtype: uint8 min: 1.00000, max: 255.00000, mean: 145.80430 labels: shape: (81369,) dtype: uint16 min: 0.00000, max: 230.00000, mean: 12.97057 Colors from vertices Labels from vertices

# short representation

train_items

MeshesDataset (1045 items) (scene0000_00.merged.ply, vertices:81369),(scene0000_01.merged.ply, vertices:80583),(scene0000_02.merged.ply, vertices:86001),(scene0001_00.merged.ply, vertices:125526),(scene0001_01.merged.ply, vertices:124429) Path: data/scannet_merged_ply

Transforms¶

Define Label remaping¶

df_classes = pd.read_csv(definition_of_spliting_dir / 'classes_SemVoxLabel-nyu40id.txt', header=None, names=['class_id', 'name'], delim_whitespace=True)

df_classes.T

| 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 13 | 14 | 15 | 16 | 17 | 18 | 19 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| class_id | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 | 14 | 16 | 24 | 28 | 33 | 34 | 36 | 39 |

| name | wall | floor | cabinet | bed | chair | sofa | table | door | window | bookshelf | picture | counter | desk | curtain | refridgerator | shower | toilet | sink | bathtub | otherfurniture |

# Manual definition

assert (np.array([1,2,3,4,5,6,7,8,9,10,11,12,14,16,24,28,33,34,36,39]) == df_classes.class_id).all()

# Map relevant classes to {0,1,...,19}, and ignored classes to -100

remapper = np.ones(3000, dtype=np.int32) * (-100)

for i, x in enumerate([1,2,3,4,5,6,7,8,9,10,11,12,14,16,24,28,33,34,36,39]):

remapper[x] = i

Define transforms¶

In order to reproduce the example of SparseConvNet, the same transformations have been redone, but in the manner of fast.ai transformations.

The following cells define the transformations: preprocessing (PRE_TFMS); augmentation (AUGS_); and transformation to convert the points cloud to a sparse representation (SPARSE_TFMS). Sparse representation is the input format for the SparseConvNet model and contains a list of voxels and their features

PRE_TFMS = [T.to_points_cloud(method='vertices', normals = False),

T.remap_labels(remapper=remapper, inplace=False),

T.colors_normalize(),

T.normalize_spatial(),

]

_scale = 20

AUGS_TRAIN = [

T.noise_affine(amplitude=0.1),

T.flip_x(p=0.5),

T.scale(scale=_scale),

T.rotate_XY(),

T.elastic(gran=6 * _scale // 50, mag=40 * _scale / 50),

T.elastic(gran=20 * _scale // 50, mag=160 * _scale / 50),

T.specific_translate(full_scale=4096),

T.crop_points(low=0, high=4096),

T.colors_noise(amplitude=0.1),

]

AUGS_VALID = [

T.noise_affine(amplitude=0.1),

T.flip_x(p=0.5),

T.scale(scale=_scale),

T.rotate_XY(),

T.translate(offset=4096 / 2),

T.rand_translate(offset=(-2, 2, 3)), # low, high, dimention

T.specific_translate(full_scale=4096),

T.crop_points(low=0, high=4096),

T.colors_noise(amplitude=0.1),

]

SPARSE_TFMS = [

T.merge_features(ones=False, colors=True, normals=False),

T.to_sparse_voxels(),

]

# train/valid transforms

tfms = (

PRE_TFMS + AUGS_TRAIN + SPARSE_TFMS,

PRE_TFMS + AUGS_VALID + SPARSE_TFMS,

)

Apply transforms¶

Now we will apply the transformation to the data sets.

train_items.transform(tfms[0])

pass

valid_items.transform(tfms[1])

pass

Let's see what we got in results of train and valid tranformations for the first example:

Train¶

o = train_items[0]

o.describe()

o.show(colors=o.data['features'])

id: scene0000_00 coords shape: (81369, 3) dtype: int64 min: 1118, max: 2597, mean: 2040.09011 features shape: (81369, 3) dtype: float32 min: -1.06819, max: 1.07337, mean: -0.17256 x shape: (81369,) dtype: int64 min: 1118, max: 1319, mean: 1218.68753 y shape: (81369,) dtype: int64 min: 2410, max: 2597, mean: 2492.88749 z shape: (81369,) dtype: int64 min: 2374, max: 2457, mean: 2408.69531 labels shape: (81369,) dtype: int64 min: -100, max: 17, mean: -48.51506 voxels: 53927 points / voxels: 1.5088731062362082

Valid¶

o = valid_items[0]

o.describe()

o.show(colors=o.data['features'])

id: scene0003_00 coords shape: (82063, 3) dtype: int64 min: 1047, max: 2112, mean: 1447.58966 features shape: (82063, 3) dtype: float32 min: -1.18008, max: 0.98457, mean: 0.03542 x shape: (82063,) dtype: int64 min: 1047, max: 1150, mean: 1085.01579 y shape: (82063,) dtype: int64 min: 2002, max: 2112, mean: 2046.15337 z shape: (82063,) dtype: int64 min: 1185, max: 1257, mean: 1211.59982 labels shape: (82063,) dtype: int64 min: -100, max: 12, mean: -32.75373 voxels: 17483 points / voxels: 4.693874049076245

Points per voxel distribution¶

# iterations are in one thread

ppv_valid = []

for o in tqdm(valid_items, total=len(valid_items)):

n_voxels = o.num_voxels()

n_points = len(o.data['coords'])

ppv_valid.append(n_points / n_voxels)

ppv_train = []

for o in tqdm(train_items, total=len(train_items)):

n_voxels = o.num_voxels()

n_points = len(o.data['coords'])

ppv_train.append(n_points / n_voxels)

100%|██████████| 156/156 [00:13<00:00, 10.97it/s] 100%|██████████| 1045/1045 [05:00<00:00, 4.30it/s]

fig, ax = plt.subplots()

ax.hist(ppv_train, bins=100, label='train')

ax.hist(ppv_valid, bins=100, label='valid')

ax.legend()

ax.set_title('Points per voxel distribution')

ax.set_xlabel('Points per voxel')

ax.set_ylabel('Number of examples')

pass

DataBunch¶

In fast.ai the data is represented DataBunch which contains train, valid and optionally test data loaders.

data = SparseDataBunch.create(train_ds=train_items,

valid_ds=valid_items,

collate_fn=merge_fn)

data.describe()

Train: 1045, shuffle: True, batch_size: 32, num_workers: 8, num_batches: 32, drop_last: True Valid: 156, shuffle: False, batch_size: 2, num_workers: 2, num_batches: 78, drop_last: False

Dataloader idle run speed measurement¶

print("cpu_count:", cpu_count())

!lscpu | grep "Model"

cpu_count: 16 Model name: AMD Ryzen 7 1700 Eight-Core Processor

# train

t = tqdm(enumerate(data.train_dl), total=len(data.train_dl))

for i, batch in t:

pass

100%|██████████| 32/32 [01:48<00:00, 1.87s/it]

# valid

t = tqdm(enumerate(data.valid_dl), total=len(data.valid_dl))

for i, batch in t:

pass

100%|██████████| 78/78 [00:10<00:00, 7.36it/s]

# spatial_size is full_scale

model_config = SparseModelConfig(spatial_size=4096, num_classes=20, num_input_features=3, mode=4,

m=16, num_planes_coeffs=[1, 2, 3, 4, 5, 6, 7])

model_config

SparseModelConfig; spatial_size: 4096 dimension: 3 block_reps: 1 m: 16 num_planes: [16, 32, 48, 64, 80, 96, 112] residual_blocks: False num_classes: 20 num_input_features: 3 mode: 4 downsample: [2, 2] bias: False

class Model(nn.Module):

def __init__(self, cfg):

nn.Module.__init__(self)

self.sparseModel = scn.Sequential(

scn.InputLayer(cfg.dimension, cfg.spatial_size, mode=cfg.mode),

scn.SubmanifoldConvolution(cfg.dimension, nIn=cfg.num_input_features, nOut=cfg.m, filter_size=3, bias=cfg.bias),

scn.UNet(cfg.dimension, cfg.block_reps, cfg.num_planes, residual_blocks=cfg.residual_blocks, downsample=cfg.downsample),

scn.BatchNormReLU(cfg.m),

scn.OutputLayer(cfg.dimension),

)

self.linear = nn.Linear(cfg.m, cfg.num_classes)

def forward(self, xb):

x = [xb['coords'], xb['features']]

x = self.sparseModel(x)

x = self.linear(x)

return x

model = Model(model_config)

utils.print_trainable_parameters(model)

Total: 2,689,860

| name | number | shape | |

|---|---|---|---|

| 0 | sparseModel.1.weight | 1296 | 27 x 3 x 16 |

| 1 | sparseModel.2.0.0.weight | 16 | 16 |

| 2 | sparseModel.2.0.0.bias | 16 | 16 |

| 3 | sparseModel.2.0.1.weight | 6912 | 27 x 16 x 16 |

| 4 | sparseModel.2.1.1.0.weight | 16 | 16 |

| 5 | sparseModel.2.1.1.0.bias | 16 | 16 |

| 6 | sparseModel.2.1.1.1.weight | 4096 | 8 x 16 x 32 |

| 7 | sparseModel.2.1.1.2.0.0.weight | 32 | 32 |

| 8 | sparseModel.2.1.1.2.0.0.bias | 32 | 32 |

| 9 | sparseModel.2.1.1.2.0.1.weight | 27648 | 27 x 32 x 32 |

| 10 | sparseModel.2.1.1.2.1.1.0.weight | 32 | 32 |

| 11 | sparseModel.2.1.1.2.1.1.0.bias | 32 | 32 |

| 12 | sparseModel.2.1.1.2.1.1.1.weight | 12288 | 8 x 32 x 48 |

| 13 | sparseModel.2.1.1.2.1.1.2.0.0.weight | 48 | 48 |

| 14 | sparseModel.2.1.1.2.1.1.2.0.0.bias | 48 | 48 |

| 15 | sparseModel.2.1.1.2.1.1.2.0.1.weight | 62208 | 27 x 48 x 48 |

| 16 | sparseModel.2.1.1.2.1.1.2.1.1.0.weight | 48 | 48 |

| 17 | sparseModel.2.1.1.2.1.1.2.1.1.0.bias | 48 | 48 |

| 18 | sparseModel.2.1.1.2.1.1.2.1.1.1.weight | 24576 | 8 x 48 x 64 |

| 19 | sparseModel.2.1.1.2.1.1.2.1.1.2.0.0.weight | 64 | 64 |

| 20 | sparseModel.2.1.1.2.1.1.2.1.1.2.0.0.bias | 64 | 64 |

| 21 | sparseModel.2.1.1.2.1.1.2.1.1.2.0.1.weight | 110592 | 27 x 64 x 64 |

| 22 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.0.weight | 64 | 64 |

| 23 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.0.bias | 64 | 64 |

| 24 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.1.weight | 40960 | 8 x 64 x 80 |

| 25 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.0.0.weight | 80 | 80 |

| 26 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.0.0.bias | 80 | 80 |

| 27 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.0.1.weight | 172800 | 27 x 80 x 80 |

| 28 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.0.weight | 80 | 80 |

| 29 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.0.bias | 80 | 80 |

| 30 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.1.weight | 61440 | 8 x 80 x 96 |

| 31 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.0.0.weight | 96 | 96 |

| 32 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.0.0.bias | 96 | 96 |

| 33 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.0.1.weight | 248832 | 27 x 96 x 96 |

| 34 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.0.weight | 96 | 96 |

| 35 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.0.bias | 96 | 96 |

| 36 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.1.weight | 86016 | 8 x 96 x 112 |

| 37 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.0.0.weight | 112 | 112 |

| 38 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.0.0.bias | 112 | 112 |

| 39 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.0.1.weight | 338688 | 27 x 112 x 112 |

| 40 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.3.weight | 112 | 112 |

| 41 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.3.bias | 112 | 112 |

| 42 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.4.weight | 86016 | 8 x 112 x 96 |

| 43 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.3.0.weight | 192 | 192 |

| 44 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.3.0.bias | 192 | 192 |

| 45 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.2.3.1.weight | 497664 | 27 x 192 x 96 |

| 46 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.3.weight | 96 | 96 |

| 47 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.3.bias | 96 | 96 |

| 48 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.1.1.4.weight | 61440 | 8 x 96 x 80 |

| 49 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.3.0.weight | 160 | 160 |

| 50 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.3.0.bias | 160 | 160 |

| 51 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.2.3.1.weight | 345600 | 27 x 160 x 80 |

| 52 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.3.weight | 80 | 80 |

| 53 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.3.bias | 80 | 80 |

| 54 | sparseModel.2.1.1.2.1.1.2.1.1.2.1.1.4.weight | 40960 | 8 x 80 x 64 |

| 55 | sparseModel.2.1.1.2.1.1.2.1.1.2.3.0.weight | 128 | 128 |

| 56 | sparseModel.2.1.1.2.1.1.2.1.1.2.3.0.bias | 128 | 128 |

| 57 | sparseModel.2.1.1.2.1.1.2.1.1.2.3.1.weight | 221184 | 27 x 128 x 64 |

| 58 | sparseModel.2.1.1.2.1.1.2.1.1.3.weight | 64 | 64 |

| 59 | sparseModel.2.1.1.2.1.1.2.1.1.3.bias | 64 | 64 |

| 60 | sparseModel.2.1.1.2.1.1.2.1.1.4.weight | 24576 | 8 x 64 x 48 |

| 61 | sparseModel.2.1.1.2.1.1.2.3.0.weight | 96 | 96 |

| 62 | sparseModel.2.1.1.2.1.1.2.3.0.bias | 96 | 96 |

| 63 | sparseModel.2.1.1.2.1.1.2.3.1.weight | 124416 | 27 x 96 x 48 |

| 64 | sparseModel.2.1.1.2.1.1.3.weight | 48 | 48 |

| 65 | sparseModel.2.1.1.2.1.1.3.bias | 48 | 48 |

| 66 | sparseModel.2.1.1.2.1.1.4.weight | 12288 | 8 x 48 x 32 |

| 67 | sparseModel.2.1.1.2.3.0.weight | 64 | 64 |

| 68 | sparseModel.2.1.1.2.3.0.bias | 64 | 64 |

| 69 | sparseModel.2.1.1.2.3.1.weight | 55296 | 27 x 64 x 32 |

| 70 | sparseModel.2.1.1.3.weight | 32 | 32 |

| 71 | sparseModel.2.1.1.3.bias | 32 | 32 |

| 72 | sparseModel.2.1.1.4.weight | 4096 | 8 x 32 x 16 |

| 73 | sparseModel.2.3.0.weight | 32 | 32 |

| 74 | sparseModel.2.3.0.bias | 32 | 32 |

| 75 | sparseModel.2.3.1.weight | 13824 | 27 x 32 x 16 |

| 76 | sparseModel.3.weight | 16 | 16 |

| 77 | sparseModel.3.bias | 16 | 16 |

| 78 | linear.weight | 320 | 20 x 16 |

| 79 | linear.bias | 20 | 20 |

learner creation¶

Learner is core fast.ai class which contains model architecture, databunch and optimizer options and implement train loop and prediction

learn = Learner(data, model,

opt_func=partial(optim.Adam),

path=str(Path('results', experiment_name)))

Learning Rate finder¶

We use Learning Rate Finder provided by fast.ai library to find the optimal learning rate

learn.lr_find()

LR Finder is complete, type {learner_name}.recorder.plot() to see the graph.

learn.recorder.plot()

Train¶

To visualize the learning process, we specify some additional callbacks

learn.callbacks = []

cb_iouf = IouMeanFiltred(learn, model_config.num_classes)

learn.callbacks.append(cb_iouf)

learn.callbacks.append(TimeLogger(learn))

learn.callbacks.append(CSVLogger(learn))

learn.callbacks.append(CSVLoggerIouByClass(learn, cb_iouf, class_names=list(df_classes.name), filename='iouf_by_class'))

learn.callbacks.append(SaveModelCallback(learn, every='epoch', name='weights', overwrite=True))

learn.fit(10)

| epoch | train_loss | valid_loss | train_time | valid_time | train_iouf | valid_iouf | time |

|---|---|---|---|---|---|---|---|

| 0 | 1.874499 | 1.239746 | 205.347455 | 23.016982 | 0.070676 | 0.084275 | 03:48 |

| 1 | 1.414802 | 1.004896 | 206.979722 | 23.101352 | 0.085191 | 0.092405 | 03:50 |

| 2 | 1.191607 | 0.921368 | 209.370849 | 23.391099 | 0.098592 | 0.109361 | 03:52 |

| 3 | 1.064442 | 0.879592 | 208.538107 | 23.218478 | 0.099031 | 0.114305 | 03:51 |

| 4 | 0.992228 | 0.856975 | 207.690542 | 23.435412 | 0.107050 | 0.115467 | 03:51 |

| 5 | 0.941356 | 0.815237 | 203.588629 | 22.824540 | 0.111777 | 0.121448 | 03:46 |

| 6 | 0.905555 | 0.812317 | 207.703450 | 23.385710 | 0.118956 | 0.130800 | 03:51 |

| 7 | 0.877082 | 0.756652 | 206.829057 | 23.100073 | 0.130821 | 0.154855 | 03:50 |

| 8 | 0.848020 | 0.784317 | 206.485426 | 23.279963 | 0.144968 | 0.155150 | 03:49 |

| 9 | 0.824155 | 0.746110 | 204.081624 | 22.927912 | 0.150323 | 0.162001 | 03:47 |

metrics.py:112: UserWarning: Wrong example is found: all `labels_raw` < 0. Id=scene0509_00

warnings.warn(f"Wrong example is found: all `labels_raw` < 0. Id={xb['ids'][k]}")

It takes 512 epochs to get IoU ~ 0.44 by this simple model.

learn.fit(512)

Results¶

learn.recorder.plot()

learn.recorder.plot_losses()

learn.recorder.plot_lr()

learn.recorder.plot_metrics()

cb = learn.find_callback(CSVLoggerIouByClass)

cb.read_logged_file().iloc[-1:].T

| 19 | |

|---|---|

| epoch | 9 |

| datatype | valid |

| mean_iou | 0.162001 |

| wall | 0.782948 |

| floor | 0.424996 |

| cabinet | 0.938655 |

| bed | 0.378823 |

| chair | 0 |

| sofa | 0.193514 |

| table | 0.000129 |

| door | 0.219227 |

| window | 0.001197 |

| bookshelf | 0 |

| picture | 0.30053 |

| counter | 0 |

| desk | 0 |

| curtain | 0 |

| refridgerator | 0 |

| shower | 0 |

| toilet | 0 |

| sink | 0 |

| bathtub | 0 |

| otherfurniture | 0 |

Save¶

Save manually

fn_checkpoint = learn.path / learn.model_dir / 'learn_model.pth'

#torch.save(epoch, 'epoch.pth')

torch.save(model.state_dict(), fn_checkpoint)

Check saved weights

model.load_state_dict(torch.load(fn_checkpoint))

Validate¶

Load weights¶

fn_checkpoint = learn.path / learn.model_dir / 'weights.pth'

print(fn_checkpoint)

assert fn_checkpoint.exists()

assert os.path.isfile(fn_checkpoint)

fn_epoch = learn.path / learn.model_dir / 'weights_epoch.pth'

print("Epoch:", torch.load(fn_epoch))

results/scannet_unet_detailed/models/weights.pth Epoch: 9

learn.model.load_state_dict(torch.load(fn_checkpoint)['model'])

Validate¶

# remove callback, because CSVLogger not worked with `learn.validate`

learn.callbacks = []

cb_iouf = IouMeanFiltred(learn, model_config.num_classes)

validation_callbacks = [cb_iouf, TimeLogger(learn)]

np.random.seed(42)

last_metrics = learn.validate(callbacks=validation_callbacks)

last_metrics

[0.7385204, 0.0, 22.492323875427246, 0, 0.16579058166051072]

Internal validation details

print(repr(cb_iouf._d['valid']['cm']))

cb_iouf._d['valid']['iou']

array([[5219183, 49143, 53814, 27336, ..., 6722, 10765, 15817, 2493],

[ 21218, 313395, 4806, 33068, ..., 70, 3487, 1600, 299],

[ 45583, 14697, 3402678, 4787, ..., 0, 1930, 386, 328],

[ 6379, 34626, 2530, 190044, ..., 0, 2174, 550, 123],

...,

[ 0, 0, 0, 0, ..., 0, 0, 0, 0],

[ 0, 0, 0, 0, ..., 0, 0, 0, 0],

[ 0, 0, 0, 0, ..., 0, 0, 0, 0],

[ 0, 0, 0, 0, ..., 0, 0, 0, 0]], dtype=uint64)

0.16579058166051072

cb_iouf._d['valid']['cm']

array([[5219183, 49143, 53814, 27336, ..., 6722, 10765, 15817, 2493],

[ 21218, 313395, 4806, 33068, ..., 70, 3487, 1600, 299],

[ 45583, 14697, 3402678, 4787, ..., 0, 1930, 386, 328],

[ 6379, 34626, 2530, 190044, ..., 0, 2174, 550, 123],

...,

[ 0, 0, 0, 0, ..., 0, 0, 0, 0],

[ 0, 0, 0, 0, ..., 0, 0, 0, 0],

[ 0, 0, 0, 0, ..., 0, 0, 0, 0],

[ 0, 0, 0, 0, ..., 0, 0, 0, 0]], dtype=uint64)

cb_iouf._d['valid']['iou_per_class']

array([7.880392e-01, 4.225936e-01, 9.400460e-01, 3.798183e-01, 2.508623e-18, 1.968258e-01, 4.778085e-04, 2.801127e-01,

8.102176e-04, 9.072681e-18, 3.070880e-01, 1.622007e-17, 2.669728e-17, 8.308270e-18, 3.822776e-17, 9.639483e-17,

1.401935e-16, 3.400667e-17, 4.517528e-17, 8.165265e-17])

cb_iouf._d['valid']['iou']

0.16579058166051072