How to Segment Buildings on Drone Imagery with Fast.ai & Cloud-Native GeoData Tools¶

An Interactive Intro to Geospatial Deep Learning on Google Colab¶

by @daveluo

In this Google Colab notebook and accompanying Medium post, we will learn all the code and concepts comprising a complete workflow to automatically detect and delineate building footprints (instance segmentation) from drone imagery with cutting-edge deep learning models.

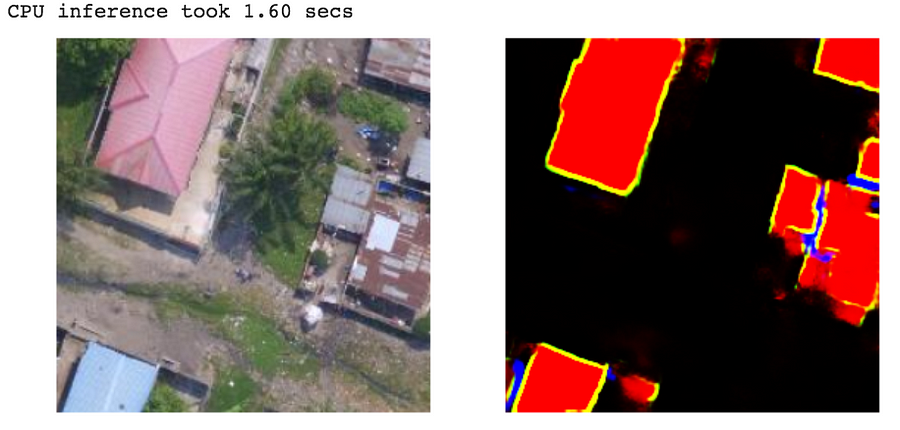

All you'll need is a Google account, an internet connection, and a couple of hours to learn how to make the data & model that learns to make something like this:

In modular steps, we'll learn to…¶

Preprocess image geoTIFFs and manually labeled data geoJSON files into training data for deep learning:¶

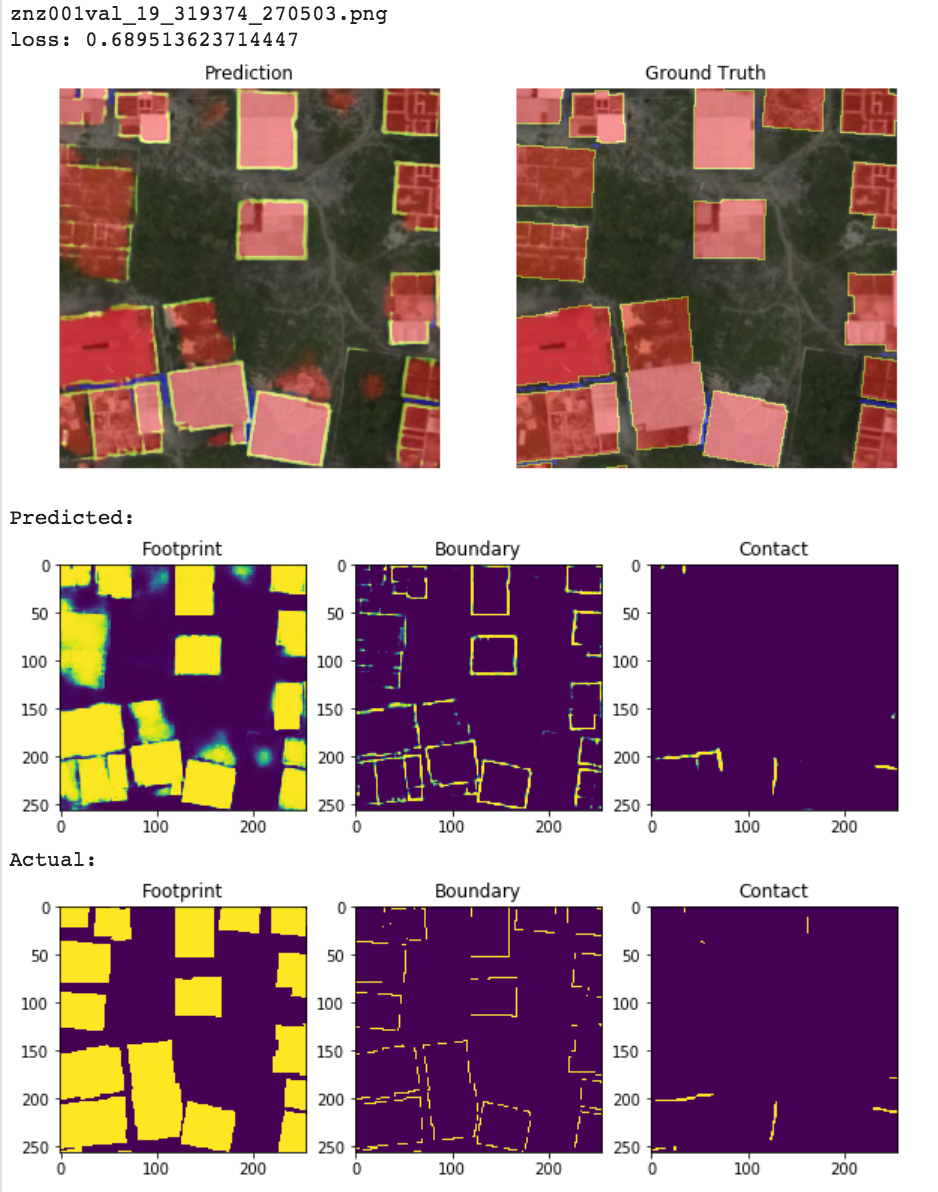

Create a U-net segmentation model to predict what pixels in an image represent buildings (and building-related features):¶

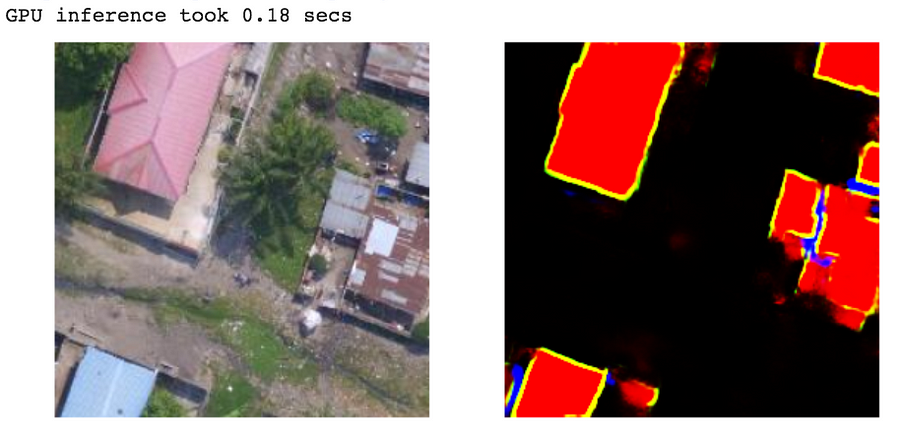

Test our model's performance on unseen imagery with GPU or CPU:¶

Post-process raw model outputs into geo-registered building shapes evaluated against ground truth:¶

And along the way, we'll get familiar with great geospatial data & deep learning tools/resources like:¶

- Geopandas: "an open source project to make working with geospatial data in python easier. GeoPandas extends the datatypes used by pandas to allow spatial operations on geometric types."

- Rasterio: "reads and writes geospatial raster datasets"

- Supermercado: "supercharger for Mercantile" (spherical mercator tile and coordinate utilities)

- Rio-tiler: "Rasterio plugin to read mercator tiles from Cloud Optimized GeoTIFF dataset"

- Solaris: "Geospatial Machine Learning Analysis Toolkit" by CosmiQ Works

- Cloud-Optimized GeoTIFFs (COG): "An imagery format for cloud-native geospatial processing"

- Spatio-Temporal Asset Catalogs (STAC): "Enabling online search and discovery of geospatial assets"

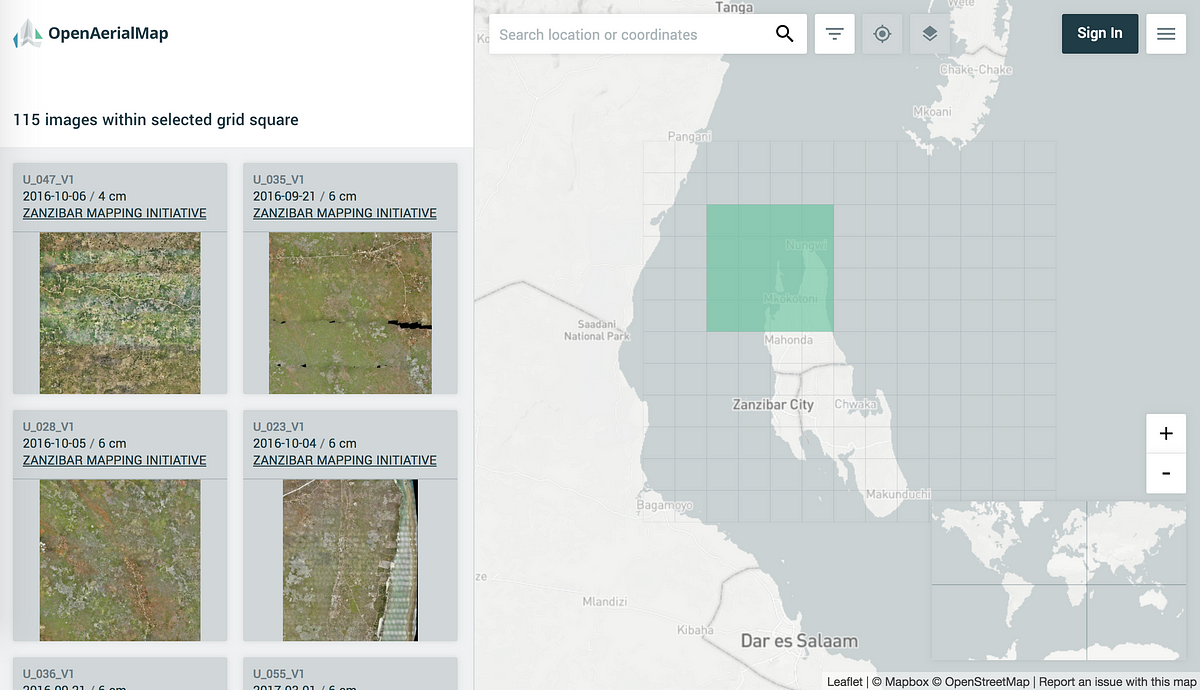

- OpenAerialMap: "The open collection of aerial imagery"

- Fast.ai for geospatial deep learning: "The fastai library simplifies training fast and accurate neural nets using modern best practices" built on the PyTorch deep learning platform.
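To make the "spherical mercator tile and coordinate utilities" idea concrete, here is a minimal pure-Python sketch of the standard XYZ ("slippy map") tile math that libraries such as Mercantile wrap. The function names `lonlat_to_tile` and `tile_bounds` and the sample coordinate are illustrative assumptions, not part of any library mentioned above; in practice you would likely reach for `mercantile.tile` and `mercantile.bounds` instead.

```python
import math


def lonlat_to_tile(lon, lat, zoom):
    """Return the (x, y) index of the XYZ web mercator tile containing lon/lat."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    x = int((lon + 180.0) / 360.0 * n)
    lat_rad = math.radians(lat)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so points on or beyond the map edge still map to a valid tile.
    x = min(max(x, 0), n - 1)
    y = min(max(y, 0), n - 1)
    return x, y


def tile_bounds(x, y, zoom):
    """Return (west, south, east, north) of a tile in degrees."""
    n = 2 ** zoom

    def lon_of(xi):
        return xi / n * 360.0 - 180.0

    def lat_of(yi):
        return math.degrees(math.atan(math.sinh(math.pi * (1 - 2 * yi / n))))

    return lon_of(x), lat_of(y + 1), lon_of(x + 1), lat_of(y)


if __name__ == "__main__":
    # Arbitrary sample point and zoom level, chosen only for illustration.
    x, y = lonlat_to_tile(39.2, -6.2, 19)
    print("tile:", (x, y), "bounds:", tile_bounds(x, y, 19))
```

Each increase in zoom level splits every tile into four, which is why tile indices (and not pixel offsets) make a convenient key for cutting large imagery into training chips.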

How to get the most out of this tutorial:¶

This Colab notebook is our main learning resource - working interactively here is highly recommended!

Code is organized into modular sections, set up for installation/import of all required dependencies, and executable on either CPU or GPU runtimes (depending on the section). Links to load files generated at each step are also included so you can pick up and start from any section. Inline # comments (& references for further reading) are provided within code cells to explain steps or nuances in more detail as needed. Executing all code cells end-to-end takes <1 hour on GPU.

The Medium post serves as a high-level conceptual walkthrough and maps directly to sections within the Colab notebook. The post works best as a quick overview with handy bookmarks to Colab or viewed side-by-side with this Colab notebook as a code & concept companion set.

This tutorial assumes you have a working knowledge of Python, data analysis with Pandas, making training/validation/test sets for machine learning, and a beginner practitioner's grasp of deep learning concepts, or the motivation to gain what knowledge you're missing by following the ample references linked throughout this post and notebook.

With that as mental prep, let's do some geospatial deep learning!¶

Pre-Processing¶

Note that the preprocessing section can be run on a CPU runtime:

Change in menu: Runtime > Change runtime type > Hardware Accelerator = None
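If you are unsure which runtime is currently attached, a quick check along these lines can help. This is a minimal sketch that assumes GPU runtimes expose the NVIDIA `nvidia-smi` tool on the PATH; the helper name `gpu_runtime_available` is made up for illustration.

```python
import shutil
import subprocess


def gpu_runtime_available():
    """Return True if nvidia-smi is present and lists at least one GPU."""
    if shutil.which("nvidia-smi") is None:
        return False
    try:
        # "nvidia-smi -L" prints one line per detected GPU.
        result = subprocess.run(
            ["nvidia-smi", "-L"], capture_output=True, text=True, timeout=10
        )
    except (OSError, subprocess.SubprocessError):
        return False
    return result.returncode == 0 and "GPU" in result.stdout


print("GPU runtime" if gpu_runtime_available() else "CPU runtime")
```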

Install all the geo things¶

Pip install the geodata processing packages we'll be using, test that they import correctly in Colab, and create our output data directories.

!add-apt-repository ppa:ubuntugis/ubuntugis-unstable -y

!apt-get update

!apt-get install python-numpy gdal-bin libgdal-dev python3-rtree

!pip install rasterio

!pip install geopandas

!pip install descartes

!pip install solaris

!pip install rio-tiler
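The commands above cover installation; the import test and output directory creation mentioned in the step description could look something like the sketch below. The helper names, the `data` root, and the `images`/`masks` subfolders are hypothetical placeholders, not the notebook's actual layout.

```python
import importlib
from pathlib import Path


def failed_imports(names):
    """Try importing each module; return {name: error} for those that fail."""
    failures = {}
    for name in names:
        try:
            importlib.import_module(name)
        except Exception as exc:  # binary mismatches can raise more than ImportError
            failures[name] = repr(exc)
    return failures


def make_output_dirs(root, subdirs):
    """Create root/subdir for each subdir (no error if it already exists)."""
    paths = [Path(root) / sub for sub in subdirs]
    for path in paths:
        path.mkdir(parents=True, exist_ok=True)
    return paths


if __name__ == "__main__":
    # Import names for the packages installed above (rio-tiler imports as rio_tiler).
    required = ["rasterio", "geopandas", "descartes", "solaris", "rio_tiler"]
    problems = failed_imports(required)
    print("import problems:", problems or "none")

    # Hypothetical directory layout, for illustration only.
    for path in make_output_dirs("data", ["images", "masks"]):
        print("ready:", path)
```

Actually importing each package, instead of only checking that it is installed, also surfaces mismatches between the Python wheels and the system GDAL libraries that the apt step upgrades.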

Fetched 7,213 kB in 9s (832 kB/s)

Reading package lists... Done

Reading package lists... Done

Building dependency tree

Reading state information... Done

python-numpy is already the newest version (1:1.13.3-2ubuntu1).

python-numpy set to manually installed.

The following packages will be REMOVED:

libogdi3.2 python-gdal

49 upgraded, 55 newly installed, 2 to remove and 63 not upgraded.

Need to get 92.2 MB of archives.

After this operation, 168 MB of additional disk space will be used.

Fetched 92.2 MB in 1min 47s (864 kB/s)

Extracting templates from packages: 100%

(Reading database ... 135004 files and directories currently installed.)

Preparing to unpack .../32-gdal-data_3.0.2+dfsg-1~bionic2_all.deb ...

Unpacking gdal-data (3.0.2+dfsg-1~bionic2) over (2.2.3+dfsg-2) ...

Selecting previously unselected package libcfitsio5:amd64.

Preparing to unpack .../33-libcfitsio5_3.430-2_amd64.deb ...

Unpacking libcfitsio5:amd64 (3.430-2) ...

Selecting previously unselected package libgeos-3.8.0:amd64.

Preparing to unpack .../34-libgeos-3.8.0_3.8.0-1~bionic0_amd64.deb ...

Unpacking libgeos-3.8.0:amd64 (3.8.0-1~bionic0) ...

Preparing to unpack .../35-libgeos-c1v5_3.8.0-1~bionic0_amd64.deb ...

Unpacking libgeos-c1v5:amd64 (3.8.0-1~bionic0) over (3.6.2-1build2) ...

Preparing to unpack .../36-proj-data_6.2.1-1~bionic0_all.deb ...

Unpacking proj-data (6.2.1-1~bionic0) over (4.9.3-2) ...

Preparing to unpack .../37-libsqlite3-0_3.22.0-1ubuntu0.2_amd64.deb ...

Unpacking libsqlite3-0:amd64 (3.22.0-1ubuntu0.2) over (3.22.0-1ubuntu0.1) ...

Selecting previously unselected package libproj15:amd64.

Preparing to unpack .../38-libproj15_6.2.1-1~bionic0_amd64.deb ...

Unpacking libproj15:amd64 (6.2.1-1~bionic0) ...

Selecting previously unselected package libgeotiff5:amd64.

Preparing to unpack .../39-libgeotiff5_1.5.1-2~bionic1_amd64.deb ...

Unpacking libgeotiff5:amd64 (1.5.1-2~bionic1) ...

Preparing to unpack .../40-libopencv-imgcodecs3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-imgcodecs3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../41-python3-gdal_3.0.2+dfsg-1~bionic2_amd64.deb ...

Unpacking python3-gdal (3.0.2+dfsg-1~bionic2) over (2.2.3+dfsg-2) ...

(Reading database ... 135075 files and directories currently installed.)

Removing python-gdal (2.2.3+dfsg-2) ...

(Reading database ... 134965 files and directories currently installed.)

Preparing to unpack .../libvtk6.3_6.3.0+dfsg2-2build4~bionic3_amd64.deb ...

Unpacking libvtk6.3 (6.3.0+dfsg2-2build4~bionic3) over (6.3.0+dfsg1-11build1) ...

Preparing to unpack .../gdal-bin_3.0.2+dfsg-1~bionic2_amd64.deb ...

Unpacking gdal-bin (3.0.2+dfsg-1~bionic2) over (2.2.3+dfsg-2) ...

Preparing to unpack .../libgdal20_2.4.2+dfsg-1~bionic0_amd64.deb ...

Unpacking libgdal20 (2.4.2+dfsg-1~bionic0) over (2.2.3+dfsg-2) ...

(Reading database ... 135010 files and directories currently installed.)

Removing libogdi3.2 (3.2.0+ds-2) ...

Selecting previously unselected package libogdi4.1.

(Reading database ... 134992 files and directories currently installed.)

Preparing to unpack .../00-libogdi4.1_4.1.0+ds-1~bionic2_amd64.deb ...

Unpacking libogdi4.1 (4.1.0+ds-1~bionic2) ...

Preparing to unpack .../01-libspatialite7_4.3.0a-6~bionic2_amd64.deb ...

Unpacking libspatialite7:amd64 (4.3.0a-6~bionic2) over (4.3.0a-5build1) ...

Selecting previously unselected package libgdal26.

Preparing to unpack .../02-libgdal26_3.0.2+dfsg-1~bionic2_amd64.deb ...

Unpacking libgdal26 (3.0.2+dfsg-1~bionic2) ...

Preparing to unpack .../03-libopencv-viz3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-viz3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../04-libopencv-shape3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-shape3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../05-libopencv-video3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-video3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../06-libopencv-photo3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-photo3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../07-libopencv-imgproc3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-imgproc3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../08-libopencv-core3.2_3.2.0+dfsg-4ubuntu0.1+bionic3_amd64.deb ...

Unpacking libopencv-core3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Preparing to unpack .../09-libopencv3.2-java_3.2.0+dfsg-4ubuntu0.1+bionic3_all.deb ...

Unpacking libopencv3.2-java (3.2.0+dfsg-4ubuntu0.1+bionic3) over (3.2.0+dfsg-4ubuntu0.1) ...

Selecting previously unselected package libproj13:amd64.

Preparing to unpack .../10-libproj13_5.2.0-1~bionic0_amd64.deb ...

Unpacking libproj13:amd64 (5.2.0-1~bionic0) ...

Selecting previously unselected package libmysqlclient-dev.

Preparing to unpack .../11-libmysqlclient-dev_5.7.28-0ubuntu0.18.04.4_amd64.deb ...

Unpacking libmysqlclient-dev (5.7.28-0ubuntu0.18.04.4) ...

Selecting previously unselected package default-libmysqlclient-dev:amd64.

Preparing to unpack .../12-default-libmysqlclient-dev_1.0.4_amd64.deb ...

Unpacking default-libmysqlclient-dev:amd64 (1.0.4) ...

Selecting previously unselected package libblas3:amd64.

Preparing to unpack .../13-libblas3_3.7.1-4ubuntu1_amd64.deb ...

Unpacking libblas3:amd64 (3.7.1-4ubuntu1) ...

Selecting previously unselected package libblas-dev:amd64.

Preparing to unpack .../14-libblas-dev_3.7.1-4ubuntu1_amd64.deb ...

Unpacking libblas-dev:amd64 (3.7.1-4ubuntu1) ...

Selecting previously unselected package libarpack2-dev:amd64.

Preparing to unpack .../15-libarpack2-dev_3.5.0+real-2_amd64.deb ...

Unpacking libarpack2-dev:amd64 (3.5.0+real-2) ...

Selecting previously unselected package libsuperlu-dev:amd64.

Preparing to unpack .../16-libsuperlu-dev_5.2.1+dfsg1-3_amd64.deb ...

Unpacking libsuperlu-dev:amd64 (5.2.1+dfsg1-3) ...

Selecting previously unselected package libarmadillo-dev.

Preparing to unpack .../17-libarmadillo-dev_1%3a8.400.0+dfsg-2_amd64.deb ...

Unpacking libarmadillo-dev (1:8.400.0+dfsg-2) ...

Selecting previously unselected package libcfitsio-dev:amd64.

Preparing to unpack .../18-libcfitsio-dev_3.430-2_amd64.deb ...

Unpacking libcfitsio-dev:amd64 (3.430-2) ...

Selecting previously unselected package libcfitsio-doc.

Preparing to unpack .../19-libcfitsio-doc_3.430-2_all.deb ...

Unpacking libcfitsio-doc (3.430-2) ...

Selecting previously unselected package libcharls-dev:amd64.

Preparing to unpack .../20-libcharls-dev_1.1.0+dfsg-2_amd64.deb ...

Unpacking libcharls-dev:amd64 (1.1.0+dfsg-2) ...

Selecting previously unselected package libdapserver7v5:amd64.

Preparing to unpack .../21-libdapserver7v5_3.19.1-2build1_amd64.deb ...

Unpacking libdapserver7v5:amd64 (3.19.1-2build1) ...

Selecting previously unselected package libdap-dev:amd64.

Preparing to unpack .../22-libdap-dev_3.19.1-2build1_amd64.deb ...

Unpacking libdap-dev:amd64 (3.19.1-2build1) ...

Selecting previously unselected package libfyba-dev:amd64.

Preparing to unpack .../23-libfyba-dev_4.1.1-3_amd64.deb ...

Unpacking libfyba-dev:amd64 (4.1.1-3) ...

Selecting previously unselected package libepsilon-dev:amd64.

Preparing to unpack .../24-libepsilon-dev_0.9.2+dfsg-2_amd64.deb ...

Unpacking libepsilon-dev:amd64 (0.9.2+dfsg-2) ...

Selecting previously unselected package libfreexl-dev:amd64.

Preparing to unpack .../25-libfreexl-dev_1.0.5-1_amd64.deb ...

Unpacking libfreexl-dev:amd64 (1.0.5-1) ...

Selecting previously unselected package libgeos-dev.

Preparing to unpack .../26-libgeos-dev_3.8.0-1~bionic0_amd64.deb ...

Unpacking libgeos-dev (3.8.0-1~bionic0) ...

Selecting previously unselected package libsqlite3-dev:amd64.

Preparing to unpack .../27-libsqlite3-dev_3.22.0-1ubuntu0.2_amd64.deb ...

Unpacking libsqlite3-dev:amd64 (3.22.0-1ubuntu0.2) ...

Selecting previously unselected package libproj-dev:amd64.

Preparing to unpack .../28-libproj-dev_6.2.1-1~bionic0_amd64.deb ...

Unpacking libproj-dev:amd64 (6.2.1-1~bionic0) ...

Selecting previously unselected package libgeotiff-dev:amd64.

Preparing to unpack .../29-libgeotiff-dev_1.5.1-2~bionic1_amd64.deb ...

Unpacking libgeotiff-dev:amd64 (1.5.1-2~bionic1) ...

Selecting previously unselected package libgif-dev.

Preparing to unpack .../30-libgif-dev_5.1.4-2ubuntu0.1_amd64.deb ...

Unpacking libgif-dev (5.1.4-2ubuntu0.1) ...

Selecting previously unselected package libnetcdf-dev.

Preparing to unpack .../31-libnetcdf-dev_1%3a4.6.0-2build1_amd64.deb ...

Unpacking libnetcdf-dev (1:4.6.0-2build1) ...

Selecting previously unselected package libhdf4-alt-dev.

Preparing to unpack .../32-libhdf4-alt-dev_4.2.13-2_amd64.deb ...

Unpacking libhdf4-alt-dev (4.2.13-2) ...

Selecting previously unselected package libjson-c-dev:amd64.

Preparing to unpack .../33-libjson-c-dev_0.12.1-1.3_amd64.deb ...

Unpacking libjson-c-dev:amd64 (0.12.1-1.3) ...

Selecting previously unselected package libkmlconvenience1:amd64.

Preparing to unpack .../34-libkmlconvenience1_1.3.0-5_amd64.deb ...

Unpacking libkmlconvenience1:amd64 (1.3.0-5) ...

Selecting previously unselected package libkmlregionator1:amd64.

Preparing to unpack .../35-libkmlregionator1_1.3.0-5_amd64.deb ...

Unpacking libkmlregionator1:amd64 (1.3.0-5) ...

Selecting previously unselected package libkmlxsd1:amd64.

Preparing to unpack .../36-libkmlxsd1_1.3.0-5_amd64.deb ...

Unpacking libkmlxsd1:amd64 (1.3.0-5) ...

Selecting previously unselected package libminizip-dev:amd64.

Preparing to unpack .../37-libminizip-dev_1.1-8build1_amd64.deb ...

Unpacking libminizip-dev:amd64 (1.1-8build1) ...

Selecting previously unselected package liburiparser-dev.

Preparing to unpack .../38-liburiparser-dev_0.8.4-1_amd64.deb ...

Unpacking liburiparser-dev (0.8.4-1) ...

Selecting previously unselected package libkml-dev:amd64.

Preparing to unpack .../39-libkml-dev_1.3.0-5_amd64.deb ...

Unpacking libkml-dev:amd64 (1.3.0-5) ...

Selecting previously unselected package libogdi-dev.

Preparing to unpack .../40-libogdi-dev_4.1.0+ds-1~bionic2_amd64.deb ...

Unpacking libogdi-dev (4.1.0+ds-1~bionic2) ...

Selecting previously unselected package libopenjp2-7-dev.

Preparing to unpack .../41-libopenjp2-7-dev_2.3.0-2build0.18.04.1_amd64.deb ...

Unpacking libopenjp2-7-dev (2.3.0-2build0.18.04.1) ...

Selecting previously unselected package libpoppler-dev:amd64.

Preparing to unpack .../42-libpoppler-dev_0.62.0-2ubuntu2.10_amd64.deb ...

Unpacking libpoppler-dev:amd64 (0.62.0-2ubuntu2.10) ...

Selecting previously unselected package libpoppler-private-dev:amd64.

Preparing to unpack .../43-libpoppler-private-dev_0.62.0-2ubuntu2.10_amd64.deb ...

Unpacking libpoppler-private-dev:amd64 (0.62.0-2ubuntu2.10) ...

Selecting previously unselected package libpq-dev.

Preparing to unpack .../44-libpq-dev_10.10-0ubuntu0.18.04.1_amd64.deb ...

Unpacking libpq-dev (10.10-0ubuntu0.18.04.1) ...

Selecting previously unselected package libqhull-r7:amd64.

Preparing to unpack .../45-libqhull-r7_2015.2-4_amd64.deb ...

Unpacking libqhull-r7:amd64 (2015.2-4) ...

Selecting previously unselected package libqhull-dev:amd64.

Preparing to unpack .../46-libqhull-dev_2015.2-4_amd64.deb ...

Unpacking libqhull-dev:amd64 (2015.2-4) ...

Selecting previously unselected package libspatialite-dev:amd64.

Preparing to unpack .../47-libspatialite-dev_4.3.0a-6~bionic2_amd64.deb ...

Unpacking libspatialite-dev:amd64 (4.3.0a-6~bionic2) ...

Selecting previously unselected package libwebp-dev:amd64.

Preparing to unpack .../48-libwebp-dev_0.6.1-2_amd64.deb ...

Unpacking libwebp-dev:amd64 (0.6.1-2) ...

Selecting previously unselected package libxerces-c-dev.

Preparing to unpack .../49-libxerces-c-dev_3.2.0+debian-2_amd64.deb ...

Unpacking libxerces-c-dev (3.2.0+debian-2) ...

Selecting previously unselected package libzstd-dev:amd64.

Preparing to unpack .../50-libzstd-dev_1.3.3+dfsg-2ubuntu1.1_amd64.deb ...

Unpacking libzstd-dev:amd64 (1.3.3+dfsg-2ubuntu1.1) ...

Selecting previously unselected package unixodbc-dev:amd64.

Preparing to unpack .../51-unixodbc-dev_2.3.4-1.1ubuntu3_amd64.deb ...

Unpacking unixodbc-dev:amd64 (2.3.4-1.1ubuntu3) ...

Selecting previously unselected package libgdal-dev.

Preparing to unpack .../52-libgdal-dev_3.0.2+dfsg-1~bionic2_amd64.deb ...

Unpacking libgdal-dev (3.0.2+dfsg-1~bionic2) ...

Selecting previously unselected package libspatialindex4v5:amd64.

Preparing to unpack .../53-libspatialindex4v5_1.8.5-5_amd64.deb ...

Unpacking libspatialindex4v5:amd64 (1.8.5-5) ...

Selecting previously unselected package libspatialindex-c4v5:amd64.

Preparing to unpack .../54-libspatialindex-c4v5_1.8.5-5_amd64.deb ...

Unpacking libspatialindex-c4v5:amd64 (1.8.5-5) ...

Selecting previously unselected package proj-bin.

Preparing to unpack .../55-proj-bin_6.2.1-1~bionic0_amd64.deb ...

Unpacking proj-bin (6.2.1-1~bionic0) ...

Selecting previously unselected package python3-pkg-resources.

Preparing to unpack .../56-python3-pkg-resources_39.0.1-2_all.deb ...

Unpacking python3-pkg-resources (39.0.1-2) ...

Selecting previously unselected package libspatialindex-dev:amd64.

Preparing to unpack .../57-libspatialindex-dev_1.8.5-5_amd64.deb ...

Unpacking libspatialindex-dev:amd64 (1.8.5-5) ...

Selecting previously unselected package python3-rtree.

Preparing to unpack .../58-python3-rtree_0.8.3+ds-1_all.deb ...

Unpacking python3-rtree (0.8.3+ds-1) ...

Setting up libgeos-3.8.0:amd64 (3.8.0-1~bionic0) ...

Setting up libcfitsio5:amd64 (3.430-2) ...

Setting up libspatialindex4v5:amd64 (1.8.5-5) ...

Setting up libxerces-c-dev (3.2.0+debian-2) ...

Setting up unixodbc-dev:amd64 (2.3.4-1.1ubuntu3) ...

Setting up libcfitsio-dev:amd64 (3.430-2) ...

Setting up libpq-dev (10.10-0ubuntu0.18.04.1) ...

Setting up libpoppler-dev:amd64 (0.62.0-2ubuntu2.10) ...

Setting up libmysqlclient-dev (5.7.28-0ubuntu0.18.04.4) ...

Setting up libgif-dev (5.1.4-2ubuntu0.1) ...

Setting up libepsilon-dev:amd64 (0.9.2+dfsg-2) ...

Setting up libopencv-core3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopenjp2-7-dev (2.3.0-2build0.18.04.1) ...

Setting up python3-pkg-resources (39.0.1-2) ...

Setting up libminizip-dev:amd64 (1.1-8build1) ...

Setting up libkmlconvenience1:amd64 (1.3.0-5) ...

Setting up libwebp-dev:amd64 (0.6.1-2) ...

Setting up gdal-data (3.0.2+dfsg-1~bionic2) ...

Setting up libcfitsio-doc (3.430-2) ...

Setting up libkmlxsd1:amd64 (1.3.0-5) ...

Setting up libgeos-c1v5:amd64 (3.8.0-1~bionic0) ...

Setting up libblas3:amd64 (3.7.1-4ubuntu1) ...

Setting up libspatialindex-c4v5:amd64 (1.8.5-5) ...

Setting up libdapserver7v5:amd64 (3.19.1-2build1) ...

Setting up libkmlregionator1:amd64 (1.3.0-5) ...

Setting up libcharls-dev:amd64 (1.1.0+dfsg-2) ...

Setting up libopencv-core-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libzstd-dev:amd64 (1.3.3+dfsg-2ubuntu1.1) ...

Setting up libjson-c-dev:amd64 (0.12.1-1.3) ...

Setting up libogdi4.1 (4.1.0+ds-1~bionic2) ...

Setting up libopencv-ml3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libsqlite3-0:amd64 (3.22.0-1ubuntu0.2) ...

Setting up libarpack2-dev:amd64 (3.5.0+real-2) ...

Setting up libopencv-ml-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up liburiparser-dev (0.8.4-1) ...

Setting up libopencv-imgproc3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-flann3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up default-libmysqlclient-dev:amd64 (1.0.4) ...

Setting up libopencv-video3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libfyba-dev:amd64 (4.1.1-3) ...

Setting up libnetcdf-dev (1:4.6.0-2build1) ...

Setting up libqhull-r7:amd64 (2015.2-4) ...

Setting up libopencv-imgproc-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up proj-data (6.2.1-1~bionic0) ...

Setting up libfreexl-dev:amd64 (1.0.5-1) ...

Setting up libopencv-photo3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libpoppler-private-dev:amd64 (0.62.0-2ubuntu2.10) ...

Setting up libopencv-ts-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libogdi-dev (4.1.0+ds-1~bionic2) ...

Setting up libopencv-photo-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libdap-dev:amd64 (3.19.1-2build1) ...

Setting up libproj15:amd64 (6.2.1-1~bionic0) ...

Setting up libsqlite3-dev:amd64 (3.22.0-1ubuntu0.2) ...

Setting up libblas-dev:amd64 (3.7.1-4ubuntu1) ...

Setting up libopencv-flann-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libproj13:amd64 (5.2.0-1~bionic0) ...

Setting up libgeos-dev (3.8.0-1~bionic0) ...

Setting up libgeotiff5:amd64 (1.5.1-2~bionic1) ...

Setting up libqhull-dev:amd64 (2015.2-4) ...

Setting up libkml-dev:amd64 (1.3.0-5) ...

Setting up libhdf4-alt-dev (4.2.13-2) ...

Setting up libspatialindex-dev:amd64 (1.8.5-5) ...

Setting up libsuperlu-dev:amd64 (5.2.1+dfsg1-3) ...

Setting up proj-bin (6.2.1-1~bionic0) ...

Setting up libopencv-shape3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libspatialite7:amd64 (4.3.0a-6~bionic2) ...

Setting up libopencv-video-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libspatialite-dev:amd64 (4.3.0a-6~bionic2) ...

Setting up libopencv-shape-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libarmadillo-dev (1:8.400.0+dfsg-2) ...

Setting up libproj-dev:amd64 (6.2.1-1~bionic0) ...

Setting up libgdal26 (3.0.2+dfsg-1~bionic2) ...

Setting up python3-rtree (0.8.3+ds-1) ...

Setting up libgdal20 (2.4.2+dfsg-1~bionic0) ...

Setting up libopencv-imgcodecs3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up python3-gdal (3.0.2+dfsg-1~bionic2) ...

Setting up libvtk6.3 (6.3.0+dfsg2-2build4~bionic3) ...

Setting up gdal-bin (3.0.2+dfsg-1~bionic2) ...

Setting up libopencv-videoio3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libgeotiff-dev:amd64 (1.5.1-2~bionic1) ...

Setting up libgdal-dev (3.0.2+dfsg-1~bionic2) ...

Setting up libopencv-imgcodecs-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-viz3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-superres3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-highgui3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-videoio-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-viz-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-objdetect3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-highgui-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-features2d3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-superres-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-features2d-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-calib3d3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-stitching3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-calib3d-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-objdetect-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-videostab3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-stitching-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-contrib3.2:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-videostab-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-contrib-dev:amd64 (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv3.2-jni (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv3.2-java (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Setting up libopencv-dev (3.2.0+dfsg-4ubuntu0.1+bionic3) ...

Processing triggers for man-db (2.8.3-2ubuntu0.1) ...

Processing triggers for libc-bin (2.27-3ubuntu1) ...

Collecting rasterio

Downloading https://files.pythonhosted.org/packages/be/e5/7052a3eef72af7e883a280d8dff64f4ea44cb92ec25ffb1d00ce27bc1a12/rasterio-1.1.2-cp36-cp36m-manylinux1_x86_64.whl (18.0MB)

|████████████████████████████████| 18.0MB 244kB/s

Requirement already satisfied: numpy in /usr/local/lib/python3.6/dist-packages (from rasterio) (1.17.5)

Requirement already satisfied: attrs in /usr/local/lib/python3.6/dist-packages (from rasterio) (19.3.0)

Collecting click-plugins

Downloading https://files.pythonhosted.org/packages/e9/da/824b92d9942f4e472702488857914bdd50f73021efea15b4cad9aca8ecef/click_plugins-1.1.1-py2.py3-none-any.whl

Collecting snuggs>=1.4.1

Downloading https://files.pythonhosted.org/packages/cc/0e/d27d6e806d6c0d1a2cfdc5d1f088e42339a0a54a09c3343f7f81ec8947ea/snuggs-1.4.7-py3-none-any.whl

Collecting cligj>=0.5

Downloading https://files.pythonhosted.org/packages/e4/be/30a58b4b0733850280d01f8bd132591b4668ed5c7046761098d665ac2174/cligj-0.5.0-py3-none-any.whl

Requirement already satisfied: click<8,>=4.0 in /usr/local/lib/python3.6/dist-packages (from rasterio) (7.0)

Collecting affine

Downloading https://files.pythonhosted.org/packages/ac/a6/1a39a1ede71210e3ddaf623982b06ecfc5c5c03741ae659073159184cd3e/affine-2.3.0-py2.py3-none-any.whl

Requirement already satisfied: pyparsing>=2.1.6 in /usr/local/lib/python3.6/dist-packages (from snuggs>=1.4.1->rasterio) (2.4.6)

Installing collected packages: click-plugins, snuggs, cligj, affine, rasterio

Successfully installed affine-2.3.0 click-plugins-1.1.1 cligj-0.5.0 rasterio-1.1.2 snuggs-1.4.7

Collecting geopandas

Downloading https://files.pythonhosted.org/packages/5b/0c/e6c99e561b03482220f00443f610ccf4dce9b50f4b1093d735f93c6fc8c6/geopandas-0.6.2-py2.py3-none-any.whl (919kB)

|████████████████████████████████| 921kB 2.8MB/s

Requirement already satisfied: pandas>=0.23.0 in /usr/local/lib/python3.6/dist-packages (from geopandas) (0.25.3)

Collecting pyproj

Downloading https://files.pythonhosted.org/packages/d6/70/eedc98cd52b86de24a1589c762612a98bea26cde649ffdd60c1db396cce8/pyproj-2.4.2.post1-cp36-cp36m-manylinux2010_x86_64.whl (10.1MB)

|████████████████████████████████| 10.1MB 16.6MB/s

Requirement already satisfied: shapely in /usr/local/lib/python3.6/dist-packages (from geopandas) (1.6.4.post2)

Collecting fiona

Downloading https://files.pythonhosted.org/packages/50/f7/9899f8a9a2e38601472fe1079ce5088f58833221c8b8507d8b5eafd5404a/Fiona-1.8.13-cp36-cp36m-manylinux1_x86_64.whl (11.8MB)

|████████████████████████████████| 11.8MB 50.3MB/s

Requirement already satisfied: pytz>=2017.2 in /usr/local/lib/python3.6/dist-packages (from pandas>=0.23.0->geopandas) (2018.9)

Requirement already satisfied: numpy>=1.13.3 in /usr/local/lib/python3.6/dist-packages (from pandas>=0.23.0->geopandas) (1.17.5)

Requirement already satisfied: python-dateutil>=2.6.1 in /usr/local/lib/python3.6/dist-packages (from pandas>=0.23.0->geopandas) (2.6.1)

Requirement already satisfied: cligj>=0.5 in /usr/local/lib/python3.6/dist-packages (from fiona->geopandas) (0.5.0)

Requirement already satisfied: click-plugins>=1.0 in /usr/local/lib/python3.6/dist-packages (from fiona->geopandas) (1.1.1)

Collecting munch

Downloading https://files.pythonhosted.org/packages/cc/ab/85d8da5c9a45e072301beb37ad7f833cd344e04c817d97e0cc75681d248f/munch-2.5.0-py2.py3-none-any.whl

Requirement already satisfied: attrs>=17 in /usr/local/lib/python3.6/dist-packages (from fiona->geopandas) (19.3.0)

Requirement already satisfied: click<8,>=4.0 in /usr/local/lib/python3.6/dist-packages (from fiona->geopandas) (7.0)

Requirement already satisfied: six>=1.7 in /usr/local/lib/python3.6/dist-packages (from fiona->geopandas) (1.12.0)

Installing collected packages: pyproj, munch, fiona, geopandas

Successfully installed fiona-1.8.13 geopandas-0.6.2 munch-2.5.0 pyproj-2.4.2.post1

Requirement already satisfied: descartes in /usr/local/lib/python3.6/dist-packages (1.1.0)

Requirement already satisfied: matplotlib in /usr/local/lib/python3.6/dist-packages (from descartes) (3.1.2)

Requirement already satisfied: pyparsing!=2.0.4,!=2.1.2,!=2.1.6,>=2.0.1 in /usr/local/lib/python3.6/dist-packages (from matplotlib->descartes) (2.4.6)

Requirement already satisfied: python-dateutil>=2.1 in /usr/local/lib/python3.6/dist-packages (from matplotlib->descartes) (2.6.1)

Requirement already satisfied: cycler>=0.10 in /usr/local/lib/python3.6/dist-packages (from matplotlib->descartes) (0.10.0)

Requirement already satisfied: kiwisolver>=1.0.1 in /usr/local/lib/python3.6/dist-packages (from matplotlib->descartes) (1.1.0)

Requirement already satisfied: numpy>=1.11 in /usr/local/lib/python3.6/dist-packages (from matplotlib->descartes) (1.17.5)

Requirement already satisfied: six>=1.5 in /usr/local/lib/python3.6/dist-packages (from python-dateutil>=2.1->matplotlib->descartes) (1.12.0)

Requirement already satisfied: setuptools in /usr/local/lib/python3.6/dist-packages (from kiwisolver>=1.0.1->matplotlib->descartes) (42.0.2)

Collecting solaris

Downloading https://files.pythonhosted.org/packages/57/9d/1663c1eda9d2bcf8f18bed1ce34477c15855fa0e591bc70f94233661b7fc/solaris-0.2.1-py3-none-any.whl (10.2MB)

|████████████████████████████████| 10.2MB 2.8MB/s

Collecting rio-cogeo>=1.1.6

Downloading https://files.pythonhosted.org/packages/2e/90/40638ddabe9c483c0550696a335229df0d71b6499ac15f5a30d708d83d24/rio-cogeo-1.1.8.tar.gz

Requirement already satisfied: opencv-python>=4.1.0.25 in /usr/local/lib/python3.6/dist-packages (from solaris) (4.1.2.30)

Collecting rtree>=0.9.3

Downloading https://files.pythonhosted.org/packages/11/1d/42d6904a436076df813d1df632575529991005b33aa82f169f01750e39e4/Rtree-0.9.3.tar.gz (520kB)

|████████████████████████████████| 522kB 47.2MB/s

Collecting pyyaml==5.2

Downloading https://files.pythonhosted.org/packages/8d/c9/e5be955a117a1ac548cdd31e37e8fd7b02ce987f9655f5c7563c656d5dcb/PyYAML-5.2.tar.gz (265kB)

|████████████████████████████████| 266kB 38.7MB/s

Requirement already satisfied: pandas>=0.25.3 in /usr/local/lib/python3.6/dist-packages (from solaris) (0.25.3)

Requirement already satisfied: scikit-image>=0.16.2 in /usr/local/lib/python3.6/dist-packages (from solaris) (0.16.2)

Requirement already satisfied: pyproj>=2.1 in /usr/local/lib/python3.6/dist-packages (from solaris) (2.4.2.post1)

Requirement already satisfied: torchvision>=0.4.2 in /usr/local/lib/python3.6/dist-packages (from solaris) (0.4.2)

Requirement already satisfied: matplotlib>=3.1.2 in /usr/local/lib/python3.6/dist-packages (from solaris) (3.1.2)

Collecting tensorflow==1.13.1

Downloading https://files.pythonhosted.org/packages/77/63/a9fa76de8dffe7455304c4ed635be4aa9c0bacef6e0633d87d5f54530c5c/tensorflow-1.13.1-cp36-cp36m-manylinux1_x86_64.whl (92.5MB)

|████████████████████████████████| 92.5MB 98kB/s

Requirement already satisfied: rasterio>=1.0.23 in /usr/local/lib/python3.6/dist-packages (from solaris) (1.1.2)

Requirement already satisfied: geopandas>=0.6.2 in /usr/local/lib/python3.6/dist-packages (from solaris) (0.6.2)

Requirement already satisfied: gdal>=3.0.2 in /usr/lib/python3/dist-packages (from solaris) (3.0.2)

Requirement already satisfied: shapely>=1.6.4 in /usr/local/lib/python3.6/dist-packages (from solaris) (1.6.4.post2)

Collecting albumentations==0.4.3

Downloading https://files.pythonhosted.org/packages/f6/c4/a1e6ac237b5a27874b01900987d902fe83cc469ebdb09eb72a68c4329e78/albumentations-0.4.3.tar.gz (3.2MB)

|████████████████████████████████| 3.2MB 39.2MB/s

Requirement already satisfied: fiona>=1.8.13 in /usr/local/lib/python3.6/dist-packages (from solaris) (1.8.13)

Requirement already satisfied: pip>=19.0.3 in /usr/local/lib/python3.6/dist-packages (from solaris) (19.3.1)

Collecting urllib3>=1.25.7

Downloading https://files.pythonhosted.org/packages/b4/40/a9837291310ee1ccc242ceb6ebfd9eb21539649f193a7c8c86ba15b98539/urllib3-1.25.7-py2.py3-none-any.whl (125kB)

|████████████████████████████████| 133kB 48.4MB/s

Requirement already satisfied: torch==1.3.1 in /usr/local/lib/python3.6/dist-packages (from solaris) (1.3.1)

Requirement already satisfied: networkx>=2.4 in /usr/local/lib/python3.6/dist-packages (from solaris) (2.4)

Requirement already satisfied: numpy>=1.17.3 in /usr/local/lib/python3.6/dist-packages (from solaris) (1.17.5)

Requirement already satisfied: affine>=2.3.0 in /usr/local/lib/python3.6/dist-packages (from solaris) (2.3.0)

Collecting tqdm>=4.40.0

Downloading https://files.pythonhosted.org/packages/72/c9/7fc20feac72e79032a7c8138fd0d395dc6d8812b5b9edf53c3afd0b31017/tqdm-4.41.1-py2.py3-none-any.whl (56kB)

|████████████████████████████████| 61kB 7.8MB/s

Requirement already satisfied: scipy>=1.3.2 in /usr/local/lib/python3.6/dist-packages (from solaris) (1.4.1)

Collecting requests>=2.22.0

Downloading https://files.pythonhosted.org/packages/51/bd/23c926cd341ea6b7dd0b2a00aba99ae0f828be89d72b2190f27c11d4b7fb/requests-2.22.0-py2.py3-none-any.whl (57kB)

|████████████████████████████████| 61kB 8.2MB/s

Requirement already satisfied: click in /usr/local/lib/python3.6/dist-packages (from rio-cogeo>=1.1.6->solaris) (7.0)

Collecting supermercado

Downloading https://files.pythonhosted.org/packages/8f/c0/9c7878fbd8533486d04dfee7ef751c458dd73d687e823ad88574f3b2e631/supermercado-0.0.5.tar.gz

Collecting mercantile

Downloading https://files.pythonhosted.org/packages/9d/1d/80d28ba17e4647bf820e8d5f485d58f9da9c5ca424450489eb49e325ba66/mercantile-1.1.2-py3-none-any.whl

Requirement already satisfied: setuptools in /usr/local/lib/python3.6/dist-packages (from rtree>=0.9.3->solaris) (42.0.2)

Requirement already satisfied: pytz>=2017.2 in /usr/local/lib/python3.6/dist-packages (from pandas>=0.25.3->solaris) (2018.9)

Requirement already satisfied: python-dateutil>=2.6.1 in /usr/local/lib/python3.6/dist-packages (from pandas>=0.25.3->solaris) (2.6.1)

Requirement already satisfied: imageio>=2.3.0 in /usr/local/lib/python3.6/dist-packages (from scikit-image>=0.16.2->solaris) (2.4.1)

Requirement already satisfied: pillow>=4.3.0 in /usr/local/lib/python3.6/dist-packages (from scikit-image>=0.16.2->solaris) (6.2.2)

Requirement already satisfied: PyWavelets>=0.4.0 in /usr/local/lib/python3.6/dist-packages (from scikit-image>=0.16.2->solaris) (1.1.1)

Requirement already satisfied: six in /usr/local/lib/python3.6/dist-packages (from torchvision>=0.4.2->solaris) (1.12.0)

Requirement already satisfied: pyparsing!=2.0.4,!=2.1.2,!=2.1.6,>=2.0.1 in /usr/local/lib/python3.6/dist-packages (from matplotlib>=3.1.2->solaris) (2.4.6)

Requirement already satisfied: kiwisolver>=1.0.1 in /usr/local/lib/python3.6/dist-packages (from matplotlib>=3.1.2->solaris) (1.1.0)

Requirement already satisfied: cycler>=0.10 in /usr/local/lib/python3.6/dist-packages (from matplotlib>=3.1.2->solaris) (0.10.0)

Requirement already satisfied: protobuf>=3.6.1 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (3.10.0)

Collecting tensorboard<1.14.0,>=1.13.0

Downloading https://files.pythonhosted.org/packages/0f/39/bdd75b08a6fba41f098b6cb091b9e8c7a80e1b4d679a581a0ccd17b10373/tensorboard-1.13.1-py3-none-any.whl (3.2MB)

|████████████████████████████████| 3.2MB 44.3MB/s

Requirement already satisfied: absl-py>=0.1.6 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (0.9.0)

Requirement already satisfied: termcolor>=1.1.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (1.1.0)

Requirement already satisfied: gast>=0.2.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (0.2.2)

Requirement already satisfied: grpcio>=1.8.6 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (1.15.0)

Collecting tensorflow-estimator<1.14.0rc0,>=1.13.0

Downloading https://files.pythonhosted.org/packages/bb/48/13f49fc3fa0fdf916aa1419013bb8f2ad09674c275b4046d5ee669a46873/tensorflow_estimator-1.13.0-py2.py3-none-any.whl (367kB)

|████████████████████████████████| 368kB 45.4MB/s

Requirement already satisfied: astor>=0.6.0 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (0.8.1)

Requirement already satisfied: keras-applications>=1.0.6 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (1.0.8)

Requirement already satisfied: wheel>=0.26 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (0.33.6)

Requirement already satisfied: keras-preprocessing>=1.0.5 in /usr/local/lib/python3.6/dist-packages (from tensorflow==1.13.1->solaris) (1.1.0)

Requirement already satisfied: snuggs>=1.4.1 in /usr/local/lib/python3.6/dist-packages (from rasterio>=1.0.23->solaris) (1.4.7)

Requirement already satisfied: cligj>=0.5 in /usr/local/lib/python3.6/dist-packages (from rasterio>=1.0.23->solaris) (0.5.0)

Requirement already satisfied: attrs in /usr/local/lib/python3.6/dist-packages (from rasterio>=1.0.23->solaris) (19.3.0)

Requirement already satisfied: click-plugins in /usr/local/lib/python3.6/dist-packages (from rasterio>=1.0.23->solaris) (1.1.1)

Collecting imgaug<0.2.7,>=0.2.5

Downloading https://files.pythonhosted.org/packages/ad/2e/748dbb7bb52ec8667098bae9b585f448569ae520031932687761165419a2/imgaug-0.2.6.tar.gz (631kB)

Requirement already satisfied: munch in /usr/local/lib/python3.6/dist-packages (from fiona>=1.8.13->solaris) (2.5.0)

Requirement already satisfied: decorator>=4.3.0 in /usr/local/lib/python3.6/dist-packages (from networkx>=2.4->solaris) (4.4.1)

Requirement already satisfied: idna<2.9,>=2.5 in /usr/local/lib/python3.6/dist-packages (from requests>=2.22.0->solaris) (2.8)

Requirement already satisfied: certifi>=2017.4.17 in /usr/local/lib/python3.6/dist-packages (from requests>=2.22.0->solaris) (2019.11.28)

Requirement already satisfied: chardet<3.1.0,>=3.0.2 in /usr/local/lib/python3.6/dist-packages (from requests>=2.22.0->solaris) (3.0.4)

Requirement already satisfied: werkzeug>=0.11.15 in /usr/local/lib/python3.6/dist-packages (from tensorboard<1.14.0,>=1.13.0->tensorflow==1.13.1->solaris) (0.16.0)

Requirement already satisfied: markdown>=2.6.8 in /usr/local/lib/python3.6/dist-packages (from tensorboard<1.14.0,>=1.13.0->tensorflow==1.13.1->solaris) (3.1.1)

Collecting mock>=2.0.0

Downloading https://files.pythonhosted.org/packages/05/d2/f94e68be6b17f46d2c353564da56e6fb89ef09faeeff3313a046cb810ca9/mock-3.0.5-py2.py3-none-any.whl

Requirement already satisfied: h5py in /usr/local/lib/python3.6/dist-packages (from keras-applications>=1.0.6->tensorflow==1.13.1->solaris) (2.8.0)

Building wheels for collected packages: rio-cogeo, rtree, pyyaml, albumentations, supermercado, imgaug

Building wheel for rio-cogeo (setup.py) ... done

Created wheel for rio-cogeo: filename=rio_cogeo-1.1.8-cp36-none-any.whl size=17085 sha256=fe4fd6b0c95c24ccbb6bb99d6035180ae75dd7126af0f0f5f6868e783e131ff8

Stored in directory: /root/.cache/pip/wheels/65/61/bb/57962da75239cb8f3bfef62b6f3e4f4a7dfeec2de3bc89995e

Building wheel for rtree (setup.py) ... done

Created wheel for rtree: filename=Rtree-0.9.3-cp36-none-any.whl size=21264 sha256=95c3a38dde559751720ff9423e6249d1ec8017294cb554b0333727f27c066bb6

Stored in directory: /root/.cache/pip/wheels/0b/f6/58/2d819b2abdc280c3f70db0b0ce86a712839267957db7abad85

Building wheel for pyyaml (setup.py) ... done

Created wheel for pyyaml: filename=PyYAML-5.2-cp36-cp36m-linux_x86_64.whl size=44209 sha256=e3a59363783054c16c845fc47e0d1f349a91870a99eaa77af14a0ed74153c373

Stored in directory: /root/.cache/pip/wheels/54/b7/c7/2ada654ee54483c9329871665aaf4a6056c3ce36f29cf66e67

Building wheel for albumentations (setup.py) ... done

Created wheel for albumentations: filename=albumentations-0.4.3-cp36-none-any.whl size=60764 sha256=179ad89167c0a8c4fda69a0519e02e8e5ec99360e90615d14603cabf42106034

Stored in directory: /root/.cache/pip/wheels/20/16/8e/d3bec34bf30adff30929226f0b83cc8c005b5af131f51db9d0

Building wheel for supermercado (setup.py) ... done

Created wheel for supermercado: filename=supermercado-0.0.5-cp36-none-any.whl size=7088 sha256=9c8ac2677b131bc499f25fd636c433ba8c03b5c306f5f309c97a2756e58d5b1e

Stored in directory: /root/.cache/pip/wheels/9b/2f/8e/011d7ab17b423894b4b358204c0bb854a8bb8de199e9f98f30

Building wheel for imgaug (setup.py) ... done

Created wheel for imgaug: filename=imgaug-0.2.6-cp36-none-any.whl size=654020 sha256=a96fbb499ed8c4d82c60ae96bcab95c523cfc3b082d6bf7e9516e03304ff1661

Stored in directory: /root/.cache/pip/wheels/97/ec/48/0d25896c417b715af6236dbcef8f0bed136a1a5e52972fc6d0

Successfully built rio-cogeo rtree pyyaml albumentations supermercado imgaug

ERROR: kaggle 1.5.6 has requirement urllib3<1.25,>=1.21.1, but you'll have urllib3 1.25.7 which is incompatible.

ERROR: google-colab 1.0.0 has requirement requests~=2.21.0, but you'll have requests 2.22.0 which is incompatible.

ERROR: datascience 0.10.6 has requirement folium==0.2.1, but you'll have folium 0.8.3 which is incompatible.

Installing collected packages: mercantile, supermercado, rio-cogeo, rtree, pyyaml, tensorboard, mock, tensorflow-estimator, tensorflow, imgaug, albumentations, urllib3, tqdm, requests, solaris

Found existing installation: Rtree 0.8.3

Uninstalling Rtree-0.8.3:

Successfully uninstalled Rtree-0.8.3

Found existing installation: PyYAML 3.13

Uninstalling PyYAML-3.13:

Successfully uninstalled PyYAML-3.13

Found existing installation: tensorboard 1.15.0

Uninstalling tensorboard-1.15.0:

Successfully uninstalled tensorboard-1.15.0

Found existing installation: tensorflow-estimator 1.15.1

Uninstalling tensorflow-estimator-1.15.1:

Successfully uninstalled tensorflow-estimator-1.15.1

Found existing installation: tensorflow 1.15.0

Uninstalling tensorflow-1.15.0:

Successfully uninstalled tensorflow-1.15.0

Found existing installation: imgaug 0.2.9

Uninstalling imgaug-0.2.9:

Successfully uninstalled imgaug-0.2.9

Found existing installation: albumentations 0.1.12

Uninstalling albumentations-0.1.12:

Successfully uninstalled albumentations-0.1.12

Found existing installation: urllib3 1.24.3

Uninstalling urllib3-1.24.3:

Successfully uninstalled urllib3-1.24.3

Found existing installation: tqdm 4.28.1

Uninstalling tqdm-4.28.1:

Successfully uninstalled tqdm-4.28.1

Found existing installation: requests 2.21.0

Uninstalling requests-2.21.0:

Successfully uninstalled requests-2.21.0

Successfully installed albumentations-0.4.3 imgaug-0.2.6 mercantile-1.1.2 mock-3.0.5 pyyaml-5.2 requests-2.22.0 rio-cogeo-1.1.8 rtree-0.9.3 solaris-0.2.1 supermercado-0.0.5 tensorboard-1.13.1 tensorflow-1.13.1 tensorflow-estimator-1.13.0 tqdm-4.41.1 urllib3-1.25.7

Collecting rio-tiler

Downloading https://files.pythonhosted.org/packages/5c/c9/302627b333dcb3832ef885430bed1a2b278665401865b489d49c1eefe206/rio-tiler-1.3.1.tar.gz (112kB)

Requirement already satisfied: numpy in /usr/local/lib/python3.6/dist-packages (from rio-tiler) (1.17.5)

Requirement already satisfied: numexpr in /usr/local/lib/python3.6/dist-packages (from rio-tiler) (2.7.1)

Requirement already satisfied: mercantile in /usr/local/lib/python3.6/dist-packages (from rio-tiler) (1.1.2)

Requirement already satisfied: boto3 in /usr/local/lib/python3.6/dist-packages (from rio-tiler) (1.10.47)

Requirement already satisfied: rasterio[s3]>=1.1 in /usr/local/lib/python3.6/dist-packages (from rio-tiler) (1.1.2)

Collecting rio-toa

Downloading https://files.pythonhosted.org/packages/de/19/ccfdc23a822e31fdb61fef90ad641089837e86187e2b7168c2359db85df2/rio-toa-0.3.0.tar.gz

Requirement already satisfied: click>=3.0 in /usr/local/lib/python3.6/dist-packages (from mercantile->rio-tiler) (7.0)

Requirement already satisfied: jmespath<1.0.0,>=0.7.1 in /usr/local/lib/python3.6/dist-packages (from boto3->rio-tiler) (0.9.4)

Requirement already satisfied: botocore<1.14.0,>=1.13.47 in /usr/local/lib/python3.6/dist-packages (from boto3->rio-tiler) (1.13.47)

Requirement already satisfied: s3transfer<0.3.0,>=0.2.0 in /usr/local/lib/python3.6/dist-packages (from boto3->rio-tiler) (0.2.1)

Requirement already satisfied: snuggs>=1.4.1 in /usr/local/lib/python3.6/dist-packages (from rasterio[s3]>=1.1->rio-tiler) (1.4.7)

Requirement already satisfied: attrs in /usr/local/lib/python3.6/dist-packages (from rasterio[s3]>=1.1->rio-tiler) (19.3.0)

Requirement already satisfied: cligj>=0.5 in /usr/local/lib/python3.6/dist-packages (from rasterio[s3]>=1.1->rio-tiler) (0.5.0)

Requirement already satisfied: click-plugins in /usr/local/lib/python3.6/dist-packages (from rasterio[s3]>=1.1->rio-tiler) (1.1.1)

Requirement already satisfied: affine in /usr/local/lib/python3.6/dist-packages (from rasterio[s3]>=1.1->rio-tiler) (2.3.0)

Collecting rio-mucho

Downloading https://files.pythonhosted.org/packages/8a/ba/e9a23efc6a8ffe6b2340c9f1040cd26a730754c75a58061c9302c66156fa/rio_mucho-1.0.0-py3-none-any.whl

Requirement already satisfied: docutils<0.16,>=0.10 in /usr/local/lib/python3.6/dist-packages (from botocore<1.14.0,>=1.13.47->boto3->rio-tiler) (0.15.2)

Requirement already satisfied: urllib3<1.26,>=1.20; python_version >= "3.4" in /usr/local/lib/python3.6/dist-packages (from botocore<1.14.0,>=1.13.47->boto3->rio-tiler) (1.25.7)

Requirement already satisfied: python-dateutil<3.0.0,>=2.1; python_version >= "2.7" in /usr/local/lib/python3.6/dist-packages (from botocore<1.14.0,>=1.13.47->boto3->rio-tiler) (2.6.1)

Requirement already satisfied: pyparsing>=2.1.6 in /usr/local/lib/python3.6/dist-packages (from snuggs>=1.4.1->rasterio[s3]>=1.1->rio-tiler) (2.4.6)

Requirement already satisfied: six>=1.5 in /usr/local/lib/python3.6/dist-packages (from python-dateutil<3.0.0,>=2.1; python_version >= "2.7"->botocore<1.14.0,>=1.13.47->boto3->rio-tiler) (1.12.0)

Building wheels for collected packages: rio-tiler, rio-toa

Building wheel for rio-tiler (setup.py) ... done

Created wheel for rio-tiler: filename=rio_tiler-1.3.1-cp36-none-any.whl size=172294 sha256=641bdb72ba8ce43b059ad795d80803a27994227c3835887f763406a79decb569

Stored in directory: /root/.cache/pip/wheels/13/0f/f0/2e7e21b2aeaa99791322cdd28262bbf3da097d24a4bf640f47

Building wheel for rio-toa (setup.py) ... done

Created wheel for rio-toa: filename=rio_toa-0.3.0-cp36-none-any.whl size=12429 sha256=03c31289ee5aec70e1eecd18154081355f6b24aaf0a23ee74c0c4a97cca8a2c4

Stored in directory: /root/.cache/pip/wheels/12/25/52/036fe06fa14768bf5e4eef4abd4beccb3924b695199f1721a2

Successfully built rio-tiler rio-toa

Installing collected packages: rio-mucho, rio-toa, rio-tiler

Successfully installed rio-mucho-1.0.0 rio-tiler-1.3.1 rio-toa-0.3.0
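The install log above includes three `ERROR:` lines where kaggle, google-colab, and datascience each require a version range that the installed package falls outside of. As a rough illustration of how such a requirement check works, here is a minimal sketch. The helper names `parse_version` and `satisfies` are hypothetical (they are not part of pip), and the sketch assumes plain dotted numeric versions only. For real use, the `packaging` library's `SpecifierSet` covers the full specifier grammar.

```python
import operator
import re

# Comparison operators supported by this sketch (plus "~=" handled separately).
_OPS = {
    "<": operator.lt,
    "<=": operator.le,
    ">": operator.gt,
    ">=": operator.ge,
    "==": operator.eq,
    "!=": operator.ne,
}


def parse_version(text):
    """Turn '1.25.7' into (1, 25, 7). Assumes purely numeric parts."""
    return tuple(int(part) for part in text.strip().split("."))


def _pad(a, b):
    """Right-pad the shorter tuple with zeros so (1, 25) compares like (1, 25, 0)."""
    n = max(len(a), len(b))
    return a + (0,) * (n - len(a)), b + (0,) * (n - len(b))


def satisfies(installed, spec):
    """Return True if `installed` meets every clause in a spec like '<1.25,>=1.21.1'."""
    have = parse_version(installed)
    for clause in spec.split(","):
        match = re.fullmatch(r"\s*(~=|==|!=|<=|>=|<|>)\s*([\d.]+)\s*", clause)
        if match is None:
            raise ValueError(f"unsupported clause: {clause!r}")
        op, want_text = match.groups()
        want = parse_version(want_text)
        a, b = _pad(have, want)
        if op == "~=":
            # Compatible release: at least `want`, with all but the last part equal.
            ok = a >= b and have[: len(want) - 1] == want[:-1]
        else:
            ok = _OPS[op](a, b)
        if not ok:
            return False
    return True


# The three conflicts reported in the log, plus one requirement that was satisfied.
print(satisfies("1.25.7", "<1.25,>=1.21.1"))  # urllib3 vs. kaggle's requirement
print(satisfies("2.22.0", "~=2.21.0"))        # requests vs. google-colab's requirement
print(satisfies("0.8.3", "==0.2.1"))          # folium vs. datascience's requirement
print(satisfies("2.8", "<2.9,>=2.5"))         # idna vs. requests' requirement
```

The first three calls correspond to the reported conflicts; the last one mirrors a requirement that pip listed as already satisfied.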

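# For the bleeding-edge version of solaris, install from the dev branch instead:
# !pip install git+https://github.com/CosmiQ/solaris/@dev

# Restart the runtime so the newly installed package versions are loaded.
# os._exit() ends the current Python process, which makes Colab start a fresh runtime.
import os
os._exit(0)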

import solaris as sol
import numpy as np
import geopandas as gpd
from matplotlib import pyplot as plt
from pathlib import Path
import rasterio
import os

# Create the data folder plus subfolders for the training images and masks
data_dir = Path('data')
data_dir.mkdir(exist_ok=True)

img_path = data_dir/'images-256'
mask_path = data_dir/'masks-256'
img_path.mkdir(exist_ok=True)
mask_path.mkdir(exist_ok=True)
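The folder setup above could also be wrapped in a small helper, which is handy if you later want to experiment with a different size suffix. This is only a sketch: `make_data_dirs` is a hypothetical name, and the assumption that the `-256` suffix is a parameter you may want to vary is ours, not the notebook's.

```python
import tempfile
from pathlib import Path


def make_data_dirs(root, size=256):
    """Create <root>/images-<size> and <root>/masks-<size>; return both paths."""
    root = Path(root)
    img_dir = root / f"images-{size}"
    mask_dir = root / f"masks-{size}"
    for folder in (img_dir, mask_dir):
        # parents=True also creates `root` itself when it does not exist yet
        folder.mkdir(parents=True, exist_ok=True)
    return img_dir, mask_dir


# Usage example in a throwaway directory so nothing is left behind
with tempfile.TemporaryDirectory() as tmp:
    img_dir, mask_dir = make_data_dirs(Path(tmp) / "data")
    print(img_dir.name, mask_dir.name)
```

Calling the helper twice is safe because `exist_ok=True` turns an already existing folder into a no-op.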

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/dtypes.py:526: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint8 = np.dtype([("qint8", np.int8, 1)])

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/dtypes.py:527: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_quint8 = np.dtype([("quint8", np.uint8, 1)])

/usr/local/lib/python3.6/dist-packages/tensorflow/python/framework/dtypes.py:528: FutureWarning: Passing (type, 1) or '1type' as a synonym of type is deprecated; in a future version of numpy, it will be understood as (type, (1,)) / '(1,)type'.

_np_qint16 = np.dtype([("qint16", np.int16, 1)])