%matplotlib inline

import numpy as np;

from matplotlib import pyplot as plt

$\LaTeX \text{ commands here} \newcommand{\R}{\mathbb{R}} \newcommand{\im}{\text{im}\,} \newcommand{\norm}[1]{||#1||} \newcommand{\inner}[1]{\langle #1 \rangle} \newcommand{\span}{\mathrm{span}} \newcommand{\proj}{\mathrm{proj}} \newcommand{\E}{\mathbb{E}} $

Georgia Tech, CS 4540

L13: Linear Programming Introduction¶

Jake Abernethy & Benjamin Bray

Thursday, October 3, 2019

Quick Review: SGD and OCO¶

Random functions¶

In many fields, especially Machine Learning, we deal with functions that have randomized inputs. For example, assume we have some distribution $D$, we often consider functions of the form $$ F(\theta) := \E_{\xi \sim D} [f(\theta;\xi)]. $$ Here, $\theta$ are the parameters we want to optimize, and $\xi$ is some random input, such as a datapoint. But we'd like to optimize $\theta$ "on average", i.e. in expectation over a random sample of $\xi \sim D$.

Stochastic Gradient Descent¶

We can optimize functions, defined through expectations above, using randomized gradients.

- Initialize $\theta_1$

- For $t=1,2, \ldots, T$:

- Sample $\xi_t \sim D$

- $\theta_{t+1} = \Pi_K(\theta_t - \eta_t \nabla f(\theta_t; \xi_t))$

- Output: $\bar \theta_T := \frac 1 T \sum_{t=1}^T \theta_t$

Theorem (Hazan book): As long as $f(\cdot;\xi)$ is convex, $\|\nabla f(\cdot; \cdot) \| \leq G$ and $\text{diam}(K) \leq D$, then we have $$\E[F(\bar \theta_T)] - \min_{\theta \in K} F(\theta) \leq \frac{3GD}{2\sqrt{T}}$$

SGD versus standard GD¶

Let $F(\theta) := \frac 1 n \sum_{i=1}^n f(\theta; \xi_i)$ be some objective function, defined as an average over samples $\xi_1, \ldots \xi_n$. Consider two algorithms for minimizing $F$:

- Run (average-iterate) Gradient Descent on $F$, for $n$ steps

- Run SGD on $F$, by sampling a random $\xi_i$ on each round, for $n$ steps

Problem¶

- What is the convergence guarantee for each algorithm? (I'm only interested in the $T$-dependence!)

- What is the computational complexity of each? Which is better?

New material: Linear Programming¶

Note: this is material for HW4 and will NOT be on the midterm!

Reading¶

- Tim Roughgarden, Stanford CS261, "Lecture 7: Linear Programming"

- Thomas S. Ferguson, "Linear Programming: A Concise Introduction"

Notation: Elementwise Inequalities¶

For vectors $u,v \in \R^m$, define elementwise inequalities as: $$ \begin{align} u \leq v &\iff u_i \leq b_i & (i=1,\dots,m) \end{align} $$ Similarly, let $x \in \R^n$ and $b \in \R^m$. If $A \in \R^{m \times n}$ is a matrix with rows $a_1^T, \dots, a_m^T \in \R^{1 \times n}$, then $$ \begin{align} A x \leq b &\iff a_i^T x \leq b_i & (i=1,\dots,m) \\ \end{align} $$

Recall: Linear Programs¶

A linear program consists of:

- Decision variables $x = (x_1,\dots,x_n) \in \R^d$

- Linear constraints of the form $(a^T x \leq b)$, $(a^Tx \geq b)$, or $(a^T x = b)$

- Here, $a \in \R^d$ is a vector and $b \in \R$ is a scalar

- A linear objective function $c^T x$ which we want to maximize (or minimize).

The reading contained the following example:

Warm-Up: Geometry of Linear Programs¶

Solution: Feasible Region is Convex (1)¶

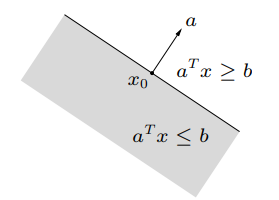

Remember that a hyperplane is a level set of the linear functional $f(x) = a^T x$ for some $a \in \R^n$, and a half-space is the region on either side of a hyperplane: $$ \begin{align} \text{hyperplane:} \quad& \{ x \in \R^n \mid a^T x = b \} \\ \text{half-space:} \quad& \{ x \in \R^n \mid a^T x \leq b \} \end{align} $$

The feasible regions is a polytope or polyhedron, since it's an intersection of half-spaces. This means it's also convex!

Problem: Activity Analysis¶

- A company has $m$ different resources which can be used to manufacture $n$ different products.

- The company has $r_i$ units of resource $i=1,\dots,m$ available.

- The company earns a profit $c_j$ per unit of product $j=1,\dots,n$.

- The company expends $a_{ij}$ units of resource $i$ to manufacture one unit of product $j$.

Using the resources available, how much of each product should be manufactured to maximize profits?

Solution: Activity Analysis (1)¶

For convenience, define

- $x = (x_1,\dots,x_n) \in \R^n$ is the amount of each product manufactured

- $r = (r_1,\dots,r_m) \in \R^m$ is the supply of each resource

- $c = (c_1,\dots,c_n) \in \R^n$ is the profit generated by each product

- $A = [a_{ij}] \in \R^{m \times n}$ represents the manufacturing costs

Notice that:

- The $i$th row of $A$ (let's call it $a_i^T \in \R^{1 \times n}$) lists the amount of resource $i$ that each product uses.

- The $j$th column of $A$ lists the amount of each resource needed to produce product $j$.

Solution: Activity Analysis (2)¶

The total profit earned from production plan $x = (x_1,\dots,x_n) \in \R^n$ is $$ c^T x = c_1 x_1 + c_2 x_2 + \cdots + c_n x_n $$ In doing so, we expend the following amount of each resource $j=1,\dots,m$: $$ a_i^T x = a_{i1} x_1 + a_{i2} x_2 + \cdots + a_{in} x_n $$ We only have $r_i$ units of resource $i$, so we need to enforce $\boxed{a_i^T x \leq r_i}$. We also can't produce a negative amount of any product, so $\boxed{x \geq 0}$.

Solution: Activity Analysis (3)¶

Written more compactly, the linear program we want to solve is:

Notice that we can rewrite the constraints as $Ax \leq r$.

Extra: Transportation¶

- We want to ship coal from $M$ coal mines to $N$ factories.

- Each mine $i=1,\dots,M$ contains $m_i$ units of coal.

- Each factory $j=1,\dots,N$ needs $n_j$ units of coal to operate.

- It costs $c_{ij}$ dollars to ship one unit of coal from mine $i$ to factory $j$.

Our goal is to meet the needs of all the factories at minimum transportation cost.

Linear Programs in Standard Form¶

The standard form of a linear program for $c,x \in \R^n$, $b \in \R^m$ and $A \in \R^{m \times n}$ is

We will show that every linear program can be converted to standard form! Only $\leq$ constraints and nonnegative variables are necessary.

Problem: Inequalities to Standard Form¶

Solution: Inequalities in Standard Form¶

The following linear program is equivalent, but all the constraints are now $\leq$:

$$ \begin{align} \max_{x \in \R^n} &\quad c^T x \\ \text{such that} \quad-& a^T x \leq -\alpha \\ \quad & b^T x \leq \beta \\ \quad-& b^T x \leq \beta \\ \end{align} $$Problem: Nonnegative Decision Variables¶

Hint: Any $x \in \R$ can be written as $x = a - b$ where $a,b \geq 0$.

Solution: Nonnegative Decision Variables¶

Split each unconstrained decision variable into two nonnegative decision variables: $$ \begin{align} x_1 &= y_1 - y_2 &(y_1,y_2 \geq 0)\\ x_2 &= y_3 - y_4 &(y_3,y_4 \geq 0)\\ \end{align} $$

Let $y = (y_1,y_2,y_3,y_4)$. Replace $x_1$ and $x_2$ with the expressions above to obtain: $$ \begin{align} \max_{x \in \R^2} \quad& (y_1 - y_2) - (y_3 - y_4) \\ \text{such that} \quad & (y_1 - y_2) + (y_3 - y_4) \leq 1 \\ \quad-& (y_1 - y_2) + (y_3 - y_4) \leq 1 \\ \quad & y \geq 0 \end{align} $$

By finding an optimal solution for the linear program for $y$, we can reconstruct an optimal solution for $x$'s linear program!

Conclusion: Standard Form¶

The previous two problems show that any linear program can be converted to standard form! From now on, we'll assume every linear program is written in this form.

Farkas Lemma¶

We will work towards proving the following Lemma:

- There exists $x \in \R^n$ such that $Ax = b$ and $x \geq 0$.

- There exists $y \in \R^m$ such that $y^T A \geq 0$ and $b^T y < 0$.

Problem: Farkas Lemma, Part 1¶

Solution: Farkas Lemma, Part 1¶

- $(\implies)$ Suppose there is $x \geq 0$ such that $A x = b$ and $x \geq 0$. Since $A x$ is a linear combination of the columns of $A$ with coefficients from $x$, which has nonnegative entries, $A x = b \in \mathrm{cone}\{ a_1,\dots,a_n \}$ by definition.

- $(\impliedby)$ Similarly, if $b \in \mathrm{cone}(a_1,\dots,a_n)$, then there are coefficients $x_1,\dots,x_n \geq 0$ such that $b = \sum_{k=1}^n x_1 a_1 \in \R^m$. Assemble the coefficients into a vector $x = (x_1,\dots,x_n) \in \R^n$. Then $A x = b$.

Problem: Farkas Lemma, Part 2¶

- If there does not exist $x \geq 0$ such that $Ax = b$...

- then there exists $y \in \R^m$ such that $y^T A \geq 0$ and $b^T y < 0$.

Hint: Use the separating hyperplane theorem, where one set is $\mathrm{cone}(a_1,\dots,a_k)$.

Problem: Farkas Lemma, Part 2¶

- Assume there is no $x \geq 0$ such that $Ax = b$. By the previous problem, then $b \notin \mathrm{cone}(a_1,\dots,a_n)$.

- Notice that $y^T A = [ y^T a_1, y^T a_2, \dots, y^T a_n ] \in \R^{1 \times n}$. So, $y^T A \geq 0$ is equivalent to:

- Interpret $y$ as the normal vector to a hyperplane. So, we want to show that there is a hyperplane which separates $b \in \R^m$ from the columns of $A$.

- By assumption, $b$ is disjoint from $\mathrm{cone}(a_1,\dots,a_n)$ and they are both closed sets. Use the separating hyperplane theorem to find $y \in \R^m$ separating $b$ from the cone. Since the columns of $A$ belong to the cone, we are done!