Notes on The Matrix Calculus You Need for Deep Learning

These are my notes from the article "The Matrix Calculus you need for Deep Learning" by Terence Parr and Jeremy Howard as part of a fastai study group being conducted May-Oct 2020 (ongoing).

(Status: unfinished)

Affine function for a Neuron

$$ z(x) = \sum_{i=1}^{n} w_{i}x_{i} + b = w \cdot x + b $$

Common loss function

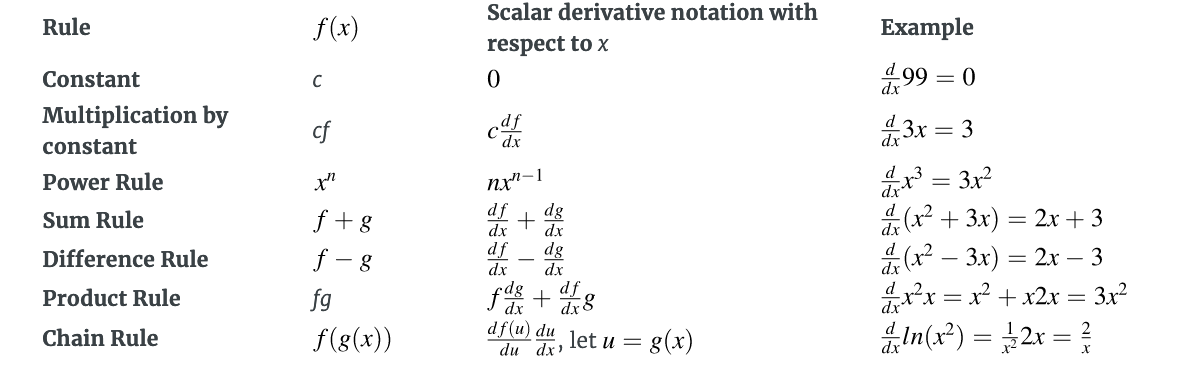
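A minimal pure-Python sketch of the neuron and the loss in this section, assuming the activation is the ReLU $ \max(0, z) $ applied to the affine function, as in the loss formula. The helper names `affine`, `relu_activation` and `mse_loss` and the toy data are hypothetical, chosen only for illustration.

```python
from typing import Sequence


def affine(w: Sequence[float], x: Sequence[float], b: float) -> float:
    """Affine function of a neuron: z(x) = w . x + b."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def relu_activation(w: Sequence[float], x: Sequence[float], b: float) -> float:
    """activation(x) = max(0, w . x + b)."""
    return max(0.0, affine(w, x, b))


def mse_loss(w, b, xs, targets) -> float:
    """Mean squared error: (1/N) * sum over samples of (target(x) - activation(x))**2."""
    n = len(xs)
    return sum((t - relu_activation(w, x, b)) ** 2 for x, t in zip(xs, targets)) / n


if __name__ == "__main__":
    # Hypothetical toy data: two samples with two features each
    w, b = [0.5, -1.0], 0.25
    xs = [[1.0, 2.0], [3.0, 0.5]]
    targets = [0.0, 1.0]
    # Sample 1: z = -1.25, ReLU gives 0.0; sample 2: z = 1.25, ReLU gives 1.25
    print(mse_loss(w, b, xs, targets))  # 0.03125
```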

$$ \frac{1}{N} \sum_{x} \left(\text{target}(x) - \text{activation}(x)\right)^{2} = \frac{1}{N} \sum_{x} \left(\text{target}(x) - \max\left(0, \sum_{i=1}^{\vert x \vert} w_{i}x_{i} + b\right)\right)^{2} $$

Table of Derivatives

Partial Derivative Example

Consider the function $ f(x,y) = 3x^{2}y $.

The partial derivative of $f$ with respect to $x$ (treating $y$ as a constant) is:

$$ \frac{\partial f}{\partial x} = \frac{\partial}{\partial x} 3x^{2}y = 3y\frac{\partial}{\partial x}x^{2} = 6yx $$

The partial derivative of $f$ with respect to $y$ (treating $x$ as a constant) is:

$$ \frac{\partial f}{\partial y} = \frac{\partial}{\partial y} 3x^{2}y = 3x^{2}\frac{\partial}{\partial y}y = 3x^{2} $$

Gradient of $ f(x,y) $

$$ \nabla f(x,y) = \left[\frac{\partial f(x,y)}{\partial x}, \frac{\partial f(x,y)}{\partial y}\right] = [6yx, 3x^{2}] $$
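To sanity-check the gradient numerically, this small pure-Python sketch compares the analytic result $ [6yx, 3x^{2}] $ with central finite differences $ \frac{f(p + h) - f(p - h)}{2h} $ taken in one coordinate at a time. The function names and the step size `h` are illustrative choices, not part of the article.

```python
def f(x: float, y: float) -> float:
    """f(x, y) = 3 x^2 y"""
    return 3 * x**2 * y


def grad_f_analytic(x: float, y: float) -> list[float]:
    # [df/dx, df/dy] = [6yx, 3x^2]
    return [6 * y * x, 3 * x**2]


def grad_numeric(func, x: float, y: float, h: float = 1e-6) -> list[float]:
    # Central differences: perturb one variable at a time, holding the other constant
    dfdx = (func(x + h, y) - func(x - h, y)) / (2 * h)
    dfdy = (func(x, y + h) - func(x, y - h)) / (2 * h)
    return [dfdx, dfdy]


if __name__ == "__main__":
    print(grad_f_analytic(2.0, 3.0))  # [36.0, 12.0]
    print(grad_numeric(f, 2.0, 3.0))  # approximately [36.0, 12.0]
```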

Matrix calculus

Given the functions $ f(x,y) = 3x^{2}y $ and $ g(x,y) = 2x + y^{8} $, the partial derivatives of $g$ are

$$ \frac{\partial g(x,y)}{\partial x} = \frac{\partial 2x}{\partial x} + \frac{\partial y^{8}}{\partial x} = 2\frac{\partial x}{\partial x} + 0 = 2 \times 1 = 2 $$

and

$$ \frac{\partial g(x,y)}{\partial y} = \frac{\partial 2x}{\partial y} + \frac{\partial y^{8}}{\partial y} = 0 + 8y^{7} = 8y^{7} $$

giving us the gradient of $ g(x,y) $ as

$$ \nabla g(x,y) = [2, 8y^{7}] $$

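Jacobian matrix (aka Jacobian)

Stacking the gradients of $f$ and $g$ gives the Jacobian. There are two conventions for its orientation:

- numerator layout (used here): rows are the functions ($f$, $g$), columns are the variables ($x$, $y$)

- denominator layout: rows are the variables ($x$, $y$), columns are the functions ($f$, $g$)

In numerator layout, each row is the gradient of one function:

$$ J = \begin{bmatrix} \nabla f(x,y) \\ \nabla g(x,y) \end{bmatrix} = \begin{bmatrix} 6yx & 3x^{2} \\ 2 & 8y^{7} \end{bmatrix} $$

Generalization of the Jacobian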

Combine multiple parameters into a single vector argument, $ f(x,y,z) \Rightarrow f(\pmb{x}) $, where bold $ \pmb{x} $ (aka $ \vec{x} $) is a vector and italic $ x $ is a scalar. For example, $ x_{i} $ is the $i^{th}$ element of vector $ \pmb{x} $.

By default, assume vector $ \pmb{x} $ is a column (vertical) vector of size $ n \times 1 $:

$$ \pmb{x} = \begin{bmatrix} x_{1} \\ x_{2} \\ \vdots \\ x_{n} \end{bmatrix} $$

For multiple scalar-valued functions, combine them into a vector, just like the parameters.

Let $ \pmb{y} = \pmb{f}(\pmb{x}) $ be a vector of $m$ scalar-valued functions that each take a vector $ \pmb{x} $ of length $ n = \vert \pmb{x} \vert $, where $ \vert \pmb{x} \vert $ is the count of elements in $ \pmb{x} $.

Each $ f_{i} $ function within $ \pmb{f} $ returns a scalar:

$$ \begin{matrix} y_{1} = f_{1}(\pmb{x}) \\ y_{2} = f_{2}(\pmb{x}) \\ \vdots \\ y_{m} = f_{m}(\pmb{x}) \end{matrix} $$

For instance, given $ f(x,y) = 3x^{2}y $ and $ g(x,y) = 2x + y^{8} $, we get

$ y_{1} = f_{1}(\pmb{x}) = 3x_{1}^{2}x_{2} $ (substituting $ x_{1} $ for $x$ and $ x_{2} $ for $y$)

$ y_{2} = f_{2}(\pmb{x}) = 2x_{1} + x_{2}^{8} $

For the identity function $ \pmb{y} = \pmb{f}(\pmb{x}) = \pmb{x} $, it will be the case that $ m = n $:

$$ \begin{matrix} y_{1} = f_{1}(\pmb{x}) = x_{1} \\ y_{2} = f_{2}(\pmb{x}) = x_{2} \\ \vdots \\ y_{n} = f_{n}(\pmb{x}) = x_{n} \end{matrix} $$

So for the identity function, we have $ m = n $ functions and parameters.

Generally, the Jacobian matrix is the collection of all $ m \times n $ possible partial derivatives ($m$ rows and $n$ columns), which is a stack of $m$ gradients with respect to $ \pmb{x} $:

$$ \frac{\partial \pmb{y}}{\partial \pmb{x}} = \begin{bmatrix} \nabla f_{1}(\pmb{x}) \\ \nabla f_{2}(\pmb{x}) \\ \vdots \\ \nabla f_{m}(\pmb{x}) \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial \pmb{x}}f_{1}(\pmb{x}) \\ \frac{\partial}{\partial \pmb{x}}f_{2}(\pmb{x}) \\ \vdots \\ \frac{\partial}{\partial \pmb{x}}f_{m}(\pmb{x}) \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial x_{1}}f_{1}(\pmb{x}) & \frac{\partial}{\partial x_{2}}f_{1}(\pmb{x}) & \dotsb & \frac{\partial}{\partial x_{n}}f_{1}(\pmb{x}) \\ \frac{\partial}{\partial x_{1}}f_{2}(\pmb{x}) & \frac{\partial}{\partial x_{2}}f_{2}(\pmb{x}) & \dotsb & \frac{\partial}{\partial x_{n}}f_{2}(\pmb{x}) \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial}{\partial x_{1}}f_{m}(\pmb{x}) & \frac{\partial}{\partial x_{2}}f_{m}(\pmb{x}) & \dotsb & \frac{\partial}{\partial x_{n}}f_{m}(\pmb{x}) \end{bmatrix} $$

Each $ \frac{\partial}{\partial \pmb{x}}f_{i}(\pmb{x}) $ is a horizontal $n$-vector because the partial derivative is taken with respect to the vector $ \pmb{x} $, whose length is $ n = \vert \pmb{x} \vert $. The width of the Jacobian is $n$ when we take the partial derivative with respect to $ \pmb{x} $ because there are $n$ parameters we can wiggle, each potentially changing the function's value. Likewise, the Jacobian always has $m$ rows, one for each of the $m$ equations.
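The definition translates directly into a numerical sketch: perturb one input $ x_j $ at a time and record how every output $ f_i $ changes, filling column $ j $ of an $ m \times n $ matrix in numerator layout. This is a pure-Python illustration using central differences; the helper names `jacobian`, `f_vec`, `f_vec_jacobian` and the step size `h` are assumptions, not from the article. Applied to $ f_1 = 3x_1^2x_2 $ and $ f_2 = 2x_1 + x_2^8 $, it should reproduce the rows $ [6x_2x_1, 3x_1^2] $ and $ [2, 8x_2^7] $.

```python
from typing import Callable, Sequence

Vector = list[float]
Matrix = list[Vector]


def jacobian(fn: Callable[[Vector], Vector], x: Sequence[float], h: float = 1e-6) -> Matrix:
    """Numerator-layout Jacobian via central differences.

    Row i is the gradient of output f_i; column j holds the partials wrt x_j,
    so the result has m = len(fn(x)) rows and n = len(x) columns.
    """
    x = list(x)
    m, n = len(fn(x)), len(x)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        x_plus, x_minus = x.copy(), x.copy()
        x_plus[j] += h
        x_minus[j] -= h
        f_plus, f_minus = fn(x_plus), fn(x_minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J


def f_vec(x: Vector) -> Vector:
    # y1 = f1(x) = 3 x1^2 x2,  y2 = f2(x) = 2 x1 + x2^8
    return [3 * x[0] ** 2 * x[1], 2 * x[0] + x[1] ** 8]


def f_vec_jacobian(x: Vector) -> Matrix:
    # Analytic Jacobian: one gradient per row
    return [[6 * x[1] * x[0], 3 * x[0] ** 2],
            [2.0, 8 * x[1] ** 7]]


if __name__ == "__main__":
    point = [1.5, 1.2]
    print(jacobian(f_vec, point))
    print(f_vec_jacobian(point))
```

For a function with $m$ outputs and $n$ inputs, `len(J)` is $m$ and every row has length $n$, matching the shape argument above.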

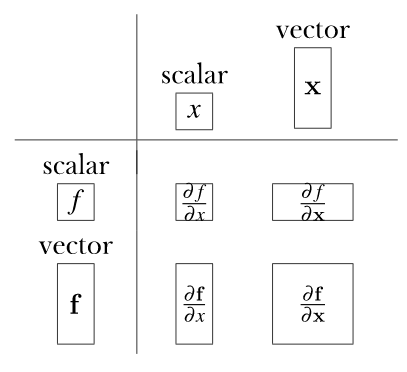

Jacobian Shapes

An Example: Jacobian of the identity function

Given the identity function $ \pmb{f}(\pmb{x}) = \pmb{x} $, with $ f_{i}(\pmb{x}) = x_{i} $, the Jacobian of the identity function has $n$ functions, and each function has $n$ parameters held in a single vector $ \pmb{x} $. The Jacobian is, therefore, a square matrix since $ m = n $:

$$ \frac{\partial \pmb{y}}{\partial \pmb{x}} = \begin{bmatrix} \frac{\partial}{\partial \pmb{x}}f_{1}(\pmb{x}) \\ \frac{\partial}{\partial \pmb{x}}f_{2}(\pmb{x}) \\ \vdots \\ \frac{\partial}{\partial \pmb{x}}f_{m}(\pmb{x}) \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial x_{1}}f_{1}(\pmb{x}) & \frac{\partial}{\partial x_{2}}f_{1}(\pmb{x}) & \dotsb & \frac{\partial}{\partial x_{n}}f_{1}(\pmb{x}) \\ \frac{\partial}{\partial x_{1}}f_{2}(\pmb{x}) & \frac{\partial}{\partial x_{2}}f_{2}(\pmb{x}) & \dotsb & \frac{\partial}{\partial x_{n}}f_{2}(\pmb{x}) \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial}{\partial x_{1}}f_{m}(\pmb{x}) & \frac{\partial}{\partial x_{2}}f_{m}(\pmb{x}) & \dotsb & \frac{\partial}{\partial x_{n}}f_{m}(\pmb{x}) \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial x_{1}}x_{1} & \frac{\partial}{\partial x_{2}}x_{1} & \dotsb & \frac{\partial}{\partial x_{n}}x_{1} \\ \frac{\partial}{\partial x_{1}}x_{2} & \frac{\partial}{\partial x_{2}}x_{2} & \dotsb & \frac{\partial}{\partial x_{n}}x_{2} \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial}{\partial x_{1}}x_{n} & \frac{\partial}{\partial x_{2}}x_{n} & \dotsb & \frac{\partial}{\partial x_{n}}x_{n} \end{bmatrix} $$

And since $ \frac{\partial}{\partial x_{j}}x_{i} = 0 $ for $ j \ne i $ and $ \frac{\partial}{\partial x_{j}}x_{i} = 1 $ for $ j = i $:

$$ \frac{\partial \pmb{y}}{\partial \pmb{x}} = \begin{bmatrix} \frac{\partial}{\partial x_{1}}x_{1} & 0 & \dotsb & 0 \\ 0 & \frac{\partial}{\partial x_{2}}x_{2} & \dotsb & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \dotsb & \frac{\partial}{\partial x_{n}}x_{n} \end{bmatrix} = \begin{bmatrix} 1 & 0 & \dotsb & 0 \\ 0 & 1 & \dotsb & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \dotsb & 1 \end{bmatrix} = I $$

($I$ is the identity matrix, with ones down the diagonal.)
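A quick numerical check of this result, as a pure-Python sketch. The `jacobian` helper is a hypothetical central-difference routine, repeated here so the block is self-contained. The Jacobian of the identity function should come out as the $ n \times n $ identity matrix, up to floating-point error.

```python
from typing import Callable, Sequence

Matrix = list[list[float]]


def jacobian(fn: Callable[[list[float]], list[float]], x: Sequence[float], h: float = 1e-6) -> Matrix:
    # Central-difference Jacobian in numerator layout (rows: outputs, columns: inputs)
    x = list(x)
    m, n = len(fn(x)), len(x)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        x_plus, x_minus = x.copy(), x.copy()
        x_plus[j] += h
        x_minus[j] -= h
        f_plus, f_minus = fn(x_plus), fn(x_minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J


def identity(x: list[float]) -> list[float]:
    # f_i(x) = x_i
    return list(x)


if __name__ == "__main__":
    J = jacobian(identity, [0.3, -1.7, 2.0, 5.5])
    for row in J:
        print([round(v, 6) for v in row])  # ones on the diagonal, zeros elsewhere
```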

Derivatives of vector element-wise binary operators

Element-wise binary operations on vectors apply an operation to the first element of each vector to get the first element of the output, then to the second elements of each vector to get the second element of the output, and so forth.

Generalized notation for element-wise binary operations

$$ \pmb{y} = \pmb{f}(\pmb{w}) \bigcirc \pmb{g}(\pmb{x}) $$

where $ \bigcirc $ represents any element-wise operator (such as $+$) and $ m = n = \vert \pmb{y} \vert = \vert \pmb{w} \vert = \vert \pmb{x} \vert $.

Reminder: $ \vert \pmb{x} \vert $ is the number of items in $ \pmb{x} $.

Zooming in on the scalar equations of $ \pmb{y} = \pmb{f}(\pmb{w}) \bigcirc \pmb{g}(\pmb{x}) $ gives:

$$ \begin{bmatrix} y_{1} \\ y_{2} \\ \vdots \\ y_{n} \end{bmatrix} = \begin{bmatrix} f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x}) \\ f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x}) \\ \vdots \\ f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x}) \end{bmatrix} $$

Jacobian of Elementwise Binary Operations

The general case for the Jacobian of $ \pmb{y} $ with respect to $ \pmb{w} $ is the square matrix:

$$ J_{\pmb{w}} = \frac{\partial \pmb{y}}{\partial \pmb{w}} = \begin{bmatrix} \frac{\partial}{\partial w_{1}}(f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x})) & \frac{\partial}{\partial w_{2}}(f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x})) & \dotsb & \frac{\partial}{\partial w_{n}}(f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x})) \\ \frac{\partial}{\partial w_{1}}(f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x})) & \frac{\partial}{\partial w_{2}}(f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x})) & \dotsb & \frac{\partial}{\partial w_{n}}(f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x})) \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial}{\partial w_{1}}(f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x})) & \frac{\partial}{\partial w_{2}}(f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x})) & \dotsb & \frac{\partial}{\partial w_{n}}(f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x})) \end{bmatrix} $$

The general case for the Jacobian of $ \pmb{y} $ with respect to $ \pmb{x} $ is the square matrix:

$$ J_{\pmb{x}} = \frac{\partial \pmb{y}}{\partial \pmb{x}} = \begin{bmatrix} \frac{\partial}{\partial x_{1}}(f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x})) & \frac{\partial}{\partial x_{2}}(f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x})) & \dotsb & \frac{\partial}{\partial x_{n}}(f_{1}(\pmb{w}) \bigcirc g_{1}(\pmb{x})) \\ \frac{\partial}{\partial x_{1}}(f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x})) & \frac{\partial}{\partial x_{2}}(f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x})) & \dotsb & \frac{\partial}{\partial x_{n}}(f_{2}(\pmb{w}) \bigcirc g_{2}(\pmb{x})) \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial}{\partial x_{1}}(f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x})) & \frac{\partial}{\partial x_{2}}(f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x})) & \dotsb & \frac{\partial}{\partial x_{n}}(f_{n}(\pmb{w}) \bigcirc g_{n}(\pmb{x})) \end{bmatrix} $$

Diagonal Jacobians

In a diagonal Jacobian, all elements off the diagonal are zero: $ \frac{\partial}{\partial w_{j}}(f_{i}(\pmb{w}) \bigcirc g_{i}(\pmb{x})) = 0 $ for $ j \ne i $.

This will be the case when $ f_{i} $ and $ g_{i} $ are constants with respect to $ w_{j} $:

$$ \frac{\partial}{\partial w_{j}}f_{i}(\pmb{w}) = \frac{\partial}{\partial w_{j}}g_{i}(\pmb{x}) = 0 $$

Regardless of the operation $ \bigcirc $, if $ f_{i} $ and $ g_{i} $ are both constant with respect to $ w_{j} $, then so is $ f_{i}(\pmb{w}) \bigcirc g_{i}(\pmb{x}) $, and the partial derivative of a constant is zero.

These partial derivatives go to zero when $f_{i} $ and $ g_{i} $ are not functions of $ w_{j} $.

Element-wise operations imply that $ f_{i} $ is purely a function of $ w_{i} $ and $ g_{i} $ is purely a function of $ x_{i} $.

For example, $ \pmb{w} + \pmb{x} $ sums $ w_{i} + x_{i} $.

Consequently, $ f_{i}(\pmb{w}) \bigcirc g_{i}(\pmb{x}) $ reduces to $ f_{i}(w_{i}) \bigcirc g_{i}(x_{i}) $, and the goal becomes showing that $ \frac{\partial}{\partial w_{j}}f_{i}(w_{i}) = 0 $ and $ \frac{\partial}{\partial w_{j}}g_{i}(x_{i}) = 0 $ for $ j \ne i $.

Notice that $ f_{i}(w_{i}) $ and $ g_{i}(x_{i}) $ look like constants to the partial derivative with respect to $ w_{j} $ when $ j \ne i $.

Element-wise diagonal condition

The element-wise diagonal condition is the constraint that $ f_{i}(\pmb{w}) $ and $ g_{i}(\pmb{x}) $ access at most $ w_{i} $ and $ x_{i} $, respectively.
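To see the condition at work, the pure-Python sketch below compares a function where each $ f_i $ touches only $ w_i $ with one where every $ f_i $ reads the whole vector. The function names and sample values are hypothetical. Only the first satisfies the element-wise diagonal condition, so only its Jacobian has all-zero off-diagonal entries.

```python
from typing import Callable, Sequence

Matrix = list[list[float]]


def jacobian(fn: Callable[[list[float]], list[float]], w: Sequence[float], h: float = 1e-6) -> Matrix:
    # Central-difference Jacobian in numerator layout (rows: outputs, columns: inputs)
    w = list(w)
    m, n = len(fn(w)), len(w)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        w_plus, w_minus = w.copy(), w.copy()
        w_plus[j] += h
        w_minus[j] -= h
        f_plus, f_minus = fn(w_plus), fn(w_minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J


def elementwise(w: list[float]) -> list[float]:
    # f_i(w) = w_i^2 depends only on w_i: satisfies the diagonal condition
    return [wi ** 2 for wi in w]


def mixing(w: list[float]) -> list[float]:
    # f_i(w) = w_i * sum(w) depends on every w_j: violates the condition
    total = sum(w)
    return [wi * total for wi in w]


def max_off_diagonal(J: Matrix) -> float:
    # Largest absolute value among entries with i != j
    return max(abs(J[i][j]) for i in range(len(J)) for j in range(len(J[0])) if i != j)


if __name__ == "__main__":
    w = [0.5, 2.0, -1.0]
    print(max_off_diagonal(jacobian(elementwise, w)))  # 0.0
    print(max_off_diagonal(jacobian(mixing, w)))       # nonzero (about 2.0)
```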

Jacobians under an element-wise diagonal condition

Under this condition, the elements along the diagonal of the Jacobian are $ \frac{\partial}{\partial w_{i}}(f_{i}(w_{i}) \bigcirc g_{i}(x_{i})) $, and every other element is zero:

$$ \frac{\partial \pmb{y}}{\partial \pmb{w}} = \begin{bmatrix} \frac{\partial}{\partial w_{1}}(f_{1}(w_{1}) \bigcirc g_{1}(x_{1})) & & & \huge0 \\ & \frac{\partial}{\partial w_{2}}(f_{2}(w_{2}) \bigcirc g_{2}(x_{2})) & & \\ & & \ddots & \\ \huge0 & & & \frac{\partial}{\partial w_{n}}(f_{n}(w_{n}) \bigcirc g_{n}(x_{n})) \end{bmatrix} $$

More succinctly, we can write this as:

$$ \frac{\partial \pmb{y}}{\partial \pmb{w}} = diag \left(\frac{\partial}{\partial w_{1}}(f_{1}(w_{1}) \bigcirc g_{1}(x_{1})), \frac{\partial}{\partial w_{2}}(f_{2}(w_{2}) \bigcirc g_{2}(x_{2})), \dotsb , \frac{\partial}{\partial w_{n}}(f_{n}(w_{n}) \bigcirc g_{n}(x_{n}))\right) $$

and

$$ \frac{\partial \pmb{y}}{\partial \pmb{x}} = diag \left(\frac{\partial}{\partial x_{1}}(f_{1}(w_{1}) \bigcirc g_{1}(x_{1})), \frac{\partial}{\partial x_{2}}(f_{2}(w_{2}) \bigcirc g_{2}(x_{2})), \dotsb , \frac{\partial}{\partial x_{n}}(f_{n}(w_{n}) \bigcirc g_{n}(x_{n}))\right) $$

where $ diag(\pmb{x}) $ constructs a matrix whose diagonal elements are taken from the vector $ \pmb{x} $.

If the general function $ \pmb{f}(\pmb{w}) $ in the binary element-wise operation is just the vector $ \pmb{w} $, then $ f_i(\pmb{w}) $ reduces to $ f_i(w_i) = w_i $.

As an example, $ \pmb{w} + \pmb{x} $ fits our element-wise diagonal condition because $ \pmb{f}(\pmb{w}) + \pmb{g}(\pmb{x}) $ has scalar equations $ y_i = f_i(\pmb{w}) + g_i(\pmb{x}) $ that reduce to just $ y_i = f_i(w_i) + g_i(x_i) = w_i + x_i $, with the partial derivatives:

$$ \frac{\partial}{\partial w_i}(f_i(w_i) + g_i(x_i)) = \frac{\partial}{\partial w_i}(w_i + x_i) = \frac{\partial w_i}{\partial w_i} + \frac{\partial x_i}{\partial w_i} = 1 + 0 = 1 $$

and

$$ \frac{\partial}{\partial x_i}(f_i(w_i) + g_i(x_i)) = \frac{\partial}{\partial x_i}(w_i + x_i) = \frac{\partial w_i}{\partial x_i} + \frac{\partial x_i}{\partial x_i} = 0 + 1 = 1 $$

which gives us:

$$ \frac{\partial (\pmb{w} + \pmb{x})}{\partial \pmb{w}} = \frac{\partial (\pmb{w} + \pmb{x})}{\partial \pmb{x}} = I $$

Given the simplicity of this special case, where $ f_i(\pmb{w}) $ reduces to $ f_i(w_i) $, we can derive the Jacobians for common element-wise binary operations:

Addition (wrt w)

$$ \frac{\partial (\pmb{w} + \pmb{x})}{\partial \pmb{w}} = diag \left(\dotsb \frac{\partial (w_i + x_i)}{\partial w_i} \dotsb \right) = diag \left(\dotsb \frac{\partial w_i}{\partial w_i} + \frac{\partial x_i}{\partial w_i} \dotsb \right) = diag \left(\dotsb 1 + 0 \dotsb \right) = diag \left(\dotsb 1 \dotsb \right) = diag(\vec{1}) = I $$

Subtraction (wrt w)

$$ \frac{\partial (\pmb{w} - \pmb{x})}{\partial \pmb{w}} = diag \left(\dotsb \frac{\partial (w_i - x_i)}{\partial w_i} \dotsb \right) = diag \left(\dotsb \frac{\partial w_i}{\partial w_i} - \frac{\partial x_i}{\partial w_i} \dotsb \right) = diag \left(\dotsb 1 - 0 \dotsb \right) = diag \left(\dotsb 1 \dotsb \right) = diag(\vec{1}) = I $$

Elementwise Multiplication (aka Hadamard product) (wrt w)

$$ \frac{\partial (\pmb{w} \otimes \pmb{x})}{\partial \pmb{w}} = diag \left(\dotsb \frac{\partial (w_i \times x_i)}{\partial w_i} \dotsb \right) = diag \left(\dotsb (x_i \times \frac{\partial w_i}{\partial w_i}) + (w_i \times \frac{\partial x_i}{\partial w_i}) \dotsb \right) = diag \left(\dotsb (x_i \times 1) + (w_i \times 0) \dotsb \right) = diag \left(\dotsb x_i \dotsb \right) = diag(\pmb{x}) $$

Elementwise Division (wrt w)

$$ \frac{\partial (\pmb{w} \oslash \pmb{x})}{\partial \pmb{w}} = diag \left(\dotsb \frac{\partial (\frac{w_i}{x_i})}{\partial w_i} \dotsb \right) = diag \left(\dotsb (\frac{1}{x_i} \times \frac{\partial w_i}{\partial w_i}) + (w_i \times \frac{\partial}{\partial w_i}(\frac{1}{x_i})) \dotsb \right) = diag \left(\dotsb (\frac{1}{x_i} \times 1) + (w_i \times 0) \dotsb \right) = diag \left(\dotsb \frac{1}{x_i} \dotsb \right) $$

Addition (wrt x)

$$ \frac{\partial (\pmb{w} + \pmb{x})}{\partial \pmb{x}} = diag \left(\dotsb \frac{\partial (w_i + x_i)}{\partial x_i} \dotsb \right) = diag \left(\dotsb \frac{\partial w_i}{\partial x_i} + \frac{\partial x_i}{\partial x_i} \dotsb \right) = diag \left(\dotsb 0 + 1 \dotsb \right) = diag \left(\dotsb 1 \dotsb \right) = diag(\vec{1}) = I $$

Subtraction (wrt x)

$$ \frac{\partial (\pmb{w} - \pmb{x})}{\partial \pmb{x}} = diag \left(\dotsb \frac{\partial (w_i - x_i)}{\partial x_i} \dotsb \right) = diag \left(\dotsb \frac{\partial w_i}{\partial x_i} - \frac{\partial x_i}{\partial x_i} \dotsb \right) = diag \left(\dotsb 0 - 1 \dotsb \right) = diag \left(\dotsb -1 \dotsb \right) = diag(-\vec{1}) = -I $$

Elementwise Multiplication (aka Hadamard product) (wrt x)

$$ \frac{\partial (\pmb{w} \otimes \pmb{x})}{\partial \pmb{x}} = diag \left(\dotsb \frac{\partial (w_i \times x_i)}{\partial x_i} \dotsb \right) = diag \left(\dotsb (x_i \times \frac{\partial w_i}{\partial x_i}) + (w_i \times \frac{\partial x_i}{\partial x_i}) \dotsb \right) = diag \left(\dotsb (x_i \times 0) + (w_i \times 1) \dotsb \right) = diag \left(\dotsb w_i \dotsb \right) = diag(\pmb{w}) $$

Elementwise Division (wrt x)

$$ \frac{\partial (\pmb{w} \oslash \pmb{x})}{\partial \pmb{x}} = diag \left(\dotsb \frac{\partial (\frac{w_i}{x_i})}{\partial x_i} \dotsb \right) = diag \left(\dotsb (\frac{1}{x_i} \times \frac{\partial w_i}{\partial x_i}) + (w_i \times \frac{\partial}{\partial x_i}(\frac{1}{x_i})) \dotsb \right) = diag \left(\dotsb (\frac{1}{x_i} \times 0) + (w_i \times -\frac{1}{x_{i}^{2}}) \dotsb \right) = diag \left(\dotsb \frac{-w_i}{x_i^2} \dotsb \right) $$

Note:

$$ \frac{\partial (\frac{w_i}{x_i})}{\partial x_i} = w_i \times \frac{\partial (\frac{1}{x_i})}{\partial x_i} = w_i \times \frac{\partial x_{i}^{-1}}{\partial x_i} = w_i \times (-1) \times x_{i}^{-2} = \frac{-w_i}{x_{i}^{2}} $$
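These eight results can be checked numerically. In the following pure-Python sketch, each operation is differentiated with central differences once with respect to $ \pmb{w} $ (holding $ \pmb{x} $ fixed) and once with respect to $ \pmb{x} $ (holding $ \pmb{w} $ fixed), then compared with the closed forms $ I $, $ -I $, $ diag(\pmb{x}) $, $ diag(\pmb{w}) $, $ diag(\frac{1}{x_i}) $ and $ diag(\frac{-w_i}{x_i^2}) $. The helpers `jacobian`, `diag` and `close`, the test vectors and the tolerance are all illustrative assumptions.

```python
from typing import Callable, Sequence

Matrix = list[list[float]]


def jacobian(fn: Callable[[list[float]], list[float]], v: Sequence[float], h: float = 1e-6) -> Matrix:
    # Central-difference Jacobian in numerator layout (rows: outputs, columns: inputs)
    v = list(v)
    m, n = len(fn(v)), len(v)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        v_plus, v_minus = v.copy(), v.copy()
        v_plus[j] += h
        v_minus[j] -= h
        f_plus, f_minus = fn(v_plus), fn(v_minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J


def diag(d: Sequence[float]) -> Matrix:
    # Square matrix with d on the diagonal and zeros elsewhere
    return [[d[i] if i == j else 0.0 for j in range(len(d))] for i in range(len(d))]


def close(A: Matrix, B: Matrix, tol: float = 1e-5) -> bool:
    return all(abs(a - b) <= tol for ra, rb in zip(A, B) for a, b in zip(ra, rb))


w = [1.0, -2.0, 0.5]
x = [3.0, 4.0, -1.5]  # nonzero entries so that w / x is defined
n = len(w)

ops = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,  # Hadamard product
    "/": lambda a, b: a / b,
}

# Closed forms derived above: (Jacobian wrt w, Jacobian wrt x)
expected = {
    "+": (diag([1.0] * n), diag([1.0] * n)),
    "-": (diag([1.0] * n), diag([-1.0] * n)),
    "*": (diag(x), diag(w)),
    "/": (diag([1 / xi for xi in x]), diag([-wi / xi**2 for wi, xi in zip(w, x)])),
}

results = {}
for name, op in ops.items():
    J_w = jacobian(lambda v: [op(vi, xi) for vi, xi in zip(v, x)], w)  # vary w, x fixed
    J_x = jacobian(lambda v: [op(wi, vi) for wi, vi in zip(w, v)], x)  # vary x, w fixed
    results[name] = (close(J_w, expected[name][0]), close(J_x, expected[name][1]))

print(results)  # each operation should map to (True, True)
```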

Derivatives involving scalar expansion

To multiply or add scalars to vectors, we expand the scalar to a vector and perform an elementwise binary operation.

Given a scalar $ z $ and a vector $ \pmb{x} $, the Jacobian of $ \pmb{y} = \pmb{x} \bigcirc z $ with respect to $ \pmb{x} $ is:

$$ \frac{\partial \pmb{y}}{\partial \pmb{x}} = diag \left( \dotsb \frac{\partial}{\partial x_i}(f_i(x_i) \bigcirc g_i(z)) \dotsb \right) $$

where $ \pmb{g}(z) = \vec{1}z $ is a vector function of the appropriate length with $ g_i(z) = z $, and $ \pmb{f}(\pmb{x}) = \pmb{x} $ is a vector function with $ f_i(x_i) = x_i $.

This results in the following (based on the previous derivations for elementwise binary operations):

Addition wrt x

$$ \frac{\partial}{\partial \pmb{x}}(\pmb{x} + z) = I $$

since

$$ \frac{\partial}{\partial \pmb{x}}(\pmb{x} + z) = \frac{\partial}{\partial \pmb{x}}(\pmb{f}(\pmb{x}) + \pmb{g}(z)) = diag \left(\dotsb \frac{\partial (x_i + z)}{\partial x_i} \dotsb \right) = diag(\vec{1}) = I $$

For a given $ y_i = f_i(\pmb{x}) + g_i(z) = x_i + z $,

$$ \frac{\partial y_i}{\partial x_i} = \frac{\partial}{\partial x_{i}}(f_i(\pmb{x}) + g_i(z)) = \frac{\partial x_i}{\partial x_i} + \frac{\partial z}{\partial x_i} = 1 + 0 = 1 $$

and $ \frac{\partial}{\partial x_{i}}(f_j(\pmb{x}) + g_j(z)) = 0 + 0 = 0 $ for all $ i \ne j $.

Subtraction wrt x

$$ \frac{\partial}{\partial \pmb{x}}(\pmb{x} - z) = I $$

since

$$ \frac{\partial}{\partial \pmb{x}}(\pmb{x} - z) = \frac{\partial}{\partial \pmb{x}}(\pmb{f}(\pmb{x}) - \pmb{g}(z)) = diag \left(\dotsb \frac{\partial (x_i - z)}{\partial x_i} \dotsb \right) = diag(\vec{1}) = I $$

For a given $ y_i = f_i(\pmb{x}) - g_i(z) = x_i - z $,

$$ \frac{\partial y_i}{\partial x_i} = \frac{\partial}{\partial x_{i}}(f_i(\pmb{x}) - g_i(z)) = \frac{\partial x_i}{\partial x_i} - \frac{\partial z}{\partial x_i} = 1 - 0 = 1 $$

and $ \frac{\partial}{\partial x_{i}}(f_j(\pmb{x}) - g_j(z)) = 0 - 0 = 0 $ for all $ i \ne j $.

Multiplication wrt x

$$ \frac{\partial}{\partial \pmb{x}}(\pmb{x} \times z) = diag(\vec{1}z) = Iz $$

since

$$ \frac{\partial}{\partial \pmb{x}}(\pmb{x} \times z) = \frac{\partial}{\partial \pmb{x}}(\pmb{f}(\pmb{x}) \otimes \pmb{g}(z)) = diag \left( \dotsb \frac{\partial}{\partial x_{i}}(x_{i} \times z) \dotsb \right) = diag(\vec{1}z) = Iz $$

For $ y_i = x_i \times z $,

$$ \frac{\partial y_i}{\partial x_i} = \frac{\partial}{\partial x_{i}}(x_{i} \times z) = (z \times \frac{\partial x_{i}}{\partial x_{i}}) + (x_i \times \frac{\partial z}{\partial x_{i}}) = (z \times 1) + (x_i \times 0) = z $$

on the diagonal, and $ \frac{\partial y_j}{\partial x_i} = 0 $ for all $ j \ne i $, since $ \frac{\partial x_j}{\partial x_i} = 0 $ and $ \frac{\partial z}{\partial x_i} = 0 $.

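Division wrt x

$$ \frac{\partial}{\partial \pmb{x}}\left(\frac{\pmb{x}}{z}\right) = diag\left(\vec{1}\frac{1}{z}\right) = \frac{I}{z} $$

since dividing by $ z $ is the same as multiplying by the scalar $ \frac{1}{z} $:

$$ \frac{\partial}{\partial \pmb{x}}\left(\frac{\pmb{x}}{z}\right) = \frac{\partial}{\partial \pmb{x}}\left(\pmb{x} \times \frac{1}{z}\right) = diag\left(\vec{1}\frac{1}{z}\right) = \frac{I}{z} $$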
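A numerical sketch of these four results in pure Python: expanding the scalar $ z $ across $ \pmb{x} $ and differentiating with respect to $ \pmb{x} $ should give $ I $, $ I $, $ Iz $ and $ \frac{I}{z} $. The helper names (`jacobian`, `scaled_identity`, `close`) and the sample values of $ \pmb{x} $ and $ z $ are illustrative assumptions.

```python
from typing import Callable, Sequence

Matrix = list[list[float]]


def jacobian(fn: Callable[[list[float]], list[float]], x: Sequence[float], h: float = 1e-6) -> Matrix:
    # Central-difference Jacobian in numerator layout (rows: outputs, columns: inputs)
    x = list(x)
    m, n = len(fn(x)), len(x)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        x_plus, x_minus = x.copy(), x.copy()
        x_plus[j] += h
        x_minus[j] -= h
        f_plus, f_minus = fn(x_plus), fn(x_minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J


def scaled_identity(n: int, c: float) -> Matrix:
    # c * I, i.e. diag(1 * c)
    return [[c if i == j else 0.0 for j in range(n)] for i in range(n)]


def close(A: Matrix, B: Matrix, tol: float = 1e-5) -> bool:
    return all(abs(a - b) <= tol for ra, rb in zip(A, B) for a, b in zip(ra, rb))


x = [2.0, -1.0, 0.5]
z = 4.0
n = len(x)

# Scalar expansion: z is applied to every element of x
cases = {
    "x + z": (lambda v: [vi + z for vi in v], scaled_identity(n, 1.0)),
    "x - z": (lambda v: [vi - z for vi in v], scaled_identity(n, 1.0)),
    "x * z": (lambda v: [vi * z for vi in v], scaled_identity(n, z)),
    "x / z": (lambda v: [vi / z for vi in v], scaled_identity(n, 1 / z)),
}

results = {name: close(jacobian(fn, x), J) for name, (fn, J) in cases.items()}
print(results)  # every case should be True
```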

With respect to z

Taking the derivative with respect to the scalar $ z $, however, yields a vertical vector with one entry per element of $ \pmb{x} $, rather than an $ n \times n $ matrix.

Addition wrt z

$$ \frac{\partial}{\partial z}(\pmb{x} + z) = \begin{bmatrix} \frac{\partial}{\partial z}(x_1 + z) \\ \frac{\partial}{\partial z}(x_2 + z) \\ \vdots \\ \frac{\partial}{\partial z}(x_n + z) \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial z}x_1 + \frac{\partial z}{\partial z} \\ \frac{\partial}{\partial z}x_2 + \frac{\partial z}{\partial z} \\ \vdots \\ \frac{\partial}{\partial z}x_n + \frac{\partial z}{\partial z} \end{bmatrix} = \begin{bmatrix} 0 + 1 \\ 0 + 1 \\ \vdots \\ 0 + 1 \end{bmatrix} = \begin{bmatrix} 1 \\ 1 \\ \vdots \\ 1 \end{bmatrix} = \vec{1} $$

Subtraction wrt z

$$ \frac{\partial}{\partial z}(\pmb{x} - z) = \begin{bmatrix} \frac{\partial}{\partial z}(x_1 - z) \\ \frac{\partial}{\partial z}(x_2 - z) \\ \vdots \\ \frac{\partial}{\partial z}(x_n - z) \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial z}x_1 - \frac{\partial z}{\partial z} \\ \frac{\partial}{\partial z}x_2 - \frac{\partial z}{\partial z} \\ \vdots \\ \frac{\partial}{\partial z}x_n - \frac{\partial z}{\partial z} \end{bmatrix} = \begin{bmatrix} 0 - 1 \\ 0 - 1 \\ \vdots \\ 0 - 1 \end{bmatrix} = \begin{bmatrix} -1 \\ -1 \\ \vdots \\ -1 \end{bmatrix} = -\vec{1} $$

Multiplication wrt z

$$ \frac{\partial}{\partial z}(\pmb{x} \times z) = \begin{bmatrix} \frac{\partial}{\partial z}(x_1 \times z) \\ \frac{\partial}{\partial z}(x_2 \times z) \\ \vdots \\ \frac{\partial}{\partial z}(x_i \times z) \\ \vdots \\ \frac{\partial}{\partial z}(x_n \times z) \end{bmatrix} = \pmb{x} $$

since

$$ \frac{\partial}{\partial z}(x_i \times z) = (x_i \times \frac{\partial z}{\partial z}) + (z \times \frac{\partial x_i}{\partial z}) = (x_i \times 1) + (z \times 0) = x_i $$

Division wrt z

$$ \frac{\partial}{\partial z}\left(\frac{\pmb{x}}{z}\right) = \frac{\partial}{\partial z}\left(\pmb{x} \times \frac{1}{z}\right) = \begin{bmatrix} \frac{\partial}{\partial z}(x_1 \times \frac{1}{z}) \\ \frac{\partial}{\partial z}(x_2 \times \frac{1}{z}) \\ \vdots \\ \frac{\partial}{\partial z}(x_i \times \frac{1}{z}) \\ \vdots \\ \frac{\partial}{\partial z}(x_n \times \frac{1}{z}) \end{bmatrix} = \pmb{x}\frac{-1}{z^2} = \frac{-\pmb{x}}{z^2} $$

since

$$ \frac{\partial}{\partial z}(x_i \times \frac{1}{z}) = (x_i \times \frac{\partial}{\partial z}\frac{1}{z}) + (\frac{1}{z} \times \frac{\partial x_i}{\partial z}) = (x_i \times \frac{-1}{z^2}) + (\frac{1}{z} \times 0) = \frac{-x_i}{z^2} $$
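With respect to the scalar $ z $, each result is a vector with one entry per element of $ \pmb{x} $. The pure-Python sketch below estimates each entry with a central difference in $ z $ and compares it with $ \vec{1} $, $ -\vec{1} $, $ \pmb{x} $ and $ \frac{-\pmb{x}}{z^2} $. The helper `d_dz`, the step size and the sample values are illustrative assumptions.

```python
from typing import Callable


def d_dz(fn: Callable[[list[float], float], list[float]], x: list[float], z: float, h: float = 1e-6) -> list[float]:
    # Central difference in the scalar z, one entry per output element
    f_plus, f_minus = fn(x, z + h), fn(x, z - h)
    return [(p - m) / (2 * h) for p, m in zip(f_plus, f_minus)]


x = [2.0, -1.0, 0.5]
z = 4.0

# Each case: (vector function of x and z, expected derivative wrt z)
cases = {
    "x + z": (lambda v, s: [vi + s for vi in v], [1.0] * len(x)),
    "x - z": (lambda v, s: [vi - s for vi in v], [-1.0] * len(x)),
    "x * z": (lambda v, s: [vi * s for vi in v], list(x)),
    "x / z": (lambda v, s: [vi / s for vi in v], [-xi / z**2 for xi in x]),
}

results = {
    name: all(abs(a - b) < 1e-5 for a, b in zip(d_dz(fn, x, z), expected))
    for name, (fn, expected) in cases.items()
}
print(results)  # every case should be True
```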

Vector Sum Reduction

Let $ y = sum(\pmb{f}(\pmb{x})) = \sum_{i=1}^{n}f_i(\pmb{x}) $, where each function $ f_i $ could use all values in the vector, not just $ x_i $. The sum is over the results of the function, not the parameter. The gradient ($ 1 \times n $ Jacobian) of vector summation is:

$$ \frac{\partial y}{\partial \pmb{x}} = \begin{bmatrix} \frac{\partial y}{\partial x_1}, \frac{\partial y}{\partial x_2}, \dotsb , \frac{\partial y}{\partial x_n} \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial x_1}\sum_i f_i(\pmb{x}), \frac{\partial}{\partial x_2} \sum_i f_i(\pmb{x}), \dotsb , \frac{\partial}{\partial x_n}\sum_i f_i(\pmb{x}) \end{bmatrix} = \begin{bmatrix} \sum_i \frac{\partial}{\partial x_1}f_i(\pmb{x}), \sum_i \frac{\partial}{\partial x_2} f_i(\pmb{x}), \dotsb , \sum_i \frac{\partial}{\partial x_n}f_i(\pmb{x}) \end{bmatrix} $$

For example, given $ y = sum(\pmb{x}) $, the function is $ f_i(\pmb{x}) = x_i $ and the gradient is:

$$ \nabla y = \begin{bmatrix} \sum_i \frac{\partial f_i(\pmb{x})}{\partial x_1}, \sum_i \frac{\partial f_i(\pmb{x})}{\partial x_2}, \dotsb , \sum_i \frac{\partial f_i(\pmb{x})}{\partial x_n} \end{bmatrix} = \begin{bmatrix} \sum_i \frac{\partial x_i}{\partial x_1}, \sum_i \frac{\partial x_i}{\partial x_2}, \dotsb , \sum_i \frac{\partial x_i}{\partial x_n} \end{bmatrix} = \begin{bmatrix} \frac{\partial x_1}{\partial x_1}, \frac{\partial x_2}{\partial x_2}, \dotsb , \frac{\partial x_n}{\partial x_n} \end{bmatrix} = \begin{bmatrix} 1, 1, \dotsb , 1 \end{bmatrix} = \vec{1}^T $$

since $ \frac{\partial}{\partial x_j}x_i = 0 $ for $ i \ne j $.

As another example, given $ y = sum(\pmb{x}z) $, then $ f_{i}(\pmb{x},z) = x_{i}z $. The gradient with respect to $ \pmb{x} $ is:

$$ \frac{\partial y}{\partial \pmb{x}} = \begin{bmatrix} \sum_i\frac{\partial}{\partial x_1}x_{i}z, \sum_i\frac{\partial}{\partial x_2}x_{i}z, \dotsb, \sum_i\frac{\partial}{\partial x_n}x_{i}z \end{bmatrix} = \begin{bmatrix} \frac{\partial}{\partial x_1}x_{1}z, \frac{\partial}{\partial x_2}x_{2}z, \dotsb, \frac{\partial}{\partial x_n}x_{n}z \end{bmatrix} = \left[ z, z, \dotsb , z \right] = \vec{z}^T $$

where $ \vec{z} = \vec{1}z $ and $ \vert \pmb{x} \vert = \vert \vec{z} \vert = n $.

With respect to the scalar $ z $, the result is a $ 1 \times 1 $ Jacobian (a scalar):

$$ \frac{\partial y}{\partial z} = \frac{\partial}{\partial z}\sum_{i=1}^{n}x_{i}z = \sum_i \frac{\partial}{\partial z} x_{i}z = \sum_i x_i = sum(\pmb{x}) $$
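Both gradients of $ y = sum(\pmb{x}z) $ can be checked with a short pure-Python sketch: the gradient with respect to $ \pmb{x} $ should be $ [z, z, \dotsb, z] $, and the derivative with respect to $ z $ should be $ sum(\pmb{x}) $. The helper names and sample values are illustrative.

```python
def y(x: list[float], z: float) -> float:
    # y = sum(x * z), with the scalar z expanded across x
    return sum(xi * z for xi in x)


def grad_x(x: list[float], z: float, h: float = 1e-6) -> list[float]:
    # Central difference in each x_j, holding z fixed
    grads = []
    for j in range(len(x)):
        x_plus, x_minus = x.copy(), x.copy()
        x_plus[j] += h
        x_minus[j] -= h
        grads.append((y(x_plus, z) - y(x_minus, z)) / (2 * h))
    return grads


def d_dz(x: list[float], z: float, h: float = 1e-6) -> float:
    # Central difference in the scalar z, holding x fixed
    return (y(x, z + h) - y(x, z - h)) / (2 * h)


if __name__ == "__main__":
    x, z = [1.0, -2.0, 3.5], 0.75
    print(grad_x(x, z))        # approximately [0.75, 0.75, 0.75]
    print(d_dz(x, z), sum(x))  # both approximately 2.5
```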

Vector Chain Rules

Three types of chain rules:

- single-variable chain rule - derivative of a scalar function with respect to a scalar

- single-variable total-derivative chain rule - derivative of a scalar function with respect to a scalar, but with "many paths" from the variable to the output

- vector chain rule - derivative of a vector function with respect to a vector (the general case)

Single Variable Chain Rule

Given $ y = f(g(x)) $, the scalar chain rule can be expressed by defining an intermediate variable $ u $ such that

$$ y = f(u) \quad \text{and} \quad u = g(x) $$

The derivative is then

$$ \frac{dy}{dx} = \frac{dy}{du}\frac{du}{dx} $$

As an example, given $ y = \sin(x^2) $ and letting $ u = x^2 $:

$$ \frac{dy}{dx} = \frac{d}{du}\sin(u) \times \frac{d}{dx}(x^2) = \cos(u) \times 2x = 2x\cos(x^2) $$
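The same steps in code, as a minimal pure-Python sketch (function names are illustrative): compute the intermediate variable $ u = x^2 $, multiply the local derivatives $ \frac{dy}{du} = \cos(u) $ and $ \frac{du}{dx} = 2x $, and compare with a central finite-difference estimate of $ \frac{dy}{dx} $.

```python
import math


def y(x: float) -> float:
    return math.sin(x ** 2)


def dy_dx_chain(x: float) -> float:
    u = x ** 2            # intermediate variable u = g(x)
    dy_du = math.cos(u)   # d/du sin(u) = cos(u)
    du_dx = 2 * x         # d/dx x^2 = 2x
    return dy_du * du_dx  # chain rule: dy/dx = dy/du * du/dx


def dy_dx_numeric(x: float, h: float = 1e-6) -> float:
    # Central finite difference for comparison
    return (y(x + h) - y(x - h)) / (2 * h)


if __name__ == "__main__":
    for x in (0.3, 1.0, 2.2):
        print(x, dy_dx_chain(x), dy_dx_numeric(x))
```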

Another example:

Given $ y = f(x) = \ln(\sin(x^3)^2) $, let:

$$ \begin{aligned} u_1 &= f_1(x) = x^3 \\ u_2 &= f_2(u_1) = \sin(u_1) \\ u_3 &= f_3(u_2) = u_2^2 \\ u_4 &= f_4(u_3) = \ln(u_3) \\ y &= u_4 \end{aligned} $$

so that the individual derivatives are:

$$ \begin{aligned} \frac{du_1}{dx} &= \frac{d}{dx}x^3 = 3x^2 \\ \frac{du_2}{du_1} &= \frac{d}{du_1}\sin(u_1) = \cos(u_1) \\ \frac{du_3}{du_2} &= \frac{d}{du_2}u_2^2 = 2u_2 \\ \frac{du_4}{du_3} &= \frac{d}{du_3}\ln(u_3) = \frac{1}{u_3} \\ \frac{dy}{du_4} &= \frac{du_4}{du_4} = 1 \end{aligned} $$

Combining the intermediate derivatives:

$$ \frac{dy}{dx} = \frac{dy}{du_4}\frac{du_4}{du_3}\frac{du_3}{du_2}\frac{du_2}{du_1}\frac{du_1}{dx} = 1 \times \frac{1}{u_3} \times 2u_2 \times \cos(u_1) \times 3x^2 = \frac{6u_2x^2\cos(u_1)}{u_3} $$

Substituting back $ u_1 = x^3 $, $ u_2 = \sin(x^3) $ and $ u_3 = \sin(x^3)^2 $ results in:

$$ \frac{dy}{dx} = \frac{6\sin(x^3)x^2\cos(x^3)}{\sin(x^3)^2} = \frac{6x^2\cos(x^3)}{\sin(x^3)} $$
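The chain can also be evaluated mechanically, in the spirit of forward-mode automatic differentiation. In this pure-Python sketch (names are illustrative), the forward pass computes $ u_1 $ to $ u_4 $, multiplies the local derivatives listed above, and the product is compared with the simplified closed form $ \frac{6x^2\cos(x^3)}{\sin(x^3)} $, which is only defined where $ \sin(x^3) \ne 0 $.

```python
import math


def forward_with_derivative(x: float) -> tuple[float, float]:
    """Evaluate y = ln(sin(x^3)^2) and dy/dx by chaining local derivatives."""
    # Forward pass through the intermediate variables
    u1 = x ** 3
    u2 = math.sin(u1)
    u3 = u2 ** 2
    u4 = math.log(u3)
    y = u4
    # Local derivative of each step
    du1_dx = 3 * x ** 2
    du2_du1 = math.cos(u1)
    du3_du2 = 2 * u2
    du4_du3 = 1 / u3
    dy_du4 = 1.0
    # Chain rule: multiply the local derivatives along the chain
    dy_dx = dy_du4 * du4_du3 * du3_du2 * du2_du1 * du1_dx
    return y, dy_dx


def closed_form(x: float) -> float:
    # 6 x^2 cos(x^3) / sin(x^3), valid where sin(x^3) != 0
    return 6 * x ** 2 * math.cos(x ** 3) / math.sin(x ** 3)


if __name__ == "__main__":
    x = 0.9
    _, chained = forward_with_derivative(x)
    print(chained, closed_form(x))
```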

Single variable total derivative chain rule

$$ \frac{\partial f(u_1,\dotsb,u_{n+1})}{\partial x} = \sum_{i=1}^{n+1}\frac{\partial f}{\partial u_i}\frac{\partial u_i}{\partial x} $$

where $ x $ itself is treated as the last intermediate variable, $ u_{n+1} = x $.

As an example, given $ y = f(x) = x + x^2 $, we can restate the function as:

$$ \begin{aligned} u_1(x) &= x^2 \\ u_2(x,u_1) &= x + u_1 \\ y &= f(x) = u_2(x,u_1) \end{aligned} $$

Applying the total derivative rule:

$$ \frac{dy}{dx} = \frac{\partial f(x)}{\partial x} = \frac{\partial u_2(x,u_1)}{\partial x} = \frac{\partial u_2}{\partial x}\frac{\partial x}{\partial x} + \frac{\partial u_2}{\partial u_1}\frac{\partial u_1}{\partial x} = 1 \times 1 + 1 \times 2x = 1 + 2x $$
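Summing over both paths from $ x $ to $ y $ in code, as a pure-Python sketch (names are illustrative): the direct path contributes $ \frac{\partial u_2}{\partial x}\frac{\partial x}{\partial x} = 1 $ and the path through $ u_1 $ contributes $ \frac{\partial u_2}{\partial u_1}\frac{\partial u_1}{\partial x} = 2x $. Their sum should match a finite-difference estimate of the derivative of $ x + x^2 $.

```python
def f(x: float) -> float:
    # y = x + x^2
    return x + x ** 2


def total_derivative(x: float) -> float:
    # Partial derivatives of u2(x, u1) = x + u1, with u1 = x^2
    du2_dx = 1.0   # direct path: x appears in u2 itself
    dx_dx = 1.0
    du2_du1 = 1.0  # indirect path through u1
    du1_dx = 2 * x
    # Total derivative: sum the contributions of every path from x to y
    return du2_dx * dx_dx + du2_du1 * du1_dx


def numeric(x: float, h: float = 1e-6) -> float:
    # Central finite difference for comparison
    return (f(x + h) - f(x - h)) / (2 * h)


if __name__ == "__main__":
    for x in (-1.0, 0.5, 3.0):
        print(x, total_derivative(x), numeric(x))
```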

Questions and Exercises

Compute the Jacobian of each of the following functions $ \pmb{f}(\pmb{w}) $:

1. $ f_{i}(\pmb{w}) = w_{i}^{2} $

2. $ f_{i}(\pmb{w}) = w_{i}^{i} $

3. $ f_{i}(\pmb{w}) = \sum_{j=1}^{m} w_{j}^{i} $, where $ n = m = \vert \pmb{w} \vert $

For the third function, the diagonal entries are:

$$ \frac{\partial}{\partial w_i} \sum_{j=1}^{n} w_{j}^{i} = \frac{\partial}{\partial w_i} \left( w_{1}^{i} + w_{2}^{i} + \dots + w_{i}^{i} + \dots + w_{n}^{i} \right) = \frac{\partial}{\partial w_i}w_{1}^{i} + \frac{\partial}{\partial w_i}w_{2}^{i} + \dots + \frac{\partial}{\partial w_i}w_{i}^{i} + \dots + \frac{\partial}{\partial w_i}w_{n}^{i} = 0 + 0 + \dots + iw_{i}^{i-1} + \dots + 0 = iw_{i}^{i-1} $$

Unlike the first two functions, this Jacobian is not diagonal: $ f_i $ uses every element of $ \pmb{w} $, so the off-diagonal entries are $ \frac{\partial f_i}{\partial w_j} = iw_{j}^{i-1} $ for $ j \ne i $.
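A numerical sketch for checking answers to these exercises, in pure Python. The helpers and the test vector are illustrative, and Python's 0-based index `k` corresponds to $ i = k + 1 $. The expected Jacobians are $ diag(2w_i) $, $ diag(iw_i^{i-1}) $ and, for the third function, the dense matrix with entries $ iw_j^{i-1} $.

```python
from typing import Callable, Sequence

Matrix = list[list[float]]


def jacobian(fn: Callable[[list[float]], list[float]], w: Sequence[float], h: float = 1e-6) -> Matrix:
    # Central-difference Jacobian in numerator layout (rows: outputs, columns: inputs)
    w = list(w)
    m, n = len(fn(w)), len(w)
    J = [[0.0] * n for _ in range(m)]
    for j in range(n):
        w_plus, w_minus = w.copy(), w.copy()
        w_plus[j] += h
        w_minus[j] -= h
        f_plus, f_minus = fn(w_plus), fn(w_minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J


def close(A: Matrix, B: Matrix, tol: float = 1e-5) -> bool:
    return all(abs(a - b) <= tol for ra, rb in zip(A, B) for a, b in zip(ra, rb))


# Python index k is 0-based, so the exponent i from the exercises is k + 1.
def ex1(w: list[float]) -> list[float]:
    return [wk ** 2 for wk in w]  # f_i(w) = w_i^2


def ex2(w: list[float]) -> list[float]:
    return [wk ** (k + 1) for k, wk in enumerate(w)]  # f_i(w) = w_i^i


def ex3(w: list[float]) -> list[float]:
    return [sum(wj ** (k + 1) for wj in w) for k in range(len(w))]  # f_i(w) = sum_j w_j^i


def expected_jacobians(w: list[float]) -> tuple[Matrix, Matrix, Matrix]:
    n = len(w)
    J1 = [[2 * w[k] if k == j else 0.0 for j in range(n)] for k in range(n)]             # diag(2 w_i)
    J2 = [[(k + 1) * w[k] ** k if k == j else 0.0 for j in range(n)] for k in range(n)]  # diag(i w_i^(i-1))
    J3 = [[(k + 1) * w[j] ** k for j in range(n)] for k in range(n)]                     # dense: i w_j^(i-1)
    return J1, J2, J3


if __name__ == "__main__":
    w = [1.5, -0.5, 2.0]
    for fn, J in zip((ex1, ex2, ex3), expected_jacobians(w)):
        print(fn.__name__, close(jacobian(fn, w), J))
```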