HTML("""

<style>

.rendered_html table, .rendered_html th, .rendered_html tr, .rendered_html td {

font-size: 100%;

}

</style>

""")

using PyPlot, PyCall

using AIBECS, WorldOceanAtlasTools

using LinearAlgebra

The F-1 algorithm

Benoît Pasquier and François Primeau

University of California, Irvine

Paper: The F-1 algorithm for efficient computation of the Hessian matrix of an objective function defined implicitly by the solution of a steady-state problem (under review)

Code: F1Method.jl (Julia package — check it out on GitHub!)

$\newcommand{\state}{\boldsymbol{x}}$ $\newcommand{\sol}{\boldsymbol{s}}$ $\newcommand{\params}{\boldsymbol{p}}$ $\newcommand{\lambdas}{\boldsymbol{\lambda}}$ $\newcommand{\statefun}{\boldsymbol{F}}$ $\newcommand{\cost}{f}$ $\newcommand{\objective}{\hat{f}}$ $\DeclareMathOperator*{\minimize}{minimize}$ $\newcommand{\vece}{\boldsymbol{e}}$ $\newcommand{\matI}{\mathbf{I}}$ $\newcommand{\matA}{\mathbf{A}}$

F-1 algorithm – Outline¶

- Motivation & Context

- Autodifferentiation

- What is the F-1 algorithm?

- Benchmarks

As we go through, I will demo some Julia code!

As we go through, I will demo some Julia code!

Motivation¶

For parameter optimization and parameter sensitivity estimation!

The AIBECS for building global marine steady-state biogeochemistry models in just a few commands (yesterday's CCRC seminar)

And the F-1 algorithm to facilitate optimization of biogeochemical parameters.

But the context of the F-1 algorithm is more general...

Context¶

Steady-state equation

$$\frac{\partial \state}{\partial t} = \statefun(\state,\params) = 0$$

for some state $\state$ (size $n \sim 400\,000$) and parameters $\params$ (size $m \sim 10$).

Constrained optimization problem

$$\left\{\begin{aligned} \minimize_{\boldsymbol{x}, \boldsymbol{p}} & & \cost(\state, \params) \\ \textrm{subject to} & & \statefun(\state, \params) = 0 \end{aligned}\right.$$function constrained_optimization_plot()

figure(figsize=(10,6))

f(x,p) = (x - 1)^2 + (p - 1)^2 + 1

s(p) = 1 + 0.9atan(p - 0.3)

xs, ps = -1.2:0.1:3, -1.2:0.1:3.2

plt = plot_surface(xs, ps, [f(x,p) for p in ps, x in xs], alpha = 0.5, cmap=:viridis_r)

gca(projection="3d")

P = repeat(reshape(ps, length(ps), 1), outer = [1, length(xs)])

X = repeat(reshape(xs, 1, length(xs)), outer = [length(ps), 1])

contour(X, P, [f(x,p) for p in ps, x in xs], levels = 2:6, colors="black", alpha=0.5, linewidths=0.5)

plot([s(p) for p in ps], ps)

legend(("\$F(x,p) = 0\$",))

xlabel("state \$x\$"); ylabel("parameters \$p\$"); zlabel("objective \$f(x,p)\$")

xticks(unique(round.(xs))); yticks(unique(round.(ps))); zticks(2:6)

return plt, f, s, xs, ps

end

constrained_optimization_plot()

Unconstrained optimization along the manifold of steady-state solutions.

$$\minimize_\params \objective(\params)$$

where $$\objective(\params) \equiv \cost\big(\sol(\params), \params\big)$$ is the objective function.

And $\sol(\params)$ is the steady-state solution for parameters $\params$, i.e., such that

$$\statefun\left(\sol(\params),\params\right) = 0$$function unconstrained_optimization_plot()

plt, f, s, xs, ps = constrained_optimization_plot()

plot([s(p) for p in ps], ps, [f(s(p),p) for p in ps], color="black", linewidth=3)

plot(maximum(xs) * ones(length(ps)), ps, [f(s(p),p) for p in ps], color="red")

legend(("\$x=s(p) \\Longleftrightarrow F(x,p)=0\$","\$f(x,p)\$ with \$x = s(p)\$", "\$\\hat{f}(p) = f(s(p),p)\$"))

for p in ps[1:22:end]

plot(s(p) * [1,1], p * [1,1], f(s(p),p) * [0,1], color="black", alpha = 0.3, linestyle="--")

plot([s(p), maximum(xs)], p * [1,1], f(s(p),p) * [1,1], color="black", alpha = 0.3, linestyle="--", marker="o")

end

return plt

end

unconstrained_optimization_plot()

Two nested iterative algorithms

Inner solver with Newton step

$$\Delta \state \equiv - \left[\nabla_\state \statefun(\state,\params)\right]^{-1} \statefun(\state,\params)$$Outer Optimizer with Newton step

$$\Delta\params \equiv - \left[\nabla^2\objective(\params)\right]^{-1}\nabla \objective(\params)$$requires the Hessian of the objective function,

$$\nabla^2 \objective(\params)$$

Autodifferentiation¶

Take the Taylor expansion of $\objective(\params)$ in the $h\vece_j$ direction for a given $h$:

$$\objective(\params + h \vece_j) = \objective(\params) + h \underbrace{\nabla\objective(\params) \, \vece_j}_{?} + \frac{h^2}{2} \, \left[\vece_j^\mathsf{T} \, \nabla^2\objective(\params) \, \vece_j\right] + \ldots$$A standard solution is to use Finite differences:

$$\nabla\objective(\params) \, \vece_j = \frac{\objective(\params + h \vece_j) - \objective(\params)}{h} + \mathcal{O}(h)$$

But truncation and round-off errors!

A better solution is to use Complex numbers!

Taylor-expand in the $ih\vece_j$ direction:

$$\objective(\params + i h \vece_j)

= \objective(\params)

+ i h \underbrace{\nabla\objective(\params) \, \vece_j}_{?}

- \frac{h^2}{2} \, \left[\vece_j^\mathsf{T} \, \nabla^2\objective(\params) \, \vece_j\right]

+ \ldots$$

Because when taking the imaginary part, the convergence is faster and there are no more round-off errors:

$$\nabla\objective(\params) \, \vece_j = \Im\left[\frac{\objective(\params + i h \vece_j)}{h}\right] + \mathcal{O}(h^2)$$

𝑓(x) = cos(x^2) + exp(x)

∇𝑓(x) = -2x * sin(x^2) + exp(x)

finite_differences(f, x, h) = (f(x + h) - f(x)) / h

centered_differences(f, x, h) = (f(x + h) - f(x - h)) / 2h

complex_step_method(f, x, h) = imag(f(x + im * h)) / h

relative_error(m, f, ∇f, x, h) = Float64(abs(BigFloat(m(f, x, h)) - ∇f(BigFloat(x))) / abs(∇f(x)))

x, step_sizes = 2.0, 10 .^ (-20:0.02:0)

numerical_schemes = [finite_differences, centered_differences, complex_step_method]

plot(step_sizes, [relative_error(m, 𝑓, ∇𝑓, x, h) for h in step_sizes, m in numerical_schemes])

loglog(), legend(string.(numerical_schemes)), xlabel("step size, \$h\$"), ylabel("Relative Error, \$\\frac{|\\bullet - \\nabla f(x)|}{|\\nabla f(x)|}\$")

title("There are better alternatives to finite differences")

An even better solution is to use Dual numbers!

Define $\varepsilon \ne 0$ s.t. $\varepsilon^2 = 0$, then the complete Taylor expansion in the $\varepsilon \vece_j$ direction is

$$\objective(\params + \varepsilon \vece_j) = \objective(\params) + \varepsilon \underbrace{\nabla\objective(\params) \, \vece_j}_{?}$$Hence, 1st derivatives are given exactly by

$$\nabla\objective(\params) \, \vece_j = \mathfrak{D}\left[\objective(\params + \varepsilon \vece_j)\right]$$

where $\mathfrak{D}$ is the dual part (the $\varepsilon$ coefficient).

The dual number $\varepsilon$ behaves like an infinitesimal and it gives very accurate derivatives!

using DualNumbers

dual_step_method(f, x, h) = dualpart(f(x + ε))

push!(numerical_schemes, dual_step_method)

plot(step_sizes, [relative_error(m, 𝑓, ∇𝑓, x, h) for h in step_sizes, m in numerical_schemes])

loglog(), legend(string.(numerical_schemes)), xlabel("step size, \$h\$"), ylabel("Relative Error, \$\\frac{|\\bullet - \\nabla f(x)|}{|\\nabla f(x)|}\$")

title("There are even better alternatives to complex-step differentiation")

Just like complex identify with $\mathbb{R}[X]/(X^2+1)$,

dual numbers identify with $\mathbb{R}[X]/(X^2)$

The gradient of the objective function can be computed in $m$ dual evaluations of the objective function, via

$$\nabla\objective(\params) = \mathfrak{D} \left( \left[\begin{matrix} \objective(\params + \varepsilon \vece_1) \\ \objective(\params + \varepsilon \vece_2) \\ \vdots \\ \objective(\params + \varepsilon \vece_{m}) \end{matrix} \right]^\mathsf{T} \right)$$

where $m$ is the number of parameters.

For second derivatives, we can use hyperdual numbers.

Let $\varepsilon_1$ and $\varepsilon_2$ be the hyperdual units defined by $\varepsilon_1^2 = \varepsilon_2^2 = 0$ but $\varepsilon_1 \varepsilon_2 \ne 0$.

Let $\params_{jk} = \params + \varepsilon_1 \vece_j + \varepsilon_2 \vece_k$, for which the Taylor expansion of $\objective$ is

$$\objective(\params_{jk}) = \objective(\params) + \varepsilon_1 \nabla\objective(\params) \vece_j + \varepsilon_2 \nabla\objective(\params) \vece_k + \varepsilon_1 \varepsilon_2 \underbrace{\vece_j^\mathsf{T} \nabla^2\objective(\params) \vece_k}_{?}$$Taking the "hyperdual" part gives the second derivative:

$$\vece_j^\mathsf{T} \nabla^2\objective(\params) \vece_k = \mathfrak{H}\left[\objective(\params_{jk})\right]$$

where $\mathfrak{H}$ stands for hyperdual part (the $\varepsilon_1 \varepsilon_2$ coefficient).

Hyperdual steps also give very accurate derivatives!

∇²𝑓(x) = -2sin(x^2) - 4x^2 * cos(x^2) + exp(x)

using HyperDualNumbers

finite_differences_2(f, x, h) = (f(x + h) - 2f(x) + f(x - h)) / h^2

hyperdual_step_method(f, x, h) = ε₁ε₂part(f(x + ε₁ + ε₂))

numerical_schemes_2 = [finite_differences_2, hyperdual_step_method]

plot(step_sizes, [relative_error(m, 𝑓, ∇²𝑓, x, h) for h in step_sizes, m in numerical_schemes_2])

loglog(), legend(string.(numerical_schemes_2)), xlabel("step size, \$h\$"), ylabel("Relative Error, \$\\frac{|\\bullet - \\nabla f(x)|}{|\\nabla f(x)|}\$")

title("HyperDual Numbers for second derivatives")

Autodifferentiating through an iterative solver is expensive¶

The Hessian of $\objective$ can be computed in $\frac{m(m+1)}{2}$ hyperdual evaluations:

$$\nabla^2\objective(\params) = \mathfrak{H} \left( \left[\begin{matrix} \objective(\params_{11}) & \objective(\params_{12}) & \cdots & \objective(\params_{1m}) \\ \objective(\params_{12}) & \objective(\params_{22}) & \cdots & \objective(\params_{2m}) \\ \vdots & \vdots & \ddots & \vdots \\ \objective(\params_{1m}) & \objective(\params_{2m}) & \cdots & \objective(\params_{mm}) \end{matrix} \right] \right)$$

But this requires $\frac{m(m+1)}{2}$ calls to the inner solver, which requires hyperdual factorizations, and fine-tuned non-real tolerances!

What is the F-1 algorithm¶

What does it do?¶

The F-1 algorithm allows you to calculate the gradient and Hessian of an objective function, $\objective(\params)$, defined implicitly by the solution of a steady-state problem.

How does it work?¶

It leverages analytical shortcuts, combined with dual and hyperdual numbers, to avoid calls to the inner solver and unnecessary factorizations.

Analytical gradient¶

Differentiate the objective function $\objective(\params) = \cost\left(\sol(\params), \params\right)$:

$$\color{forestgreen}{\underbrace{\nabla\objective(\params)}_{1 \times m}}

= \color{royalblue}{\underbrace{\nabla_\state\cost(\sol, \params)_{\vphantom{\params}}}_{1 \times n}} \,

\color{red}{\underbrace{\nabla \sol(\params)_{\vphantom{\params}}}_{n \times m}}

+ \color{DarkOrchid}{\underbrace{\nabla_\params \cost(\sol, \params)}_{1 \times m}}$$

$$\color{forestgreen}{\underbrace{\nabla\objective(\params)}_{1 \times m}}

= \color{royalblue}{\underbrace{\nabla_\state\cost(\sol, \params)_{\vphantom{\params}}}_{1 \times n}} \,

\color{red}{\underbrace{\nabla \sol(\params)_{\vphantom{\params}}}_{n \times m}}

+ \color{DarkOrchid}{\underbrace{\nabla_\params \cost(\sol, \params)}_{1 \times m}}$$

Differentiate the steady-state equation, $\statefun\left(\sol(\params),\params\right)=0$:

$$\color{royalblue}{\underbrace{\matA_{\vphantom{\params}}}_{n \times n}} \,

\color{red}{\underbrace{\nabla\sol(\params)_{\vphantom{\params}}}_{n \times m}}

+ \color{forestgreen}{\underbrace{\nabla_\params \statefun(\sol, \params)}_{n\times m}} = 0$$

$$\color{royalblue}{\underbrace{\matA_{\vphantom{\params}}}_{n \times n}} \,

\color{red}{\underbrace{\nabla\sol(\params)_{\vphantom{\params}}}_{n \times m}}

+ \color{forestgreen}{\underbrace{\nabla_\params \statefun(\sol, \params)}_{n\times m}} = 0$$where $\matA \equiv \nabla_\state\statefun(\sol,\params)$ is the Jacobian of the steady-state system (a large, sparse matrix)

Analytical Hessian¶

Differentiate $\objective(\params) = \cost\left(\sol(\params), \params\right)$ twice:

$$\begin{split} \nabla^2 \objective(\params) &= \nabla_{\state\state}\cost(\sol, \params) \, (\nabla\sol \otimes \nabla\sol) + \nabla_{\state\params}\cost(\sol, \params) \, (\nabla\sol \otimes \matI_\params) \\ &\quad+ \nabla_{\params\state}\cost(\sol, \params) \, (\matI_\params \otimes \nabla\sol) + \nabla_{\params\params}\cost(\sol, \params) \, (\matI_\params \otimes \matI_\params) \\ &\quad+ \nabla_\state\cost(\sol, \params) \, \nabla^2\sol, \end{split}$$Differentiate the steady-state equation, $\statefun\left(\sol(\params),\params\right)=0$, twice:

$$\begin{split} 0 & = \nabla_{\state\state}\statefun(\sol, \params) \, (\nabla\sol \otimes \nabla\sol) + \nabla_{\state\params}\statefun(\sol, \params) \, (\nabla\sol \otimes \matI_\params)\\ & \quad + \nabla_{\params\state}\statefun(\sol, \params) \, (\matI_\params \otimes \nabla\sol) + \nabla_{\params\params}\statefun(\sol, \params) \, (\matI_\params \otimes \matI_\params) \\ & \quad + \matA \, \nabla^2\sol. \end{split}$$(Tensor notation of Manton [2012])

F-1 Gradient and Hessian¶

Find the steady state solution $\sol(\params)$

Factorize the Jacobian $\matA = \nabla_\state \statefun\left(\sol(\params), \params\right)$ ($1$ factorization)

Compute $\nabla\sol(\params) = -\matA^{-1} \nabla_\params \statefun(\sol, \params)$ ($m$ forward and back substitutions)

Compute the gradient

$$\nabla\objective(\params) = \nabla_\state\cost(\sol, \params) , \nabla \sol(\params)

- \nabla_\params \cost(\sol, \params)$$

Compute the Hessian ($1$ forward and back substitution)

$$[\nabla^2\objective(\params)]{jk} = \mathfrak{H}\big[\cost(\state{jk}, \params_{jk})\big]

- \mathfrak{H}\big[\statefun(\state_{jk}, \params_{jk})^\mathsf{T}\big]

, \matA^{-\mathsf{T}} ,\nabla_\state\cost(\sol,\params)^\mathsf{T}$$

where $\state_{jk} \equiv \sol + \varepsilon_1 \nabla\sol \, \vece_j +\varepsilon_2 \nabla\sol \, \vece_k$

Demo¶

Let us first quickly build a model using the AIBECS

This will create $\statefun(\state,\params)$ and $\cost(\state,\params)$ and some derivatives, such that we have an expensive objective function, $\objective(\params)$, defined implicitly by the solution $\sol(\params)$.

Below I will skip the details of the model but I will run the code that generates F, ∇ₓF, f, and ∇ₓf.

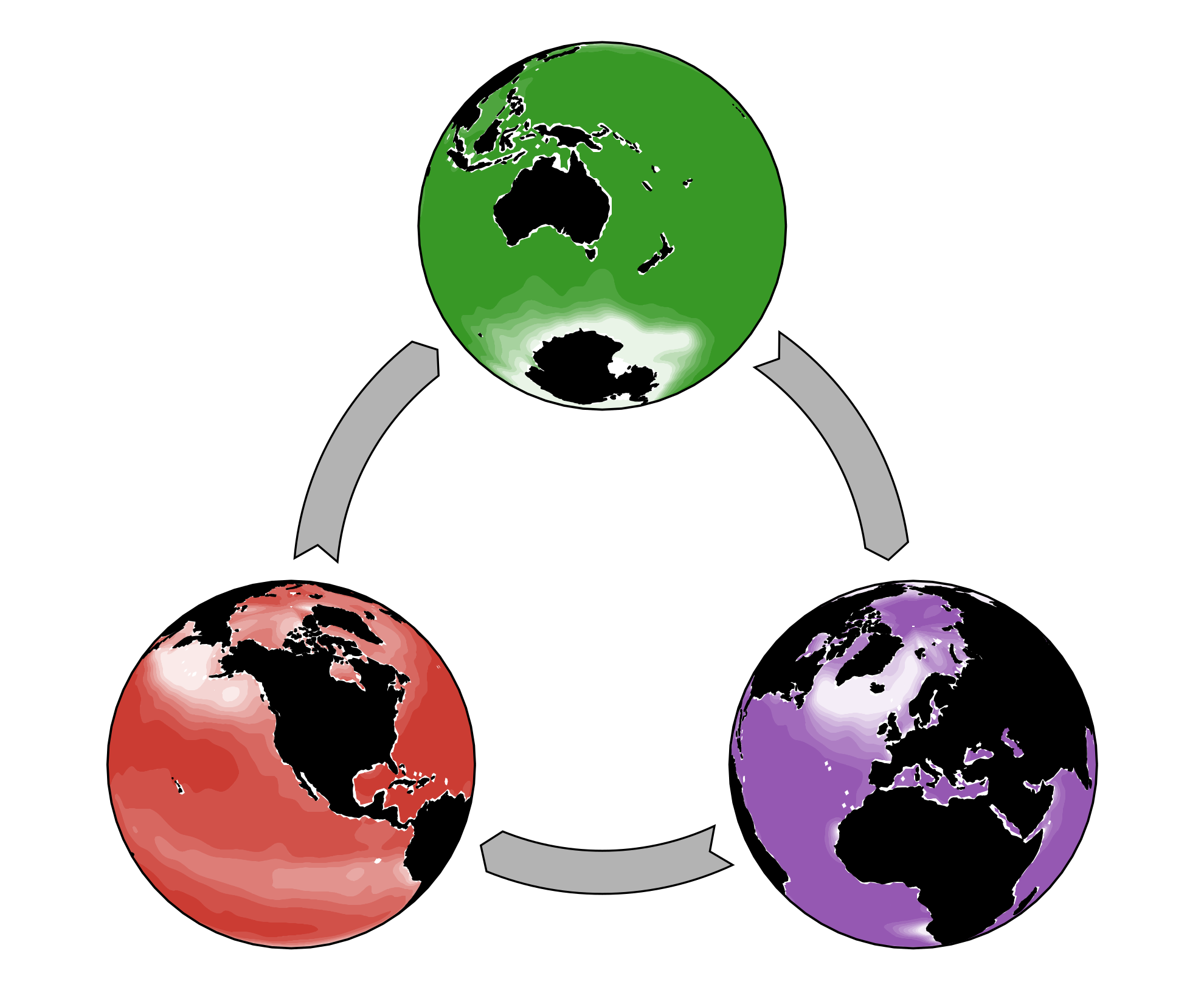

Simple phosphorus-cycling model:

- Dissolved inorganic phosphorus (DIP)

- Particulate organic phosphorus (POP)

const wet3D, grd, T_OCIM = OCIM0.load()

T_DIP(p) = T_OCIM

const ztop = vector_of_top_depths(wet3D, grd) .|> ustrip

const iwet, v = findall(vec(wet3D)), vector_of_volumes(wet3D, grd) .|> ustrip

const nb, z = length(iwet), grd.depth_3D[iwet] .|> ustrip

const DIV, Iabove = buildDIV(wet3D, iwet, grd), buildIabove(wet3D, iwet)

const S₀, S′ = buildPFD(ones(nb), DIV, Iabove), buildPFD(ztop, DIV, Iabove)

T_POP(p) = p.w₀ * S₀ + p.w′ * S′

function G_DIP!(dx, DIP, POP, p)

τ, k, z₀, κ, xgeo, τgeo = p.τ, p.k, p.z₀, p.κ, p.xgeo, p.τgeo

dx .= @. (xgeo - DIP) / τgeo - (DIP ≥ 0) / τ * DIP^2 / (DIP + k) * (z ≤ z₀) + κ * POP

end

function G_POP!(dx, DIP, POP, p)

τ, k, z₀, κ = p.τ, p.k, p.z₀, p.κ

dx .= @. (DIP ≥ 0) / τ * DIP^2 / (DIP + k) * (z ≤ z₀) - κ * POP

end

const iDIP = 1:nb

const iPOP = nb .+ iDIP

t = empty_parameter_table()

add_parameter!(t, :xgeo, 2.17u"mmol/m^3", optimizable = true)

add_parameter!(t, :τgeo, 1.0u"Myr")

add_parameter!(t, :k, 5.0u"μmol/m^3", optimizable = true)

add_parameter!(t, :z₀, 80.0u"m")

add_parameter!(t, :w₀, 0.5u"m/d", optimizable = true)

add_parameter!(t, :w′, 0.1u"1/d", optimizable = true)

add_parameter!(t, :κ, 0.3u"1/d", optimizable = true)

add_parameter!(t, :τ, 100.0u"d", optimizable = true)

initialize_Parameters_type(t, "Pcycle_Parameters")

p = Pcycle_Parameters()

F!, ∇ₓF = inplace_state_function_and_Jacobian((T_DIP,T_POP), (G_DIP!,G_POP!), nb)

x = p.xgeo * ones(2nb)

const μDIPobs3D, σ²DIPobs3D = WorldOceanAtlasTools.fit_to_grid(grd, 2018, "phosphate", "annual", "1°", "an")

const μDIPobs, σ²DIPobs = μDIPobs3D[iwet], σ²DIPobs3D[iwet]

const μx, σ²x = (μDIPobs, missing), (σ²DIPobs, missing)

const ωs, ωp = [1.0, 0.0], 1e-4

f, ∇ₓf, ∇ₚf = generate_objective_and_derivatives(ωs, μx, σ²x, v, ωp, mean_obs(p), variance_obs(p))

F(x::Vector{Tx}, p::Pcycle_Parameters{Tp}) where {Tx,Tp} = F!(Vector{promote_type(Tx,Tp)}(undef,length(x)),x,p)

Tracer equations:

$$\left[\frac{\partial}{\partial t} + \nabla \cdot (\boldsymbol{u} + \mathbf{K}\cdot\nabla )\right] x_\mathsf{DIP} = -U(x_\mathsf{DIP}) + R(x_\mathsf{POP})$$$$\left[\frac{\partial}{\partial t} + \nabla \cdot \boldsymbol{w}\right] x_\mathsf{POP} = U(x_\mathsf{DIP}) - R(x_\mathsf{POP})$$DIP→POP: $U=\frac{x_\mathsf{DIP}}{\tau} \, \frac{x_\mathsf{DIP}}{x_\mathsf{DIP} + k} \, (z < z_0)$

POP→DIP: $R = \kappa \, x_\mathsf{POP}$

6 parameters¶

p

p[:]

Using the F-1 algorithm is easy¶

using F1Method

mem = F1Method.initialize_mem(x, p)

objective(p) = F1Method.objective(f, F, ∇ₓF, mem, p, CTKAlg(); preprint=" ")

gradient(p) = F1Method.gradient(f, F, ∇ₓf, ∇ₓF, mem, p, CTKAlg(); preprint=" ")

hessian(p) = F1Method.hessian(f, F, ∇ₓf, ∇ₓF, mem, p, CTKAlg(); preprint=" ")

That's it, we have defined functions that "autodifferentiate" through the iterative solver!

Testing the F-1 algorithm¶

We simply call the objective,

objective(p)

the gradient,

gradient(p)

and the Hessian,

hessian(p)

And do it again after updating the parameters, this time also recording the time spent, for the objective,

@time objective(1.1p)

the gradient,

@time gradient(1.1p)

and the Hessian,

@time hessian(1.1p)

Factorizations are the bottleneck

@time factorize(∇ₓF(mem.s, mem.p))

length(p), length(p)^2 * 20.313170 * u"s" |> u"minute"

Benchmark the F-1 algorithm

(for a full optimization run)

| Algorithm | Definition | Factorizations |

|---|---|---|

| F-1 | F-1 algorithm | $\mathcal{O}(1)$ |

| DUAL | Dual step for Hessian + Analytical gradient | $\mathcal{O}(m)$ |

| COMPLEX | Complex step for Hessian + Analytical gradient | $\mathcal{O}(m)$ |

| FD1 | Finite differences for Hessian + Analytical gradient | $\mathcal{O}(m)$ |

| HYPER | Hyperdual step for Hessian and dual step for gradient | $\mathcal{O}(m^2)$ |

| FD2 | Finite differences for Hessian and gradient | $\mathcal{O}(m^2)$ |

Computation time full optimization¶

Partition of computation time¶

Conclusions

The F-1 algorithm is

- easy to use and understand

- accurate (machine-precision)

- fast!

Check it on Github

at briochemc/F1Method.jl!