Data Acquisition and Cleaning¶

This is by far and away the most valuable skill in Data Science.

Not the fancy graphs, not the clever machine learning...

The ability to import and manipulate data from a format that you get, to a format that you want, is the data science skill.

Don't let anyone tell you differently.

Why do we need to clean data?¶

Because a lot of the time, no one expected machines to read the data you want to play with.

- A computer might only read one of these header rows

- A computer doesn't automatically know what a `*` or `#` means, and will assume the whole column is just strings

- A computer doesn't automatically know that these aren't "valid" data rows, or how to interpret them

Long story short, half of the battle of doing Data Science is translating between human/business expectation and computer/programming clarity.

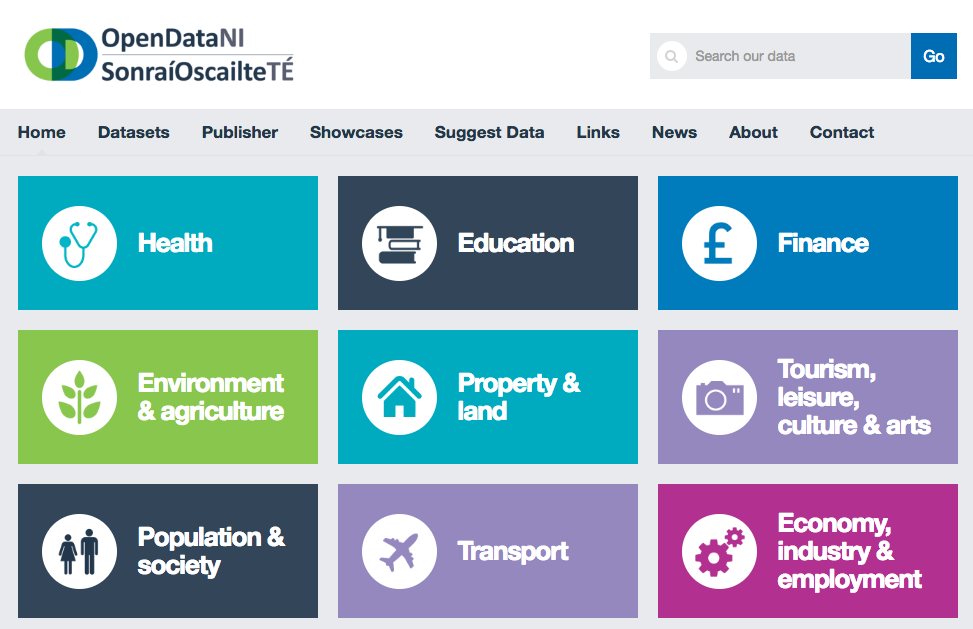
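To make the three problems above a bit more concrete, here is a minimal sketch using a tiny made-up CSV (the title row, the `*`/`#` markers and the `Total` row are all invented for illustration, they are not from the real data). It assumes we already know which row holds the real header and which symbols mean "missing".

```python
import io

import pandas as pd

# A made-up 'human friendly' export: a title row, a real header row,
# footnote markers instead of numbers, and a totals row at the bottom.
raw = io.StringIO(
    "Widget sales report (made for humans),,\n"
    "region,units,revenue\n"
    "North,10,100\n"
    "South,*,250\n"
    "East,7,#\n"
    "Total,17,350\n"
)

messy = pd.read_csv(
    raw,
    skiprows=1,            # skip the title row so the real header is used
    na_values=["*", "#"],  # treat the footnote markers as missing values
)

# Drop the row that isn't really a data row
clean = messy[messy["region"] != "Total"]

print(clean)
print(clean.dtypes)  # 'units' and 'revenue' are numeric rather than strings
```

Without `na_values`, pandas would have no way of knowing that `*` and `#` are not data, and both columns would come back as strings.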

Where can we get data?¶

All over the place

And hundreds more. But for our purposes, I like to start locally.

https://www.opendatani.gov.uk/

Sidebar¶

I am both a massive proponent and regular critic of Open Data in Northern Ireland. The team are great but some of the data that's been put on the platform is woefully inadequate and really difficult to work with.

See below for a rant.

Example Data¶

We're going to start off with a relatively 'easy' one: Population Estimates for Northern Ireland by Parliamentary Constituency.

Spoiler Alert: While this data set doesn't have any (known) errors, it does require some manipulation to make sense of. Also, because it includes geographic data, we can do some really cool stuff in the Data Visualisation section....

However, now that we're starting to do some real code, we need a mascot....

pandas is a python package (sometimes called a module), and you can think of packages as being big (or small) boxes of functionality that we can bring to bear to solve a problem, like custom toolboxes for different operations, for example.

One of the major strengths of the Python Data Science ecosystem is the range of mature and well-maintained packages that sit outside the 'standard library' that Python ships with.

import pandas as pd # this is just a convention, 'pandas' is the special word

If the above line doesn't work, you will need to install pandas in your environment.

This should be as simple as the following jupyter line(s)

%conda install pandas

Collecting package metadata (current_repodata.json): ...working... done
Solving environment: ...working... done

# All requested packages already installed.

Note: you may need to restart the kernel to use updated packages.

%pip install pandas

Requirement already satisfied: pandas in c:\users\me\anaconda3\lib\site-packages (1.0.5)
Requirement already satisfied: python-dateutil>=2.6.1 in c:\users\me\anaconda3\lib\site-packages (from pandas) (2.8.1)
Requirement already satisfied: pytz>=2017.2 in c:\users\me\anaconda3\lib\site-packages (from pandas) (2020.1)
Requirement already satisfied: numpy>=1.13.3 in c:\users\me\anaconda3\lib\site-packages (from pandas) (1.18.5)
Requirement already satisfied: six>=1.5 in c:\users\me\anaconda3\lib\site-packages (from python-dateutil>=2.6.1->pandas) (1.15.0)
Note: you may need to restart the kernel to use updated packages.

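The trick here is that the % symbol marks a Jupyter 'magic' command: Jupyter handles the rest of the line itself instead of passing it to Python. For %pip and %conda, that means running the package manager against the environment the notebook kernel is using.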

Now that we've got that sorted; we can get to downloading the data set and seeing what pandas can do for us...

import pandas as pd

from pathlib import Path

# Jump to the 'asides' to understand the operation of the walrus (:=)

# This is really 'clever' and fun but don't worry about it, it just says

# "If I've already downloaded the file, read it from there, otherwise, set

# the source to be that url"

if not (source := Path('data/ni_pop.csv')).exists():
    print('Downloading...')
    source = 'https://www.opendatani.gov.uk/dataset/62e7073f-e924-4d3f-81a5-ad45b5127682/resource/67c25586-b9aa-4717-9a4b-42de21a403f2/download/parliamentary-constituencies-by-single-year-of-age-and-gender-mid-2001-to-mid-2019.csv'

df = pd.read_csv(source) # `read_csv` can read from URLs as well as from local files

df.to_csv('data/ni_pop.csv', index=False) # Stash for later

df.head()

Downloading...

| | Geo_Name | Geo_Code | Mid_Year_Ending | Gender | Age | Population_Estimate |

|---|---|---|---|---|---|---|

| 0 | Belfast East | N06000001 | 2001 | All persons | 0 | 827 |

| 1 | Belfast East | N06000001 | 2001 | All persons | 1 | 1045 |

| 2 | Belfast East | N06000001 | 2001 | All persons | 2 | 1159 |

| 3 | Belfast East | N06000001 | 2001 | All persons | 3 | 1032 |

| 4 | Belfast East | N06000001 | 2001 | All persons | 4 | 1106 |
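If the walrus feels a bit too clever, the same "use the local copy if we have one" idea can be sketched as a small helper. This is only an illustration: `load_cached_csv` is a made-up name, not part of pandas, and creating the cache folder first is an extra step on top of what the cell above does.

```python
from pathlib import Path

import pandas as pd


def load_cached_csv(url, cache_path):
    """Read `cache_path` if it exists, otherwise read `url` and stash a copy."""
    cache = Path(cache_path)
    if cache.exists():
        return pd.read_csv(cache)

    frame = pd.read_csv(url)  # read_csv accepts URLs as well as local paths
    # Make sure the target folder exists before writing the cached copy
    cache.parent.mkdir(parents=True, exist_ok=True)
    frame.to_csv(cache, index=False)
    return frame


# Hypothetical usage, with the same URL and path as the cell above:
# df = load_cached_csv(source_url, 'data/ni_pop.csv')
```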

df.shape

(93366, 6)

head() gives us the first 5 entries, and shape tells us that there are more than 90 thousand rows and 6 data columns.

pandas also provides simple aggregation functions.

df['Population_Estimate'].sum()

68166520

Whoa... That doesn't look right...

Maybe check that...

So what's going wrong here?

df['Mid_Year_Ending'].unique()

array([2001, 2002, 2003, 2004, 2005, 2006, 2007, 2008, 2009, 2010, 2011,

2012, 2013, 2014, 2015, 2016, 2017, 2018, 2019], dtype=int64)

We can apply logical conditions to the columns (or several columns at once).

Using this, pandas allows you to select within a dataframe (like a table in Excel, except it supports a near-infinite number of columns/rows....)

df['Mid_Year_Ending'] == 2019

0 False

1 False

2 False

3 False

4 False

...

93361 True

93362 True

93363 True

93364 True

93365 True

Name: Mid_Year_Ending, Length: 93366, dtype: bool

df[df['Mid_Year_Ending'] == 2019] ## select * from df where 'mid_year_ending' = 2019

| | Geo_Name | Geo_Code | Mid_Year_Ending | Gender | Age | Population_Estimate |

|---|---|---|---|---|---|---|

| 4914 | Belfast East | N06000001 | 2019 | All persons | 0 | 1141 |

| 4915 | Belfast East | N06000001 | 2019 | All persons | 1 | 1163 |

| 4916 | Belfast East | N06000001 | 2019 | All persons | 2 | 1170 |

| 4917 | Belfast East | N06000001 | 2019 | All persons | 3 | 1159 |

| 4918 | Belfast East | N06000001 | 2019 | All persons | 4 | 1150 |

| ... | ... | ... | ... | ... | ... | ... |

| 93361 | West Tyrone | N06000018 | 2019 | Males | 86 | 103 |

| 93362 | West Tyrone | N06000018 | 2019 | Males | 87 | 113 |

| 93363 | West Tyrone | N06000018 | 2019 | Males | 88 | 80 |

| 93364 | West Tyrone | N06000018 | 2019 | Males | 89 | 52 |

| 93365 | West Tyrone | N06000018 | 2019 | Males | 90 | 196 |

4914 rows × 6 columns
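The same selection can be written a few different ways. Here is a small sketch on a made-up four-row frame (the numbers are invented) comparing the bracket form used above with `.loc` and `.query`, both of which are standard pandas.

```python
import pandas as pd

# Toy stand-in for the real data; the values are made up
toy = pd.DataFrame({
    "Mid_Year_Ending": [2018, 2018, 2019, 2019],
    "Age": [0, 1, 0, 1],
    "Population_Estimate": [10, 20, 30, 40],
})

mask = toy["Mid_Year_Ending"] == 2019  # a boolean Series, one entry per row

bracket_rows = toy[mask]                            # rows where the mask is True
loc_values = toy.loc[mask, "Population_Estimate"]   # rows AND a column in one step
query_rows = toy.query("Mid_Year_Ending == 2019")   # the SQL-ish spelling

print(loc_values.sum())
```

Which one you use is mostly taste; `.loc` is handy once you want to pick rows and columns at the same time.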

df[df['Mid_Year_Ending'] == 2019]['Population_Estimate'].sum()

3787334

Ok, so we're at least in the correct order of magnitude but still very wrong... Any ideas?

pandas data frames are stored in columns. Each column is assumed to be a particular type.

When you read in from a CSV file, pandas tries its best to guess what type each column coming in is.

For instance, in the df.dtypes output below we can see that 'Mid_Year_Ending', 'Age', and 'Population_Estimate' all get the int64 type, which means each value is stored in memory as a 64-bit integer.

However, the object types indicate that the guessing has just given up and treated those columns as boring strings. We know that 'Gender' and 'Geo_Name' are really categories, sometimes called 'enums' or enumerations, i.e. a small set of valid values, like a dropdown box.

These categories can often indicate particular slices and design decisions made by data providers.

df.dtypes

Geo_Name               object
Geo_Code               object
Mid_Year_Ending         int64
Gender                 object
Age                     int64
Population_Estimate     int64
dtype: object
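If you already know what a column should be, you don't have to rely on the guessing: `read_csv` takes a `dtype` argument. A minimal sketch, using a three-row made-up extract in the same shape as the real file (the Females/Males split here is invented):

```python
import io

import pandas as pd

# Made-up rows in the same layout as the real CSV
raw = io.StringIO(
    "Geo_Name,Gender,Age,Population_Estimate\n"
    "Belfast East,All persons,0,827\n"
    "Belfast East,Females,0,400\n"
    "Belfast East,Males,0,427\n"
)

typed = pd.read_csv(
    raw,
    dtype={"Geo_Name": "category", "Gender": "category"},  # tell pandas up front
)

print(typed.dtypes)
```

The columns we didn't mention are still guessed as before, so 'Age' and 'Population_Estimate' remain integers.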

Looking at Gender, we can see there are three available 'values': Males, Females, and All persons.

From context we can guess that 'All Persons' is a double-count of the Males/Females breakdown.

df['Gender'].unique()

array(['All persons', 'Females', 'Males'], dtype=object)

To make things slightly more lightweight (and significantly faster when used correctly...), we can create a new categorical type that's more efficient using pd.CategoricalDtype.

This is useful for situations where there are a very small number of possible (usually string) options, but a very large number of entries.

Good examples of this are found in the clothing industry

- Size (X-Small, Small, Medium, Large, X-Large)

- Color (Red, Black, White)

- Style (Short sleeve, long sleeve)

- Material (Cotton, Polyester)

This categorisation is a form of 'encoding' where, behind the scenes, pandas will create a very small lookup table between a set of numbers, $\{0,1,2\}$ for instance, and the labels. It then uses those numbers in the in-memory dataframe, replacing the very redundant existing labels: {'All persons','Males','Females'}.

df['Gender'].astype('category').to_frame().info()

<class 'pandas.core.frame.DataFrame'>
RangeIndex: 93366 entries, 0 to 93365
Data columns (total 1 columns):
 #   Column  Non-Null Count  Dtype
---  ------  --------------  -----
 0   Gender  93366 non-null  category
dtypes: category(1)
memory usage: 91.4 KB

df['Gender'].to_frame().info()

<class 'pandas.core.frame.DataFrame'>
RangeIndex: 93366 entries, 0 to 93365
Data columns (total 1 columns):
 #   Column  Non-Null Count  Dtype
---  ------  --------------  -----
 0   Gender  93366 non-null  object
dtypes: object(1)
memory usage: 729.5+ KB
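To peek at that lookup table for yourself, here is a small sketch on a made-up Series of repeated labels. The `.cat` accessor and `memory_usage(deep=True)` are standard pandas; the exact byte counts will vary between pandas versions, so none are quoted here.

```python
import pandas as pd

# 30,000 labels but only three distinct values
labels = pd.Series(["All persons", "Females", "Males"] * 10_000)
encoded = labels.astype("category")

# deep=True counts the strings themselves, not just the pointers to them
string_bytes = labels.memory_usage(deep=True)
category_bytes = encoded.memory_usage(deep=True)
print(string_bytes, category_bytes)

# The lookup table, and the small integers actually stored per row
print(list(encoded.cat.categories))
print(encoded.cat.codes.head(3).tolist())
```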

It would be completely reasonable to ask "What's the point of this? This is a tiny dataset and size doesn't matter".

However, having a solid understanding of the data you're working with, and being able to embed your assumptions and context into the data itself as 'metadata', is extremely valuable for catching you out when you do something stupid.

For instance, if at some point during your analysis you accidentally swap columns around and, instead of querying for the 'minimum age', you try to ask for the 'minimum gender', this makes no sense because these are unordered categories, and pandas will helpfully tell you off for trying to do something so silly:

df['Gender'].astype('category').min()

---------------------------------------------------------------------------
TypeError                                 Traceback (most recent call last)
<ipython-input-22-9f4b957ed454> in <module>
----> 1 df['Gender'].astype('category').min()

~\anaconda3\lib\site-packages\pandas\core\generic.py in stat_func(self, axis, skipna, level, numeric_only, **kwargs)
  11212         if level is not None:
  11213             return self._agg_by_level(name, axis=axis, level=level, skipna=skipna)
> 11214         return self._reduce(
  11215             f, name, axis=axis, skipna=skipna, numeric_only=numeric_only
  11216         )

~\anaconda3\lib\site-packages\pandas\core\series.py in _reduce(self, op, name, axis, skipna, numeric_only, filter_type, **kwds)
   3870
   3871         if isinstance(delegate, Categorical):
-> 3872             return delegate._reduce(name, skipna=skipna, **kwds)
   3873         elif isinstance(delegate, ExtensionArray):
   3874             # dispatch to ExtensionArray interface

~\anaconda3\lib\site-packages\pandas\core\arrays\categorical.py in _reduce(self, name, axis, **kwargs)
   2123         if func is None:
   2124             raise TypeError(f"Categorical cannot perform the operation {name}")
-> 2125         return func(**kwargs)
   2126
   2127     @deprecate_kwarg(old_arg_name="numeric_only", new_arg_name="skipna")

~\anaconda3\lib\site-packages\pandas\util\_decorators.py in wrapper(*args, **kwargs)
    212                 else:
    213                     kwargs[new_arg_name] = new_arg_value
--> 214             return func(*args, **kwargs)
    215
    216         return cast(F, wrapper)

~\anaconda3\lib\site-packages\pandas\core\arrays\categorical.py in min(self, skipna, **kwargs)
   2146         """
   2147         nv.validate_min((), kwargs)
-> 2148         self.check_for_ordered("min")
   2149
   2150         if not len(self._codes):

~\anaconda3\lib\site-packages\pandas\core\arrays\categorical.py in check_for_ordered(self, op)
   1491         """ assert that we are ordered """
   1492         if not self.ordered:
-> 1493             raise TypeError(
   1494                 f"Categorical is not ordered for operation {op}\n"
   1495                 "you can use .as_ordered() to change the "

TypeError: Categorical is not ordered for operation min
you can use .as_ordered() to change the Categorical to an ordered one

from pandas.api.types import CategoricalDtype

_gender_type = CategoricalDtype(

categories=df['Gender'].unique(),

ordered=False

)
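Defining a dtype doesn't do anything until it's applied to a column with `astype`. Here is a minimal sketch on made-up Series, contrasting an unordered type like the gender one with an ordered one based on the clothing sizes mentioned earlier (the variable names here are just for illustration).

```python
import pandas as pd
from pandas.api.types import CategoricalDtype

# Unordered: 'minimum gender' is meaningless, so pandas refuses
gender_type = CategoricalDtype(
    categories=["All persons", "Females", "Males"],
    ordered=False,
)
genders = pd.Series(["Males", "Females", "All persons", "Males"]).astype(gender_type)

try:
    genders.min()
    refused = False
except TypeError:
    refused = True  # same complaint as in the traceback above

# Ordered: the order is the one we list, not alphabetical
size_type = CategoricalDtype(
    categories=["X-Small", "Small", "Medium", "Large", "X-Large"],
    ordered=True,
)
sizes = pd.Series(["Large", "Small", "Medium"]).astype(size_type)

print(refused)
print(sizes.min())
print(sizes.sort_values().tolist())
```

So the `ordered` flag is really a statement about what questions make sense to ask of the column.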

(df['Mid_Year_Ending'] == 2019) & (df['Gender'] == 'All persons')

0 False

1 False

2 False

3 False

4 False

...

93361 False

93362 False

93363 False

93364 False

93365 False

Length: 93366, dtype: bool

df[

(df['Mid_Year_Ending'] == 2019) &

(df['Gender'] == 'All persons')

]

| | Geo_Name | Geo_Code | Mid_Year_Ending | Gender | Age | Population_Estimate |

|---|---|---|---|---|---|---|

| 4914 | Belfast East | N06000001 | 2019 | All persons | 0 | 1141 |

| 4915 | Belfast East | N06000001 | 2019 | All persons | 1 | 1163 |

| 4916 | Belfast East | N06000001 | 2019 | All persons | 2 | 1170 |

| 4917 | Belfast East | N06000001 | 2019 | All persons | 3 | 1159 |

| 4918 | Belfast East | N06000001 | 2019 | All persons | 4 | 1150 |

| ... | ... | ... | ... | ... | ... | ... |

| 93179 | West Tyrone | N06000018 | 2019 | All persons | 86 | 266 |

| 93180 | West Tyrone | N06000018 | 2019 | All persons | 87 | 255 |

| 93181 | West Tyrone | N06000018 | 2019 | All persons | 88 | 208 |

| 93182 | West Tyrone | N06000018 | 2019 | All persons | 89 | 158 |

| 93183 | West Tyrone | N06000018 | 2019 | All persons | 90 | 608 |

1638 rows × 6 columns

df[

(df['Mid_Year_Ending'] == 2019) &

(df['Gender'] == 'All persons')

]['Population_Estimate'].sum()

1893667
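Rather than guessing from context that 'All persons' double-counts Males and Females, we can test it. A sketch of one way to do that on made-up numbers; run against the real `df`, the same `groupby` would need the column names used above.

```python
import pandas as pd

# Toy data with the same shape of problem: a total row alongside its parts
toy = pd.DataFrame({
    "Mid_Year_Ending": [2018, 2018, 2018, 2019, 2019, 2019],
    "Gender": ["All persons", "Females", "Males"] * 2,
    "Population_Estimate": [100, 52, 48, 110, 56, 54],
})

# One row per year, one column per gender label
totals = (
    toy.groupby(["Mid_Year_Ending", "Gender"])["Population_Estimate"]
    .sum()
    .unstack("Gender")
)

# If 'All persons' really is Females + Males, this holds for every year
consistent = (totals["Females"] + totals["Males"] == totals["All persons"]).all()

print(totals)
print(consistent)
```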

Understanding Data:¶

This population data set has:

- Multiple years of data put together with temporal 'duplicates'

- Multiple geographic regions put together

- Gender counted 'twice'.

Question 1: Binary Computation¶

How does this dataset treat people with non binary gender identity?

How can you test that?

Try it out for yourself and check Q1.ipynb for an answer.

Finally! Some Statistics!¶

df_2019_all = df[

(df['Mid_Year_Ending'] == 2019) &

(df['Gender'] == 'All persons')

]

df_2019_all

| | Geo_Name | Geo_Code | Mid_Year_Ending | Gender | Age | Population_Estimate |

|---|---|---|---|---|---|---|

| 4914 | Belfast East | N06000001 | 2019 | All persons | 0 | 1141 |

| 4915 | Belfast East | N06000001 | 2019 | All persons | 1 | 1163 |

| 4916 | Belfast East | N06000001 | 2019 | All persons | 2 | 1170 |

| 4917 | Belfast East | N06000001 | 2019 | All persons | 3 | 1159 |

| 4918 | Belfast East | N06000001 | 2019 | All persons | 4 | 1150 |

| ... | ... | ... | ... | ... | ... | ... |

| 93179 | West Tyrone | N06000018 | 2019 | All persons | 86 | 266 |

| 93180 | West Tyrone | N06000018 | 2019 | All persons | 87 | 255 |

| 93181 | West Tyrone | N06000018 | 2019 | All persons | 88 | 208 |

| 93182 | West Tyrone | N06000018 | 2019 | All persons | 89 | 158 |

| 93183 | West Tyrone | N06000018 | 2019 | All persons | 90 | 608 |

1638 rows × 6 columns

Part of data manipulation is removing columns that aren't relevant to us. We've created a new slimmed-down version of the dataframe with just the data to do with 2019 that disregards gender.

But we can see that it still takes up a bit of memory.

df_2019_all.info(memory_usage='deep')

<class 'pandas.core.frame.DataFrame'>
Int64Index: 1638 entries, 4914 to 93183
Data columns (total 6 columns):
 #   Column               Non-Null Count  Dtype
---  ------               --------------  -----
 0   Geo_Name             1638 non-null   object
 1   Geo_Code             1638 non-null   object
 2   Mid_Year_Ending      1638 non-null   int64
 3   Gender               1638 non-null   object
 4   Age                  1638 non-null   int64
 5   Population_Estimate  1638 non-null   int64
dtypes: int64(3), object(3)
memory usage: 376.4 KB

What about if we forgot about (or 'dropped') the Gender and Mid_Year_Ending columns, because we don't need them, and the 'Geo_Code' one, because we're not going to use it?

df_2019_all.drop(['Gender','Mid_Year_Ending','Geo_Code'])

---------------------------------------------------------------------------
KeyError                                  Traceback (most recent call last)
<ipython-input-43-ccc9cb5d22a9> in <module>
----> 1 df_2019_all.drop(['Gender','Mid_Year_Ending','Geo_Code'])

C:\ProgramData\Anaconda3\lib\site-packages\pandas\core\frame.py in drop(self, labels, axis, index, columns, level, inplace, errors)
   3988                 weight 1.0 0.8
   3989         """
-> 3990         return super().drop(
   3991             labels=labels,
   3992             axis=axis,

C:\ProgramData\Anaconda3\lib\site-packages\pandas\core\generic.py in drop(self, labels, axis, index, columns, level, inplace, errors)
   3934         for axis, labels in axes.items():
   3935             if labels is not None:
-> 3936                 obj = obj._drop_axis(labels, axis, level=level, errors=errors)
   3937
   3938         if inplace:

C:\ProgramData\Anaconda3\lib\site-packages\pandas\core\generic.py in _drop_axis(self, labels, axis, level, errors)
   3968                 new_axis = axis.drop(labels, level=level, errors=errors)
   3969             else:
-> 3970                 new_axis = axis.drop(labels, errors=errors)
   3971             result = self.reindex(**{axis_name: new_axis})
   3972

C:\ProgramData\Anaconda3\lib\site-packages\pandas\core\indexes\base.py in drop(self, labels, errors)
   5016         if mask.any():
   5017             if errors != "ignore":
-> 5018                 raise KeyError(f"{labels[mask]} not found in axis")
   5019             indexer = indexer[~mask]
   5020         return self.delete(indexer)

KeyError: "['Gender' 'Mid_Year_Ending' 'Geo_Code'] not found in axis"

Whoops! drop defaults to thinking about rows, when we want columns;

df_2019_all.drop(['Gender','Mid_Year_Ending','Geo_Code'], axis=1)

| | Geo_Name | Age | Population_Estimate |

|---|---|---|---|

| 4914 | Belfast East | 0 | 1141 |

| 4915 | Belfast East | 1 | 1163 |

| 4916 | Belfast East | 2 | 1170 |

| 4917 | Belfast East | 3 | 1159 |

| 4918 | Belfast East | 4 | 1150 |

| ... | ... | ... | ... |

| 93179 | West Tyrone | 86 | 266 |

| 93180 | West Tyrone | 87 | 255 |

| 93181 | West Tyrone | 88 | 208 |

| 93182 | West Tyrone | 89 | 158 |

| 93183 | West Tyrone | 90 | 608 |

1638 rows × 3 columns

Now, this is important: we have not changed either the original df data frame or the df_2019_all data frame. What is displayed above is just the result of the drop function.

df_2019_all

| | Geo_Name | Geo_Code | Mid_Year_Ending | Gender | Age | Population_Estimate |

|---|---|---|---|---|---|---|

| 4914 | Belfast East | N06000001 | 2019 | All persons | 0 | 1141 |

| 4915 | Belfast East | N06000001 | 2019 | All persons | 1 | 1163 |

| 4916 | Belfast East | N06000001 | 2019 | All persons | 2 | 1170 |

| 4917 | Belfast East | N06000001 | 2019 | All persons | 3 | 1159 |

| 4918 | Belfast East | N06000001 | 2019 | All persons | 4 | 1150 |

| ... | ... | ... | ... | ... | ... | ... |

| 93179 | West Tyrone | N06000018 | 2019 | All persons | 86 | 266 |

| 93180 | West Tyrone | N06000018 | 2019 | All persons | 87 | 255 |

| 93181 | West Tyrone | N06000018 | 2019 | All persons | 88 | 208 |

| 93182 | West Tyrone | N06000018 | 2019 | All persons | 89 | 158 |

| 93183 | West Tyrone | N06000018 | 2019 | All persons | 90 | 608 |

1638 rows × 6 columns

The clearest way to do what we mean to do is to simply reassign the dataframe back to 'itself' (If you want to know the innards of this, it's complicated, but here is a good read)

df_2019_all = df_2019_all.drop(['Gender','Mid_Year_Ending','Geo_Code'], axis=1)

df_2019_all

| | Geo_Name | Age | Population_Estimate |

|---|---|---|---|

| 4914 | Belfast East | 0 | 1141 |

| 4915 | Belfast East | 1 | 1163 |

| 4916 | Belfast East | 2 | 1170 |

| 4917 | Belfast East | 3 | 1159 |

| 4918 | Belfast East | 4 | 1150 |

| ... | ... | ... | ... |

| 93179 | West Tyrone | 86 | 266 |

| 93180 | West Tyrone | 87 | 255 |

| 93181 | West Tyrone | 88 | 208 |

| 93182 | West Tyrone | 89 | 158 |

| 93183 | West Tyrone | 90 | 608 |

1638 rows × 3 columns
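If `axis=1` feels a bit cryptic, there are a couple of other spellings that do the same job. A quick sketch on a made-up two-row frame: `drop(columns=...)` names the axis explicitly, and selecting with a list of column names says what to keep rather than what to throw away.

```python
import pandas as pd

# Made-up stand-in for df_2019_all
toy = pd.DataFrame({
    "Geo_Name": ["Somewhere", "Elsewhere"],
    "Geo_Code": ["X1", "X2"],
    "Age": [0, 1],
    "Population_Estimate": [5, 6],
})

dropped = toy.drop(columns=["Geo_Code"])                 # say what to remove
selected = toy[["Geo_Name", "Age", "Population_Estimate"]]  # say what to keep

print(dropped.equals(selected))
print(list(toy.columns))  # the original still has all four columns
```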

df_2019_all.info(memory_usage='deep')

<class 'pandas.core.frame.DataFrame'>
Int64Index: 1638 entries, 4914 to 93183
Data columns (total 3 columns):
 #   Column               Non-Null Count  Dtype
---  ------               --------------  -----
 0   Geo_Name             1638 non-null   object
 1   Age                  1638 non-null   int64
 2   Population_Estimate  1638 non-null   int64
dtypes: int64(2), object(1)
memory usage: 229.2 KB

Obviously this didn't exactly save the world in this case, but it's useful to know about...

df_2019_all.groupby('Age')['Population_Estimate'].sum() # select sum(population_estimate) from df_2019_all group by Age

Age

0 22721

1 23408

2 24206

3 25074

4 24960

...

86 5789

87 5005

88 4375

89 3617

90 13734

Name: Population_Estimate, Length: 91, dtype: int64

Another way to think about this is by taking each group in turn

for age_value, group in df_2019_all.groupby('Age'):
    break # Interrupts the flow of the loop

group

| | Geo_Name | Age | Population_Estimate |

|---|---|---|---|

| 4914 | Belfast East | 0 | 1141 |

| 10101 | Belfast North | 0 | 1399 |

| 15288 | Belfast South | 0 | 1246 |

| 20475 | Belfast West | 0 | 1260 |

| 25662 | East Antrim | 0 | 857 |

| 30849 | East Londonderry | 0 | 1097 |

| 36036 | Fermanagh and South Tyrone | 0 | 1425 |

| 41223 | Foyle | 0 | 1303 |

| 46410 | Lagan Valley | 0 | 1320 |

| 51597 | Mid Ulster | 0 | 1412 |

| 56784 | Newry and Armagh | 0 | 1678 |

| 61971 | North Antrim | 0 | 1268 |

| 67158 | North Down | 0 | 865 |

| 72345 | South Antrim | 0 | 1208 |

| 77532 | South Down | 0 | 1458 |

| 82719 | Strangford | 0 | 936 |

| 87906 | Upper Bann | 0 | 1693 |

| 93093 | West Tyrone | 0 | 1155 |

group['Population_Estimate'].sum()

22721
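Carrying that loop on for every group, instead of breaking after the first, gives the same answer as the one-line `groupby(...).sum()`. A small sketch on made-up numbers to show the two agree:

```python
import pandas as pd

# Made-up data: two areas, two ages
toy = pd.DataFrame({
    "Geo_Name": ["Somewhere", "Somewhere", "Elsewhere", "Elsewhere"],
    "Age": [0, 1, 0, 1],
    "Population_Estimate": [10, 20, 30, 40],
})

# The long way: visit each group in turn and add it up by hand
manual = {}
for age_value, group in toy.groupby("Age"):
    manual[age_value] = int(group["Population_Estimate"].sum())

# The short way
automatic = toy.groupby("Age")["Population_Estimate"].sum()

print(manual)
print(automatic.to_dict())
```

The loop is slower and wordier, but it's a useful mental model for what `groupby` is doing on your behalf.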

What is the most populated age group?¶

This starts off as an easy one, but depends on your definition of 'age group'....

Now we start to see that Statistics can be used by unscrupulous people to change a narrative to suit themselves... be on the watch for politicians carrying statistics...

s_age_pop = df_2019_all.groupby('Age')['Population_Estimate'].sum()

s_age_pop

Age

0 22721

1 23408

2 24206

3 25074

4 24960

...

86 5789

87 5005

88 4375

89 3617

90 13734

Name: Population_Estimate, Length: 91, dtype: int64

s_age_pop.sort_values()

Age

89 3617

88 4375

87 5005

86 5789

85 6219

...

7 26274

52 26301

53 26344

51 26499

54 26776

Name: Population_Estimate, Length: 91, dtype: int64

Easy peasy: 54 is the most common age, and it looks like the 50s are well represented in this data.

pd.cut(df_2019_all.Age, [0,18,30,50,90])

4914 NaN

4915 (0.0, 18.0]

4916 (0.0, 18.0]

4917 (0.0, 18.0]

4918 (0.0, 18.0]

...

93179 (50.0, 90.0]

93180 (50.0, 90.0]

93181 (50.0, 90.0]

93182 (50.0, 90.0]

93183 (50.0, 90.0]

Name: Age, Length: 1638, dtype: category

Categories (4, interval[int64]): [(0, 18] < (18, 30] < (30, 50] < (50, 90]]
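One thing to watch in that output: the first row (age 0) comes back as NaN. By default `pd.cut` builds intervals that are open on the left, so `(0, 18]` does not contain 0, and any group sums built on these bins will quietly leave the zero-year-olds out. A sketch of two standard ways around that, on a handful of made-up ages:

```python
import pandas as pd

ages = pd.Series([0, 1, 18, 19, 90])
edges = [0, 18, 30, 50, 90]

# Default behaviour: age 0 falls outside (0, 18] and becomes NaN
default_bins = pd.cut(ages, edges)

# Option 1: ask for the lowest edge to be included
inclusive_bins = pd.cut(ages, edges, include_lowest=True)

# Option 2: close the intervals on the left instead, which needs the
# top edge pushed past the largest age so that 90 still lands in a bin
left_closed_bins = pd.cut(ages, [0, 18, 30, 50, 91], right=False)

print(default_bins.isna().sum())
print(inclusive_bins.isna().sum())
print(left_closed_bins.isna().sum())
```

Note that the two options put the boundary ages (18, 30, 50) in different bins, which is yet another way for two honest people to get two different answers.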

s_age_grp_pop = df_2019_all.groupby(

pd.cut(df_2019_all.Age, [0,10,20,30,40,50,60,70,80,90])

)['Population_Estimate'].sum()

s_age_grp_pop

Age
(0, 10]     252321
(10, 20]    233470
(20, 30]    239596
(30, 40]    250481
(40, 50]    243976
(50, 60]    253225
(60, 70]    190199
(70, 80]    136211
(80, 90]     71467
Name: Population_Estimate, dtype: int64

s_age_grp_pop.sort_values()

Age
(80, 90]     71467
(70, 80]    136211
(60, 70]    190199
(10, 20]    233470
(20, 30]    239596
(40, 50]    243976
(30, 40]    250481
(0, 10]     252321
(50, 60]    253225
Name: Population_Estimate, dtype: int64

So it's a real toss-up between the 50-somethings and the children!

But what if we modified the age groups to something like Marketing people care about?

s_age_grp_pop = df_2019_all.groupby(

pd.cut(df_2019_all.Age, [0,12,17,25,35,45,55,65,90])

)['Population_Estimate'].sum()

s_age_grp_pop

Age
(0, 12]     303398
(12, 17]    114586
(17, 25]    183719
(25, 35]    250458
(35, 45]    239822
(45, 55]    260036
(55, 65]    223084
(65, 90]    295843
Name: Population_Estimate, dtype: int64
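Interval labels like `(12, 17]` are precise but not very friendly. `pd.cut` also accepts a `labels` argument, and `idxmax` will pick out the biggest group for us. A sketch on made-up numbers, with band names and edges invented purely for illustration:

```python
import pandas as pd

# Made-up ages and counts
toy = pd.DataFrame({
    "Age": [0, 5, 12, 13, 17, 40],
    "Population_Estimate": [3, 4, 5, 6, 7, 8],
})

bands = pd.cut(
    toy["Age"],
    bins=[0, 13, 18, 91],   # left-closed edges: [0, 13), [13, 18), [18, 91)
    right=False,
    labels=["0-12", "13-17", "18+"],
)

# observed=False keeps every band in the result, even an empty one
by_band = toy.groupby(bands, observed=False)["Population_Estimate"].sum()
top_band = by_band.idxmax()

print(by_band)
print(top_band)
```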

What about if we wanted to estimate the 'average age'?

We do it with 'normal' numbers by simply summing all the values and dividing by the number of values, but we're not dealing with individual values here, we're dealing with 'bucketised' values. And we can do that too!

# This is the total number of years lived by everyone in Northern Ireland

# It's approximately the same time ago as when the meteorite killed the dinosaurs

(df_2019_all.Age*df_2019_all.Population_Estimate).sum()

73899465

(df_2019_all.Age*df_2019_all.Population_Estimate).sum() / df_2019_all.Population_Estimate.sum()

39.02453018402919
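What we've just done by hand is a weighted average: each age is weighted by how many people have it. NumPy has this built in as `np.average(..., weights=...)`. Here is a sketch on three made-up buckets showing the two agree, plus a rough 'weighted median' (the first age at which we've counted at least half the people), which is not something computed above.

```python
import numpy as np
import pandas as pd

# Made-up buckets: ten 0-year-olds, twenty 1-year-olds, thirty 2-year-olds
ages = pd.Series([0, 1, 2])
counts = pd.Series([10, 20, 30])

# The by-hand version from above
manual_mean = (ages * counts).sum() / counts.sum()

# The built-in version
weighted_mean = np.average(ages, weights=counts)

# First age where the running total reaches half of everyone
halfway = counts.sum() / 2
median_age = ages[(counts.cumsum() >= halfway).idxmax()]

print(manual_mean, weighted_mean, median_age)
```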

Oh! Well that's different. Depending on how you say things, the following statistics are all true:

- The average age in Northern Ireland is about 39

- More of the population are 54 than any other age

- The 12-and-under band is the most populous age group

Is there anything else we can say that's similarly contradictory?

Learning outcomes¶

So far we've worked out:

- How to read CSV files into pandas dataframes

- How to validate data against expectations

- How to select and filter data in these dataframes based on columns and their values

- How to make basic aggregations across data

- How to make aggregations across groups of data

Now we're gonna make them pretty... in the next worksheet.

df.to_csv('ihatemyself.csv')