6. Linear Model Selection and Regularisation – Conceptual¶

Excercises from *Chapter 6 of An Introduction to Statistical Learning by Gareth James, Daniela Witten, Trevor Hastie and Robert Tibshirani.

import numpy as np

1. We perform best subset, forward stepwise, and backward stepwise selection on a single data set. For each approach, we obtain p + 1 models, containing 0, 1, 2, . . . , p predictors. Explain your answers:¶

(a) Which of the three models with k predictors has the smallest training RSS?¶

Best subset selection will exhibit the smallest training RSS because all possible combinations of predictors are considered for a given k. It is possible that the most effective model is also found by forward stepwise or backward stepwise, but not possible that they will find a model more effective than best subset, as the former consider a subset of the models considered by best subset selection.

(b) Which of the three models with k predictors has the smallest test RSS?¶

Best subset selection will certainly yield the smallest test rss, this model might also be discovered by forward stepwise or backward stepwise but this is not certain.

(c) True or False:¶

i. The predictors in the k-variable model identified by forward stepwise are a subset of the predictors in the (k+1)-variable model identified by forward stepwise selection.

- True

ii. The predictors in the k-variable model identified by backward stepwise are a subset of the predictors in the (k + 1)- variable model identified by backward stepwise selection.

- True

iii. The predictors in the k-variable model identified by backward stepwise are a subset of the predictors in the (k + 1)- variable model identified by forward stepwise selection.

- False

iv. The predictors in the k-variable model identified by forward stepwise are a subset of the predictors in the (k+1)-variable model identified by backward stepwise selection.

- False

v. The predictors in the k-variable model identified by best subset are a subset of the predictors in the (k + 1)-variable model identified by best subset selection.

- False

2. For parts (a) through (c), indicate which of i. through iv. is correct. Justify your answer.¶

(a) The lasso, relative to least squares, is:¶

i. More flexible and hence will give improved prediction accuracy when its increase in bias is less than its decrease in variance.

- Incorrect. Least squares uses all features, whereas lasso wil either use all features or set coefficients to zero for some features – so lasso is either equivalent to least squares or less flexible.

ii. More flexible and hence will give improved prediction accuracy when its increase in variance is less than its decrease in bias.

- Incorrect

iii. Less flexible and hence will give improved prediction accuracy when its increase in bias is less than its decrease in variance.

- Correct. As λ is increased the variance of the model will decrease quickly for a small increase in bias resulting in improved test MSE. At some point the bias will start to increase dramatically outweighing any benefits from further reduction in variance, at the expense of test MSE.

iv. Less flexible and hence will give improved prediction accuracy when its increase in variance is less than its decrease in bias.

- Incorrect

(b) Repeat (a) for ridge regression relative to least squares.¶

i. More flexible and hence will give improved prediction accuracy when its increase in bias is less than its decrease in variance.

- Incorrect. Same as for lasso

ii. More flexible and hence will give improved prediction accuracy when its increase in variance is less than its decrease in bias.

- Incorrect. Same as for lasso

iii. Less flexible and hence will give improved prediction accuracy when its increase in bias is less than its decrease in variance.

- Correct. Same as for lasso

iv. Less flexible and hence will give improved prediction accuracy when its increase in variance is less than its decrease in bias.

- Incorrect. Same as for lasso

(c) Repeat (a) for non-linear methods relative to least squares.¶

i. More flexible and hence will give improved prediction accuracy when its increase in bias is less than its decrease in variance.

- Incorrect

ii. More flexible and hence will give improved prediction accuracy when its increase in variance is less than its decrease in bias.

- Correct. If the data contains non-linear relationships then the reduction in bias will outweigh the increase in variance as the model better fitst the data. At some point the model will become too flexible and will start to overfit the data at which point increased variance will begin to outwiegh any further reduction in bias.

iii. Less flexible and hence will give improved prediction accuracy when its increase in bias is less than its decrease in variance.

- Incorrect

iv. Less flexible and hence will give improved prediction accuracy when its increase in variance is less than its decrease in bias.

- Incorrect

3. Suppose we estimate the regression coefficients in a linear regression model by minimizing for a particular value of s. For parts (a) through (e), indicate which of i. through v. is correct. Justify your answer.¶

(a) As we increase s from 0, the training RSS will:¶

- iv. Steadily decrease.

As we increase s we increase the models flexibility, in the training setting this will lead to overfitting and a monotonic decrease in the RSS error.

(b) Repeat (a) for test RSS.¶

- ii. Decrease initially, and then eventually start increasing in a U shape.

The increased flexibility of our model improves our models performance in the test setting up until the point where our model starts to overfit the data.

(c) Repeat (a) for variance.¶

- iii. Steadily increase.

Reduced constraint on sum of coefficients means monotonic increase in flexibility – increase variance

(d) Repeat (a) for (squared) bias.¶

- iv. Steadily decrease.

Reduced constraint on sum of coefficients means monotonic decrease in bias.

(e) Repeat (a) for the irreducible error.¶

- v. Remain constant.

The irreducible error represents the inherent noise in our data, because this noise is random it contains no useful information and remains constant regardless of the models flexibility.

4. Suppose we estimate the regression coefficients in a linear regression model by minimizing for a particular value of λ. For parts (a) through (e), indicate which of i. through v. is correct. Justify your answer.¶

(a) As we increase λ from 0, the training RSS will:¶

- iii. Steadily increase.

As lambda increases a heavier penalty is placed on a higher total value of coefficients so the model becomes less flexible and so is less able to fite variability in the training data.

(b) Repeat (a) for test RSS.¶

- ii. Decrease initially, and then eventually start increasing in a U shape.

As lambda increases the models bias is increased reducing any overfitting of the training data and so improving the test RSS accuracy. At some point the bias will increase to the point that our model is less able to represent true relationships in the data.

(c) Repeat (a) for variance.¶

- iv. Steadily decrease.

As lambas increases a higher penalty is placed on model flexibility and so variance decreases.

(d) Repeat (a) for (squared) bias.¶

- iii. Steadily increase.

As lambda increases coefficient estimates are reduced and so bias increases.

(e) Repeat (a) for the irreducible error.¶

- v. Remain constant.

Irreducible error is not effected by the model.

5. It is well-known that ridge regression tends to give similar coefficient values to correlated variables, whereas the lasso may give quite different coefficient values to correlated variables. We will now explore this property in a very simple setting.¶

Suppose that n = 2, p = 2, x11 = x12, x21 = x22. Furthermore, suppose that y1+y2 =0 and x11+x21 =0 and x12+x22 =0, so that the estimate for the intercept in a least squares, ridge regression, or lasso model is zero: βˆ0 = 0.¶

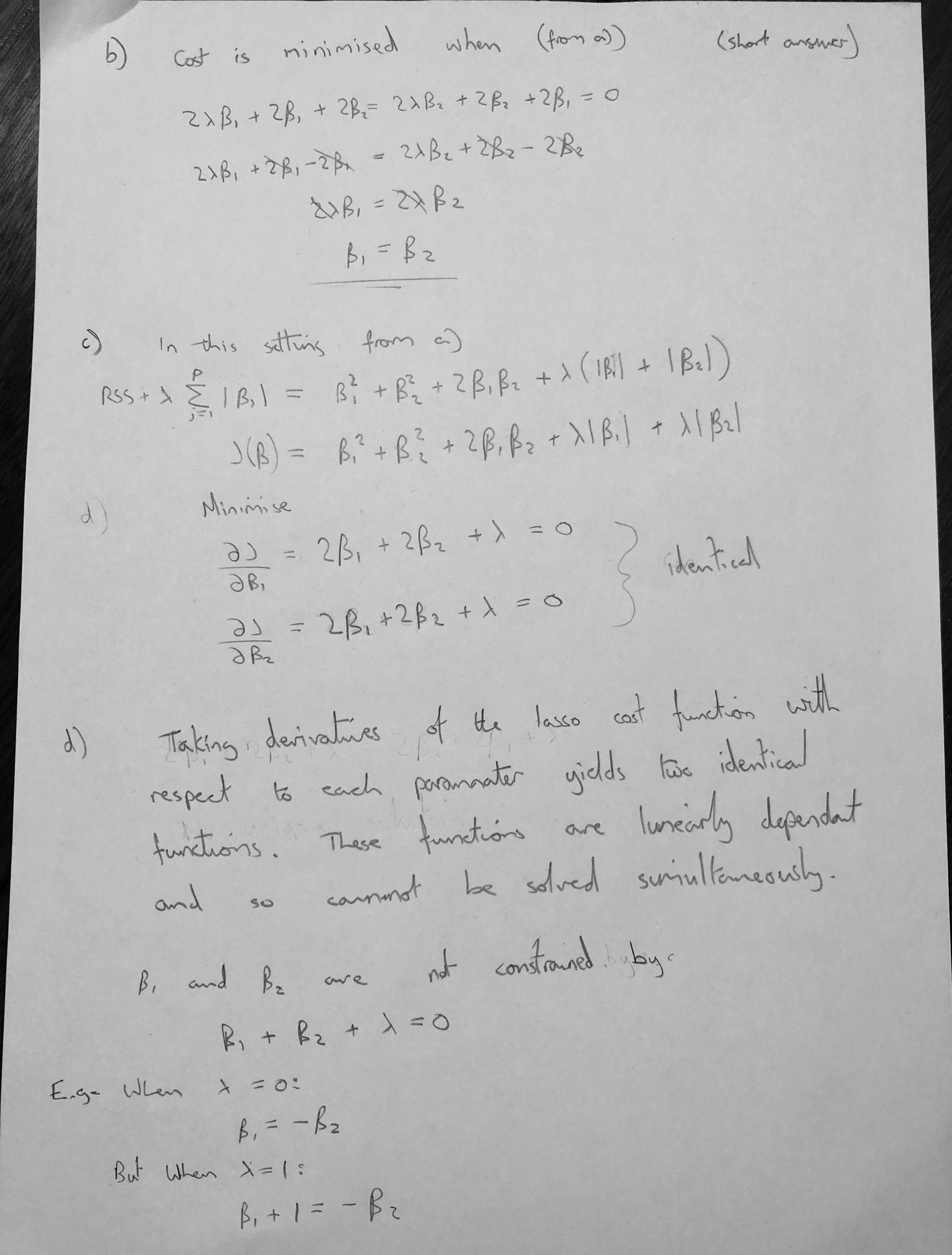

(a) Write out the ridge regression optimization problem in this setting.¶

(b) Argue that in this setting, the ridge coefficient estimates satisfy βˆ 1 = βˆ 2 .¶

(c) Write out the lasso optimization problem in this setting.¶

(d) Argue that in this setting, the lasso coefficients βˆ1 and βˆ2 are not unique—in other words, there are many possible solutions to the optimization problem in (c). Describe these solutions.¶

6. We will now explore (6.12) and (6.13) further.¶

(a) Consider (6.12) with p = 1. For some choice of y1 and λ > 0, plot (6.12) as a function of β1. Your plot should confirm that (6.12) is solved by (6.14).¶

import matplotlib.pyplot as plt

import seaborn as sns

def rr(y1, β1, λ):

return np.power(y1 - β1, 2) + λ*(β1**2)

y1 = 5

λ = 1

β = list(range(-10, 15))

results = [rr(y1, β1, λ) for β1 in β]

βR = y1/(1+λ)

ax = sns.scatterplot(x=β, y=results)

ax.axvline(x=βR, color='r')

plt.xlabel('β1')

plt.ylabel('Cost');

(b) Consider (6.13) with p = 1. For some choice of y1 and λ > 0, plot (6.13) as a function of β1. Your plot should confirm that (6.13) is solved by (6.15).¶

def lasso(y1, β1, λ):

return np.power(y1 - β1, 2) + λ*(np.absolute(β1))

y1 = -1

λ = 3

β = list(range(-10, 15))

results = [lasso(y1, β1, λ) for β1 in β]

if y1 > λ/2:

print('y1 > λ/2')

βL = y1 - λ/2

if y1 < -λ/2:

print('y1 < -λ/2')

βL = y1 + λ/2

if np.absolute(y1) <= λ/2:

print('np.absolute(y1) <= λ/2')

βL = 0

ax = sns.scatterplot(x=β, y=results)

ax.axvline(x=βL, color='r')

plt.xlabel('β1')

plt.ylabel('Cost');

np.absolute(y1) <= λ/2

np.random.seed(1)

x1 = np.random.normal(0, 1, 100)

y_hat = x1 + np.random.normal(0, 1, 100)*0.2

ax = sns.scatterplot(x=x1, y=y_hat)

plt.xlabel('x1')

plt.ylabel('y_hat');

np.random.seed(1)

x1 = np.random.normal(0, 1, 100)

y_hat = np.random.normal(0, 1, 100)*0.2

sns.scatterplot(x=x1, y=y_hat)

plt.xlabel('x1')

plt.ylabel('y_hat');