Call Center - Model Transfer Learning and Fine-Tuning¶

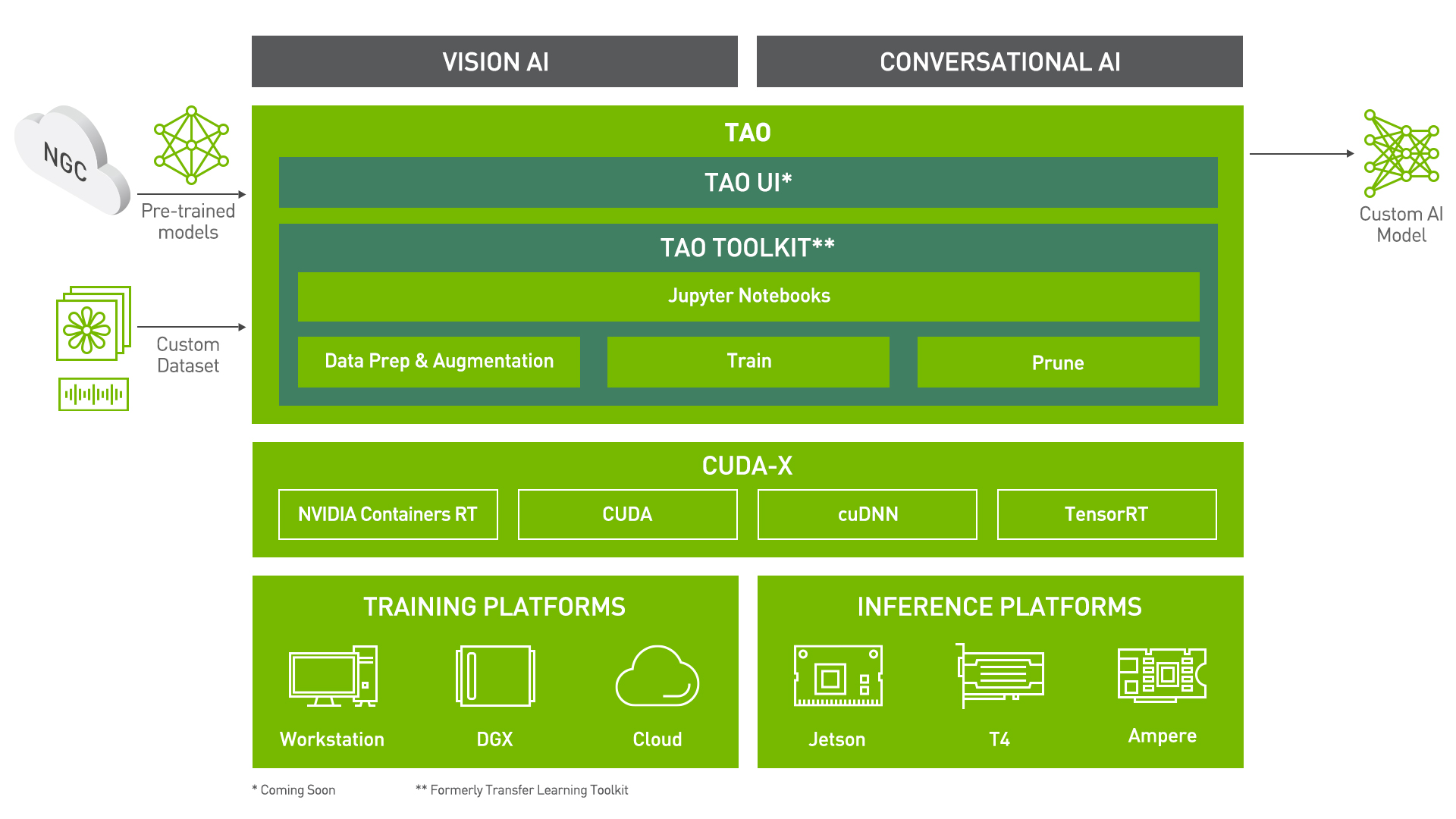

TAO Toolkit is a Python-based AI toolkit for taking purpose-built pre-trained AI models and customizing them with your own data. Transfer learning carries the features learned by an existing neural network over to a new one. It is often used to improve the baseline performance of state-of-the-art models when creating a large training dataset is not feasible.

For this call center solution, the speech-to-text and sentiment analysis models are fine-tuned on call center data to improve model performance on business-specific terminology.

For more information on the TAO Toolkit, please visit here.

Installing necessary dependencies¶

For ease of use, please install TAO Toolkit inside a python virtual environment. We recommend performing this step first and then launching the notebook from the virtual environment. Please refer to the README for these instructions.
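As a quick sanity check before launching the notebook, the snippet below is a minimal sketch of how one might confirm that the interpreter is running inside a virtual environment. The helper name `in_virtual_environment` is hypothetical, and the check covers `venv`/`virtualenv` environments only; a conda environment would not be detected this way.

```python
import sys


def in_virtual_environment() -> bool:
    """Return True when the interpreter runs inside a venv/virtualenv."""
    # Inside a virtual environment, sys.prefix points at the environment,
    # while sys.base_prefix still points at the base Python installation.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


if not in_virtual_environment():
    print("Warning: no active virtual environment detected.")
```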

Importing Libraries¶

import os

import re

import glob

import wave

import random

import contextlib

from tqdm.notebook import tqdm

from utils_TLT import (

prepare_train_test_manifests,

)

Use these constants to affect different aspects of this pipeline:

- DATA_DIR: base folder where data is stored
- DATASET_NAME: name of the dataset
- RIVA_MODEL_DIR: directory where the exported models will be saved (.riva and .rmir)
- STT_MODEL_NAME: name of the speech-to-text model
- SEA_MODEL_NAME: name of the sentiment analysis model

In the variable names, the STT prefix refers to the speech-to-text model and the SEA prefix to the sentiment analysis model.

NOTE: MAKE SURE THESE CONSTANTS ALIGN WITH Call Center - Sentiment Analysis Pipeline.ipynb¶

DATA_DIR = "data"

DATASET_NAME = "ReleasedDataset_mp3"

RIVA_MODEL_DIR = "/sfl_data/riva/models"

STT_MODEL_NAME = "speech-to-text-model.riva"

SEA_MODEL_NAME = "sentiment-analysis-model.riva"
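To illustrate how these constants fit together, here is a small sketch that derives the full path of each exported model and rejects names without the `.riva` extension. The helper `exported_model_paths` is hypothetical and is not part of the notebook or of TAO Toolkit.

```python
import os

RIVA_MODEL_DIR = "/sfl_data/riva/models"
STT_MODEL_NAME = "speech-to-text-model.riva"
SEA_MODEL_NAME = "sentiment-analysis-model.riva"


def exported_model_paths(model_dir, *model_names):
    """Map each model file name to its full path inside model_dir."""
    paths = {}
    for name in model_names:
        # The constants above name .riva files, so flag anything else early.
        if not name.endswith(".riva"):
            raise ValueError("expected a .riva file name, got %r" % name)
        paths[name] = os.path.join(model_dir, name)
    return paths


for name, path in exported_model_paths(
    RIVA_MODEL_DIR, STT_MODEL_NAME, SEA_MODEL_NAME
).items():
    print(name, "->", path)
```

Deriving the paths in one place makes it easier to keep this notebook aligned with the sentiment analysis pipeline notebook, which is expected to use the same values.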

Setting up directories¶

After installing TAO Toolkit, the next step is to set up the mounts. The TAO Toolkit launcher uses Docker containers under the hood, so the data and results directories must be mapped into the container to be visible to it. The launcher is configured through the file ~/.tao_mounts.json. Apart from the mounts, you can also configure additional options such as environment variables and the amount of shared memory available to the TAO Toolkit launcher.

The code below creates a ~/.tao_mounts.json file. This maps directories in which we save the data, specs, results and cache. You should configure it for your specific case so these directories are correctly visible to the docker container. The source directories are found on the host machine and use the HOST tag in the variable names (e.g. STT_HOST_CONFIG_DIR). The destination directories are found on the docker container created by the TAO Toolkit and use the TAO tag in the variable names (e.g. STT_TAO_CONFIG_DIR).

HOST_DATA_DIR = "/sfl_data/devs/diego/NetApp_JarvisDemo/data"

# Speech to Text #

STT_HOST_CONFIG_DIR = "/sfl_data/tao/config/speech_to_text"

STT_HOST_RESULTS_DIR = "/sfl_data/tao/results/speech_to_text"

STT_HOST_CACHE_DIR = "/sfl_data/tao/.cache/speech_to_text"

# Sentiment Analysis #

SEA_HOST_CONFIG_DIR = "/sfl_data/tao/config/sentiment_analysis"

SEA_HOST_RESULTS_DIR = "/sfl_data/tao/results/sentiment_analysis"

SEA_HOST_CACHE_DIR = "/sfl_data/tao/.cache/sentiment_analysis"

!mkdir -p $STT_HOST_CONFIG_DIR

!mkdir -p $STT_HOST_RESULTS_DIR

!mkdir -p $STT_HOST_CACHE_DIR

!mkdir -p $SEA_HOST_CONFIG_DIR

!mkdir -p $SEA_HOST_RESULTS_DIR

!mkdir -p $SEA_HOST_CACHE_DIR

%%bash

tee ~/.tao_mounts.json <<'EOF'

{

"Mounts":[

{

"source": "/sfl_data/devs/diego/NetApp_JarvisDemo/data" ,

"destination": "/data"

},

{

"source": "/sfl_data/tao/config" ,

"destination": "/config"

},

{

"source": "/sfl_data/tao/results" ,

"destination": "/results"

},

{

"source": "/sfl_data/tao/.cache",

"destination": "/root/.cache"

}

],

"DockerOptions": {

"shm_size": "128G",

"ulimits": {

"memlock": -1,

"stack": 67108864

}

}

}

EOF

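Because the heredoc above hard-codes the host paths, they can drift from the HOST variables defined earlier. As an alternative, the following sketch builds the same structure in Python and serializes it with `json`. The helper names and the `host_tao_root` parameter are assumptions introduced for illustration; the mount destinations and Docker options are copied from the file above.

```python
import json
import os


def build_tao_mounts(host_data_dir, host_tao_root, shm_size="128G"):
    """Return the structure of ~/.tao_mounts.json as a dictionary."""
    return {
        "Mounts": [
            {"source": host_data_dir, "destination": "/data"},
            {"source": os.path.join(host_tao_root, "config"), "destination": "/config"},
            {"source": os.path.join(host_tao_root, "results"), "destination": "/results"},
            {"source": os.path.join(host_tao_root, ".cache"), "destination": "/root/.cache"},
        ],
        "DockerOptions": {
            "shm_size": shm_size,
            "ulimits": {"memlock": -1, "stack": 67108864},
        },
    }


def write_tao_mounts(mounts, path):
    """Write the mounts dictionary to path as indented JSON."""
    with open(path, "w") as handle:
        json.dump(mounts, handle, indent=4)


mounts = build_tao_mounts(
    host_data_dir="/sfl_data/devs/diego/NetApp_JarvisDemo/data",
    host_tao_root="/sfl_data/tao",  # hypothetical name for the common parent
)
print(json.dumps(mounts, indent=4))
# To apply it: write_tao_mounts(mounts, os.path.expanduser("~/.tao_mounts.json"))
```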

Check if the GPUs are available using the nvidia-smi command.

!nvidia-smi

Mon Sep 20 17:01:21 2021

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 470.57.02 Driver Version: 470.57.02 CUDA Version: 11.4 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|===============================+======================+======================|

| 0 Tesla V100-SXM2... On | 00000000:06:00.0 Off | 0 |

| N/A 37C P0 82W / 300W | 7670MiB / 32510MiB | 48% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 1 Tesla V100-SXM2... On | 00000000:07:00.0 Off | 0 |

| N/A 35C P0 44W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 2 Tesla V100-SXM2... On | 00000000:0A:00.0 Off | 0 |

| N/A 35C P0 43W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 3 Tesla V100-SXM2... On | 00000000:0B:00.0 Off | 0 |

| N/A 32C P0 44W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 4 Tesla V100-SXM2... On | 00000000:85:00.0 Off | 0 |

| N/A 33C P0 44W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 5 Tesla V100-SXM2... On | 00000000:86:00.0 Off | 0 |

| N/A 36C P0 45W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 6 Tesla V100-SXM2... On | 00000000:89:00.0 Off | 0 |

| N/A 36C P0 45W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

| 7 Tesla V100-SXM2... On | 00000000:8A:00.0 Off | 0 |

| N/A 35C P0 42W / 300W | 0MiB / 32510MiB | 0% Default |

| | | N/A |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=============================================================================|

| 0 N/A N/A 1994212 C tritonserver 7667MiB |

+-----------------------------------------------------------------------------+
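When the GPU status needs to be inspected programmatically rather than read from the table, `nvidia-smi` can emit CSV through its `--query-gpu` and `--format` options. The sketch below parses that output; the function names and the dictionary keys are hypothetical, and the sample line reuses the values reported for GPU 0 above (with the GPU name left truncated as it appears there).

```python
import shutil
import subprocess

QUERY_FIELDS = ["index", "name", "memory.used", "memory.total", "utilization.gpu"]


def _to_int(text):
    """Convert a field to int, or None for values such as '[N/A]'."""
    try:
        return int(text)
    except ValueError:
        return None


def parse_gpu_csv(text):
    """Parse 'csv,noheader,nounits' output into a list of dictionaries."""
    gpus = []
    for line in text.strip().splitlines():
        parts = [part.strip() for part in line.split(",")]
        if len(parts) != len(QUERY_FIELDS):
            continue  # skip lines that do not match the requested fields
        index, name, used, total, util = parts
        gpus.append({
            "index": _to_int(index),
            "name": name,
            "memory_used_mib": _to_int(used),
            "memory_total_mib": _to_int(total),
            "utilization_pct": _to_int(util),
        })
    return gpus


def query_gpus():
    """Run nvidia-smi and return parsed GPU records ([] if it is missing)."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=" + ",".join(QUERY_FIELDS),
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


sample = "0, Tesla V100-SXM2..., 7670, 32510, 48\n"
for gpu in parse_gpu_csv(sample):
    print(gpu)
```

A check of this kind can help pick an idle GPU before training, for example by skipping devices whose memory is already in use by another process.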

You can check the docker image versions and the tasks that TAO Toolkit can perform with tao --help or tao info.

NetApp DataOps Toolkit¶

The massive volume of calls that a call center must process on a daily basis means that a database can be quickly overwhelmed by audio files. Efficiently managing the processing and transfer of these audio files is an integral part of the model training and fine-tuning.

The data processing steps can be facilitated through the use of the NetApp DataOps Toolkit. This toolkit is a Python library that makes it simple for developers, data scientists, DevOps engineers, and data engineers to perform various data management tasks, such as provisioning a new data volume, near-instantaneously cloning a data volume, and near-instantaneously snapshotting a data volume for traceability/baselining.

Installation and usage of the NetApp DataOps Toolkit for Traditional Environments requires that Python 3.6 or above be installed on the local host. Additionally, the toolkit requires that pip for Python3 be installed.
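These prerequisites can be verified from Python before attempting the installation. The following is a minimal sketch; `check_dataops_prerequisites` is a hypothetical helper and is not part of the NetApp DataOps Toolkit.

```python
import importlib.util
import sys

MIN_PYTHON = (3, 6)


def check_dataops_prerequisites(version_info=sys.version_info):
    """Return a list of unmet prerequisites (empty when all are satisfied)."""
    problems = []
    if tuple(version_info[:2]) < MIN_PYTHON:
        problems.append("Python %d.%d or above is required" % MIN_PYTHON)
    # find_spec returns None when the pip package cannot be imported.
    if importlib.util.find_spec("pip") is None:
        problems.append("pip for Python 3 is not installed")
    return problems


for problem in check_dataops_prerequisites():
    print("Missing prerequisite:", problem)
```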

For more information on the NetApp DataOps Toolkit, click here.

To install the NetApp DataOps Toolkit for Traditional Environments, run the following command.

python3 -m pip install netapp-dataops-traditional

A config file must be created before the NetApp DataOps Toolkit for Traditional Environments can be used to perform data management operations. To create a config file, run the following command. This command will create a config file named 'config.json' in '~/.netapp_dataops/'.

netapp_dataops_cli.py config

Speech-to-Text¶

The speech-to-text model (also known as Automatic Speech Recognition, or ASR) is part of NVIDIA's TAO Conversational AI Toolkit. This toolkit can train models for common conversational AI tasks such as text classification, question answering, speech recognition, and more.

For an overview of the Conversational AI Toolkit, click here.

Set TAO Toolkit Paths¶

NOTE: The following paths are set from the perspective of the TAO Toolkit Docker.

# the data directory structure is based off main_RIVA.ipynb

# the config and results are manually created

STT_TAO_DATA_DIR = "/data"

STT_TAO_CONFIG_DIR = "/config/speech_to_text"

STT_TAO_RESULTS_DIR = "/results/speech_to_text"

# The encryption key from config.sh. Use the same key for all commands

KEY = 'tlt_encode'

Downloading Specs¶

TAO's Conversational AI Toolkit works off of spec files which make it easy to edit hyperparameters on the fly. We can proceed to downloading the spec files. The user may choose to modify/rewrite these specs, or even individually override them through the launcher. You can download the default spec files by using the download_specs command.

The -o argument indicates the folder where the default spec files will be downloaded, and -r tells the script where to save the logs. Make sure -o points to an empty folder. If the folder already contains files, for example because the specs were downloaded earlier, the command fails with a FileExistsError rather than overwriting them.

For more information on how to build and deploy models using the TAO Toolkit, visit here.

!tao speech_to_text download_specs \

-r $STT_TAO_RESULTS_DIR \

-o $STT_TAO_CONFIG_DIR

2021-09-20 17:01:22,711 [INFO] root: Registry: ['nvcr.io']

2021-09-20 17:01:22,804 [WARNING] tlt.components.docker_handler.docker_handler:

Docker will run the commands as root. If you would like to retain your

local host permissions, please add the "user":"UID:GID" in the

DockerOptions portion of the "/root/.tao_mounts.json" file. You can obtain your

users UID and GID by using the "id -u" and "id -g" commands on the

terminal.

[NeMo W 2021-09-20 21:01:26 experimental:27] Module <class 'nemo.collections.asr.losses.ctc.CTCLoss'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[nltk_data] Downloading package averaged_perceptron_tagger to

[nltk_data] /root/nltk_data...

[nltk_data] Unzipping taggers/averaged_perceptron_tagger.zip.

[nltk_data] Downloading package cmudict to /root/nltk_data...

[nltk_data] Unzipping corpora/cmudict.zip.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioToCharDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioToBPEDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioLabelDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text._TarredAudioToTextDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.TarredAudioToCharDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.TarredAudioToBPEDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:27 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text_dali.AudioToCharDALIDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo I 2021-09-20 21:01:29 tlt_logging:20] Experiment configuration:

exp_manager:

task_name: download_specs

explicit_log_dir: /results/speech_to_text

source_data_dir: /opt/conda/lib/python3.8/site-packages/asr/speech_to_text/experiment_specs

target_data_dir: /config/speech_to_text

workflow: asr

[NeMo W 2021-09-20 21:01:29 exp_manager:26] Exp_manager is logging to `/results/speech_to_text``, but it already exists.

Traceback (most recent call last):

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 198, in run_and_report

return func()

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 347, in <lambda>

lambda: hydra.run(

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/hydra.py", line 107, in run

return run_job(

File "/opt/conda/lib/python3.8/site-packages/hydra/core/utils.py", line 127, in run_job

ret.return_value = task_function(task_cfg)

File "/tlt-nemo/tlt_utils/download_specs.py", line 59, in main

FileExistsError: The target directory `/config/speech_to_text` is not empty!

In order to avoid overriding the existing spec files please point to a different folder.

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "/tlt-nemo/tlt_utils/download_specs.py", line 78, in <module>

File "/opt/conda/lib/python3.8/site-packages/nemo/core/config/hydra_runner.py", line 98, in wrapper

_run_hydra(

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 346, in _run_hydra

run_and_report(

File "/opt/conda/lib/python3.8/site-packages/hydra/_internal/utils.py", line 237, in run_and_report

assert mdl is not None

AssertionError

2021-09-20 17:01:30,776 [INFO] tlt.components.docker_handler.docker_handler: Stopping container.
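The FileExistsError in the log above is raised because the target directory already contains spec files. One way to avoid the traceback is to check the host-side directory first and only call download_specs when it is empty. The sketch below assumes that STT_HOST_CONFIG_DIR on the host is what the container sees as /config/speech_to_text, as set in the mounts file; `is_empty_dir` is a hypothetical helper.

```python
import os


def is_empty_dir(path):
    """Return True if path does not exist yet or has no entries."""
    return not os.path.isdir(path) or not os.listdir(path)


STT_HOST_CONFIG_DIR = "/sfl_data/tao/config/speech_to_text"

if is_empty_dir(STT_HOST_CONFIG_DIR):
    print("Target is empty: download_specs can be run.")
else:
    print("Specs already present: skipping download_specs.")
```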

Overwrite Specs¶

The default speech-to-text fine-tuning spec targets the Russian language. finetune.yaml must be overwritten for the pipeline to work in English.

NOTE: THE PATH TO finetune.yaml MUST ALIGN WITH STT_HOST_CONFIG_DIR. If you change the STT_HOST_CONFIG_DIR, make sure you change the path between tee and <<.

STT_HOST_CONFIG_DIR

'/sfl_data/tao/config/speech_to_text'

%%bash
tee /sfl_data/tao/config/speech_to_text/finetune.yaml <<'EOF'
trainer:
  max_epochs: 1 # This is low for demo purposes

# Whether or not to change the decoder vocabulary.
# Note that this MUST be set if the labels change, e.g. to a different language's character set
# or if additional punctuation characters are added.
change_vocabulary: true

# Fine-tuning settings: training dataset
finetuning_ds:
  manifest_filepath: ???
  sample_rate: 16000
  labels: [" ", "a", "b", "c", "d", "e", "f", "g", "h", "i", "j", "k", "l", "m",
           "n", "o", "p", "q", "r", "s", "t", "u", "v", "w", "x", "y", "z", "'"]
  batch_size: 16
  trim_silence: false
  max_duration: 16.7
  shuffle: true
  is_tarred: false
  tarred_audio_filepaths: null

# Fine-tuning settings: validation dataset
validation_ds:
  manifest_filepath: ???
  sample_rate: 16000
  labels: [" ", "a", "b", "c", "d", "e", "f", "g", "h", "i", "j", "k", "l", "m",
           "n", "o", "p", "q", "r", "s", "t", "u", "v", "w", "x", "y", "z", "'"]
  batch_size: 32
  shuffle: false

# Fine-tuning settings: optimizer
optim:
  name: novograd
  lr: 0.001
EOF

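Since the path given to tee has to be kept in step with STT_HOST_CONFIG_DIR by hand, one option is to write the spec from Python and derive the path from the variable. This is only a sketch: the helper names are hypothetical, and the nesting of the YAML keys is inferred from the overrides used later (trainer.max_epochs, finetuning_ds.manifest_filepath, optim.lr). The label list is generated rather than typed out, relying on the fact that a JSON list is also a valid YAML flow sequence.

```python
import json
import os
import string

# Space, a-z, and apostrophe: the same 28 labels as in the spec above.
LABELS = [" "] + list(string.ascii_lowercase) + ["'"]

SPEC_TEMPLATE = """\
trainer:
  max_epochs: 1  # This is low for demo purposes

change_vocabulary: true

finetuning_ds:
  manifest_filepath: ???
  sample_rate: 16000
  labels: {labels}
  batch_size: 16
  trim_silence: false
  max_duration: 16.7
  shuffle: true
  is_tarred: false
  tarred_audio_filepaths: null

validation_ds:
  manifest_filepath: ???
  sample_rate: 16000
  labels: {labels}
  batch_size: 32
  shuffle: false

optim:
  name: novograd
  lr: 0.001
"""


def render_finetune_spec():
    """Return the text of finetune.yaml with the English label set."""
    return SPEC_TEMPLATE.format(labels=json.dumps(LABELS))


def write_finetune_spec(config_dir):
    """Write finetune.yaml into config_dir and return its path."""
    path = os.path.join(config_dir, "finetune.yaml")
    with open(path, "w") as handle:
        handle.write(render_finetune_spec())
    return path


print(render_finetune_spec())
# To apply it: write_finetune_spec(STT_HOST_CONFIG_DIR)
```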

if not os.path.exists(os.path.join(STT_HOST_CONFIG_DIR, "speechtotext_english_jasper.tlt")):
    !wget -O $STT_HOST_CONFIG_DIR/speechtotext_english_jasper.tlt https://api.ngc.nvidia.com/v2/models/nvidia/tlt-jarvis/speechtotext_english_jasper/versions/trainable_v1.2/files/speechtotext_english_jasper.tlt

Fine-Tuning¶

Before fine-tuning, we need to create a manifest for the train and test sets. There are a handful of parameters used to create this manifest:

- N_SAMPLE: number of calls to sample
- MAX_DURATION: maximum duration for the WAV files (for Jasper, 16.7 is the maximum)
- SET_SIZES: dictionary of set sizes (must include "train", "valid" and "test")
- COMPANY_BLACKLIST: blacklist of files to remove
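To make the role of MAX_DURATION concrete, the sketch below measures the length of a WAV file with the standard `wave` module and compares it against the limit. The notebook imports `wave` and `contextlib`, which suggests a similar approach, but the actual filtering inside `utils_TLT` is not shown here, so treat the helper names as hypothetical.

```python
import contextlib
import os
import tempfile
import wave

MAX_DURATION = 16.7  # seconds


def wav_duration_seconds(path):
    """Return the duration of a WAV file in seconds."""
    with contextlib.closing(wave.open(path, "rb")) as wav:
        return wav.getnframes() / float(wav.getframerate())


def within_max_duration(path, max_duration=MAX_DURATION):
    """Return True if the clip is short enough to be used for training."""
    return wav_duration_seconds(path) <= max_duration


def write_silence(path, seconds, sample_rate=16000):
    """Write a mono 16-bit WAV file of silence (used for the demo below)."""
    with contextlib.closing(wave.open(path, "wb")) as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(sample_rate)
        wav.writeframes(b"\x00\x00" * int(seconds * sample_rate))


with tempfile.TemporaryDirectory() as tmp_dir:
    demo_path = os.path.join(tmp_dir, "demo.wav")
    write_silence(demo_path, seconds=1.0)
    print(wav_duration_seconds(demo_path), within_max_duration(demo_path))
```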

COMPANY_BLACKLIST = [

"Hormel Foods Corp._20170223",

"Kraft Heinz Co_20170503",

"Amazon.com Inc._20170202",

"Vulcan Materials_20170802",

"Masco Corp._20171024",

"Fortive Corp_20170207",

"Salesforce.com_20170228",

"Home Depot_20170516",

"Hasbro Inc._20170206",

"Exxon Mobil Corp._20171027",

"Biogen Inc._20170126",

"Goodyear Tire & Rubber_20170428",

"Alaska Air Group Inc_20171025",

"FleetCor Technologies Inc_20170803",

"Roper Technologies_20170209",

"Foot Locker Inc_20170224",

"Starbucks Corp._20170126",

"Dover Corp._20170720",

"Xerox_20170801",

"AT&T Inc._2017042",

"AT&T Inc._20170425",

"Salesforce.com_20170822",

"Varian Medical Systems_20171025",

]

N_SAMPLE = 200

MAX_DURATION = 16.7

SET_SIZES = {

"train": 0.75,

"valid": 0.20,

"test": 0.05,

}

prepare_train_test_manifests(

output_path = STT_HOST_CONFIG_DIR,

host_data_dir = HOST_DATA_DIR,

tlt_data_dir = STT_TAO_DATA_DIR,

dataset_name = DATASET_NAME,

set_sizes = SET_SIZES,

max_duration = MAX_DURATION,

company_blacklist = COMPANY_BLACKLIST,

n_sample = N_SAMPLE,

)


Unable to load earnings call 'Amazon.com Inc._20170202' [ERROR] list index out of range

Unable to load earnings call 'Foot Locker Inc_20170224' [ERROR] list index out of range

Unable to load earnings call 'F5 Networks_20170726' [ERROR] list index out of range

Unable to load earnings call 'Xcel Energy Inc_20170202' [ERROR] list index out of range

Unable to load earnings call 'Goodyear Tire & Rubber_20170428' [ERROR] list index out of range

Skipped Iron Mountain Incorporated_20170728

Unable to load earnings call 'Biogen Inc._20170126' [ERROR] list index out of range

Unable to load earnings call 'ResMed_20170427' [ERROR] list index out of range

Unable to load earnings call 'JPMorgan Chase & Co._20170714' [ERROR] list index out of range

Unable to load earnings call 'Celgene Corp._20170427' [ERROR] list index out of range

Unable to load earnings call 'Comerica Inc._20170418' [ERROR] list index out of range

Unable to load earnings call 'Home Depot_20170516' [ERROR] list index out of range

Unable to load earnings call 'Halliburton Co._20171023' [ERROR] list index out of range

Unable to load earnings call 'Western Union Co_20171102' [ERROR] list index out of range

Unable to load earnings call 'Grainger (W.W.) Inc._20170719' [ERROR] list index out of range

Unable to load earnings call 'United Parcel Service_20170727' [ERROR] list index out of range

Unable to load earnings call 'Coca-Cola Company (The)_20170726' [ERROR] list index out of range

Unable to load earnings call 'Skyworks Solutions_20171106' [ERROR] list index out of range

Unable to load earnings call 'Walmart_20171116' [ERROR] list index out of range

Unable to load earnings call 'Salesforce.com_20170228' [ERROR] list index out of range

Unable to load earnings call 'Fortive Corp_20170207' [ERROR] list index out of range

Unable to load earnings call 'Hologic_20171108' [ERROR] list index out of range

Skipped CMS Energy_20170202

Unable to load earnings call 'Western Union Co_20170502' [ERROR] list index out of range

Skipped Alexion Pharmaceuticals_20170427

Skipped DTE Energy Co._20170726

Unable to load earnings call 'Salesforce.com_20170822' [ERROR] list index out of range

Saved train and test manifests to /sfl_data/tao/config/speech_to_text
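The implementation of prepare_train_test_manifests lives in utils_TLT and is not shown in this notebook. As a rough illustration of what such a helper might do, the sketch below shuffles a list of entries, splits it according to SET_SIZES, and renders each split in the JSON-lines manifest format that NeMo ASR models read (one object per line with audio_filepath, duration and text). The function names, the seed and the example file paths are all hypothetical.

```python
import json
import random

SET_SIZES = {"train": 0.75, "valid": 0.20, "test": 0.05}


def split_entries(entries, set_sizes, seed=0):
    """Shuffle entries and split them into train/valid/test lists."""
    if abs(sum(set_sizes.values()) - 1.0) > 1e-6:
        raise ValueError("set sizes must sum to 1")
    shuffled = list(entries)
    random.Random(seed).shuffle(shuffled)
    n_train = int(len(shuffled) * set_sizes["train"])
    n_valid = int(len(shuffled) * set_sizes["valid"])
    return {
        "train": shuffled[:n_train],
        "valid": shuffled[n_train:n_train + n_valid],
        # The test set takes whatever remains after rounding.
        "test": shuffled[n_train + n_valid:],
    }


def manifest_lines(entries):
    """Render entries as a JSON-lines manifest (one JSON object per line)."""
    return "\n".join(json.dumps(entry) for entry in entries) + "\n"


# Hypothetical entries; real ones would point at the segmented WAV files.
entries = [
    {"audio_filepath": "/data/example/clip_%03d.wav" % i,
     "duration": 5.0,
     "text": "hello"}
    for i in range(100)
]
splits = split_entries(entries, SET_SIZES)
print({name: len(items) for name, items in splits.items()})
print(manifest_lines(splits["test"][:1]), end="")
```

Fixing the seed keeps the split reproducible between runs, which matters when comparing fine-tuned models against the same validation set.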

Once the pretrained model is in place and the manifests are created, the following command can be used to fine-tune the ASR model.

For more information on how to build and deploy models using the TAO Toolkit, visit here.
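The fine-tuning command takes a long list of key=value overrides after its flags. When the command is assembled in Python, keeping the overrides in a dictionary makes it harder to pass the same key twice by accident. The sketch below only builds and prints the command line; `format_value` and `build_overrides` are hypothetical helpers, and the flags and keys mirror the ones used in this notebook.

```python
import shlex


def format_value(value):
    """Render a Python value the way the spec files write it."""
    if isinstance(value, bool):
        return "true" if value else "false"
    return str(value)


def build_overrides(overrides):
    """Turn a dictionary into a list of key=value command-line overrides."""
    return ["%s=%s" % (key, format_value(value)) for key, value in overrides.items()]


STT_TAO_CONFIG_DIR = "/config/speech_to_text"
STT_TAO_RESULTS_DIR = "/results/speech_to_text"
MAX_DURATION = 16.7

overrides = {
    "finetuning_ds.manifest_filepath": STT_TAO_CONFIG_DIR + "/train_manifest.json",
    "validation_ds.manifest_filepath": STT_TAO_CONFIG_DIR + "/valid_manifest.json",
    "trainer.max_epochs": 20,
    "finetuning_ds.max_duration": MAX_DURATION,
    "validation_ds.max_duration": MAX_DURATION,
    "finetuning_ds.trim_silence": False,
    "validation_ds.trim_silence": False,
    "optim.lr": 0.001,
}

command = [
    "tao", "speech_to_text", "finetune",
    "-e", STT_TAO_CONFIG_DIR + "/finetune.yaml",
    "-g", "1",
    "-m", STT_TAO_CONFIG_DIR + "/speechtotext_english_jasper.tlt",
    "-r", STT_TAO_RESULTS_DIR + "/finetune",
] + build_overrides(overrides)

# The -k encryption key is left out here so it is not echoed to the output.
print(" ".join(shlex.quote(part) for part in command))
```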

!tao speech_to_text finetune \

-e $STT_TAO_CONFIG_DIR/finetune.yaml \

-g 1 \

-k $KEY \

-m $STT_TAO_CONFIG_DIR/speechtotext_english_jasper.tlt \

-r $STT_TAO_RESULTS_DIR/finetune \

finetuning_ds.manifest_filepath=$STT_TAO_CONFIG_DIR/train_manifest.json \

validation_ds.manifest_filepath=$STT_TAO_CONFIG_DIR/valid_manifest.json \

trainer.max_epochs=20 \

finetuning_ds.max_duration=$MAX_DURATION \

validation_ds.max_duration=$MAX_DURATION \

finetuning_ds.trim_silence=false \

validation_ds.trim_silence=false \

finetuning_ds.batch_size=16 \

finetuning_ds.num_workers=16 \

validation_ds.num_workers=16 \

trainer.gpus=1 \

optim.lr=0.001

2021-09-20 17:01:51,118 [INFO] root: Registry: ['nvcr.io']

2021-09-20 17:01:51,210 [WARNING] tlt.components.docker_handler.docker_handler:

Docker will run the commands as root. If you would like to retain your

local host permissions, please add the "user":"UID:GID" in the

DockerOptions portion of the "/root/.tao_mounts.json" file. You can obtain your

users UID and GID by using the "id -u" and "id -g" commands on the

terminal.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.losses.ctc.CTCLoss'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[nltk_data] Downloading package averaged_perceptron_tagger to

[nltk_data] /root/nltk_data...

[nltk_data] Unzipping taggers/averaged_perceptron_tagger.zip.

[nltk_data] Downloading package cmudict to /root/nltk_data...

[nltk_data] Unzipping corpora/cmudict.zip.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioToCharDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioToBPEDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioLabelDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text._TarredAudioToTextDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.TarredAudioToCharDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:55 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.TarredAudioToBPEDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:56 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text_dali.AudioToCharDALIDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:57 experimental:27] Module <class 'nemo.collections.asr.losses.ctc.CTCLoss'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioToCharDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioToBPEDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.AudioLabelDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text._TarredAudioToTextDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.TarredAudioToCharDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text.TarredAudioToBPEDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo W 2021-09-20 21:01:59 experimental:27] Module <class 'nemo.collections.asr.data.audio_to_text_dali.AudioToCharDALIDataset'> is experimental, not ready for production and is not fully supported. Use at your own risk.

[NeMo I 2021-09-20 21:02:00 tlt_logging:20] Experiment configuration:

restore_from: /config/speech_to_text/speechtotext_english_jasper.tlt

save_to: ???

exp_manager:

explicit_log_dir: /results/speech_to_text/finetune

exp_dir: null

name: finetuned-model

version: null

use_datetime_version: true

resume_if_exists: true

resume_past_end: false

resume_ignore_no_checkpoint: true

create_tensorboard_logger: false

summary_writer_kwargs: null

create_wandb_logger: false

wandb_logger_kwargs: null

create_checkpoint_callback: true

checkpoint_callback_params:

filepath: null

monitor: val_loss

verbose: true

save_last: true

save_top_k: 3

save_weights_only: false

mode: auto

period: 1

prefix: null

postfix: .tlt

save_best_model: false

files_to_copy: null

trainer:

logger: false

checkpoint_callback: false

callbacks: null

default_root_dir: null

gradient_clip_val: 0.0

process_position: 0

num_nodes: 1

num_processes: 1

gpus: 1

auto_select_gpus: false

tpu_cores: null

log_gpu_memory: null

progress_bar_refresh_rate: 1

overfit_batches: 0.0

track_grad_norm: -1

check_val_every_n_epoch: 1

fast_dev_run: false

accumulate_grad_batches: 1

max_epochs: 20

min_epochs: 1

max_steps: null

min_steps: null

limit_train_batches: 1.0

limit_val_batches: 1.0

limit_test_batches: 1.0

val_check_interval: 1.0

flush_logs_every_n_steps: 100

log_every_n_steps: 50

accelerator: ddp

sync_batchnorm: false

precision: 32

weights_summary: full

weights_save_path: null

num_sanity_val_steps: 2

truncated_bptt_steps: null

resume_from_checkpoint: null

profiler: null

benchmark: false

deterministic: false

reload_dataloaders_every_epoch: false

auto_lr_find: false

replace_sampler_ddp: true

terminate_on_nan: false

auto_scale_batch_size: false

prepare_data_per_node: true

amp_backend: native

amp_level: O0

change_vocabulary: true

finetuning_ds:

manifest_filepath: /config/speech_to_text/train_manifest.json

batch_size: 16

sample_rate: 16000

labels:

- ' '

- a

- b

- c

- d

- e

- f

- g

- h

- i

- j

- k

- l

- m

- 'n'

- o

- p

- q

- r

- s

- t

- u

- v

- w

- x

- 'y'

- z

- ''''

num_workers: 16

trim_silence: false

shuffle: true

max_duration: 16.7

is_tarred: false

tarred_audio_filepaths: null

validation_ds:

manifest_filepath: /config/speech_to_text/valid_manifest.json

batch_size: 32

sample_rate: 16000

labels:

- ' '

- a

- b

- c

- d

- e

- f

- g

- h

- i

- j

- k

- l

- m

- 'n'

- o

- p

- q

- r

- s

- t

- u

- v

- w

- x

- 'y'

- z

- ''''

num_workers: 16

trim_silence: false

shuffle: false

max_duration: 16.7

is_tarred: false

tarred_audio_filepaths: null

optim:

name: novograd

lr: 0.001

encryption_key: '*******'

tlt_checkpoint_interval: 0

GPU available: True, used: True

GPU available: True, used: True

TPU available: None, using: 0 TPU cores

TPU available: None, using: 0 TPU cores

LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0,1,2,3,4,5,6,7]

LOCAL_RANK: 0 - CUDA_VISIBLE_DEVICES: [0,1,2,3,4,5,6,7]

[NeMo W 2021-09-20 21:02:00 exp_manager:380] Exp_manager is logging to /results/speech_to_text/finetune, but it already exists.

[NeMo I 2021-09-20 21:02:00 exp_manager:328] Resuming from /results/speech_to_text/finetune/checkpoints/finetuned-model-last.ckpt

[NeMo I 2021-09-20 21:02:00 exp_manager:194] Experiments will be logged at /results/speech_to_text/finetune

[NeMo W 2021-09-20 21:02:01 nemo_logging:349] /opt/conda/lib/python3.8/site-packages/pytorch_lightning/utilities/distributed.py:49: UserWarning: Checkpoint directory /results/speech_to_text/finetune/checkpoints exists and is not empty.

warnings.warn(*args, **kwargs)

[NeMo W 2021-09-20 21:02:10 modelPT:145] Please call the ModelPT.setup_training_data() method and provide a valid configuration file to setup the train data loader.

Train config :

manifest_filepath: /data/fisher_min5sec/small_manifest.json

batch_size: 32

sample_rate: 16000

labels:

- ' '

- a

- b

- c

- d

- e

- f

- g

- h

- i

- j

- k

- l

- m

- 'n'

- o

- p

- q

- r

- s

- t

- u

- v

- w

- x

- 'y'

- z

- ''''

num_workers: null

trim_silence: true

shuffle: true

max_duration: 16.7

is_tarred: false

tarred_audio_filepaths: null

[NeMo W 2021-09-20 21:02:10 modelPT:152] Please call the ModelPT.setup_validation_data() or ModelPT.setup_multiple_validation_data() method and provide a valid configuration file to setup the validation data loader(s).

Validation config :

manifest_filepath: /data/fisher_min5sec/small_manifest.json

batch_size: 32

sample_rate: 16000

labels:

- ' '

- a

- b

- c

- d

- e

- f

- g

- h

- i

- j

- k

- l

- m

- 'n'

- o

- p

- q

- r

- s

- t

- u

- v

- w

- x

- 'y'

- z

- ''''

num_workers: null

trim_silence: true

shuffle: false

max_duration: null

is_tarred: false

tarred_audio_filepaths: null

[NeMo I 2021-09-20 21:02:10 features:236] PADDING: 16

[NeMo I 2021-09-20 21:02:10 features:252] STFT using torch

[NeMo I 2021-09-20 21:02:45 finetune:119] Model restored from '/config/speech_to_text/speechtotext_english_jasper.tlt'

[NeMo W 2021-09-20 21:02:45 ctc_models:216] Old [' ', 'a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'j', 'k', 'l', 'm', 'n', 'o', 'p', 'q', 'r', 's', 't', 'u', 'v', 'w', 'x', 'y', 'z', "'"] and new [' ', 'a', 'b', 'c', 'd', 'e', 'f', 'g', 'h', 'i', 'j', 'k', 'l', 'm', 'n', 'o', 'p', 'q', 'r', 's', 't', 'u', 'v', 'w', 'x', 'y', 'z', "'"] match. Not changing anything.

[NeMo I 2021-09-20 21:02:46 collections:173] Dataset loaded with 18073 files totalling 45.33 hours

[NeMo I 2021-09-20 21:02:46 collections:174] 0 files were filtered totalling 0.00 hours

[NeMo I 2021-09-20 21:02:47 collections:173] Dataset loaded with 4341 files totalling 11.13 hours

[NeMo I 2021-09-20 21:02:47 collections:174] 0 files were filtered totalling 0.00 hours

[NeMo I 2021-09-20 21:02:47 modelPT:753] Optimizer config = Novograd (

Parameter Group 0

amsgrad: False

betas: (0.95, 0.98)

eps: 1e-08

grad_averaging: False

lr: 0.001

weight_decay: 0

)

[NeMo I 2021-09-20 21:02:47 lr_scheduler:492] Scheduler not initialized as no `sched` config supplied to setup_optimizer()

initializing ddp: GLOBAL_RANK: 0, MEMBER: 1/1

Added key: store_based_barrier_key:1 to store for rank: 0

| Name | Type | Params

-----------------------------------------------------------------------------------------

0 | preprocessor | AudioToMelSpectrogramPreprocessor | 0

1 | preprocessor.featurizer | FilterbankFeatures | 0

2 | encoder | ConvASREncoder | 332 M

3 | encoder.encoder | Sequential | 332 M

4 | encoder.encoder.0 | JasperBlock | 180 K

5 | encoder.encoder.0.mconv | ModuleList | 180 K

6 | encoder.encoder.0.mconv.0 | MaskedConv1d | 180 K

7 | encoder.encoder.0.mconv.0.conv | Conv1d | 180 K

8 | encoder.encoder.0.mconv.1 | BatchNorm1d | 512

9 | encoder.encoder.0.mout | Sequential | 0

10 | encoder.encoder.0.mout.0 | ReLU | 0

11 | encoder.encoder.0.mout.1 | Dropout | 0

12 | encoder.encoder.1 | JasperBlock | 3.7 M

13 | encoder.encoder.1.mconv | ModuleList | 3.6 M

14 | encoder.encoder.1.mconv.0 | MaskedConv1d | 720 K

15 | encoder.encoder.1.mconv.0.conv | Conv1d | 720 K

16 | encoder.encoder.1.mconv.1 | BatchNorm1d | 512

17 | encoder.encoder.1.mconv.3 | Dropout | 0

18 | encoder.encoder.1.mconv.4 | MaskedConv1d | 720 K

19 | encoder.encoder.1.mconv.4.conv | Conv1d | 720 K

20 | encoder.encoder.1.mconv.5 | BatchNorm1d | 512

21 | encoder.encoder.1.mconv.7 | Dropout | 0

22 | encoder.encoder.1.mconv.8 | MaskedConv1d | 720 K

23 | encoder.encoder.1.mconv.8.conv | Conv1d | 720 K

24 | encoder.encoder.1.mconv.9 | BatchNorm1d | 512

25 | encoder.encoder.1.mconv.11 | Dropout | 0

26 | encoder.encoder.1.mconv.12 | MaskedConv1d | 720 K

27 | encoder.encoder.1.mconv.12.conv | Conv1d | 720 K

28 | encoder.encoder.1.mconv.13 | BatchNorm1d | 512

29 | encoder.encoder.1.mconv.15 | Dropout | 0

30 | encoder.encoder.1.mconv.16 | MaskedConv1d | 720 K

31 | encoder.encoder.1.mconv.16.conv | Conv1d | 720 K

32 | encoder.encoder.1.mconv.17 | BatchNorm1d | 512

33 | encoder.encoder.1.res | ModuleList | 66.0 K

34 | encoder.encoder.1.res.0 | ModuleList | 66.0 K

35 | encoder.encoder.1.res.0.0 | MaskedConv1d | 65.5 K

36 | encoder.encoder.1.res.0.0.conv | Conv1d | 65.5 K

37 | encoder.encoder.1.res.0.1 | BatchNorm1d | 512

38 | encoder.encoder.1.mout | Sequential | 0

39 | encoder.encoder.1.mout.1 | Dropout | 0

40 | encoder.encoder.2 | JasperBlock | 3.7 M

41 | encoder.encoder.2.mconv | ModuleList | 3.6 M

42 | encoder.encoder.2.mconv.0 | MaskedConv1d | 720 K

43 | encoder.encoder.2.mconv.0.conv | Conv1d | 720 K

44 | encoder.encoder.2.mconv.1 | BatchNorm1d | 512

45 | encoder.encoder.2.mconv.3 | Dropout | 0

46 | encoder.encoder.2.mconv.4 | MaskedConv1d | 720 K

47 | encoder.encoder.2.mconv.4.conv | Conv1d | 720 K

48 | encoder.encoder.2.mconv.5 | BatchNorm1d | 512

49 | encoder.encoder.2.mconv.7 | Dropout | 0

50 | encoder.encoder.2.mconv.8 | MaskedConv1d | 720 K

51 | encoder.encoder.2.mconv.8.conv | Conv1d | 720 K

52 | encoder.encoder.2.mconv.9 | BatchNorm1d | 512

53 | encoder.encoder.2.mconv.11 | Dropout | 0

54 | encoder.encoder.2.mconv.12 | MaskedConv1d | 720 K

55 | encoder.encoder.2.mconv.12.conv | Conv1d | 720 K

56 | encoder.encoder.2.mconv.13 | BatchNorm1d | 512

57 | encoder.encoder.2.mconv.15 | Dropout | 0

58 | encoder.encoder.2.mconv.16 | MaskedConv1d | 720 K

59 | encoder.encoder.2.mconv.16.conv | Conv1d | 720 K

60 | encoder.encoder.2.mconv.17 | BatchNorm1d | 512

61 | encoder.encoder.2.res | ModuleList | 132 K

62 | encoder.encoder.2.res.0 | ModuleList | 66.0 K

63 | encoder.encoder.2.res.0.0 | MaskedConv1d | 65.5 K

64 | encoder.encoder.2.res.0.0.conv | Conv1d | 65.5 K

65 | encoder.encoder.2.res.0.1 | BatchNorm1d | 512

66 | encoder.encoder.2.res.1 | ModuleList | 66.0 K

67 | encoder.encoder.2.res.1.0 | MaskedConv1d | 65.5 K

68 | encoder.encoder.2.res.1.0.conv | Conv1d | 65.5 K

69 | encoder.encoder.2.res.1.1 | BatchNorm1d | 512

70 | encoder.encoder.2.mout | Sequential | 0

71 | encoder.encoder.2.mout.1 | Dropout | 0

72 | encoder.encoder.3 | JasperBlock | 9.2 M

73 | encoder.encoder.3.mconv | ModuleList | 8.9 M

74 | encoder.encoder.3.mconv.0 | MaskedConv1d | 1.3 M

75 | encoder.encoder.3.mconv.0.conv | Conv1d | 1.3 M

76 | encoder.encoder.3.mconv.1 | BatchNorm1d | 768

77 | encoder.encoder.3.mconv.3 | Dropout | 0

78 | encoder.encoder.3.mconv.4 | MaskedConv1d | 1.9 M

79 | encoder.encoder.3.mconv.4.conv | Conv1d | 1.9 M

80 | encoder.encoder.3.mconv.5 | BatchNorm1d | 768

81 | encoder.encoder.3.mconv.7 | Dropout | 0

82 | encoder.encoder.3.mconv.8 | MaskedConv1d | 1.9 M

83 | encoder.encoder.3.mconv.8.conv | Conv1d | 1.9 M

84 | encoder.encoder.3.mconv.9 | BatchNorm1d | 768

85 | encoder.encoder.3.mconv.11 | Dropout | 0

86 | encoder.encoder.3.mconv.12 | MaskedConv1d | 1.9 M

87 | encoder.encoder.3.mconv.12.conv | Conv1d | 1.9 M

88 | encoder.encoder.3.mconv.13 | BatchNorm1d | 768

89 | encoder.encoder.3.mconv.15 | Dropout | 0

90 | encoder.encoder.3.mconv.16 | MaskedConv1d | 1.9 M

91 | encoder.encoder.3.mconv.16.conv | Conv1d | 1.9 M

92 | encoder.encoder.3.mconv.17 | BatchNorm1d | 768

93 | encoder.encoder.3.res | ModuleList | 297 K

94 | encoder.encoder.3.res.0 | ModuleList | 99.1 K

95 | encoder.encoder.3.res.0.0 | MaskedConv1d | 98.3 K

96 | encoder.encoder.3.res.0.0.conv | Conv1d | 98.3 K

97 | encoder.encoder.3.res.0.1 | BatchNorm1d | 768

98 | encoder.encoder.3.res.1 | ModuleList | 99.1 K

99 | encoder.encoder.3.res.1.0 | MaskedConv1d | 98.3 K

100 | encoder.encoder.3.res.1.0.conv | Conv1d | 98.3 K

101 | encoder.encoder.3.res.1.1 | BatchNorm1d | 768

102 | encoder.encoder.3.res.2 | ModuleList | 99.1 K

103 | encoder.encoder.3.res.2.0 | MaskedConv1d | 98.3 K

104 | encoder.encoder.3.res.2.0.conv | Conv1d | 98.3 K

105 | encoder.encoder.3.res.2.1 | BatchNorm1d | 768

106 | encoder.encoder.3.mout | Sequential | 0

107 | encoder.encoder.3.mout.1 | Dropout | 0

108 | encoder.encoder.4 | JasperBlock | 10.0 M

109 | encoder.encoder.4.mconv | ModuleList | 9.6 M

110 | encoder.encoder.4.mconv.0 | MaskedConv1d | 1.9 M

111 | encoder.encoder.4.mconv.0.conv | Conv1d | 1.9 M

112 | encoder.encoder.4.mconv.1 | BatchNorm1d | 768

113 | encoder.encoder.4.mconv.3 | Dropout | 0

114 | encoder.encoder.4.mconv.4 | MaskedConv1d | 1.9 M

115 | encoder.encoder.4.mconv.4.conv | Conv1d | 1.9 M

116 | encoder.encoder.4.mconv.5 | BatchNorm1d | 768

117 | encoder.encoder.4.mconv.7 | Dropout | 0

118 | encoder.encoder.4.mconv.8 | MaskedConv1d | 1.9 M

119 | encoder.encoder.4.mconv.8.conv | Conv1d | 1.9 M

120 | encoder.encoder.4.mconv.9 | BatchNorm1d | 768

121 | encoder.encoder.4.mconv.11 | Dropout | 0

122 | encoder.encoder.4.mconv.12 | MaskedConv1d | 1.9 M

123 | encoder.encoder.4.mconv.12.conv | Conv1d | 1.9 M

124 | encoder.encoder.4.mconv.13 | BatchNorm1d | 768

125 | encoder.encoder.4.mconv.15 | Dropout | 0

126 | encoder.encoder.4.mconv.16 | MaskedConv1d | 1.9 M

127 | encoder.encoder.4.mconv.16.conv | Conv1d | 1.9 M

128 | encoder.encoder.4.mconv.17 | BatchNorm1d | 768

129 | encoder.encoder.4.res | ModuleList | 445 K

130 | encoder.encoder.4.res.0 | ModuleList | 99.1 K

131 | encoder.encoder.4.res.0.0 | MaskedConv1d | 98.3 K

132 | encoder.encoder.4.res.0.0.conv | Conv1d | 98.3 K

133 | encoder.encoder.4.res.0.1 | BatchNorm1d | 768

134 | encoder.encoder.4.res.1 | ModuleList | 99.1 K

135 | encoder.encoder.4.res.1.0 | MaskedConv1d | 98.3 K

136 | encoder.encoder.4.res.1.0.conv | Conv1d | 98.3 K

137 | encoder.encoder.4.res.1.1 | BatchNorm1d | 768

138 | encoder.encoder.4.res.2 | ModuleList | 99.1 K

139 | encoder.encoder.4.res.2.0 | MaskedConv1d | 98.3 K

140 | encoder.encoder.4.res.2.0.conv | Conv1d | 98.3 K

141 | encoder.encoder.4.res.2.1 | BatchNorm1d | 768

142 | encoder.encoder.4.res.3 | ModuleList | 148 K

143 | encoder.encoder.4.res.3.0 | MaskedConv1d | 147 K

144 | encoder.encoder.4.res.3.0.conv | Conv1d | 147 K

145 | encoder.encoder.4.res.3.1 | BatchNorm1d | 768

146 | encoder.encoder.4.mout | Sequential | 0

147 | encoder.encoder.4.mout.1 | Dropout | 0

148 | encoder.encoder.5 | JasperBlock | 22.0 M

149 | encoder.encoder.5.mconv | ModuleList | 21.2 M

150 | encoder.encoder.5.mconv.0 | MaskedConv1d | 3.3 M

151 | encoder.encoder.5.mconv.0.conv | Conv1d | 3.3 M

152 | encoder.encoder.5.mconv.1 | BatchNorm1d | 1.0 K

153 | encoder.encoder.5.mconv.3 | Dropout | 0

154 | encoder.encoder.5.mconv.4 | MaskedConv1d | 4.5 M

155 | encoder.encoder.5.mconv.4.conv | Conv1d | 4.5 M

156 | encoder.encoder.5.mconv.5 | BatchNorm1d | 1.0 K

157 | encoder.encoder.5.mconv.7 | Dropout | 0

158 | encoder.encoder.5.mconv.8 | MaskedConv1d | 4.5 M

159 | encoder.encoder.5.mconv.8.conv | Conv1d | 4.5 M

160 | encoder.encoder.5.mconv.9 | BatchNorm1d | 1.0 K

161 | encoder.encoder.5.mconv.11 | Dropout | 0

162 | encoder.encoder.5.mconv.12 | MaskedConv1d | 4.5 M

163 | encoder.encoder.5.mconv.12.conv | Conv1d | 4.5 M

164 | encoder.encoder.5.mconv.13 | BatchNorm1d | 1.0 K

165 | encoder.encoder.5.mconv.15 | Dropout | 0

166 | encoder.encoder.5.mconv.16 | MaskedConv1d | 4.5 M

167 | encoder.encoder.5.mconv.16.conv | Conv1d | 4.5 M

168 | encoder.encoder.5.mconv.17 | BatchNorm1d | 1.0 K

169 | encoder.encoder.5.res | ModuleList | 791 K

170 | encoder.encoder.5.res.0 | ModuleList | 132 K

171 | encoder.encoder.5.res.0.0 | MaskedConv1d | 131 K

172 | encoder.encoder.5.res.0.0.conv | Conv1d | 131 K

173 | encoder.encoder.5.res.0.1 | BatchNorm1d | 1.0 K

174 | encoder.encoder.5.res.1 | ModuleList | 132 K

175 | encoder.encoder.5.res.1.0 | MaskedConv1d | 131 K

176 | encoder.encoder.5.res.1.0.conv | Conv1d | 131 K

177 | encoder.encoder.5.res.1.1 | BatchNorm1d | 1.0 K

178 | encoder.encoder.5.res.2 | ModuleList | 132 K

179 | encoder.encoder.5.res.2.0 | MaskedConv1d | 131 K

180 | encoder.encoder.5.res.2.0.conv | Conv1d | 131 K

181 | encoder.encoder.5.res.2.1 | BatchNorm1d | 1.0 K

182 | encoder.encoder.5.res.3 | ModuleList | 197 K

183 | encoder.encoder.5.res.3.0 | MaskedConv1d | 196 K

184 | encoder.encoder.5.res.3.0.conv | Conv1d | 196 K

185 | encoder.encoder.5.res.3.1 | BatchNorm1d | 1.0 K

186 | encoder.encoder.5.res.4 | ModuleList | 197 K

187 | encoder.encoder.5.res.4.0 | MaskedConv1d | 196 K

188 | encoder.encoder.5.res.4.0.conv | Conv1d | 196 K

189 | encoder.encoder.5.res.4.1 | BatchNorm1d | 1.0 K

190 | encoder.encoder.5.mout | Sequential | 0

191 | encoder.encoder.5.mout.1 | Dropout | 0

192 | encoder.encoder.6 | JasperBlock | 23.3 M

193 | encoder.encoder.6.mconv | ModuleList | 22.3 M

194 | encoder.encoder.6.mconv.0 | MaskedConv1d | 4.5 M

195 | encoder.encoder.6.mconv.0.conv | Conv1d | 4.5 M

196 | encoder.encoder.6.mconv.1 | BatchNorm1d | 1.0 K

197 | encoder.encoder.6.mconv.3 | Dropout | 0

198 | encoder.encoder.6.mconv.4 | MaskedConv1d | 4.5 M

199 | encoder.encoder.6.mconv.4.conv | Conv1d | 4.5 M

200 | encoder.encoder.6.mconv.5 | BatchNorm1d | 1.0 K

201 | encoder.encoder.6.mconv.7 | Dropout | 0

202 | encoder.encoder.6.mconv.8 | MaskedConv1d | 4.5 M

203 | encoder.encoder.6.mconv.8.conv | Conv1d | 4.5 M

204 | encoder.encoder.6.mconv.9 | BatchNorm1d | 1.0 K

205 | encoder.encoder.6.mconv.11 | Dropout | 0

206 | encoder.encoder.6.mconv.12 | MaskedConv1d | 4.5 M

207 | encoder.encoder.6.mconv.12.conv | Conv1d | 4.5 M

208 | encoder.encoder.6.mconv.13 | BatchNorm1d | 1.0 K

209 | encoder.encoder.6.mconv.15 | Dropout | 0

210 | encoder.encoder.6.mconv.16 | MaskedConv1d | 4.5 M

211 | encoder.encoder.6.mconv.16.conv | Conv1d | 4.5 M

212 | encoder.encoder.6.mconv.17 | BatchNorm1d | 1.0 K

213 | encoder.encoder.6.res | ModuleList | 1.1 M

214 | encoder.encoder.6.res.0 | ModuleList | 132 K

215 | encoder.encoder.6.res.0.0 | MaskedConv1d | 131 K

216 | encoder.encoder.6.res.0.0.conv | Conv1d | 131 K

217 | encoder.encoder.6.res.0.1 | BatchNorm1d | 1.0 K

218 | encoder.encoder.6.res.1 | ModuleList | 132 K

219 | encoder.encoder.6.res.1.0 | MaskedConv1d | 131 K

220 | encoder.encoder.6.res.1.0.conv | Conv1d | 131 K

221 | encoder.encoder.6.res.1.1 | BatchNorm1d | 1.0 K

222 | encoder.encoder.6.res.2 | ModuleList | 132 K

223 | encoder.encoder.6.res.2.0 | MaskedConv1d | 131 K

224 | encoder.encoder.6.res.2.0.conv | Conv1d | 131 K

225 | encoder.encoder.6.res.2.1 | BatchNorm1d | 1.0 K

226 | encoder.encoder.6.res.3 | ModuleList | 197 K

227 | encoder.encoder.6.res.3.0 | MaskedConv1d | 196 K

228 | encoder.encoder.6.res.3.0.conv | Conv1d | 196 K

229 | encoder.encoder.6.res.3.1 | BatchNorm1d | 1.0 K

230 | encoder.encoder.6.res.4 | ModuleList | 197 K

231 | encoder.encoder.6.res.4.0 | MaskedConv1d | 196 K

232 | encoder.encoder.6.res.4.0.conv | Conv1d | 196 K

233 | encoder.encoder.6.res.4.1 | BatchNorm1d | 1.0 K

234 | encoder.encoder.6.res.5 | ModuleList | 263 K

235 | encoder.encoder.6.res.5.0 | MaskedConv1d | 262 K

236 | encoder.encoder.6.res.5.0.conv | Conv1d | 262 K

237 | encoder.encoder.6.res.5.1 | BatchNorm1d | 1.0 K

238 | encoder.encoder.6.mout | Sequential | 0

239 | encoder.encoder.6.mout.1 | Dropout | 0

240 | encoder.encoder.7 | JasperBlock | 42.9 M

241 | encoder.encoder.7.mconv | ModuleList | 41.3 M

242 | encoder.encoder.7.mconv.0 | MaskedConv1d | 6.9 M

243 | encoder.encoder.7.mconv.0.conv | Conv1d | 6.9 M

244 | encoder.encoder.7.mconv.1 | BatchNorm1d | 1.3 K

245 | encoder.encoder.7.mconv.3 | Dropout | 0

246 | encoder.encoder.7.mconv.4 | MaskedConv1d | 8.6 M

247 | encoder.encoder.7.mconv.4.conv | Conv1d | 8.6 M

248 | encoder.encoder.7.mconv.5 | BatchNorm1d | 1.3 K

249 | encoder.encoder.7.mconv.7 | Dropout | 0

250 | encoder.encoder.7.mconv.8 | MaskedConv1d | 8.6 M

251 | encoder.encoder.7.mconv.8.conv | Conv1d | 8.6 M

252 | encoder.encoder.7.mconv.9 | BatchNorm1d | 1.3 K

253 | encoder.encoder.7.mconv.11 | Dropout | 0

254 | encoder.encoder.7.mconv.12 | MaskedConv1d | 8.6 M

255 | encoder.encoder.7.mconv.12.conv | Conv1d | 8.6 M

256 | encoder.encoder.7.mconv.13 | BatchNorm1d | 1.3 K

257 | encoder.encoder.7.mconv.15 | Dropout | 0

258 | encoder.encoder.7.mconv.16 | MaskedConv1d | 8.6 M

259 | encoder.encoder.7.mconv.16.conv | Conv1d | 8.6 M

260 | encoder.encoder.7.mconv.17 | BatchNorm1d | 1.3 K

261 | encoder.encoder.7.res | ModuleList | 1.6 M

262 | encoder.encoder.7.res.0 | ModuleList | 165 K

263 | encoder.encoder.7.res.0.0 | MaskedConv1d | 163 K

264 | encoder.encoder.7.res.0.0.conv | Conv1d | 163 K

265 | encoder.encoder.7.res.0.1 | BatchNorm1d | 1.3 K

266 | encoder.encoder.7.res.1 | ModuleList | 165 K

267 | encoder.encoder.7.res.1.0 | MaskedConv1d | 163 K

268 | encoder.encoder.7.res.1.0.conv | Conv1d | 163 K

269 | encoder.encoder.7.res.1.1 | BatchNorm1d | 1.3 K

270 | encoder.encoder.7.res.2 | ModuleList | 165 K

271 | encoder.encoder.7.res.2.0 | MaskedConv1d | 163 K

272 | encoder.encoder.7.res.2.0.conv | Conv1d | 163 K

273 | encoder.encoder.7.res.2.1 | BatchNorm1d | 1.3 K

274 | encoder.encoder.7.res.3 | ModuleList | 247 K

275 | encoder.encoder.7.res.3.0 | MaskedConv1d | 245 K

276 | encoder.encoder.7.res.3.0.conv | Conv1d | 245 K

277 | encoder.encoder.7.res.3.1 | BatchNorm1d | 1.3 K

278 | encoder.encoder.7.res.4 | ModuleList | 247 K

279 | encoder.encoder.7.res.4.0 | MaskedConv1d | 245 K

280 | encoder.encoder.7.res.4.0.conv | Conv1d | 245 K

281 | encoder.encoder.7.res.4.1 | BatchNorm1d | 1.3 K

282 | encoder.encoder.7.res.5 | ModuleList | 328 K

283 | encoder.encoder.7.res.5.0 | MaskedConv1d | 327 K

284 | encoder.encoder.7.res.5.0.conv | Conv1d | 327 K

285 | encoder.encoder.7.res.5.1 | BatchNorm1d | 1.3 K

286 | encoder.encoder.7.res.6 | ModuleList | 328 K

287 | encoder.encoder.7.res.6.0 | MaskedConv1d | 327 K

288 | encoder.encoder.7.res.6.0.conv | Conv1d | 327 K

289 | encoder.encoder.7.res.6.1 | BatchNorm1d | 1.3 K

290 | encoder.encoder.7.mout | Sequential | 0

291 | encoder.encoder.7.mout.1 | Dropout | 0

292 | encoder.encoder.8 | JasperBlock | 45.1 M

293 | encoder.encoder.8.mconv | ModuleList | 43.0 M

294 | encoder.encoder.8.mconv.0 | MaskedConv1d | 8.6 M

295 | encoder.encoder.8.mconv.0.conv | Conv1d | 8.6 M

296 | encoder.encoder.8.mconv.1 | BatchNorm1d | 1.3 K

297 | encoder.encoder.8.mconv.3 | Dropout | 0

298 | encoder.encoder.8.mconv.4 | MaskedConv1d | 8.6 M

299 | encoder.encoder.8.mconv.4.conv | Conv1d | 8.6 M

300 | encoder.encoder.8.mconv.5 | BatchNorm1d | 1.3 K

301 | encoder.encoder.8.mconv.7 | Dropout | 0

302 | encoder.encoder.8.mconv.8 | MaskedConv1d | 8.6 M

303 | encoder.encoder.8.mconv.8.conv | Conv1d | 8.6 M

304 | encoder.encoder.8.mconv.9 | BatchNorm1d | 1.3 K

305 | encoder.encoder.8.mconv.11 | Dropout | 0

306 | encoder.encoder.8.mconv.12 | MaskedConv1d | 8.6 M

307 | encoder.encoder.8.mconv.12.conv | Conv1d | 8.6 M

308 | encoder.encoder.8.mconv.13 | BatchNorm1d | 1.3 K

309 | encoder.encoder.8.mconv.15 | Dropout | 0

310 | encoder.encoder.8.mconv.16 | MaskedConv1d | 8.6 M

311 | encoder.encoder.8.mconv.16.conv | Conv1d | 8.6 M

312 | encoder.encoder.8.mconv.17 | BatchNorm1d | 1.3 K

313 | encoder.encoder.8.res | ModuleList | 2.1 M

314 | encoder.encoder.8.res.0 | ModuleList | 165 K

315 | encoder.encoder.8.res.0.0 | MaskedConv1d | 163 K

316 | encoder.encoder.8.res.0.0.conv | Conv1d | 163 K

317 | encoder.encoder.8.res.0.1 | BatchNorm1d | 1.3 K

318 | encoder.encoder.8.res.1 | ModuleList | 165 K

319 | encoder.encoder.8.res.1.0 | MaskedConv1d | 163 K

320 | encoder.encoder.8.res.1.0.conv | Conv1d | 163 K

321 | encoder.encoder.8.res.1.1 | BatchNorm1d | 1.3 K

322 | encoder.encoder.8.res.2 | ModuleList | 165 K

323 | encoder.encoder.8.res.2.0 | MaskedConv1d | 163 K

324 | encoder.encoder.8.res.2.0.conv | Conv1d | 163 K

325 | encoder.encoder.8.res.2.1 | BatchNorm1d | 1.3 K

326 | encoder.encoder.8.res.3 | ModuleList | 247 K

327 | encoder.encoder.8.res.3.0 | MaskedConv1d | 245 K

328 | encoder.encoder.8.res.3.0.conv | Conv1d | 245 K

329 | encoder.encoder.8.res.3.1 | BatchNorm1d | 1.3 K

330 | encoder.encoder.8.res.4 | ModuleList | 247 K

331 | encoder.encoder.8.res.4.0 | MaskedConv1d | 245 K

332 | encoder.encoder.8.res.4.0.conv | Conv1d | 245 K

333 | encoder.encoder.8.res.4.1 | BatchNorm1d | 1.3 K

334 | encoder.encoder.8.res.5 | ModuleList | 328 K

335 | encoder.encoder.8.res.5.0 | MaskedConv1d | 327 K

336 | encoder.encoder.8.res.5.0.conv | Conv1d | 327 K

337 | encoder.encoder.8.res.5.1 | BatchNorm1d | 1.3 K

338 | encoder.encoder.8.res.6 | ModuleList | 328 K

339 | encoder.encoder.8.res.6.0 | MaskedConv1d | 327 K

340 | encoder.encoder.8.res.6.0.conv | Conv1d | 327 K

341 | encoder.encoder.8.res.6.1 | BatchNorm1d | 1.3 K

342 | encoder.encoder.8.res.7 | ModuleList | 410 K

343 | encoder.encoder.8.res.7.0 | MaskedConv1d | 409 K

344 | encoder.encoder.8.res.7.0.conv | Conv1d | 409 K

345 | encoder.encoder.8.res.7.1 | BatchNorm1d | 1.3 K

346 | encoder.encoder.8.mout | Sequential | 0

347 | encoder.encoder.8.mout.1 | Dropout | 0

348 | encoder.encoder.9 | JasperBlock | 74.2 M

349 | encoder.encoder.9.mconv | ModuleList | 71.3 M

350 | encoder.encoder.9.mconv.0 | MaskedConv1d | 12.3 M

351 | encoder.encoder.9.mconv.0.conv | Conv1d | 12.3 M

352 | encoder.encoder.9.mconv.1 | BatchNorm1d | 1.5 K

353 | encoder.encoder.9.mconv.3 | Dropout | 0

354 | encoder.encoder.9.mconv.4 | MaskedConv1d | 14.7 M

355 | encoder.encoder.9.mconv.4.conv | Conv1d | 14.7 M

356 | encoder.encoder.9.mconv.5 | BatchNorm1d | 1.5 K

357 | encoder.encoder.9.mconv.7 | Dropout | 0

358 | encoder.encoder.9.mconv.8 | MaskedConv1d | 14.7 M

359 | encoder.encoder.9.mconv.8.conv | Conv1d | 14.7 M

360 | encoder.encoder.9.mconv.9 | BatchNorm1d | 1.5 K

361 | encoder.encoder.9.mconv.11 | Dropout | 0

362 | encoder.encoder.9.mconv.12 | MaskedConv1d | 14.7 M

363 | encoder.encoder.9.mconv.12.conv | Conv1d | 14.7 M

364 | encoder.encoder.9.mconv.13 | BatchNorm1d | 1.5 K

365 | encoder.encoder.9.mconv.15 | Dropout | 0

366 | encoder.encoder.9.mconv.16 | MaskedConv1d | 14.7 M

367 | encoder.encoder.9.mconv.16.conv | Conv1d | 14.7 M

368 | encoder.encoder.9.mconv.17 | BatchNorm1d | 1.5 K

369 | encoder.encoder.9.res | ModuleList | 3.0 M

370 | encoder.encoder.9.res.0 | ModuleList | 198 K

371 | encoder.encoder.9.res.0.0 | MaskedConv1d | 196 K

372 | encoder.encoder.9.res.0.0.conv | Conv1d | 196 K

373 | encoder.encoder.9.res.0.1 | BatchNorm1d | 1.5 K

374 | encoder.encoder.9.res.1 | ModuleList | 198 K

375 | encoder.encoder.9.res.1.0 | MaskedConv1d | 196 K

376 | encoder.encoder.9.res.1.0.conv | Conv1d | 196 K

377 | encoder.encoder.9.res.1.1 | BatchNorm1d | 1.5 K

378 | encoder.encoder.9.res.2 | ModuleList | 198 K

379 | encoder.encoder.9.res.2.0 | MaskedConv1d | 196 K

380 | encoder.encoder.9.res.2.0.conv | Conv1d | 196 K

381 | encoder.encoder.9.res.2.1 | BatchNorm1d | 1.5 K

382 | encoder.encoder.9.res.3 | ModuleList | 296 K

383 | encoder.encoder.9.res.3.0 | MaskedConv1d | 294 K

384 | encoder.encoder.9.res.3.0.conv | Conv1d | 294 K

385 | encoder.encoder.9.res.3.1 | BatchNorm1d | 1.5 K

386 | encoder.encoder.9.res.4 | ModuleList | 296 K

387 | encoder.encoder.9.res.4.0 | MaskedConv1d | 294 K

388 | encoder.encoder.9.res.4.0.conv | Conv1d | 294 K

389 | encoder.encoder.9.res.4.1 | BatchNorm1d | 1.5 K

390 | encoder.encoder.9.res.5 | ModuleList | 394 K

391 | encoder.encoder.9.res.5.0 | MaskedConv1d | 393 K

392 | encoder.encoder.9.res.5.0.conv | Conv1d | 393 K

393 | encoder.encoder.9.res.5.1 | BatchNorm1d | 1.5 K

394 | encoder.encoder.9.res.6 | ModuleList | 394 K

395 | encoder.encoder.9.res.6.0 | MaskedConv1d | 393 K

396 | encoder.encoder.9.res.6.0.conv | Conv1d | 393 K

397 | encoder.encoder.9.res.6.1 | BatchNorm1d | 1.5 K

398 | encoder.encoder.9.res.7 | ModuleList | 493 K

399 | encoder.encoder.9.res.7.0 | MaskedConv1d | 491 K

400 | encoder.encoder.9.res.7.0.conv | Conv1d | 491 K

401 | encoder.encoder.9.res.7.1 | BatchNorm1d | 1.5 K

402 | encoder.encoder.9.res.8 | ModuleList | 493 K

403 | encoder.encoder.9.res.8.0 | MaskedConv1d | 491 K

404 | encoder.encoder.9.res.8.0.conv | Conv1d | 491 K

405 | encoder.encoder.9.res.8.1 | BatchNorm1d | 1.5 K

406 | encoder.encoder.9.mout | Sequential | 0

407 | encoder.encoder.9.mout.1 | Dropout | 0

408 | encoder.encoder.10 | JasperBlock | 77.3 M

409 | encoder.encoder.10.mconv | ModuleList | 73.7 M

410 | encoder.encoder.10.mconv.0 | MaskedConv1d | 14.7 M

411 | encoder.encoder.10.mconv.0.conv | Conv1d | 14.7 M

412 | encoder.encoder.10.mconv.1 | BatchNorm1d | 1.5 K

413 | encoder.encoder.10.mconv.3 | Dropout | 0

414 | encoder.encoder.10.mconv.4 | MaskedConv1d | 14.7 M

415 | encoder.encoder.10.mconv.4.conv | Conv1d | 14.7 M

416 | encoder.encoder.10.mconv.5 | BatchNorm1d | 1.5 K

417 | encoder.encoder.10.mconv.7 | Dropout | 0

418 | encoder.encoder.10.mconv.8 | MaskedConv1d | 14.7 M

419 | encoder.encoder.10.mconv.8.conv | Conv1d | 14.7 M

420 | encoder.encoder.10.mconv.9 | BatchNorm1d | 1.5 K

421 | encoder.encoder.10.mconv.11 | Dropout | 0

422 | encoder.encoder.10.mconv.12 | MaskedConv1d | 14.7 M

423 | encoder.encoder.10.mconv.12.conv | Conv1d | 14.7 M

424 | encoder.encoder.10.mconv.13 | BatchNorm1d | 1.5 K

425 | encoder.encoder.10.mconv.15 | Dropout | 0

426 | encoder.encoder.10.mconv.16 | MaskedConv1d | 14.7 M

427 | encoder.encoder.10.mconv.16.conv | Conv1d | 14.7 M

428 | encoder.encoder.10.mconv.17 | BatchNorm1d | 1.5 K

429 | encoder.encoder.10.res | ModuleList | 3.6 M

430 | encoder.encoder.10.res.0 | ModuleList | 198 K

431 | encoder.encoder.10.res.0.0 | MaskedConv1d | 196 K

432 | encoder.encoder.10.res.0.0.conv | Conv1d | 196 K

433 | encoder.encoder.10.res.0.1 | BatchNorm1d | 1.5 K

434 | encoder.encoder.10.res.1 | ModuleList | 198 K

435 | encoder.encoder.10.res.1.0 | MaskedConv1d | 196 K

436 | encoder.encoder.10.res.1.0.conv | Conv1d | 196 K

437 | encoder.encoder.10.res.1.1 | BatchNorm1d | 1.5 K

438 | encoder.encoder.10.res.2 | ModuleList | 198 K

439 | encoder.encoder.10.res.2.0 | MaskedConv1d | 196 K

440 | encoder.encoder.10.res.2.0.conv | Conv1d | 196 K

441 | encoder.encoder.10.res.2.1 | BatchNorm1d | 1.5 K

442 | encoder.encoder.10.res.3 | ModuleList | 296 K

443 | encoder.encoder.10.res.3.0 | MaskedConv1d | 294 K

444 | encoder.encoder.10.res.3.0.conv | Conv1d | 294 K

445 | encoder.encoder.10.res.3.1 | BatchNorm1d | 1.5 K

446 | encoder.encoder.10.res.4 | ModuleList | 296 K

447 | encoder.encoder.10.res.4.0 | MaskedConv1d | 294 K

448 | encoder.encoder.10.res.4.0.conv | Conv1d | 294 K

449 | encoder.encoder.10.res.4.1 | BatchNorm1d | 1.5 K

450 | encoder.encoder.10.res.5 | ModuleList | 394 K

451 | encoder.encoder.10.res.5.0 | MaskedConv1d | 393 K

452 | encoder.encoder.10.res.5.0.conv | Conv1d | 393 K

453 | encoder.encoder.10.res.5.1 | BatchNorm1d | 1.5 K

454 | encoder.encoder.10.res.6 | ModuleList | 394 K

455 | encoder.encoder.10.res.6.0 | MaskedConv1d | 393 K

456 | encoder.encoder.10.res.6.0.conv | Conv1d | 393 K

457 | encoder.encoder.10.res.6.1 | BatchNorm1d | 1.5 K

458 | encoder.encoder.10.res.7 | ModuleList | 493 K

459 | encoder.encoder.10.res.7.0 | MaskedConv1d | 491 K

460 | encoder.encoder.10.res.7.0.conv | Conv1d | 491 K

461 | encoder.encoder.10.res.7.1 | BatchNorm1d | 1.5 K

462 | encoder.encoder.10.res.8 | ModuleList | 493 K

463 | encoder.encoder.10.res.8.0 | MaskedConv1d | 491 K

464 | encoder.encoder.10.res.8.0.conv | Conv1d | 491 K

465 | encoder.encoder.10.res.8.1 | BatchNorm1d | 1.5 K

466 | encoder.encoder.10.res.9 | ModuleList | 591 K

467 | encoder.encoder.10.res.9.0 | MaskedConv1d | 589 K

468 | encoder.encoder.10.res.9.0.conv | Conv1d | 589 K

469 | encoder.encoder.10.res.9.1 | BatchNorm1d | 1.5 K

470 | encoder.encoder.10.mout | Sequential | 0

471 | encoder.encoder.10.mout.1 | Dropout | 0

472 | encoder.encoder.11 | JasperBlock | 20.0 M

473 | encoder.encoder.11.mconv | ModuleList | 20.0 M

474 | encoder.encoder.11.mconv.0 | MaskedConv1d | 20.0 M

475 | encoder.encoder.11.mconv.0.conv | Conv1d | 20.0 M

476 | encoder.encoder.11.mconv.1 | BatchNorm1d | 1.8 K

477 | encoder.encoder.11.mout | Sequential | 0

478 | encoder.encoder.11.mout.1 | Dropout | 0

479 | encoder.encoder.12 | JasperBlock | 919 K

480 | encoder.encoder.12.mconv | ModuleList | 919 K

481 | encoder.encoder.12.mconv.0 | MaskedConv1d | 917 K

482 | encoder.encoder.12.mconv.0.conv | Conv1d | 917 K

483 | encoder.encoder.12.mconv.1 | BatchNorm1d | 2.0 K

484 | encoder.encoder.12.mout | Sequential | 0

485 | encoder.encoder.12.mout.1 | Dropout | 0

486 | decoder | ConvASRDecoder | 29.7 K

487 | decoder.decoder_layers | Sequential | 29.7 K

488 | decoder.decoder_layers.0 | Conv1d | 29.7 K

489 | loss | CTCLoss | 0

490 | spec_augmentation | SpectrogramAugmentation | 0

491 | spec_augmentation.spec_cutout | SpecCutout | 0

492 | _wer | WER | 0

-----------------------------------------------------------------------------------------

332 M Trainable params

0 Non-trainable params

332 M Total params
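The per-layer counts in the summary can be checked by hand. A `Conv1d` layer without bias holds `in_channels * out_channels * kernel_size` weights, and a `BatchNorm1d` layer holds one scale and one shift per channel. The sketch below recomputes a few rows under stated assumptions: 64 input mel features, a kernel width of 11 in the first blocks, and 29 decoder outputs (the 28 labels plus a CTC blank). These values come from the usual Jasper configuration, not from the log itself, which only shows the totals.

```python
def conv1d_params(in_channels, out_channels, kernel_size, bias=False):
    """Number of trainable parameters in a 1-D convolution."""
    weights = in_channels * out_channels * kernel_size
    return weights + (out_channels if bias else 0)


def batchnorm1d_params(num_features):
    """One learnable scale and one learnable shift per channel."""
    return 2 * num_features


# Assumed shapes; compare with the rounded values in the summary table.
first_conv = conv1d_params(64, 256, 11)            # row 7, shown as 180 K
block1_conv = conv1d_params(256, 256, 11)          # row 15, shown as 720 K
residual_conv = conv1d_params(256, 256, 1)         # row 36, shown as 65.5 K
first_bn = batchnorm1d_params(256)                 # row 8, shown as 512
decoder = conv1d_params(1024, 29, 1, bias=True)    # row 488, shown as 29.7 K

print(first_conv, block1_conv, residual_conv, first_bn, decoder)
```

The residual branch in row 34 (66.0 K) is the 1x1 convolution plus its batch norm, 65,536 + 512 = 66,048, which agrees with the table.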

| Name | Type | Params

-----------------------------------------------------------------------------------------

0 | preprocessor | AudioToMelSpectrogramPreprocessor | 0

1 | preprocessor.featurizer | FilterbankFeatures | 0

2 | encoder | ConvASREncoder | 332 M

3 | encoder.encoder | Sequential | 332 M

4 | encoder.encoder.0 | JasperBlock | 180 K

5 | encoder.encoder.0.mconv | ModuleList | 180 K

6 | encoder.encoder.0.mconv.0 | MaskedConv1d | 180 K

7 | encoder.encoder.0.mconv.0.conv | Conv1d | 180 K

8 | encoder.encoder.0.mconv.1 | BatchNorm1d | 512

9 | encoder.encoder.0.mout | Sequential | 0

10 | encoder.encoder.0.mout.0 | ReLU | 0

11 | encoder.encoder.0.mout.1 | Dropout | 0

12 | encoder.encoder.1 | JasperBlock | 3.7 M

13 | encoder.encoder.1.mconv | ModuleList | 3.6 M

14 | encoder.encoder.1.mconv.0 | MaskedConv1d | 720 K

15 | encoder.encoder.1.mconv.0.conv | Conv1d | 720 K

16 | encoder.encoder.1.mconv.1 | BatchNorm1d | 512

17 | encoder.encoder.1.mconv.3 | Dropout | 0

18 | encoder.encoder.1.mconv.4 | MaskedConv1d | 720 K

19 | encoder.encoder.1.mconv.4.conv | Conv1d | 720 K

20 | encoder.encoder.1.mconv.5 | BatchNorm1d | 512

21 | encoder.encoder.1.mconv.7 | Dropout | 0

22 | encoder.encoder.1.mconv.8 | MaskedConv1d | 720 K

23 | encoder.encoder.1.mconv.8.conv | Conv1d | 720 K

24 | encoder.encoder.1.mconv.9 | BatchNorm1d | 512

25 | encoder.encoder.1.mconv.11 | Dropout | 0

26 | encoder.encoder.1.mconv.12 | MaskedConv1d | 720 K

27 | encoder.encoder.1.mconv.12.conv | Conv1d | 720 K

28 | encoder.encoder.1.mconv.13 | BatchNorm1d | 512

29 | encoder.encoder.1.mconv.15 | Dropout | 0

30 | encoder.encoder.1.mconv.16 | MaskedConv1d | 720 K

31 | encoder.encoder.1.mconv.16.conv | Conv1d | 720 K

32 | encoder.encoder.1.mconv.17 | BatchNorm1d | 512

33 | encoder.encoder.1.res | ModuleList | 66.0 K

34 | encoder.encoder.1.res.0 | ModuleList | 66.0 K

35 | encoder.encoder.1.res.0.0 | MaskedConv1d | 65.5 K

36 | encoder.encoder.1.res.0.0.conv | Conv1d | 65.5 K

37 | encoder.encoder.1.res.0.1 | BatchNorm1d | 512

38 | encoder.encoder.1.mout | Sequential | 0

39 | encoder.encoder.1.mout.1 | Dropout | 0

40 | encoder.encoder.2 | JasperBlock | 3.7 M

41 | encoder.encoder.2.mconv | ModuleList | 3.6 M

42 | encoder.encoder.2.mconv.0 | MaskedConv1d | 720 K

43 | encoder.encoder.2.mconv.0.conv | Conv1d | 720 K

44 | encoder.encoder.2.mconv.1 | BatchNorm1d | 512

45 | encoder.encoder.2.mconv.3 | Dropout | 0

46 | encoder.encoder.2.mconv.4 | MaskedConv1d | 720 K

47 | encoder.encoder.2.mconv.4.conv | Conv1d | 720 K

48 | encoder.encoder.2.mconv.5 | BatchNorm1d | 512

49 | encoder.encoder.2.mconv.7 | Dropout | 0

50 | encoder.encoder.2.mconv.8 | MaskedConv1d | 720 K

51 | encoder.encoder.2.mconv.8.conv | Conv1d | 720 K

52 | encoder.encoder.2.mconv.9 | BatchNorm1d | 512

53 | encoder.encoder.2.mconv.11 | Dropout | 0

54 | encoder.encoder.2.mconv.12 | MaskedConv1d | 720 K

55 | encoder.encoder.2.mconv.12.conv | Conv1d | 720 K

56 | encoder.encoder.2.mconv.13 | BatchNorm1d | 512

57 | encoder.encoder.2.mconv.15 | Dropout | 0

58 | encoder.encoder.2.mconv.16 | MaskedConv1d | 720 K

59 | encoder.encoder.2.mconv.16.conv | Conv1d | 720 K

60 | encoder.encoder.2.mconv.17 | BatchNorm1d | 512

61 | encoder.encoder.2.res | ModuleList | 132 K

62 | encoder.encoder.2.res.0 | ModuleList | 66.0 K

63 | encoder.encoder.2.res.0.0 | MaskedConv1d | 65.5 K

64 | encoder.encoder.2.res.0.0.conv | Conv1d | 65.5 K

65 | encoder.encoder.2.res.0.1 | BatchNorm1d | 512

66 | encoder.encoder.2.res.1 | ModuleList | 66.0 K

67 | encoder.encoder.2.res.1.0 | MaskedConv1d | 65.5 K

68 | encoder.encoder.2.res.1.0.conv | Conv1d | 65.5 K

69 | encoder.encoder.2.res.1.1 | BatchNorm1d | 512

70 | encoder.encoder.2.mout | Sequential | 0

71 | encoder.encoder.2.mout.1 | Dropout | 0

72 | encoder.encoder.3 | JasperBlock | 9.2 M

73 | encoder.encoder.3.mconv | ModuleList | 8.9 M

74 | encoder.encoder.3.mconv.0 | MaskedConv1d | 1.3 M

75 | encoder.encoder.3.mconv.0.conv | Conv1d | 1.3 M

76 | encoder.encoder.3.mconv.1 | BatchNorm1d | 768

77 | encoder.encoder.3.mconv.3 | Dropout | 0

78 | encoder.encoder.3.mconv.4 | MaskedConv1d | 1.9 M

79 | encoder.encoder.3.mconv.4.conv | Conv1d | 1.9 M

80 | encoder.encoder.3.mconv.5 | BatchNorm1d | 768

81 | encoder.encoder.3.mconv.7 | Dropout | 0

82 | encoder.encoder.3.mconv.8 | MaskedConv1d | 1.9 M

83 | encoder.encoder.3.mconv.8.conv | Conv1d | 1.9 M

84 | encoder.encoder.3.mconv.9 | BatchNorm1d | 768

85 | encoder.encoder.3.mconv.11 | Dropout | 0

86 | encoder.encoder.3.mconv.12 | MaskedConv1d | 1.9 M

87 | encoder.encoder.3.mconv.12.conv | Conv1d | 1.9 M

88 | encoder.encoder.3.mconv.13 | BatchNorm1d | 768

89 | encoder.encoder.3.mconv.15 | Dropout | 0

90 | encoder.encoder.3.mconv.16 | MaskedConv1d | 1.9 M

91 | encoder.encoder.3.mconv.16.conv | Conv1d | 1.9 M

92 | encoder.encoder.3.mconv.17 | BatchNorm1d | 768

93 | encoder.encoder.3.res | ModuleList | 297 K

94 | encoder.encoder.3.res.0 | ModuleList | 99.1 K

95 | encoder.encoder.3.res.0.0 | MaskedConv1d | 98.3 K

96 | encoder.encoder.3.res.0.0.conv | Conv1d | 98.3 K

97 | encoder.encoder.3.res.0.1 | BatchNorm1d | 768

98 | encoder.encoder.3.res.1 | ModuleList | 99.1 K

99 | encoder.encoder.3.res.1.0 | MaskedConv1d | 98.3 K

100 | encoder.encoder.3.res.1.0.conv | Conv1d | 98.3 K

101 | encoder.encoder.3.res.1.1 | BatchNorm1d | 768

102 | encoder.encoder.3.res.2 | ModuleList | 99.1 K

103 | encoder.encoder.3.res.2.0 | MaskedConv1d | 98.3 K

104 | encoder.encoder.3.res.2.0.conv | Conv1d | 98.3 K

105 | encoder.encoder.3.res.2.1 | BatchNorm1d | 768

106 | encoder.encoder.3.mout | Sequential | 0

107 | encoder.encoder.3.mout.1 | Dropout | 0

108 | encoder.encoder.4 | JasperBlock | 10.0 M

109 | encoder.encoder.4.mconv | ModuleList | 9.6 M

110 | encoder.encoder.4.mconv.0 | MaskedConv1d | 1.9 M

111 | encoder.encoder.4.mconv.0.conv | Conv1d | 1.9 M

112 | encoder.encoder.4.mconv.1 | BatchNorm1d | 768

113 | encoder.encoder.4.mconv.3 | Dropout | 0

114 | encoder.encoder.4.mconv.4 | MaskedConv1d | 1.9 M

115 | encoder.encoder.4.mconv.4.conv | Conv1d | 1.9 M

116 | encoder.encoder.4.mconv.5 | BatchNorm1d | 768

117 | encoder.encoder.4.mconv.7 | Dropout | 0

118 | encoder.encoder.4.mconv.8 | MaskedConv1d | 1.9 M

119 | encoder.encoder.4.mconv.8.conv | Conv1d | 1.9 M

120 | encoder.encoder.4.mconv.9 | BatchNorm1d | 768

121 | encoder.encoder.4.mconv.11 | Dropout | 0

122 | encoder.encoder.4.mconv.12 | MaskedConv1d | 1.9 M

123 | encoder.encoder.4.mconv.12.conv | Conv1d | 1.9 M

124 | encoder.encoder.4.mconv.13 | BatchNorm1d | 768

125 | encoder.encoder.4.mconv.15 | Dropout | 0

126 | encoder.encoder.4.mconv.16 | MaskedConv1d | 1.9 M

127 | encoder.encoder.4.mconv.16.conv | Conv1d | 1.9 M

128 | encoder.encoder.4.mconv.17 | BatchNorm1d | 768

129 | encoder.encoder.4.res | ModuleList | 445 K

130 | encoder.encoder.4.res.0 | ModuleList | 99.1 K

131 | encoder.encoder.4.res.0.0 | MaskedConv1d | 98.3 K

132 | encoder.encoder.4.res.0.0.conv | Conv1d | 98.3 K

133 | encoder.encoder.4.res.0.1 | BatchNorm1d | 768

134 | encoder.encoder.4.res.1 | ModuleList | 99.1 K

135 | encoder.encoder.4.res.1.0 | MaskedConv1d | 98.3 K

136 | encoder.encoder.4.res.1.0.conv | Conv1d | 98.3 K

137 | encoder.encoder.4.res.1.1 | BatchNorm1d | 768

138 | encoder.encoder.4.res.2 | ModuleList | 99.1 K

139 | encoder.encoder.4.res.2.0 | MaskedConv1d | 98.3 K

140 | encoder.encoder.4.res.2.0.conv | Conv1d | 98.3 K

141 | encoder.encoder.4.res.2.1 | BatchNorm1d | 768

142 | encoder.encoder.4.res.3 | ModuleList | 148 K

143 | encoder.encoder.4.res.3.0 | MaskedConv1d | 147 K

144 | encoder.encoder.4.res.3.0.conv | Conv1d | 147 K

145 | encoder.encoder.4.res.3.1 | BatchNorm1d | 768

146 | encoder.encoder.4.mout | Sequential | 0

147 | encoder.encoder.4.mout.1 | Dropout | 0

148 | encoder.encoder.5 | JasperBlock | 22.0 M

149 | encoder.encoder.5.mconv | ModuleList | 21.2 M

150 | encoder.encoder.5.mconv.0 | MaskedConv1d | 3.3 M

151 | encoder.encoder.5.mconv.0.conv | Conv1d | 3.3 M

152 | encoder.encoder.5.mconv.1 | BatchNorm1d | 1.0 K

153 | encoder.encoder.5.mconv.3 | Dropout | 0

154 | encoder.encoder.5.mconv.4 | MaskedConv1d | 4.5 M

155 | encoder.encoder.5.mconv.4.conv | Conv1d | 4.5 M

156 | encoder.encoder.5.mconv.5 | BatchNorm1d | 1.0 K

157 | encoder.encoder.5.mconv.7 | Dropout | 0

158 | encoder.encoder.5.mconv.8 | MaskedConv1d | 4.5 M

159 | encoder.encoder.5.mconv.8.conv | Conv1d | 4.5 M

160 | encoder.encoder.5.mconv.9 | BatchNorm1d | 1.0 K

161 | encoder.encoder.5.mconv.11 | Dropout | 0

162 | encoder.encoder.5.mconv.12 | MaskedConv1d | 4.5 M

163 | encoder.encoder.5.mconv.12.conv | Conv1d | 4.5 M

164 | encoder.encoder.5.mconv.13 | BatchNorm1d | 1.0 K

165 | encoder.encoder.5.mconv.15 | Dropout | 0

166 | encoder.encoder.5.mconv.16 | MaskedConv1d | 4.5 M

167 | encoder.encoder.5.mconv.16.conv | Conv1d | 4.5 M

168 | encoder.encoder.5.mconv.17 | BatchNorm1d | 1.0 K

169 | encoder.encoder.5.res | ModuleList | 791 K

170 | encoder.encoder.5.res.0 | ModuleList | 132 K

171 | encoder.encoder.5.res.0.0 | MaskedConv1d | 131 K

172 | encoder.encoder.5.res.0.0.conv | Conv1d | 131 K

173 | encoder.encoder.5.res.0.1 | BatchNorm1d | 1.0 K

174 | encoder.encoder.5.res.1 | ModuleList | 132 K

175 | encoder.encoder.5.res.1.0 | MaskedConv1d | 131 K

176 | encoder.encoder.5.res.1.0.conv | Conv1d | 131 K

177 | encoder.encoder.5.res.1.1 | BatchNorm1d | 1.0 K

178 | encoder.encoder.5.res.2 | ModuleList | 132 K

179 | encoder.encoder.5.res.2.0 | MaskedConv1d | 131 K

180 | encoder.encoder.5.res.2.0.conv | Conv1d | 131 K

181 | encoder.encoder.5.res.2.1 | BatchNorm1d | 1.0 K

182 | encoder.encoder.5.res.3 | ModuleList | 197 K

183 | encoder.encoder.5.res.3.0 | MaskedConv1d | 196 K

184 | encoder.encoder.5.res.3.0.conv | Conv1d | 196 K

185 | encoder.encoder.5.res.3.1 | BatchNorm1d | 1.0 K

186 | encoder.encoder.5.res.4 | ModuleList | 197 K

187 | encoder.encoder.5.res.4.0 | MaskedConv1d | 196 K

188 | encoder.encoder.5.res.4.0.conv | Conv1d | 196 K

189 | encoder.encoder.5.res.4.1 | BatchNorm1d | 1.0 K

190 | encoder.encoder.5.mout | Sequential | 0

191 | encoder.encoder.5.mout.1 | Dropout | 0

192 | encoder.encoder.6 | JasperBlock | 23.3 M

193 | encoder.encoder.6.mconv | ModuleList | 22.3 M

194 | encoder.encoder.6.mconv.0 | MaskedConv1d | 4.5 M

195 | encoder.encoder.6.mconv.0.conv | Conv1d | 4.5 M

196 | encoder.encoder.6.mconv.1 | BatchNorm1d | 1.0 K

197 | encoder.encoder.6.mconv.3 | Dropout | 0

198 | encoder.encoder.6.mconv.4 | MaskedConv1d | 4.5 M

199 | encoder.encoder.6.mconv.4.conv | Conv1d | 4.5 M

200 | encoder.encoder.6.mconv.5 | BatchNorm1d | 1.0 K

201 | encoder.encoder.6.mconv.7 | Dropout | 0

202 | encoder.encoder.6.mconv.8 | MaskedConv1d | 4.5 M

203 | encoder.encoder.6.mconv.8.conv | Conv1d | 4.5 M

204 | encoder.encoder.6.mconv.9 | BatchNorm1d | 1.0 K

205 | encoder.encoder.6.mconv.11 | Dropout | 0

206 | encoder.encoder.6.mconv.12 | MaskedConv1d | 4.5 M

207 | encoder.encoder.6.mconv.12.conv | Conv1d | 4.5 M

208 | encoder.encoder.6.mconv.13 | BatchNorm1d | 1.0 K

209 | encoder.encoder.6.mconv.15 | Dropout | 0

210 | encoder.encoder.6.mconv.16 | MaskedConv1d | 4.5 M

211 | encoder.encoder.6.mconv.16.conv | Conv1d | 4.5 M

212 | encoder.encoder.6.mconv.17 | BatchNorm1d | 1.0 K

213 | encoder.encoder.6.res | ModuleList | 1.1 M

214 | encoder.encoder.6.res.0 | ModuleList | 132 K

215 | encoder.encoder.6.res.0.0 | MaskedConv1d | 131 K

216 | encoder.encoder.6.res.0.0.conv | Conv1d | 131 K

217 | encoder.encoder.6.res.0.1 | BatchNorm1d | 1.0 K

218 | encoder.encoder.6.res.1 | ModuleList | 132 K

219 | encoder.encoder.6.res.1.0 | MaskedConv1d | 131 K

220 | encoder.encoder.6.res.1.0.conv | Conv1d | 131 K

221 | encoder.encoder.6.res.1.1 | BatchNorm1d | 1.0 K

222 | encoder.encoder.6.res.2 | ModuleList | 132 K

223 | encoder.encoder.6.res.2.0 | MaskedConv1d | 131 K

224 | encoder.encoder.6.res.2.0.conv | Conv1d | 131 K

225 | encoder.encoder.6.res.2.1 | BatchNorm1d | 1.0 K

226 | encoder.encoder.6.res.3 | ModuleList | 197 K

227 | encoder.encoder.6.res.3.0 | MaskedConv1d | 196 K

228 | encoder.encoder.6.res.3.0.conv | Conv1d | 196 K

229 | encoder.encoder.6.res.3.1 | BatchNorm1d | 1.0 K

230 | encoder.encoder.6.res.4 | ModuleList | 197 K

231 | encoder.encoder.6.res.4.0 | MaskedConv1d | 196 K

232 | encoder.encoder.6.res.4.0.conv | Conv1d | 196 K

233 | encoder.encoder.6.res.4.1 | BatchNorm1d | 1.0 K

234 | encoder.encoder.6.res.5 | ModuleList | 263 K

235 | encoder.encoder.6.res.5.0 | MaskedConv1d | 262 K

236 | encoder.encoder.6.res.5.0.conv | Conv1d | 262 K

237 | encoder.encoder.6.res.5.1 | BatchNorm1d | 1.0 K

238 | encoder.encoder.6.mout | Sequential | 0

239 | encoder.encoder.6.mout.1 | Dropout | 0

240 | encoder.encoder.7 | JasperBlock | 42.9 M

241 | encoder.encoder.7.mconv | ModuleList | 41.3 M

242 | encoder.encoder.7.mconv.0 | MaskedConv1d | 6.9 M

243 | encoder.encoder.7.mconv.0.conv | Conv1d | 6.9 M

244 | encoder.encoder.7.mconv.1 | BatchNorm1d | 1.3 K

245 | encoder.encoder.7.mconv.3 | Dropout | 0

246 | encoder.encoder.7.mconv.4 | MaskedConv1d | 8.6 M

247 | encoder.encoder.7.mconv.4.conv | Conv1d | 8.6 M

248 | encoder.encoder.7.mconv.5 | BatchNorm1d | 1.3 K

249 | encoder.encoder.7.mconv.7 | Dropout | 0