CS/ECE/ISyE 524 — Introduction to Optimization — Spring 2016¶

Image Mosaicking¶

Ananth Sridhar (ananth.sridhar@wisc.edu), Rangapriya Parthasarathy (rparthasarat@wisc.edu), Song Mei (smei4@wisc.edu)¶

Table of Contents¶

- Introduction

- Mathematical Model - Mixed Integer Program Formulation

- Similarity Criterion: Pixel Absolute Difference

- Similarity Criterion: Mean Absolute Difference

- Similarity Criterion: Mode Absolute Difference

- Similarity Criterion: Histogram based Matching

- Solution - Mixed Integer Program Formulation (Julia-Code)

- Common Functions

- Load Images

- Optimization Function

- Mosaicking First Image

- More Complicated Image with Image Library

- Brute Force Optimization

- Split RGB Channel - Speed up our Optimization!

- Histogram based Matching

- Mathematical Model - Linear Program Formulation

- Solution - Linear Program Formulation (Julia-Code)

- Results and Discussion

- Conclusion

- Bonus: Try It Yourself

1. Introduction¶

Mosaicking is an old art technique where pictures or designs are formed by inlaying small bits of colored stone, glass, or tile. These small bits are visible close up, but the boundaries of the bits will blend and a single recognizable image will show at a distance.

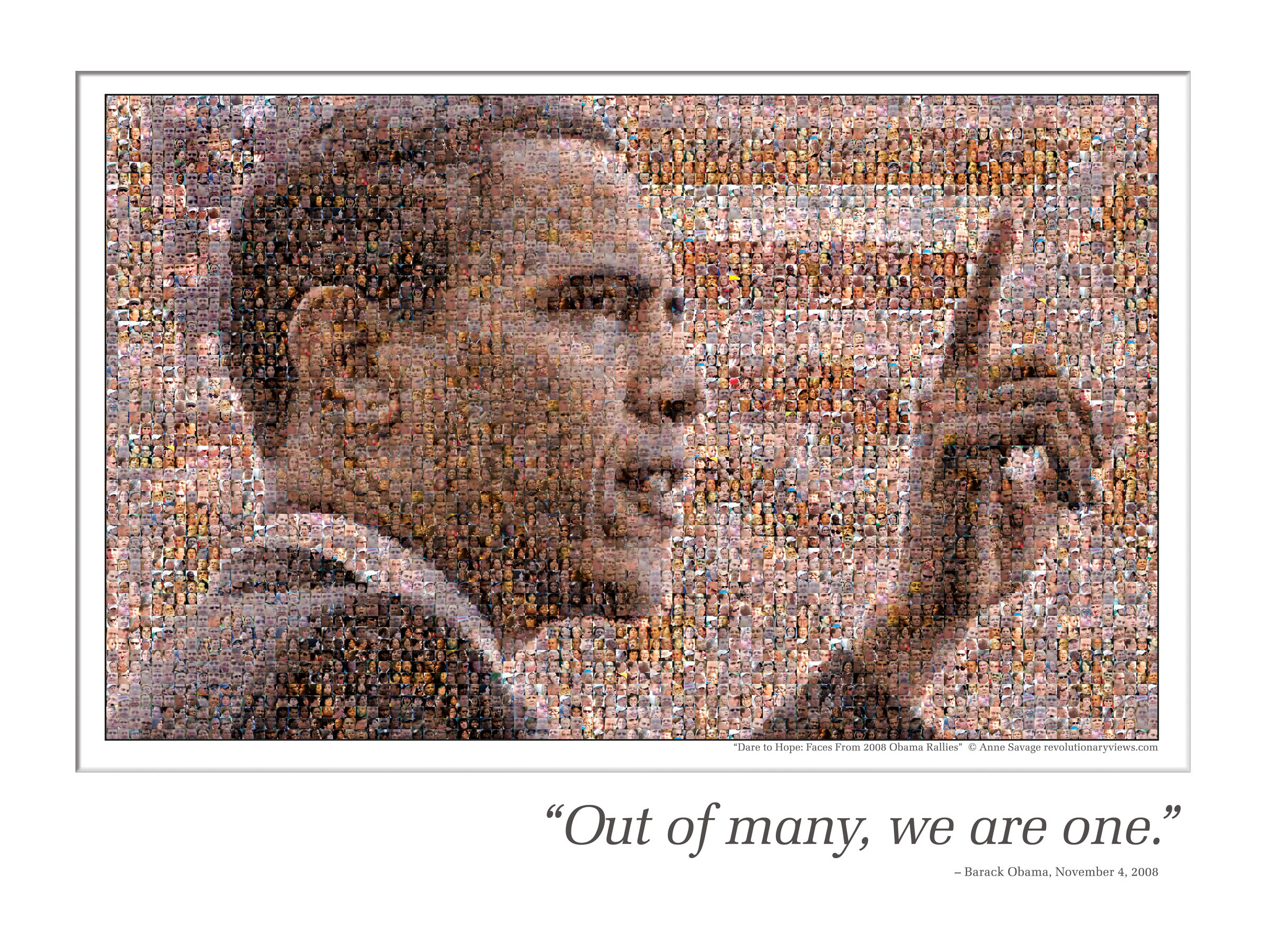

In the modern digital world, this ancient art form has been transformed and combined with new technologies. With access to large public image databases, individual images, rather than solid-colored blocks, can be used as tiles to make pictures. Here is a famous mosaic image of President Obama made by Anne Savage during his campaign.

Nearly 6,000 images of individual faces were used to create this image, and its distinctive form made it a very popular poster. Building a picture out of other pictures looks like a daunting task, because we are picking and arranging thousands of images from an even bigger pool. There are businesses that will create a custom mosaic for a fee. However, with intuition and just a little knowledge of optimization, we can do it at home ourselves!

Images consist of pixels, and each pixel can be described by its RGB color intensities. For two images to look alike, we need to match their pixels. For a mosaic that only has to read correctly at a distance, we can instead treat the basis images as "super pixels" and match each one against the corresponding sub-image of the target image. We therefore partition the target image into a grid in which each grid element has the size of one tile, and for each grid element we choose the basis image that resembles it most. There are many ways to quantify "resemblance"; Section 2 discusses several, and this project explores a few of them.

Because each chosen basis image is the best match for its grid element, processing every grid element yields the mosaic that best resembles the whole target image under the chosen similarity criterion. This is the most intuitive way to solve the problem, and the first part of Section 3 presents the solution using this method. This brute force method is limited by its speed, so variants of the algorithm that speed up the process are also discussed. We will also explore how changing the size of the tiles affects the performance. The basis images in this project were obtained from the online source mazaika and from generic Google Image Search results.

Disclaimer: All images are property of their respective owners. Their usage in this report is purely educational.

What will I learn from this report?¶

- How to use Optimization techniques to create Image Mosaics

- Different techniques and the factors that affect the quality of the Image Mosaic

- How to create Image Mosaics with varying "basis" image dimensions

- Extensions to this project that you can try on your own

2. Mathematical model - Mixed Integer Program Formulation¶

From the introduction above, it is clear that the intuitive approach to image mosaicking is an assignment problem, which can be modeled as a Mixed Integer Program. Let's set up the problem as follows:

- A set of "basis" images - these are the images we will use as tiles to reconstruct the so-called "test" image (which we are trying to reproduce). The set of "basis" images constitutes the set $\mathbf{B}$.

- Create a grid of equally sized sub-images from the target image - each sub-image will be a grid element. The smaller we make the grid element, the higher the quality of the resulting mosaic will be. The set of all the grid elements constitutes the set $\mathbf{I}$.

- Of course, the quality also depends on the diversity of colors in our "test" image and the diversity of colors in our "basis" set.

- The size of the grid elements and the size of the "basis" images must be the same to be consistent (each grid-element is a tile location and each "basis" image is a tile that needs to fit into a tile location). Let this size be represented by $s$.

The decision variable $G_i$, $i\in\mathbf{I}$ ($\mathbf{I}$ is the set of all grid elements in the target image), is the index $j\in\mathbf{B}$ of the basis image chosen for grid element $i$, where $\mathbf{B}$ is the set of basis images, all of size $s$. $\mathbf{B}$ is therefore the set of values $G_i$ can take (in other words, we choose exactly ONE basis image as the tile to be placed in ONE grid-element). In the mathematical formulation, one binary variable per basis image indicates whether that basis image is chosen for a grid-element location. For a single grid-element, these binary variables must satisfy the SOS constraint (only one image can be chosen). The objective is to minimize the visual difference$^*$ between the chosen mosaic and the target image. Note that the difference function must be linear in the decision variables for the model to be a linear MIP.

($^*$ Several non-linear functions can be used to describe the resemblance of the grid and the basis image and be implemented within special algorithms. But they fall outside the scope of this course and are not discussed here.)

It is worth pointing out that in the current formulation, the optimization of each grid element is independent of the others. Therefore, we can lessen the solver's burden by forming a model that solves the sub-problem of optimizing a single grid element. To optimize the whole target image, one can simply put the optimization model in a function and call it for each grid element of the image. Let's use the following notation:

- $m$ represents the size of a grid-element along the Y-axis (same as the height of a basis image)

- $n$ represents the size of a grid-element along the X-axis (same as the width of a basis image)

For grid-element size $m \times n$, each target grid-element can be represented by $m\times n\times 3$ RGB matrix $G$. Before optimization, basis images are pre-processed so that they all have the same size as the grid-elements. Each basis image is represented as $m\times n\times 3$ RGB matrix $B_j,j\in\mathbf{B}$. There are many different ways to quantify the similarity/dis-similarity between any basis and the target sub-image.

In this project, we have limited the grid sizes to $2^p\times 2^p,~p\in\{3,\ldots,6\}$ square grids for the sake of scaling the basis. Further, we make sure the target image can be divided perfectly into an integer number of grid elements. We have explored several different cost functions, and the formulation of each is described below.
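The partition into grid elements described above can be sketched as follows. This is a minimal illustration in current Julia (standard library only), not the notebook's own code; `split_into_tiles` is a hypothetical helper name, and the image is assumed to be an $h\times w\times 3$ array whose height and width are multiples of the tile size.

```julia
# Hypothetical helper (not part of common_functions.jl): cut an h x w x 3 array
# into non-overlapping s x s tiles, stored in a matrix indexed by grid position.
function split_into_tiles(image::AbstractArray{T,3}, s::Integer) where {T}
    h, w, _ = size(image)
    # The report assumes the target divides evenly into grid elements.
    @assert h % s == 0 && w % s == 0 "image size must be a multiple of the tile size"
    n_rows, n_cols = div(h, s), div(w, s)
    tiles = Matrix{Array{T,3}}(undef, n_rows, n_cols)
    for j in 1:n_rows, i in 1:n_cols
        rows = (j - 1) * s .+ (1:s)   # pixel rows covered by grid row j
        cols = (i - 1) * s .+ (1:s)   # pixel columns covered by grid column i
        tiles[j, i] = image[rows, cols, :]
    end
    return tiles
end

image = reshape(collect(1:16*24*3), 16, 24, 3)   # toy 16 x 24 RGB "image"
tiles = split_into_tiles(image, 8)
println(size(tiles))          # number of grid rows and columns
println(size(tiles[1, 1]))    # size of one grid element
```

With a tile size of 8, the toy image yields a 2 by 3 grid of 8 x 8 x 3 grid elements, each of which can then be matched against the basis independently.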

Various heuristics to improve the image matching are also explored in the subsequent sections.

2.A. Similarity Criterion: Pixel absolute difference¶

Description¶

Minimize the pixel-wise difference between a basis image and a sub-image.

Why is this approach NOT the only one worth considering?¶

This has to do with how our eye processes images. By minimizing the pixel-wise difference, we are doing the algorithmically obvious thing, without considering that our eye perceives the image as a whole. Pixel intensity variations within a region may or may not be significant, depending on the range of intensities in the region, the underlying structure of the image, etc.

Additionally, this approach results in far too many constraints and variables to deal with, which makes this approach practically unreasonable. Mathematically,

$$\text{Number of Constraints} \propto \left( \text{Number of Pixels in Image} \times \text{Number of Basis Images} \right) $$

Precomputation¶

The difference matrix ($m\times n\times 3$) between target $G$ and candidate $B_j$ is

$D_j = G-B_j$

Optimization Model (for each grid-element / sub-image)¶

$\text{minimize}:\qquad\sum_{y=1}^m\sum_{x=1}^n\sum_{c=R,G,B}~~error_{xy,c}$

$\text{subject to}:\qquad error_{xy,c}\geq E[y,x,c]$

$\qquad\qquad\qquad error_{xy,c}\geq -E[y,x,c]$

$\qquad\qquad\qquad E[y,x,c]=\sum_{j\in\mathbf{B}}D_j[y,x,c]\cdot p_j$

$\qquad\qquad\qquad \sum_{j\in\mathbf{B}} p_j=1,\quad p_j\in\{0,1\}$
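Because exactly one $p_j$ equals 1, $E$ reduces to the difference matrix $D_j$ of the chosen basis image, and the objective becomes the sum of absolute pixel differences for that image. The sketch below evaluates that quantity directly for a toy grid element. It is written in current Julia rather than the notebook's 2016-era syntax, and `pixel_l1` and `best_by_pixel_l1` are hypothetical names used only for illustration.

```julia
# Sum of absolute pixel differences between a grid element G and a candidate B,
# i.e. the objective value of the model when this candidate is the one picked.
pixel_l1(G, B) = sum(abs.(G .- B))

# Hypothetical helper: index of the candidate with the smallest pixel-wise L1 error.
function best_by_pixel_l1(G, basis)
    errors = [pixel_l1(G, B) for B in basis]
    return argmin(errors)
end

G = fill(100.0, 2, 2, 3)                      # toy 2 x 2 RGB grid element
basis = [fill(0.0, 2, 2, 3),                  # far from G
         fill(90.0, 2, 2, 3),                 # close to G
         fill(255.0, 2, 2, 3)]                # far from G
println(best_by_pixel_l1(G, basis))
```

Here the second candidate wins, with a total error of 120 (12 values, each off by 10).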

2.B. Similarity Criterion: Mean absolute difference¶

Description¶

Minimize the mean of the pixel-wise difference between each sub-image and basis - a simple attempt at accounting for local variations among the pixels. In other words, this approach is a step towards improving the perceived visual quality of the image mosaic.

Optimization Model¶

$\text{minimize}:\qquad\sum_{c=R,G,B}~~error_{c}$

$\text{subject to}:\qquad error_{c}\geq E[c]$

$\qquad\qquad\qquad error_{c}\geq -E[c]$

$\qquad\qquad\qquad E[c]=\sum_{j\in\mathbf{B}}D_j[c]\cdot p_j$

$\qquad\qquad\qquad D_j[c]=\frac{1}{mn}\sum_{y=1}^m\sum_{x=1}^nG[y,x,c]-\frac{1}{mn}\sum_{y=1}^m\sum_{x=1}^nB_j[y,x,c],\quad c\in\{R,G,B\}$

$\qquad\qquad\qquad \sum_{j\in\mathbf{B}}p_{j}=1,\quad p_j\in\{0,1\}$
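A small worked example may help show what the mean criterion does and does not see. The sketch below (current Julia, `Statistics` standard library; `channel_means` and `best_by_mean` are hypothetical names, not functions from the notebook) computes the per-channel means and picks the candidate with the smallest summed absolute difference.

```julia
using Statistics

# Per-channel mean of an h x w x 3 array, returned as a 3-element vector.
channel_means(img) = [mean(img[:, :, c]) for c in 1:size(img, 3)]

# Hypothetical helper: candidate whose channel means are closest (L1) to those of G.
function best_by_mean(G, basis)
    g = channel_means(G)
    errors = [sum(abs.(g .- channel_means(B))) for B in basis]
    return argmin(errors)
end

# Toy grid element with channel means (10, 20, 30).
G = cat(fill(10.0, 2, 2), fill(20.0, 2, 2), fill(30.0, 2, 2); dims=3)
# Candidate 1: uniform gray, channel means (20, 20, 20).
flat = fill(20.0, 2, 2, 3)
# Candidate 2: checkerboard pattern whose channel means are again (10, 20, 30).
checker = cat([0.0 20.0; 20.0 0.0], [10.0 30.0; 30.0 10.0], [20.0 40.0; 40.0 20.0]; dims=3)
println(best_by_mean(G, [flat, checker]))
```

The checkerboard candidate is selected even though none of its pixels equals the corresponding pixel of `G`: the criterion only compares averages, which is exactly the intended trade-off.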

2.C. Similarity Criterion: Mode absolute difference¶

Description¶

Minimize the difference between the mode of the pixel intensities - in other words, try to match the most prominent color in the sub-image in an attempt to get better visual matching. Since making this work across three channels is complicated, this technique is used when we optimize for matching the grayscale versions of the images.

Optimization Model¶

$\text{minimize}:\qquad\sum_{c=R,G,B}~~error_{c}$

$\text{subject to}:\qquad error_{c}\geq E[c]$

$\qquad\qquad\qquad error_{c}\geq -E[c]$

$\qquad\qquad\qquad E[c]=\sum_{j\in\mathbf{B}}D_j[c]\cdot p_j$

$\qquad\qquad\qquad D_j[c]=mode(G[:,:,c])-mode(B_j[:,:,c]),\quad c\in\{R,G,B\}$

$\qquad\qquad\qquad \sum_{j\in\mathbf{B}}p_{j}=1,\quad p_j\in\{0,1\}$
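To illustrate the mode criterion, the sketch below counts intensities per channel and compares the most frequent values. It is a minimal example in current Julia with no packages; `most_frequent`, `channel_modes`, and `best_by_mode` are hypothetical names, and breaking ties toward the smallest value is an assumption made here, not something the notebook specifies.

```julia
# Most frequent value in a collection (ties broken toward the smallest value).
function most_frequent(xs)
    counts = Dict{eltype(xs),Int}()
    for x in xs
        counts[x] = get(counts, x, 0) + 1
    end
    best = maximum(values(counts))
    return minimum(k for (k, v) in counts if v == best)
end

# Per-channel mode of an h x w x 3 integer array.
channel_modes(img) = [most_frequent(vec(img[:, :, c])) for c in 1:size(img, 3)]

# Hypothetical helper: candidate whose channel modes are closest (L1) to those of G.
function best_by_mode(G, basis)
    g = channel_modes(G)
    errors = [sum(abs.(g .- channel_modes(B))) for B in basis]
    return argmin(errors)
end

M_target = [200 200; 200 10]                      # mostly bright, one dark pixel
G = cat(M_target, M_target, M_target; dims=3)
uniform = fill(150, 2, 2, 3)                      # mode 150 in every channel
M_bright = [190 190; 190 0]
bright = cat(M_bright, M_bright, M_bright; dims=3)  # mode 190 in every channel
println(best_by_mode(G, [uniform, bright]))
```

The second candidate is chosen because its dominant intensity (190) is close to that of the grid element (200), even though the uniform candidate is closer in mean.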

The first formulation is the most straightforward yet the most expensive. The number of variables and constraints scales with the size of the target image. Further, matching individual pixels does not necessarily give the best result, because mosaicking is more about overall resemblance at a distance. As a result, the optimization model using this objective function is not shown in the solution part.

Since matching individual pixels is very expensive and all we care about is that the images look alike at a distance, it is natural to treat each basis image as a whole and extract summary features from it. The two most frequently used features are the mean (average) of the pixel RGB intensities and the mode (most frequent value) of the pixel RGB intensities. Both of these are implemented.

2.D. Similarity Criterion: Histogram based matching¶

Description¶

The mode does not capture subtle variations in the color of a sub-image - the histogram paints a far better picture of the color variations within a sub-image, and histogram based matching should be closer to our own visual perception. Whereas the mode tells us only the single most prominent color (which may be useless if a sub-image has equal portions of different colors), a histogram captures this information and lets us match the image better.

Optimization Model¶

$\text{maximize}:\qquad\sum_{c=R,G,B}~~Correlation_{c} $

$\text{subject to}:\qquad Correlation_{c}=\sum_{j\in\mathbf{B}}D_j[c]\cdot p_j$

$\qquad D_j[c]=\dfrac{\sum_{k}\Big(H^{G}_{c}(k)-\overline{H^{G}_{c}}\Big)\Big(H^{B_j}_{c}(k)-\overline{H^{B_j}_{c}}\Big)}{\sqrt{\sum_{k}\Big(H^{G}_{c}(k)-\overline{H^{G}_{c}}\Big)^2}\sqrt{\sum_{k}\Big(H^{B_j}_{c}(k)-\overline{H^{B_j}_{c}}\Big)^2}},\quad c\in\{R,G,B\}$

$\qquad\qquad\qquad H^{G}_{c}=Hist(G[:,:,c]),\quad H^{B_j}_{c}=Hist(B_j[:,:,c]),\quad \overline{H}=Mean(H),\quad k\text{ indexes the histogram bins}$

$\qquad\qquad\qquad \sum_{j\in\mathbf{B}}p_{j}=1,\quad p_j\in\{0,1\}$

Histograms help us capture an overall sense of the lightness, the darkness, and the amount of contrast of an image. For an image $I$, $I(x,y)$ is the intensity at the pixel with coordinates $(x,y)$. The histogram $h$ records, for each intensity $i$, the number of pixels $h(i)$ that have that intensity. Mathematically, $\qquad h(i)=\sum_{x}\sum_{y}\mathbf{1}\big[I(x,y)=i\big]$
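The histogram definition can be made concrete with a few lines of code. This is a minimal sketch in current Julia, assuming integer intensities in the range 0 to 255; `intensity_histogram` is a hypothetical name and is not the `hist` call used in the notebook cells.

```julia
# Count how often each intensity 0..255 occurs in one channel.
# Bin k + 1 holds the count for intensity k (Julia arrays are 1-based).
function intensity_histogram(channel::AbstractArray{<:Integer})
    h = zeros(Int, 256)
    for v in channel
        h[v + 1] += 1
    end
    return h
end

channel = [0 0 255; 17 0 255]        # toy 2 x 3 single-channel image
h = intensity_histogram(channel)
println(h[1], " ", h[18], " ", h[256])   # counts for intensities 0, 17 and 255
```

In the toy channel, intensity 0 occurs three times, 17 once, and 255 twice, and the counts always sum to the number of pixels.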

We have also utilized histograms to compare each basis image $B_j$ with target grid $G$. This would essentially boil down to comparing two arrays of data. We have used correlation as the comparison metric for comparing the two arrays. Correlation is given by,

$\qquad\qquad\qquad d(H_1,H_2)=\dfrac{\sum_{k=1}^{N}\Big(H_1(k)-\overline{H_1}\Big)\Big(H_2(k)-\overline{H_2}\Big)}{\sqrt{\sum_{k=1}^{N}\Big(H_1(k)-\overline{H_1}\Big)^2}\sqrt{\sum_{k=1}^{N}\Big(H_2(k)-\overline{H_2}\Big)^2}},\qquad\overline{H_i}=\dfrac{1}{N}\sum_{k=1}^{N} H_i(k)$

The larger the correlation, the better the match. A perfect match gives $d(H_1,H_2)=1$.

Therefore, the objective in our problem is to maximize the correlation between the histograms of the target grid $G$ and the basis image $B_j$. This can be done using either a grayscale image or an RGB image. For a grayscale image, the grayscale histograms of $G$ and $B_j$ are compared. For an RGB image, the counts obtained for each intensity in each channel are summed for both $G$ and $B_j$, and the correlation between the two resulting arrays is maximized. Both cases are implemented.
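The correlation metric can be sketched as a short function on two histogram vectors. This is an illustration in current Julia using the `Statistics` standard library; `histogram_correlation` is a hypothetical name. Returning 0 for a flat (zero-variance) histogram mirrors the NaN-to-zero handling in the notebook's `pickOptHist`, and is an assumption about the desired behavior rather than part of the mathematical definition.

```julia
using Statistics

# Correlation between two histograms of equal length, following d(H1, H2) above.
function histogram_correlation(h1::AbstractVector, h2::AbstractVector)
    d1 = h1 .- mean(h1)
    d2 = h2 .- mean(h2)
    denom = sqrt(sum(d1 .^ 2)) * sqrt(sum(d2 .^ 2))
    # A flat histogram has zero variance, so the ratio is undefined; return 0 instead.
    return denom == 0 ? 0.0 : sum(d1 .* d2) / denom
end

h_dark  = [4, 0, 0, 1]     # most pixels in the darkest bin
h_light = [1, 0, 0, 4]     # most pixels in the brightest bin
println(histogram_correlation(h_dark, h_dark))
println(histogram_correlation(h_dark, h_light))
println(histogram_correlation([1, 1, 1, 1], h_dark))
```

Identical histograms give a correlation of (approximately) 1, the mirrored histogram scores lower, and the flat histogram falls back to 0.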

3. Solution - Mixed Integer Program Formulation¶

IMPORTANT: Please run all the cells sequentially

3.A. Common functions¶

The following module contains all the helper functions to process the images. Run this section first before moving on to the optimization model. The common functions include:

- get_image_files(image_dir): load all jpg image files inside the directory

- convert_image_to_mat(image): convert one image file to a h x w matrix

- convert_mat_to_image(image_mat): convert a h x w matrix to an Images module image

- load_images_as_cellarray_mats(image_files): load a list of image files as a cell array of image matrices

- load_cellarray_mats_as_images(images_mat): convert a cell array of image matrices to a cell array of images

- scale_image(image,desired_size): scale one basis image to the desired size

- scale_cellarray_mats(images_mat, desired_size): scale the whole base array

- downscale_image(image, factor): downscale image by a factor

- find_channel(image): find the dominant channel for an image

- split_channel(images_mat,images_r,images_g,images_b): split the basis image array to 3 different basis sets based on the dominant channel

- rgb2gray(images): convert rgb image array to grayscaled image array

- scale_gray_image(image,desired_size): scale a grayscale image

- scale_cellarray_gray_mats(images_mat, desired_size): scale an array of grayscale images

- convert_image_mat_to_Int64(image_mat): convert the image type to Int64 for easy calculation of image histogram

The following cell contains all the packages needed to run the code¶

## There is a warning that appears when loading common_functions because of one of

## the packages we import. Please ignore the warnings, or run this cell twice to

## hide the warnings

using Images, DataFrames, FixedPointNumbers, PyPlot, Colors, ProgressMeter

using Interact, Reactive, DataStructures

using JuMP, Clp, Mosek

include("common_functions.jl");

3.B. Load images¶

This section contains the preparation work needed before mosaicking an image: loading the test images, loading the basis images, and scaling the basis images to the desired size. These steps are copied and modified as needed in the following sections for the different mosaicking runs.

# load target images

test_image_files = get_image_files("./test_images")

test_images_mat = load_images_as_cellarray_mats(test_image_files)

println(length(test_images_mat), " test image files loaded.");

3.C. Optimization function¶

The optimization of a single grid element is wrapped in a function, using JuMP. Function pickOpt() uses approaches 2.B and 2.C (mean and mode of the image).

function pickOpt(sub_test_image, basis_colors, optimize_color)

## function that picks the optimal basis image for one sub-image of the same size

## inputs: sub_test_image, the sub-image we are trying to optimize, same size as the desired basis

## basis_colors, the mode/mean array of basis library depending on optimize_color

## optimize_color, logical variable choosing the mean absolute difference (F)

## or mode absolute difference (T)

# get number of basis library and the number of channels we are optimizing

n_basis = size(basis_colors, 1)

n_colors = size(basis_colors, 2)

m = Model(solver = MosekSolver()) # optimization model

@variable(m, pick_basis[1:n_basis], Bin) # one binary variable for each basis image, indicating whether it is chosen

@variable(m, AbsMatchError[1:n_colors] >= 0) # absolute difference

@constraint(m, sum(pick_basis) == 1) # SOS constraint, only one image can be picked

test_image_value = nothing

if optimize_color # calculate the mode/mean value of each channel of the sub-image

test_image_value = [ mode(sub_test_image[:,:,color]) for color in 1:n_colors ]

else

test_image_value = [ mean(sub_test_image[:,:,color]) for color in 1:n_colors ]

end

@expression(m, MatchError[color in 1:n_colors], test_image_value[color] -

sum(dot(pick_basis,basis_colors[:,color])) )

@constraint(m, MatchError .<= AbsMatchError)

@constraint(m, MatchError .>= -AbsMatchError)

@objective(m, Min, sum(AbsMatchError)) # minimize the absolute error

status = solve(m)

# read back the solution and find the index of the chosen basis image

opt_pick_basis = getvalue(pick_basis)

chosen_basis = findfirst(opt_pick_basis)

return chosen_basis

end

pickOpt (generic function with 1 method)

Function pickOptHist() uses approach 2.D.

function pickOptHist(sub_test_image,optimize_color,n_basis,hist_basis_mat)

## function that picks the optimal basis image for one sub-image of the same size

## using the histogram similarity criterion

## inputs: sub_test_image, the sub-image we are trying to optimize, same size as the desired basis

## optimize_color, logical variable choosing the color (RGB) histogram (T)

## or the grayscale histogram (F)

## n_basis, number of basis images in the library

## hist_basis_mat, matrix storing the precalculated histogram of images in the library

# correlation between the sub-image histogram and each basis histogram

corr = zeros(n_basis,1)

m = Model(solver = MosekSolver(LOG=1)) # optimization model

@variable(m, pick_basis[1:n_basis], Bin) # one binary variable for each basis image, indicating whether it is chosen

@variable(m,test_image_hist_mean>=0) # this name is rebound below to the mean-centered histogram of the test image

@constraint(m, sum(pick_basis) == 1)# SOS constraint, only one image can be picked

if optimize_color # calculate for RGB

(nothing,test_image_hist1) = hist(vec(sub_test_image[:,:,1]),-1:255)

(nothing,test_image_hist2) = hist(vec(sub_test_image[:,:,2]),-1:255)

(nothing,test_image_hist3) = hist(vec(sub_test_image[:,:,3]),-1:255)

test_image_hist=test_image_hist1+test_image_hist2+test_image_hist3

test_image_hist_mean=test_image_hist - mean(test_image_hist)

else # calculate for the grayscale image

(nothing,test_image_hist) = hist(vec(sub_test_image[:,:,1]),-1:255)

test_image_hist_mean=test_image_hist - mean(test_image_hist)

end

for basis in 1:n_basis # calculate correlation of the test image with each basis

corr_num=(sum(test_image_hist_mean.*hist_basis_mat[basis]))

corr[basis]=sum(corr_num/((sqrt(sum(test_image_hist_mean.^2))).*sqrt(sum(hist_basis_mat[basis].^2))));

end

#Check if value is NaN

for basis in 1:n_basis

if isnan(corr[basis])

corr[basis]=0

end

end

@expression(m, Correlation, sum(dot(pick_basis,corr[:])))

@objective(m, Max, Correlation) # maximize correlation to get best match

status = solve(m)

# return the index of the chosen basis image

opt_pick_basis = getvalue(pick_basis)

chosen_basis = findfirst(opt_pick_basis)

return chosen_basis

end

pickOptHist (generic function with 1 method)

3.D. Mosaicking first image¶

Let us try mosaicking our first image. The target is a black and white QR code image, and we choose the grayscale image set. With small enough basis images, we should be able to get the exact picture back. In this part, we do things the brute force way. Function brute_force() wraps everything that needs to be done.

Settings¶

test_image = test_images_mat[1] # qr code

image_files = get_image_files("./grayscale") # load gray scale library

base_size = 8 # choose base_size

optimize_color = true # choose optimizing mean (F) or mode (T)

# load data

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded.");

4 basis image files loaded.

function brute_force(test_image, images_mat, base_size, optimize_color)

## compact function that uses the brute force mosaicking, return the assembled image

## inputs: test_image, the image we are trying to mosaic in cellarray form

## images_mat, the image library loaded in cellarray form

## base_size, number of pixels along the width of the square base

## optimize_color, logical variable, choose whether we use mean absolute difference (F)

## or mode absolute difference (T)

# scale the library based on the desired size from input

desired_size = (base_size, base_size)

images_mat = scale_cellarray_mats(images_mat, desired_size)

# get dimensions

n_basis = length(images_mat) # number of basis images

w_basis = size(images_mat[1], 2) # basis width

h_basis = size(images_mat[1], 1) # basis height

w_test_image = size(test_image, 2) # target width

h_test_image = size(test_image, 1) # target height

n_basis_width = div(w_test_image, w_basis) # number of basis images needed to fill target width

n_basis_height = div(h_test_image, h_basis) # number of basis images needed to fill target height

# prepare some arrays for storage

basis_colors = nothing

n_colors = nothing

basis_choice = zeros(n_basis_height, n_basis_width)

mosaic_image = similar(test_image)

@showprogress 1 for j = 1:n_basis_height # loop over all subimages

for i = 1:n_basis_width

# pick out the target grid

sub_test_image = test_image[ (j-1)*h_basis+(1:h_basis) , (i-1)*w_basis+(1:w_basis) , : ]

if optimize_color # use mode absolute difference

n_colors = 3

basis_colors = zeros(n_basis, n_colors)

for color in 1:n_colors

for basis in 1:n_basis

basis_colors[basis,color] = mode(images_mat[basis][:,:,color])

end

end

else # use mean absolute difference

n_colors = 1

basis_colors = zeros(n_basis, n_colors)

for color in 1:n_colors

for basis in 1:n_basis

basis_colors[basis,color] = mean(images_mat[basis][:,:,:])

end

end

end

# call optimization function for the subimage

chosen_basis = pickOpt(sub_test_image, basis_colors, optimize_color)

basis_choice[j,i] = chosen_basis

mosaic_image[ (j-1)*h_basis+(1:h_basis) , (i-1)*w_basis+(1:w_basis) , : ] = images_mat[chosen_basis]

end

end

return mosaic_image

end

brute_force (generic function with 1 method)
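In brute_force() above, the `basis_colors` table depends only on the scaled library, yet it is rebuilt inside the double loop for every sub-image. One possible refactor is to build the table once before looping. The sketch below shows the idea in current Julia with the `Statistics` standard library; `basis_feature_table` is a hypothetical helper, not part of the notebook, and it takes the feature function (for example `mean`) as an argument.

```julia
using Statistics

# Hypothetical helper: one row per basis image, one column per channel,
# each entry holding feature(channel), e.g. the channel mean.
function basis_feature_table(basis, feature)
    n_channels = size(basis[1], 3)
    table = zeros(length(basis), n_channels)
    for (b, img) in enumerate(basis), c in 1:n_channels
        table[b, c] = feature(img[:, :, c])
    end
    return table
end

basis = [fill(10.0, 8, 8, 3), fill(200.0, 8, 8, 3)]   # toy library of two tiles
table = basis_feature_table(basis, mean)              # built once, before looping over tiles
println(size(table))
```

The resulting table could then be passed unchanged to the per-tile optimization for every grid element, which avoids repeating the same work for each sub-image.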

mosaic_image = brute_force(test_image, images_mat, base_size, optimize_color);

println("RESULTS:")

println("\t ** Test Image, Mosaic Image **")

imshow([test_image mosaic_image]);

Progress: 100% Time: 0:00:15

RESULTS:

** Test Image, Mosaic Image **

3.E. More complicated image with image library¶

In this section, we optimize a real image with one of our libraries. We first solve the problem the brute force way. Then several improvements are explored. Here is the image we are mosaicking.

3.E.a. Brute force optimization¶

The brute force way means we optimize each grid element the same way, using the function in section 3.C. without any heuristics or prior processing. The following section loads the basis and rescales it to the desired size. Function brute_force() uses the optimization function pickOpt() shown above and returns the mosaic image. For a description of the inputs, please see the comments in the cells.

test_image = test_images_mat[2]; # dog

imshow(test_image);

image_files = get_image_files("./music0500") # image library with ~500 album covers

base_size = 8

optimize_color = true

# load data

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded.")

498 basis image files loaded.

# try mosaicking the image the brute force way

mosaic_image = brute_force(test_image, images_mat, base_size, optimize_color);

println("RESULTS:")

println("\t ** Test Image, Mosaic Image **")

imshow([test_image mosaic_image]);

Progress: 100% Time: 0:19:36

RESULTS:

** Test Image, Mosaic Image **

3.E.b. Split RGB channel - Speed up our Optimization!¶

Notice that the brute force way is very slow because the SOS constraint of each sub-problem spans the whole basis array. Further, the result is not very satisfying in places. It is worth noting that the smallest $L_1$ norm does not necessarily guarantee a similar color appearance. For example, at the lower right corner, a brownish basis image is chosen for the dark blue regions. To address this, we can preprocess both the grid elements and the basis images by identifying their dominant color. A simple way is to find the color channel with the largest average intensity. Doing so reduces the basis size for each sub-problem to roughly $\frac{1}{3}$ of the original basis size. Additionally, it guarantees that the basis image we choose for each grid element has the same dominant color as the grid element itself.
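The dominant-color preprocessing can be sketched as follows. This is a minimal illustration in current Julia with the `Statistics` standard library. `dominant_channel` and `group_by_dominant_channel` are hypothetical stand-ins, and they are not the `find_channel` and `split_channel` helpers from common_functions.jl, whose implementations are not shown here.

```julia
using Statistics

# Hypothetical stand-in: index (1 = R, 2 = G, 3 = B) of the channel with the largest mean.
dominant_channel(img) = argmax([mean(img[:, :, c]) for c in 1:3])

# Hypothetical stand-in: group library indices by the dominant channel of each image.
function group_by_dominant_channel(basis)
    groups = [Int[] for _ in 1:3]
    for (b, img) in enumerate(basis)
        push!(groups[dominant_channel(img)], b)
    end
    return groups
end

reddish = cat(fill(200.0, 2, 2), fill(10.0, 2, 2), fill(10.0, 2, 2); dims=3)
bluish  = cat(fill(10.0, 2, 2), fill(10.0, 2, 2), fill(200.0, 2, 2); dims=3)
groups = group_by_dominant_channel([reddish, bluish, reddish])
println(groups)
```

In this toy library the green group is empty. With a real library that situation would need a fallback (for example, searching the full basis), since a sub-image with a green dominant channel would otherwise have no candidates.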

Function split_rgb() wraps everything needed for this method into one function.

function split_rgb(test_image, images_mat, base_size, optimize_color)

## compact function that uses the splitting rgb speed up, return the assembled image

## inputs: test_image, the image we are trying to mosaic in cellarray form

## images_mat, the image library loaded in cellarray form

## base_size, number of pixels along the width of the square base

## optimize_color, logical variable, choose whether we use mean absolute difference (F)

## or mode absolute difference (T)

# scale the library based on the desired size from input

desired_size = (base_size, base_size)

images_mat = scale_cellarray_mats(images_mat, desired_size)

# declare the 3 channels and split the scaled library into 3 channels

images_r = similar(images_mat)

images_g = similar(images_mat)

images_b = similar(images_mat)

count=split_channel(images_mat,images_r,images_g,images_b)

images_r = images_r[1:count[1]]

images_g = images_g[1:count[2]]

images_b = images_b[1:count[3]]

# get dimensions

n_basis = length(images_mat) # number of basis images

w_basis = size(images_mat[1], 2) # basis width

h_basis = size(images_mat[1], 1) # basis height

w_test_image = size(test_image, 2) # target width

h_test_image = size(test_image, 1) # target height

n_basis_width = div(w_test_image, w_basis) # number of basis images needed to fill target width

n_basis_height = div(h_test_image, h_basis) # number of basis images needed to fill target height

# prepare some arrays for storage

basis_colors = nothing

n_colors = nothing

basis_choice = zeros(n_basis_height, n_basis_width)

mosaic_image = similar(test_image)

@time @showprogress 1 for j = 1:n_basis_height # loop over all sub images

for i = 1:n_basis_width

# pick out the target grid

sub_test_image = test_image[ (j-1)*h_basis+(1:h_basis) , (i-1)*w_basis+(1:w_basis) , : ]

# find the channel of the sub-image and choose the corresponding basis for optimization

channel = find_channel(sub_test_image)

if (channel == 1)

images_base = images_r

elseif (channel == 2)

images_base = images_g

else

images_base = images_b

end

n_basis = size(images_base)[1] # the basis size of the specific channel

if optimize_color # optimize mode absolute difference

n_colors = 3

basis_colors = zeros(n_basis, n_colors)

for color in 1:n_colors

for basis in 1:n_basis

basis_colors[basis,color] = mode(images_base[basis][:,:,color])

end

end

else # optimize mean absolute difference

n_colors = 1

basis_colors = zeros(n_basis, n_colors)

for color in 1:n_colors

for basis in 1:n_basis

basis_colors[basis,color] = mean(images_base[basis][:,:,:])

end

end

end

chosen_basis = pickOpt(sub_test_image, basis_colors, optimize_color)

basis_choice[j,i] = chosen_basis

mosaic_image[ (j-1)*h_basis+(1:h_basis) , (i-1)*w_basis+(1:w_basis) , : ] = copy(images_base[chosen_basis])

end

end

return mosaic_image

end

split_rgb (generic function with 1 method)

mosaic_image = split_rgb(test_image, images_mat, base_size, optimize_color);

println("RESULTS:")

println("\t ** Test Image, Mosaic Image **")

imshow([test_image mosaic_image]);

Progress: 100% Time: 0:05:32

332.438631 seconds (136.12 M allocations: 23.397 GB, 0.76% gc time)

RESULTS:

** Test Image, Mosaic Image **

3.E.c. Histogram based Matching¶

Another method discussed previously uses histogram matching to match the target grid element with the best match from the basis set. This gives a smoother result than the methods discussed above. The intensities are not quantized into coarser bins here: we use 256 bins, one for each intensity 0 - 255. Two more helper functions are added for this method.

Function histogram_optimization() wraps everything needed in the method into a function.

test_image = test_images_mat[2] # dog

image_files = get_image_files("./music0500")

base_size = 8

optimize_color = true

# load data

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded.");

498 basis image files loaded.

function histogram_optimization(test_image,images_mat,base_size,optimize_color)

## function that uses histogram matching to return the assembled image

## inputs: test_image, the image we are trying to mosaic in cellarray form

## images_mat, the image library loaded in cellarray form

## base_size, number of pixels along the width of the square base

## optimize_color, logical variable, choose whether we want to perform mosaicing on

## a color image (T) or grayscaled image (F)

image_files=load_cellarray_mats_as_images(images_mat)

images_gray_mat=rgb2gray(image_files)

# scale the library based on the desired size from input

desired_size = (base_size, base_size)

images_mat = scale_cellarray_mats(images_mat, desired_size)

test_image_temp = cell(1,1)

test_image_temp[1] = convert_mat_to_image(test_image)

test_image_gray = rgb2gray(test_image_temp)[1]

# get dimensions

n_basis = length(images_mat) #Number of basis images

w_basis = size(images_mat[1], 2) #Basis width

h_basis = size(images_mat[1], 1) #Basis height

w_test_image = size(test_image, 2) #Target image's width

h_test_image = size(test_image, 1) #Target image's height

n_basis_width = div(w_test_image, w_basis) #Number of basis images needed to fill target width

n_basis_height = div(h_test_image, h_basis) #Number of basis images needed to fill target height

#Initialize required arrays

basis_choice = zeros(n_basis_height, n_basis_width)

mosaic_image = copy(test_image)

hist_basis_mat = cell(n_basis,1)

hist_basis_mat1 = cell(n_basis,1)

hist_basis_mat2 = cell(n_basis,1)

hist_basis_mat3 = cell(n_basis,1)

test_image1=rand(Int64,size(test_image,1),size(test_image,2),size(test_image,3))

images_mat1 = cell(length(images_mat),1)

test_image_gray1=rand(Int64,size(test_image_gray,1),size(test_image_gray,2),size(test_image_gray,3))

images_gray_mat1 = cell(length(images_gray_mat),1)

for basis in 1:n_basis

images_mat1[basis]=

rand(Int64,size(images_mat[basis],1),size(images_mat[basis],2),size(images_mat[basis],3))

end

for basis in 1:n_basis

images_gray_mat1[basis]=rand(Int64,size(images_gray_mat[basis],1),

size(images_gray_mat[basis],2), size(images_gray_mat[basis],3))

end

#Convert Hexadecimal intensity values to Int values for easy computation of Histogram

test_image1 = convert_image_mat_to_Int64(test_image)

test_image_gray1=convert_image_mat_to_Int64(test_image_gray)

for basis in 1:n_basis

images_mat1[basis]=convert_image_mat_to_Int64(images_mat[basis])

images_gray_mat1[basis]=convert_image_mat_to_Int64(images_gray_mat[basis])

end

@showprogress 1 for j = 1:n_basis_height #loop over all sub images

for i = 1:n_basis_width

if optimize_color

# pick out the target grid

sub_test_image = test_image1[(j-1)*h_basis+(1:h_basis),(i-1)*w_basis+(1:w_basis),:]

for basis in 1:n_basis

# Find histogram of Channel 1(Red)

(a,hist_basis_mat1[basis]) = hist(vec(images_mat1[basis][:,:,1]),-1:255)

# Find histogram of Channel 2(Green)

(a,hist_basis_mat2[basis]) = hist(vec(images_mat1[basis][:,:,2]),-1:255)

# Find Histogram of Channel 3(Blue)

(a,hist_basis_mat3[basis]) = hist(vec(images_mat1[basis][:,:,3]),-1:255)

hist_basis_mat[basis]= hist_basis_mat1[basis]+hist_basis_mat2[basis]+hist_basis_mat3[basis]

hist_basis_mat[basis]=hist_basis_mat[basis]-mean(hist_basis_mat[basis])

end

else

# pick out the target grid

sub_test_image = test_image_gray1[(j-1)*h_basis+(1:h_basis),(i-1)*w_basis+(1:w_basis),:]

for basis in 1:n_basis

#Find histogram of one channel

(a,hist_basis_mat[basis]) = hist(vec(images_gray_mat1[basis]),-1:255)

hist_basis_mat[basis]=hist_basis_mat[basis]-mean(hist_basis_mat[basis])

end

end

chosen_basis = pickOptHist(sub_test_image,optimize_color,n_basis,hist_basis_mat)

basis_choice[j,i] = chosen_basis

if optimize_color

mosaic_image[ (j-1)*h_basis+(1:h_basis) , (i-1)*w_basis+(1:w_basis) , : ] = images_mat[chosen_basis]

else

mosaic_image[ (j-1)*h_basis+(1:h_basis) , (i-1)*w_basis+(1:w_basis),:] = images_gray_mat[chosen_basis]

end

end

end

if optimize_color

return mosaic_image, test_image

else

return mosaic_image, test_image_gray

end

end

histogram_optimization (generic function with 1 method)

In the case of a color image¶

optimize_color = true;

mosaic_image, test_image_used = histogram_optimization(test_image, images_mat, base_size, optimize_color);

println("RESULTS:")

println("\t ** Test Image, Mosaic Image **")

imshow([test_image_used mosaic_image]);

Progress: 100% Time: 0:02:37 RESULTS: ** Test Image, Mosaic Image **

In the case of a grayscale image¶

optimize_color = false;

mosaic_image, test_image_used = histogram_optimization(test_image, images_mat, base_size, optimize_color);

println("RESULTS:")

println("\t ** Test Image, Mosaic Image **")

imshow([test_image_used mosaic_image]);

Progress: 100% Time: 0:02:42 RESULTS: ** Test Image, Mosaic Image **

4. Mathematical Model - Linear Program Formulation¶

So far, we have only been able to work with fixed basis sizes. What if we wanted to create a mosaic where we could also optimize to choose the best size of the tiles and have a mosaic image that is composed of tiles of varying sizes?

For this approach, given its inherent complexity, a Linear Program formulation with heavily pre-processed data could be practically feasible, and such an approach is presented below.

We choose a desired downscaling level for the basis images (the downscaling happens in factors of 2). For example:

- Input Basis Images - Size - 64 x 64

- Desired Downscaling : 3

- The sizes that will be considered are:

- Downscaling 1: 64 x 64

- Downscaling 2: 32 x 32

- Downscaling 3: 16 x 16

The optimization will try to pick the best combination of the basis image as well as the best size possible and create an image using that.

NOTE: Setting desired downscaling to 1 will result in a Linear Program formulation for a fixed basis size
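The candidate sizes follow from halving the basis side once per downscaling level. A minimal sketch for Julia 1.x, where `candidate_sizes` is a hypothetical helper name that does not appear in the notebook:

```julia
# Side length of the (square) basis image at every downscaling level.
# Level 1 is the original size; each further level halves the side.
candidate_sizes(base_side::Int, desired_downscaling::Int) =
    [div(base_side, 2^(level - 1)) for level in 1:desired_downscaling]

println(candidate_sizes(64, 3))   # [64, 32, 16]
println(candidate_sizes(64, 1))   # [64]
```

The second call shows the fixed-size case mentioned in the note: with a desired downscaling of 1 only the original size remains.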

Important Warning¶

Unfortunately, the amount of pre-processing memory this approach needs scales with the area of the target image (quadratically in its linear dimension), exponentially with the desired downscaling and linearly with the number of basis images. This makes any desired downscaling value beyond 3 impractical on our personal computers (a computer with about 40-50 GB of RAM can handle it). The minimum amount of memory required on the system is displayed during the calculations below.
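To get a feel for this growth, the estimate that the notebook prints in Section 5 can be wrapped in a small function. The sketch below (Julia 1.x) copies that expression; the function name `preprocessing_memory_gb` and the 640 x 960 test image size are assumptions made for illustration, and the estimate covers only the pre-processing arrays, not the optimization model.

```julia
# Rough memory estimate (in GB) for the pre-processing arrays, following the
# expression used in the notebook: 2 bytes per Float16 entry, with the
# printed figure doubled once more.
function preprocessing_memory_gb(h_test, w_test, h_basis, w_basis,
                                 n_colors, downscaling, n_basis)
    h_smallest = div(h_basis, 2^(downscaling - 1))
    w_smallest = div(w_basis, 2^(downscaling - 1))
    h_grid = div(h_test, h_smallest)      # grid elements along the height
    w_grid = div(w_test, w_smallest)      # grid elements along the width
    entries = h_grid * w_grid * (2^downscaling - 1)^2 * n_colors * downscaling * n_basis
    return (entries * 2) / 1e9 * 2
end

# Assumed inputs: 640 x 960 test image, 64 x 64 basis images, 3 colors, 50 basis images.
for d in 1:4
    println("downscaling = ", d, ": ",
            preprocessing_memory_gb(640, 960, 64, 64, 3, d, 50), " GB")
end
```

With these assumed inputs, a downscaling of 3 gives about 0.21 GB, which agrees with the figure printed in Section 5.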

Objective¶

Here we minimize only the mean absolute error between pixels, in order to limit the already high computational effort of pre-processing.
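As a small illustration of this error measure, the sketch below computes the mean absolute difference between a candidate tile and a target patch. It is written for Julia 1.x; `mean_abs_error` and the pixel values are hypothetical.

```julia
# Mean absolute difference between two equally sized pixel arrays.
mean_abs_error(a, b) = sum(abs.(a .- b)) / length(a)

patch = [90 110; 100 120]     # a 2 x 2 target patch (one color channel)
tile  = fill(100, 2, 2)       # a flat candidate tile

println(mean_abs_error(tile, patch))   # 10.0
```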

Preprocessing¶

We pick the smallest downscaled image size based on the desired downscaling setting (let's say this is 16 x 16). Suppose the test image is of size 640 x 640. The smallest grid elements are then 16 x 16, and a 40 x 40 grid of them covers the entire image. These 40 x 40 smallest elements are our grid elements.

For basis images that are not scaled down to this level, they will span more than one grid element. For example, a 32 x 32 downscaled basis image will span 4 grid elements of size 16 x 16, in the following way:

| $\dots$ | $\dots$ | $\dots$ | $\dots$ |

|---|---|---|---|

| $\dots$ | 16 x 16 | 16 x 16 | $\dots$ |

| $\dots$ | 16 x 16 | 16 x 16 | $\dots$ |

| $\dots$ | $\dots$ | $\dots$ | $\dots$ |
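The grid bookkeeping described above can be sketched as follows (Julia 1.x). The helper names `grid_dims` and `grid_span` are hypothetical; the formulas mirror the `div(...)` and `2^(downscaling-scale)` expressions used in the notebook code.

```julia
# Number of grid elements along each axis of the test image.
grid_dims(h_test, w_test, smallest_side) =
    (div(h_test, smallest_side), div(w_test, smallest_side))

# Number of grid elements a tile spans along one axis at a given scale
# (scale 1 = original size, scale == downscaling = smallest size).
grid_span(downscaling, scale) = 2^(downscaling - scale)

println(grid_dims(640, 640, 16))            # (40, 40)
println([grid_span(3, s) for s in 1:3])     # [4, 2, 1]
```

With a desired downscaling of 3, a 32 x 32 tile (scale 2) spans 2 grid elements along each axis, which is the 2 x 2 block shown in the table.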

The preprocessing step does the following:

- For every downscaling setting (1 --> desired)
  - For every basis image
    - For every position of the downscaled basis image in the grid
      - Compute the mean absolute pixel intensity error and store it in a matrix

This processing is done by the calculate_mean_errors function below. The result of the precomputation is a matrix, which is described below.

$\text{mean_error}\left[ \text{grid_loc_y}\ ,\ \text{grid_loc_x}\ ,\ \text{basis_loc_y}\ ,\ \text{basis_loc_x}\ ,\ \text{color}\ ,\ \text{downscaling}\ ,\ \text{basis} \right]$

Explanation¶

- grid_loc_y : Location of the grid element on the Y-axis

- grid_loc_x : Location of the grid element on the X-axis

- basis_loc_y : Location of the top-left corner of the basis image on the Y-axis

- basis_loc_x : Location of the top-left corner of the basis image on the X-axis

- color : The Color Channel (R/G/B)

- downscaling : The factor by which the basis image has been scaled down

- basis : The index of the basis image from the basis set $\mathbf{B}$

grid_loc_y and grid_loc_x are needed because if a basis image is not downscaled to the maximum level, it can be located in multiple places and still affect the error in the same grid-element. This arises from the fact that a basis image that is not completely downscaled spans multiple grid elements, as explained above. So the mean_error value is available for every legal combination of a grid-element, basis location, its scale, color channel and basis image.
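To make this concrete, the sketch below lists every top-left corner from which a tile spanning `span` grid elements per axis would cover a given grid element. It is an illustration for Julia 1.x; `covering_placements` is a hypothetical name and not part of the notebook.

```julia
# All top-left grid positions from which a square tile spanning `span`
# grid elements per axis covers grid element (gy, gx).
function covering_placements(gy, gx, span, grid_h, grid_w)
    corners = Tuple{Int,Int}[]
    for top in (gy - span + 1):gy, left in (gx - span + 1):gx
        # keep only placements that lie completely inside the grid
        inside = top >= 1 && left >= 1 &&
                 top + span - 1 <= grid_h && left + span - 1 <= grid_w
        if inside
            push!(corners, (top, left))
        end
    end
    return corners
end

println(length(covering_placements(5, 5, 2, 40, 40)))   # 4
println(covering_placements(1, 1, 2, 40, 40))           # [(1, 1)]
println(covering_placements(5, 5, 1, 40, 40))           # [(5, 5)]
```

An interior grid element can be covered by a 2 x 2 tile from four different positions, while a fully downscaled tile (span 1) can only sit on the element itself.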

During the optimization, the restriction that only one basis image can cover any particular grid element is imposed, giving rise to the unit hypercube.

Why does an LP work (giving integer solutions)?¶

The only constraints used in this model have vertices on the unit hypercube - consequently the solution has to be at a vertex, giving rise to integer solutions.
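Since the reconstruction step rounds the solver output, it can be worth checking that the returned values really are 0 or 1 up to a small tolerance. A minimal sketch for Julia 1.x; `is_binary_solution` and the tolerance value are assumptions for illustration, not part of the notebook.

```julia
# True if every entry is within `tol` of 0 or of 1.
is_binary_solution(x; tol = 1e-6) =
    all(v -> abs(v) <= tol || abs(v - 1) <= tol, x)

println(is_binary_solution([0.0, 1.0, 1e-9, 1 - 1e-9]))   # true
println(is_binary_solution([0.5, 0.5, 0.0]))              # false
```

If such a check fails, rounding would no longer be a faithful reading of the LP solution.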

Optimization Model¶

minimize: $\sum_{\text{grid elements}} \left[ \text{mosaic error} + \lambda \times \text{number of downscaled basis images} \right]$

where:

- $\lambda$ : tradeoff parameter between decreasing mosaic error and doing more downscaling

- $\text{mosaic error}[\text{grid_loc_y}, \text{grid_loc_x}] = \sum_{\text{basis locations}} \sum_{\text{downscaling}} \sum_{\text{basis images}} \\ \quad\quad\quad\quad\quad\quad\quad\quad\quad \text{mean}_{\text{colour}}\left( \text{mean_error}\left[ \text{grid location}\ ,\ \text{basis location}\ ,\ \text{downscaling}\ ,\ \text{basis image} \right] \times \\ \quad\quad\quad\quad\quad\quad\quad\quad\quad \text{place_basis}[\text{basis location}, \text{downscaling}, \text{basis image}] \right) $

subject to: For each grid location

$$ \sum_{\text{possible basis images, locations, scales for this grid location }} \text{place_basis}\left[\text{basis location}, \text{downscaling}, \text{basis image}\right] = 1$$

5. Solution - Linear Program Formulation¶

test_image = test_images_mat[5] # wisconsin state capitol

image_files = get_image_files("./small_set")

desired_downscaling = 3

# load data

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded.");

50 basis image files loaded.

# load data

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded")

max_downscaling_height = floor( log2(size(images_mat[1],1)) )

max_downscaling_width = floor( log2(size(images_mat[1],2)) )

downscaling = round(Int, min(desired_downscaling, max_downscaling_height, max_downscaling_width))

n_basis = length(images_mat)

h_test, w_test, n_colors = size(test_image)

h_basis, w_basis, n_colors = size(images_mat[1])

h_basis_smallest = div(h_basis, 2^(downscaling-1))

w_basis_smallest = div(w_basis, 2^(downscaling-1))

h_grid_test_image = div(h_test, h_basis_smallest)

w_grid_test_image = div(w_test, w_basis_smallest)

memory_needed = (h_grid_test_image * w_grid_test_image * (2^downscaling-1)^2 * n_colors * downscaling * n_basis * 2) / 1e9 # GB

println("Memory Needed for Data Pre-processing = ", memory_needed*2, " GB")

50 basis image files loaded Memory Needed for Data Pre-processing = 0.21168 GB

function calculate_mean_errors(test_image, images_mat, downscaling)

n_basis = length(images_mat)

h_test, w_test, n_colors = size(test_image)

h_basis, w_basis, n_colors = size(images_mat[1])

h_basis_smallest = div(h_basis, 2^(downscaling-1))

w_basis_smallest = div(w_basis, 2^(downscaling-1))

h_grid_test_image = div(h_test, h_basis_smallest)

w_grid_test_image = div(w_test, w_basis_smallest)

default_error = 1000

# grid_h_, grid_w, basis_top_h, basis_left_w, n_colors, downscaling, n_basis

mean_errors = cell(downscaling,1)

for scale in 1:downscaling

mean_errors[scale] = default_error*ones(Float16, h_grid_test_image, w_grid_test_image,

2^(downscaling-scale), 2^(downscaling-scale), n_colors, n_basis)

end

possible_bases_for_grid_location = cell(h_grid_test_image, w_grid_test_image)

# initialize the possible_bases_for_grid_location

for j in 1:h_grid_test_image

for i in 1:w_grid_test_image

possible_bases_for_grid_location[j, i] = []

end

end

# return mean_errors, possible_bases_for_grid_location

for scale in 1:downscaling

h_grid_basis_image = 2^(downscaling-scale)

w_grid_basis_image = 2^(downscaling-scale)

@showprogress for basis in 1:n_basis

basis_image = downscale_image(images_mat[basis], 2^(scale-1))

for top_grid_y in 1:(h_grid_test_image-h_grid_basis_image+1)

top_y = (top_grid_y-1)*h_basis_smallest + 1

bottom_y = (top_grid_y-1+h_grid_basis_image)*h_basis_smallest

for left_grid_x in 1:(w_grid_test_image-w_grid_basis_image+1)

left_x = (left_grid_x-1)*w_basis_smallest + 1

right_x = (left_grid_x-1+w_grid_basis_image)*w_basis_smallest

sub_test_image = test_image[top_y:bottom_y, left_x:right_x, :]

basis_image_error = basis_image - sub_test_image

abs_basis_image_error = abs(basis_image_error)

for top_subgrid_y in top_grid_y:min( (top_grid_y+h_grid_basis_image-1) , h_grid_test_image )

top_sub_y = (top_subgrid_y-top_grid_y)*h_basis_smallest + 1

bottom_sub_y = (top_subgrid_y-top_grid_y+1)*h_basis_smallest

for left_subgrid_x in left_grid_x:min( (left_grid_x+w_grid_basis_image-1) , w_grid_test_image )

left_sub_x = (left_subgrid_x-left_grid_x)*w_basis_smallest + 1

right_sub_x = (left_subgrid_x-left_grid_x+1)*w_basis_smallest

push!(possible_bases_for_grid_location[top_subgrid_y, left_subgrid_x],

(top_subgrid_y-top_grid_y+1, left_subgrid_x-left_grid_x+1, scale, basis) )

for color in 1:n_colors

mean_abs_error = mean(abs_basis_image_error[top_sub_y:bottom_sub_y,

left_sub_x:right_sub_x, color])

mean_errors[scale][top_subgrid_y, left_subgrid_x,

top_subgrid_y-top_grid_y+1, left_subgrid_x-left_grid_x+1,

color, basis] = mean_abs_error

# end of color loop

end

# end of left_subgrid_x loop

end

# end of top_subgrid_y loop

end

# end of left_grid_x loop

end

#end of top_grid_y loop

end

# end of basis loop

end

# end of scaling loop

end

return mean_errors, possible_bases_for_grid_location

end

calculate_mean_errors (generic function with 1 method)

function lp_formulation(test_image, images_mat, downscaling, λ)

n_basis = length(images_mat)

h_test, w_test, n_colors = size(test_image)

h_basis, w_basis, n_colors = size(images_mat[1])

h_basis_smallest = div(h_basis, 2^(downscaling-1))

w_basis_smallest = div(w_basis, 2^(downscaling-1))

h_grid_test_image = div(h_test, h_basis_smallest)

w_grid_test_image = div(w_test, w_basis_smallest)

# do the pre-processing to get the result

println("INFO: Starting pre-processing")

@time mean_errors, possible_bases_for_grid_location = calculate_mean_errors(test_image, images_mat, downscaling)

println("INFO: Finished pre-processing")

println("INFO: Building optimization model")

# declare optimization model

m = Model(solver = ClpSolver())

# place_basis variable

# place_basis variable tells whether a particular basis image of a particular scale

# is placed such that its top-left corner corresponds to the grid element [h,w]

@time @variable(m, 0 <= place_basis[1:h_grid_test_image, 1:w_grid_test_image, 1:downscaling, 1:n_basis] <= 1)

# exactly one basis must correspond to any location

# any given grid-element has to be covered by EXACTLY ONE basis image

@time @constraint(m, C_one_basis[h_grid in 1:h_grid_test_image, w_grid in 1:w_grid_test_image], sum{

place_basis[h_grid-h_loc+1, w_grid-w_loc+1, scale, basis],

(h_loc, w_loc, scale, basis) in possible_bases_for_grid_location[h_grid, w_grid]

} == 1)

# mosaic error

# compute the mosaic error as described before

@time @expression(m, mosaic_error[h_grid in 1:h_grid_test_image, w_grid in 1:w_grid_test_image], sum{

place_basis[h_grid-h_loc+1, w_grid-w_loc+1, scale, basis] *

mean( mean_errors[scale][h_grid, w_grid, h_loc, w_loc, 1:n_colors, basis] ),

(h_loc, w_loc, scale, basis) in possible_bases_for_grid_location[h_grid, w_grid]

})

# total mosaic error

@time @expression(m, total_mosaic_error, sum(mosaic_error))

# total downscaled basis

# total number of basis images that were downscaled. calculated for the tradeoff

@time @expression(m, total_downscaled_bases, sum(place_basis[:, :, 2:downscaling, :]) )

# set the objective as a linear combination using the tradeoff parameter

@time @objective(m, Min, total_mosaic_error + λ * total_downscaled_bases )

println("INFO: Finished building optimization model")

println("INFO: Starting solver")

@time status = solve(m)

println("INFO: Finished solver")

println("INFO: Reconstructing image")

opt_place_basis = getvalue(place_basis)

@time mosaic_image = construct_mosaic_image(opt_place_basis, test_image, images_mat, downscaling);

println("INFO: Finished reconstructing image")

return mosaic_image

end

lp_formulation (generic function with 1 method)

# construct mosaic image from solution

function construct_mosaic_image(opt_place_basis, test_image, images_mat, downscaling)

n_basis = length(images_mat)

h_test, w_test, n_colors = size(test_image)

h_basis, w_basis, n_colors = size(images_mat[1])

h_basis_smallest = div(h_basis, 2^(downscaling-1))

w_basis_smallest = div(w_basis, 2^(downscaling-1))

h_grid_test_image = div(h_test, h_basis_smallest)

w_grid_test_image = div(w_test, w_basis_smallest)

opt_place_basis = round(Int8, opt_place_basis)

nonzero_indices = find(opt_place_basis)

chosen_bases = [ ind2sub(opt_place_basis, nonzero_indices[i]) for i in 1:length(nonzero_indices) ]

mosaic_image = similar(test_image)

for choice in chosen_bases

h_grid_loc = choice[1]

w_grid_loc = choice[2]

scale = choice[3]

basis = choice[4]

h_grid_basis_image = 2^(downscaling-scale)

w_grid_basis_image = 2^(downscaling-scale)

top_y = (h_grid_loc-1)*h_basis_smallest + 1

bottom_y = (h_grid_loc-1+h_grid_basis_image)*h_basis_smallest

left_x = (w_grid_loc-1)*w_basis_smallest + 1

right_x = (w_grid_loc-1+w_grid_basis_image)*w_basis_smallest

basis_image = downscale_image(images_mat[basis], 2^(scale-1))

mosaic_image[top_y:bottom_y, left_x:right_x, :] = basis_image

end

return mosaic_image

end

construct_mosaic_image (generic function with 1 method)

# plot two images side by side to see how we did

λ = 0

mosaic_image = lp_formulation(test_image, images_mat, downscaling, λ)

println("RESULTS:");

println("\t ** Desired Image, Mosaic Image **");

imshow([test_image mosaic_image]);

INFO: Starting pre-processing Progress: 100%|█████████████████████████████████████████| Time: 0:00:18 Progress: 100%|█████████████████████████████████████████| Time: 0:00:07 Progress: 100%|█████████████████████████████████████████| Time: 0:00:03 27.748375 seconds (147.27 M allocations: 11.396 GB, 26.75% gc time) INFO: Finished pre-processing INFO: Building optimization model 0.190547 seconds (402.80 k allocations: 45.290 MB, 40.02% gc time) 3.311674 seconds (15.79 M allocations: 552.990 MB, 9.73% gc time) 8.931886 seconds (65.90 M allocations: 2.162 GB, 8.87% gc time) 0.074267 seconds (3.64 k allocations: 74.653 MB, 22.02% gc time) 0.090370 seconds (62.84 k allocations: 10.236 MB) 0.047971 seconds (357 allocations: 34.615 MB, 25.68% gc time) INFO: Finished building optimization model INFO: Starting solver 1.000508 seconds (74.48 k allocations: 177.435 MB, 24.67% gc time) INFO: Finished solver INFO: Reconstructing image 0.653515 seconds (7.30 M allocations: 374.756 MB, 36.98% gc time) INFO: Finished reconstructing image RESULTS: ** Desired Image, Mosaic Image **

6. Results and discussion¶

We have summarized our results for each of the methods we implemented, over a range of basis sizes.

6.A. Similarity Criterion: Mean absolute difference¶

| <img src="./test_images/test2.jpg" alt="Drawing" style="width: 200px;"> | <img src="./Results/Mean4.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/Mean8.png" alt="Drawing" style="width: 200px;"> |
|---|---|---|
| Original Image | Base-size = 4 x 4 | Base-size = 8 x 8 |
| <img src="./Results/Mean16.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/Mean32.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/Mean64.png" alt="Drawing" style="width: 200px;"> |
| Base-size = 16 x 16 | Base-size = 32 x 32 | Base-size = 64 x 64 |

We observe that the picture looks more deformed as the basis size increases. Because the comparison metric in this case is the mean absolute difference, the color of the image is not taken into account, and we can see that the background color does not match the target image exactly.

6.B. Similarity Criterion: Histogram based matching¶

| <img src="./test_images/test2.jpg" alt="Drawing" style="width: 200px;"> | <img src="./Results/Hist4.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/Hist8.png" alt="Drawing" style="width: 200px;"> |
|---|---|---|
| Original Image | Base-size = 4 x 4 | Base-size = 8 x 8 |
| <img src="./Results/Hist16.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/Hist32.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/Hist64.png" alt="Drawing" style="width: 200px;"> |
| Base-size = 16 x 16 | Base-size = 32 x 32 | Base-size = 64 x 64 |

The image looks smoother than the mosaic obtained when the mean absolute difference was used. Also, even at larger basis sizes, the color of each basis image is similar to that of the corresponding grid element. This matches what we expect, since we choose the basis image that correlates most strongly with that particular grid element in the target image.

6.C. Split RGB channel¶

| <img src="./test_images/test2.jpg" alt="Drawing" style="width: 200px;"> | <img src="./Results/SplitRGB4.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/SplitRGB8.png" alt="Drawing" style="width: 200px;"> |
|---|---|---|
| Original Image | Base-size = 4 x 4 | Base-size = 8 x 8 |
| <img src="./Results/SplitRGB16.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/SplitRGB32.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/SplitRGB64.png" alt="Drawing" style="width: 200px;"> |
| Base-size = 16 x 16 | Base-size = 32 x 32 | Base-size = 64 x 64 |

The images obtained using this method are consistent with the expectation that the color that dominates the basis image in a grid position is the color that dominates the corresponding grid element in the target image. We obtain the same result even when larger basis sizes are used.

6.D. Linear Programming Formulation¶

| <img src="./test_images/test2.jpg" alt="Drawing" style="width: 200px;"> | Infeasible Runtime | <img src="./Results/LP4.png" alt="Drawing" style="width: 200px;"> |
|---|---|---|
| Original Image | Downscaling: 5 (Up to Base-size = 4 x 4) | Downscaling: 4 (Up to Base-size = 8 x 8) |
| <img src="./Results/LP3.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/LP2.png" alt="Drawing" style="width: 200px;"> | <img src="./Results/LP1.png" alt="Drawing" style="width: 200px;"> |
| Downscaling: 3 (Up to Base-size = 16 x 16) | Downscaling: 2 (Up to Base-size = 32 x 32) | Downscaling: 1 (Up to Base-size = 64 x 64) |

Only a set of 50 basis images was used for the solution using the Linear Programming formulation, in order to keep the computation tractable on personal computers. Consequently, when compared against the diverse set of 500 images used with the iterative MIP approach, the performance appears worse. However, the trend of better performance with more downscaling is apparent here as well.

Even with this caveat, there is one very interesting observation with the LP formulation: the places where basis images are downscaled to their smallest size typically indicate edges or color transitions within the image - giving clues towards one of the classic image processing problems: edge detection.

The two advantages of the LP approach as described here:

- Allows for varying basis sizes which could be a good proxy for improving visual perception. Our eye observes more details in areas of the image with more variations - akin to choosing finer basis images around variations in the image and coarser basis images around relatively non-varying parts of the image

- Allows the user to control the tradeoff between moving to a finer scale and improving accuracy VS moving to a coarser scale and improving recognizability of the basis images
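The tradeoff can be illustrated on a single 2 x 2 block of grid elements with a desired downscaling of 2: either one full-size tile covers all four elements with no penalty, or four downscaled tiles cover one element each and pay λ apiece. The sketch below (Julia 1.x) follows the structure of the objective described earlier; the function names and error values are hypothetical.

```julia
# Objective contribution of one full-size tile covering the 2 x 2 block:
# the summed per-element errors, with no downscaling penalty.
coarse_cost(coarse_errors) = sum(coarse_errors)

# Objective contribution of four downscaled tiles: summed errors plus λ per tile.
fine_cost(fine_errors, λ) = sum(fine_errors) + λ * length(fine_errors)

coarse_errors = [30.0, 30.0, 30.0, 30.0]   # hypothetical per-element errors
fine_errors   = [10.0, 10.0, 10.0, 10.0]

for λ in (0, 10, 25)
    choice = fine_cost(fine_errors, λ) < coarse_cost(coarse_errors) ? "fine" : "coarse"
    println("λ = ", λ, ": ", choice)
end
```

In this toy case the finer tiles win until λ exceeds the average error saving per downscaled tile, which here is 20.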

The main disadvantages of the LP approach as described here:

- The preprocessing time

- Memory required depends exponentially on downscaling, quadratically on the test image dimension and linearly on the basis set, so the problem quickly becomes intractable as the test image size or the desired accuracy increases

7. Conclusion¶

Some of the key conclusions are summarized below

- The quality of the mosaic is highly dependent on the diversity of colors in the basis set and the diversity of colors in the test image

- Visual image similarity is not always quantified by individual pixel-pixel matching

- Although the specific example used above shows extremely good results when the mean absolute difference is used as the similarity criterion, this does not always work for all images. For the sake of brevity, only the strong results have been shown above, but the user can try different combinations of scales, basis sets and test images using the interactive elements in Section 8

Some suggestions for future work:

- Solving a Square Jigsaw Puzzle

- If a similarity criterion can be established between every pair of image pieces, concepts similar to the ones used here can be used to arrange the pieces on a grid, based on the similarity criterion (the real image is not provided)

- The challenge is in making the approach scalable. Calculating the similarity criterion is of the order $O(n!)$, where $n$ is the number of pieces of the image

- Edge Detection in Images

- The LP formulation gives some good insight into how edge-detection can translate itself to an optimization problem

- By identifying regions of the image that maximize the color difference between adjacent pixels (or adjacent groups of pixels a.k.a grid elements) edges can be detected. A tradeoff parameter can be used to decide how sensitive the edge detection will be to color variations in the image

- The challenge is in coming up with the right formulation that connects information for all the three color channels (R, G, B) to identify the edge in the image.

- Improving the speed of the LP formulation as presented in this report

- The LP formulation works exceedingly fast (<2 minutes) if the downscaling factor is less than or equal to 3 (going up to 16x16) for a 960x640 image. However, for a downscaling setting of 4 (going up to 8x8), the runtime is ~0.5 hours. To address this difference, one LP solution can be found with lower downscaling and sub-sections of the image with higher error could be chosen for further optimization (with higher downscaling settings).

- This way, since the higher downscaling optimizations happen on a smaller image, the runtime and the memory requirements will be reduced and the computation could be tractable

- The challenge is in adaptively identifying sub-sections of the image that could be optimized further to improve overall performance given the tradeoff setting
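One simple starting point for the adaptive refinement idea is to flag the grid elements whose error after a coarse solve exceeds a threshold. This is only a sketch for Julia 1.x under stated assumptions: `needs_refinement`, the error matrix and the threshold are all hypothetical, and choosing the threshold well is exactly the open challenge mentioned above.

```julia
# Grid positions whose mosaic error exceeds `threshold`.
needs_refinement(errors, threshold) = findall(e -> e > threshold, errors)

errors = [1.0 9.0;
          2.0 8.0]        # hypothetical per-element errors from a coarse solve

flagged = needs_refinement(errors, 5.0)
println(length(flagged), " grid elements flagged for a finer solve")
```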

my_image_url = nothing

8.B. Show buttons for MIP Based Solution¶

using Interact, Reactive, Colors, PyPlot, DataStructures

test_images_dictionary = Dict(

"QR Code" => 1,

"Dog" => 2,

"Frog Shadow" => 3,

"Iron Man" => 4,

"Wisconsin State Capitol" => 5,

"User Uploaded Image" => 2,

)

if my_image_url != nothing

try

target_file_name = "./test_images/test6.jpg"

download(my_image_url, target_file_name)

my_image_file = load(target_file_name)

my_image_mat = convert_image_to_mat(my_image_file)

test_images_mat[6] = my_image_mat

test_images_dictionary["User Uploaded Image"] = 6

println("Uploaded user specified image")

catch x

error("Unable to download user specified image")

end

end

basis_sets_dictionary = Dict(

"Grayscale" => "./grayscale",

"CD Covers Set #1" => "./music0500",

"CD Covers Set #2" => "./music1000",

"CD Covers Set #3" => "./music1500",

"Avengers" => "./avengers",

)

basis_size_list = OrderedDict{AbstractString, Int}()

basis_size_list["64 x 64"] = 64

basis_size_list["32 x 32"] = 32

basis_size_list["16 x 16"] = 16

basis_size_list[" 8 x 8"] = 8

basis_size_list[" 4 x 4"] = 4

method_choice_dictionary = Dict(

"Brute Force Method" => 1,

"RGB Split Based Method" => 2,

"Histogram Method" => 3,

)

test_image_signal = @manipulate for test_image_choice=test_images_dictionary

test_image_choice

end

basis_set_signal = @manipulate for basis_set_choice=basis_sets_dictionary

basis_set_choice

end

basis_size_signal = @manipulate for basis_size_choice=basis_size_list

basis_size_choice

end

optimize_color_signal = @manipulate for optimize_color_choice=[true, false]

optimize_color_choice

end

method_choice_signal = @manipulate for method_choice=method_choice_dictionary

method_choice

end

; # suppress output

8.C. Get the MIP based solution based on your own settings¶

Run the cell below to get the solution

test_image = test_images_mat[ value(test_image_signal) ]

image_files = get_image_files( value(basis_set_signal) )

basis_size = convert(Int64, value(basis_size_signal))

optimize_color = convert(Bool, value(optimize_color_signal) )

optimization_method = convert(Int64, value(method_choice_signal) )

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded")

test_image_used = test_image

mosaic_image = similar(test_image)

if optimization_method == 1

mosaic_image = brute_force(test_image, images_mat, basis_size, optimize_color)

elseif optimization_method == 2

mosaic_image = split_rgb(test_image, images_mat, basis_size, optimize_color)

elseif optimization_method == 3

mosaic_image, test_image_used = histogram_optimization(test_image, images_mat, basis_size, optimize_color)

end

figure(1)

imshow( test_image )

title("Test Image")

figure(2)

imshow( mosaic_image )

title("Mosaic Image")

figure(3)

imshow( [test_image mosaic_image] )

title("Comparison")

8.D. Show buttons for LP based solution¶

using Interact, Reactive, Colors, PyPlot, DataStructures

test_images_dictionary = Dict(

"QR Code" => 1,

"Dog" => 2,

"Frog Shadow" => 3,

"Iron Man" => 4,

"Wisconsin State Capitol" => 5,

"User Uploaded Image" => 2,

)

if my_image_url != nothing

try

target_file_name = "./test_images/test6.jpg"

download(my_image_url, target_file_name)

my_image_file = load(target_file_name)

my_image_mat = convert_image_to_mat(my_image_file)

test_images_mat[6] = my_image_mat

test_images_dictionary["User Uploaded Image"] = 6

println("Uploaded user specified image")

catch x

error("Unable to download user specified image")

end

end

basis_sets_dictionary = Dict(

"Grayscale" => "./grayscale",

"CD Covers Reduced Set #1" => "./small_set",

"CD Covers Reduced Set #2" => "./small_set_2",

"Avengers" => "./avengers",

)

desired_downscaling_list = OrderedDict{AbstractString, Int}()

desired_downscaling_list["1 (No Downscaling Allowed)"] = 1

desired_downscaling_list["2 (Decrease Size by at most 1/2)"] = 2

desired_downscaling_list["3 (Decrease Size by at most 1/4)"] = 3

desired_downscaling_list["4 (Decrease Size by at most 1/8)"] = 4

desired_lambda_list = 0:5:100

test_image_signal = @manipulate for test_image_choice=test_images_dictionary

test_image_choice

end

basis_set_signal = @manipulate for basis_set_choice=basis_sets_dictionary

basis_set_choice

end

desired_downscaling_signal = @manipulate for desired_downscaling_choice=desired_downscaling_list

desired_downscaling_choice

end

desired_lambda_signal = @manipulate for desired_tradeoff_choice=desired_lambda_list

desired_tradeoff_choice

end

; # suppress output

8.E. Get the LP based solution based on your own settings¶

Run the cell below to get the solution

test_image = test_images_mat[ value(test_image_signal) ]

image_files = get_image_files( value(basis_set_signal) )

desired_downscaling = convert(Int64, value(desired_downscaling_signal))

λ = convert(Int64, value(desired_lambda_signal) )

images_mat = load_images_as_cellarray_mats(image_files)

println(length(images_mat), " basis image files loaded")

max_downscaling_height = floor( log2(size(images_mat[1],1)) )

max_downscaling_width = floor( log2(size(images_mat[1],2)) )

downscaling = round(Int, min(desired_downscaling, max_downscaling_height, max_downscaling_width))

test_image_used = test_image

mosaic_image = similar(test_image)

mosaic_image = lp_formulation(test_image, images_mat, downscaling, λ)

figure(1)

imshow( test_image )

title("Test Image")

figure(2)

imshow( mosaic_image )

title("Mosaic Image")

figure(3)

imshow( [test_image mosaic_image] )

title("Comparison")