%pdb 1

import megatest as mt

mt.ut.ipython_setup()

%matplotlib nbagg

from mtd.models import *

from pts.models import *

from sec.models import *

from matplotlib import pyplot

import alphamax as am

import datetime

import mtd

import numpy as np

import pandas as pd

import pts

import qgrid

import sec

import seaborn as sns

mt.async.IS_MULTIPROCESSING = False

CPU count = 8

Automatic pdb calling has been turned ON

security = Security.with_name('VIX Index')

hedge = Security.with_name('SPX Index')

security_prices = security.quote_df()['adj_mark_in_usd'].dropna()

hedge_prices = hedge.quote_df()['adj_mark_in_usd'].dropna()

beta_df, beta_figures = mt.analysis.kf_beta(
    security_prices = security_prices,
    hedge_prices = hedge_prices,
    beta_guess = -3.0,
    fold_count = 10,
    iterations = 10,
    with_analysis = True
)

for figure in beta_figures:
    figure.set_size_inches(12, 9)

OLS Regression Results

==============================================================================

Dep. Variable: y R-squared: 0.656

Model: OLS Adj. R-squared: 0.656

Method: Least Squares F-statistic: 1.131e+04

Date: Thu, 05 Nov 2015 Prob (F-statistic): 0.00

Time: 08:10:32 Log-Likelihood: 11030.

No. Observations: 5919 AIC: -2.206e+04

Df Residuals: 5917 BIC: -2.204e+04

Df Model: 1

Covariance Type: nonrobust

==============================================================================

coef std err t P>|t| [95.0% Conf. Int.]

------------------------------------------------------------------------------

const -0.0002 0.000 -0.401 0.688 -0.001 0.001

y_hat 0.9786 0.009 106.326 0.000 0.961 0.997

==============================================================================

Omnibus: 642.555 Durbin-Watson: 2.194

Prob(Omnibus): 0.000 Jarque-Bera (JB): 2582.744

Skew: 0.484 Prob(JB): 0.00

Kurtosis: 6.088 Cond. No. 18.9

==============================================================================

Warnings:

[1] Standard Errors assume that the covariance matrix of the errors is correctly specified.

normality: passed


security = Security.with_name('CMCSA US Equity')

hedge = Security.with_name('CMCSK US Equity')

security_prices = security.quote_df()['adj_mark_in_usd'].dropna()

hedge_prices = hedge.quote_df()['adj_mark_in_usd'].dropna()

pairs_df, pairs_figures = mt.analysis.kf_beta(
    security_prices = security_prices,
    hedge_prices = hedge_prices,
    beta_guess = 1.0,
    fold_count = 10,
    iterations = 10,
    with_analysis = True,
    return_space = False
)

OLS Regression Results

==============================================================================

Dep. Variable: y R-squared: 1.000

Model: OLS Adj. R-squared: 1.000

Method: Least Squares F-statistic: 1.426e+10

Date: Thu, 05 Nov 2015 Prob (F-statistic): 0.00

Time: 08:15:39 Log-Likelihood: 19935.

No. Observations: 6590 AIC: -3.987e+04

Df Residuals: 6588 BIC: -3.985e+04

Df Model: 1

Covariance Type: nonrobust

==============================================================================

coef std err t P>|t| [95.0% Conf. Int.]

------------------------------------------------------------------------------

const -2.025e-06 0.000 -0.009 0.993 -0.000 0.000

y_hat 1.0000 8.38e-06 1.19e+05 0.000 1.000 1.000

==============================================================================

Omnibus: 1804.775 Durbin-Watson: 2.238

Prob(Omnibus): 0.000 Jarque-Bera (JB): 193586.089

Skew: -0.034 Prob(JB): 0.00

Kurtosis: 29.552 Cond. No. 45.5

==============================================================================

Warnings:

[1] Standard Errors assume that the covariance matrix of the errors is correctly specified.

normality: passed

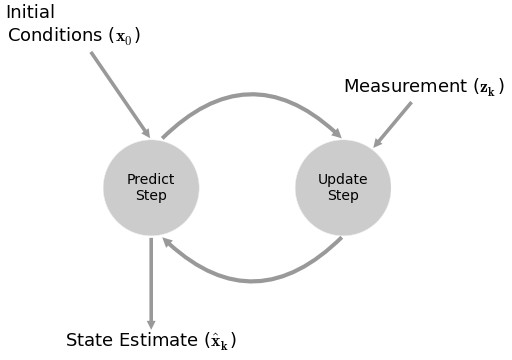

Kalman Filters¶

The fundamental idea is to blend somewhat inaccurate measurements with a somewhat inaccurate model of how the system behaves to get a filtered estimate that is better than either information source by itself.

Pros¶

- filters out noise

- predictive

- adaptive: estimates update as new data arrives

- does NOT require stationary data

- outputs a distribution for estimates $ (\mu, \sigma) $

- for a random walk + noise model, it can be shown to be equivalent to an EWMA (exponentially weighted moving average) [1]

- can handle missing data

- compared to other methods, found to be superior in calculating beta
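The EWMA equivalence in the list above can be checked numerically. The following is a minimal sketch in Python 3 with NumPy, not the notebook's own code: `local_level_filter`, the noise levels `q` and `r`, and the synthetic series are all assumptions chosen for illustration. For a scalar random walk + noise model the Kalman gain settles to a constant, after which each update has the same form as an EWMA step with that constant as the smoothing factor.

```python
import numpy as np

def local_level_filter(z, q, r, x0=0.0, p0=1.0):
    """Scalar Kalman filter for x[t+1] = x[t] + w, z[t] = x[t] + v."""
    x, p = x0, p0
    estimates, gains = [], []
    for obs in z:
        p_pred = p + q                 # predict: the random walk adds variance q
        k = p_pred / (p_pred + r)      # Kalman gain
        x = x + k * (obs - x)          # update: same form as an EWMA step
        p = (1.0 - k) * p_pred
        estimates.append(x)
        gains.append(k)
    return np.array(estimates), np.array(gains)

# synthetic random walk observed with noise (illustrative values only)
rng = np.random.default_rng(0)
z = np.cumsum(rng.normal(0.0, 0.1, 500)) + rng.normal(0.0, 1.0, 500)
q, r = 0.01, 1.0
estimates, gains = local_level_filter(z, q, r)

# steady-state gain from the scalar Riccati equation p^2 - q*p - q*r = 0
p_inf = (q + np.sqrt(q ** 2 + 4.0 * q * r)) / 2.0
k_inf = p_inf / (p_inf + r)

# run a plain EWMA with smoothing factor k_inf from step 200 onward
start = 200
ewma = estimates[start - 1]
ewma_path = []
for obs in z[start:]:
    ewma = ewma + k_inf * (obs - ewma)
    ewma_path.append(ewma)
max_gap = np.max(np.abs(np.array(ewma_path) - estimates[start:]))
```

Once the gain has converged, `max_gap` should be negligible, which is the sense in which the two filters coincide.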

Cons¶

- assumes linear dependencies (although there is a non-linear version)

- assumes Gaussian errors

- computational cost is cubic in the size of the state space

Equations¶

$$\begin{align*} x_{t+1} &= Ax_t + Bu_t + w_t \\ z_t &= Hx_t + v_t \end{align*}$$

$$\begin{align*} A &= \text{prediction matrix (transition_matrices)} \\ x &= \text{states} \\ B &= \text{control matrix (transition_offsets)} \\ w &= \text{noise (transition_covariance)} \sim N(0, Q) \\ z &= \text{observable readings} \\ H &= \text{mapping of} \; x \; \text{to observable (observation_matrices)} \\ v &= \text{observation noise, measurement noise (observation_covariance)} \sim N(0, R) \end{align*}$$

Best Practices¶

- normalize if possible

- focus on the ratio of $ Q $ to $ R $, which sets how much the filter trusts the model relative to the measurements
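To illustrate the point about the ratio of $ Q $ and $ R $, here is a minimal sketch in Python 3 with NumPy. The helper `kalman_step` and all numeric values are assumptions made for this example, and the control term $ Bu_t $ is left out for brevity. It runs one predict/update cycle of the equations above and shows that multiplying $ Q $, $ R $ and the initial covariance by the same constant leaves the Kalman gain unchanged.

```python
import numpy as np

def kalman_step(x, P, z, A, H, Q, R):
    """One predict/update cycle for x[t+1] = A x[t] + w, z[t] = H x[t] + v."""
    x_pred = A @ x                              # predicted state
    P_pred = A @ P @ A.T + Q                    # predicted state covariance
    S = H @ P_pred @ H.T + R                    # innovation covariance
    K = P_pred @ H.T @ np.linalg.inv(S)         # Kalman gain
    x_new = x_pred + K @ (z - H @ x_pred)       # corrected state
    P_new = (np.eye(len(x)) - K @ H) @ P_pred   # corrected covariance
    return x_new, P_new, K

# illustrative two-state system with a single observation
A = np.eye(2)
H = np.array([[0.5, 1.0]])
x0, P0 = np.zeros(2), np.eye(2)
Q, R = 1e-3 * np.eye(2), np.array([[1e-2]])
z = np.array([0.3])

_, _, K_base = kalman_step(x0, P0, z, A, H, Q, R)

# scale every covariance by the same constant: the gain does not move
c = 100.0
_, _, K_scaled = kalman_step(x0, c * P0, z, A, H, c * Q, c * R)
```

Because only relative sizes enter the gain, tuning usually comes down to choosing how large $ Q $ is compared with $ R $.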

Calculating $ Q $¶

- approximate [1]
  - calculate a variance estimate of the error in a controlled environment
    - if z doesn't change, calculate the variance estimate of z
    - if z does change, calculate the variance of the regression estimate of z
- guess
  - use some constant multiplied by the identity matrix
  - the higher the constant, the higher the noise
- MLE
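The "approximate" route in the list above can be sketched as follows. This is an illustration in Python 3 with NumPy on synthetic readings, not the notebook's procedure. Since the bullets are phrased in terms of z, the sketch assumes they describe estimating the variance of the observed readings; the names `z_static` and `z_moving` and the linear trend are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
true_sigma = 0.2   # noise level used to generate the synthetic readings

# Case 1: the underlying value is held constant,
# so the sample variance of z estimates the noise variance directly
z_static = 5.0 + rng.normal(0.0, true_sigma, 2000)
var_static = np.var(z_static, ddof=1)

# Case 2: the underlying value drifts (here linearly),
# so fit a regression and use the variance of its residuals instead
t = np.arange(2000, dtype=float)
z_moving = 5.0 + 0.01 * t + rng.normal(0.0, true_sigma, 2000)
slope, intercept = np.polyfit(t, z_moving, 1)
residuals = z_moving - (slope * t + intercept)
var_moving = np.sum(residuals ** 2) / (len(t) - 2)   # two fitted parameters
```

Both estimates should land near `true_sigma ** 2`; taking the raw variance of `z_moving` instead would fold the trend into the noise estimate.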

Example: Beta¶

beta_figures[0]


Equations¶

$$ \begin{bmatrix} \beta_{t+1} \\ \alpha_{t+1} \end{bmatrix} = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} \begin{bmatrix} \beta_{t} \\ \alpha_{t} \end{bmatrix} + \begin{bmatrix} 0 \\ 0 \end{bmatrix} + \begin{bmatrix} w_{\beta, t} \\ w_{\alpha, t} \end{bmatrix} $$

$$ r_{VIX, t} = \begin{bmatrix} r_{SPX, t} & 1 \end{bmatrix} \begin{bmatrix} \beta_{t} \\ \alpha_{t} \end{bmatrix} + v_t $$

Transition Parameters¶

# how does beta/alpha change over time? random walk!

transition_matrices = np.eye(2)

# what is the beta/alpha drift over time? random walk!

transition_offsets = [0, 0]

# GUESS how much does beta/alpha change due to unmodeled forces?

transition_covariance = delta / (1 - delta) * np.eye(2)

Observation Parameters¶

# VIX returns are 1-dimensional

n_dim_obs = 1

# how do we convert beta/alpha to VIX returns?
observation_matrices = np.expand_dims(
    a = np.vstack([[x], [np.ones(len(x))]]).T,
    axis = 1
)

# GUESS: how much do VIX returns deviate from the beta/alpha estimates?
observation_covariance = .01
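To show how the transition and observation parameters fit together, here is a minimal NumPy-only sketch (Python 3) of the same random-walk beta/alpha filter, run on synthetic returns. It is not the code behind `mt.analysis.kf_beta`, which is not shown in this notebook. The function name `kf_beta_sketch`, the default `delta` and `obs_var`, and the simulated data are all assumptions made for illustration.

```python
import numpy as np

def kf_beta_sketch(x, y, delta=1e-4, obs_var=1e-2):
    """Filter [beta, alpha] as a random walk observed via y = beta * x + alpha + v."""
    state = np.zeros(2)                       # initial [beta, alpha] guess
    P = np.eye(2)                             # initial state covariance
    Q = delta / (1.0 - delta) * np.eye(2)     # plays the role of transition_covariance
    out = np.empty((len(x), 2))
    for t in range(len(x)):
        H = np.array([[x[t], 1.0]])           # observation matrix for time t
        P = P + Q                             # predict (transition matrix is identity)
        S = H @ P @ H.T + obs_var             # innovation variance, shape (1, 1)
        K = P @ H.T / S                       # Kalman gain, shape (2, 1)
        state = state + (K * (y[t] - H @ state)).ravel()
        P = P - K @ H @ P                     # posterior covariance
        out[t] = state
    return out

# synthetic data with a constant true beta (illustrative values only)
rng = np.random.default_rng(2)
n = 3000
x = rng.normal(0.0, 0.01, n)                    # stand-in for hedge returns
true_beta = -3.0
y = true_beta * x + rng.normal(0.0, 0.005, n)   # stand-in for security returns
states = kf_beta_sketch(x, y, delta=1e-4, obs_var=0.005 ** 2)
beta_tail = states[-500:, 0].mean()
```

With these settings `beta_tail` should sit close to `true_beta`; a larger `delta` lets beta move faster at the cost of noisier estimates.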

Calculate Q and R¶

- split into k folds
- fit $ Q $ and $ R $ using pykalman's em on the previous folds

    kf.em( X = y_training, n_iter = 10, em_vars = ['transition_covariance', 'observation_covariance'] )

- evaluate using MSE on the current fold
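The fold scheme above can be sketched generically. This example (Python 3, NumPy) assumes that "previous folds" means all earlier folds, and it swaps the EM fit for a trivial stand-in model so that it runs without pykalman. `walk_forward_mse`, `fit` and `predict` are hypothetical names, not part of any library.

```python
import numpy as np

def walk_forward_mse(y, fold_count, fit, predict):
    """Fit on all earlier folds, then score by MSE on the current fold."""
    folds = np.array_split(np.arange(len(y)), fold_count)
    scores = []
    for i in range(1, fold_count):
        train_idx = np.concatenate(folds[:i])      # every fold before fold i
        test_idx = folds[i]                        # the current fold
        params = fit(y[train_idx])                 # where the EM fit would go
        errors = y[test_idx] - predict(params, len(test_idx))
        scores.append(np.mean(errors ** 2))
    return np.array(scores)

# stand-in model: "fit" is the training mean, "predict" repeats it
rng = np.random.default_rng(3)
y = 1.0 + rng.normal(0.0, 0.5, 1000)
scores = walk_forward_mse(
    y,
    fold_count=10,
    fit=lambda train: train.mean(),
    predict=lambda mean, n: np.full(n, mean),
)
```

The first fold is used only for training, so ten folds yield nine out-of-sample scores.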

beta_figures[1]

beta_df.dropna(inplace=True)

# in-sample residuals: each beta is applied to the same period's returns
resid_kf = beta_df['beta'] * beta_df['x'] - beta_df['y']

resid_30 = beta_df['beta_30'] * beta_df['x'] - beta_df['y']

resid_250 = beta_df['beta_250'] * beta_df['x'] - beta_df['y']

resid_500 = beta_df['beta_500'] * beta_df['x'] - beta_df['y']

print np.sum(resid_kf**2) / len(resid_kf)

print np.sum(resid_30**2) / len(resid_30)

print np.sum(resid_250**2) / len(resid_250)

print np.sum(resid_500**2) / len(resid_500)

0.00145648345444
0.00136178536756
0.00162842090621
0.00176787087888

# one-step-ahead residuals: each beta is lagged one period, so only past information is used
resid_kf = beta_df['beta'].shift(1) * beta_df['x'] - beta_df['y']

resid_30 = beta_df['beta_30'].shift(1) * beta_df['x'] - beta_df['y']

resid_250 = beta_df['beta_250'].shift(1) * beta_df['x'] - beta_df['y']

resid_500 = beta_df['beta_500'].shift(1) * beta_df['x'] - beta_df['y']

print np.sum(resid_kf**2) / len(resid_kf)

print np.sum(resid_30**2) / len(resid_30)

print np.sum(resid_250**2) / len(resid_250)

print np.sum(resid_500**2) / len(resid_500)

0.00157659837059
0.00159214078837
0.0016753622042
0.0017999663961
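The two comparisons above differ only in whether beta is lagged before it is applied. The sketch below (Python 3, NumPy) reproduces that calculation on synthetic data. It assumes that columns such as `beta_30` are rolling-window regression betas, which the notebook does not state. `rolling_beta` and `mse` are hypothetical helpers.

```python
import numpy as np

def rolling_beta(x, y, window):
    """Rolling regression slope of y on x; NaN until a full window is available."""
    beta = np.full(len(x), np.nan)
    for t in range(window - 1, len(x)):
        xs = x[t - window + 1:t + 1]
        ys = y[t - window + 1:t + 1]
        beta[t] = np.cov(xs, ys)[0, 1] / np.var(xs, ddof=1)
    return beta

def mse(beta, x, y, lag=0):
    """Mean squared residual of beta * x - y, with beta optionally lagged."""
    if lag > 0:
        beta = np.concatenate([np.full(lag, np.nan), beta[:-lag]])
    resid = beta * x - y
    return np.nanmean(resid ** 2)      # skip periods without a beta

# synthetic returns with a constant true beta (illustrative values only)
rng = np.random.default_rng(4)
x = rng.normal(0.0, 0.01, 1000)
y = -3.0 * x + rng.normal(0.0, 0.005, 1000)

beta_30 = rolling_beta(x, y, 30)
in_sample = mse(beta_30, x, y)             # beta at t applied to returns at t
one_step_ahead = mse(beta_30, x, y, lag=1) # beta at t-1 applied to returns at t
```

The lagged version is the fairer comparison for hedging, because the beta used at each step was known before that period's returns arrived.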

beta_figures[2]

Example: Pairs Trading¶

$$ \begin{bmatrix} \beta_{t+1} \\ \alpha_{t+1} \end{bmatrix} = \begin{bmatrix} 1 & 0 \\ 0 & 1 \end{bmatrix} \begin{bmatrix} \beta_{t} \\ \alpha_{t} \end{bmatrix} + \begin{bmatrix} 0 \\ 0 \end{bmatrix} + \begin{bmatrix} w_{\beta, t} \\ w_{\alpha, t} \end{bmatrix} $$

$$ p_{B, t} = \begin{bmatrix} p_{A, t} & 1 \end{bmatrix} \begin{bmatrix} \beta_{t} \\ \alpha_{t} \end{bmatrix} + v_t $$

pairs_figures[0]

pairs_figures[1]

pairs_figures[2]

Equations¶

$$ \begin{align*} x_{t+1} & = f(x_t, w_t) \\ z_t & = g(x_t, v_t) \end{align*} $$

f = prediction function (transition_functions)

x = states

w = noise ~ N(0, Q) (transition_covariance)

z = observable readings

g = mapping of x to observable (observation_functions)

v = observation noise, measurement noise ~ N(0, R) (observation_covariance)

Example: Stochastic Volatility¶

$$ r_t = \mu + \sqrt{\nu_t} \, w_t $$

$$ \nu_{t+1} = \nu_{t} + \theta \, (\omega-\nu_t) + \xi \, \nu_t^{\kappa} \, b_t $$

where $ w_t $ and $ b_t $ have correlation $ \rho $.

For Heston, $ \kappa = 0.5 $. For GARCH, $ \kappa = 1.0 $. For 3/2, $ \kappa = 1.5 $.

Let:

$$ z_t = \frac{1}{\sqrt{1 - \rho^{2}}} (b_t - \rho w_t)$$

$ z_t $ and $ w_t $ are not correlated!
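A short check of that claim, assuming $ w_t $ and $ b_t $ each have zero mean and unit variance (the text does not say so, but describing $ \rho $ as their correlation suggests it):

$$ \begin{align*} \operatorname{Cov}(z_t, w_t) &= \frac{1}{\sqrt{1 - \rho^{2}}} \left[ \operatorname{Cov}(b_t, w_t) - \rho \operatorname{Var}(w_t) \right] = \frac{\rho - \rho}{\sqrt{1 - \rho^{2}}} = 0 \\ \operatorname{Var}(z_t) &= \frac{\operatorname{Var}(b_t) - 2 \rho \operatorname{Cov}(b_t, w_t) + \rho^{2} \operatorname{Var}(w_t)}{1 - \rho^{2}} = \frac{1 - 2\rho^{2} + \rho^{2}}{1 - \rho^{2}} = 1 \end{align*} $$

Under those assumptions $ z_t $ is itself a unit-variance noise term that is uncorrelated with $ w_t $, so it can serve as the transition noise.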

$$ w_t = \frac{ r_t - \mu }{ \sqrt{\nu_t} } $$

$$ b_t = \sqrt{1 - \rho^{2}} \, z_t + \rho w_t $$

$$ b_t = \sqrt{1 - \rho^{2}} \, z_t + \rho \frac{ r_t - \mu }{ \sqrt{\nu_t} } $$

Finally...

$$ \nu_{t+1} = \nu_{t} + \theta \, (\omega-\nu_t) + \xi \, \nu_t^{\kappa} \left [ \sqrt{1 - \rho^{2}} \, z_t + \rho \frac{ r_t - \mu }{ \sqrt{\nu_t} } \right ] $$

$$ r_t = \mu + \sqrt{\nu_t} w_t $$

import math
from functools import partial

def sv_kf_functions(mu, theta, omega, xi, k, rho, log_returns):
    # transition: update the variance using b_t, rebuilt from z_t (transition_noise) and the observed return
    def f(current_state, transition_noise, log_return):
        b_t = math.sqrt(1 - rho ** 2) * transition_noise + rho * (log_return - mu) / math.sqrt(current_state)
        return current_state + theta * (omega - current_state) + xi * current_state ** k * b_t

    # observation: the return implied by the current variance
    def g(current_state, observation_noise):
        return mu + math.sqrt(current_state) * observation_noise

    # one transition function per time step, each bound to that step's return
    return [partial(f, log_return=log_return) for log_return in log_returns], g

About Me¶

I'm a trader/quant.

I started blogging on Alphamaximus

I'm also a co-founder of Bonjournal.

Follow me on Twitter.