Hybrid computing using a neural network with dynamic external memory¶

- 싸이그래머 / HAI : 파트 1 - 딥마인드 논문리뷰 [1]

- 김무성

Contents¶

- System overview

- Interaction between the heads and the memory

- Synthetic question answering experiments

- Graph experiments

- Block puzzle experiments

참고¶

- [2] Differentiable neural computers - https://deepmind.com/blog/differentiable-neural-computers/

- [3] Deep Learning for Computer Vision: Attention Models (UPC 2016) - http://www.slideshare.net/xavigiro/deep-learning-for-computer-vision-attention-models-upc-2016

- [4] Neural Turing Machines(Ilya Kuzovkin's SlideShare) - http://www.slideshare.net/iljakuzovkin/neural-turing-machines

- [5] Neural Turing Machines(SlideShare) - http://www.slideshare.net/yuzurukato/neural-turing-machines-43179669

Artificial neural networks are remarkably adept at sensory processing, sequence learning and reinforcement learning, but are limited in their ability to represent variables and data structures and to store data over long timescales, owing to the lack of an external memory.

Here we introduce a machine learning model called a differentiable neural computer (DNC),

- which consists of a neural network that can read from and write to an external memory matrix, analogous to the random-access memory in a conventional computer.

- Like a conventional computer, it can use its memory to represent and manipulate complex data structures, but, like a neural network, it can learn to do so from data.

We aim to combine the advantages of neural and computational processing by providing a neural network with read–write access to external memory.

- The access is narrowly focused, minimizing interference among memoranda and enabling long-term storage.

- The whole system is differentiable, and can therefore be trained end-to-end with gradient descent,

- allowing the network to learn how to

- operate and

- organize

- the memory in a goal-directed manner.

- allowing the network to learn how to

System overview¶

A DNC is a neural network coupled to an external memory matrix.

- If the memory can be thought of as the DNC’s RAM,

- then the network, referred to as the ‘controller’,

- is a differentiable CPU

- whose operations are learned

- with gradient descent.

- whose operations are learned

- is a differentiable CPU

- An earlier form of DNC, the neural Turing machine, had a similar structure, but more limited memory access methods (see Methods for further discussion).

memory, attention, locaton, weightings¶

- Whereas conventional computers use unique addresses to access memory contents, a DNC uses

- differentiable attention mechanisms

- to define distributions

- over the N rows, or ‘locations’,

- in the N × W memory matrix M.

- over the N rows, or ‘locations’,

- These distributions, which we call weightings, represent

- the degree to

- which each location is involved

- in a read or write operation.

- which each location is involved

- the degree to

read¶

The read vector r returned by a read weighting $w^r$ over memory M is a weighted sum over the memory locations:

write¶

Similarly, the write operation uses a write weighting $w^w$ to first erase with an erase vector $e$, then add a write vector $v$:

$M[i,j]$ ← $M[i,j](1 − w^w[i]e[j]) + w^w[i]v[j]$.

read and write heads¶

The functional units that determine and apply the weightings are called read and write heads.

Interaction between the heads and the memory¶

The heads use three distinct forms of differentiable attention.

- The first is content lookup,

- in which a key vector

- emitted by the controller

- is compared to the content of

- each location in memory

- according to a similarity measure

- (here, cosine similarity).

- according to a similarity measure

- each location in memory

- The similarity scores determine a weighting that can be used by the read heads for associative recall1 or by the write head to modify an existing vector in memory.

- Importantly, a key that only partially matches the content of a memory location can still be used to attend strongly to that location.

- In general, key-value retrieval provides a rich mechanism for navigating associative data structures in the external memory, because the content of one address can effectively encode references to other addresses.

- in which a key vector

- A second attention mechanism records transitions

- between consecutively written locations

- in an N × N temporal link matrix L.

- L[i, j] is close to 1 if i was the next location written after j, and is close to 0 otherwise.

- For any weighting w, the operation Lw smoothly shifts the focus forwards to the locations written after those emphasized in w, whereas $L^⊤w$ shifts the focus backwards.

- This gives a DNC the native ability to recover sequences in the order in which it wrote them, even when consecutive writes did not occur in adjacent time-steps.

- between consecutively written locations

- The third form of attention allocates memory for writing.

- The ‘usage’ of each location is represented as a number between 0 and 1, and a weighting that picks out unused locations is delivered to the write head.

- As well as automatically increasing with each write to a location, usage can be decreased after each read.

- This allows the controller to reallocate memory that is no longer required.

- The allocation mechanism is independent of the size and contents of the memory, meaning that DNCs can be trained to solve a task using one size of memory and later upgraded to a larger memory without retraining

mammalian hippocampus¶

- The design of the attention mechanisms was motivated largely by computational considerations.

- Content lookup enables the formation of associative data structures;

- temporal links enable sequential retrieval of input sequences;

- and allocation provides the write head with unused locations.

- However, there are interesting parallels between the memory mechanisms of a DNC and the functional capabilities of the mammalian hippocampus.

Synthetic question answering experiments¶

Our first experiments investigated the capacity of the DNC to perform question answering.

참고¶

- [6] Playground for bAbI tasks - https://yerevann.github.io/2016/02/23/playground-for-babi-tasks/

- [7] Dynamic Memory Networks by YerevanNN Web Demo - http://yerevann.com/dmn-ui/#/

- [8] Curriculum Learning - http://videolectures.net/icml09_bengio_cl/

bAbI Task¶

- To compare DNCs to other neural network archi- tectures, we considered the bAbI dataset26, which includes 20 types of synthetically generated questions designed to mimic aspects of textual reasoning.

- We found that a single DNC, jointly trained on all 20 question types with 10,000 instances each, was able to achieve a mean test error rate of 3.8% with task failure (defined as >5% error) on 2 types of questions, compared to 7.5% mean error and 6 failed tasks for the best previous jointly trained result21.

- We also found that DNCs performed much better than both long short-term memory (LSTM; at present the benchmark neural network for most sequence processing tasks) and the neural Turing machine16 (see Extended Data Table 1 for details).

- Unlike previous results on this dataset, the inputs to our model were single word tokens without any preprocessing or sentence-level features (see Methods for details).

Graph experiments¶

- Although bAbI is presented in natural language, each declarative sen- tence involves a limited vocabulary and is generated from a simple triple containing an actor, an action and a set of arguments. Such sentences could easily be rendered in graphical form.

- In this sense, the propositional knowledge in many of the bAbI tasks is equivalent to a set of constraints on an underlying graph structure.

We therefore turn next to a set of synthetic reasoning experiments on randomly generated graphs.

- Unlike bAbI, the edges in our graphs were presented explicitly, with each input vector specifying a triple consisting of two node labels and an edge label.

- We generated training graphs with random labelling and connectivity and defined three kinds of query:

- ‘traversal’,

- ‘shortest path’ and

- ‘inference’

After training with curriculum learning using graphs and queries with gradually increasing complexity, the networks were tested (with no retraining) on two specific graphs as a test of generalization to realistic data:

- a symbolic map of the London Underground and

- an invented family tree.

traversal task¶

For the traversal task (Fig. 2b), the network was instructed to report the node arrived at after leaving a start node and following a path of edges generated by a random walk.

shortest-path task¶

- For the shortest-path task (Fig. 2b), a random start and end node were given as the query, and the net- work was asked to return a sequence of triples corresponding to a minimum-length path between them.

- Because we considered paths of up to length five, this is a harder version of the path-finding task in the bAbI dataset, which has a maximum length of two.

inference task¶

- For the inference task (Fig. 2c), we predefined 400 ‘relation’ labels that stood as abbreviations for sequences of up to five connected edge labels.

- A query consisted of an incomplete triple specifying a start node and a relation label, and the required answer was the final node after following the relation sequence.

- Because the relation sequences were never presented to the network, they had to be inferred from the queries and targets.

result¶

- As a benchmark we again compared DNCs with LSTM.

- In this case, the best LSTM network we found in an extensive hyper-parameter search failed to complete the first level of its training curriculum of even the easiest task (traversal), reaching an average of only 37% accuracy after almost two million training examples;

- DNCs reached an average of 98.8% accuracy on the final lesson of the same curriculum after around one million training examples.

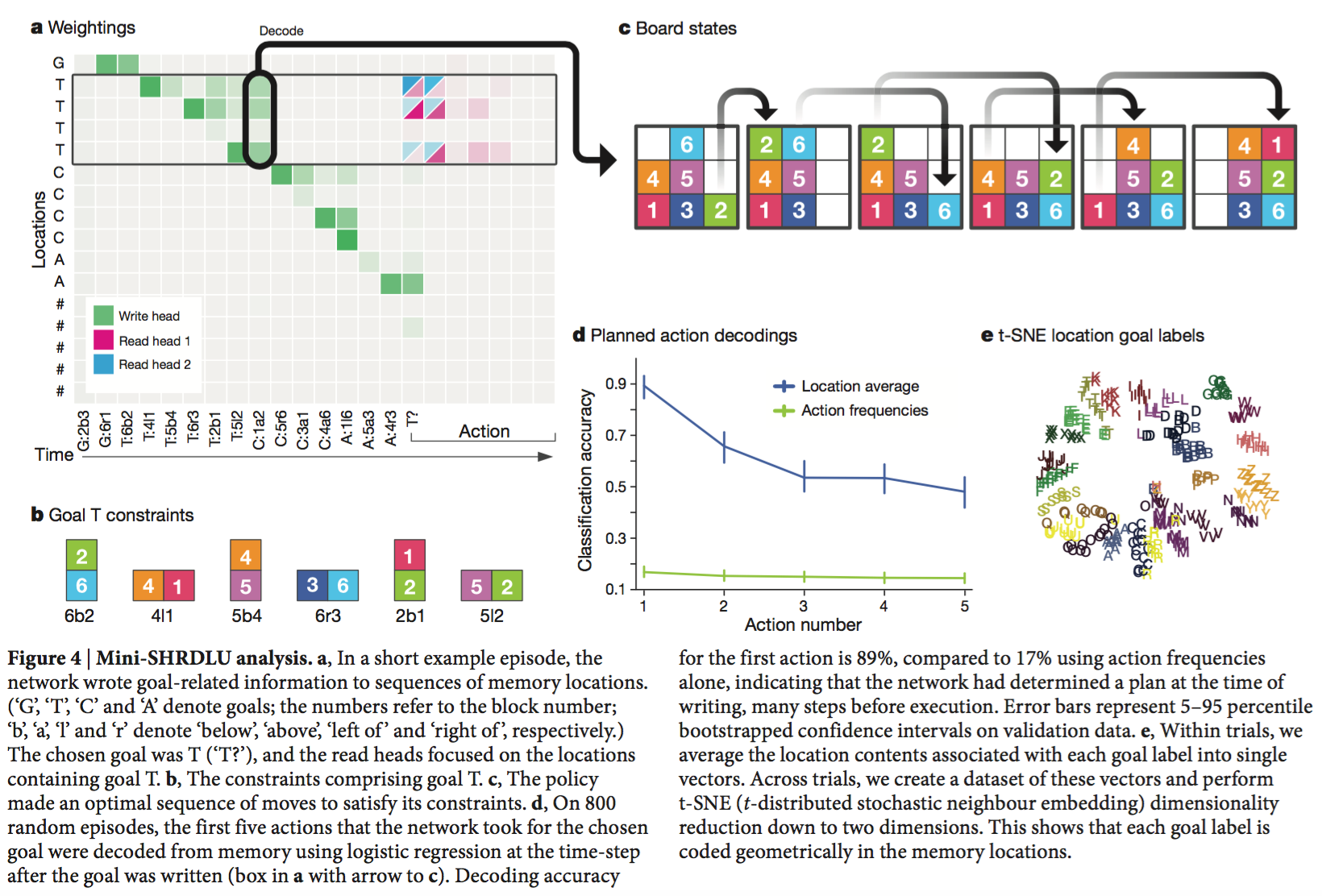

Block puzzle experiments¶

Discussion¶

참고자료¶

- [1] Hybrid computing using a neural network with dynamic external memory - http://www.nature.com/nature/journal/vaop/ncurrent/full/nature20101.html

- [2] Differentiable neural computers - https://deepmind.com/blog/differentiable-neural-computers/

- [3] Deep Learning for Computer Vision: Attention Models (UPC 2016) - http://www.slideshare.net/xavigiro/deep-learning-for-computer-vision-attention-models-upc-2016

- [4] Neural Turing Machines(Ilya Kuzovkin's SlideShare) - http://www.slideshare.net/iljakuzovkin/neural-turing-machines

- [5] Neural Turing Machines(SlideShare) - http://www.slideshare.net/yuzurukato/neural-turing-machines-43179669

- [6] Playground for bAbI tasks - https://yerevann.github.io/2016/02/23/playground-for-babi-tasks/

- [7] Dynamic Memory Networks by YerevanNN Web Demo - http://yerevann.com/dmn-ui/#/

- [8] Curriculum Learning - http://videolectures.net/icml09_bengio_cl/