English Deep Writer

In this notebook I build a deep neural network that identifies writers from their handwriting style. The data used to build the model comes from the IAM Handwriting Database. The model is trained on handwriting from 50 different writers. Each writer has written multiple paragraphs, and sentences have been extracted from those paragraphs.

Model

I built a multi-layer CNN in Keras to classify writers. The inspiration for this work comes from the paper by Linjie Xing and Yu Qiao referenced below, in which they successfully classified writers of English and Chinese text. I have taken some key concepts from their paper and modified the neural network for this task.

Results

The model's performance is measured on a test set that contains writing from the same 50 writers. The model was not exposed to this data during training or validation. Classification accuracy on the test set: *94.0%*

Resources

- IAM handwriting database

- [Deep Writer Paper](https://arxiv.org/abs/1606.06472)

# Imports

from __future__ import division

import numpy as np

import os

import glob

from PIL import Image

from random import *

from keras.utils import to_categorical

from sklearn.preprocessing import LabelEncoder

import matplotlib.pyplot as plt

import matplotlib.image as mpimg

%matplotlib inline

from keras.models import Sequential

from keras.layers import Dense, Dropout, Flatten, Lambda, ELU, Activation, BatchNormalization

from keras.layers.convolutional import Convolution2D, Cropping2D, ZeroPadding2D, MaxPooling2D

from keras.optimizers import SGD, Adam, RMSprop

# Create sentence writer mapping

#Dictionary with form and writer mapping

d = {}

with open('forms_for_parsing.txt') as f:
    for line in f:
        # The first field is the form id, the second is the writer id
        fields = line.split(' ')
        key = fields[0]
        writer = fields[1]
        d[key] = writer

# Create array of file names and corresponding target writer names

tmp = []
target_list = []
path_to_files = os.path.join('data_subset', '*')

for filename in sorted(glob.glob(path_to_files)):
    image_name = os.path.basename(filename)
    file, ext = os.path.splitext(image_name)
    parts = file.split('-')
    # The form id is the first two dash-separated parts, e.g. 'a01-000u'
    form = parts[0] + '-' + parts[1]
    # Keep a file only when its form has a known writer,
    # so that file names and targets stay aligned
    if form in d:
        tmp.append(filename)
        target_list.append(str(d[form]))

img_files = np.asarray(tmp)
img_targets = np.asarray(target_list)
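The filename-to-writer lookup above can be tried in isolation. This is a minimal sketch, assuming each line of `forms_for_parsing.txt` starts with a form id followed by a writer id; the sample line and the helper name `form_id_from_filename` are hypothetical and only serve the illustration.

```python
import os

def form_id_from_filename(filename):
    """Return the form id (e.g. 'a01-000u') for a sentence image path."""
    stem, _ext = os.path.splitext(os.path.basename(filename))
    parts = stem.split('-')
    return parts[0] + '-' + parts[1]

# Hypothetical line; only the first two fields are used by the notebook.
sample_line = "a01-000u 000 remaining fields are ignored"
fields = sample_line.split(' ')
form_to_writer = {fields[0]: fields[1]}

form = form_id_from_filename('data_subset/a01-000u-s00-00.png')
writer = form_to_writer.get(form)  # None if the form is unknown
print(form, writer)
```

Using `dict.get` (or `form in d`) avoids scanning every key of the dictionary for each file.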

# Visualizing the data

for filename in img_files[:3]:
    img = mpimg.imread(filename)
    plt.figure(figsize=(10, 10))
    plt.imshow(img, cmap='gray')

# Label Encode writer names for one hot encoding later

encoder = LabelEncoder()

encoder.fit(img_targets)

encoded_Y = encoder.transform(img_targets)

print(img_files[:5], img_targets[:5], encoded_Y[:5])

(array(['data_subset/a01-000u-s00-00.png',
       'data_subset/a01-000u-s00-01.png',
       'data_subset/a01-000u-s00-02.png',
       'data_subset/a01-000u-s00-03.png', 'data_subset/a01-000u-s01-00.png'],
      dtype='|S34'), array(['000', '000', '000', '000', '000'],
      dtype='|S3'), array([0, 0, 0, 0, 0]))
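The label encoding above, together with the one-hot encoding applied later in the generator, can be sketched with NumPy alone. The writer ids below are hypothetical, and `np.unique` is used here as a stand-in for `LabelEncoder`, which also assigns integer codes in sorted class order.

```python
import numpy as np

# Hypothetical writer ids for four sentence images
writer_ids = np.array(['000', '000', '012', '007'])

# np.unique sorts the distinct labels and returns, for each input label,
# its index in that sorted list (the integer class code).
classes, encoded = np.unique(writer_ids, return_inverse=True)

# One-hot encode by picking rows of an identity matrix.
one_hot = np.eye(len(classes), dtype='float32')[encoded]

print(classes, encoded)
print(one_hot)
```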

# Split into train, validation and test sets in a ratio of roughly 4:1:1
from sklearn.model_selection import train_test_split

train_files, rem_files, train_targets, rem_targets = train_test_split(
    img_files, encoded_Y, train_size=0.66, random_state=52, shuffle=True)

validation_files, test_files, validation_targets, test_targets = train_test_split(
    rem_files, rem_targets, train_size=0.5, random_state=22, shuffle=True)

print(train_files.shape, validation_files.shape, test_files.shape)

print(train_targets.shape, validation_targets.shape, test_targets.shape)

((3274,), (844,), (844,))
((3274,), (844,), (844,))
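As a quick check of the "roughly 4:1:1" claim, the printed split sizes can be turned into fractions. This is only arithmetic on the numbers shown in the output above.

```python
# Split sizes taken from the printed shapes
n_train, n_val, n_test = 3274, 844, 844
total = n_train + n_val + n_test

fractions = [round(n / total, 2) for n in (n_train, n_val, n_test)]
train_to_test = round(n_train / n_test, 2)

print(fractions)       # share of each split
print(train_to_test)   # how many training sentences per test sentence
```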

Input to the model

As suggested in the paper, the inputs to the model are not whole sentences but random patches cropped from each sentence. This was done by:

- Resizing each sentence so that the new height is 113 pixels and the new width preserves the original aspect ratio. As stated in the paper, distorting the image by changing the aspect ratio resulted in a big drop in model performance

- Randomly cropping 113x113 patches from the resized image
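The two steps above can be sketched without loading any image. The helper names `resized_width` and `random_crop_boxes` are hypothetical, and the 1000x100 image size is made up for illustration; the notebook's own generator performs the same arithmetic inline.

```python
import random

PATCH = 113  # patch height and width in pixels, as in the notebook

def resized_width(cur_width, cur_height, target_height=PATCH):
    """Width after scaling the image to target_height at a fixed aspect ratio."""
    return int(cur_width * target_height / cur_height)

def random_crop_boxes(width, factor=0.1, patch=PATCH, rng=random):
    """Pick a random fraction of the possible patch positions along the x axis."""
    starts = range(0, max(width - patch, 0))
    pick_num = int(len(starts) * factor)
    chosen = rng.sample(starts, pick_num)
    # Boxes follow PIL's (left, upper, right, lower) crop convention
    return [(x, 0, x + patch, patch) for x in chosen]

# Hypothetical 1000x100 sentence image
new_width = resized_width(1000, 100)
boxes = random_crop_boxes(new_width, factor=0.1, rng=random.Random(0))
print(new_width, len(boxes))
```

Each box can be passed directly to `Image.crop` on the resized image.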

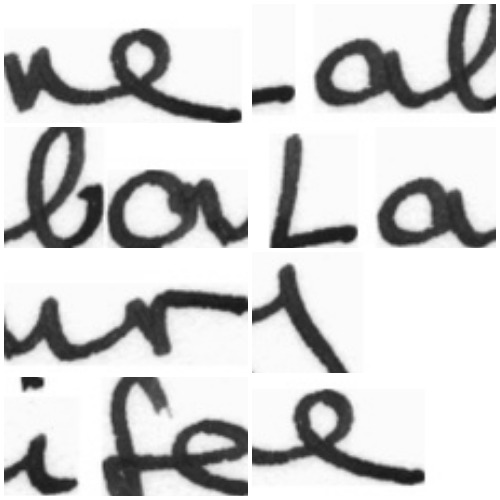

The picture below shows random 113x113 patches from one of the sentences, combined in one frame.

Collage of 8 113x113 patches:

# Generator function for randomly cropping 113x113 patches from each sentence image

batch_size = 16

num_classes = 50

# Adapted from a train generator shared in class; random cropping acts as the augmentation
def generate_data(samples, target_files, batch_size=batch_size, factor=0.1):
    # Imported locally so it does not clash with random.shuffle from the wildcard import
    from sklearn.utils import shuffle
    num_samples = len(samples)
    while 1:  # Loop forever so the generator never terminates
        for offset in range(0, num_samples, batch_size):
            batch_samples = samples[offset:offset+batch_size]
            batch_targets = target_files[offset:offset+batch_size]

            images = []
            targets = []
            for i in range(len(batch_samples)):
                batch_sample = batch_samples[i]
                batch_target = batch_targets[i]
                im = Image.open(batch_sample)
                cur_width = im.size[0]
                cur_height = im.size[1]

                # Resize so height = 113 while keeping the aspect ratio
                height_fac = 113 / cur_height
                new_width = int(cur_width * height_fac)
                size = new_width, 113
                # Image.LANCZOS is the same filter as the older Image.ANTIALIAS alias
                imresize = im.resize(size, Image.LANCZOS)
                now_width = imresize.size[0]
                now_height = imresize.size[1]

                # Candidate x start points for 113x113 crops run from 0 to width - 113
                avail_x_points = list(range(0, now_width - 113))

                # Keep a random fraction (factor) of the candidate crops
                pick_num = int(len(avail_x_points) * factor)
                random_startx = sample(avail_x_points, pick_num)

                for start in random_startx:
                    imcrop = imresize.crop((start, 0, start+113, 113))
                    images.append(np.asarray(imcrop))
                    targets.append(batch_target)

            X_train = np.array(images)
            y_train = np.array(targets)

            # Reshape X_train to (n, 113, 113, 1) for feeding into the network
            X_train = X_train.reshape(X_train.shape[0], 113, 113, 1)

            # Convert to float and normalize to [0, 1]
            X_train = X_train.astype('float32')
            X_train /= 255

            # One-hot encode y
            y_train = to_categorical(y_train, num_classes)

            yield shuffle(X_train, y_train)

# Generate data for training and validation

train_generator = generate_data(train_files, train_targets, batch_size=batch_size, factor = 0.3)

validation_generator = generate_data(validation_files, validation_targets, batch_size=batch_size, factor = 0.3)

test_generator = generate_data(test_files, test_targets, batch_size=batch_size, factor = 0.1)

# Build a neural network in Keras

# Function to resize image to 56x56
def resize_image(image):
    import tensorflow as tf
    return tf.image.resize_images(image, [56, 56])

# Input dimensions: 113x113 single-channel patches
row, col, ch = 113, 113, 1

model = Sequential()

model.add(ZeroPadding2D((1, 1), input_shape=(row, col, ch)))

# Resize data within the neural network

model.add(Lambda(resize_image)) #resize images to allow for easy computation

# CNN model - Building the model suggested in paper

model.add(Convolution2D(filters= 32, kernel_size =(5,5), strides= (2,2), padding='same', name='conv1')) #96

model.add(Activation('relu'))

model.add(MaxPooling2D(pool_size=(2,2),strides=(2,2), name='pool1'))

model.add(Convolution2D(filters= 64, kernel_size =(3,3), strides= (1,1), padding='same', name='conv2')) #256

model.add(Activation('relu'))

model.add(MaxPooling2D(pool_size=(2,2),strides=(2,2), name='pool2'))

model.add(Convolution2D(filters= 128, kernel_size =(3,3), strides= (1,1), padding='same', name='conv3')) #256

model.add(Activation('relu'))

model.add(MaxPooling2D(pool_size=(2,2),strides=(2,2), name='pool3'))

model.add(Flatten())

model.add(Dropout(0.5))

model.add(Dense(512, name='dense1')) #1024

# model.add(BatchNormalization())

model.add(Activation('relu'))

model.add(Dropout(0.5))

model.add(Dense(256, name='dense2')) #1024

model.add(Activation('relu'))

model.add(Dropout(0.5))

model.add(Dense(num_classes,name='output'))

model.add(Activation('softmax')) #softmax since output is within 50 classes

model.compile(loss='categorical_crossentropy', optimizer=Adam(), metrics=['accuracy'])

print(model.summary())

_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
zero_padding2d_2 (ZeroPaddin (None, 115, 115, 1)       0
_________________________________________________________________
lambda_2 (Lambda)            (None, 56, 56, 1)         0
_________________________________________________________________
conv1 (Conv2D)               (None, 28, 28, 32)        832
_________________________________________________________________
activation_7 (Activation)    (None, 28, 28, 32)        0
_________________________________________________________________
pool1 (MaxPooling2D)         (None, 14, 14, 32)        0
_________________________________________________________________
conv2 (Conv2D)               (None, 14, 14, 64)        18496
_________________________________________________________________
activation_8 (Activation)    (None, 14, 14, 64)        0
_________________________________________________________________
pool2 (MaxPooling2D)         (None, 7, 7, 64)          0
_________________________________________________________________
conv3 (Conv2D)               (None, 7, 7, 128)         73856
_________________________________________________________________
activation_9 (Activation)    (None, 7, 7, 128)         0
_________________________________________________________________
pool3 (MaxPooling2D)         (None, 3, 3, 128)         0
_________________________________________________________________
flatten_2 (Flatten)          (None, 1152)              0
_________________________________________________________________
dropout_4 (Dropout)          (None, 1152)              0
_________________________________________________________________
dense1 (Dense)               (None, 512)               590336
_________________________________________________________________
activation_10 (Activation)   (None, 512)               0
_________________________________________________________________
dropout_5 (Dropout)          (None, 512)               0
_________________________________________________________________
dense2 (Dense)               (None, 256)               131328
_________________________________________________________________
activation_11 (Activation)   (None, 256)               0
_________________________________________________________________
dropout_6 (Dropout)          (None, 256)               0
_________________________________________________________________
output (Dense)               (None, 50)                12850
_________________________________________________________________
activation_12 (Activation)   (None, 50)                0
=================================================================
Total params: 827,698
Trainable params: 827,698
Non-trainable params: 0
_________________________________________________________________
None
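The parameter counts in the summary can be reproduced by hand. The sketch below uses the standard formulas for convolution and dense layers with a bias term; the helper names `conv_params` and `dense_params` are hypothetical, and the layer sizes are read from the model definition above.

```python
def conv_params(kernel, c_in, c_out):
    """Weights of a square kernel over c_in channels for c_out filters, plus biases."""
    return kernel * kernel * c_in * c_out + c_out

def dense_params(n_in, n_out):
    """Weight matrix plus one bias per output unit."""
    return n_in * n_out + n_out

layer_params = {
    'conv1': conv_params(5, 1, 32),
    'conv2': conv_params(3, 32, 64),
    'conv3': conv_params(3, 64, 128),
    'dense1': dense_params(3 * 3 * 128, 512),  # flattened pool3 output has 1152 values
    'dense2': dense_params(512, 256),
    'output': dense_params(256, 50),
}
total = sum(layer_params.values())
print(layer_params, total)
```

Most of the parameters sit in `dense1`, which connects the 1152 flattened features to 512 units.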

# Train the model

nb_epoch = 8

samples_per_epoch = 3268

nb_val_samples = 842

# Save the model after every epoch using a Keras checkpoint
from keras.callbacks import ModelCheckpoint

filepath = "checkpoint2/check-{epoch:02d}-{val_loss:.4f}.hdf5"
checkpoint = ModelCheckpoint(filepath=filepath, verbose=1, save_best_only=False)
callbacks_list = [checkpoint]

# Fit the model from the generators
history_object = model.fit_generator(train_generator, samples_per_epoch=samples_per_epoch,
                                     validation_data=validation_generator,
                                     nb_val_samples=nb_val_samples, nb_epoch=nb_epoch,
                                     verbose=1, callbacks=callbacks_list)
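In Keras 2, `fit_generator` counts generator batches through `steps_per_epoch` and `validation_steps` rather than counting samples. A sketch of how such step counts could be derived from the split sizes printed earlier, assuming one generator batch covers `batch_size` sentence images; `steps_for` is a hypothetical helper and not part of the notebook.

```python
import math

batch_size = 16

def steps_for(num_files, batch_size):
    """Number of generator batches needed to visit every file once."""
    return int(math.ceil(num_files / float(batch_size)))

train_steps = steps_for(3274, batch_size)
val_steps = steps_for(844, batch_size)
print(train_steps, val_steps)
```

Because each sentence contributes a variable number of patches, the number of patches per batch varies even though the number of sentence images per batch is fixed.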

Test model performance on the Test Set

- Accuracy on test set

- Samples predicted to be from the same writer

# Load the saved model weights and evaluate on the test set

model.load_weights('low_loss.hdf5')

scores = model.evaluate_generator(test_generator,842)

print("Accuracy = ", scores[1])

('Accuracy = ', 0.94013787749041677)

images = []
factor = 0.1  # fraction of candidate crops kept per sentence

for filename in test_files[:50]:
    im = Image.open(filename)
    cur_width = im.size[0]
    cur_height = im.size[1]

    # Resize so height = 113 while keeping the aspect ratio
    height_fac = 113 / cur_height
    new_width = int(cur_width * height_fac)
    size = new_width, 113
    # Image.LANCZOS is the same filter as the older Image.ANTIALIAS alias
    imresize = im.resize(size, Image.LANCZOS)
    now_width = imresize.size[0]
    now_height = imresize.size[1]

    # Candidate x start points for 113x113 crops run from 0 to width - 113
    avail_x_points = list(range(0, now_width - 113))

    # Keep a random 10% of the candidate crops
    pick_num = int(len(avail_x_points) * factor)
    random_startx = sample(avail_x_points, pick_num)

    for start in random_startx:
        imcrop = imresize.crop((start, 0, start+113, 113))
        images.append(np.asarray(imcrop))

X_test = np.array(images)
X_test = X_test.reshape(X_test.shape[0], 113, 113, 1)

# Convert to float and normalize
X_test = X_test.astype('float32')
X_test /= 255

# Shuffle patches in place along the first axis;
# random.shuffle is unreliable on multi-dimensional NumPy arrays
np.random.shuffle(X_test)
print(X_test.shape)

(6575, 113, 113, 1)

# Play with results from model

predictions = model.predict(X_test, verbose=1)
print(predictions.shape)

# The predicted writer is the class with the highest softmax probability
predicted_writer = []
for pred in predictions:
    predicted_writer.append(np.argmax(pred))
print(len(predicted_writer))

6575/6575 [==============================] - 0s 66us/step
(6575, 50)
6575
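The per-patch loop over `predictions` can also be written as a single vectorized call. A minimal sketch with a made-up 3x3 array of softmax outputs standing in for the real `(6575, 50)` prediction matrix:

```python
import numpy as np

# Hypothetical softmax outputs: 3 patches, 3 classes
predictions = np.array([[0.1, 0.7, 0.2],
                        [0.6, 0.3, 0.1],
                        [0.2, 0.2, 0.6]])

# Index of the most probable class for every row at once
predicted_writer = np.argmax(predictions, axis=1)

# Probability assigned to the chosen class, useful as a rough confidence
confidence = np.max(predictions, axis=1)

print(predicted_writer, confidence)
```

The resulting integer codes could be mapped back to writer ids with the fitted `encoder.inverse_transform`.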

# Show patches predicted as writer 18, scanning the first tenth of the predictions
writer_number = 18
total_images = 10
counter = 0

for i in range(len(predicted_writer)//10):
    if predicted_writer[i] == writer_number:
        image = X_test[i].squeeze()
        plt.figure(figsize=(2, 2))
        plt.imshow(image, cmap='gray')

Next Steps

- Fine-tune model hyperparameters to further improve accuracy on the English dataset

- Replicate the process on the KHATT dataset of handwritten Arabic samples