Sensory Integration Demo

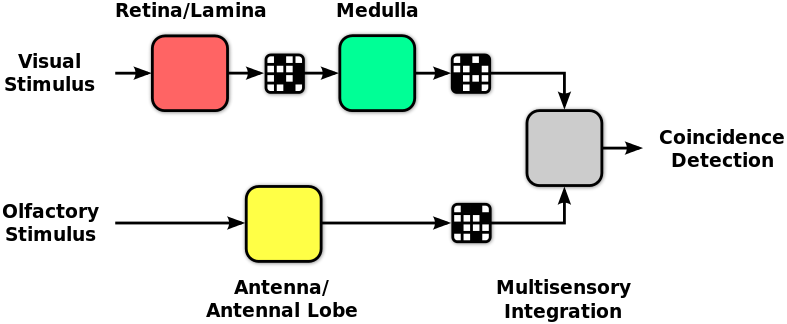

This notebook demonstrates how to use Neurokernel to integrate multiple independently developed LPUs. In this example, partial models of Drosophila's olfaction and vision systems are connected to an LPU that performs basic multisensory coincidence detection.

Background

The olfaction and vision models employed in this example were independently developed and implemented. The olfaction model contains a model of the antennal lobe LPU, while the vision model contains models of the fly's lamina (combined with cells in the retina) and medulla LPUs.

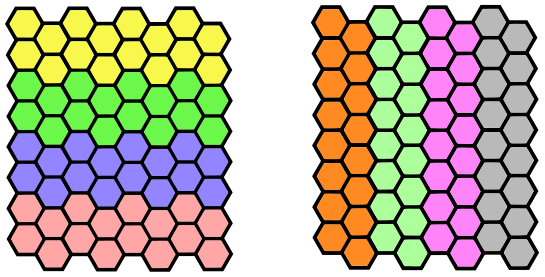

The integration LPU consists of 8 neurons that each accept input from both the antennal lobe and the medulla. All 3 projection neurons in glomerulus DA1 of the antennal lobe project to all of the neurons in the integration LPU. The medulla contains 8 wide-field tangential neurons that receive inputs from 8 groups of medullar columns (depicted below) covering overlapping vertical and horizontal portions of the visual field; these neurons also connect to the neurons in the integration LPU. The 8 wide-field tangential neurons are sensitive to rapid changes in light intensity.

It should be noted that the integration LPU employed in this example is artificial and does not directly correspond to any specific biological LPU in the fly brain.
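The wiring described above can be written down as two boolean connectivity matrices. This is only an illustrative sketch, not the code used by the demo. The neuron counts come from the text, but the one-to-one mapping from wide-field tangential neurons to integration neurons is an assumption made here for illustration. The text states only that they connect, not how.

```python
import numpy as np

N_INT = 8  # integration neurons (from the text)
N_PN = 3   # DA1 projection neurons (from the text)
N_WF = 8   # wide-field tangential neurons (from the text)

# Every DA1 projection neuron projects to every integration neuron.
pn_to_int = np.ones((N_PN, N_INT), dtype=bool)

# ASSUMPTION (not stated in the text): tangential neuron i drives
# integration neuron i only, i.e. a one-to-one mapping.
wf_to_int = np.eye(N_WF, N_INT, dtype=bool)

# Number of olfactory and visual inputs converging on each integration neuron.
olf_inputs = pn_to_int.sum(axis=0)
vis_inputs = wf_to_int.sum(axis=0)
print(olf_inputs, vis_inputs)
```

Under this assumption, each integration neuron sees the same olfactory drive but a different region of the visual field, which is what lets the 8 neurons respond to different visual events.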

The LPUs that make up the integration model are connected as follows:

A script for generating the GEXF file containing the antennal lobe LPU model configuration, together with additional GEXF files containing the configurations of the vision and integration LPUs, is available in the examples/sensory_int/data subdirectory of the Neurokernel source code.
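Because GEXF is an XML-based graph format, a configuration file can be inspected without running a simulation. The sketch below counts the nodes and edges in a GEXF document using only the Python standard library. The helper `summarize_gexf` and the toy document are hypothetical and only illustrate the format. They do not reproduce the contents or attribute schema of the actual LPU files.

```python
import io
import xml.etree.ElementTree as ET


def summarize_gexf(source):
    """Count nodes and edges in a GEXF document (file path or file-like object)."""
    root = ET.parse(source).getroot()

    def local(tag):
        # GEXF elements are namespaced; keep only the local tag name.
        return tag.rsplit("}", 1)[-1]

    n_nodes = sum(1 for el in root.iter() if local(el.tag) == "node")
    n_edges = sum(1 for el in root.iter() if local(el.tag) == "edge")
    return {"nodes": n_nodes, "edges": n_edges}


# Toy two-node graph standing in for a real LPU configuration (hypothetical).
TOY_GEXF = """<?xml version="1.0" encoding="UTF-8"?>
<gexf xmlns="http://www.gexf.net/1.2draft" version="1.2">
  <graph defaultedgetype="directed">
    <nodes>
      <node id="0" label="neuron_a"/>
      <node id="1" label="neuron_b"/>
    </nodes>
    <edges>
      <edge id="0" source="0" target="1"/>
    </edges>
  </graph>
</gexf>
"""

summary = summarize_gexf(io.BytesIO(TOY_GEXF.encode("utf-8")))
print(summary)
```

To inspect a real file, pass its path to `summarize_gexf` instead of the in-memory toy document. If NetworkX is installed, `networkx.read_gexf` loads the same file as a graph object that also exposes node and edge attributes.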

Executing the Model

Assuming that the Neurokernel source has been cloned to ~/neurokernel, we first generate the odorant and visual input stimuli and construct the sensory integration LPU used in the example:

%cd -q ~/neurokernel/examples/sensory_int/data

%run gen_vis_input.py

%run gen_olf_input.py

%run gen_integrate.py

Once the input and the configuration are ready, we execute the entire model. Note that the interconnections between the integration LPU and both the antennal lobe and medulla LPUs are configured in the simulation script rather than in a GEXF file.

%cd -q ~/neurokernel/examples/sensory_int/

%run sensory_int_demo.py
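Outside a notebook, the steps so far could be scripted roughly as follows. This is a sketch under the same assumption as above, namely that the source is cloned to ~/neurokernel. The helper names `pipeline_steps` and `run_pipeline` are hypothetical. Unlike `%run`, which executes a script inside the running IPython session, each script here is launched in a fresh Python process.

```python
import subprocess
import sys
from pathlib import Path


def pipeline_steps(root="~/neurokernel"):
    """Return (working directory, script) pairs in the order used above."""
    example = Path(root).expanduser() / "examples" / "sensory_int"
    data = example / "data"
    return [
        (data, "gen_vis_input.py"),         # visual input stimulus
        (data, "gen_olf_input.py"),         # odorant input stimulus
        (data, "gen_integrate.py"),         # sensory integration LPU
        (example, "sensory_int_demo.py"),   # full model
    ]


def run_pipeline(root="~/neurokernel"):
    """Run each script from its own directory, stopping at the first failure."""
    for cwd, script in pipeline_steps(root):
        subprocess.run([sys.executable, script], cwd=cwd, check=True)


if __name__ == "__main__":
    for cwd, script in pipeline_steps():
        print(cwd / script)
```

Calling `run_pipeline()` executes the scripts. The `__main__` block only prints the resolved paths so the order can be checked first.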

Next, we generate a video to show the final result:

%run visualize_output.py

The resulting video can be viewed below:

import IPython.display

IPython.display.YouTubeVideo('e-eUOtOF9fc')

The first row of the video depicts the input to the visual system. The visual input has two periods of input activity interleaved with quiescent periods. The first event is a quick vertically moving black-to-white edge followed by a white-to-black edge. The second event is a quick horizontally moving black-to-white edge followed by a white-to-black edge.

The second row of the video depicts the odorant stimulus profile; this stimulus consists of a series of ON and OFF events.

The third row is a raster plot of the spikes generated by the 8 neurons in the integration LPU. Each neuron emits spikes only if the visual signal stimulates the columns connected to it while the odorant is on. For example, note that neither a visual stimulus in the leftmost vertical region of columns alone (34 s to 39 s) nor the delivery of the odorant alone (3 s to 7 s) induces integration neuron 1 to emit spikes; the neuron does, however, detect when the visual and olfactory inputs coincide.
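The coincidence-detection behaviour described above can be illustrated with a toy leaky integrate-and-fire neuron. This is a minimal sketch and not the neuron model used in the demo. The function name, weight, time constant, threshold, and stimulus timing are all assumptions chosen so that either input alone stays below threshold while both together cross it.

```python
import numpy as np


def simulate_coincidence(vis, olf, dt=1e-3, tau=0.02, w=0.7, thresh=1.0):
    """Toy leaky integrator that spikes only when both inputs are active.

    All parameters are illustrative assumptions: with w = 0.7 and a
    threshold of 1.0, one input drives the potential toward 0.7
    (subthreshold), while two inputs drive it toward 1.4 (suprathreshold).
    """
    vis = np.asarray(vis, dtype=float)
    olf = np.asarray(olf, dtype=float)
    v = 0.0
    spikes = np.zeros(vis.shape, dtype=bool)
    for t in range(vis.size):
        drive = w * (vis[t] + olf[t])
        v += dt / tau * (drive - v)  # forward Euler step of dv/dt = (drive - v) / tau
        if v >= thresh:
            spikes[t] = True
            v = 0.0                  # reset after a spike
    return spikes


# Hypothetical 1 s trace sampled at 1 ms; these are not the demo's stimuli.
n = 1000
olf = np.zeros(n)
olf[200:600] = 1.0  # odorant ON from 0.2 s to 0.6 s
vis = np.zeros(n)
vis[400:800] = 1.0  # visual activity from 0.4 s to 0.8 s

spikes = simulate_coincidence(vis, olf)
print("spike times (ms):", np.flatnonzero(spikes))
```

With these settings, spikes appear only in the window where the two inputs overlap (0.4 s to 0.6 s), mirroring the behaviour of the raster plot in the third row of the video.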

Acknowledgements

The olfaction, vision, and sensory integration models demonstrated in this notebook were developed by Nikul H. Ukani, Chung-Heng Yeh, and Yiyin Zhou.