Installing Neurokernel on Amazon Elastic Compute Cloud GPU Instances¶

In this notebook, we show step by step how to run Neurokernel on Amazon Elastic Compute Cloud (Amazon EC2) GPU instances. This notebook assumes that you have already set up an account on Amazon Web Services (AWS) and that you are able to start an Amazon EC2 Linux instance. For a detailed tutorial on starting a Linux instance, see Getting Started with Amazon EC2 Linux Instances. AWS currently provides a free tier for new users for one year on t2.micro instances. If you have not used AWS before, you can try starting a Linux instance free of charge to familiarize yourself with the cloud computing service.

Important: GPU instances on Amazon EC2 always incur charges. Please be sure that you understand the pricing structure of Amazon EC2. Pricing information can be found here.

Setting up with Amazon EC2¶

We provide here a few links to the Amazon EC2 documentation to help you get set up with Amazon EC2. If you are already set up with Amazon EC2, you can skip to the next section.

- General information about Amazon EC2 can be found here.

- To sign up for AWS and prepare for launching an instance, follow the documentation here.

- To start an Amazon EC2 Linux instance, follow these steps.

- Make sure to check out these best practice guides to better manage your instances.

If you follow these steps, setup usually takes 1-2 hours. Note that account verification can take up to two hours after you sign up for AWS.

Using Preloaded Neurokernel Amazon Machine Image¶

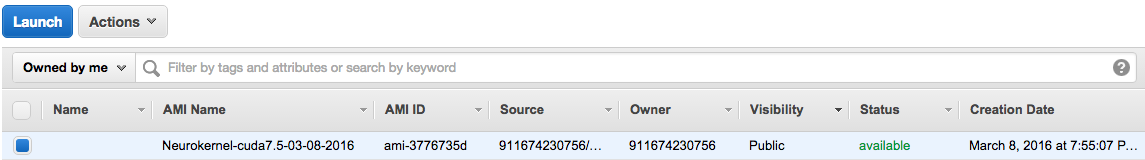

We have made available a public Amazon Machine Image (AMI) in which all packages required by Neurokernel are preloaded. The AMI is currently available only in the US East region, and you must launch it in this region. If you wish to run Neurokernel in another region, you can copy the AMI to that region. The AMI is preloaded with CUDA 7.5. We will update the AMI from time to time as newer versions of the packages are released. Please follow the steps below to launch a GPU instance using the AMI.

First, change your region to US East and search the public images for Neurokernel. Select the one with AMI ID ami-3776735d, then click the Launch button at the top.

You will be taken to step 2 of the instance setup. Here, choose either g2.2xlarge for an instance with 1 GPU or g2.8xlarge for one with 4 GPUs.

In step 3, leave the default settings for instance details, or customize them according to your needs. You can also request a spot instance, which can significantly lower your cost (but the instance can be terminated once the current price rises above your maximum bid price). For example, you can set your bid price and launch the instance in the subnet that currently has the lowest price.
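The subnet comparison can be sketched as a small shell helper. It assumes that you have already collected the current spot prices as lines of the form `<availability-zone> <price>`, for example by reading them off the spot pricing history in the EC2 console. The function name `cheapest_zone` is hypothetical and only serves as an illustration.

```shell
# Hypothetical helper: read "<availability-zone> <price>" lines on standard
# input and print the line with the lowest price.
cheapest_zone() {
    # Sort numerically on the second field (the price) and keep the first line.
    # LC_ALL=C makes sure "." is treated as the decimal point.
    LC_ALL=C sort -k2,2n | head -n 1
}
```

For instance, `printf 'us-east-1a 0.25\nus-east-1b 0.19\nus-east-1c 0.31\n' | cheapest_zone` prints `us-east-1b 0.19`. The prices in this call are made-up placeholders, not real quotes.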

In step 4, add storage of at least 8 GiB. If you wish to keep the root volume after the instance is terminated, uncheck the "Delete on Termination" box. Add additional storage as needed.

In step 5, you can leave the settings as they are or create a new tag for your instance.

In step 6, select an existing security group. Then review and launch the instance. Once it is running, you can log in to your instance.
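Logging in is normally done over SSH with the key pair chosen at launch. The sketch below only assembles and prints the command so that you can review it first. The key file path and host name are hypothetical placeholders, and the login user `ubuntu` is an assumption based on the Ubuntu base image; check the connection details shown for your instance in the EC2 console.

```shell
# Hypothetical placeholders: replace with your own key file and the public
# DNS name shown for the instance in the EC2 console.
KEY_FILE="$HOME/.ssh/my-ec2-key.pem"
INSTANCE_HOST="ec2-203-0-113-10.compute-1.amazonaws.com"

# Assumption: the login user is "ubuntu", as on the stock Ubuntu AMIs.
ssh_login_command() {
    printf 'ssh -i %s ubuntu@%s\n' "$1" "$2"
}

# Print the command for review; copy and run it once the values are correct.
ssh_login_command "$KEY_FILE" "$INSTANCE_HOST"
```

The security group selected in step 6 has to allow inbound SSH traffic (TCP port 22) from your address, otherwise the connection will time out.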

Once you are logged in, activate the Neurokernel environment by running:

source activate NK

You can now start using Neurokernel in ~/neurokernel. To update Neurokernel and Neurodriver to the latest version, run:

cd ~/neurokernel

git pull

cd ~/neurodriver

git pull

You can then try the intro example:

cd ~/neurodriver/examples/intro/data

python gen_generic_lpu.py -s 0 -l lpu_0 generic_lpu_0.gexf.gz generic_lpu_0_input.h5

python gen_generic_lpu.py -s 1 -l lpu_1 generic_lpu_1.gexf.gz generic_lpu_1_input.h5

cd ../

python intro_demo.py --gpu_dev 0 0 --log file

Now, you can use Neurokernel on the EC2 instance.

The following is intended for more advanced users, and is not required to run Neurokernel on EC2¶

Install Neurokernel from Scratch¶

If you wish to install the NVIDIA driver, CUDA and Neurokernel from scratch, you can follow the guide below. These are the steps that were used to create the AMI mentioned above.

Starting a GPU Instance¶

In your EC2 dashboard, click on "Instances" in the navigation bar on the left and then click on the "Launch Instance" button.

In step 1, choose Ubuntu Server 14.04 LTS (HVM), SSD Volume Type - ami-d05e75b8 (the AMI ID may differ, as these images are frequently updated).

In step 2, choose either g2.2xlarge for an instance with 1 GPU or g2.8xlarge for one with 4 GPUs.

In step 3, leave the default settings for instance details, or customize them according to your needs.

In step 4, add storage of at least 8 GiB. If you wish to keep the root volume after the instance is terminated, uncheck the "Delete on Termination" box. Add additional storage as needed.

In step 5, you can leave the settings as they are or create a new tag for your instance.

In step 6, select an existing security group. Then, review and launch the instance.

Installing NVIDIA Drivers and CUDA¶

The Ubuntu AMI does not come with a GPU driver installed. After you have launched the GPU instance, the first thing to do is to install the NVIDIA driver. This requires a series of commands. The following commands install the 361.28 driver and CUDA 7.5. In principle, the latest NVIDIA driver and CUDA library can be installed instead.

Important Note: At various points, you will be prompted to answer [Y/n] when executing some of the following commands. Please make sure that all packages are installed by answering "y", and make sure that you DO NOT see "Abort" at the end of the output of any command. The best way to ensure this is to execute the commands one line at a time.
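If you prefer a more explicit signal than reading the output, a small wrapper can make a failed step hard to miss. This is a minimal sketch, not part of the original procedure: the function name `run_step` is hypothetical, and it assumes that a command that aborts exits with a non-zero status. It does not answer any prompts for you.

```shell
# Hypothetical helper: run one command, report the result, and return the
# command's exit status so that later steps can be skipped on failure.
run_step() {
    echo ">>> $*"
    if "$@"; then
        echo "OK: $*"
    else
        status=$?
        echo "FAILED (exit code $status): $*" >&2
        return "$status"
    fi
}
```

Chaining steps with `&&`, as in `run_step sudo apt-get update && run_step sudo apt-get upgrade`, stops at the first step that fails.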

sudo apt-get update

sudo apt-get upgrade

At this point, you may be prompted "What would you like to do about menu.lst?". Select "keep the local version currently installed". Wait until the upgrade is finished, then execute the following commands:

sudo apt-get install build-essential

sudo apt-get install linux-image-extra-virtual

You may be prompted again with "What would you like to do about menu.lst?". Again select "keep the local version currently installed". Wait until the installation has finished, then execute the following commands:

echo -e "blacklist nouveau\nblacklist lbm-nouveau\noptions nouveau modeset=0\nalias nouveau off\nalias lbm-nouveau off" | sudo tee /etc/modprobe.d/blacklist-nouveau.conf

echo options nouveau modeset=0 | sudo tee -a /etc/modprobe.d/nouveau-kms.conf

sudo update-initramfs -u

sudo reboot

The server will reboot. Log in again once it is back up and execute the following commands:

sudo apt-get install linux-source

sudo apt-get install linux-headers-`uname -r`
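Before installing the NVIDIA driver, it can be worth confirming that the nouveau blacklist took effect after the reboot. The sketch below looks for the module in /proc/modules, the kernel's list of loaded modules. The function name `nouveau_status` is hypothetical, and the optional argument exists only so that the check can be pointed at another file.

```shell
# Hypothetical helper: report whether the nouveau module appears in a module
# table. Defaults to /proc/modules, where each line starts with a module name.
nouveau_status() {
    modules_file="${1:-/proc/modules}"
    if grep -q '^nouveau ' "$modules_file"; then
        echo "nouveau is still loaded"
        return 1
    fi
    echo "nouveau is not loaded"
}
```

Running `nouveau_status` on the instance should print `nouveau is not loaded`. If it reports that the module is still loaded, recheck the two files written under /etc/modprobe.d before continuing.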

Now we are ready to install the NVIDIA driver:

mkdir packages

cd packages

wget http://us.download.nvidia.com/XFree86/Linux-x86_64/361.28/NVIDIA-Linux-x86_64-361.28.run

chmod u+x NVIDIA-Linux-x86_64-361.28.run

sudo ./NVIDIA-Linux-x86_64-361.28.run

Installation of the GPU driver starts here. Accept the license agreement and select "OK" throughout the installation process. When prompted "Would you like to run the nvidia-xconfig utility to automatically update your X configuration file so that the NVIDIA X driver will be used when you restart X?", choose "no".

Now, we install CUDA with the following commands:
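Once the installer has finished, a quick way to confirm that the driver works is to run nvidia-smi, which is installed together with the driver and lists the GPUs it can see. The wrapper below is a sketch with a hypothetical function name; it only adds a readable message for the case where the tool is missing.

```shell
# Hypothetical helper: run nvidia-smi if it is installed, otherwise explain
# that the driver installation does not seem to have succeeded.
check_nvidia_driver() {
    if command -v nvidia-smi >/dev/null 2>&1; then
        nvidia-smi
    else
        echo "nvidia-smi not found: the driver does not appear to be installed"
        return 1
    fi
}
```

On a g2.2xlarge instance the output of `check_nvidia_driver` should list one GPU, and on a g2.8xlarge instance four.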

sudo modprobe nvidia

wget http://developer.download.nvidia.com/compute/cuda/7.5/Prod/local_installers/cuda_7.5.18_linux.run

chmod u+x cuda_7.5.18_linux.run

./cuda_7.5.18_linux.run -extract=`pwd`

sudo ./cuda-linux64-rel-7.5.18-19867135.run

When the license agreement appears, press "q" so you don't have to scroll down. Type "accept" to accept the license agreement. Press Enter to use the default path. Enter "no" when asked to add desktop menu shortcuts. Enter "yes" when asked to create a symbolic link.

Finally, update your path variables by running:

echo -e "export PATH=/usr/local/cuda-7.5/bin:\$PATH\nexport LD_LIBRARY_PATH=/usr/local/cuda-7.5/lib64:\$LD_LIBRARY_PATH" | tee -a ~/.bashrc

source ~/.bashrc
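To confirm that the new settings are active in the current shell, you can check whether the CUDA directory is now part of PATH. This is a minimal sketch: the function name `path_contains` is hypothetical, and the directory is the default install location used above.

```shell
# Hypothetical helper: succeed if directory $1 is one of the colon-separated
# entries of the path list $2.
path_contains() {
    case ":$2:" in
        *":$1:"*) return 0 ;;
        *) return 1 ;;
    esac
}

if path_contains /usr/local/cuda-7.5/bin "$PATH"; then
    echo "CUDA 7.5 bin directory is on PATH"
else
    echo "CUDA 7.5 bin directory is missing from PATH"
fi
```

If the directory is on PATH, `nvcc --version` should also run and report the installed CUDA release.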

Installing Neurokernel¶

The simplest way to install Neurokernel on Ubuntu is to use conda. We first install Miniconda:

wget https://repo.continuum.io/miniconda/Miniconda-latest-Linux-x86_64.sh

chmod u+x Miniconda-latest-Linux-x86_64.sh

./Miniconda-latest-Linux-x86_64.sh

When prompted, accept the license agreement and choose the default path for installing Miniconda. Answer "yes" when asked "Do you wish the installer to prepend the Miniconda2 install location to PATH in your /home/ubuntu/.bashrc ?". After the installation is complete, write the ~/.condarc file by running:

echo -e "channels:\n- https://conda.binstar.org/neurokernel/channel/ubuntu1404\n- defaults" > ~/.condarc

You can then install the required system libraries and create a new conda environment containing the packages required by Neurokernel with the following commands:

sudo apt-get install libibverbs1 libnuma1 libpmi0 libslurm26 libtorque2 libhwloc-dev git

source ~/.bashrc

conda create -n NK neurokernel_deps

Clone the Neurokernel and Neurodriver repositories and install both in development mode:

cd

rm -rf packages

git clone https://github.com/neurokernel/neurokernel.git

git clone https://github.com/neurokernel/neurodriver.git

source activate NK

cd ~/neurokernel

python setup.py develop

cd ~/neurodriver

python setup.py develop

Test the installation by running the intro example:

cd ~/neurodriver/examples/intro/data

python gen_generic_lpu.py -s 0 -l lpu_0 generic_lpu_0.gexf.gz generic_lpu_0_input.h5

python gen_generic_lpu.py -s 1 -l lpu_1 generic_lpu_1.gexf.gz generic_lpu_1_input.h5

cd ../

python intro_demo.py --gpu_dev 0 0 --log file

Inspect the log file "neurokernel.log" to ensure that no error occurred during execution. If there are no errors, the installation is complete.
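The log inspection can be partly automated with grep. The sketch below assumes that problems show up in the log as lines containing the word "error" in any letter case; this is an assumption about the log format, so a clean result here does not replace reading the log. The function name `check_log` is hypothetical.

```shell
# Hypothetical helper: print log lines that mention "error" (any case) with
# their line numbers, or a short message if there are none.
check_log() {
    log_file="${1:-neurokernel.log}"
    if [ ! -f "$log_file" ]; then
        echo "log file not found: $log_file"
        return 2
    fi
    if grep -n -i 'error' "$log_file"; then
        return 1
    fi
    echo "no errors found in $log_file"
}
```

Run `check_log` in the directory that contains the log, or pass the path explicitly, for example `check_log ~/neurodriver/examples/intro/neurokernel.log` if that is where the demo wrote it.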