Table of Contents¶

-

- Statistical Linear Regression Models

- Interpreting Regression Coefficients

- Statistical Regression Models Examples

- Residuals

- Properties of Residuals

- Residual Variation

- Inference in Regression

- Tests for Regression Coefficients

- Prediction of Outcomes

- Quiz 2

[Week 4]

Term Definitions¶

TODO: Complete

- $\hat Y$ : Predicted $Y$, aka the fitted value

- $\bar Y$: Sample Mean

- $\mu$: Population Mean

- $\beta_n$: model coefficient

- $s$

- $\sigma$

Coursera Regressions Week 1¶

Least Squares¶

import pandas as pd

import matplotlib.pyplot as plt

import rpy2

from IPython.display import YouTubeVideo

%load_ext rpy2.ipython

%matplotlib inline

The rpy2.ipython extension is already loaded. To reload it, use: %reload_ext rpy2.ipython

heig = pd.DataFrame.from_csv('data/Galton.csv', index_col=None)

heig = pd.melt(heig, value_vars = ['child', 'parent'], value_name = 'height')

%%R -i heig

require(ggplot2)

df <- as.data.frame(heig)

g <- ggplot(heig, aes(height, fill=variable)) + geom_histogram(binwidth=1) + theme_bw()

g <- g + facet_grid(~variable)

g

Loading required package: ggplot2

Find the middle via least squares¶

Let $Y_i$ be the height of child $i$ for $i = 1, ..., n = 928$ then define the middle as the value of $\mu$ that minimizes

$\sum\limits_{i=1}^n (Y_i - \mu)^2$

- The middle gives us the 'physical' center of mass of the graph

- You might have guessed that the answer that minimizes the squared distance above is $\mu = \bar{Y}$

Experiment¶

$\sum\limits_{i=1}^n (Y_i - \mu)^2$

Let's try different values of $\mu$ to see where the minimal value is above

child = heig[heig['variable'] == 'child']

import numpy as np

child.head()

| variable | height | |

|---|---|---|

| 0 | child | 61.7 |

| 1 | child | 61.7 |

| 2 | child | 61.7 |

| 3 | child | 61.7 |

| 4 | child | 61.7 |

hrange = np.arange(child['height'].min(), child['height'].max(), .5)

MSE = lambda x: ((child.height - x)**2).mean()

MSEdf = pd.DataFrame([(_, MSE(_)) for _ in hrange], columns = ['mu', 'MSE'])

Let's plot different values for $\mu$ and see what we get¶

pd.DataFrame.plot(MSEdf, x = 'mu', y='MSE', title='Minimizing Mu');

child.mean()

height 68.08847 dtype: float64

<img "source=(/images/least_squares.proof.jpg">

** The math follows as **

$$ \begin{align} \sum_{i=1}^n (Y_i - \mu)^2 & = \ \sum_{i=1}^n (Y_i - \bar Y + \bar Y - \mu)^2 \\ & = \sum_{i=1}^n (Y_i - \bar Y)^2 + \ 2 \sum_{i=1}^n (Y_i - \bar Y) (\bar Y - \mu) +\ \sum_{i=1}^n (\bar Y - \mu)^2 \\ & = \sum_{i=1}^n (Y_i - \bar Y)^2 + \ 2 (\bar Y - \mu) \sum_{i=1}^n (Y_i - \bar Y) +\ \sum_{i=1}^n (\bar Y - \mu)^2 \\ & = \sum_{i=1}^n (Y_i - \bar Y)^2 + \ 2 (\bar Y - \mu) (\sum_{i=1}^n Y_i - n \bar Y) +\ \sum_{i=1}^n (\bar Y - \mu)^2 \\ & = \sum_{i=1}^n (Y_i - \bar Y)^2 + \sum_{i=1}^n (\bar Y - \mu)^2\\ & \geq \sum_{i=1}^n (Y_i - \bar Y)^2 \ \end{align} $$- $\bar{Y}$ : different guesses for $\mu$

- $\mu$ : value that minimizes $\sum\limits_{i=1}^n (Y_i - \mu)^2$

He adds and subtracts $\bar{Y}$ and then expands and simplifies.

The end result is that for any arbitrary $\mu$, the sum will be greater than the sum when $\bar{Y} = \mu$

Comparing childrens' heights and their parents' heights¶

# reimport so I don't have to unmelt

heig = pd.DataFrame.from_csv('data/Galton.csv', index_col=None)

# get counts of values for the bubble plot

h = pd.DataFrame({'count' : heig.groupby( [ "child", "parent"] ).size()}).reset_index()

h[:10]

| child | parent | count | |

|---|---|---|---|

| 0 | 61.7 | 64.0 | 1 |

| 1 | 61.7 | 64.5 | 1 |

| 2 | 61.7 | 65.5 | 1 |

| 3 | 61.7 | 68.5 | 1 |

| 4 | 61.7 | 70.5 | 1 |

| 5 | 62.2 | 64.5 | 1 |

| 6 | 62.2 | 66.5 | 3 |

| 7 | 62.2 | 67.5 | 3 |

| 8 | 63.2 | 64.0 | 2 |

| 9 | 63.2 | 64.5 | 4 |

%%R -i h

require(ggplot2)

require(hexbin)

df <- as.data.frame(h)

g <- ggplot(df, aes(parent, child, size=count)) + geom_point() + ggtitle("Bubble Plot of Height")

g + theme_bw()

Loading required package: hexbin

Regression through the origin¶

Suppose that $X_i$ are the parent's heights

Consider picking the slope $\beta$ that minimizes

$\sum\limits_{i=1}^n (Y_i - X_i\beta)^2$ -- this in effect is trying to minimize the error value $\epsilon$

This is using the origin as a pivot point picking the line that minimizes the sum of the squared vertical distances of the points to the line

A line through the origin is:

$y = x\beta$

It has a y-intercept of 0.

the slope is $\beta$

$x_i\beta=\hat{y}$ is the fitted value

$y_i - \hat{y}$ is the vertical distance between the data point and the fitted line

is it NOT the orthoganol distance

we don't really want the line go through the origin

if we subtract the means so that the origin is the mean of the parents' and child's heights, then going through the origin makes sense

the origin is the average of both variables

$\mu$: population mean

$\bar X$: sample mean

$\sigma$: population standard deviation

$s$: sample standard deviation

col = 'child'

h[col] = h[col] - h[col].mean()

col = 'parent'

h[col] = h[col] - h[col].mean()

%%R -i h

require(ggplot2)

require(hexbin)

df <- as.data.frame(h)

g <- ggplot(df, aes(parent, child, size=count)) + geom_point() + ggtitle("Bubble Plot Height De-meaned") + theme_bw()

g2 <- geom_smooth(data=df, aes(parent, child, weight=count), colour='red', method=lm, se=FALSE)

g3 <- geom_smooth(data=df, aes(parent, child), colour='green', method=lm, se=FALSE)

g4 <- geom_smooth(data=df, aes(parent, child), colour='blue', method=loess, se=FALSE)

g + g2 + g3 + g4 + theme(legend.position="none")

Note the difference in the linear fit when the size of the dots is considered.

- red = size considered

- green = size not considered

- blue = loess

Find the best $\beta$¶

find:

$\min\sum\limits_{i=1}^n (Y_i - X_i\beta)^2$

col = 'child'

heig[col] = heig[col] - heig[col].mean()

col = 'parent'

heig[col] = heig[col] - heig[col].mean()

MSE = lambda beta: ((heig['child'] - heig['parent'] * beta)**2).mean()

# try slopes between -3 and 3

betas = np.arange(-3, 3, .01)

df = pd.DataFrame([(beta, MSE(beta),) for beta in betas], columns = ['beta', 'MSE'])

df.plot(x = 'beta', y='MSE', title = 'finding the best Beta empirically');

min_beta = float(df[df['MSE']==df['MSE'].min()]['beta'])

df[df['MSE']==df['MSE'].min()]

| beta | MSE | |

|---|---|---|

| 365 | 0.65 | 5.000338 |

float(min_beta)

0.6499999999999222

Lets create the linear regression and see if we get the same answer¶

Using scipy¶

from scipy.stats import linregress

slope, intercept, rvalue, pvalue, stderr = linregress(heig['parent'], heig['child'])

slope

0.64629058199363942

MSE(slope)

5.0002937655516639

Using StatsModels¶

import statsmodels.formula.api as sm

result = sm.ols(formula="child ~ parent", data=heig).fit()

Some Basic Notation and Background¶

We write $X_1, X_2, ... X_n$ to describe $n$ data points

- Uppercase $X$ for a random variable

- lowercase $x$ for a particular value

- greek letters for things we don't know

Empirical Mean

$\hat{X} = \dfrac{1}{n}\sum\limits_{i=1}^n X_i$

If we subtract the mean from all data points, we get data that has a mean of 0.

$\hat{X_i} = X_i - \hat{X} = 0$

Remember the mean is the least squares solution for minimizing:

$\sum\limits_{i=1}^n (X_i - \mu)^2$

The empirical standard deviation and variance¶

$\text{Variance: } s^2 = \dfrac{1}{n-1}\sum\limits_{i = 1}^n {\left( {X_i - \bar X} \right)^2 } $

Normalization¶

$Z_i = \dfrac{X_i - \bar x}{s}$

- Normalizing gives an empiricial mean of zero and a empirical standard deviation of 0

- Its units are described in standard deviations from the mean

- A z value of 2 means the normalized observation is 2 standard deviations from the mean

The empirical covariance¶

of $n$ observations is ..

$Cov(X, Y) = \dfrac{1}{n-1}\sum\limits_{i=1}^n(X_i - \hat X)(Y_i - \hat Y) = \dfrac{1}{n-1}\left(\sum\limits_{i=1}^nX_iY_i - n\hat X\hat Y\right)$

- think of covariance as a function that takes two vectors as an input

- has the units of X and Y

Correlation is:¶

$ Cor(X, Y) = \dfrac{Cov(X, Y)}{S_xS_y}$

Where

- $S_x$ is the estimate of the standard deviation for the $X$ observations

- $S_y$ is the estimate of the standard deviation for the $Y$ observations

- Unit free, between -1 and 1

$Cor(X, Y) = \pm 1$ only when the $X$ or $Y$ observations fall perfectly on a posivite or negative sloped line

$Cor(X, Y) = 0$ implies no linear relationship

Least squares estimation of regression lines¶

- Let $Y_i$ be the $i^{th}$ child's height

- Let $X_i$ be the $i^{th}$ parents' average height

Consider finding the best line

Child's Height = $\beta_0$ + Parent's Height $\cdot \beta_1$

Use least squares

$ \sum\limits_{i=1}^n(Y_i - (\beta_0 + \beta_1X_i))^2 $

- the difference between the actual and the predicted

$ \sum\limits_{i=1}^n{\big(Y_i - \hat Y\big)}^2 $ - the difference between the actual and the predicted

Oh how the fuck how do we do it?

Mean only regression¶

So after a lot of stuff...

$ \hat\beta_1 = \dfrac{\sum\limits_{i=1}^n Y_iX_i}{\sum\limits_{i=1}^nX_i^2} $

When we restrict the line through the origin

Figuring out the final forms we can use to get $\beta_n$¶

... lots of math... which can be summarized as

$\hat \beta_1 = Cor(Y, X) \dfrac{S_dY}{S_dX}$

also stated as

$\dfrac{\sum(X-\bar X) \sum (Y - \bar Y)}{\sum(X - \bar X)^2}$

and

$\hat \beta_0 = \bar Y - \hat \beta_1 \bar X$

And our regression line always travels through $(\bar X, \bar Y)$

heig = pd.DataFrame.from_csv('data/Galton.csv', index_col=None)

heig.head()

| child | parent | |

|---|---|---|

| 0 | 61.7 | 70.5 |

| 1 | 61.7 | 68.5 |

| 2 | 61.7 | 65.5 |

| 3 | 61.7 | 64.5 |

| 4 | 61.7 | 64.0 |

y = 'child'

x = 'parent'

beta1 = heig[y].corr(heig[x]) * heig[y].std() / heig[x].std()

beta0 = heig[y].mean() - beta1 * heig[x].mean()

beta0

23.941530180421658

beta1

0.64629058199351186

result = sm.ols(formula="child ~ parent", data=heig).fit()

result.params

Intercept 23.941530 parent 0.646291 dtype: float64

Regression to the Mean¶

$P(Y < x| X=x)$ gets bigger as $x$ heads to very large values

$P(Y > x| X=x)$ gets bigger as $x$ heads to very small values

Quiz¶

q1¶

# q1 - what's a better way to do this?

x = [0.18, -1.54, 0.42, 0.95]

w = [2, 1, 3, 1]

Give the value of $\mu$ that minimizes $\sum\limits_{i=1}^nw_i(x_i - \mu)^2$

df = pd.DataFrame([x, w]).T

df.columns = ['x', 'w']

df

| x | w | |

|---|---|---|

| 0 | 0.18 | 2 |

| 1 | -1.54 | 1 |

| 2 | 0.42 | 3 |

| 3 | 0.95 | 1 |

square = lambda x: x**2

f1 = lambda mu: (df['w'] * (df['x'] - mu).apply(square)).sum()

mus = np.arange(0, 1, .01)

f1(.18)

3.7241

mus = pd.DataFrame([(mu, f1(mu),) for mu in mus], columns = ['mu', 'fx'])

pd.DataFrame.plot(mus, x = 'mu', y='fx', title='Minimizing Mu');

mus[mus['fx']==mus['fx'].min()]

| mu | fx | |

|---|---|---|

| 15 | 0.15 | 3.7166 |

# q2

x = [0.8, 0.47, 0.51, 0.73, 0.36, 0.58, 0.57, 0.85, 0.44, 0.42]

y = [1.39, 0.72, 1.55, 0.48, 1.19, -1.59, 1.23, -0.65, 1.49, 0.05]

df = pd.DataFrame([x, y]).T

df.columns = ['x', 'y']

df.head()

| x | y | |

|---|---|---|

| 0 | 0.80 | 1.39 |

| 1 | 0.47 | 0.72 |

| 2 | 0.51 | 1.55 |

| 3 | 0.73 | 0.48 |

| 4 | 0.36 | 1.19 |

#result = sm.ols(formula="child ~ parent", data=heig).fit()

# y intercept set to zero

result = sm.ols(formula="y ~ x - 1", data=df).fit()

result.summary()

/Users/idris/.virtualenvs/regres/lib/python2.7/site-packages/scipy/stats/stats.py:1205: UserWarning: kurtosistest only valid for n>=20 ... continuing anyway, n=10 int(n))

| Dep. Variable: | y | R-squared: | 0.183 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.092 |

| Method: | Least Squares | F-statistic: | 2.018 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 0.189 |

| Time: | 15:48:14 | Log-Likelihood: | -14.561 |

| No. Observations: | 10 | AIC: | 31.12 |

| Df Residuals: | 9 | BIC: | 31.42 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| x | 0.8263 | 0.582 | 1.421 | 0.189 | -0.490 2.142 |

| Omnibus: | 2.980 | Durbin-Watson: | 3.044 |

|---|---|---|---|

| Prob(Omnibus): | 0.225 | Jarque-Bera (JB): | 1.581 |

| Skew: | -0.959 | Prob(JB): | 0.454 |

| Kurtosis: | 2.658 | Cond. No. | 1.00 |

So that's the code way to do it. Let's do it symbolically too.¶

$\beta_1 = \dfrac{\sum(X_i - \bar X)(Y_i - \bar Y)}{\sum(X_i - \bar X)^2}$

wait... that's close.

But for a RTO ("regression through origin") Remember that $\bar X$ and $\bar Y$ for a RTO are both $0$

$\beta_1 = \dfrac{\sum X_iY_i}{\sum X_i^2}$

x = 'x'

y = 'y'

num = (df[x] * df[y]).sum()

den = (df[x]).apply(square).sum()

num / den

0.82625166087128599

q3¶

%%R -i x

require(dataset)

data(mtcars)

head(mtcars)

lm(mpg~wt, data=mtcars)

Loading required package: dataset

Call:

lm(formula = mpg ~ wt, data = mtcars)

Coefficients:

(Intercept) wt

37.285 -5.344

q4¶

Consider data with an outcome (Y) and a predictor (X). The standard deviation of the predictor is one half that of the outcome. The correlation between the two variables is .5. What value would the slope coefficient for the regression model with Y as the outcome and X as the predictor?

- $Cor(X, Y) = \frac{1}{2}$

- $2 \cdot S_d(X) = S_d(Y)$

- $\hat \beta_1 = Cor(Y, X) \dfrac{S_dY}{S_dX}$

- $\hat \beta_1 = \frac{1}{2} \dfrac{2S_dY}{S_dX} = \frac{1}{2}\cdot\frac{2}{1}$

q5¶

Students were given two hard tests and scores were normalized to have empirical mean 0 and variance 1. The correlation between the scores on the two tests was 0.4. What would be the expected score on Quiz 2 for a student who had a normalized score of 1.5 on Quiz 1?

$Cor(q1, q2) = 0.4 $

$ Z_{q1} = 1.5 $

1.5 *0.4 #???

0.6000000000000001

q6¶

x = [8.58, 10.46, 9.01, 9.64, 8.86]

s = pd.Series(x)

# normalized values

ns = (s - s.mean())/s.std()

ns

0 -0.971866 1 1.531022 2 -0.399397 3 0.439337 4 -0.599095 dtype: float64

ns.mean()

1.8873791418627661e-15

q7¶

df

| x | y | |

|---|---|---|

| 0 | 0.80 | 1.39 |

| 1 | 0.47 | 0.72 |

| 2 | 0.51 | 1.55 |

| 3 | 0.73 | 0.48 |

| 4 | 0.36 | 1.19 |

| 5 | 0.58 | -1.59 |

| 6 | 0.57 | 1.23 |

| 7 | 0.85 | -0.65 |

| 8 | 0.44 | 1.49 |

| 9 | 0.42 | 0.05 |

result = sm.ols("y ~ x", data=df).fit()

result.summary()

| Dep. Variable: | y | R-squared: | 0.076 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | -0.039 |

| Method: | Least Squares | F-statistic: | 0.6620 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 0.439 |

| Time: | 15:48:14 | Log-Likelihood: | -13.666 |

| No. Observations: | 10 | AIC: | 31.33 |

| Df Residuals: | 8 | BIC: | 31.94 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 1.5675 | 1.252 | 1.252 | 0.246 | -1.320 4.455 |

| x | -1.7128 | 2.105 | -0.814 | 0.439 | -6.568 3.142 |

| Omnibus: | 3.809 | Durbin-Watson: | 2.515 |

|---|---|---|---|

| Prob(Omnibus): | 0.149 | Jarque-Bera (JB): | 1.534 |

| Skew: | -0.956 | Prob(JB): | 0.464 |

| Kurtosis: | 3.158 | Cond. No. | 8.37 |

q9¶

What value minimizes the sum of the squared distances between these points and itself?

By definition: see this

df['x'].mean()

0.57299999999999995

q10¶

Consider taking the slope having fit Y as the outcome and X as the predictor, β1 and the slope from fitting X as the outcome and Y as the predictor, γ1, and dividing the two as β1/γ1. What is this ratio always equal to

df

| x | y | |

|---|---|---|

| 0 | 0.80 | 1.39 |

| 1 | 0.47 | 0.72 |

| 2 | 0.51 | 1.55 |

| 3 | 0.73 | 0.48 |

| 4 | 0.36 | 1.19 |

| 5 | 0.58 | -1.59 |

| 6 | 0.57 | 1.23 |

| 7 | 0.85 | -0.65 |

| 8 | 0.44 | 1.49 |

| 9 | 0.42 | 0.05 |

r1 = sm.ols("x~y", data=df).fit()

r2 = sm.ols("y~x", data=df).fit()

r2.params.x / r1.params.y

38.390772016849851

df['y'].var() / df['x'].var()

38.390772016849652

$\beta_1 = Cor(X, Y)\dfrac{S_y}{S_X}$

$\gamma_1 = Cor(X, Y)\dfrac{S_X}{S_Y}$

$\dfrac{\beta_1}{\gamma_1} = \dfrac{Cor(X, Y)\dfrac{S_y}{S_X}}{Cor(X, Y)\dfrac{S_X}{S_Y}}$

$\dfrac{S_Y}{S_X}\cdot\dfrac{S_Y}{S_X} = \dfrac{S_Y^2}{S_X^2} = \dfrac{Var(Y)}{Var(X)}$

Week 2¶

Statistical linear regression models¶

Basic regression model with additive Gaussian errors¶

- Least squares is an estimation tool, how do we do inference?

- Develop a probabilistic model for linear regression

- $\epsilon$ is the error

$Y_i = \beta_0 + \beta_1X_i + \epsilon_i$

slope intercept line with error term

Here the $\epsilon_i$ are assumed iid $N(0, \sigma^2)$

$ E[Y_i | X_i = x_i] = \mu_i = \beta_0 + \beta_1x_i $

The expected value of $Y_i$ given $x_i$ is the regression equation. You take the expected value of the equation above and the $E[\epsilon_i]=0$

$ Var(Y_i | X_i = x_i) = \sigma^2 $

Likelihood¶

- see the statistical inference class

- The least squares estimate for $\mu_i$ is exactly the maximum likelihood estimate

Recap¶

Interpreting regression coefficients¶

$\beta_0$ is the expected value of the response when the predictor is 0

$E[Y|X=0] = \beta_0 + \beta_1 \cdot 0 = \beta_0$

This isn't always interesting, when $X=0$ is impossible or far outside of the range of possible values

You can shift the data by $a$, which changes the intercept but not the slope. In this way you can make the intercept interesting

$\beta_1$ is the expected change in response for a 1 unit change in the predictor

Multiplication of $X$ by a factor of $a$ results in dividing the coefficient by a factor of $a$

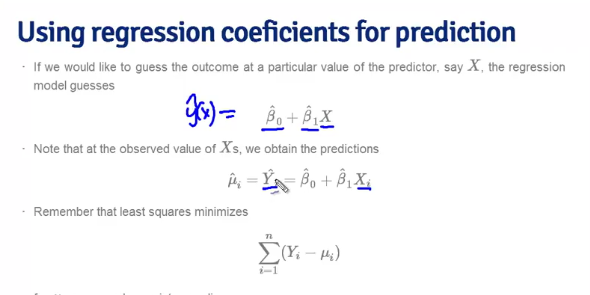

Using the regression coefficients for prediction¶

$\hat Y = \hat \beta_0 + \hat \beta_1X$

- Why are these $\hat \beta$ instead of just $\beta$?

- Because the $\beta$ values are just our estimations, specifically our least squares estimation

Statistical Regression Models Examples¶

%%R -o diamond

require(UsingR)

require(ggplot2)

data(diamond)

g <- ggplot(diamond, aes(carat, price)) + geom_point() + theme_bw()

g <- g + geom_smooth(method=lm, se=FALSE)

g

Loading required package: UsingR

Loading required package: MASS

Loading required package: HistData

Loading required package: Hmisc

Loading required package: grid

Loading required package: lattice

Loading required package: survival

Loading required package: splines

Loading required package: Formula

Attaching package: ‘Hmisc’

The following object is masked from ‘package:base’:

format.pval, round.POSIXt, trunc.POSIXt, units

Loading required package: quantreg

Loading required package: SparseM

Attaching package: ‘SparseM’

The following object is masked from ‘package:base’:

backsolve

Attaching package: ‘quantreg’

The following object is masked from ‘package:Hmisc’:

latex

The following object is masked from ‘package:survival’:

untangle.specials

Attaching package: ‘UsingR’

The following object is masked from ‘package:survival’:

cancer

The following object is masked from ‘package:ggplot2’:

movies

formula = "price ~ carat"

r1 = sm.ols(formula, data=diamond).fit()

r1.summary()

| Dep. Variable: | price | R-squared: | 0.978 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.978 |

| Method: | Least Squares | F-statistic: | 2070. |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 6.75e-40 |

| Time: | 15:48:16 | Log-Likelihood: | -233.20 |

| No. Observations: | 48 | AIC: | 470.4 |

| Df Residuals: | 46 | BIC: | 474.1 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | -259.6259 | 17.319 | -14.991 | 0.000 | -294.487 -224.765 |

| carat | 3721.0249 | 81.786 | 45.497 | 0.000 | 3556.398 3885.651 |

| Omnibus: | 0.739 | Durbin-Watson: | 1.994 |

|---|---|---|---|

| Prob(Omnibus): | 0.691 | Jarque-Bera (JB): | 0.181 |

| Skew: | 0.056 | Prob(JB): | 0.913 |

| Kurtosis: | 3.280 | Cond. No. | 18.5 |

# same formula, but subtracting out the carat mean to get a usable intercept values

f = "price ~ I(carat - carat.mean())"

r2 = sm.ols(f, data=diamond).fit()

# note that the slope is the same

print(r1.params)

print(r2.params)

print(diamond.price.mean())

Intercept -259.625907 carat 3721.024852 dtype: float64 Intercept 500.083333 I(carat - carat.mean()) 3721.024852 dtype: float64 500.083333333

$500 (the intercept) is the expected price for the average sized diamond of the data.

And the intercept is exactly the mean of the prices

diamond.price.mean()

500.08333333333331

Changing Scale¶

# A one carat increase in diamond is pretty big.

f = "price ~ I(carat *10)"

r3 = sm.ols(f, data=diamond).fit()

r3.params

Intercept -259.625907 I(carat * 10) 372.102485 dtype: float64

# And the slope is 1/coef we mutliplied the regressor by

df = pd.DataFrame([0.16, 0.27, 0.34], columns=['carat'])

df['price'] = r3.predict(df)

df

| carat | price | |

|---|---|---|

| 0 | 0.16 | 335.738069 |

| 1 | 0.27 | 745.050803 |

| 2 | 0.34 | 1005.522542 |

diamond['price_predict'] = r3.predict(diamond)

%%R -i diamond

g <- ggplot(diamond, aes(carat, price)) + geom_point(color='green')

g <- g + geom_line(data=diamond, aes(carat, price_predict), colour='blue', linetype=2)

g <- g + geom_point(data=diamond, aes(carat, price_predict), colour='red', shape=3)

g + theme_bw()

# green dots - actual observations

# blue dashed line - predictions

# red dashes - places where we made predictions. Note that these are the same x_i values where we have observed points

Residuals and residual variation¶

$\hat Y_i = \hat \beta_0 + \hat \beta_1X_i$

$e_i = Y_i - \hat Y_i$ which is the vertical distance between the observed data point and the regression line

$e_i \neq \epsilon_i$.

$e_i$ is the observed error, not the true error which we don't get to know

Least squares minimizes $\sum\limits_{i=1}^ne_i^2$

The $e_i$ can be thought of as the estimates of the $\epsilon_i$

Properties of the residuals¶

<img "src=images/properties_of_the_residuals.png"/>

- Useful for investigating poor model fit

- Residuals can be thought of as the outcome $Y$ with the linear association of the predictor $X$ removed

- residual variation is the variation after removing the systematic variation

- residual plots highlight poor model fit

%%R -o diamond

data(diamond)

y <- diamond$price

x <- diamond$carat

n <- length(y)

fit <- lm(y~x)

e <- resid(fit)

yhat <- predict(fit)

max(abs(e - (y-yhat)))

[1] 9.485746e-13

diamond

x = diamond.carat

y = diamond.price

results = sm.ols("price ~ carat", data=diamond).fit()

e = results.resid

yhat = results.predict()

df = pd.DataFrame([e, y - yhat]).T

df.columns = ['e', 'y - yhat']

df.head()

| e | y - yhat | |

|---|---|---|

| 0 | -17.948318 | -17.948318 |

| 1 | -7.738069 | -7.738069 |

| 2 | -22.948318 | -22.948318 |

| 3 | -85.158566 | -85.158566 |

| 4 | -28.630306 | -28.630306 |

(df.e - df['y - yhat']).abs().max()

0.0

from statsmodels.api import graphics

What we're looking for is a pattern in the residuals. A pattern indicates our model is missing something¶

See this for more details on plotting regressions in statsmodels

fig, ax = plt.subplots(figsize=(12, 8))

fig = graphics.plot_fit(results, "carat", ax=ax)

fig, ax = plt.subplots(figsize=(12, 8))

graphics.plot_regress_exog(results, "carat", fig);

# generated data set

from random import uniform, normalvariate, seed

from math import sin

seed(123)

x = [uniform(-3, 3) for _ in xrange(100)]

y = [_ + sin(_) + normalvariate(mu=100, sigma=.2) for _ in x]

df = pd.DataFrame([_ for _ in zip(x, y)], columns = ['x', 'y'])

df = df.sort('x')

plt.scatter(df.y, df.x);

results = sm.ols("y ~ x", data=df).fit()

# why does this show up twice??

# todo: look at the source and figure

fig, ax = plt.subplots(figsize=(12, 8))

graphics.plot_regress_exog(results, "x", fig);

plt.plot(df.x, results.resid, 'o');

Heteroskedasticity¶

- The model isn't right because the variance isn't constant as a function of $x$

x = [uniform(0, 6) for _ in xrange(100)]

df = pd.DataFrame(x, columns = ['x'])

ygen = lambda x: x + normalvariate(0, .001*x)

df['y'] = [ygen(x) for x in df.x]

df = df.sort('x')

plt.plot(df.x, df.y, 'o');

r_hetero = sm.ols("y~x", data=df).fit()

plt.plot(df.x, r_hetero.resid, 'o');

fig, ax = plt.subplots(figsize=(12, 8))

graphics.plot_regress_exog(r_hetero, "x", fig);

- Residuals highlight stuff you will not see in scatter plots

Estimating Residual Variation¶

- Model $Y_i = \beta_0 + \beta_1 X_i + \epsilon_i$ where $\epsilon_i \sim N(0, \sigma^2)$.

- The ML estimate of $\sigma^2$ is $\frac{1}{n}\sum_{i=1}^n e_i^2$, the average squared residual.

Most people use $$ \hat \sigma^2 = \frac{1}{n-2}\sum_{i=1}^n e_i^2. $$

The $n-2$ instead of $n$ is so that $E[\hat \sigma^2] = \sigma^2$

Diamond Example¶

diamond.head()

| carat | price | |

|---|---|---|

| 0 | 0.17 | 355 |

| 1 | 0.16 | 328 |

| 2 | 0.17 | 350 |

| 3 | 0.18 | 325 |

| 4 | 0.25 | 642 |

%%R

y <- diamond$price; x <- diamond$carat; n <- length(y)

fit <- lm(y ~ x)

summary(fit)$sigma

sqrt(sum(resid(fit)^2) / (n - 2))

[1] 31.84052

y = 'price'; x = 'carat'

fit = sm.ols('%s ~ %s' % (y, x, ), data=diamond).fit()

e = (fit.predict(diamond) - diamond.price)**2

- calculating:

from math import sqrt

sigma = sqrt((e.shape[0]-2)**-1 * e.sum())

sigma

31.840522265031762

# Normalized by N-1 by default. This can be changed using the ddof argument

sqrt(fit.resid.var(ddof=2))

31.840522265031762

Summarizing Variation¶

Or

Total Variation = Residual Variation + Regression Variation

Define the percent of total varation described by the model as $$ R^2 = \frac{\sum_{i=1}^n (\hat Y_i - \bar Y)^2}{\sum_{i=1}^n (Y_i - \bar Y)^2} = 1 - \frac{\sum_{i=1}^n (Y_i - \hat Y_i)^2}{\sum_{i=1}^n (Y_i - \bar Y)^2} $$

Key:¶

$Y_i$ - $i^{th}$ obseration of $Y$

$\hat Y_i$ - Predicted value of $i^{th} Y$

$n$ - number of observations

$\bar Y$ - Empirical mean of $Y$

Total Variation = $\sum_{i=1}^n (\bar Y - Y_i)^2$

Residual Variation = $\sum_{i=1}^n(\hat Y_i - Y_i)^2$

Regression Variation = $\sum_{i=1}^n (\hat Y_i - \bar Y)^2$

$R^2 = \dfrac{\text{Regression Variation}}{\text{Total Variation}} = 1 - \dfrac{\text{Residual Variation}}{\text{Total Variation}}$

Relation between $R^2$ and $r$ (the corrrelation)¶

Recall that $(\hat Y_i - \bar Y) = \hat \beta_1 (X_i - \bar X)$ so that

$$ R^2 = \frac{\sum_{i=1}^n (\hat Y_i - \bar Y)^2}{\sum_{i=1}^n (Y_i - \bar Y)^2} = \hat \beta_1^2 \frac{\sum_{i=1}^n(X_i - \bar X)^2}{\sum_{i=1}^n (Y_i - \bar Y)^2} = Cor(Y, X)^2 $$Since, recall, $$ \hat \beta_1 = Cor(Y, X)\frac{Sd(Y)}{Sd(X)} $$ So, $R^2$ is literally $r$ squared.

from __future__ import print_function

"""

Edward Tufte uses this example from Anscombe to show 4 datasets of x

and y that have the same mean, standard deviation, and regression

line, but which are qualitatively different.

matplotlib fun for a rainy day

"""

from pylab import *

x = array([10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5])

y1 = array([8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68])

y2 = array([9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74])

y3 = array([7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73])

x4 = array([8,8,8,8,8,8,8,19,8,8,8])

y4 = array([6.58,5.76,7.71,8.84,8.47,7.04,5.25,12.50,5.56,7.91,6.89])

def fit(x):

return 3+0.5*x

xfit = array( [amin(x), amax(x) ] )

subplot(221)

plot(x,y1,'ks', xfit, fit(xfit), 'r-', lw=2)

axis([2,20,2,14])

setp(gca(), xticklabels=[], yticks=(4,8,12), xticks=(0,10,20))

text(3,12, 'I', fontsize=20)

subplot(222)

plot(x,y2,'ks', xfit, fit(xfit), 'r-', lw=2)

axis([2,20,2,14])

setp(gca(), xticklabels=[], yticks=(4,8,12), yticklabels=[], xticks=(0,10,20))

text(3,12, 'II', fontsize=20)

subplot(223)

plot(x,y3,'ks', xfit, fit(xfit), 'r-', lw=2)

axis([2,20,2,14])

text(3,12, 'III', fontsize=20)

setp(gca(), yticks=(4,8,12), xticks=(0,10,20))

subplot(224)

xfit = array([amin(x4),amax(x4)])

plot(x4,y4,'ks', xfit, fit(xfit), 'r-', lw=2)

axis([2,20,2,14])

setp(gca(), yticklabels=[], yticks=(4,8,12), xticks=(0,10,20))

text(3,12, 'IV', fontsize=20)

#verify the stats

pairs = (x,y1), (x,y2), (x,y3), (x4,y4)

for x,y in pairs:

print ('mean=%1.2f, std=%1.2f, r=%1.2f'%(mean(y), std(y), corrcoef(x,y)[0][1]))

show()

mean=7.50, std=1.94, r=0.82 mean=7.50, std=1.94, r=0.82 mean=7.50, std=1.94, r=0.82 mean=7.50, std=1.94, r=0.82

So that's the bad $R^2$, what about the residual plots¶

x = array([10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5])

y1 = array([8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68])

y2 = array([9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74])

y3 = array([7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73])

x4 = array([8,8,8,8,8,8,8,19,8,8,8])

y4 = array([6.58,5.76,7.71,8.84,8.47,7.04,5.25,12.50,5.56,7.91,6.89])

df = pd.DataFrame([x, x4, y1, y2, y3, y4]).T

df.columns = ['x', 'x4', 'y1', 'y2', 'y3', 'y4']

def regress(x, y, data):

formula = '%s ~ %s' % (y, x, )

return sm.ols(formula, data=data).fit()

subplot(221)

plt.plot(df.x, regress('x', 'y1', df).resid, 'ro');

plt.title('I');

subplot(222)

plt.plot(df.x, regress('x', 'y2', df).resid, 'ro');

plt.title('II');

subplot(223)

plt.plot(df.x, regress('x', 'y3', df).resid, 'ro');

plt.title('III');

subplot(224)

plt.plot(df.x, regress('x4', 'y4', df).resid, 'ro');

plt.title('IV');

** and the residuals are fucked up too **

Inference in Regression¶

Consider the model $$ Y_i = \beta_0 + \beta_1 X_i + \epsilon_i $$

$\epsilon \sim N(0, \sigma^2)$.

We assume that the true model is known.

$\hat \beta_0 = \bar Y - \hat \beta_1 \bar X $

$\hat \beta_1 = Cor(X, Y) \cdot \dfrac{Sd(Y)}{Sd(X)} $

Not sure if this last equation should be $\beta_1$ or $\hat \beta_1$

$\hat \beta_1= \dfrac{\sum(X_i - \bar X)(Y_i - \bar Y)}{\sum(X_i - \bar X)^2}$

Tests for Regression Coefficients¶

Review¶

- Statistics like $\frac{\hat \theta - \theta}{\hat \sigma_{\hat \theta}}$ often have the following properties.

- Is normally distributed and has a finite sample Student's T distribution if the estimated variance is replaced with a sample estimate (under normality assumptions).

- Can be used to test $H_0 : \theta = \theta_0$ versus $H_a : \theta >, <, \neq \theta_0$.

- Can be used to create a confidence interval for $\theta$ via $\hat \theta \pm Q_{1-\alpha/2} \hat \sigma_{\hat \theta}$

- In the case of regression with iid sampling assumptions and normal errors, our inferences will follow

very similarily to what you saw in your inference class.

- We won't cover asymptotics for regression analysis, but suffice it to say that under assumptions

on the ways in which the $X$ values are collected, the iid sampling model, and mean model, the normal results hold to create intervals and confidence intervals

Standard errors (conditioned on X)¶

$$ \begin{align} Var(\hat \beta_1) & = Var\left(\frac{\sum_{i=1}^n (Y_i - \bar Y) (X_i - \bar X)}{\sum_{i=1}^n (X_i - \bar X)^2}\right) \\ & = \frac{Var\left(\sum_{i=1}^n Y_i (X_i - \bar X) \right) }{\left(\sum_{i=1}^n (X_i - \bar X)^2 \right)^2} \\ & = \frac{\sum_{i=1}^n \sigma^2(X_i - \bar X)^2}{\left(\sum_{i=1}^n (X_i - \bar X)^2 \right)^2} \\ & = \frac{\sigma^2}{\sum_{i=1}^n (X_i - \bar X)^2} \\ \end{align} $$Results¶

- $\sigma_{\hat \beta_1}^2 = Var(\hat \beta_1) = \sigma^2 / \sum_{i=1}^n (X_i - \bar X)^2$

- $\sigma_{\hat \beta_0}^2 = Var(\hat \beta_0) = \left(\frac{1}{n} + \frac{\bar X^2}{\sum_{i=1}^n (X_i - \bar X)^2 }\right)\sigma^2$

- In practice, $\sigma$ is replaced by its estimate.

- It's probably not surprising that under iid Gaussian errors

follows a $t$ distribution with $n-2$ degrees of freedom and a normal distribution for large $n$.

- This can be used to create confidence intervals and perform

hypothesis tests.

%%R -o diamond

library(UsingR); data(diamond)

y <- diamond$price; x <- diamond$carat; n <- length(y)

beta1 <- cor(y, x) * sd(y) / sd(x)

beta0 <- mean(y) - beta1 * mean(x)

e <- y - beta0 - beta1 * x

sigma <- sqrt(sum(e^2) / (n-2))

ssx <- sum((x - mean(x))^2)

seBeta0 <- (1 / n + mean(x) ^ 2 / ssx) ^ .5 * sigma

seBeta1 <- sigma / sqrt(ssx)

tBeta0 <- beta0 / seBeta0;

tBeta1 <- beta1 / seBeta1

pBeta0 <- 2 * pt(abs(tBeta0), df = n - 2, lower.tail = FALSE)

pBeta1 <- 2 * pt(abs(tBeta1), df = n - 2, lower.tail = FALSE)

coefTable <- rbind(c(beta0, seBeta0, tBeta0, pBeta0), c(beta1, seBeta1, tBeta1, pBeta1))

colnames(coefTable) <- c("Estimate", "Std. Error", "t value", "P(>|t|)")

rownames(coefTable) <- c("(Intercept)", "x")

coefTable

Estimate Std. Error t value P(>|t|) (Intercept) -259.6259 17.31886 -14.99094 2.523271e-19 x 3721.0249 81.78588 45.49715 6.751260e-40

import scipy.stats as st

n = 46

st.t.pdf(abs(1), df = n - 2)

0.23924692073493825

x = diamond['carat']

y = diamond['price']

n = float(len(y))

beta1 = y.corr(x) * y.std() / x.std() # regression slope

beta0 = y.mean() - beta1 * x.mean() # regression intercept

e = y - (beta1 * x + beta0) # errors

sigma = ((e**2).sum()/(n - 2))**0.5 # unbiased residual mean

ssx = ((x - x.mean())**2).sum() # sum of squared Xs

seBeta0 = (1/n + x.mean()**2 / ssx)**0.5 * sigma

seBeta1 = sigma / ssx**0.5

tBeta0 = beta0 / seBeta0

tBeta1 = beta1 / seBeta1

# TODO: review hypothesis testing

pBeta0 = 2 * (1-st.t.cdf(abs(tBeta0), df = n - 2))

pBeta1 = 2 * (1-st.t.cdf(abs(tBeta1), df = n - 2))

l = [[beta0, seBeta0, tBeta0, pBeta0],

[beta1, seBeta1, tBeta1, pBeta1]]

coefTable = pd.DataFrame((_ for _ in l),

columns = ["Estimate", "Std. Error", "t value", "P(>|t|)"],

index = ["(Intercept)", "x"])

coefTable

| Estimate | Std. Error | t value | P(>|t|) | |

|---|---|---|---|---|

| (Intercept) | -259.625907 | 17.318856 | -14.990938 | 0 |

| x | 3721.024852 | 81.785880 | 45.497155 | 0 |

fit = sm.ols("price ~ carat", data=diamond).fit()

fit.summary()

| Dep. Variable: | price | R-squared: | 0.978 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.978 |

| Method: | Least Squares | F-statistic: | 2070. |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 6.75e-40 |

| Time: | 15:48:23 | Log-Likelihood: | -233.20 |

| No. Observations: | 48 | AIC: | 470.4 |

| Df Residuals: | 46 | BIC: | 474.1 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | -259.6259 | 17.319 | -14.991 | 0.000 | -294.487 -224.765 |

| carat | 3721.0249 | 81.786 | 45.497 | 0.000 | 3556.398 3885.651 |

| Omnibus: | 0.739 | Durbin-Watson: | 1.994 |

|---|---|---|---|

| Prob(Omnibus): | 0.691 | Jarque-Bera (JB): | 0.181 |

| Skew: | 0.056 | Prob(JB): | 0.913 |

| Kurtosis: | 3.280 | Cond. No. | 18.5 |

%%R

data(diamond)

fit <- lm("price ~ carat", data=diamond)

summary(fit)

Call:

lm(formula = "price ~ carat", data = diamond)

Residuals:

Min 1Q Median 3Q Max

-85.159 -21.448 -0.869 18.972 79.370

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) -259.63 17.32 -14.99 <2e-16 ***

carat 3721.02 81.79 45.50 <2e-16 ***

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual standard error: 31.84 on 46 degrees of freedom

Multiple R-squared: 0.9783, Adjusted R-squared: 0.9778

F-statistic: 2070 on 1 and 46 DF, p-value: < 2.2e-16

Interlude to find Python equivalant of R's:¶

pt(abs(tBeta1), df = n - 2, lower.tail = FALSE)

print ('\n'.join(st.t.pdf.__doc__.split('\n')[:100]))

Probability density function at x of the given RV.

Parameters

----------

x : array_like

quantiles

arg1, arg2, arg3,... : array_like

The shape parameter(s) for the distribution (see docstring of the

instance object for more information)

loc : array_like, optional

location parameter (default=0)

scale : array_like, optional

scale parameter (default=1)

Returns

-------

pdf : ndarray

Probability density function evaluated at x

import numpy as np

x = np.arange(-3, 3, .01)

ypdf = [st.t.pdf(_, n - 2) for _ in x]

ycdf = [1 - st.t.cdf(_, n - 2) for _ in x]

f, axarr = plt.subplots(2, sharex=True)

axarr[0].plot(x, ypdf)

axarr[0].set_title('PDF')

axarr[1].plot(x, ycdf)

axarr[1].set_title('CDF');

%%R -o dfR

# test to figure out wtf the pt function is doing

require(ggplot2)

n <- 48

x <- seq(-3, 3, by=.01)

y <- pt(x, df = n - 2, lower.tail = FALSE)

df1 <- data.frame(x, y, 'false')

names(df1) <- c('x', 'y', 'tail')

y <- pt(x, df = n - 2, lower.tail = TRUE)

df2 <- data.frame(x, y, 'true')

names(df2) <- c('x', 'y', 'tail')

dfR <- rbind(df1, df2)

ggplot(dfR, aes(x, y, colour=tail)) + geom_line() + theme_bw() + ggtitle("Effect of lower.tail parameter in `pt` function")

So the pt function creates a cdf

dfR = dfR[dfR['tail'] == 'false']

f, axarr = plt.subplots(2, sharex=True)

axarr[0].plot(dfR['x'], dfR['y'])

axarr[0].set_title('R CDF')

axarr[1].plot(x, ycdf)

axarr[1].set_title('Python CDF');

End Interlude¶

Getting a confidence interval¶

%%R

sumCoef <- summary(fit)$coefficients

sumCoef

Estimate Std. Error t value Pr(>|t|) (Intercept) -259.6259 17.31886 -14.99094 2.523271e-19 carat 3721.0249 81.78588 45.49715 6.751260e-40

%%R

sumCoef <- summary(fit)$coefficients

sumCoef[1,1] + c(-1, 1) * qt(.975, df = fit$df) * sumCoef[1, 2]

[1] -294.4870 -224.7649

%%R

sumCoef[2,1] + c(-1, 1) * qt(.975, df = fit$df) * sumCoef[2, 2]

[1] 3556.398 3885.651

coefTable['Estimate']['x'] + pd.Series([-1, 1]) * coefTable['Std. Error']['x'] * st.t.ppf(.975, fit.df_resid)

0 3556.398413 1 3885.651290 dtype: float64

coefTable['Estimate']['(Intercept)'] + pd.Series([-1, 1]) * coefTable['Std. Error']['(Intercept)'] * st.t.ppf(.975, fit.df_resid)

0 -294.486957 1 -224.764858 dtype: float64

WTF are these different R t-distribution functions doing

%%R

require(reshape)

require(ggplot2)

n <- 3

by <- 0.001

x <- seq(-n, n, by)

qt <- qt(x, df = 30)

pt <- pt(x, df=30)

dt <- dt(x, df=30)

rt <- rt(x, df=30)

df <- data.frame(x, qt, pt, dt, rt)

names(df) <- c('x', 'qt\ndensity', 'pt\ndistribution', 'dt\nquantile', 'rt\nrandom')

mdf <- melt.data.frame(df, id = c('x'))

g <- ggplot(mdf, aes(x, value, colour=variable)) + geom_point(alpha=0.1)

g <- g + facet_wrap(~variable, scale='free_y') + theme_bw()

g <- g + ggtitle("R Functions for T-Distribution") + theme(legend.position="none")

g <- g + ylab('f(x)')

g

Loading required package: reshape

Loading required package: plyr

Attaching package: ‘plyr’

The following object is masked from ‘package:Hmisc’:

is.discrete, summarize

Attaching package: ‘reshape’

The following object is masked from ‘package:plyr’:

rename, round_any

ptandqtare the opposite of eachother.- The input of

ptis a $z$ score and you get back the $P(z>X)$ - The input of

qtis some $p, p\in [0, 1]$. You get back a $z$ such that $P(z>X) = p$

x = np.arange(-3, 3, .001)

dt = st.t.pdf(x, 30)

qt = st.t.ppf(x, 30)

pt = st.t.cdf(x, 30)

f, axarr = plt.subplots(3, sharex=True)

axarr[0].plot(x, dt)

axarr[0].set_title('Python st.t.pdf = R dt')

axarr[1].plot(x, qt)

axarr[1].set_title('Python st.t.ppf = R qt')

axarr[2].plot(x, pt)

axarr[2].set_title('Python st.t.cdf = R pt');

st.t.ppf(.975, 46)

2.0128955952945886

st.norm.ppf(.975)

1.959963984540054

%%R

qt(.975, df = fit$df)

[1] 2.012896

Prediction of Outcomes¶

- Consider predicting $Y$ at a value of $X$

- Predicting the price of a diamond given the carat

- Predicting the height of a child given the height of the parents

- The obvious estimate for prediction at point $x_0$ is

- A standard error is needed to create a prediction interval.

- There's a distinction between intervals for the regression line at point $x_0$ and the prediction of what a $y$ would be at point $x_0$.

- Line at $x_0$ se, $\hat \sigma\sqrt{\frac{1}{n} + \frac{(x_0 - \bar X)^2}{\sum_{i=1}^n (X_i - \bar X)^2}}$

- Prediction interval se at $x_0$, $\hat \sigma\sqrt{1 + \frac{1}{n} + \frac{(x_0 - \bar X)^2}{\sum_{i=1}^n (X_i - \bar X)^2}}$

Confidence Intervals¶

$\theta$ via $\hat \theta \pm Q_{1-\alpha/2} \hat \sigma_{\hat \theta}$

Plotting the prediction intervals¶

%%R

data(diamond)

x <- diamond$carat; y <- diamond$price

plot(x, y, frame=FALSE,xlab="Carat",ylab="Dollars",pch=21,col="black", bg="lightblue", cex=2)

abline(fit, lwd = 2)

xVals <- seq(min(x), max(x), by = .01)

yVals <- beta0 + beta1 * xVals

se1 <- sigma * sqrt(1 / n + (xVals - mean(x))^2/ssx) # orange

se2 <- sigma * sqrt(1 + 1 / n + (xVals - mean(x))^2/ssx) # blue

lines(xVals, yVals + 2 * se1, col='orange')

lines(xVals, yVals - 2 * se1, col='orange')

lines(xVals, yVals + 2 * se2, col='blue')

lines(xVals, yVals - 2 * se2, col='blue')

black line = OLS regression line

orange lines = standard error line

blue lines = standard error prediction interval

Discussion¶

- Both intervals have varying widths.

- Least width at the mean of the Xs.

- We are quite confident in the regression line, so that

interval is very narrow.

- If we knew $\beta_0$ and $\beta_1$ this interval would have zero width.

- The prediction interval must incorporate the variabilibity

in the data around the line.

- Even if we knew $\beta_0$ and $\beta_1$ this interval would still have width.

ok... I'm taking this one on faith

Quiz 2¶

q1¶

Consider the following data with x as the predictor and y as as the outcome.

Give a P-value for the two sided hypothesis test of whether β1 from a linear regression model is 0 or not.

x = [0.61, 0.93, 0.83, 0.35, 0.54, 0.16, 0.91, 0.62, 0.62]

y = [0.67, 0.84, 0.6, 0.18, 0.85, 0.47, 1.1, 0.65, 0.36]

df = pd.DataFrame(zip(x, y), columns = ['x', 'y'])

$\beta_1 = Cor(x, y) \cdot \dfrac{Sd_y}{Sd_x}$

$\sigma_{\hat \beta_1}^2 = Var(\hat \beta_1) = \dfrac{\sigma^2}{\sum_{i=1}^n (X_i - \bar X)^2}$

$ \hat \sigma^2 = \frac{1}{n-2}\sum_{i=1}^n e_i^2 $

$ ssx = {\sum_{i=1}^n (X_i - \bar X)^2}$ (convenience value)

$\hat \beta_0 = \bar Y - \hat \beta_1 \bar X $

$ p = \dfrac{\mu - \mu:H_o}{\sigma}$

from math import sqrt

x = df['x']

y = df['y']

n = len(x)

beta1 = y.corr(x) * (y.std() / x.std())

beta0 = y.mean() - beta1 * x.mean()

ssx = ((x - mean(x))**2).sum()

yhat = beta0 + beta1 * x

e = y - yhat

sigma = sqrt(1.0/(n-2) * (e**2).sum())

se_beta1 = sqrt((sigma**2 / ssx))

p = beta1 / se_beta1

p

2.3254912115169395

results = sm.ols('y~x', data=df).fit().summary()

results

/Users/idris/.virtualenvs/regres/lib/python2.7/site-packages/scipy/stats/stats.py:1205: UserWarning: kurtosistest only valid for n>=20 ... continuing anyway, n=9 int(n))

| Dep. Variable: | y | R-squared: | 0.436 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.355 |

| Method: | Least Squares | F-statistic: | 5.408 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 0.0530 |

| Time: | 15:48:27 | Log-Likelihood: | 1.8658 |

| No. Observations: | 9 | AIC: | 0.2684 |

| Df Residuals: | 7 | BIC: | 0.6629 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 0.1885 | 0.206 | 0.914 | 0.391 | -0.299 0.676 |

| x | 0.7224 | 0.311 | 2.325 | 0.053 | -0.012 1.457 |

| Omnibus: | 1.435 | Durbin-Watson: | 1.384 |

|---|---|---|---|

| Prob(Omnibus): | 0.488 | Jarque-Bera (JB): | 0.698 |

| Skew: | -0.067 | Prob(JB): | 0.705 |

| Kurtosis: | 1.642 | Cond. No. | 5.85 |

q2¶

Consider the previous problem, give the estimate of the residual standard deviation.

sigma

0.2229980659078784

q3¶

In the mtcars data set, fit a linear regression model of weight (predictor) on mpg (outcome). Get a 95% confidence interval for the expected mpg at the average weight. What is the lower endpoint?

%%R -o mtcars

data(mtcars)

r = sm.ols('mpg ~ I(wt - wt.mean())', data=mtcars).fit()

alpha = 0.05

20.0906 + pd.Series([-1, 1]) * st.t.ppf(1 - alpha/2, 30) * .538

0 18.991857 1 21.189343 dtype: float64

t = r.summary().tables[1]

print (t.as_text())

=====================================================================================

coef std err t P>|t| [95.0% Conf. Int.]

-------------------------------------------------------------------------------------

Intercept 20.0906 0.538 37.313 0.000 18.991 21.190

I(wt - wt.mean()) -5.3445 0.559 -9.559 0.000 -6.486 -4.203

=====================================================================================

(20.0906 - 18.991) / .538

2.043866171003715

q4¶

Consider again the mtcars data set and a linear regression model with mpg as predicted by weight (1,000 lbs). A new car is coming weighing 3000 pounds. Construct a 95% prediction interval for its mpg. What is the upper endpoint?

- Prediction interval se at $x_0$,

$\hat \sigma\sqrt{1 + \frac{1}{n} + \frac{(x_0 - \bar X)^2}{\sum_{i=1}^n (X_i - \bar X)^2}}$

%%R

data(diamond)

x <- diamond$carat; y <- diamond$price

xVals <- seq(min(x), max(x), by = .01)

yVals <- beta0 + beta1 * xVals

se1 <- sigma * sqrt(1 / n + (xVals - mean(x))^2/ssx) # orange

se2 <- sigma * sqrt(1 + 1 / n + (xVals - mean(x))^2/ssx) # blue

x = mtcars['wt']

y = mtcars['mpg']

n = len(x)

ssx = (1.0/n) * ((x- x.mean())**2).sum()

e = (r.resid - x)

sigma = sqrt(1.0/(n-2) * (e**2).sum())

xVals = 3

se = sigma * sqrt(1 + 1 / n + (xVals - mean(x))**2/ssx)

se

4.731991939031135

(3 - mtcars.wt.mean()) * r.params[1] + r.params[0] + pd.Series([-1, 1]) * se * st.norm.ppf(.975)

0 11.977178 1 30.526245 dtype: float64

q6¶

Consider again the mtcars data set and a linear regression model with mpg as predicted by weight (in 1,000 lbs). A “short” ton is defined as 2,000 lbs. Construct a 95% confidence interval for the expected change in mpg per 1 short ton increase in weight. Give the lower endpoint.

sm.ols('mpg ~ I(wt/2)', data=mtcars).fit().summary()

| Dep. Variable: | mpg | R-squared: | 0.753 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.745 |

| Method: | Least Squares | F-statistic: | 91.38 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 1.29e-10 |

| Time: | 15:48:27 | Log-Likelihood: | -80.015 |

| No. Observations: | 32 | AIC: | 164.0 |

| Df Residuals: | 30 | BIC: | 167.0 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 37.2851 | 1.878 | 19.858 | 0.000 | 33.450 41.120 |

| I(wt / 2) | -10.6889 | 1.118 | -9.559 | 0.000 | -12.973 -8.405 |

| Omnibus: | 2.988 | Durbin-Watson: | 1.252 |

|---|---|---|---|

| Prob(Omnibus): | 0.225 | Jarque-Bera (JB): | 2.399 |

| Skew: | 0.668 | Prob(JB): | 0.301 |

| Kurtosis: | 2.877 | Cond. No. | 7.80 |

q7¶

r.resid.sum()

-2.9132252166164108e-13

Multivariate Regressions¶

- The general linear model extends simple linear regression (SLR)

by adding terms linearly into the model. $$ Y_i = \beta_1 X_{1i} + \beta_2 X_{2i} + \ldots + \beta_{p} X_{pi} + \epsilon_{i} = \sum_{k=1}^p X_{ik} \beta_j + \epsilon_{i} $$

- Here $X_{1i}=1$ typically, so that an intercept is included.

- Least squares (and hence ML estimates under iid Gaussianity

of the errors) minimizes $$ \sum_{i=1}^n \left(Y_i - \sum_{k=1}^p X_{ki} \beta_j\right)^2 $$

- Note, the important linearity is linearity in the coefficients.

Thus $$ Y_i = \beta_1 X_{1i}^2 + \beta_2 X_{2i}^2 + \ldots + \beta_{p} X_{pi}^2 + \epsilon_{i} $$ is still a linear model. (We've just squared the elements of the predictor variables.)

How to get estimates¶

The real way requires linear algebra... ( and gradient descent for data too expensive to inverse)

So skipping a bunch of math,

We get that $$ \hat \beta_1 = \frac{\sum_{i=1}^n e_{i, Y | X_2} e_{i, X_1 | X_2}}{\sum_{i=1}^n e_{i, X_1 | X_2}^2} $$

- That is, the regression estimate for $\beta_1$ is the regression

through the origin estimate having regressed $X_2$ out of both the response and the predictor.

- (Similarly, the regression estimate for $\beta_2$ is the regression through the origin estimate having regressed $X_1$ out of both the response and the predictor.)

- More generally, multivariate regression estimates are exactly those having removed the linear relationship of the other variables

from both the regressor and response.

Multivariable regression examples¶

Swiss fertility data¶

%%R

library(datasets); data(swiss); require(stats); require(graphics)

pairs(swiss, panel = panel.smooth, main = "Swiss data", col = 3 + (swiss$Catholic > 50))

%%R -o swiss -w 960 -h 960

require(GGally)

library(datasets);

data(swiss);

theme_set(theme_bw())

swiss$cath_majority = factor(swiss$Catholic >= 50)

ggpairs(swiss,

lower = list(continuous = "smooth"),

colour = "cath_majority"

)

Loading required package: GGally

_ = pd.scatter_matrix(swiss, figsize=(12,8), diagonal='kde');

try:

del swiss['cath_majority']

except KeyError:

pass

swiss.columns = [_.replace('.', '_') for _ in swiss.columns]

y = 'Fertility'

x = "+".join(swiss.columns - [y])

formula = '%s ~ %s' % (y, x)

results1 = sm.ols(formula, data=swiss).fit()

results1.summary()

| Dep. Variable: | Fertility | R-squared: | 0.707 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.671 |

| Method: | Least Squares | F-statistic: | 19.76 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 5.59e-10 |

| Time: | 15:48:45 | Log-Likelihood: | -156.04 |

| No. Observations: | 47 | AIC: | 324.1 |

| Df Residuals: | 41 | BIC: | 335.2 |

| Df Model: | 5 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 66.9152 | 10.706 | 6.250 | 0.000 | 45.294 88.536 |

| Agriculture | -0.1721 | 0.070 | -2.448 | 0.019 | -0.314 -0.030 |

| Catholic | 0.1041 | 0.035 | 2.953 | 0.005 | 0.033 0.175 |

| Education | -0.8709 | 0.183 | -4.758 | 0.000 | -1.241 -0.501 |

| Examination | -0.2580 | 0.254 | -1.016 | 0.315 | -0.771 0.255 |

| Infant_Mortality | 1.0770 | 0.382 | 2.822 | 0.007 | 0.306 1.848 |

| Omnibus: | 0.058 | Durbin-Watson: | 1.454 |

|---|---|---|---|

| Prob(Omnibus): | 0.971 | Jarque-Bera (JB): | 0.155 |

| Skew: | -0.077 | Prob(JB): | 0.925 |

| Kurtosis: | 2.764 | Cond. No. | 807. |

We estimate an expected 0.17 decrease in standardized fertility for every 1% increase in percentage of males involved in agriculture in holding the remaining variables constant.

x = 'Agriculture'

y = 'Fertility'

results = sm.ols('%s ~ %s' % (y, x, ), data=swiss).fit()

results.summary()

| Dep. Variable: | Fertility | R-squared: | 0.125 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.105 |

| Method: | Least Squares | F-statistic: | 6.409 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 0.0149 |

| Time: | 15:48:45 | Log-Likelihood: | -181.73 |

| No. Observations: | 47 | AIC: | 367.5 |

| Df Residuals: | 45 | BIC: | 371.2 |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 60.3044 | 4.251 | 14.185 | 0.000 | 51.742 68.867 |

| Agriculture | 0.1942 | 0.077 | 2.532 | 0.015 | 0.040 0.349 |

| Omnibus: | 0.295 | Durbin-Watson: | 0.646 |

|---|---|---|---|

| Prob(Omnibus): | 0.863 | Jarque-Bera (JB): | 0.481 |

| Skew: | -0.095 | Prob(JB): | 0.786 |

| Kurtosis: | 2.542 | Cond. No. | 137. |

Simulation¶

How can adjustment reverse the sign of an effect? Let's try a simulation.

%%R -w 300 -h 300 -o df_simul

require(ggplot2)

require(GGally)

n <- 100;

x2 <- 1 : n;

x1 <- .01 * x2 + runif(n, -.1, .1);

y = -x1 + x2 + rnorm(n, sd = .01)

df_simul <- data.frame(x1, x2, y)

theme_set(theme_bw())

(ggpairs(df_simul))

r1 = sm.ols('y ~ x1', data=df_simul).fit()

r2 = sm.ols('y ~ x1 + x2', data=df_simul).fit()

r1.params, r2.params

(Intercept 3.187722 x1 93.551646 dtype: float64, Intercept 0.000236 x1 -0.966914 x2 0.999680 dtype: float64)

graphics.plot_regress_exog(r1, 'x1');

graphics.plot_regress_exog(r2, "x1");

x1 = df_simul['x1']

x2 = df_simul['x2']

y = df_simul['y']

f, axarr = plt.subplots(2, sharex=True)

axarr[0].plot(x1, y)

axarr[0].set_title('x1')

axarr[1].plot(x2, y)

axarr[1].set_title('x2');

r1 = sm.ols('x1 ~ x2', data=df_simul).fit()

r2 = sm.ols('y ~ x2', data=df_simul).fit()

# Here we factor out the effect of x2

# Not really sure what the point of is this

plt.plot(r1.resid, r2.resid, 'o');

So ultimately, if we use 'fertilitiy ~ agriculture' vs 'fertility ~ .', we get different signs for agriculture. So what is the better model? Well you can learn a lot more about this and learn Causal Inference, or you can ask 'how will people attack this model' and adjust accordingly

What if we include an unnecessary variable?¶

%%R -o swiss

data(swiss)

swiss.columns = [_.replace('.', '_') for _ in swiss.columns]

swiss['z'] = swiss['Agriculture'] + swiss['Education']

y = 'Fertility'

x = "+".join(swiss.columns - [y])

formula = '%s ~ %s' % (y, x)

swiss.head()

x

'Agriculture+Catholic+Education+Examination+Infant_Mortality+z'

r = sm.ols('%s ~ %s' % (y, x), data=swiss).fit();

# wait, this doesn't work. sm.ols doesn't pick up that Z is no good

r.summary()

| Dep. Variable: | Fertility | R-squared: | 0.707 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.671 |

| Method: | Least Squares | F-statistic: | 19.76 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 5.59e-10 |

| Time: | 15:48:49 | Log-Likelihood: | -156.04 |

| No. Observations: | 47 | AIC: | 324.1 |

| Df Residuals: | 41 | BIC: | 335.2 |

| Df Model: | 5 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 66.9152 | 10.706 | 6.250 | 0.000 | 45.294 88.536 |

| Agriculture | 0.1756 | 0.062 | 2.852 | 0.007 | 0.051 0.300 |

| Catholic | 0.1041 | 0.035 | 2.953 | 0.005 | 0.033 0.175 |

| Education | -0.5233 | 0.115 | -4.536 | 0.000 | -0.756 -0.290 |

| Examination | -0.2580 | 0.254 | -1.016 | 0.315 | -0.771 0.255 |

| Infant_Mortality | 1.0770 | 0.382 | 2.822 | 0.007 | 0.306 1.848 |

| z | -0.3477 | 0.073 | -4.760 | 0.000 | -0.495 -0.200 |

| Omnibus: | 0.058 | Durbin-Watson: | 1.454 |

|---|---|---|---|

| Prob(Omnibus): | 0.971 | Jarque-Bera (JB): | 0.155 |

| Skew: | -0.077 | Prob(JB): | 0.925 |

| Kurtosis: | 2.764 | Cond. No. | 1.11e+08 |

%%R

# R does pick up that Z is no good. Note that the agriculture value is different

# Post question about this

data(swiss)

z<-swiss$Agriculture+swiss$Education

lm(Fertility~.+z,data=swiss)

Call:

lm(formula = Fertility ~ . + z, data = swiss)

Coefficients:

(Intercept) Agriculture Examination Education Catholic

66.9152 -0.1721 -0.2580 -0.8709 0.1041

Infant.Mortality z

1.0770 NA

Dummy Variables¶

Dummy variables are smart¶

- Consider the linear model

where each $X_{i1}$ is binary so that it is a 1 if measurement $i$ is in a group and 0 otherwise. (Treated versus not in a clinical trial, for example.)

- Then for people in the group $E[Y_i] = \beta_0 + \beta_1$

- And for people not in the group $E[Y_i] = \beta_0$

- The LS fits work out to be $\hat \beta_0 + \hat \beta_1$ is the mean for those in the group and $\hat \beta_0$ is the mean for those not in the group.

- $\beta_1$ is interpretted as the increase or decrease in the mean comparing those in the group to those not.

- Note including a binary variable that is 1 for those not in the group would be redundant. It would create three parameters to describe two means.

More than 2 levels¶

- Consider a multilevel factor level. For didactic reasons, let's say a three level factor (example, US political party affiliation: Republican, Democrat, Independent)

- $Y_i = \beta_0 + X_{i1} \beta_1 + X_{i2} \beta_2 + \epsilon_i$.

- $X_{i1}$ is 1 for Republicans and 0 otherwise.

- $X_{i2}$ is 1 for Democrats and 0 otherwise.

- If $i$ is Republican $E[Y_i] = \beta_0 +\beta_1$

- If $i$ is Democrat $E[Y_i] = \beta_0 + \beta_2$.

- If $i$ is Independent $E[Y_i] = \beta_0$.

- $\beta_1$ compares Republicans to Independents.

- $\beta_2$ compares Democrats to Independents.

- $\beta_1 - \beta_2$ compares Republicans to Democrats.

- (Choice of reference category changes the interpretation.)

%%R -o InsectSprays

require(ggplot)

g <- ggplot(InsectSprays, aes(spray, count)) + geom_boxplot()

#summary(lm(count ~ spray, data = InsectSprays))$coef

print(g)

summary(lm(count ~ spray, data = InsectSprays))$coef

Loading required package: ggplot

Estimate Std. Error t value Pr(>|t|)

(Intercept) 14.5000000 1.132156 12.8074279 1.470512e-19

sprayB 0.8333333 1.601110 0.5204724 6.044761e-01

sprayC -12.4166667 1.601110 -7.7550382 7.266893e-11

sprayD -9.5833333 1.601110 -5.9854322 9.816910e-08

sprayE -11.0000000 1.601110 -6.8702352 2.753922e-09

sprayF 2.1666667 1.601110 1.3532281 1.805998e-01

# InsectSprays['spray'].astype(str)

results = sm.ols("count ~ spray", data = InsectSprays).fit()

results.summary()

| Dep. Variable: | count | R-squared: | 0.724 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.704 |

| Method: | Least Squares | F-statistic: | 34.70 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 3.18e-17 |

| Time: | 15:48:49 | Log-Likelihood: | -197.42 |

| No. Observations: | 72 | AIC: | 406.8 |

| Df Residuals: | 66 | BIC: | 420.5 |

| Df Model: | 5 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 14.5000 | 1.132 | 12.807 | 0.000 | 12.240 16.760 |

| spray[T.B] | 0.8333 | 1.601 | 0.520 | 0.604 | -2.363 4.030 |

| spray[T.C] | -12.4167 | 1.601 | -7.755 | 0.000 | -15.613 -9.220 |

| spray[T.D] | -9.5833 | 1.601 | -5.985 | 0.000 | -12.780 -6.387 |

| spray[T.E] | -11.0000 | 1.601 | -6.870 | 0.000 | -14.197 -7.803 |

| spray[T.F] | 2.1667 | 1.601 | 1.353 | 0.181 | -1.030 5.363 |

| Omnibus: | 3.201 | Durbin-Watson: | 1.753 |

|---|---|---|---|

| Prob(Omnibus): | 0.202 | Jarque-Bera (JB): | 2.421 |

| Skew: | 0.411 | Prob(JB): | 0.298 |

| Kurtosis: | 3.360 | Cond. No. | 6.85 |

### Hard coding the dummy variables

%%R

summary(lm(count~ I(1*(spray=='B'))+I(1*(spray=='C'))+

I(1*(spray=='D'))+I(1*(spray=='E'))+

I(1*(spray=='F')) ,data=InsectSprays))$coef

Estimate Std. Error t value Pr(>|t|) (Intercept) 14.5000000 1.132156 12.8074279 1.470512e-19 I(1 * (spray == "B")) 0.8333333 1.601110 0.5204724 6.044761e-01 I(1 * (spray == "C")) -12.4166667 1.601110 -7.7550382 7.266893e-11 I(1 * (spray == "D")) -9.5833333 1.601110 -5.9854322 9.816910e-08 I(1 * (spray == "E")) -11.0000000 1.601110 -6.8702352 2.753922e-09 I(1 * (spray == "F")) 2.1666667 1.601110 1.3532281 1.805998e-01

formula = "count~ I(1*(spray=='B'))+I(1*(spray=='C'))+I(1*(spray=='D'))+I(1*(spray=='E'))+I(1*(spray=='F'))"

formula

"count~ I(1*(spray=='B'))+I(1*(spray=='C'))+I(1*(spray=='D'))+I(1*(spray=='E'))+I(1*(spray=='F'))"

sm.ols(formula, data=InsectSprays).fit().summary()

| Dep. Variable: | count | R-squared: | 0.724 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.704 |

| Method: | Least Squares | F-statistic: | 34.70 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 3.18e-17 |

| Time: | 15:48:49 | Log-Likelihood: | -197.42 |

| No. Observations: | 72 | AIC: | 406.8 |

| Df Residuals: | 66 | BIC: | 420.5 |

| Df Model: | 5 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 14.5000 | 1.132 | 12.807 | 0.000 | 12.240 16.760 |

| I(1 * (spray == 'B')) | 0.8333 | 1.601 | 0.520 | 0.604 | -2.363 4.030 |

| I(1 * (spray == 'C')) | -12.4167 | 1.601 | -7.755 | 0.000 | -15.613 -9.220 |

| I(1 * (spray == 'D')) | -9.5833 | 1.601 | -5.985 | 0.000 | -12.780 -6.387 |

| I(1 * (spray == 'E')) | -11.0000 | 1.601 | -6.870 | 0.000 | -14.197 -7.803 |

| I(1 * (spray == 'F')) | 2.1667 | 1.601 | 1.353 | 0.181 | -1.030 5.363 |

| Omnibus: | 3.201 | Durbin-Watson: | 1.753 |

|---|---|---|---|

| Prob(Omnibus): | 0.202 | Jarque-Bera (JB): | 2.421 |

| Skew: | 0.411 | Prob(JB): | 0.298 |

| Kurtosis: | 3.360 | Cond. No. | 6.85 |

What if we include all 6?¶

%%R

lm(count~

I(1*(spray=='B'))+I(1*(spray=='C'))+ I(1*(spray=='D'))+I(1*(spray=='E'))+ I(1*(spray=='F'))+I(1*(spray=='A')),data=InsectSprays)

Call:

lm(formula = count ~ I(1 * (spray == "B")) + I(1 * (spray ==

"C")) + I(1 * (spray == "D")) + I(1 * (spray == "E")) + I(1 *

(spray == "F")) + I(1 * (spray == "A")), data = InsectSprays)

Coefficients:

(Intercept) I(1 * (spray == "B")) I(1 * (spray == "C")) I(1 * (spray == "D"))

14.5000 0.8333 -12.4167 -9.5833

I(1 * (spray == "E")) I(1 * (spray == "F")) I(1 * (spray == "A"))

-11.0000 2.1667 NA

formula="count~I(1*(spray=='B'))+I(1*(spray=='C'))+ I(1*(spray=='D'))+I(1*(spray=='E'))+ I(1*(spray=='F'))+I(1*(spray=='A'))"

sm.ols(formula, data=InsectSprays).fit().summary()

| Dep. Variable: | count | R-squared: | 0.724 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.704 |

| Method: | Least Squares | F-statistic: | 34.70 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 3.18e-17 |

| Time: | 15:48:50 | Log-Likelihood: | -197.42 |

| No. Observations: | 72 | AIC: | 406.8 |

| Df Residuals: | 66 | BIC: | 420.5 |

| Df Model: | 5 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 8.1429 | 0.396 | 20.554 | 0.000 | 7.352 8.934 |

| I(1 * (spray == 'B')) | 7.1905 | 1.036 | 6.943 | 0.000 | 5.123 9.258 |

| I(1 * (spray == 'C')) | -6.0595 | 1.036 | -5.851 | 0.000 | -8.127 -3.992 |

| I(1 * (spray == 'D')) | -3.2262 | 1.036 | -3.115 | 0.003 | -5.294 -1.159 |

| I(1 * (spray == 'E')) | -4.6429 | 1.036 | -4.483 | 0.000 | -6.711 -2.575 |

| I(1 * (spray == 'F')) | 8.5238 | 1.036 | 8.231 | 0.000 | 6.456 10.591 |

| I(1 * (spray == 'A')) | 6.3571 | 1.036 | 6.138 | 0.000 | 4.289 8.425 |

| Omnibus: | 3.201 | Durbin-Watson: | 1.753 |

|---|---|---|---|

| Prob(Omnibus): | 0.202 | Jarque-Bera (JB): | 2.421 |

| Skew: | 0.411 | Prob(JB): | 0.298 |

| Kurtosis: | 3.360 | Cond. No. | nan |

My Python version doesnt give the NA like R does when duplicative information is provided¶

What if we omit the intercept?¶

formula = "count~ I(1*(spray=='B'))+I(1*(spray=='C'))+I(1*(spray=='D'))+I(1*(spray=='E'))+I(1*(spray=='F')) -1"

sm.ols(formula, data=InsectSprays).fit().summary()

| Dep. Variable: | count | R-squared: | 0.653 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.627 |

| Method: | Least Squares | F-statistic: | 25.16 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 3.48e-14 |

| Time: | 15:48:50 | Log-Likelihood: | -242.37 |

| No. Observations: | 72 | AIC: | 494.7 |

| Df Residuals: | 67 | BIC: | 506.1 |

| Df Model: | 5 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| I(1 * (spray == 'B')) | 15.3333 | 2.098 | 7.309 | 0.000 | 11.146 19.521 |

| I(1 * (spray == 'C')) | 2.0833 | 2.098 | 0.993 | 0.324 | -2.104 6.271 |

| I(1 * (spray == 'D')) | 4.9167 | 2.098 | 2.344 | 0.022 | 0.729 9.104 |

| I(1 * (spray == 'E')) | 3.5000 | 2.098 | 1.668 | 0.100 | -0.687 7.687 |

| I(1 * (spray == 'F')) | 16.6667 | 2.098 | 7.945 | 0.000 | 12.479 20.854 |

| Omnibus: | 18.081 | Durbin-Watson: | 0.586 |

|---|---|---|---|

| Prob(Omnibus): | 0.000 | Jarque-Bera (JB): | 21.653 |

| Skew: | 1.228 | Prob(JB): | 1.99e-05 |

| Kurtosis: | 4.090 | Cond. No. | 1.00 |

InsectSprays.head()

| count | spray | |

|---|---|---|

| 0 | 10 | A |

| 1 | 7 | A |

| 2 | 20 | A |

| 3 | 14 | A |

| 4 | 14 | A |

IS = InsectSprays

grouped = IS.groupby("spray")

grouped.mean()

| count | |

|---|---|

| spray | |

| A | 14.500000 |

| B | 15.333333 |

| C | 2.083333 |

| D | 4.916667 |

| E | 3.500000 |

| F | 16.666667 |

Without the intercept we simply get the means

Interactions¶

%%R -o hunger

hunger <- read.csv('data/hunger.csv')

hunger <- hunger[hunger$Sex != "Both sexes", ]

lm1 <- lm(hunger$Numeric ~ hunger$Year)

qqnorm(residuals(lm1))

qqline(residuals(lm1))

hunger.describe()

| Year | Display.Value | Numeric | Low | High | Comments | |

|---|---|---|---|---|---|---|

| count | 858.000000 | 858.000000 | 858.000000 | 858 | 858 | 858 |

| mean | 2000.466200 | 17.927389 | 17.927389 | -2147483648 | -2147483648 | -2147483648 |

| std | 6.819349 | 13.674090 | 13.674090 | 0 | 0 | 0 |

| min | 1970.000000 | 0.500000 | 0.500000 | -2147483648 | -2147483648 | -2147483648 |

| 25% | 1997.000000 | 6.000000 | 6.000000 | -2147483648 | -2147483648 | -2147483648 |

| 50% | 2001.000000 | 15.300000 | 15.300000 | -2147483648 | -2147483648 | -2147483648 |

| 75% | 2006.000000 | 25.500000 | 25.500000 | -2147483648 | -2147483648 | -2147483648 |

| max | 2011.000000 | 65.500000 | 65.500000 | -2147483648 | -2147483648 | -2147483648 |

# columns that need to be fixed from the R import of data

cols = ['Low', 'High', 'Comments']

for col in cols:

hunger[col] = hunger[col].replace(-2147483648 , NaN)

hunger.head()

| Indicator | Data.Source | PUBLISH.STATES | Year | WHO.region | Country | Sex | Display.Value | Numeric | Low | High | Comments | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Children aged <5 years underweight (%) | NLIS_312819 | Published | 2004 | Eastern Mediterranean | Afghanistan | Female | 33.0 | 33.0 | NaN | NaN | NaN |

| 1 | Children aged <5 years underweight (%) | NLIS_312819 | Published | 2004 | Eastern Mediterranean | Afghanistan | Male | 32.7 | 32.7 | NaN | NaN | NaN |

| 2 | Children aged <5 years underweight (%) | NLIS_312361 | Published | 2000 | Europe | Albania | Male | 19.6 | 19.6 | NaN | NaN | NaN |

| 3 | Children aged <5 years underweight (%) | NLIS_312361 | Published | 2000 | Europe | Albania | Female | 14.2 | 14.2 | NaN | NaN | NaN |

| 4 | Children aged <5 years underweight (%) | NLIS_312879 | Published | 2005 | Europe | Albania | Male | 7.3 | 7.3 | NaN | NaN | NaN |

y = 'Numeric'

x = 'Year'

formula = '%s ~ %s' %(y, x,)

fit = sm.ols(formula, data=hunger).fit()

fit.summary()

| Dep. Variable: | Numeric | R-squared: | 0.018 |

|---|---|---|---|

| Model: | OLS | Adj. R-squared: | 0.017 |

| Method: | Least Squares | F-statistic: | 15.43 |

| Date: | Tue, 30 Sep 2014 | Prob (F-statistic): | 9.26e-05 |

| Time: | 21:04:21 | Log-Likelihood: | -3453.4 |

| No. Observations: | 858 | AIC: | 6911. |

| Df Residuals: | 856 | BIC: | 6920. |

| Df Model: | 1 |

| coef | std err | t | P>|t| | [95.0% Conf. Int.] | |

|---|---|---|---|---|---|

| Intercept | 551.6586 | 135.886 | 4.060 | 0.000 | 284.951 818.367 |

| Year | -0.2668 | 0.068 | -3.928 | 0.000 | -0.400 -0.133 |

| Omnibus: | 82.684 | Durbin-Watson: | 0.298 |

|---|---|---|---|

| Prob(Omnibus): | 0.000 | Jarque-Bera (JB): | 105.636 |

| Skew: | 0.855 | Prob(JB): | 1.15e-23 |

| Kurtosis: | 3.165 | Cond. No. | 5.87e+05 |

fig, ax = plt.subplots(figsize=(12, 8))

graphics.plot_regress_exog(fit, "Year", fig);

Remember the linear model¶

$$Hu_i = b_0 + b_1 Y_i + e_i$$$b_0$ = percent hungry at Year 0 (hasn't been calibrated to an interpretable value)

$b_1$ = decrease in percent hungry per year

$e_i$ = everything we didn't measure

Makes sense to subtract 1970 from all years

%%R

require(ggplot2)

g <- ggplot(hunger, aes(Year, Numeric, colour=Sex)) + geom_point(alpha=0.5)

g <- g + geom_smooth(method='lm', se=T)

g

Create one regression per gender, like the plot above¶

$${HuF}_i = {bf}_0 + {bf}_1 {YF}_i + {ef}_i$$${bf}_0$ = percent of girls hungry at Year 0

${bf}_1$ = decrease in percent of girls hungry per year

${ef}_i$ = everything we didn't measure

$${HuM}_i = {bm}_0 + {bm}_1 {YM}_i + {em}_i$$${bm}_0$ = percent of boys hungry at Year 0

${bm}_1$ = decrease in percent of boys hungry per year

${em}_i$ = everything we didn't measure

Two lines, two slopes¶

%%R

lmM <- lm(hunger$Numeric[hunger$Sex=="Male"] ~ hunger$Year[hunger$Sex=="Male"])

lmF <- lm(hunger$Numeric[hunger$Sex=="Female"] ~ hunger$Year[hunger$Sex=="Female"])

print(summary(lmM))

plot(hunger$Year,hunger$Numeric,pch=19)

points(hunger$Year,hunger$Numeric,pch=19,col=((hunger$Sex=="Male")*1+1))

lines(hunger$Year[hunger$Sex=="Male"],lmM$fitted,col="black",lwd=3)

lines(hunger$Year[hunger$Sex=="Female"],lmF$fitted,col="red",lwd=3)

Call:

lm(formula = hunger$Numeric[hunger$Sex == "Male"] ~ hunger$Year[hunger$Sex ==

"Male"])

Residuals:

Min 1Q Median 3Q Max

-25.092 -12.122 -1.717 8.249 42.226

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 580.84512 195.50162 2.971 0.00314 **

hunger$Year[hunger$Sex == "Male"] -0.28091 0.09773 -2.874 0.00425 **

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual standard error: 13.8 on 427 degrees of freedom

Multiple R-squared: 0.01898, Adjusted R-squared: 0.01668

F-statistic: 8.262 on 1 and 427 DF, p-value: 0.00425

Two lines, same slope¶

$${Hu}_i = b_0 + b_1 \mathbb{1}(Sex_i="Male") + b_2 Y_i + e^*_i$$$b_0$ - percent hungry at year zero for females

$b_0 + b_1$ - percent hungry at year zero for males

$b_2$ - change in percent hungry (for either males or females) in one year

$e^*_i$ - everything we didn't measure

Males and Females both have slope $\beta_2$

Males have intercept $\beta_0 + \beta_1$

Females have intercept $\beta_0$

%%R

lmBoth <- lm(hunger$Numeric ~ hunger$Year + hunger$Sex)

print(summary(lmBoth))

plot(hunger$Year,hunger$Numeric,pch=19)

points(hunger$Year,hunger$Numeric,pch=19,col=((hunger$Sex=="Male")*1+1))

abline(c(lmBoth$coeff[1],lmBoth$coeff[2]),col="red",lwd=3)

abline(c(lmBoth$coeff[1] + lmBoth$coeff[3],lmBoth$coeff[2] ),col="black",lwd=3)

Call:

lm(formula = hunger$Numeric ~ hunger$Year + hunger$Sex)

Residuals:

Min 1Q Median 3Q Max

-24.691 -11.556 -2.149 7.224 46.016

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 550.68998 135.61772 4.061 5.34e-05 ***

hunger$Year -0.26680 0.06779 -3.936 8.97e-05 ***

hunger$SexMale 1.93730 0.92406 2.097 0.0363 *

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual standard error: 13.53 on 855 degrees of freedom

Multiple R-squared: 0.02273, Adjusted R-squared: 0.02044

F-statistic: 9.942 on 2 and 855 DF, p-value: 5.39e-05

Two lines, different slopes (interactions)¶

$${Hu}_i = b_0 + b_1 \mathbb{1}(Sex_i="Male") + b_2 Y_i + b_3 \mathbb{1}(Sex_i="Male")\cdot Y_i + e^+ _i$$$b_0$ - percent hungry at year zero for females

$b_0 + b_1$ - percent hungry at year zero for males

$b_2$ - change in percent hungry (females) in one year

$b_2 + b_3$ - change in percent hungry (males) in one year

$e^+_i$ - everything we didn't measure

$E[Hu_{male}] = \beta_0 + \beta_1 + \beta_2Y + \beta_3Y$

$E[Hu_{female}] = \beta_0 + \beta_2Y$

%%R

lmBoth <- lm(hunger$Numeric ~ hunger$Year + hunger$Sex + hunger$Sex*hunger$Year)

print(summary(lmBoth))

plot(hunger$Year,hunger$Numeric,pch=19)

points(hunger$Year,hunger$Numeric,pch=19,col=((hunger$Sex=="Male")*1+1))

abline(c(lmBoth$coeff[1],lmBoth$coeff[2]),col="red",lwd=3)

abline(c(lmBoth$coeff[1] + lmBoth$coeff[3],lmBoth$coeff[2] +lmBoth$coeff[4]),col="black",lwd=3)

Call:

lm(formula = hunger$Numeric ~ hunger$Year + hunger$Sex + hunger$Sex *

hunger$Year)

Residuals:

Min 1Q Median 3Q Max

-25.092 -11.613 -2.148 7.290 46.149

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 522.47214 191.89871 2.723 0.00661 **

hunger$Year -0.25270 0.09593 -2.634 0.00858 **

hunger$SexMale 58.37299 271.38575 0.215 0.82975

hunger$Year:hunger$SexMale -0.02821 0.13566 -0.208 0.83531

---

Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1

Residual standard error: 13.54 on 854 degrees of freedom

Multiple R-squared: 0.02278, Adjusted R-squared: 0.01934

F-statistic: 6.635 on 3 and 854 DF, p-value: 0.0001969

Interpretting a continuous interaction¶

$$ E[Y_i | X_{1i}=x_1, X_{2i}=x_2] = \beta_0 + \beta_1 x_{1} + \beta_2 x_{2} + \beta_3 x_{1}x_{2} $$Holding $X_2$ constant we have $$ E[Y_i | X_{1i}=x_1+1, X_{2i}=x_2]-E[Y_i | X_{1i}=x_1, X_{2i}=x_2] = \beta_1 + \beta_3 x_{2} $$

And thus the expected change in $Y$ per unit change in $X_1$ holding all else constant is not constant. $\beta_1$ is the slope when $x_{2} = 0$.