13. Scikit-Learn

Scikit-learn is a machine learning library for Python. It provides a range of classification, regression, and clustering algorithms, including support vector machines (SVM), random forests, gradient boosting, and k-means, and it is designed to interoperate with the Python numerical and scientific libraries NumPy and SciPy.

But... what is Machine Learning?

Machine Learning

Machine Learning is a scientific discipline within Artificial Intelligence that builds systems that learn automatically. Learning, in this context, means identifying complex patterns in millions of data points. That learning is always based on some kind of observations or data, such as examples (the most common case in this course), direct experience, or instruction. In general, then, machine learning is about learning to do better in the future based on what was experienced in the past.

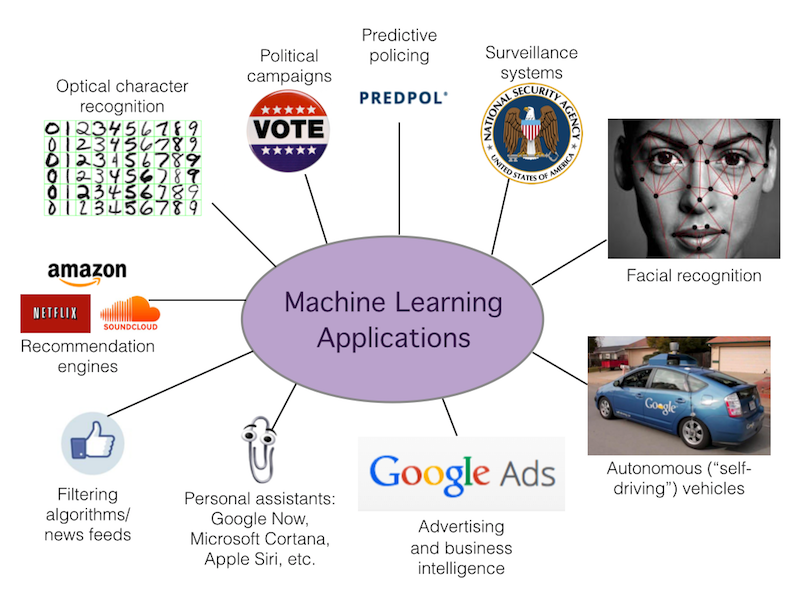

Applications of Machine Learning

Many activities already take advantage of Machine Learning. Sectors such as online shopping (have you ever wondered how the recommended products for each customer are chosen instantly at the end of a purchase?), online advertising (where to place an ad so that it gets the most visibility for the user visiting the site), and anti-spam filters have been making use of these technologies for some time.

The range of practical applications depends on imagination and on the data available in the company. Here are some more examples:

- Detecting fraud in transactions.

- Predicting failures in technological equipment.

- Forecasting which employees will be most profitable next year (the Human Resources sector is investing heavily in Machine Learning).

- Selecting potential customers based on their behavior on social networks, their interactions on the web, and so on.

- Predicting urban traffic.

- Finding the best time to publish tweets or Facebook updates, or to send newsletters.

- Making preliminary medical diagnoses based on the patient's symptoms.

- Changing the behavior of a mobile app to adapt to each user's habits and needs.

- Detecting intrusions in a data communications network.

- Deciding the best time to call a customer.

The technology is there. So is the data. Why wait to try something that could open the door to new ways of making data-driven decisions? You have surely heard that data is the oil of the future. Next we look at the types of machine learning problems that exist:

Tipos de problemas de Machine Learning¶

En general, un problema de aprendizaje considera un conjunto de n muestras de datos y luego intenta predecir las propiedades de los datos desconocidos. Si cada muestra es más que un solo número y, por ejemplo, una entrada multidimensional (también conocida como datos multivariables), se dice que tiene varios atributos o características.

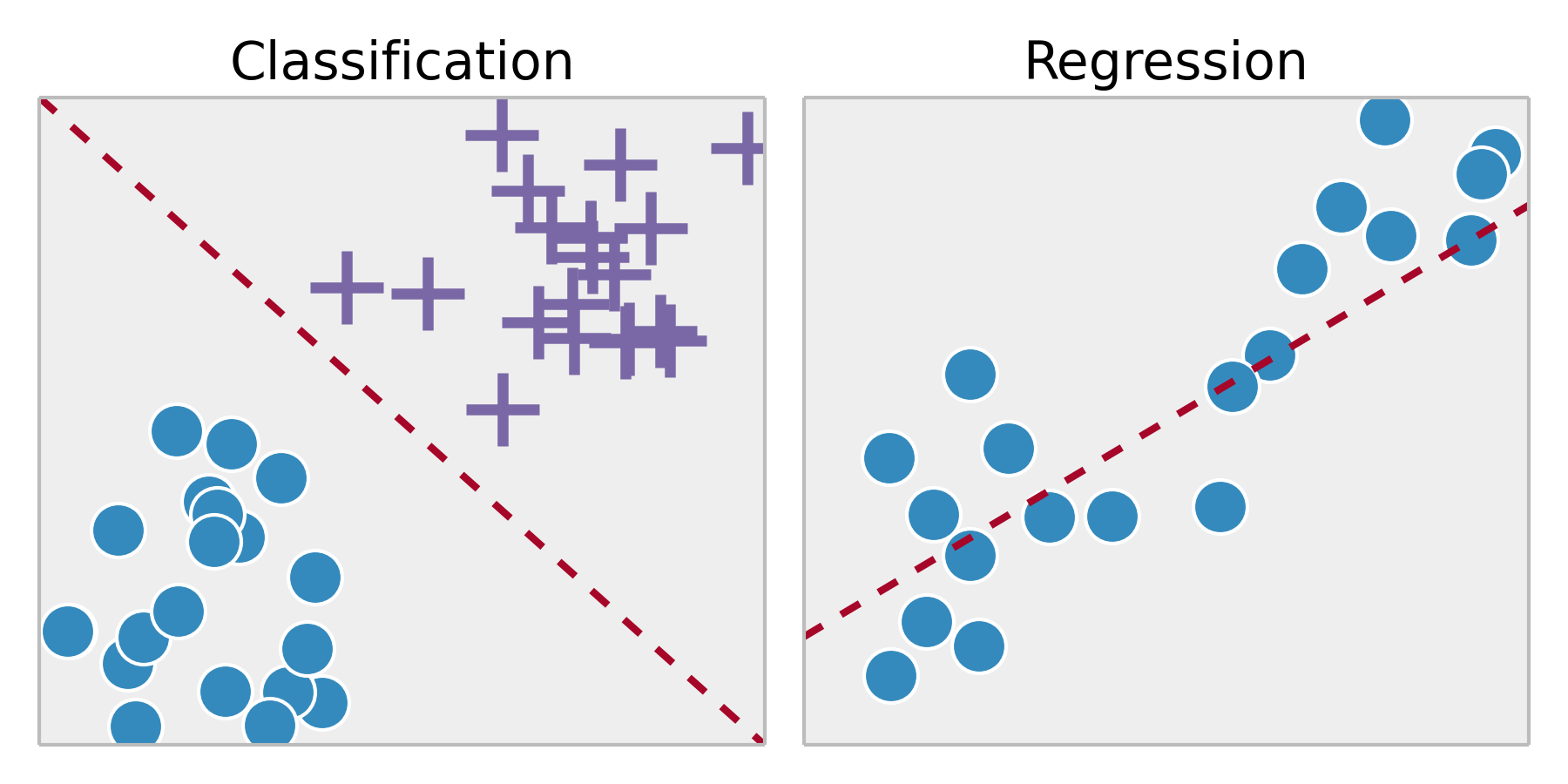

Podemos separar los problemas de aprendizaje en algunas categorías grandes:

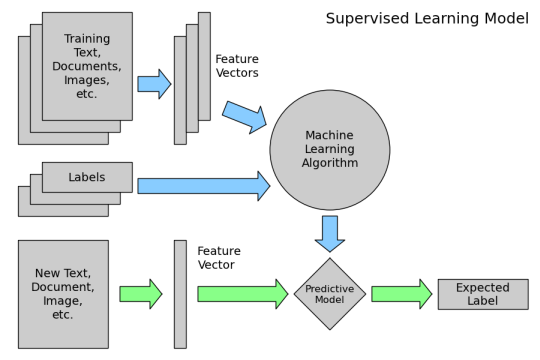

Supervised learning is a technique for inferring a function from training data. The training data consist of pairs of objects (input, expected output). The output of the function can be a numeric value (as in regression problems) or a class label (as in classification problems). The goal of supervised learning is to build a function that can predict the value corresponding to any valid input object after having seen a series of examples, the training data.

Classification: the samples belong to two or more classes, and we want to learn from already labeled data how to predict the class of unlabeled data. An example of a classification problem is handwritten digit recognition, where the goal is to assign each input vector to one of a finite number of discrete categories. Another way to think of classification is as a discrete (as opposed to continuous) form of supervised learning, where there is a limited number of categories and, for each of the n samples provided, the task is to label it with the correct category or class.

Regression: if the desired output consists of one or more continuous variables, the task is called regression. An example of a regression problem is predicting the length of a salmon as a function of its age and weight.

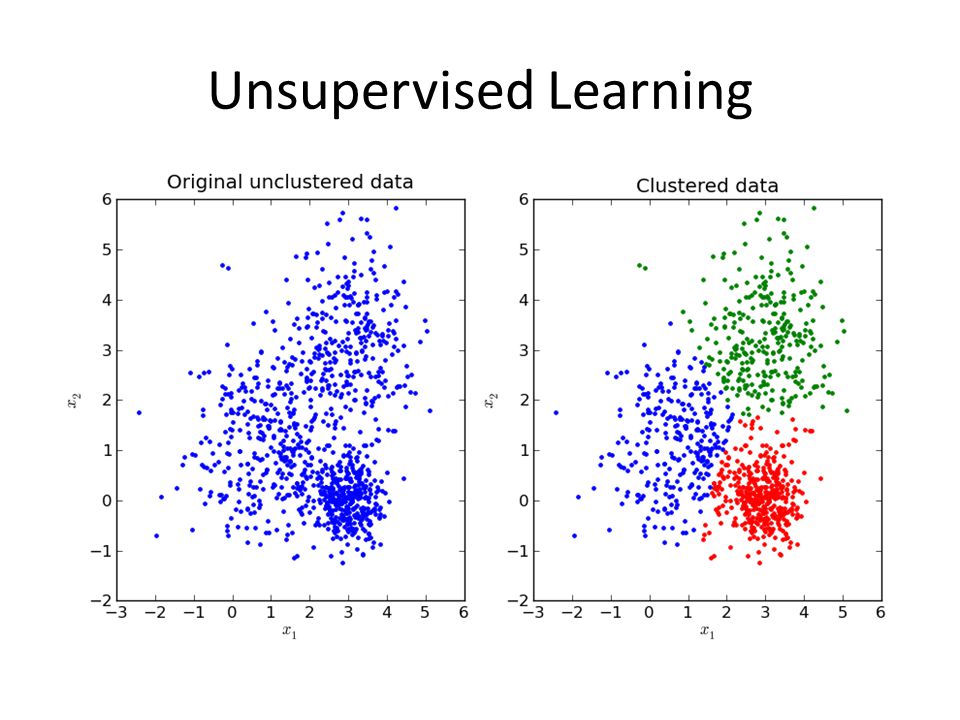

Unsupervised learning: the training data consist of a set of input vectors x without any corresponding target values. The goal in such problems may be to discover groups of similar examples within the data, which is called clustering.

Training data and test data

Machine learning is about learning some properties of a data set and applying them to new data. This is why a common practice for evaluating an algorithm is to split the data into two sets: the training set, on which we learn the properties of the data, and the test set, on which we check those properties.
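The split described above can be sketched by hand with NumPy. This is only an illustration on made-up data (the arrays, the seed, and the 20% test fraction are assumptions); scikit-learn's `train_test_split`, used later in this chapter, does the same job in one call.

```python
import numpy as np

# Hypothetical toy data: 10 samples with 2 features each, and one label per sample.
X = np.arange(20).reshape(10, 2)
y = np.arange(10)

# Shuffle the row indices reproducibly, then hold out 20% of them for testing.
rng = np.random.default_rng(0)
indices = rng.permutation(len(X))
n_test = int(0.2 * len(X))
test_idx, train_idx = indices[:n_test], indices[n_test:]

X_train, X_test = X[train_idx], X[test_idx]
y_train, y_test = y[train_idx], y[test_idx]

print(X_train.shape, X_test.shape)
```

Shuffling before splitting matters when the rows are stored in some meaningful order, because otherwise the test set would not be representative of the whole data set.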

How does machine learning work?

Here we look at how ML works in the supervised learning setting:

First, train an ML model using labeled data

- "Labeled data" is data that has been labeled with the outcome

- The "machine learning model" learns the relationship between the attributes of the data and its outcome

Then, make predictions on new data for which the label is unknown
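The two steps above map directly onto the `fit` and `predict` methods of a scikit-learn estimator. The following is a minimal sketch, assuming the iris data set bundled with scikit-learn and a k-nearest-neighbors classifier; both choices are illustrative and are not taken from this chapter.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier

# Labeled data: X holds the attributes, y holds the known outcome (the flower species).
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Step 1: the model learns the relationship between attributes and outcome.
model = KNeighborsClassifier(n_neighbors=5)
model.fit(X_train, y_train)

# Step 2: predict the outcome for samples the model has not seen.
predictions = model.predict(X_test)
accuracy = model.score(X_test, y_test)
print(predictions[:5], accuracy)
```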

Elegir el algoritmo de ML adecuado¶

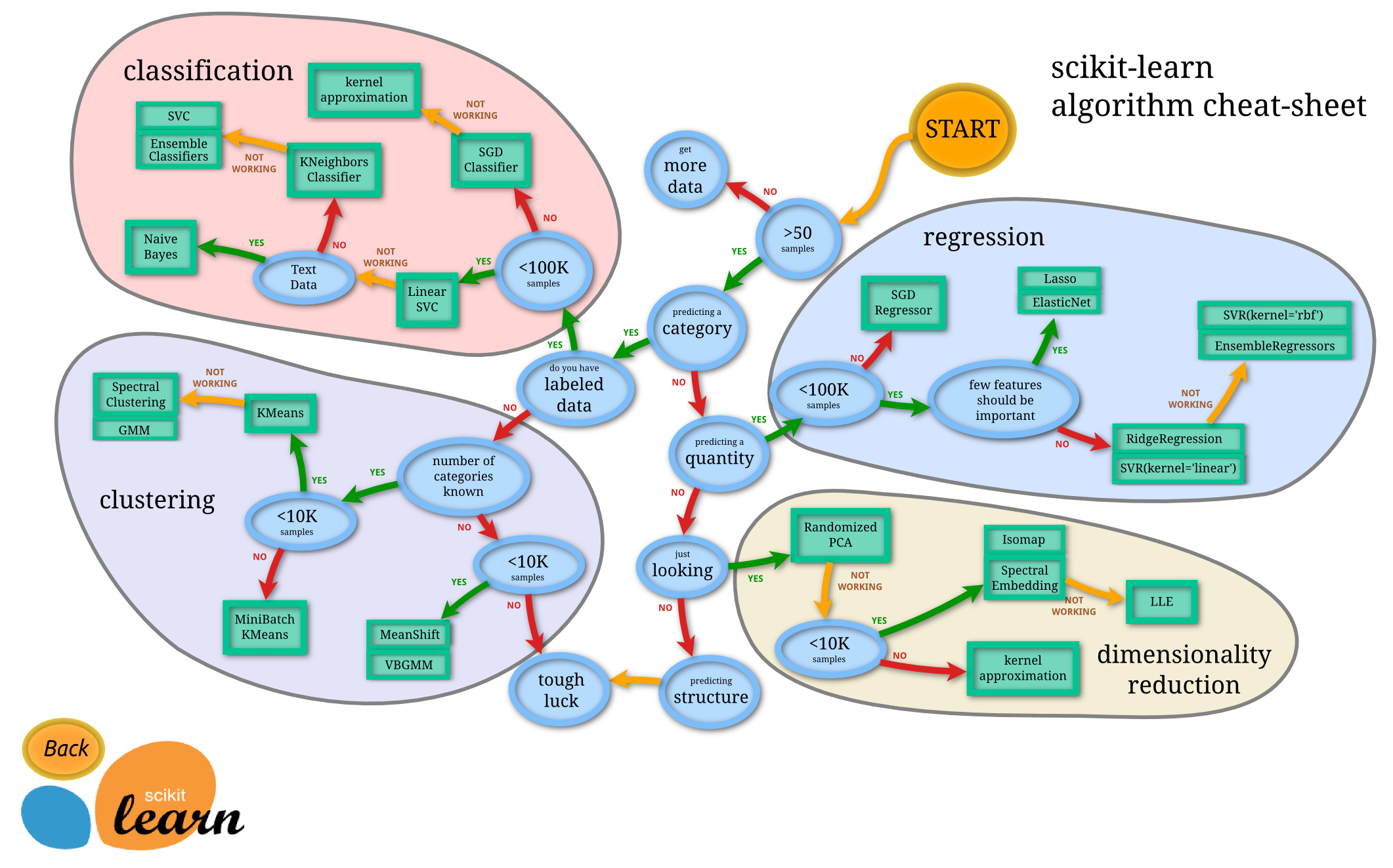

A menudo la parte más difícil de resolver un problema de aprendizaje de la máquina puede ser encontrar el estimador adecuado para el trabajo.

Diferentes estimadores son más adecuados para diferentes tipos de datos y diferentes problemas.

El diagrama de flujo siguiente está diseñado para dar a los usuarios un poco de una guía aproximada sobre cómo abordar los problemas con respecto a qué estimadores para probar sus datos.

# Esta línea configura matplotlib para mostrar las figuras incrustadas en el jupyter notebook

%matplotlib inline

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from IPython.display import HTML

plt.rcParams['figure.figsize'] = (12, 10)

1. Data Preprocessing

Machine Learning algorithms learn from data. It is essential to feed them the right data for the problem you want to solve. Even if you have good data, you need to make sure that it is on a useful scale, in a useful format, and that it includes meaningful features.

In this part you will learn how to prepare data for a machine learning algorithm. This is a large topic, and we will cover the essentials.

Step 1: Select data

This step is about selecting the subset of all available data that you will work with. There is always a strong urge to include all the data that is available, on the assumption that more data is always better (which may not be true).

Step 2: Preprocess data

Once the data has been selected, you need to consider how it is going to be used. This preprocessing stage is about getting the selected data into a form you can work with.

Three common preprocessing steps are formatting, cleaning, and sampling:

- Formatting: The data you have selected may not be in a format that is convenient to work with. It may be in a relational database when you would like it in a flat file such as CSV or TSV, or perhaps in a format of your own.

- Cleaning: Cleaning data means removing or fixing missing data. There may be instances that are incomplete (NaN) and do not carry the data you believe you need to solve the problem. These instances may need to be removed. In addition, some attributes may contain sensitive information, and these attributes may need to be anonymized or removed from the data entirely.

- Sampling: There may be far more data available than you need to work with. More data can mean much longer running times for algorithms and larger computational and memory requirements. You can take a smaller representative sample of the selected data, which may be much faster for exploring and prototyping solutions before considering the whole data set.

The machine learning tools you use on the data will very likely influence the preprocessing you need to perform. You will probably come back to this step.

Step 3: Transform data

The final step is to transform the processed data. The specific algorithm you are working with and your knowledge of the problem domain will influence this step, and you will very likely have to revisit different transformations of your preprocessed data as you work on your problem.

Three common data transformations are scaling, attribute decomposition, and attribute aggregation. This step is also known as feature engineering.

- Scaling: The preprocessed data may contain attributes with a mixture of scales for various quantities such as dollars, kilograms, and sales volume. Many machine learning methods prefer attributes to share the same scale, such as between 0 and 1 for the smallest and largest value of a given feature. Consider any feature scaling you may need to perform.

- Decomposition: There may be features that represent a complex concept and that are more useful to a machine learning method when split into their constituent parts. An example is a date, which may have day and time components that could in turn be split further. Perhaps only the hour of the day is relevant to the problem being solved. Consider which feature decompositions you can perform.

- Aggregation: There may be features that can be aggregated into a single feature that is more meaningful for the problem you are trying to solve. For example, there may be one data instance for each time a customer logs in to a system; these could be aggregated into a count of logins, which allows the additional instances to be discarded. Consider what kind of feature aggregation you could perform.

You can spend a lot of time engineering features from your data, and it can be very beneficial to the performance of an algorithm. Start small and build on the skills you learn.
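The three transformations above can be sketched with pandas and scikit-learn. The table of login events below is entirely hypothetical (column names and values are made up for illustration), and `MinMaxScaler` is just one possible way to bring a column into the range 0 to 1.

```python
import pandas as pd
from sklearn.preprocessing import MinMaxScaler

# Hypothetical login events; names and values are invented for this sketch.
events = pd.DataFrame({
    "customer": ["a", "a", "b", "b", "b"],
    "login_time": pd.to_datetime([
        "2017-01-02 08:15", "2017-01-02 21:40",
        "2017-01-03 09:05", "2017-01-04 09:30", "2017-01-05 23:10",
    ]),
    "amount_usd": [10.0, 250.0, 40.0, 5.0, 120.0],
})

# Scaling: map amount_usd onto the range [0, 1].
scaled = MinMaxScaler().fit_transform(events[["amount_usd"]])
events["amount_scaled"] = scaled.ravel()

# Decomposition: keep only the hour of the day from the full timestamp.
events["hour"] = events["login_time"].dt.hour

# Aggregation: collapse the individual login events into one count per customer.
logins_per_customer = events.groupby("customer").size().rename("n_logins")

print(events[["amount_usd", "amount_scaled", "hour"]])
print(logins_per_customer)
```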

# Importing the dataset

dataset = pd.read_csv('../data/Data.csv')

X = dataset.iloc[:, :-1].values

y = dataset.iloc[:, 3].values

dataset.head()

| | Country | Age | Salary | Purchased |
|---|---|---|---|---|
| 0 | France | 44.0 | 72000.0 | No |
| 1 | Spain | 27.0 | 48000.0 | Yes |
| 2 | Germany | 30.0 | 54000.0 | No |
| 3 | Spain | 38.0 | 61000.0 | No |
| 4 | Germany | 40.0 | NaN | Yes |

# Handling missing data

# Import the Imputer class, which takes care of the missing data

# (Imputer was removed in scikit-learn 0.22; newer releases provide sklearn.impute.SimpleImputer instead)

from sklearn.preprocessing import Imputer

imputer = Imputer(missing_values = 'NaN', strategy = 'mean', axis = 0) # replace NaN values with the column mean

imputer = imputer.fit(X[:, 1:3])

X[:, 1:3] = imputer.transform(X[:, 1:3])

X

array([['France', 44.0, 72000.0],

['Spain', 27.0, 48000.0],

['Germany', 30.0, 54000.0],

['Spain', 38.0, 61000.0],

['Germany', 40.0, 63777.77777777778],

['France', 35.0, 58000.0],

['Spain', 38.77777777777778, 52000.0],

['France', 48.0, 79000.0],

['Germany', 50.0, 83000.0],

['France', 37.0, 67000.0]], dtype=object)
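The `Imputer` class used above is no longer available in recent scikit-learn releases (it was removed in version 0.22). A rough equivalent with `sklearn.impute.SimpleImputer` might look like the sketch below. The `numeric` array is reconstructed from the Age and Salary values printed above, with `np.nan` placed where the output shows an imputed mean; that reconstruction is an assumption, since the CSV file itself is not shown.

```python
import numpy as np
from sklearn.impute import SimpleImputer

# Age and Salary columns, reconstructed from the output above (assumption):
# row 4 has a missing Salary and row 6 has a missing Age.
numeric = np.array([
    [44.0, 72000.0], [27.0, 48000.0], [30.0, 54000.0], [38.0, 61000.0],
    [40.0, np.nan], [35.0, 58000.0], [np.nan, 52000.0], [48.0, 79000.0],
    [50.0, 83000.0], [37.0, 67000.0],
])

# SimpleImputer always works column-wise, so there is no axis argument.
imputer = SimpleImputer(missing_values=np.nan, strategy="mean")
filled = imputer.fit_transform(numeric)

print(imputer.statistics_)   # the per-column means used as fill values
print(filled[4], filled[6])  # the two rows that had a missing value
```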

help(Imputer)

Help on class Imputer in module sklearn.preprocessing.imputation:

class Imputer(sklearn.base.BaseEstimator, sklearn.base.TransformerMixin)

| Imputation transformer for completing missing values.

|

| Read more in the :ref:`User Guide <imputation>`.

|

| Parameters

| ----------

| missing_values : integer or "NaN", optional (default="NaN")

| The placeholder for the missing values. All occurrences of

| `missing_values` will be imputed. For missing values encoded as np.nan,

| use the string value "NaN".

|

| strategy : string, optional (default="mean")

| The imputation strategy.

|

| - If "mean", then replace missing values using the mean along

| the axis.

| - If "median", then replace missing values using the median along

| the axis.

| - If "most_frequent", then replace missing using the most frequent

| value along the axis.

|

| axis : integer, optional (default=0)

| The axis along which to impute.

|

| - If `axis=0`, then impute along columns.

| - If `axis=1`, then impute along rows.

|

| verbose : integer, optional (default=0)

| Controls the verbosity of the imputer.

|

| copy : boolean, optional (default=True)

| If True, a copy of X will be created. If False, imputation will

| be done in-place whenever possible. Note that, in the following cases,

| a new copy will always be made, even if `copy=False`:

|

| - If X is not an array of floating values;

| - If X is sparse and `missing_values=0`;

| - If `axis=0` and X is encoded as a CSR matrix;

| - If `axis=1` and X is encoded as a CSC matrix.

|

| Attributes

| ----------

| statistics_ : array of shape (n_features,)

| The imputation fill value for each feature if axis == 0.

|

| Notes

| -----

| - When ``axis=0``, columns which only contained missing values at `fit`

| are discarded upon `transform`.

| - When ``axis=1``, an exception is raised if there are rows for which it is

| not possible to fill in the missing values (e.g., because they only

| contain missing values).

|

| Method resolution order:

| Imputer

| sklearn.base.BaseEstimator

| sklearn.base.TransformerMixin

| builtins.object

|

| Methods defined here:

|

| __init__(self, missing_values='NaN', strategy='mean', axis=0, verbose=0, copy=True)

| Initialize self. See help(type(self)) for accurate signature.

|

| fit(self, X, y=None)

| Fit the imputer on X.

|

| Parameters

| ----------

| X : {array-like, sparse matrix}, shape (n_samples, n_features)

| Input data, where ``n_samples`` is the number of samples and

| ``n_features`` is the number of features.

|

| Returns

| -------

| self : object

| Returns self.

|

| transform(self, X)

| Impute all missing values in X.

|

| Parameters

| ----------

| X : {array-like, sparse matrix}, shape = [n_samples, n_features]

| The input data to complete.

|

| ----------------------------------------------------------------------

| Methods inherited from sklearn.base.BaseEstimator:

|

| __getstate__(self)

|

| __repr__(self)

| Return repr(self).

|

| __setstate__(self, state)

|

| get_params(self, deep=True)

| Get parameters for this estimator.

|

| Parameters

| ----------

| deep : boolean, optional

| If True, will return the parameters for this estimator and

| contained subobjects that are estimators.

|

| Returns

| -------

| params : mapping of string to any

| Parameter names mapped to their values.

|

| set_params(self, **params)

| Set the parameters of this estimator.

|

| The method works on simple estimators as well as on nested objects

| (such as pipelines). The latter have parameters of the form

| ``<component>__<parameter>`` so that it's possible to update each

| component of a nested object.

|

| Returns

| -------

| self

|

| ----------------------------------------------------------------------

| Data descriptors inherited from sklearn.base.BaseEstimator:

|

| __dict__

| dictionary for instance variables (if defined)

|

| __weakref__

| list of weak references to the object (if defined)

|

| ----------------------------------------------------------------------

| Methods inherited from sklearn.base.TransformerMixin:

|

| fit_transform(self, X, y=None, **fit_params)

| Fit to data, then transform it.

|

| Fits transformer to X and y with optional parameters fit_params

| and returns a transformed version of X.

|

| Parameters

| ----------

| X : numpy array of shape [n_samples, n_features]

| Training set.

|

| y : numpy array of shape [n_samples]

| Target values.

|

| Returns

| -------

| X_new : numpy array of shape [n_samples, n_features_new]

| Transformed array.

# Encoding categorical data

# Encoding the independent variable

# (the categorical_features argument was removed in scikit-learn 0.22; newer releases use sklearn.compose.ColumnTransformer)

from sklearn.preprocessing import LabelEncoder, OneHotEncoder

labelencoder_X = LabelEncoder()

X[:, 0] = labelencoder_X.fit_transform(X[:, 0])

onehotencoder = OneHotEncoder(categorical_features = [0])

X = onehotencoder.fit_transform(X).toarray()

# Encoding the dependent variable

labelencoder_y = LabelEncoder()

y = labelencoder_y.fit_transform(y)

np.set_printoptions(formatter={'float': lambda x: "{0:0.2f}".format(x)}) # print floats with 2 decimals

X

array([[1.00, 0.00, 0.00, 44.00, 72000.00],

[0.00, 0.00, 1.00, 27.00, 48000.00],

[0.00, 1.00, 0.00, 30.00, 54000.00],

[0.00, 0.00, 1.00, 38.00, 61000.00],

[0.00, 1.00, 0.00, 40.00, 63777.78],

[1.00, 0.00, 0.00, 35.00, 58000.00],

[0.00, 0.00, 1.00, 38.78, 52000.00],

[1.00, 0.00, 0.00, 48.00, 79000.00],

[0.00, 1.00, 0.00, 50.00, 83000.00],

[1.00, 0.00, 0.00, 37.00, 67000.00]])

y

array([0, 1, 0, 0, 1, 1, 0, 1, 0, 1])
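On recent scikit-learn releases, where `OneHotEncoder` no longer accepts `categorical_features`, the same encoding can be sketched with `ColumnTransformer`. The four rows below are copied from the first rows of the data set shown earlier; everything else (the transformer name `"country"`, the use of `sparse_threshold=0` to force a dense result) is an illustrative choice rather than the original notebook's code.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import LabelEncoder, OneHotEncoder

# First four rows of the data set shown earlier (Country, Age, Salary) and their labels.
X = np.array([
    ["France", 44.0, 72000.0],
    ["Spain", 27.0, 48000.0],
    ["Germany", 30.0, 54000.0],
    ["Spain", 38.0, 61000.0],
], dtype=object)
y = np.array(["No", "Yes", "No", "No"])

# One-hot encode column 0 and pass the numeric columns through unchanged.
ct = ColumnTransformer(
    transformers=[("country", OneHotEncoder(), [0])],
    remainder="passthrough",
    sparse_threshold=0,  # always return a dense array
)
X_encoded = ct.fit_transform(X).astype(float)

# The target only needs integer labels, so LabelEncoder is enough.
y_encoded = LabelEncoder().fit_transform(y)

print(X_encoded)
print(y_encoded)
```

The one-hot columns come first in the result, in alphabetical order of the categories (France, Germany, Spain), followed by Age and Salary.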

# Splitting the dataset into the training set and the test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 0) # hold out 20% of the dataset as the test set

X_train, X_test

(array([[0.00, 1.00, 0.00, 40.00, 63777.78],

[1.00, 0.00, 0.00, 37.00, 67000.00],

[0.00, 0.00, 1.00, 27.00, 48000.00],

[0.00, 0.00, 1.00, 38.78, 52000.00],

[1.00, 0.00, 0.00, 48.00, 79000.00],

[0.00, 0.00, 1.00, 38.00, 61000.00],

[1.00, 0.00, 0.00, 44.00, 72000.00],

[1.00, 0.00, 0.00, 35.00, 58000.00]]),

array([[0.00, 1.00, 0.00, 30.00, 54000.00],

[0.00, 1.00, 0.00, 50.00, 83000.00]]))

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc_X = StandardScaler()

X_train = sc_X.fit_transform(X_train)

X_test = sc_X.transform(X_test)

# If you want to scale Y

#sc_y = StandardScaler()

#y_train = sc_y.fit_transform(y_train)

X_train

array([[-1.00, 2.65, -0.77, 0.26, 0.12],

[1.00, -0.38, -0.77, -0.25, 0.46],

[-1.00, -0.38, 1.29, -1.98, -1.53],

[-1.00, -0.38, 1.29, 0.05, -1.11],

[1.00, -0.38, -0.77, 1.64, 1.72],

[-1.00, -0.38, 1.29, -0.08, -0.17],

[1.00, -0.38, -0.77, 0.95, 0.99],

[1.00, -0.38, -0.77, -0.60, -0.48]])

X_test

array([[-1.00, 2.65, -0.77, -1.46, -0.90],

[-1.00, 2.65, -0.77, 1.98, 2.14]])

y_train

array([1, 1, 1, 0, 1, 0, 0, 1])

2. Regression

In statistics, regression analysis is a statistical process for estimating the relationships between variables. It includes many techniques for modeling and analyzing several variables when the focus is on the relationship between a dependent variable and one or more independent variables (or predictors). More specifically, regression analysis helps us understand how the value of the dependent variable changes when the value of one of the independent variables is varied while the other independent variables are held fixed.

Regression analysis is widely used for prediction and forecasting, where its use overlaps substantially with the field of ML. It is also used to understand which of the independent variables are related to the dependent variable, and to explore the forms of these relationships. In limited circumstances, regression analysis can be used to infer causal relationships between the independent and dependent variables. However, this can lead to illusory or false relationships, so caution is advised: correlation does not imply causation.

Many techniques have been developed for carrying out regression analysis. Familiar methods such as linear regression and ordinary least squares regression are parametric, in that the regression function is defined in terms of a finite number of unknown parameters that are estimated from the data.

2.1 Simple Linear Regression

The general linear model can be expressed as:

$$ Y = \alpha_0 + \alpha_1 X_1 + \alpha_2 X_2 + \alpha_3 X_3 + \dots + \alpha_n X_n $$

Simple linear regression is the special case with a single independent variable, $Y = \alpha_0 + \alpha_1 X_1$. Here:

- Y: the dependent variable

- X: the independent variable(s)

- $\alpha$: the parameters, which measure the influence that the independent variables have on the dependent variable.
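To make the role of the parameters concrete, the sketch below estimates $\alpha_0$ and $\alpha_1$ by ordinary least squares with NumPy. The data are hypothetical and generated without noise from $Y = 1 + 2 X_1$, so the fit should recover those two values; real data would of course only be approximated by the line.

```python
import numpy as np

# Hypothetical data generated from Y = 1 + 2 * X_1 (no noise), for illustration only.
x1 = np.array([1.0, 2.0, 3.0, 4.0])
y = 1.0 + 2.0 * x1

# Design matrix: a column of ones for the intercept alpha_0, then the X_1 column.
A = np.column_stack([np.ones_like(x1), x1])

# Least squares finds the alphas that minimise the sum of squared residuals.
alpha, residuals, rank, singular_values = np.linalg.lstsq(A, y, rcond=None)
alpha_0, alpha_1 = alpha

print(alpha_0, alpha_1)
```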

# Simple Linear Regression

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Salary_Data.csv')

X = dataset.iloc[:, :-1].values

y = dataset.iloc[:, 1].values

dataset.head()

| | YearsExperience | Salary |
|---|---|---|
| 0 | 1.1 | 39343.0 |
| 1 | 1.3 | 46205.0 |
| 2 | 1.5 | 37731.0 |
| 3 | 2.0 | 43525.0 |
| 4 | 2.2 | 39891.0 |

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 1/3.0, random_state = 0)

# Fitting Simple Linear Regression to the Training set

from sklearn.linear_model import LinearRegression

regressor = LinearRegression()

regressor.fit(X_train, y_train)

LinearRegression(copy_X=True, fit_intercept=True, n_jobs=1, normalize=False)

# To learn more about the LinearRegression class

HTML('<iframe src="http://scikit-learn.org/stable/modules/generated/sklearn.linear_model.LinearRegression.html" width="800" height="600"></iframe>')

# Predicting the Test set results

y_pred = regressor.predict(X_test) # These are the predicted salaries

# Some configurations for matplotlib

%matplotlib inline

plt.rcParams['figure.figsize'] = (15, 8)

# Visualising the Training set results

plt.scatter(X_train, y_train, color = 'red')

plt.plot(X_train, regressor.predict(X_train), color = 'blue')

plt.title('Salary vs Experience (Training set)')

plt.xlabel('Years of Experience')

plt.ylabel('Salary')

plt.show()

# Visualising the Test set results

plt.scatter(X_train, y_train, color = 'green') # training points

plt.scatter(X_test, y_test, color = 'red') # test points

plt.plot(X_train, regressor.predict(X_train), color = 'blue')

plt.title('Salary vs Experience (Test set)')

plt.xlabel('Years of Experience')

plt.ylabel('Salary')

plt.show()

2.2 Multiple Linear Regression

# Multiple Linear Regression

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/50_Startups.csv')

X = dataset.iloc[:, :-1].values

y = dataset.iloc[:, 4].values

dataset.head()

| | R&D Spend | Administration | Marketing Spend | State | Profit |
|---|---|---|---|---|---|
| 0 | 165349.20 | 136897.80 | 471784.10 | New York | 192261.83 |
| 1 | 162597.70 | 151377.59 | 443898.53 | California | 191792.06 |
| 2 | 153441.51 | 101145.55 | 407934.54 | Florida | 191050.39 |
| 3 | 144372.41 | 118671.85 | 383199.62 | New York | 182901.99 |
| 4 | 142107.34 | 91391.77 | 366168.42 | Florida | 166187.94 |

# Encoding categorical data

from sklearn.preprocessing import LabelEncoder, OneHotEncoder

labelencoder = LabelEncoder()

X[:, 3] = labelencoder.fit_transform(X[:, 3])

onehotencoder = OneHotEncoder(categorical_features = [3])

X = onehotencoder.fit_transform(X).toarray()

np.set_printoptions(formatter={'float': lambda x: "{0:0.2f}".format(x)})

X[0:10,:]

array([[0.00, 0.00, 1.00, 165349.20, 136897.80, 471784.10],

[1.00, 0.00, 0.00, 162597.70, 151377.59, 443898.53],

[0.00, 1.00, 0.00, 153441.51, 101145.55, 407934.54],

[0.00, 0.00, 1.00, 144372.41, 118671.85, 383199.62],

[0.00, 1.00, 0.00, 142107.34, 91391.77, 366168.42],

[0.00, 0.00, 1.00, 131876.90, 99814.71, 362861.36],

[1.00, 0.00, 0.00, 134615.46, 147198.87, 127716.82],

[0.00, 1.00, 0.00, 130298.13, 145530.06, 323876.68],

[0.00, 0.00, 1.00, 120542.52, 148718.95, 311613.29],

[1.00, 0.00, 0.00, 123334.88, 108679.17, 304981.62]])

# Avoiding the Dummy Variable Trap

X = X[:, 1:]

X[0:10,:]

array([[0.00, 1.00, 165349.20, 136897.80, 471784.10],

[0.00, 0.00, 162597.70, 151377.59, 443898.53],

[1.00, 0.00, 153441.51, 101145.55, 407934.54],

[0.00, 1.00, 144372.41, 118671.85, 383199.62],

[1.00, 0.00, 142107.34, 91391.77, 366168.42],

[0.00, 1.00, 131876.90, 99814.71, 362861.36],

[0.00, 0.00, 134615.46, 147198.87, 127716.82],

[1.00, 0.00, 130298.13, 145530.06, 323876.68],

[0.00, 1.00, 120542.52, 148718.95, 311613.29],

[0.00, 0.00, 123334.88, 108679.17, 304981.62]])
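Why drop one dummy column? With an intercept in the model, the full set of one-hot columns always sums to a column of ones, so the columns are linearly dependent and the least-squares coefficients are not unique. The sketch below checks this with the matrix rank, using the state dummies of the first five rows printed above; the intercept column is added by hand only for this demonstration.

```python
import numpy as np

# State dummies of the first five rows shown above (before dropping a column).
dummies = np.array([
    [0, 0, 1],
    [1, 0, 0],
    [0, 1, 0],
    [0, 0, 1],
    [0, 1, 0],
], dtype=float)
intercept = np.ones((len(dummies), 1))

full = np.hstack([intercept, dummies])            # intercept + all 3 dummies
reduced = np.hstack([intercept, dummies[:, 1:]])  # intercept + 2 dummies

# A rank lower than the number of columns means the columns are collinear.
print(np.linalg.matrix_rank(full), full.shape[1])
print(np.linalg.matrix_rank(reduced), reduced.shape[1])
```

After dropping the first dummy, the rank equals the number of columns, and the dropped category becomes the baseline that the other two coefficients are measured against.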

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.3, random_state = 0)

# Fitting Multiple Linear Regression to the Training set

from sklearn.linear_model import LinearRegression

regressor = LinearRegression()

regressor.fit(X_train, y_train)

# Predicting the Test set results

y_pred = regressor.predict(X_test)

y_test,y_pred

(array([103282.38, 144259.40, 146121.95, 77798.83, 191050.39, 105008.31,

81229.06, 97483.56, 110352.25, 166187.94, 96778.92, 96479.51,

105733.54, 96712.80, 124266.90]),

array([104282.76, 132536.88, 133910.85, 72584.77, 179920.93, 114549.31,

66444.43, 98404.97, 114499.83, 169367.51, 96522.63, 88040.67,

110949.99, 90419.19, 128020.46]))

import matplotlib.pyplot as plt

# Some configurations for matplotlib

%matplotlib inline

plt.rcParams['figure.figsize'] = (15, 8)

N = 15

x1 = np.linspace(0, 10, N, endpoint=True)

plt.plot(x1, y_test, '-')

plt.plot(x1, y_pred, '--')

plt.xlim([-2, 12])

plt.show()

print("real profit \t profit predicted")

for i in range(0,15):
    print("{} \t {}".format(y_test[i], y_pred[i]))

real profit 	 profit predicted

103282.38 	 104282.76472171725

144259.4 	 132536.88499212448

146121.95 	 133910.8500776713

77798.83 	 72584.77489417307

191050.39 	 179920.9276189077

105008.31 	 114549.31079233429

81229.06 	 66444.4326134641

97483.56 	 98404.96840122048

110352.25 	 114499.82808602191

166187.94 	 169367.5063989582

96778.92 	 96522.62539980911

96479.51 	 88040.67182870372

105733.54 	 110949.99405525548

96712.8 	 90419.18978509636

124266.9 	 128020.46250064336
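Comparing the two columns by eye only goes so far. One possible way to summarise the fit is with standard regression metrics from `sklearn.metrics`; the sketch below uses the `y_test` and `y_pred` values printed above (rounded to two decimals, as in the earlier array output). The choice of mean absolute error and R² is an illustrative assumption, not part of the original notebook.

```python
import numpy as np
from sklearn.metrics import mean_absolute_error, r2_score

# Real and predicted profits, copied from the output above.
y_test = np.array([103282.38, 144259.40, 146121.95, 77798.83, 191050.39, 105008.31,
                   81229.06, 97483.56, 110352.25, 166187.94, 96778.92, 96479.51,
                   105733.54, 96712.80, 124266.90])
y_pred = np.array([104282.76, 132536.88, 133910.85, 72584.77, 179920.93, 114549.31,
                   66444.43, 98404.97, 114499.83, 169367.51, 96522.63, 88040.67,
                   110949.99, 90419.19, 128020.46])

# Mean absolute error: average size of the prediction error, in profit units.
mae = mean_absolute_error(y_test, y_pred)

# R^2: fraction of the variance in y_test explained by the predictions (1.0 is perfect).
r2 = r2_score(y_test, y_pred)

print("MAE: {:.2f}".format(mae))
print("R^2: {:.3f}".format(r2))
```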

2.3 Polynomial Regression

# Polynomial Regression

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Position_Salaries.csv')

X = dataset.iloc[:, 1:2].values

y = dataset.iloc[:, 2].values

dataset

| | Position | Level | Salary |
|---|---|---|---|
| 0 | Business Analyst | 1 | 45000 |
| 1 | Junior Consultant | 2 | 50000 |
| 2 | Senior Consultant | 3 | 60000 |
| 3 | Manager | 4 | 80000 |
| 4 | Country Manager | 5 | 110000 |
| 5 | Region Manager | 6 | 150000 |
| 6 | Partner | 7 | 200000 |
| 7 | Senior Partner | 8 | 300000 |
| 8 | C-level | 9 | 500000 |
| 9 | CEO | 10 | 1000000 |

# Splitting the dataset into the Training set and Test set

# Not needed here because the dataset is too small

"""from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 0)"""

# Feature Scaling

# Not needed here: ordinary least squares predictions do not depend on the scale of the features

"""from sklearn.preprocessing import StandardScaler

sc_X = StandardScaler()

X_train = sc_X.fit_transform(X_train)

X_test = sc_X.transform(X_test)"""

# Fitting Linear Regression to the dataset

from sklearn.linear_model import LinearRegression

lin_reg = LinearRegression()

lin_reg.fit(X, y)

# Fitting Polynomial Regression to the dataset

from sklearn.preprocessing import PolynomialFeatures

poly_reg = PolynomialFeatures(degree = 2)

X_poly = poly_reg.fit_transform(X)

lin_reg_2 = LinearRegression()

lin_reg_2.fit(X_poly, y)

LinearRegression(copy_X=True, fit_intercept=True, n_jobs=1, normalize=False)

np.set_printoptions(formatter={'float': lambda x: "{0:0.2f}".format(x)})

X_poly

array([[1.00, 1.00, 1.00],

[1.00, 2.00, 4.00],

[1.00, 3.00, 9.00],

[1.00, 4.00, 16.00],

[1.00, 5.00, 25.00],

[1.00, 6.00, 36.00],

[1.00, 7.00, 49.00],

[1.00, 8.00, 64.00],

[1.00, 9.00, 81.00],

[1.00, 10.00, 100.00]])

# Visualising the Linear Regression results

plt.scatter(X, y, color = 'red')

plt.plot(X, lin_reg.predict(X), color = 'blue')

plt.title('Truth or Bluff (Linear Regression)')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

# Visualising the Polynomial Regression results

plt.scatter(X, y, color = 'red')

plt.plot(X, lin_reg_2.predict(poly_reg.fit_transform(X)), color = 'blue')

plt.title('Truth or Bluff (Polynomial Regression) using a polynom of degree =2')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

# Now let's see what happens with degree 4

# Fitting Polynomial Regression to the dataset

from sklearn.preprocessing import PolynomialFeatures

poly_reg = PolynomialFeatures(degree = 4)

X_poly = poly_reg.fit_transform(X)

lin_reg_2 = LinearRegression()

lin_reg_2.fit(X_poly, y)

# Visualising the Polynomial Regression results

plt.scatter(X, y, color = 'red')

plt.plot(X, lin_reg_2.predict(poly_reg.fit_transform(X)), color = 'blue')

plt.title('Truth or Bluff (Polynomial Regression) using a polynom of degree =4')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

# Visualising the Polynomial Regression results (for higher resolution and smoother curve)

X_grid = np.arange(X.min(), X.max(), 0.1)

X_grid = X_grid.reshape((len(X_grid), 1))

plt.scatter(X, y, color = 'red')

plt.plot(X_grid, lin_reg_2.predict(poly_reg.fit_transform(X_grid)), color = 'blue')

plt.title('Truth or Bluff (Polynomial Regression)')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

# Predicting a new result with Linear Regression (predict expects a 2D array)

lin_reg.predict([[6.5]])

array([330378.79])

# Predicting a new result with Polynomial Regression

lin_reg_2.predict(poly_reg.transform([[6.5]]))

array([158862.45])
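The two-step pattern above (expand the features, then fit a linear model) can also be packaged as a single estimator with `make_pipeline`, so the polynomial expansion is applied automatically at prediction time. This is a sketch rather than the notebook's own approach; the levels and salaries are typed in from the Position_Salaries table shown earlier instead of being read from the CSV file.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import PolynomialFeatures

# Level and Salary columns from the table shown earlier.
levels = np.arange(1, 11).reshape(-1, 1)
salaries = np.array([45000, 50000, 60000, 80000, 110000,
                     150000, 200000, 300000, 500000, 1000000])

# The pipeline expands each level into [1, x, x^2, x^3, x^4], then fits a linear model.
model = make_pipeline(PolynomialFeatures(degree=4), LinearRegression())
model.fit(levels, salaries)

# New inputs go through the same expansion before reaching the regressor.
prediction = model.predict([[6.5]])
print(prediction)
```

With the pipeline there is no separate `poly_reg` object to remember, which removes the risk of forgetting the transformation (or refitting it by accident) when predicting.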

2.4 Support Vector Regression

# SVR

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

np.set_printoptions(formatter={'float': lambda x: "{0:0.2f}".format(x)})

# Some configurations for matplotlib

%matplotlib inline

plt.rcParams['figure.figsize'] = (15, 8)

# Importing the dataset

dataset = pd.read_csv('../data/Position_Salaries.csv')

X = dataset.iloc[:, 1:2].values

y = dataset.iloc[:, 2].values

X,y

(array([[ 1],

[ 2],

[ 3],

[ 4],

[ 5],

[ 6],

[ 7],

[ 8],

[ 9],

[10]]),

array([ 45000, 50000, 60000, 80000, 110000, 150000, 200000,

300000, 500000, 1000000]))

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc_X = StandardScaler()

sc_y = StandardScaler()

X = sc_X.fit_transform(X)

# StandardScaler expects a 2D array, so reshape y into a column and flatten it again afterwards

y = sc_y.fit_transform(y.reshape(-1, 1)).ravel()

# Fitting SVR to the dataset

from sklearn.svm import SVR

regressor = SVR(kernel = 'rbf') #Gaussian kernel

regressor.fit(X, y)

# Predicting a new result

y_pred = sc_y.inverse_transform(regressor.predict(sc_X.transform(np.array([[6.5]]))).reshape(-1, 1))

# Visualising the SVR results

plt.scatter(X, y, color = 'red')

plt.plot(X, regressor.predict(X), color = 'blue')

plt.title('Truth or Bluff (SVR)')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

# Visualising the SVR results (for higher resolution and smoother curve)

X_grid = np.arange(X.min(), X.max(), 0.01) # step of 0.01 rather than 0.1 because the data is feature-scaled

X_grid = X_grid.reshape((len(X_grid), 1))

plt.scatter(X, y, color = 'red')

plt.plot(X_grid, regressor.predict(X_grid), color = 'blue')

plt.title('Truth or Bluff (SVR)')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

A = np.array([[6.5]])

y_pred = sc_y.inverse_transform(regressor.predict(sc_X.transform(A)).reshape(-1, 1)).ravel()

y_pred

array([170370.02])
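The manual scale, fit, and inverse-transform steps above can also be bundled into one estimator. The following is a minimal sketch, not the notebook's original approach: it assumes the ten level/salary pairs printed earlier are typed in by hand instead of read from the CSV, and the names `levels`, `salaries`, `model` and `svr_pred` are illustrative.

```python
import numpy as np
from sklearn.compose import TransformedTargetRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Assumption: the data is copied from the output shown above, not loaded from disk.
levels = np.arange(1, 11).reshape(-1, 1)
salaries = np.array([45000, 50000, 60000, 80000, 110000,
                     150000, 200000, 300000, 500000, 1000000], dtype=float)

# The inner pipeline scales X; the outer wrapper scales y and undoes the
# scaling automatically when predicting.
model = TransformedTargetRegressor(
    regressor=make_pipeline(StandardScaler(), SVR(kernel="rbf")),
    transformer=StandardScaler(),
)
model.fit(levels, salaries)

svr_pred = model.predict(np.array([[6.5]]))
print(svr_pred)
```

With this layout the prediction comes back in salary units, so no explicit `inverse_transform` call is needed.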

2.5 Decision Tree Regression¶

# Decision Tree Regression

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Position_Salaries.csv')

X = dataset.iloc[:, 1:2].values

y = dataset.iloc[:, 2].values

# Fitting Decision Tree Regression to the dataset

from sklearn.tree import DecisionTreeRegressor

regressor = DecisionTreeRegressor(random_state = 0)

regressor.fit(X, y)

# Predicting a new result

y_pred = regressor.predict([[6.5]])

# Visualising the Decision Tree Regression results (higher resolution)

X_grid = np.arange(X.min(), X.max(), 0.01)

X_grid = X_grid.reshape((len(X_grid), 1))

plt.scatter(X, y, color = 'red')

plt.scatter(np.array([6.5]), y_pred, color = 'green')

plt.plot(X_grid, regressor.predict(X_grid), color = 'blue')

plt.title('Truth or Bluff (Decision Tree Regression)')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

y_pred

array([150000.00])
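The prediction above is exactly one of the training salaries because a regression tree outputs a constant value per leaf. The sketch below illustrates this under the assumption that the ten level/salary pairs are typed in by hand; the names `levels`, `salaries`, `tree`, `nearby` and `distinct` are illustrative.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Assumption: the data is copied from the dataset shown in this notebook.
levels = np.arange(1, 11).reshape(-1, 1)
salaries = np.array([45000, 50000, 60000, 80000, 110000,
                     150000, 200000, 300000, 500000, 1000000], dtype=float)

tree = DecisionTreeRegressor(random_state=0).fit(levels, salaries)

# Queries that fall in the same leaf all receive the same salary.
nearby = tree.predict(np.array([[5.6], [6.0], [6.4]]))
print(nearby)

# Over a fine grid the tree only ever returns salaries seen during training.
grid = np.arange(1, 10, 0.01).reshape(-1, 1)
distinct = np.unique(tree.predict(grid))
print(len(distinct))
```

This is why the plotted curve looks like a staircase rather than a smooth line.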

2.6 Random Forest Regression¶

# Random Forest Regression

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Some configurations for matplotlib

%matplotlib inline

plt.rcParams['figure.figsize'] = (15, 8)

# Importing the dataset

dataset = pd.read_csv('../data/Position_Salaries.csv')

X = dataset.iloc[:, 1:2].values

y = dataset.iloc[:, 2].values

dataset

| | Position | Level | Salary |
|---|---|---|---|
| 0 | Business Analyst | 1 | 45000 |
| 1 | Junior Consultant | 2 | 50000 |
| 2 | Senior Consultant | 3 | 60000 |
| 3 | Manager | 4 | 80000 |
| 4 | Country Manager | 5 | 110000 |
| 5 | Region Manager | 6 | 150000 |
| 6 | Partner | 7 | 200000 |
| 7 | Senior Partner | 8 | 300000 |
| 8 | C-level | 9 | 500000 |
| 9 | CEO | 10 | 1000000 |

# Fitting Random Forest Regression to the dataset

from sklearn.ensemble import RandomForestRegressor

regressor = RandomForestRegressor(n_estimators = 10, random_state = 0)

regressor.fit(X, y)

# Predicting a new result

y_pred = regressor.predict([[6.5]])

# Visualising the Random Forest Regression results (higher resolution)

X_grid = np.arange(X.min(), X.max(), 0.01)

X_grid = X_grid.reshape((len(X_grid), 1))

plt.scatter(X, y, color = 'red')

plt.plot(X_grid, regressor.predict(X_grid), color = 'blue')

plt.scatter(np.array([6.5]), y_pred, color = 'green')

plt.title('Truth or Bluff (Random Forest Regression)')

plt.xlabel('Position level')

plt.ylabel('Salary')

plt.show()

y_pred

array([167000.00])
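A random forest regressor predicts by averaging the predictions of its individual trees, which is why the result above need not coincide with any training salary. The sketch below checks this by hand; it assumes the ten level/salary pairs are typed in directly, and `forest`, `per_tree` and `forest_pred` are illustrative names.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

# Assumption: the data is copied from the dataset table shown above.
levels = np.arange(1, 11).reshape(-1, 1)
salaries = np.array([45000, 50000, 60000, 80000, 110000,
                     150000, 200000, 300000, 500000, 1000000], dtype=float)

forest = RandomForestRegressor(n_estimators=10, random_state=0).fit(levels, salaries)

query = np.array([[6.5]])
# Each fitted tree is available in estimators_ and can predict on its own.
per_tree = np.array([t.predict(query)[0] for t in forest.estimators_])
forest_pred = forest.predict(query)[0]

print(per_tree)
print(per_tree.mean(), forest_pred)
```

Printing `per_tree` shows how much the individual trees disagree before averaging.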

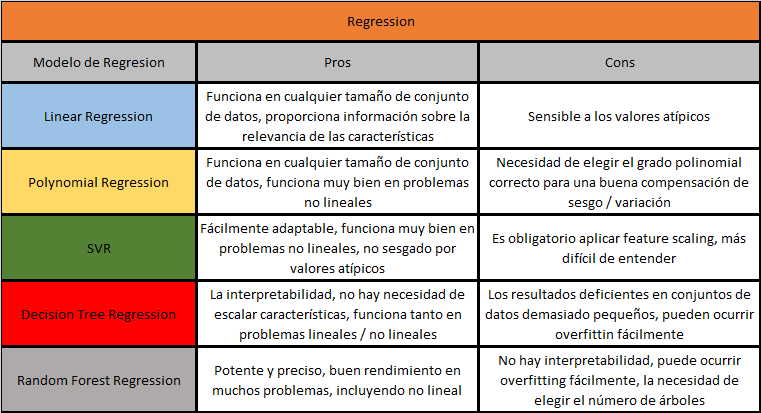

Pros and Cons¶

3. Classification¶

3.1 Logistic Regression¶

# Logistic Regression

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

dataset.head(10)

| | User ID | Gender | Age | EstimatedSalary | Purchased |
|---|---|---|---|---|---|
| 0 | 15624510 | Male | 19 | 19000 | 0 |
| 1 | 15810944 | Male | 35 | 20000 | 0 |
| 2 | 15668575 | Female | 26 | 43000 | 0 |
| 3 | 15603246 | Female | 27 | 57000 | 0 |
| 4 | 15804002 | Male | 19 | 76000 | 0 |
| 5 | 15728773 | Male | 27 | 58000 | 0 |
| 6 | 15598044 | Female | 27 | 84000 | 0 |
| 7 | 15694829 | Female | 32 | 150000 | 1 |
| 8 | 15600575 | Male | 25 | 33000 | 0 |
| 9 | 15727311 | Female | 35 | 65000 | 0 |

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting Logistic Regression to the Training set

from sklearn.linear_model import LogisticRegression

classifier = LogisticRegression(random_state = 0)

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Logistic Regression (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Logistic Regression (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()
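The confusion matrix `cm` is computed above but never inspected. As a minimal sketch of how to read one, the example below uses a small made-up pair of label vectors (an assumption, not the notebook's test set) and derives the accuracy from the four cells.

```python
import numpy as np
from sklearn.metrics import accuracy_score, confusion_matrix

# Hypothetical labels, used only to illustrate the layout of the matrix.
y_true = np.array([0, 0, 0, 0, 1, 1, 1, 0, 1, 0])
y_hat = np.array([0, 0, 1, 0, 1, 0, 1, 0, 1, 0])

# Rows are true classes, columns are predicted classes.
cm_demo = confusion_matrix(y_true, y_hat)
tn, fp, fn, tp = cm_demo.ravel()

# Accuracy is the share of samples on the diagonal.
accuracy = (tn + tp) / cm_demo.sum()
print(cm_demo)
print(accuracy, accuracy_score(y_true, y_hat))
```

The same unpacking applies to the `cm` of any binary classifier in this chapter.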

3.2 KNN (K nearest neighbors)¶

# K-Nearest Neighbors (K-NN)

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting K-NN to the Training set

from sklearn.neighbors import KNeighborsClassifier

classifier = KNeighborsClassifier(n_neighbors = 5, metric = 'minkowski', p = 2)

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('K-NN (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('K-NN (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()
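The classifier above uses `metric = 'minkowski'` with `p = 2`, which is the ordinary Euclidean distance. The sketch below spells out the Minkowski formula with plain NumPy; `minkowski_distance` is an illustrative helper, not a scikit-learn function.

```python
import numpy as np

def minkowski_distance(a, b, p):
    """Minkowski distance: (sum |a_i - b_i|^p)^(1/p)."""
    return float(np.sum(np.abs(a - b) ** p) ** (1.0 / p))

a = np.array([0.0, 0.0])
b = np.array([3.0, 4.0])

d2 = minkowski_distance(a, b, 2)  # p = 2: Euclidean distance
d1 = minkowski_distance(a, b, 1)  # p = 1: Manhattan distance
print(d2, np.linalg.norm(a - b))
print(d1)
```

Because the distance mixes both features directly, feature scaling matters for K-NN, which is why the data is standardised first.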

3.3 Support Vector Machine (SVM)¶

# Support Vector Machine (SVM)

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting SVM to the Training set

from sklearn.svm import SVC

classifier = SVC(kernel = 'linear', random_state = 0)

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('SVM (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('SVM (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

3.4 Kernel SVM¶

# Kernel SVM

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting Kernel SVM to the Training set

from sklearn.svm import SVC

classifier = SVC(kernel = 'rbf', random_state = 0)

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Kernel SVM (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Kernel SVM (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()
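The `kernel = 'rbf'` option used above measures similarity between two points as exp(-gamma * squared distance). The sketch below computes that kernel matrix by hand on a few random points and compares it with scikit-learn's `rbf_kernel`; the points and the `gamma` value are arbitrary assumptions chosen for illustration.

```python
import numpy as np
from sklearn.metrics.pairwise import rbf_kernel

# Arbitrary sample points and gamma, for illustration only.
rng = np.random.default_rng(0)
points = rng.normal(size=(5, 2))
gamma = 0.5

# Pairwise squared Euclidean distances via broadcasting.
sq_dists = ((points[:, None, :] - points[None, :, :]) ** 2).sum(axis=2)
manual = np.exp(-gamma * sq_dists)

reference = rbf_kernel(points, gamma=gamma)
print(np.allclose(manual, reference))
```

Nearby points get a similarity close to 1 and distant points a similarity close to 0, which is what lets the decision boundary curve around clusters.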

3.5 Naive Bayes¶

# Naive Bayes

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting Naive Bayes to the Training set

from sklearn.naive_bayes import GaussianNB

classifier = GaussianNB()

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Naive Bayes (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Naive Bayes (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

3.6 Decision Tree Classification¶

# Decision Tree Classification

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting Decision Tree Classification to the Training set

from sklearn.tree import DecisionTreeClassifier

classifier = DecisionTreeClassifier(criterion = 'entropy', random_state = 0)

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Decision Tree Classification (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Decision Tree Classification (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()
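The tree above is built with `criterion = 'entropy'`, meaning splits are chosen to reduce the entropy of the class labels in each node. As a rough sketch of what that quantity measures, the helper below (an illustrative function, not part of scikit-learn) computes the entropy in bits of a small label list.

```python
import numpy as np

def entropy(labels):
    """Shannon entropy in bits: -sum(p * log2(p)) over the class frequencies."""
    _, counts = np.unique(labels, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())

balanced = entropy([0, 0, 1, 1])  # evenly mixed node
pure = entropy([1, 1, 1, 1])      # node with a single class
skewed = entropy([0, 0, 0, 1])    # mostly one class
print(balanced, pure, skewed)
```

A split is attractive when the child nodes have lower entropy than the parent, that is, when they are closer to containing a single class.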

3.7 Random Forest¶

# Random Forest Classification

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Social_Network_Ads.csv')

X = dataset.iloc[:, [2, 3]].values

y = dataset.iloc[:, 4].values

# Splitting the dataset into the Training set and Test set

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.25, random_state = 0)

# Feature Scaling

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

X_train = sc.fit_transform(X_train)

X_test = sc.transform(X_test)

# Fitting Random Forest Classification to the Training set

from sklearn.ensemble import RandomForestClassifier

classifier = RandomForestClassifier(n_estimators = 10, criterion = 'entropy', random_state = 0)

classifier.fit(X_train, y_train)

# Predicting the Test set results

y_pred = classifier.predict(X_test)

# Making the Confusion Matrix

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

# Visualising the Training set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_train, y_train

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Random Forest Classification (Training set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

# Visualising the Test set results

from matplotlib.colors import ListedColormap

X_set, y_set = X_test, y_test

X1, X2 = np.meshgrid(np.arange(start = X_set[:, 0].min() - 1, stop = X_set[:, 0].max() + 1, step = 0.01),

np.arange(start = X_set[:, 1].min() - 1, stop = X_set[:, 1].max() + 1, step = 0.01))

plt.contourf(X1, X2, classifier.predict(np.array([X1.ravel(), X2.ravel()]).T).reshape(X1.shape),

alpha = 0.75, cmap = ListedColormap(('red', 'green')))

plt.xlim(X1.min(), X1.max())

plt.ylim(X2.min(), X2.max())

for i, j in enumerate(np.unique(y_set)):

    plt.scatter(X_set[y_set == j, 0], X_set[y_set == j, 1],

                color = ListedColormap(('red', 'green'))(i), label = j)

plt.title('Random Forest Classification (Test set)')

plt.xlabel('Age')

plt.ylabel('Estimated Salary')

plt.legend()

plt.show()

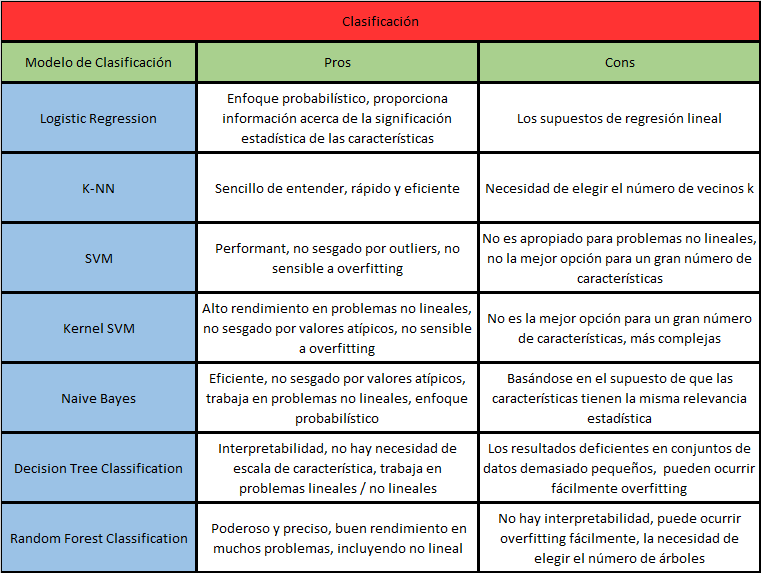

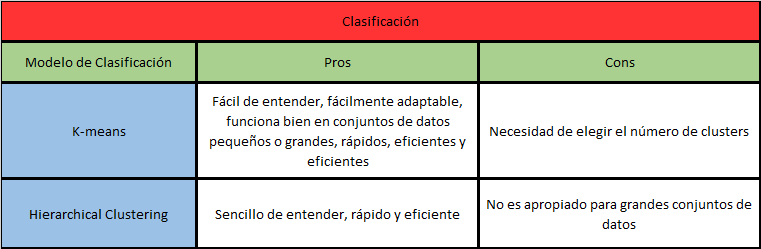

Pros and Cons¶

4. Clustering¶

4.1 K-means¶

# K-Means Clustering

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Mall_Customers.csv')

X = dataset.iloc[:, [3, 4]].values

# y = dataset.iloc[:, 3].values

dataset.head()

| | CustomerID | Genre | Age | Annual Income (k$) | Spending Score (1-100) |
|---|---|---|---|---|---|
| 0 | 1 | Male | 19 | 15 | 39 |
| 1 | 2 | Male | 21 | 15 | 81 |
| 2 | 3 | Female | 20 | 16 | 6 |
| 3 | 4 | Female | 23 | 16 | 77 |
| 4 | 5 | Female | 31 | 17 | 40 |

# Splitting the dataset into the Training set and Test set

"""from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 0)"""

# Feature Scaling

"""from sklearn.preprocessing import StandardScaler

sc_X = StandardScaler()

X_train = sc_X.fit_transform(X_train)

X_test = sc_X.transform(X_test)

sc_y = StandardScaler()

y_train = sc_y.fit_transform(y_train)"""

# Using the elbow method to find the optimal number of clusters

from sklearn.cluster import KMeans

wcss = []

for i in range(1, 11):

    kmeans = KMeans(n_clusters = i, init = 'k-means++', random_state = 42)

    kmeans.fit(X)

    wcss.append(kmeans.inertia_)

plt.plot(range(1, 11), wcss)

plt.title('The Elbow Method')

plt.xlabel('Number of clusters')

plt.ylabel('WCSS')

plt.show()
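The WCSS plotted by the elbow method is the within-cluster sum of squares, which scikit-learn exposes as `inertia_`. The sketch below recomputes it by hand; it uses synthetic blobs from `make_blobs` as a stand-in for the mall data (an assumption made so the example runs without the CSV), and sets `n_init` explicitly only to keep the run deterministic and quiet.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs

# Synthetic stand-in data; the notebook itself uses Mall_Customers.csv.
points, _ = make_blobs(n_samples=200, centers=5, random_state=42)

kmeans_demo = KMeans(n_clusters=5, init="k-means++", n_init=10, random_state=42).fit(points)

# Look up, for each sample, the centroid of the cluster it was assigned to.
assigned_centers = kmeans_demo.cluster_centers_[kmeans_demo.labels_]

# WCSS: sum of squared distances from each sample to its own centroid.
manual_wcss = ((points - assigned_centers) ** 2).sum()
print(manual_wcss, kmeans_demo.inertia_)
```

WCSS always decreases as the number of clusters grows, so the elbow method looks for the point where the decrease flattens out rather than for a minimum.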

# Fitting K-Means to the dataset

kmeans = KMeans(n_clusters = 5, init = 'k-means++', random_state = 42)

y_kmeans = kmeans.fit_predict(X)

# Visualising the clusters

plt.scatter(X[y_kmeans == 0, 0], X[y_kmeans == 0, 1], s = 100, c = 'red', label = 'Cluster 1')

plt.scatter(X[y_kmeans == 1, 0], X[y_kmeans == 1, 1], s = 100, c = 'blue', label = 'Cluster 2')

plt.scatter(X[y_kmeans == 2, 0], X[y_kmeans == 2, 1], s = 100, c = 'green', label = 'Cluster 3')

plt.scatter(X[y_kmeans == 3, 0], X[y_kmeans == 3, 1], s = 100, c = 'cyan', label = 'Cluster 4')

plt.scatter(X[y_kmeans == 4, 0], X[y_kmeans == 4, 1], s = 100, c = 'magenta', label = 'Cluster 5')

plt.scatter(kmeans.cluster_centers_[:, 0], kmeans.cluster_centers_[:, 1], s = 300, c = 'yellow', label = 'Centroids')

plt.title('Clusters of customers')

plt.xlabel('Annual Income (k$)')

plt.ylabel('Spending Score (1-100)')

plt.legend()

plt.show()

4.2 Hierarchical Clustering¶

# Hierarchical Clustering

# Importing the libraries

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

# Importing the dataset

dataset = pd.read_csv('../data/Mall_Customers.csv')

X = dataset.iloc[:, [3, 4]].values

# y = dataset.iloc[:, 3].values

# Splitting the dataset into the Training set and Test set

"""from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = 0.2, random_state = 0)"""

# Feature Scaling

"""from sklearn.preprocessing import StandardScaler

sc_X = StandardScaler()

X_train = sc_X.fit_transform(X_train)

X_test = sc_X.transform(X_test)

sc_y = StandardScaler()

y_train = sc_y.fit_transform(y_train)"""

# Using the dendrogram to find the optimal number of clusters

import scipy.cluster.hierarchy as sch

dendrogram = sch.dendrogram(sch.linkage(X, method = 'ward'))

plt.title('Dendrogram')

plt.xlabel('Customers')

plt.ylabel('Euclidean distances')

plt.show()
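Once a number of clusters has been read off the dendrogram, the same linkage matrix can be cut directly into flat clusters with SciPy. The sketch below shows one way to do that; it uses synthetic blobs as a stand-in for the mall data (an assumption so the example runs without the CSV), and `merges` and `flat_labels` are illustrative names.

```python
import numpy as np
import scipy.cluster.hierarchy as sch
from sklearn.datasets import make_blobs

# Synthetic stand-in data; the notebook itself uses Mall_Customers.csv.
points, _ = make_blobs(n_samples=60, centers=5, random_state=0)

# Same Ward linkage as used for the dendrogram.
merges = sch.linkage(points, method="ward")

# Cut the tree so that at most 5 flat clusters remain; labels start at 1.
flat_labels = sch.fcluster(merges, t=5, criterion="maxclust")
print(np.unique(flat_labels))
```

This avoids fitting a second model when the linkage matrix is already available, although `AgglomerativeClustering` remains convenient inside scikit-learn workflows.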

# Fitting Hierarchical Clustering to the dataset

from sklearn.cluster import AgglomerativeClustering

hc = AgglomerativeClustering(n_clusters = 5, linkage = 'ward') # Ward linkage always uses Euclidean distances

y_hc = hc.fit_predict(X)

# Visualising the clusters

plt.scatter(X[y_hc == 0, 0], X[y_hc == 0, 1], s = 100, c = 'red', label = 'Cluster 1')

plt.scatter(X[y_hc == 1, 0], X[y_hc == 1, 1], s = 100, c = 'blue', label = 'Cluster 2')

plt.scatter(X[y_hc == 2, 0], X[y_hc == 2, 1], s = 100, c = 'green', label = 'Cluster 3')

plt.scatter(X[y_hc == 3, 0], X[y_hc == 3, 1], s = 100, c = 'cyan', label = 'Cluster 4')

plt.scatter(X[y_hc == 4, 0], X[y_hc == 4, 1], s = 100, c = 'magenta', label = 'Cluster 5')

plt.title('Clusters of customers')

plt.xlabel('Annual Income (k$)')

plt.ylabel('Spending Score (1-100)')

plt.legend()

plt.show()

Pros and Cons¶
