Scalar and vector¶

Marcos Duarte, Renato Naville Watanabe

Laboratory of Biomechanics and Motor Control

Federal University of ABC, Brazil

Python handles mathematical operations with numeric scalars and vectors very well, and you can use Sympy for similar operations with abstract symbols. Let's briefly review scalars and vectors and show how to use Python for numerical calculations.

For a review about scalars and vectors, see chapter 2 of Ruina and Rudra's book.

Python setup¶

from IPython.display import IFrame

import math

import numpy as np

a = 2

b = 3

print('a =', a, ', b =', b)

print('a + b =', a + b)

print('a - b =', a - b)

print('a * b =', a * b)

print('a / b =', a / b)

print('a ** b =', a ** b)

print('sqrt(b) =', math.sqrt(b))

a = 2 , b = 3

a + b = 5

a - b = -1

a * b = 6

a / b = 0.6666666666666666

a ** b = 8

sqrt(b) = 1.7320508075688772

If you have a set of numbers, or an array, it is usually better to use Numpy: it is faster for large data sets and, combined with Scipy, offers many more mathematical functions.

a = 2

b = [3, 4, 5, 6, 7, 8]

b = np.array(b)

print('a =', a, ', b =', b)

print('a + b =', a + b)

print('a - b =', a - b)

print('a * b =', a * b)

print('a / b =', a / b)

print('a ** b =', a ** b)

print('np.sqrt(b) =', np.sqrt(b)) # use numpy functions for numpy arrays

a = 2 , b = [3 4 5 6 7 8]

a + b = [ 5 6 7 8 9 10]

a - b = [-1 -2 -3 -4 -5 -6]

a * b = [ 6 8 10 12 14 16]

a / b = [0.66666667 0.5 0.4 0.33333333 0.28571429 0.25 ]

a ** b = [ 8 16 32 64 128 256]

np.sqrt(b) = [1.73205081 2. 2.23606798 2.44948974 2.64575131 2.82842712]

Numpy applies each arithmetic operation between the single number in a and every element of the array b. Numpy calls this mechanism broadcasting.
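As a minimal sketch of what broadcasting does conceptually, the scalar can be stretched by hand into an array with the same shape as b and the two results compared. The name `a_stretched` is only illustrative, and Numpy does not need to build such an array internally.

```python
import numpy as np

a = 2                          # a single number (scalar)
b = np.array([3, 4, 5])        # a one-dimensional array

# Conceptually, broadcasting stretches the scalar to the shape of b ...
a_stretched = np.full(b.shape, a)

# ... so both forms give the same element-by-element result.
broadcast_sum = a + b
explicit_sum = a_stretched + b

print('a + b           =', broadcast_sum)
print('a_stretched + b =', explicit_sum)
print('same result?    ', np.array_equal(broadcast_sum, explicit_sum))
```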

When you have two arrays of the same size, Numpy performs the operations element by element:

a = np.array([1, 2, 3])

b = np.array([4, 5, 6])

print('a =', a, ', b =', b)

print('a + b =', a + b)

print('a - b =', a - b)

print('a * b =', a * b)

print('a / b =', a / b)

print('a ** b =', a ** b)

a = [1 2 3] , b = [4 5 6]

a + b = [5 7 9]

a - b = [-3 -3 -3]

a * b = [ 4 10 18]

a / b = [0.25 0.4 0.5 ]

a ** b = [ 1 32 729]
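To illustrate the same-size requirement, here is a small sketch of what happens when two one-dimensional arrays have different lengths. The array `c` is a hypothetical example that is not part of the text above.

```python
import numpy as np

a = np.array([1, 2, 3])        # three elements
c = np.array([4, 5])           # two elements (hypothetical example)

try:
    result = a + c             # the shapes (3,) and (2,) do not match
except ValueError as err:
    result = None
    print('Cannot add arrays with shapes', a.shape, 'and', c.shape)
    print('Numpy says:', err)
```

Numpy raises a `ValueError` here instead of guessing how to pair the elements.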

Vector¶

A vector is a quantity with magnitude (or length) and direction expressed numerically as an ordered list of values according to a coordinate reference system.

For example, position, force, and torque are physical quantities defined by vectors.

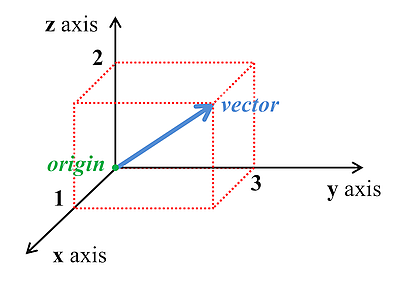

For instance, consider the position of a point in space represented by a vector:

The position of the point (the vector) above can be represented as a tuple of values:

$$ (x,\: y,\: z) \; \Rightarrow \; (1, 3, 2) $$

or in matrix form:

$$ \begin{bmatrix} x \\y \\z \end{bmatrix} \;\; \Rightarrow \;\; \begin{bmatrix} 1 \\3 \\2 \end{bmatrix}$$

We can use the Numpy array to represent the components of vectors.

For instance, the vector above is expressed in Python as:

a = np.array([1, 3, 2])

print('a =', a)

a = [1 3 2]

This is exactly like the arrays in the previous examples with scalars, so all the operations we performed there will give the same values.

However, now that we are dealing with vectors, some of those operations no longer make sense. For example, multiplication, division, power, and square root are not defined for vectors in the element-by-element way we calculated them.

A vector can also be represented as:

$$ \overrightarrow{\mathbf{a}} = a_x\hat{\mathbf{i}} + a_y\hat{\mathbf{j}} + a_z\hat{\mathbf{k}} $$

Where $\hat{\mathbf{i}},\, \hat{\mathbf{j}},\, \hat{\mathbf{k}}\,$ are unit vectors, each representing a direction, and $ a_x\hat{\mathbf{i}},\: a_y\hat{\mathbf{j}},\: a_z\hat{\mathbf{k}} $ are the vector components of the vector $\overrightarrow{\mathbf{a}}$.
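The representation above can be sketched in Numpy by writing the three unit vectors as arrays and summing the vector components. The names `i_hat`, `j_hat`, and `k_hat` are illustrative choices, and the scalar components reuse the position example (1, 3, 2).

```python
import numpy as np

# Unit vectors along the x, y, and z axes of a Cartesian system
i_hat = np.array([1, 0, 0])
j_hat = np.array([0, 1, 0])
k_hat = np.array([0, 0, 1])

# Scalar components taken from the position example (1, 3, 2)
ax, ay, az = 1, 3, 2

# The sum of the three vector components gives the vector itself
a = ax*i_hat + ay*j_hat + az*k_hat
print('a =', a)
```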

A unit vector (or versor) is a vector whose length (or norm) is 1.

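The unit vector of a non-zero vector $\overrightarrow{\mathbf{a}}$ is the unit vector codirectional with $\overrightarrow{\mathbf{a}}$, obtained by dividing the vector by its magnitude:

$$ \hat{\mathbf{u}} = \frac{\overrightarrow{\mathbf{a}}}{||\overrightarrow{\mathbf{a}}||} $$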

Magnitude (length or norm) of a vector¶

The magnitude (length) of a vector is often represented by the symbol $||\;||$. It is also known as the norm (or Euclidean norm) of the vector and is defined as:

$$ ||\overrightarrow{\mathbf{a}}|| = \sqrt{a_x^2+a_y^2+a_z^2} $$

The function `numpy.linalg.norm` calculates the norm:

a = np.array([1, 2, 3])

np.linalg.norm(a)

3.7416573867739413

Or we can use the definition and compute directly:

np.sqrt(np.sum(a*a))

3.7416573867739413

Then, the versor for the vector $ \overrightarrow{\mathbf{a}} = (1, 2, 3) $ is:

a = np.array([1, 2, 3])

u = a/np.linalg.norm(a)

print('u =', u)

u = [0.26726124 0.53452248 0.80178373]

And we can verify its magnitude is indeed 1:

np.linalg.norm(u)

1.0
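The division above assumes a non-zero vector, since the zero vector has no direction. A minimal sketch of a helper that makes this assumption explicit could look like the following; the function name `unit_vector` is hypothetical and not part of Numpy.

```python
import numpy as np

def unit_vector(v):
    """Return v divided by its norm; raise ValueError for the zero vector."""
    v = np.asarray(v, dtype=float)
    norm = np.linalg.norm(v)
    if norm == 0:
        raise ValueError('the zero vector has no direction')
    return v / norm

u = unit_vector([1, 2, 3])
print('u =', u)
print('||u|| =', np.linalg.norm(u))
```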

However, representing a vector as a tuple of values is only valid when the vector's origin coincides with the origin $ (0, 0, 0) $ of the coordinate system we adopted. For instance, consider the following vector:

Such a vector cannot be represented by $ (b_x, b_y, b_z) $ because this tuple describes the vector from the origin to the point B. To represent this vector exactly, we need the two vectors $ \mathbf{a} $ and $ \mathbf{b} $. This fact is important when we perform some calculations in Mechanics.
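As a sketch of this idea, assume that $ \mathbf{a} $ and $ \mathbf{b} $ are the position vectors of two points A and B and that the vector of interest goes from A to B. Its components then follow from component-wise subtraction (covered in the next section). The numeric values below are hypothetical and chosen only for illustration.

```python
import numpy as np

a = np.array([1, 1, 0])   # position of point A (hypothetical values)
b = np.array([3, 4, 2])   # position of point B (hypothetical values)

ab = b - a                # vector from A to B, under the assumption above
print('Vector from A to B =', ab)
print('Its length =', np.linalg.norm(ab))
```

Note that `ab` differs from `b`: the tuple in `b` alone describes the vector from the origin to B, not the vector that starts at A.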

Vector addition and subtraction¶

The addition of two vectors is another vector:

$$ \overrightarrow{\mathbf{a}} + \overrightarrow{\mathbf{b}} = (a_x\hat{\mathbf{i}} + a_y\hat{\mathbf{j}} + a_z\hat{\mathbf{k}}) + (b_x\hat{\mathbf{i}} + b_y\hat{\mathbf{j}} + b_z\hat{\mathbf{k}}) = (a_x+b_x)\hat{\mathbf{i}} + (a_y+b_y)\hat{\mathbf{j}} + (a_z+b_z)\hat{\mathbf{k}} $$

The subtraction of two vectors is also another vector:

$$ \overrightarrow{\mathbf{a}} - \overrightarrow{\mathbf{b}} = (a_x\hat{\mathbf{i}} + a_y\hat{\mathbf{j}} + a_z\hat{\mathbf{k}}) - (b_x\hat{\mathbf{i}} + b_y\hat{\mathbf{j}} + b_z\hat{\mathbf{k}}) = (a_x-b_x)\hat{\mathbf{i}} + (a_y-b_y)\hat{\mathbf{j}} + (a_z-b_z)\hat{\mathbf{k}} $$
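Component-wise addition and subtraction map directly to the `+` and `-` operators on Numpy arrays. The short sketch below reuses the arrays from the earlier same-size example; the names `s` and `d` are illustrative.

```python
import numpy as np

a = np.array([1, 2, 3])
b = np.array([4, 5, 6])

s = a + b   # (a_x + b_x, a_y + b_y, a_z + b_z)
d = a - b   # (a_x - b_x, a_y - b_y, a_z - b_z)

print('a + b =', s)
print('a - b =', d)
```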