Nonlinear Schrödinger as a Dynamical System

Ascona Winter School 2016

J. Colliander (UBC)

Overview of Lecture 2

- Conserved Quantities

- Bourgain's High/Low Frequency Decomposition

- $I$-Method

- Multilinear Correction Terms

- Applications

Lecture Notes

https://github.com/colliand/ascona2016

Conserved Quantities

$$ \begin{align*} {\mbox{Mass}}& = \| u \|_{L^2_x}^2 = \int_{\mathbb{R}^d} |u(t,x)|^2 dx. \\ {\mbox{Momentum}}& = {\textbf{p}}(u) = 2 \Im \int_{\mathbb{R}^d} {\overline{u}(t)} \nabla u (t) dx. \\ {\mbox{Energy}} & = H[u(t)] = \frac{1}{2} \int_{\mathbb{R}^d} |\nabla u(t) |^2 dx {\pm} \frac{1}{p+1} \int_{\mathbb{R}^d} |u(t)|^{p+1} dx . \end{align*} $$

Conserved

$$ \partial_t Q[u] = 0.$$

Almost Conserved

$$\big| \partial_t Q[u] \big| ~\mbox{is small}.$$

Conservation of Mass

From the equation $ (i \partial_t + \Delta) u = \pm |u|^{p-1} u $, we have: $$ u_t = i \Delta u \mp i |u|^{p-1} u$$ $$ {\overline{u}}_t = -i \Delta {\overline{u}} \pm i |u|^{p-1} {\overline{u}}$$

Thus, $$ \partial_t |u(t)|^2 = [ i \Delta u \mp i |u|^{p-1} u] \overline{u} + u [ -i \Delta {\overline{u}} \pm i |u|^{p-1} {\overline{u}}]$$

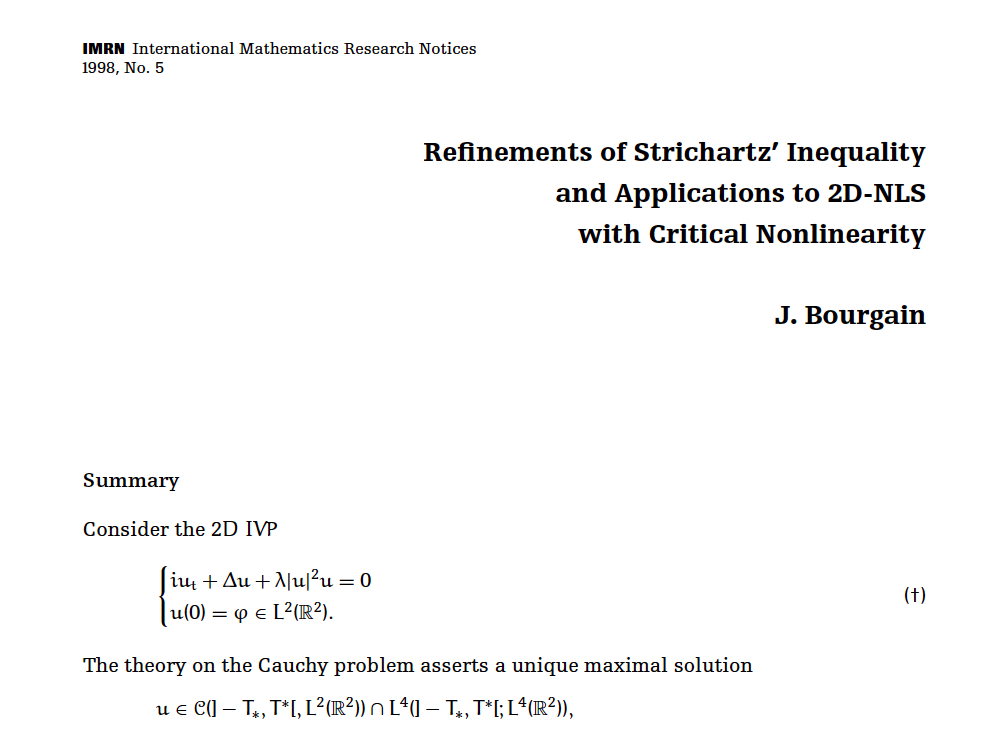
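Completing the computation, as a sketch assuming $u$ is smooth and decays fast enough to integrate by parts: the potential terms cancel, and what remains is a divergence whose flux is the momentum density.

$$ \begin{align*} \partial_t |u|^2 &= i \left( \overline{u} \Delta u - u \Delta \overline{u} \right) \mp i |u|^{p+1} \pm i |u|^{p+1} = i \nabla \cdot \left( \overline{u} \nabla u - u \nabla \overline{u} \right) \\ &= - \nabla \cdot \left( 2 \Im ( \overline{u} \nabla u ) \right), \\ \partial_t \int_{\mathbb{R}^d} |u(t,x)|^2 dx &= - \int_{\mathbb{R}^d} \nabla \cdot \left( 2 \Im ( \overline{u} \nabla u ) \right) dx = 0. \end{align*} $$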

Bourgain's High/Low Method

Consider the Cauchy problem for defocusing cubic NLS on $\mathbb{R}^2$: \begin{equation*} \tag{{$NLS^{+}_3 (\mathbb{R}^2)$}} \left\{ \begin{matrix} (i \partial_t + \Delta) u = +|u|^{2} u \\ u(0,x) = u_0 (x). \end{matrix} \right. \end{equation*} We describe the first result to give global well-posedness below $H^1$.

- $NLS_3^+ (\mathbb{R}^2)$ is GWP in $H^s$ for $s > \frac{2}{3}$.

- The first use of the bilinear Strichartz estimate was in this proof.

- The proof cuts the solution into low and high frequency parts.

- For $u_0 \in H^s, ~s>\frac{2}{3},$ the proof gives (and crucially exploits) $$ u(t) - e^{it\Delta} u_0 \in H^1(\mathbb{R}^2). $$

Setting Up: Decomposing the Data

- Fix a large target time $T$.

- Let $N = N(T)$ be large, to be determined.

- Decompose the initial data as $u_0 = u_{low} + u_{high}$,

where $$ u_{low} (x) = \int_{{|\xi| < N}} ~e^{i x \cdot \xi } \widehat{u_0} (\xi) d \xi, \qquad u_{high} = u_0 - u_{low}. $$

- Our plan is to evolve $u_0 = u_{low} + u_{high}$

to $$ u(t) = u_{{low}} (t) + u_{{high}} (t) . $$

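Sizes of the Data Components

Low Frequency Data Size:

- Kinetic Energy: $$ \| \nabla u_{low} \|_{L^2}^2 \leq C \int_{|\xi| < N} |\xi|^{2(1-s)} |\xi|^{2s} |\widehat{u_0}(\xi)|^2 d\xi \leq C N^{2(1-s)} \| u_0 \|_{H^s}^2. $$

- Potential Energy: by the Gagliardo-Nirenberg inequality on $\mathbb{R}^2$, $$ \| u_{low} \|_{L^4_x} \leq C \| u_{low} \|_{L^2}^{1/2} \| \nabla u_{low} \|_{L^2}^{1/2} \implies \| u_{low} \|_{L^4_x}^4 \leq C N^{2(1-s)}. $$

Together, $$ H[ u_{low} ] \leq C N^{2(1-s)}. $$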

High Frequency Data Size: $$\| u_{high} \|_{L^2} \leq C_0 N^{-s}, ~\| u_{high} \|_{H^s} \leq C_0.$$
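As a sanity check of both size bounds, here is a small sketch on a finite set of lattice frequencies $\xi \in \mathbb{Z}^2$ (an assumption standing in for $\xi \in \mathbb{R}^2$), computing norms from Fourier coefficients via Plancherel. The helper names and the random data are hypothetical. The constants come out as $C = C_0 = 1$ because $|\xi|^{2(1-s)} \leq N^{2(1-s)}$ on the low piece and $\langle \xi \rangle^{2s} \geq N^{2s}$ on the high piece.

```python
import math
import random

def japanese_sq(k):
    """<xi>^2 = 1 + |xi|^2 for a lattice frequency xi = (k1, k2)."""
    return 1 + k[0] ** 2 + k[1] ** 2

def hs_norm_sq(coeffs, s):
    """||f||_{H^s}^2 = sum over xi of <xi>^{2s} |f^(xi)|^2 (Plancherel)."""
    return sum(japanese_sq(k) ** s * abs(c) ** 2 for k, c in coeffs.items())

def grad_norm_sq(coeffs):
    """||grad f||_{L^2}^2 = sum over xi of |xi|^2 |f^(xi)|^2."""
    return sum((k[0] ** 2 + k[1] ** 2) * abs(c) ** 2 for k, c in coeffs.items())

def l2_norm_sq(coeffs):
    return sum(abs(c) ** 2 for c in coeffs.values())

def split_low_high(coeffs, N):
    """Sharp Fourier cutoff: low = {|xi| < N}, high = {|xi| >= N}."""
    low = {k: c for k, c in coeffs.items() if math.hypot(*k) < N}
    high = {k: c for k, c in coeffs.items() if math.hypot(*k) >= N}
    return low, high

def random_data(K, s, seed=0):
    """Hypothetical test data on |k1|, |k2| <= K, damped like <xi>^{-(s+1)}."""
    rng = random.Random(seed)
    return {
        (k1, k2): complex(rng.gauss(0, 1), rng.gauss(0, 1))
        * japanese_sq((k1, k2)) ** (-(s + 1) / 2)
        for k1 in range(-K, K + 1)
        for k2 in range(-K, K + 1)
    }

def check_sizes(coeffs, N, s):
    low, high = split_low_high(coeffs, N)
    hs = hs_norm_sq(coeffs, s)
    # ||grad u_low||^2 <= N^{2(1-s)} ||u_0||_{H^s}^2
    kinetic_ok = grad_norm_sq(low) <= N ** (2 * (1 - s)) * hs
    # ||u_high||_{L^2}^2 <= N^{-2s} ||u_0||_{H^s}^2
    high_ok = l2_norm_sq(high) <= N ** (-2 * s) * hs
    return kinetic_ok and high_ok

for N in (2, 4, 8):
    print(N, check_sizes(random_data(K=12, s=0.75), N, s=0.75))
```

Each bound holds term by term in the Fourier sum, so `check_sizes` should return `True` for any coefficients.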

LWP of the Low Frequency Evolution $u_{low}$ along NLS

The NLS Cauchy Problem for the low frequency data \begin{equation*} \tag{{${{NLS}}$}} \left\{ \begin{matrix} (i \partial_t + \Delta) u_{{low}} = +|u_{{low}}|^{2} u_{{low}} \\ u_{{low}}(0,x) = u_{low} (x) \end{matrix} \right. \end{equation*} is well-posed on $[0, T_{lwp}]$ with $T_{lwp} \thicksim \| u_{low} \|_{H^1}^{-2} \thicksim N^{-2(1-s)}$.

We obtain, as a consequence of the local theory, that $$ \| u_{{low}} \|_{L^4_{[0,T_{lwp}], x}} \leq \frac{1}{100}. $$

LWP of $u_{high}$ Evolution along the Difference Equation (DE)

The Cauchy problem for the high frequency data, obtained by subtracting the equation for $u_{low}$ from the equation for $u$, \begin{equation*} \tag{{$DE$}} \left\{ \begin{matrix} (i \partial_t + \Delta) u_{{high}} = +2 |u_{{low}}|^2 u_{{high}} + {\mbox{similar}} + |u_{{high}}|^{2} u_{{high}} \\ u_{{high}} (0,x) = u_{high} (x) \end{matrix} \right. \end{equation*} is also well-posed on $[0, T_{lwp}]$.
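A quick pointwise check of which terms sit behind "similar": in this sketch, random complex numbers `a` and `b` stand in for $u_{low}(t,x)$ and $u_{high}(t,x)$ at a single point, and the full nonlinearity minus the $u_{low}$ part is compared with the term-by-term expansion.

```python
import random

def full_minus_low(a: complex, b: complex) -> complex:
    """|a + b|^2 (a + b) - |a|^2 a: full cubic nonlinearity minus the u_low part."""
    return abs(a + b) ** 2 * (a + b) - abs(a) ** 2 * a

def expanded(a: complex, b: complex) -> complex:
    """Term-by-term expansion; only |b|^2 b involves u_high alone."""
    return (
        2 * abs(a) ** 2 * b          # 2 |u_low|^2 u_high
        + a ** 2 * b.conjugate()     # u_low^2 conj(u_high)
        + 2 * a * abs(b) ** 2        # 2 u_low |u_high|^2
        + a.conjugate() * b ** 2     # conj(u_low) u_high^2
        + abs(b) ** 2 * b            # |u_high|^2 u_high
    )

rng = random.Random(1)
for _ in range(5):
    a = complex(rng.uniform(-2, 2), rng.uniform(-2, 2))
    b = complex(rng.uniform(-2, 2), rng.uniform(-2, 2))
    print(abs(full_minus_low(a, b) - expanded(a, b)))  # rounding-level differences
```

So "similar" stands for $u_{low}^2 \overline{u_{high}} + 2 u_{low} |u_{high}|^2 + \overline{u_{low}} u_{high}^2$.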

Crucial Observation: The LWP lifetime of the $NLS$ evolution of $u_{{low}}$ and the LWP lifetime of the $DE$ evolution of $u_{{high}}$ are both controlled by $\| u_{{low}}(0)\|_{H^1}$.

Extra Smoothing of Nonlinear Duhamel Term

The high frequency evolution may be written $$ u_{{high}} (t) = e^{it \Delta} u_{{high}} + w. $$ The local theory gives $\| w(t) \|_{L^2} \lesssim N^{-s}$. Moreover, due to smoothing (obtained via bilinear Strichartz), we have that \begin{equation} \tag{SMOOTH!} w \in H^1, ~ \| w(t) \|_{H^1} \lesssim N^{1-2s+}. \end{equation} Let's postpone the proof of (SMOOTH!).

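Nonlinear High Frequency Term Hiding Step!

- $\forall ~t \in [0, T_{lwp}]$, we have $$ u(t) = u_{low}(t) + e^{it\Delta} u_{high} + w(t). $$

At time $T_{lwp}$, we define data for the progressive scheme: $$ u(T_{lwp} ) = u_{{low}} (T_{lwp}) + w(T_{lwp} ) + e^{iT_{lwp} \Delta} u_{high}. $$ The nonlinear term $w(T_{lwp})$ is hidden in the new low frequency data $u^{(2)}_{low}(T_{lwp}) = u_{low}(T_{lwp}) + w(T_{lwp})$, which is evolved along $NLS$, while $u^{(2)}_{high}(T_{lwp}) = e^{iT_{lwp}\Delta} u_{high}$ is evolved along $DE$. This gives

$$ u(t) = u^{(2)}_{{low}} (t) + u^{(2)}_{{high}} (t) $$ for $ t > T_{lwp}$.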

Hamiltonian Increment: $u_{low} (0) \longmapsto u^{(2)}_{{low}} (T_{lwp})$

The Hamiltonian increment caused by adding $w(T_{lwp})$ to the low frequency evolution can be estimated. Indeed, by Taylor expansion, using the bound (SMOOTH!) and energy conservation of the $u_{{low}}$ evolution, we have \begin{align*} H[u^{(2)}_{{l}} (T_{lwp})] &= H[u_{{l}} (0)] + (H[ u_{{l}} (T_{lwp}) + w(T_{lwp}) ] - H[u_{{l}} (T_{lwp})]) \\ & \thicksim N^{2(1-s) } + N^{2 -3s+} \thicksim N^{2(1-s)}. \end{align*}
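For the kinetic part of $H$ the increment can be written out explicitly. This is a sketch at $t = T_{lwp}$, using $\| \nabla u_{low}(T_{lwp}) \|_{L^2} \lesssim N^{1-s}$ (from energy conservation) and (SMOOTH!) for $w$; the potential part is not tracked here.

$$ \begin{align*} \| \nabla (u_{low} + w) \|_{L^2}^2 - \| \nabla u_{low} \|_{L^2}^2 &= 2 \Re \int_{\mathbb{R}^2} \nabla u_{low} \cdot \nabla \overline{w} \, dx + \| \nabla w \|_{L^2}^2 \\ &\lesssim \| \nabla u_{low} \|_{L^2} \| \nabla w \|_{L^2} + \| \nabla w \|_{L^2}^2 \\ &\lesssim N^{1-s} N^{1-2s+} + N^{2-4s+} \lesssim N^{2-3s+}. \end{align*} $$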

We can accumulate $N^s$ increments of size $N^{2-3s+}$ before we double the size $N^{2(1-s)}$ of the Hamiltonian. During the iteration, the Hamiltonian of the "low frequency" pieces remains of size $\lesssim N^{2(1-s)}$, so the LWP steps are of uniform size $N^{-2(1-s)}$. We advance the solution on a time interval of size $$ N^s N^{-2(1-s)} = N^{-2 + 3s}. $$ For $s>\frac{2}{3}$ the exponent $3s-2$ is positive, so we can choose $N = N(T)$ large enough that $N^{3s-2} \geq T$, and the iteration reaches the target time $T$. $~\blacksquare$
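The exponent bookkeeping in the last paragraph can be checked with exact rational arithmetic. This sketch ignores the $+$ (epsilon) losses, and `choose_N` is a hypothetical helper that solves $N^{3s-2} = T$.

```python
from fractions import Fraction

def increments_total_exponent(s):
    """N^s increments of size N^{2-3s} add up to N^{s + (2 - 3s)} = N^{2(1-s)}."""
    return s + (2 - 3 * s)

def time_reached_exponent(s):
    """N^s LWP steps of length N^{-2(1-s)} reach time N^{s - 2(1-s)} = N^{3s-2}."""
    return s - 2 * (1 - s)

def choose_N(T, s):
    """Smallest N with N^{3s-2} >= T (epsilon losses ignored); needs s > 2/3."""
    e = float(time_reached_exponent(s))
    if e <= 0:
        raise ValueError("the iteration only reaches arbitrary times when s > 2/3")
    return T ** (1.0 / e)

s = Fraction(3, 4)
print(increments_total_exponent(s))  # 1/2, the size exponent 2(1-s) of the Hamiltonian
print(time_reached_exponent(s))      # 1/4
print(choose_N(100.0, 0.75))         # N = 100**4, since N^{1/4} must equal 100
```

The first function confirms that $N^s$ increments exactly exhaust the budget $N^{2(1-s)}$; the second vanishes at $s = \frac{2}{3}$, which is why the method stops there.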

How do we prove (SMOOTH!)?

The proof follows from a bilinear estimate.

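Bilinear Strichartz Estimate

- Recall the Strichartz estimate for $(i \partial_t + \Delta)$ on $\mathbb{R}^2$: $$ \| e^{it\Delta} f \|_{L^4_{t,x}(\mathbb{R} \times \mathbb{R}^2)} \lesssim \| f \|_{L^2_x}. $$

- We can view this trivially as a bilinear estimate by writing, via Hölder's inequality, $$ \| e^{it\Delta} f \, e^{it\Delta} g \|_{L^2_{t,x}} \leq \| e^{it\Delta} f \|_{L^4_{t,x}} \| e^{it\Delta} g \|_{L^4_{t,x}} \lesssim \| f \|_{L^2_x} \| g \|_{L^2_x}. $$

- Bourgain refined this trivial bilinear estimate for functions having certain Fourier support properties.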

Bilinear Strichartz Estimate

For dyadic $N \leq L$, on $\mathbb{R}_t \times \mathbb{R}^2_x$, $$ \| e^{it\Delta} f_L \, e^{it\Delta} g_N \|_{L^2_{t,x}} \lesssim \frac{N^{\frac{1}{2}}}{L^{\frac{1}{2}}} \| f_L \|_{L^2_x} \| g_N \|_{L^2_x}. $$

- Here $\mbox{spt}~(\widehat{f_L}) \subset \{ |\xi | \thicksim L\}$ and $\mbox{spt}~(\widehat{g_N}) \subset \{ |\xi | \thicksim N\}$.

- Observe that $\sqrt{\frac{N}{L}} \ll 1$ when $N \ll L$.

$I$-Method

The $I$-Method of Almost Conservation

Let $H^s \ni u_0 \longmapsto u$ solve $NLS$ for $t \in [0, T_{lwp}], T_{lwp} \thicksim \|u_0 \|_{H^s}^{-2/s}.$

Consider two ingredients (to be defined):

- A smoothing operator $I = I_N: H^s \rightarrow H^1$. The $NLS$ evolution $u_0 \longmapsto u$ induces a smooth reference evolution $H^1 \ni Iu_0 \longmapsto Iu$ solving the $I(NLS)$ equation on $[0,T_{lwp}]$.

- A modified energy $\widetilde{E}[Iu]$ built using the reference evolution.

First Version of the $I$-method: ${\widetilde{E}}= H[Iu]$

For $s<1$ and $N \gg 1$, define a smooth $m: \mathbb{R}^2_\xi \rightarrow \mathbb{R}^+$, nonincreasing in $|\xi|$, such that $$ m(\xi) = \left\{ \begin{matrix} 1 & {\mbox{for}}~ |\xi | <N \\ \left( \frac{|\xi|}{N} \right)^{s-1} &{\mbox{for}}~ |\xi | > 2N. \end{matrix} \right. $$

The associated Fourier multiplier operator, ${\widehat{(Iu)}} (\xi) = m(\xi) \widehat{u} (\xi),$ satisfies $I: H^s \rightarrow H^1 $. Note that, pointwise in time, we have $$ \| u \|_{H^s} \lesssim \| Iu \|_{H^1} \lesssim N^{1-s} \|u \|_{H^s}. $$
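The definition leaves the interpolation on $N \leq |\xi| \leq 2N$ open. A minimal sketch, assuming one particular $C^\infty$ blend (the exponential smooth step below is our choice, not taken from the slides), checks on a grid of $|\xi|$ values the symbol-level inequalities behind this norm comparison, $\langle \xi \rangle^s \lesssim m(\xi) \langle \xi \rangle \lesssim N^{1-s} \langle \xi \rangle^s$, with a hypothetical constant $C = 3$.

```python
import math

def smooth_step(t: float) -> float:
    """C^infinity nondecreasing step: 0 for t <= 0, 1 for t >= 1."""
    def f(x: float) -> float:
        return math.exp(-1.0 / x) if x > 0 else 0.0
    a, b = f(t), f(1.0 - t)
    return a / (a + b)  # t and 1 - t are never both <= 0, so a + b > 0

def m(xi_abs: float, N: float, s: float) -> float:
    """Hypothetical concrete choice of the I-method symbol, as a function of |xi|."""
    if xi_abs <= N:
        return 1.0
    r = xi_abs / N
    tail = r ** (s - 1.0)  # (|xi|/N)^{s-1}
    if xi_abs >= 2 * N:
        return tail
    chi = smooth_step(r - 1.0)  # blend on N < |xi| < 2N
    return (1.0 - chi) + chi * tail

def jbracket(xi_abs: float) -> float:
    return math.sqrt(1.0 + xi_abs ** 2)

def symbol_bounds_hold(N, s, C=3.0, samples=4000, span=50.0):
    """Check <xi>^s <= C m <xi> and m <xi> <= C N^{1-s} <xi>^s for |xi| in [0, span*N]."""
    for j in range(samples + 1):
        xi = span * N * j / samples
        weight_I = m(xi, N, s) * jbracket(xi)  # weight of Iu measured in H^1
        if jbracket(xi) ** s > C * weight_I:
            return False
        if weight_I > C * N ** (1 - s) * jbracket(xi) ** s:
            return False
    return True

def is_nonincreasing(N, s, samples=4000, span=5.0, tol=1e-12):
    """Monotonicity of m in |xi|, up to floating-point rounding."""
    vals = [m(span * N * j / samples, N, s) for j in range(samples + 1)]
    return all(x >= y - tol for x, y in zip(vals, vals[1:]))

print(symbol_bounds_hold(32.0, 0.6), is_nonincreasing(32.0, 0.6))
```

Squaring these symbol inequalities and integrating against $|\widehat{u}(\xi)|^2$ gives the two norm bounds; the blend only affects the constants.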

Set $\widetilde{E}[Iu(t)] = H[Iu(t)]$. Other choices of $\widetilde E$ are mentioned later.

AC Law Decay and Sobolev GWP index

- Modified LWP. Initial data $v_0$ with $\| \nabla I v_0 \|_{L^2} \thicksim 1$ has $T_{lwp} \thicksim 1$.

- Goal. $\forall ~u_0 \in H^s, ~\forall ~T > 0$, construct $u:[0,T] \times \mathbb{R}^2 \rightarrow \mathbb{C}.$

- $\iff$ Dilated Goal. Construct $ u^\lambda: [0, \lambda^2 T] \times \mathbb{R}^2 \rightarrow \mathbb{C}, $ where $u^\lambda(t,x) = \frac{1}{\lambda} u\left(\frac{t}{\lambda^2}, \frac{x}{\lambda}\right)$ is the scaling symmetry of $NLS_3^+(\mathbb{R}^2)$.

- Rescale Data. $\| I \nabla u_0^\lambda \|_{L^2} \lesssim N^{1-s} \lambda^{-s} \| u_0 \|_{H^s} \thicksim 1$ provided we choose $\lambda = \lambda (N) \thicksim N^{\frac{1-s}{s}} \iff N^{1-s} \lambda^{-s} \thicksim 1$.

- Almost Conservation Law. $\| I \nabla u ( t ) \|_{L^2}^2 \lesssim H[Iu(t)]$ and $$ \big| H[Iu(t)] - H[Iu(0)] \big| \lesssim N^{-\alpha} \quad {\mbox{for}}~ t \in [0, T_{lwp}]. $$

- Delay of Data Doubling. Iterate modified LWP $N^\alpha$ steps with $T_{lwp} \thicksim 1$. We obtain rescaled solution for $t \in [0, N^\alpha]$.
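The iteration covers $[0, N^\alpha]$, while the dilated goal requires reaching $\lambda^2 T \thicksim N^{\frac{2(1-s)}{s}} T$. For fixed $T$ this is possible for large $N$ exactly when $\frac{2(1-s)}{s} < \alpha$, i.e. $2 < (2+\alpha)s$, i.e. $s > \frac{2}{2+\alpha}$. A sketch of this arithmetic with exact fractions (epsilon losses ignored; the function names are hypothetical):

```python
from fractions import Fraction

def s_alpha(alpha):
    """GWP threshold s_alpha = 2 / (2 + alpha)."""
    return Fraction(2) / (2 + alpha)

def reaches_target(s, alpha):
    """True when the covered time N^alpha beats lambda^2 ~ N^{2(1-s)/s} for large N."""
    return 2 * (1 - s) / s < alpha

alpha = Fraction(3, 2)  # decay rate of the almost conservation law for H[Iu], epsilon ignored
print(s_alpha(alpha))   # 4/7
print(reaches_target(Fraction(3, 5), alpha), reaches_target(Fraction(1, 2), alpha))  # True False
```

With $\alpha = \frac{3}{2}$ this recovers the threshold $s > \frac{4}{7}$ in the almost conservation law for $H[Iu]$.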

Almost Conservation Law for $H[Iu]$

Let $s > \frac{4}{7}$ and $N \gg 1$, and let $u_0 \in C^{\infty}_0(\mathbb{R}^2)$ be initial data with $H[I_N u_0] \leq 1$. Then there exists $T_{lwp}\thicksim 1$ so that the solution \begin{align*} u(t,x) & \in C([0,T_{lwp}], H^s(\mathbb{R}^2)) \end{align*} of $NLS_3^+ (\mathbb{R}^2)$ satisfies \begin{equation*} \label{increment} H[I_N u(t)] = H[I_N u(0)] + O(N^{- \frac{3}{2}+}), \end{equation*} for all $t \in [0, T_{lwp}]$.

Ideas in the Proof of Almost Conservation

- Standard Energy Conservation Calculation: $$ \partial_t H(u) = \Re \int_{\mathbb{R}^2} \overline{u_t} (|u|^2 u - \Delta u - i u_t) dx = 0. $$

- For the smoothed reference evolution, we imitate....

\begin{align*} \partial_t H(Iu) &= \Re \int_{\mathbb{R}^2} \overline{Iu_t} (|Iu|^2 Iu - \Delta Iu - i I u_t)dx \\ & = \Re \int_{\mathbb{R}^2} \overline{Iu_t} ( |Iu|^2 Iu - I (|u|^2 u)) dx \neq 0. \end{align*}

- The increment in modified energy involves a commutator,

$$H(Iu)(t) - H(Iu)(0) = \Re \int_0^t \int_{\mathbb{R}^2} \overline{Iu_t} ( |Iu|^2 Iu - I (|u|^2 u)) dx dt. $$

- Littlewood-Paley, Case-by-Case, (Bi)linear Strichartz, $X_{s,b}$....
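A toy check of why the increment only sees high frequency interactions. This sketch works on the 1D torus with a naive DFT (an assumption; the slides work on $\mathbb{R}^2$) and uses a hypothetical smooth choice of $m$. The commutator $|Iu|^2 Iu - I(|u|^2 u)$ from the increment formula vanishes when every interacting frequency stays below $N$, and becomes nonzero once $|u|^2 u$ reaches frequencies above $N$.

```python
import cmath
import math

M = 64  # grid points on [0, 2*pi); frequencies |k| < 32 are resolved without aliasing

def signed(k):
    """Map a DFT index 0..M-1 to the signed frequency in [-M/2, M/2)."""
    return k if k < M // 2 else k - M

def dft(values):
    """c_k = (1/M) * sum_n v_n exp(-2 pi i n k / M) (naive O(M^2) transform)."""
    return [sum(v * cmath.exp(-2j * math.pi * n * k / M) for n, v in enumerate(values)) / M
            for k in range(M)]

def idft(coeffs):
    """v_n = sum_k c_k exp(2 pi i n k / M), the inverse of dft."""
    return [sum(c * cmath.exp(2j * math.pi * n * k / M) for k, c in enumerate(coeffs))
            for n in range(M)]

def smooth_step(t):
    """C^infinity step: 0 for t <= 0, 1 for t >= 1."""
    def f(x):
        return math.exp(-1.0 / x) if x > 0 else 0.0
    return f(t) / (f(t) + f(1.0 - t))

def m(k_abs, N, s):
    """Hypothetical symbol: 1 for |k| <= N, (|k|/N)^{s-1} for |k| >= 2N, smooth in between."""
    if k_abs <= N:
        return 1.0
    r = k_abs / N
    if k_abs >= 2 * N:
        return r ** (s - 1.0)
    chi = smooth_step(r - 1.0)
    return (1.0 - chi) + chi * r ** (s - 1.0)

def apply_I(values, N, s):
    """I u: multiply each Fourier coefficient by m(|k|)."""
    return idft([c * m(abs(signed(k)), N, s) for k, c in enumerate(dft(values))])

def commutator_size(u, N, s):
    """Max over the grid of | |Iu|^2 Iu - I(|u|^2 u) |."""
    Iu = apply_I(u, N, s)
    lhs = [abs(v) ** 2 * v for v in Iu]
    rhs = apply_I([abs(v) ** 2 * v for v in u], N, s)
    return max(abs(a - b) for a, b in zip(lhs, rhs))

xs = [2 * math.pi * n / M for n in range(M)]
N, s = 12, 0.5
# Frequencies |k| <= 2, so |u|^2 u only has |k| <= 6 < N and I acts as the identity.
u_low = [1 + 0.5 * cmath.exp(1j * x) + 0.25 * cmath.exp(-2j * x) for x in xs]
# Frequencies 1 and 10 are below N, but |u|^2 u contains e^{19 i x}, which I damps.
u_mix = [cmath.exp(1j * x) + cmath.exp(10j * x) for x in xs]
print(commutator_size(u_low, N, s))  # rounding level
print(commutator_size(u_mix, N, s))  # clearly nonzero
```

In the second case $Iu = u$, so the whole commutator comes from $I$ acting on the output frequency $19 > N$.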

Remarks

- The almost conservation property $$ \big| H[Iu(t)] - H[Iu(0)] \big| \lesssim N^{-\alpha}, \quad t \in [0, T_{lwp}], $$ leads to GWP for $$ s > s_\alpha = \frac{2}{2+\alpha}. $$

The $I$-method is a subcritical method.

The $I$-method localizes the conserved density in frequency.

There is a multilinear corrections algorithm for defining other choices of $\widetilde{E}$ which yield a better AC property.

Multilinear Correction Terms

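- For $k \in {\mathbb{N}}$, define the convolution hypersurface $$ \Sigma_k := \{ (\xi_1, \ldots, \xi_k) \in (\mathbb{R}^d)^k : \xi_1 + \cdots + \xi_k = 0 \}. $$

- For $M: \Sigma_k \to \mathbb{C}$ and $u_1,\ldots,u_k$ nice, define the $k$-linear functional

$$ \Lambda_k( M; u_1,\ldots,u_k ) := c_k ~\Re \int\limits_{\Sigma_k} M(\xi_1,\ldots,\xi_k) \widehat{u_1}(\xi_1) \ldots \widehat{u_k}(\xi_k).$$

- For $k \in 2{\mathbb{N}}$ abbreviate

$\Lambda_k (M; u) = \Lambda_k (M; u, \overline{u}, u, \overline{u}, \ldots, u, \overline{u}).$

- $\Lambda_k (M;u)$ is invariant under permutations of the even arguments $\xi_2, \xi_4, \ldots$ among themselves, and of the odd arguments $\xi_1, \xi_3, \ldots$ among themselves.

- We can define a symmetrization rule $M \mapsto [M]_{sym}$ via the orbit of $M$ under this group of symmetries, with $\Lambda_k(M; u) = \Lambda_k([M]_{sym}; u)$.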

Examples

For example, with the constants $c_k$ suitably normalized, $H[u] = \Lambda_2 ( - \xi_1 \cdot \xi_2; u) \pm \Lambda_4 (\frac{1}{2} ; u)$.

Time Differentiation Formula

$$ \partial_t \Lambda_k( M; u(t) ) = \Lambda_k( i M \alpha_k; u(t) ) - \Lambda_{k+2}( i k X(M); u(t) ) $$ $$ = \Lambda_k( i M \alpha_k; u(t) ) - \Lambda_{k+2}( [i k X(M)]_{sym}; u(t) ). $$

Here $$ \alpha_k(\xi_1,\ldots,\xi_k) := -|\xi_1|^2 + |\xi_2|^2 - \ldots - |\xi_{k-1}|^2 + |\xi_k|^2$$ (so $\alpha_2 = 0$ on $\Sigma_2$) and $$ X(M)(\xi_1,\ldots,\xi_{k+2}) := M( \xi_{123}, \xi_4, \ldots, \xi_{k+2}).$$ We use the notation $\xi_{ab} := \xi_a + \xi_b$, $\xi_{abc} := \xi_a + \xi_b + \xi_c$, etc.

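AC Quantities via Multilinear Corrections

- Abbreviate $m(\xi_j)$ as $m_j$. Define $\sigma_2$ s.t. $\| I \nabla u \|_{L^2}^2 = \Lambda_2 (\sigma_2; u)$; up to the normalization constant $c_2$, $\sigma_2 = -\xi_1 \cdot \xi_2 \, m_1 m_2$.

- With $\tilde \sigma_4$ (symmetric, time independent) to be determined, set $$ \widetilde E^1 = \Lambda_2 (\sigma_2; u) + \Lambda_4 (\tilde \sigma_4; u). $$

- Using the time differentiation formula (and $\alpha_2 = 0$), we calculate $$ \partial_t \widetilde E^1 = \Lambda_4 \big( i \tilde \sigma_4 \alpha_4 - [2 i X(\sigma_2)]_{sym} \big) - \Lambda_6 \big( [i 4 X(\tilde \sigma_4)]_{sym} \big). $$

We'd like to define $\tilde \sigma_4$ to cancel away the $\Lambda_4$ contribution.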

Natural Choice of $\tilde \sigma_4$ Fails

Here is the natural choice: $$ \tilde \sigma_4 =~ \frac{[2 i X(\sigma_2)]_{sym}}{i \alpha_4}.$$ On $\Sigma_4$, we can re-express $\alpha_4 = -|\xi_1|^2 + |\xi_2|^2 -|\xi_3|^2 + |\xi_4|^2$ as $$ \alpha_4 = -2 \xi_{12} \cdot \xi_{14} = -2 |\xi_{12}| |\xi_{14}| \cos \angle(\xi_{12},\xi_{14}), $$ and $$ [2 i X(\sigma_2)]_{sym} = \frac{1}{4} ( - m_1^2 |\xi_1|^2 + m_2^2 |\xi_2|^2 - m_3^2 |\xi_3|^2 + m_4^2 |\xi_4|^2 ). $$ When all the $m_j = 1$ (e.g. when $\max_{j} |\xi_j | < N$), the numerator equals $\frac{1}{4} \alpha_4$, so $\tilde \sigma_4$ is well-defined. However, $\alpha_4$ also vanishes whenever $\xi_{12}$ and $\xi_{14}$ are orthogonal, and once some $|\xi_j| > N$ the numerator need not vanish there, so $\tilde \sigma_4$ becomes singular.

Small Divisor Problem
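A small numerical sketch of the small divisor problem, using plain tuples for vectors in $\mathbb{R}^2$ and a hypothetical continuous stand-in $m(\xi) = \min(1, (|\xi|/N)^{s-1})$ for the smooth symbol. It checks the identity $\alpha_4 = -2 \xi_{12} \cdot \xi_{14}$ on $\Sigma_4$, then evaluates the numerator $[2 i X(\sigma_2)]_{sym}$, with the constants as displayed in the formula for it, at a resonant configuration: it cancels when every $|\xi_j| < N$, but not once frequencies exceed $N$.

```python
import math
import random

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1]

def add(*vs):
    return (sum(v[0] for v in vs), sum(v[1] for v in vs))

def norm_sq(a):
    return dot(a, a)

def alpha4(x1, x2, x3, x4):
    return -norm_sq(x1) + norm_sq(x2) - norm_sq(x3) + norm_sq(x4)

def m(xi, N, s):
    """Hypothetical continuous (not smooth) stand-in for m: min(1, (|xi|/N)^{s-1})."""
    r = math.sqrt(norm_sq(xi)) / N
    return 1.0 if r <= 1.0 else r ** (s - 1.0)

def numerator(x1, x2, x3, x4, N, s):
    """(1/4)(-m1^2|xi1|^2 + m2^2|xi2|^2 - m3^2|xi3|^2 + m4^2|xi4|^2)."""
    w = [m(x, N, s) ** 2 * norm_sq(x) for x in (x1, x2, x3, x4)]
    return 0.25 * (-w[0] + w[1] - w[2] + w[3])

# 1) On Sigma_4 (xi4 = -(xi1 + xi2 + xi3)), alpha_4 = -2 xi_12 . xi_14.
rng = random.Random(0)
for _ in range(1000):
    x1, x2, x3 = [(rng.uniform(-5, 5), rng.uniform(-5, 5)) for _ in range(3)]
    x4 = (-(x1[0] + x2[0] + x3[0]), -(x1[1] + x2[1] + x3[1]))
    assert abs(alpha4(x1, x2, x3, x4) + 2 * dot(add(x1, x2), add(x1, x4))) < 1e-9

# 2) Resonant configuration: xi_12 = (3, 0) is orthogonal to xi_14 = (0, 5).
x1, x2, x4 = (0.0, 0.0), (3.0, 0.0), (0.0, 5.0)
x3 = (-3.0, -5.0)  # forces xi1 + xi2 + xi3 + xi4 = 0
print(alpha4(x1, x2, x3, x4))                   # 0.0: the divisor vanishes
print(numerator(x1, x2, x3, x4, N=100, s=0.5))  # 0.0: all m_j = 1, numerator = alpha_4 / 4
print(numerator(x1, x2, x3, x4, N=2, s=0.5))    # nonzero: frequencies above N
```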

Speculation on Integrable Systems?

For $NLS_3^+ (\mathbb{R})$, the resonant obstruction disappears: in one dimension $\alpha_4 = -2 \xi_{12} \xi_{14}$ vanishes only when $\xi_{12} = 0$ or $\xi_{14} = 0$, and the numerator of $\tilde \sigma_4$ vanishes there too. Thus, $$ \widetilde E^1 = \Lambda_2 (\sigma_2) + \Lambda_4 (\tilde \sigma_4);$$ $$ \partial_t \widetilde E^1 = - \Lambda_6 ( [i 4 X(\tilde \sigma_4)]_{sym}).$$ We can then define, with $\tilde \sigma_6$ to be determined, $$\widetilde E^2 = \widetilde E^1 + \Lambda_6 (\tilde \sigma_6 );$$ $$ \partial_t \widetilde E^2 = \Lambda_6 ( \{ i \tilde \sigma_6 \alpha_6 - [i 4 X(\tilde \sigma_4)]_{sym}\} ) - \Lambda_{8}( [i 6 X(\tilde \sigma_6)]_{sym}). $$ Let's define $$ \tilde \sigma_6 = \frac{[i 4 X (\tilde \sigma_4)]_{sym}}{i \alpha_6}. $$
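With this choice the $\Lambda_6$ term cancels, since $i \tilde \sigma_6 \alpha_6 = [i 4 X(\tilde \sigma_4)]_{sym}$ wherever $\alpha_6 \neq 0$. As a formal sketch, applying the time differentiation formula to $\Lambda_6(\tilde \sigma_6)$ and adding $\partial_t \widetilde E^1$:

$$ \begin{align*} \partial_t \widetilde E^2 &= - \Lambda_6 \big( [i 4 X(\tilde \sigma_4)]_{sym} \big) + \Lambda_6 \big( i \tilde \sigma_6 \alpha_6 \big) - \Lambda_8 \big( [i 6 X(\tilde \sigma_6)]_{sym} \big) \\ &= - \Lambda_8 \big( [i 6 X(\tilde \sigma_6)]_{sym} \big). \end{align*} $$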

Speculation on Integrable Systems?

Thus, we formally obtain a continued-fraction-like algorithm. $$ \tilde \sigma_6 = \frac{\left[i 4 X \left ( \frac{[2 i X(\sigma_2)]_{sym}}{i \alpha_4}\right)\right]_{sym}}{i \alpha_6}, $$ $$ \tilde \sigma_8 = \frac{\left[i 6 X \left( \frac{\left[i 4 X \left ( \frac{[2 i X(\sigma_2)]_{sym}}{i \alpha_4}\right)\right]_{sym}}{i \alpha_6} \right)\right]_{sym}}{i \alpha_8}, \ldots. $$ Each step gains two derivatives but costs two more factors.

Speculation: The multipliers $\tilde \sigma_6, \tilde \sigma_8, \ldots$ are well defined and lead to better AC properties. Same for other integrable systems.