Introduction to Bioinformatics

A masters course by Blaž Zupan and Tomaž Curk

University of Ljubljana (C) 2016-2018

Disclaimer: this work is a first draft of our working notes. The images were obtained from various web sites, but mainly from the wikipedia entries with explanations of different biological entities. Material is intended for our bioinformatics class and is not meant for distribution.

Lecture Notes Part 6¶

Sequence Alignment: Few More Tricks¶

The last lecture was about sequence alignment and dynamic programming. We have learned that the type of problems that includes sequence alignment can be solved with algorithms whose complexity only linearly depend on the sequence length. This was due to a class of scoring function used, where the value of the scoring function depends only on the pair of symbols of the align sequences, and not on any of its neighbors. Hence, it is possible to find optimal alignments by first finding (scoring) optimal alignments of sequence heads, that is, subsequences from the start to some position of the sequence, and the scoring the alignment of the rest of the sequence.

We have introduced Needleman-Wunsch algorithm that perform global alignment and aligns all the symbols of the two sequences. Just like any other dynamic programming algorithm, it uses dynamic programming table and the steps that include table initialization, table computation and trace-back. Let us illustrate this approach on a related problem of longest common subsequence.

Longest common subsequence (LCS)¶

For a start, consider a sequence $s$. A subsequence of $s$ is a sequence that can be derived from $s$ by deleting some elements without changing the order of the remaining elements. Say, ATACG is a subsequence of ATTATCTG, and so is ATTA, and ACG, and TTG. With $n=|s|$ being the length of $s$, thera are $2^n$ subsequences of $s$, counting also an empty subsequence.

We will design the algorithm to solve LCS for a pair of sequences. Given two sequences, $s$ and $t$, our aim is to find a sequence $p$ that is a subsequence of $s$ and subsequence of $t$, such that there is no $p'$ that is a subsequence of $s$ and $t$ and that is longer than $p$.

For a start, we will consider a simpler problem of finding the length of such subsequence. We will use dynamic programming. The scoring function for our problem is simple: we can take a symbol from either $s$ or $t$, and the score will not change. But if we take a symbol from both sequences, the alignment score would increase by $1$ if the two symbols are equal and would not change if the symbols are different. Increasing the score also means that we have, locally, increased the common subsequence with that particular symbol.

Our recursive definition of the entries of dynamic programming table are is thus:

$$M_{i,j}= \max \begin{cases} M_{i-1,j}\\ M_{i,j-1}\\ M_{i-1,j-1}+{\bf 1}(s_i\equiv t_i) \end{cases}$$Here, ${\bf 1}(x)$ is an indicator function that has a value of 1 when $x$ is true and 0 otherwise.

For initialization, we assume that subsequences where no symbol has been taken from $s$ (or from $t$) have all zero length. So, $M_{i, 1}=0$ for $i\in\{1,\ldots,|s|\}$ and $M_{1, j}=0$ for $j\in\{1,\ldots,|t|\}$.

This looks so easy. In our implementation, we will also use a trick and define the dynamic programming table as a dictionary whose default values are 0. So, no need for explicit initialization. The function that computes the length of longest common subsequence for two sequences is therefore as follows.

from collections import defaultdict

def lcs_length(s, t):

"""Length of longest common subsequence."""

table = defaultdict(int)

for i, si in enumerate(s):

for j, tj in enumerate(t):

table[i, j] = max(

table[i-1, j],

table[i, j-1],

table[i-1, j-1] + (si == tj)

)

return table[len(s)-1, len(t)-1]

Now for a few tests.

s = "AACC"

t = "ATAGCG"

lcs_length(s, t)

3

s = "AAAAAGGGG"

t = "GGGGAA"

lcs_length(s, t)

4

s = "ATGTATCTATA"

t = "ACTCGTCACTCCTCATCA"

lcs_length(s, t)

11

Seems to work. Though, at least for the last example, it is hard to tell. It would be easier if we would print out the subsequence. The code for this is a bit more complicated. For each cell in dynamic programming table, we need to remember which was the optimal path, that is the longest subsequence that led us to the state represented with the cell in the table. We will store this in a separate dictionary we will call prev (as for the previous best cell).

def lcs_table(s, t):

"""Construction of dynamic programming table."""

table = defaultdict(int)

prev = {}

for i, si in enumerate(s):

for j, tj in enumerate(t):

table[i, j], prev[i, j] = max(

(table[i-1, j], (i-1, j)),

(table[i, j-1], (i, j-1)),

(table[i-1, j-1] + (si == tj), (i-1, j-1))

)

return table, prev

The above function constructs the dynamic programming table, and a table with an entry for the best previous cell. To obtain the longest common subsequence, we have to traverse the table: any time we find that the move that we have made was diagonal and the symbols we took from $s$ and $t$ were the same, that symbol is a part of the common subsequence.

def trace_back(s, t, table, prev):

"""Trace-back and return the longest common subsequence."""

i, j = len(s)-1, len(t)-1

lcs = ""

while table[i, j] != 0:

if prev[i, j] == (i-1, j-1) and (s[i]==t[j]):

lcs = s[i] + lcs

i, j = prev[i, j]

return lcs

def lcs(s, t):

table, prev = lcs_table(s, t)

return trace_back(s, t, table, prev)

Let us try this out.

s = "AAAAAGGGG"

t = "GGGGAA"

lcs(s, t)

'GGGG'

s = "ATGTATCTATA"

t = "ACTCGTCACTCCTCATCA"

lcs(s, t)

'ATGTATCTATA'

Wow, works! But this was a simple example of dynamic programming. Now for some more complex ones.

Local Alignment¶

Needleman-Wunsch globally aligns two sequences. But what we are actually after optimal local alignment. That is, we are looking for alignment of two subsequences from $s$ and $t$ with the highest alignment score. At first, this algorithm looks so much different from Needleman-Wunsch algorithm that it requires another name: a Smith-Waterman algorithm. Turns out Needleman-Wunsch algorithm was proposed in 1970, and Smith-Waterman somehow later, in 1981. The difference between these two approaches is, though, surprisingly small. For a taste, here is the recursive definition of entries of dynamic programming table for Needleman-Wunsch:

$$M_{i,j}= \max \begin{cases} M_{i-1,j}+\sigma(s_i,-)\\ M_{i,j-1}+\sigma(-,t_i)\\ M_{i-1,j-1}+\sigma(s_i,t_i) \end{cases}$$And here goes the definition for Smith-Waterman:

$$M_{i,j}= \max \begin{cases} M_{i-1,j}+\sigma(s_i,-)\\ M_{i,j-1}+\sigma(-,t_i)\\ M_{i-1,j-1}+\sigma(s_i,t_i) \\ 0 \end{cases}$$That's it. Crazy, right? That is the only difference between global and local alignment. Of course we have assumed we are not interested in alignments which are scored below $0$, and are only looking for subsequences whose alignment scores are positive. Therefore, we have to set the scoring function $\sigma$ accordingly. For DNA alignment, the scoring functions we have introduced in our last lecture would do just fine.

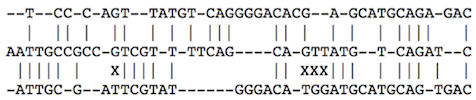

Here is an example of optimal local alignment of two sequences:

------------------TCCCAGTTATGTCAGGGGACACGAGCATGCAGAGAC |||||||||||| AATTGCCGCCGTCGTTTTCAGCAGTTATGTCAGATC------------------

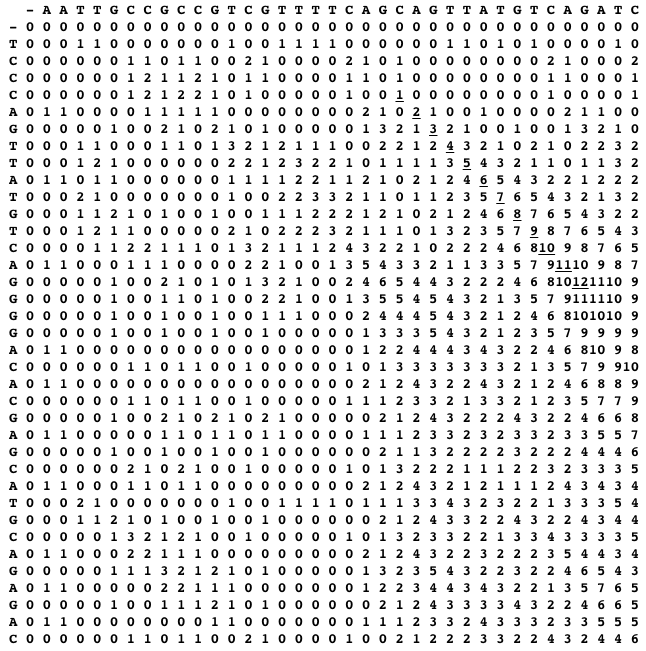

Do not be misguided by this example, the optimal local alignment may contain also indels, or can include conflicting pairs of symbols (our example does not). The idea of Smith-Waterman is now the following: find the cell with the maximum alignment score in the dynamic programming table, and from this cell trace-back to the last cell with a positive score. The traced path reveals an optimal local alignment. For the two sequences above, the dynamic programming table and the traced path (the uderlined scores) are shown below.

Local alignment is therefore just like global alignment where history does not matter and restarts from 0 are allowed.

Local Alignment and Permutation Tests¶

We can find local alignments with some positive scores in virtually any pair of sequences. But how likely is it that are our finding is not due to chance? Permutation analysis comes to the rescue. We could take two sequences, $s$ and $t$, compute the local alignment score $M$, and then ask what is the probability that this score could not be obtained on a set of arbitrary sequence. For this purpose, we take $s$, randomly permute its symbols, compute the maximal alignment score, and repeat the procedure, say, 10.000 times. We then compute how many times we obtain the score that is equal to or greater than $M$. The proportion of such scores is our $p$ value, and tells us about probability we could achieve this score in unstructured sequences. We would like the $p$-value to be small, say, below $0.0001$ and report on local alignment only if it is not due by chance, or better, when this chance is very very small.

Alignment with Affine Gap Scores¶

So far, we have brutally penalized gaps, that is, the parts of the alignments where we took a symbol from one sequence but retained the position in the other sequence. In nature, insertions would often involve a series of nucleotides. We should penalize the start of the insertion, but then the penalty should less depend on the length of the inserted sequence. The total penalty for the insertion should therefore be something like:

$$\gamma(x)=-(\rho+\eta x)$$where $\rho$ is the penalty for the introduction of the gap, $\eta$ the cost of each gap symbol, and $x$ the length of the gap. Normally, $\rho\gg\eta$.

We now need to design the algorithm that can consider affine gap penalties, that is the algorithm that assigns different penalties for the opening and extending the gap. Notice that the gap may span across one or the other sequence. The solution for the problem is decomposition of the dynamic programming table to three tables. We will use the table $M$ for scoring the alignments when we consume both symbols, one from $s$ and the other from $t$. For consuming symbols from $s$ only (gaps in sequence $t$) we will consult the table $M^{\downarrow}$, and for consuming symbols from $t$ (skipping the sequence from $s$) we will consult $M^{\rightarrow}$. This is like traversing the three-layered transit system. In the world of $M$, we can only go diagonally, south-east. But then we can jump to the layer $M^{\downarrow}$, where we can only go south, or can jump to $M^{\rightarrow}$, where we can only go east. Direct jumps from $M^{\downarrow}$ to $M^{\rightarrow}$ are not allowed, and can only go through $M$. Let us write this in equations:

$$M^{\downarrow}_{i,j}= \max \begin{cases} M^{\downarrow}_{i-1,j}-\eta\\ M_{i-1,j}-(\eta+\rho) \end{cases}$$$$M^{\rightarrow}_{i,j}= \max \begin{cases} M^{\rightarrow}_{i,j-1}-\eta\\ M_{i,j-1}-(\eta+\rho) \end{cases}$$$$M_{i,j}= \max \begin{cases} M_{i-1,j-1}+\sigma(s_i,t_j)\\ M^{\downarrow}_{i,j}\\ M^{\rightarrow}_{i,j} \end{cases}$$Multiple Sequence Alignment¶

Consider an example of a global alignment of three sequences:

We can extend the basic Needleman-Wunsch to consider any number of sequences. For three sequences, the table $M$ is three dimensional, and any of its cells can be reached from seven different neighboring cells: from 1 taking symbols from all three sequences, from 3 taking symbols from two but not from one sequence (there are three such combinations), and from 3 taking a symbol just from one of the three sequences. Also, the scoring function has now to consider three symbols, that is $\sigma(s_i, t_j, u_k)$.

The complexity of multiple sequence alignment grows with the number of sequences. For $q$ sequences, assuming they are of the equal length of $n$, the complexity of the algorithm is now $\mathcal{O}(n^q)$. Despite using dynamic programming, simultaneously aligning many sequences is not feasible, and other heuristics come into play, like those used in Clustal series of algorithms.

We could, intuitively, immediately think of some tricks that we could use in such situations. Say, we are looking for the alignment of $q$ sequences. We will start with two most similar sequences, align them and then, by fixing the alignment align a third sequences. We will repeat the procedure, each time aligning another non-align sequence that will be most similar to the current alignment. We could also replace an aligned sequence with consensus sequence, represented with symbols that are most frequent at some alignment position. Or represent each alignment position with probability of the symbols, and then change the scoring function so that it would consider these probabilities instead of crisp symbols. The scoring function could then be entropy-based, say using $\sum_{x\in {\mathcal A}}p_x\log p_x$, where ${\mathcal A}$ is an alphabet and $p_x$ is probability of the symbol $x$ at a given position of the alignment. Those of you already introduced in data science would remember this formula from several machine learning algorithms.

Oh, so many ideas. But in fact these above, although looking quite simple, are not far from what constitutes industrial-level alignment algorithms, like Clustal. The guiding principle for these algorithms is that they have to work reasonably well and they have to be very fast, as --- what we know by now --- biological sequences are long and they come in plenty.

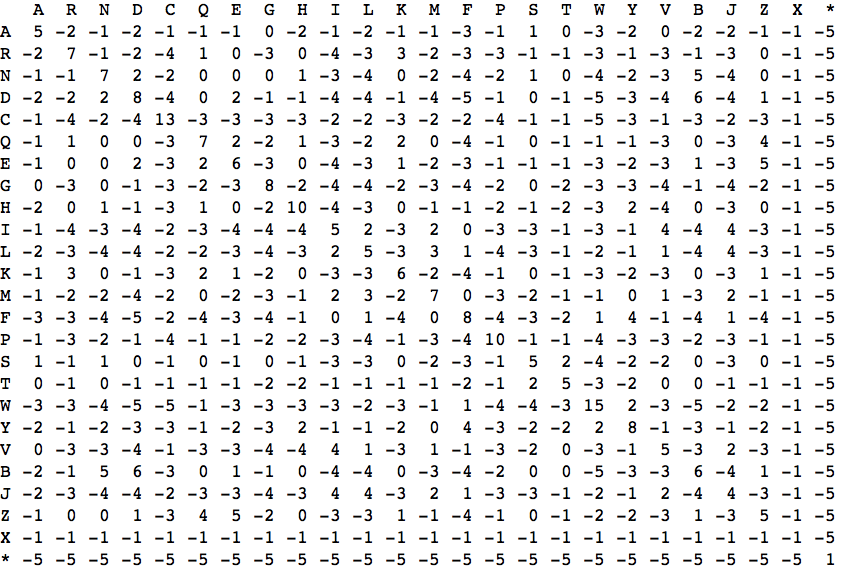

Substitution Matrices¶

So far we have assumed that the penalty of substituting one nucleotide with another does not depend on the nucleotides. That is, substituting A with T would receive the same penalty as substituting A with G. But in nature, substitutions, even on the nucleotide level, are not equally likely and because of redundancy of genetic code have different implications. This is even more true if we consider sequences of aminoacids. Say, some aminoacids are polar, so replacing a polar aminoacid Glutamine (Q) with another polar aminoacid Asparagine (N) may have a lesser effect then replacement with hydrophobic amino acid Alanine (A). The evolution would be more prone to replacements which cause lesser harm, so in theory we could derive a scoring (substitution) matrix from sequence comparison of homologous genes. This has indeed been done and has resulted in a series of substitution matrices called PAM and BLOSUM. Here is an example of BLOSUM50 substitution matrix: