from IPython.display import HTML

style = """
<style>
.expo {
    line-height: 150%;
}
.visual {
    width: 600px;
}
.red {
    color: red;
    display: inline;
}
.blue {
    color: blue;
    display: inline;
}
.green {
    color: green;
    display: inline;
}
</style>
"""

HTML(style)

2. What are the latest and greatest cutting edge results?¶

- Pose Generation

- Semi Supervised Learning

- Progressive GANs

Special topic: Inception Score for scoring GANs

3. What is the future of GANs and Deep Learning in general?¶

What are GANs?¶

You may not know what a GAN is: when the conversation turns to GANs, you may feel like Homer Simpson.

"GAN" stands for "Generative Adversarial Network".

They are a method of training neural networks to generate images similar to those in the data the neural network is trained on. This training is done via an adversarial process.

Basic example (Goodfellow et al., 2014)¶

Not digits written by a human. Generated by a neural network.

Cutting edge example: (NVIDIA Research, October 2017)¶

Not real people: images generated by a neural network.

"[GANs], and the variations that are now being proposed, are the most interesting idea in the last 10 years in ML [machine learning], in my opinion."

-- Yann LeCun, Director of AI Research at Facebook, in 2016 on Quora

We've all seen diagrams like this when trying to understand neural nets:

But what are they really? There are many different ways of explaining what a neural net is. Mathematically, they are:

- Nested functions (like $f(g(x))$)

- Universal function approximators (if we nest enough of them, we can approximate any function, no matter how complex)

- Differentiable (this allows us to "train" them to actually accomplish things)

This means that you can think of a neural net as being a mathematical function that takes in:

- An input image (or batch) (that we'll call $X$)

- Several weight matrices (that we'll denote $W$)

The net itself is just some big differentiable function $N$. Like $N(X, W) = X^3 + 3W^2 - 5$, but way more complicated.

Every time we feed a set of inputs and weights through this network, we get a "prediction vector" $P$; we compare the prediction vector to the actual vector of correct responses $Y$ to get a loss vector $L$.

These facts mean we can train a neural network using the following procedure:

- Feed a bunch of data points through the neural network.

- Compute the loss $L$ - how much the network "missed" by on these points.

- Compute for every single weight $w$ in the network: $$ \frac{\partial L}{\partial w} $$

And then we can update the weights according to the equation:

$$ w = w - \frac{\partial L}{\partial w} $$

(Or one of the many modifications of this equation that exist, which are different variants of gradient descent.)
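The update rule can be tried out on the toy function from earlier. This is a minimal sketch that treats $N(X, W) = X^3 + 3W^2 - 5$ directly as a scalar loss; the `learning_rate` factor is an assumption that is not in the equation above (it is one of the common modifications), and it is needed here because a step size of 1 would overshoot on this particular function.

```python
def toy_loss(x, w):
    """Toy 'network' from the text, used directly as a scalar loss."""
    return x ** 3 + 3 * w ** 2 - 5


def grad_w(x, w):
    """Analytic partial derivative of toy_loss with respect to w."""
    return 6 * w


def gradient_descent(x, w, learning_rate, steps):
    """Repeatedly apply w = w - learning_rate * dL/dw."""
    for _ in range(steps):
        w = w - learning_rate * grad_w(x, w)
    return w


x, w0 = 2.0, 1.5
w_final = gradient_descent(x, w0, learning_rate=0.1, steps=20)

# The loss after training should be lower than the loss before training.
print(toy_loss(x, w0), toy_loss(x, w_final))
```

In a real network the derivative is not written by hand; an automatic differentiation library computes it for every weight.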

In addition, differentiability means we can compute, for every pixel $x$ in the input image:

$$ \frac{\partial L}{\partial x} $$

In other words, how much the loss would change if this pixel in the input image changed.

(This turns out to be the key fact that allows GANs to work)
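A minimal PyTorch sketch of this idea, assuming a hypothetical one-layer classifier and a random 8x8 "image" (neither comes from the original talk): marking the input with `requires_grad=True` asks autograd to compute the gradient of the loss with respect to every pixel, alongside the usual gradients for the weights.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical tiny classifier standing in for the network N(X, W).
net = nn.Sequential(nn.Flatten(), nn.Linear(8 * 8, 1))

# One random 8x8 grayscale "image"; requires_grad makes autograd track the pixels too.
x = torch.rand(1, 1, 8, 8, requires_grad=True)
y = torch.ones(1, 1)  # pretend the correct label is 1

loss = nn.functional.binary_cross_entropy_with_logits(net(x), y)
loss.backward()

# x.grad holds dL/dx for each pixel; the weight gradients are populated as usual.
print(x.grad.shape, net[1].weight.grad.shape)
```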

How were GANs invented?¶

In 2013, Ian Goodfellow (inventor of GANs, then a grad student at the University of Montreal) and Yoshua Bengio (one of the leading researchers on neural networks in the world) were about to run a speech synthesis contest.

Their idea was to have a "discriminator network" that could listen to artificially generated speech and decide if it was real or not.

They decided not to run the contest, concluding that people would just game the system by generating examples that fooled this particular discriminator network, rather than trying to produce generally good speech.

Then, one night in a bar, Ian Goodfellow asked the question: could this be fixed by having the discriminator network learn as well?

This led him to develop what ultimately became the GAN framework. Let's dive in and see how it works:

Part 1¶

First: randomly generate a feature vector (a random noise vector $Z$); feed the feature vector through a randomly initialized neural network to produce an output image.

Denote the matrix of pixels in this image - generated by the first neural network - $X$.

Then, feed this image (matrix of pixels $X$) into a second network and get a prediction:

Compare this prediction with the correct answer (the image is fake) to get a loss $L$. Use this loss to train this second network, called the "discriminator".

Critically, also compute $$ \frac{\partial L}{\partial X} $$ - how much each of the pixels generated affects the loss.

Then, update the first network, called the generator, using $$ -\frac{\partial L}{\partial X} $$

The sign is negative because we want the generator to keep making the discriminator more likely to say that the images it generates are real.

Finally, generate a new random noise vector $Z$, and repeat the process, so that the generator will learn to turn any random noise vector into an image that the discriminator thinks is real.

What's missing?¶

This will train the generator to generate good fake images, but it will likely result in the discriminator not being a very smart classifier since we only gave it one of the two classes it is trying to classify - that is, only fake images, and no real images.

So, we'll have to give it real images as well.

Part 2:¶

Quote from the original paper on GANs:

"The generative model can be thought of as analogous to a team of counterfeiters, trying to produce fake currency and use it without detection, while the discriminative model is analogous to the police, trying to detect the counterfeit currency. Competition in this game drives both teams to improve their methods until the counterfeits are indistinguishable from the genuine articles."

-Goodfellow et. al., "Generative Adversarial Networks" (2014)
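Putting Part 1 and Part 2 together, one training iteration might look like the sketch below. The tiny fully connected networks, the random stand-in for real data, the Adam settings, and the choice of labeling generated samples as "real" in the generator step are all assumptions made to keep the example short; they are not taken from the original paper's code.

```python
import torch
from torch import nn

torch.manual_seed(0)
noise_dim, data_dim, batch = 8, 16, 32

# Hypothetical tiny networks, chosen only to keep the sketch short.
generator = nn.Sequential(nn.Linear(noise_dim, 32), nn.ReLU(), nn.Linear(32, data_dim))
discriminator = nn.Sequential(nn.Linear(data_dim, 32), nn.LeakyReLU(0.2), nn.Linear(32, 1))

opt_g = torch.optim.Adam(generator.parameters(), lr=2e-4)
opt_d = torch.optim.Adam(discriminator.parameters(), lr=2e-4)
bce = nn.BCEWithLogitsLoss()

real = torch.randn(batch, data_dim)  # stand-in for a batch of real images
ones, zeros = torch.ones(batch, 1), torch.zeros(batch, 1)

# Discriminator step: real examples are labeled 1, generated examples 0.
z = torch.randn(batch, noise_dim)
fake = generator(z)
d_loss = bce(discriminator(real), ones) + bce(discriminator(fake.detach()), zeros)
opt_d.zero_grad()
d_loss.backward()
opt_d.step()

# Generator step: the gradient flows through the discriminator back into the generator,
# pushing the generated samples toward being classified as real.
g_loss = bce(discriminator(fake), ones)
opt_g.zero_grad()
g_loss.backward()
opt_g.step()

print(d_loss.item(), g_loss.item())
```

In practice this iteration is repeated over many batches, drawing a fresh noise vector each time.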

Let's code one up¶

Let's check one out!

Code of the first GAN ever:¶

This is the original GitHub repo with the code Ian Goodfellow used to generate MNIST digits back in 2014.

DCGAN (January 2016)¶

This paper introduced a couple of key concepts that pushed GANs forward:

- Deep Convolutional/Deconvolutional architecture

- Batch normalization (which had been introduced in 2015), applied to GANs for the first time

Crazy fact: the lead author of the DCGAN paper (Alec Radford) was still in college when it was published.

DCGAN Results¶

Which of these five bedrooms do you think are real?

(they're all fake!)

Smooth transitions in the latent (100-dimensional input to generator) space:

Filters learned by the last layer of the discriminator:

Arithmetic in the latent space:

Discriminator¶

import torch
from torch import nn


class Discriminator(torch.nn.Module):
    def __init__(self):
        super(Discriminator, self).__init__()
        # Each stride-2 convolution halves the spatial size: 64 -> 32 -> 16 -> 8 -> 4
        # (the 1024*4*4 flatten below implies 1-channel 64x64 inputs).
        self.conv1 = nn.Sequential(
            nn.Conv2d(
                in_channels=1, out_channels=128, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.LeakyReLU(0.2, inplace=True)
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(
                in_channels=128, out_channels=256, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.BatchNorm2d(256),
            nn.LeakyReLU(0.2, inplace=True)
        )
        self.conv3 = nn.Sequential(
            nn.Conv2d(
                in_channels=256, out_channels=512, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.BatchNorm2d(512),
            nn.LeakyReLU(0.2, inplace=True)
        )
        self.conv4 = nn.Sequential(
            nn.Conv2d(
                in_channels=512, out_channels=1024, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.BatchNorm2d(1024),
            nn.LeakyReLU(0.2, inplace=True)
        )
        # Single output: the probability that the input image is real.
        self.out = nn.Sequential(
            nn.Linear(1024*4*4, 1),
            nn.Sigmoid(),
        )

    def forward(self, x):
        # Convolutional layers
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.conv4(x)
        # Flatten and apply sigmoid
        x = x.view(-1, 1024*4*4)
        x = self.out(x)
        return x

Generator¶

class Generator(torch.nn.Module):
    def __init__(self):
        super(Generator, self).__init__()
        # Project the 100-dimensional latent vector to a 1024 x 4 x 4 tensor.
        self.linear = torch.nn.Linear(100, 1024*4*4)
        # Each stride-2 transposed convolution doubles the spatial size: 4 -> 8 -> 16 -> 32 -> 64.
        self.conv1 = nn.Sequential(
            nn.ConvTranspose2d(
                in_channels=1024, out_channels=512, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.BatchNorm2d(512),
            nn.ReLU(inplace=True)
        )
        self.conv2 = nn.Sequential(
            nn.ConvTranspose2d(
                in_channels=512, out_channels=256, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.BatchNorm2d(256),
            nn.ReLU(inplace=True)
        )
        self.conv3 = nn.Sequential(
            nn.ConvTranspose2d(
                in_channels=256, out_channels=128, kernel_size=4,
                stride=2, padding=1, bias=False
            ),
            nn.BatchNorm2d(128),
            nn.ReLU(inplace=True)
        )
        self.conv4 = nn.Sequential(
            nn.ConvTranspose2d(
                in_channels=128, out_channels=1, kernel_size=4,
                stride=2, padding=1, bias=False
            )
        )
        self.out = torch.nn.Tanh()

    def forward(self, x):
        # Project and reshape
        x = self.linear(x)
        x = x.view(x.shape[0], 1024, 4, 4)
        # Convolutional layers
        x = self.conv1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.conv4(x)
        # Apply Tanh
        return self.out(x)
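As a quick sanity check on the two architectures, the spatial sizes can be traced with the standard output-size formulas for (transposed) convolutions. The sketch below uses only plain Python; the 64x64 input size is an inference from the `1024*4*4` flatten in the discriminator, not something stated in the original.

```python
def conv_out(size, kernel=4, stride=2, padding=1):
    """Output width/height of a convolution on a square input."""
    return (size + 2 * padding - kernel) // stride + 1


def conv_transpose_out(size, kernel=4, stride=2, padding=1):
    """Output width/height of a transposed convolution (no output padding, dilation 1)."""
    return (size - 1) * stride - 2 * padding + kernel


# Discriminator: four stride-2 convolutions, assuming a 64x64 input image.
size = 64
disc_sizes = [size]
for _ in range(4):
    size = conv_out(size)
    disc_sizes.append(size)

# Generator: four stride-2 transposed convolutions starting from 4x4.
size = 4
gen_sizes = [size]
for _ in range(4):
    size = conv_transpose_out(size)
    gen_sizes.append(size)

print(disc_sizes)  # [64, 32, 16, 8, 4]
print(gen_sizes)   # [4, 8, 16, 32, 64]
```

With a kernel of 4, stride of 2, and padding of 1, each layer exactly halves or doubles the image, so the generator's output matches the discriminator's expected input.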

What's going on in these convolutions anyway?

Convolutions deep dive¶

We've all seen diagrams like this in the context of convolutional neural nets:

This is the famous AlexNet architecture.

What's really going on here?

Let's say we have an input layer of size $224 \times 224 \times 3$, as we do in the ImageNet dataset that AlexNet was trained on. This next layer seems to be $96$ deep. What does that mean?

Review of convolutions¶

"Filters" are slid over images using the convolution operation.

In theory, these filters can act as feature detectors, and the images that result from convolving these filters with the image can be thought of as versions of the original image where the detected features have been "highlighted."

Blue = original image, Gray = convolutional "filter", Green = output image

In practice, the neural network learns filters that are useful to solving the particular problem it has been given.
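To make "sliding a filter" concrete, here is a from-scratch sketch in plain Python. The image and the vertical-edge filter are made up for illustration (a trained network would learn its own filter values), and the function assumes a square image with no padding and a stride of 1. As in most deep learning libraries, the filter is applied without flipping.

```python
def slide_filter(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the output map."""
    k = len(kernel)
    out_size = len(image) - k + 1
    output = []
    for row in range(out_size):
        out_row = []
        for col in range(out_size):
            total = 0
            for i in range(k):
                for j in range(k):
                    total += image[row + i][col + j] * kernel[i][j]
            out_row.append(total)
        output.append(out_row)
    return output


# 5x5 image: dark (0) on the left, bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-written vertical-edge detector.
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

feature_map = slide_filter(image, kernel)
for row in feature_map:
    print(row)  # each row is [3, 3, 0]
```

The output is large where the window straddles the dark-to-bright edge and zero where the image is uniform, which is the sense in which the feature has been "highlighted."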

We typically visualize the results of applying these filters to the images. However, in certain cases we visualize the filters themselves.

Let's return to the concrete example of the AlexNet architecture:

For each of 96 filters, the following happens:

For each of the 3 input channels - usually red, green, and blue for a color image - one of these filters, which happens to be of dimension $11 \times 11$ in this case, is slid over the image, "detecting the presence of different features" at each location.

So, there are actually a total of 96 * 3 convolution operations that take place, resulting in 96 filters, each of which has a red, green, and blue component.
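The same bookkeeping can be seen in the shape of a PyTorch convolutional layer's weights. In this sketch, `stride=4` and `padding=2` are assumptions chosen so that a 224x224 input produces a 55x55 output; the text above only fixes the 3 input channels, the 96 filters, and the 11x11 filter size. Note that the layer sums the three per-channel results, so each filter yields one output image.

```python
import torch
from torch import nn

# First layer in the spirit of AlexNet: 3 input channels, 96 filters of size 11x11.
first_layer = nn.Conv2d(in_channels=3, out_channels=96, kernel_size=11, stride=4, padding=2)

images = torch.rand(2, 3, 224, 224)  # a batch of two random "RGB images"
feature_maps = first_layer(images)

print(first_layer.weight.shape)  # torch.Size([96, 3, 11, 11])
print(feature_maps.shape)        # torch.Size([2, 96, 55, 55])
```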

We can combine the red, green, and blue filters together and visualize them as if they were a mini $11 \times 11$ image:

The 96 AlexNet filters:¶

DCGAN Trick #2: Batch Normalization¶

Batch normalization is one of the most powerful and simple tricks to come along in the history of the training of deep neural networks. It was introduced by two researchers from Google in March 2015, just nine months before the DCGAN paper came out.

Regular neural network:

We know that normalizing the input to a neural network helps with training: the network doesn't have to "learn" that one feature is on a scale from 0-1000 and another is on a scale from 0-1 and change its weights accordingly, for example.

The same thing applies further down in the network:

Intuitively, batch normalization works for the same reasons that normalizing data before feeding it into a neural network works.

How is it actually done?

When passing data through a neural network, we do so in batches - say, 64 or 128 images at a time.

Thus, at every step of the neural network, each neuron has a value for each observation that is being passed through.

We normalize across these observations, so that for each batch, each neuron will have a mean 0 and standard deviation 1. Specifically, we replace the value of the neuron $N$ with:

$$N' = \frac{N - \mu}{\sigma}$$

What's wrong with this in convolutional neural networks specifically?

Hint: convolutional neural networks learn by learning groups of neurons which are really filters:

This is one image, convolved with 10 different filters in a CNN.

For convolutional networks, the "neurons" are pixels in output images that have been convolved with a filter. These images are important - they contain spatial information about what is present in the images. If we modify pixels in these images by different amounts, this spatial information could get modified.

So, instead of calculating means and standard deviations for each neuron in each batch, we calculate one mean and one standard deviation per filter, pooled over every pixel of that filter's output images across the batch, so that within a given output image each pixel is shifted and scaled by the same amount.
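A short sketch of the per-filter version, checked against PyTorch's built-in layer. The tensor sizes are arbitrary, and `eps` is a small stabilizing constant that the formula above omits; it is set to match the default of `nn.BatchNorm2d`. The learnable scale and shift are switched off so that only the normalization itself is compared.

```python
import torch
from torch import nn

torch.manual_seed(0)
x = torch.randn(64, 10, 8, 8)  # batch of 64 images, 10 feature maps of 8x8 each

# One mean and one variance per feature map, pooled over batch, height, and width.
mean = x.mean(dim=(0, 2, 3), keepdim=True)
var = x.var(dim=(0, 2, 3), unbiased=False, keepdim=True)
eps = 1e-5  # numerical-stability constant, matching nn.BatchNorm2d's default
manual = (x - mean) / torch.sqrt(var + eps)

# Reference layer in training mode, without the learnable scale and shift.
layer = nn.BatchNorm2d(10, affine=False)
layer.train()
reference = layer(x)

print(torch.allclose(manual, reference, atol=1e-5))
```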

Enough theory!

Latest and Greatest Results¶

This paper proposes the novel Pose Guided Person Generation Network (PG2) that allows to synthesize person images in arbitrary poses, based on an image of that person and a novel pose.

Based on the DeepFashion Dataset

DeepFashion dataset description¶

“It contains over 800,000 images, which are richly annotated with massive attributes, clothing landmarks, and correspondence of images taken under different scenarios including store, street snapshot, and consumer. Such rich annotations enable the development of powerful algorithms in clothes recognition and facilitating future researches.”

Example data¶

Generated poses¶

Semi-supervised learning made it into Jeff Bezos' most recent letter to Amazon's shareholders!

"...in the U.S., U.K., and Germany, we’ve improved Alexa’s spoken language understanding by more than 25% over the last 12 months through enhancements in Alexa’s machine learning components and the use of semi-supervised learning techniques. (These semi-supervised learning techniques reduced the amount of labeled data needed to achieve the same accuracy improvement by 40 times!)"

Semi-supervised learning is a third type of machine learning, in addition to supervised learning and unsupervised learning.

At a high level:

- The goal of supervised learning is to learn from labeled data.

- The goal of unsupervised learning is to learn from unlabeled data.

Semi-supervised learning asks the question: can you learn from a combination of both labeled and unlabeled data?

With GANs, the answer turns out to be yes! The paper that introduced this idea was Improved Techniques for Training GANs.

How does it work? The basic idea is:

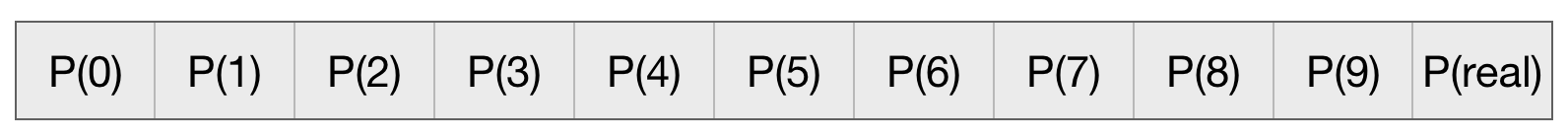

Let's say we're trying to classify MNIST digits. The discriminator will output a probability vector of an image belonging to one of ten classes:

This is compared with the real values, turned into a loss vector, and backpropagated through the network to train it.

With semi-supervised learning, we simply add another class to this output:

Then, data points are fed through a classifier, with the following labels:

- Real, labeled examples are given labels simply of 0 for all the digits they are not, 1 for the digit they are, and 0 for $P(real)$.

- Fake examples generated by the generator are given labels of 0 across the board, including for $P(real)$.

- Real, un-labeled examples are given labels of 0 for all the classes and 1 for the probability of the image being real.

This allows the classifier to learn from real, labeled examples, as well as from fake examples and from real, unlabeled examples!
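The labeling scheme described above can be written down as a small helper. This is only an illustrative sketch of the three cases as listed; the function name `make_target` and the string names for the example kinds are hypothetical.

```python
NUM_DIGITS = 10  # ten digit classes, plus one extra slot for P(real)


def make_target(kind, digit=None):
    """Build the 11-entry target vector for one example, following the scheme above."""
    target = [0.0] * (NUM_DIGITS + 1)
    if kind == "real_labeled":
        target[digit] = 1.0        # 1 for the true digit, 0 everywhere else
    elif kind == "real_unlabeled":
        target[NUM_DIGITS] = 1.0   # only the P(real) slot is set
    elif kind == "fake":
        pass                       # all zeros, including P(real)
    else:
        raise ValueError(f"unknown example kind: {kind}")
    return target


print(make_target("real_labeled", digit=3))
print(make_target("real_unlabeled"))
print(make_target("fake"))
```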

In practice, using fake examples is more effective than using real, unlabeled examples.

In this framework, how do we train the generator?

The classifier in this case is acting like a discriminator at the same time as it is acting like a classifier. For each image it sees, it is outputting both:

- The probability that the image is a 0, 1, 2, etc.

- The probability that the image is real.

Nevertheless, as the researchers in the paper point out:

"This approach introduces an interaction between G and our classifier that we do not fully understand yet"

This leads them to one of their key innovations that allowed this procedure to work: feature matching.

Semi-supervised learning trick: feature matching¶

Feature matching, a technique for training GANs, was proposed in the same paper that proposed using GANs for Semi-Supervised Learning: Improved Techniques for Training GANs, by Salimans et al. from OpenAI.

Idea¶

The last layer of a convolutional neural network, before the values get fed through a fully connected layer, is typically a layer with many features that have been detected.

For example, in a DCGAN-style convolutional discriminator like the one described above, suppose the last convolutional layer has shape $2 \times 2 \times 128$ - the result of 128 "features of features of features" that the network has learned.

This is then "flattened" to a single layer of $2 \times 2 \times 128 = 512$ neurons, and these 512 neurons are then fed through a fully connected layer to produce an output of length 10.

Their idea was to train the generator not simply on the discriminator's prediction of whether the image was real or fake, but on how similar this 512-dimensional vector is for real images fed through the discriminator compared to fake images fed through the discriminator.

The delta between these two sets of features is the loss used to train the generator.
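A minimal sketch of such a loss, using the squared distance between batch-averaged feature vectors. The small feature extractor below is hypothetical (the pooling layer is there only to force a $2 \times 2 \times 128$ output), and random tensors stand in for both the real batch and the generator's output.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical feature extractor: the discriminator up to its flattened feature layer.
features = nn.Sequential(
    nn.Conv2d(1, 128, kernel_size=4, stride=2, padding=1),
    nn.LeakyReLU(0.2),
    nn.AdaptiveAvgPool2d(2),  # force a 2x2x128 output for this sketch
    nn.Flatten(),             # 2 * 2 * 128 = 512 features per image
)

real_images = torch.rand(16, 1, 28, 28)
fake_images = torch.rand(16, 1, 28, 28, requires_grad=True)  # stand-in for generator output

real_mean = features(real_images).mean(dim=0).detach()  # no gradient through the real batch
fake_mean = features(fake_images).mean(dim=0)

# Squared distance between the batch-averaged 512-dimensional feature vectors.
feature_matching_loss = ((real_mean - fake_mean) ** 2).sum()
feature_matching_loss.backward()

print(feature_matching_loss.item(), fake_images.grad.shape)
```

In a full training loop, the gradient reaching `fake_images` would continue back into the generator's weights.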

Aside: why does this work? Even the authors of the paper don't fully understand it:

"This approach introduces an interaction between G and [the hybrid classifier-discriminator] that we do not fully understand yet, but empirically we find that optimizing G using feature matching GAN works very well for semi-supervised learning, while training G using GAN with minibatch discrimination does not work at all. Here we present our empirical results using this approach; developing a full theoretical understanding of the interaction between D and G using this approach is left for future work."

Nevertheless, feature matching was the trick that led to breakthrough performance using semi-supervised learning to build powerful classifiers:

Salimans et al. from OpenAI in mid-2016 used this approach to get just under a 6% error rate on the Street View House Numbers dataset with just 1,000 labeled images. Prior approaches achieved roughly 16% error.

State-of-the-art error, using the entire dataset of roughly 600,000 images, simply using supervised learning with very deep convolutional networks, is roughly 2%.

Semi-supervised learning is perhaps the most important application of GANs - what is the cutting edge of building GANs themselves?

Here is the Progressive GAN paper, describing how they generated high quality 1024x1024 images mimicking those from the CelebA dataset. The findings even made the New York Times!

What is the main idea behind Progressive GANs?

- Begin by downsampling the images to be simply 4x4.

- Train a GAN to generate "high quality" 4x4 images.

- Then, using the weights already learned in the initial layers, add a layer after the generator and before the discriminator so that this GAN now generates 8x8 images, etc.
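The resolution schedule implied by these steps, along with one simple way to shrink the training images, can be sketched in plain Python. Averaging each 2x2 block is an assumption made here for illustration; the steps above do not say how the images are downsampled.

```python
def resolution_schedule(start=4, final=1024):
    """Resolutions visited when the image side length doubles at every stage."""
    sizes = [start]
    while sizes[-1] < final:
        sizes.append(sizes[-1] * 2)
    return sizes


def downsample_2x(image):
    """Halve a square grayscale image (list of rows) by averaging each 2x2 block."""
    half = len(image) // 2
    return [
        [
            (image[2 * r][2 * c] + image[2 * r][2 * c + 1]
             + image[2 * r + 1][2 * c] + image[2 * r + 1][2 * c + 1]) / 4
            for c in range(half)
        ]
        for r in range(half)
    ]


print(resolution_schedule())  # [4, 8, 16, 32, 64, 128, 256, 512, 1024]

image_8x8 = [[float(r * 8 + c) for c in range(8)] for r in range(8)]
image_4x4 = downsample_2x(image_8x8)
print(len(image_4x4), len(image_4x4[0]))  # 4 4
```

Going from 4x4 to 1024x1024 therefore takes eight doublings, each of which adds layers to both networks.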

But how do we know these GANs are any good?

An aside: how do we score GANs?¶

How do we know that these samples are "good"? They "look good", but how can we quantify this?

"Generative Adversarial Networks are generally regarded as producing the best samples [compared to other generative methods such as variational autoencoders] but there is no good way to quantify this."

--Ian Goodfellow, NIPS tutorial 2016

Since then, several methods have been proposed, the most prominent of which is the Inception Score:

Inception Score¶

In the same paper that introduced feature matching, a technique for scoring GANs called Inception Score was introduced.

Consider a GAN that was intended to generate images that come from one of a finite number of classes, such as MNIST digits.

Inception score (cont.)¶

Let's say that the generator generated some images, those generated images were then fed through a pre-trained neural network, and the resulting probability distribution over classes for one of the images was:

[0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1, 0.1]

In other words, the pre-trained model has no idea which class this image should belong to.

In this case, we conclude that all else equal, this likely isn't a very good generator.

The way we formalize this is that this resulting vector should have low entropy - that is, not an even distribution over class labels.

Inception score (cont.)¶

There is another way we can use this pre-trained neural network. Let's say that for every image generated, we recorded the "most likely class" that the pre-trained network was predicting. And let's say that roughly 90% of the time, the pre-trained network was classifying the images that our model was generating as zeros, so that the vector of frequencies of the "most likely class" looked like:

[0.91, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01, 0.01]

Again, this would not be a very good GAN!

The way we formalize this is that we want the vector of the frequency of the predictions to have high entropy: that is, we do want the classes to be balanced.
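Both criteria can be folded into a single number. The sketch below uses the usual formulation, the exponential of the average KL divergence between each image's class distribution and the overall class frequencies, in plain Python. The three sets of "predictions" are made up to mirror the cases discussed above; in a real evaluation they would come from the pre-trained network.

```python
import math


def entropy(p):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(q * math.log(q) for q in p if q > 0)


def inception_score(predictions):
    """exp of the average KL divergence between each p(y|x) and the marginal p(y)."""
    n = len(predictions)
    num_classes = len(predictions[0])
    marginal = [sum(p[k] for p in predictions) / n for k in range(num_classes)]
    total_kl = 0.0
    for p in predictions:
        total_kl += sum(q * math.log(q / m) for q, m in zip(p, marginal) if q > 0)
    return math.exp(total_kl / n)


# Classifier is unsure about every image.
uniform = [[0.1] * 10 for _ in range(10)]
# Classifier is sure about every image, and the classes are balanced.
one_hot = [[1.0 if k == i else 0.0 for k in range(10)] for i in range(10)]
# Classifier is sure about every image, but every image is the same class.
all_zeros = [[1.0] + [0.0] * 9 for _ in range(10)]

for name, preds in [("uniform", uniform), ("one_hot", one_hot), ("all_zeros", all_zeros)]:
    print(name, round(inception_score(preds), 3))
```

Only the confident-and-balanced case scores high (the maximum equals the number of classes); the other two cases collapse to the minimum score of 1.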

"Inception" simply refers to the neural network architecture used to score these generated images.

Progressive GANs did indeed show a record Inception score on the CIFAR-10 dataset:

However, we can't do this for the CelebA dataset: there are no classes!

Patch similarity¶

The authors propose a new way of assessing their GANs to identify improvement:

They randomly sample 7x7 patches from the 16x16 versions of the images, the 32x32 versions, etc., up to the 1024x1024 version. They then use a metric called the "Wasserstein distance" to compute the similarity between generated patches and the corresponding real patches.

"...the distance between the patch sets extracted from the lowest-resolution 16 × 16 images indicate similarity in large-scale image structures, while the finest-level patches encode information about pixel-level attributes such as sharpness of edges and noise."

Using this metric, their method does indeed outperform other GANs that have come before.
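For intuition about comparing two sets of patches with a Wasserstein-style distance, here is a rough sketch of a "sliced" approximation in plain Python: project the patches onto random directions and compare the sorted projections. It is not the authors' exact procedure; the random patch data, the number of projections, and the function names are all assumptions made for illustration.

```python
import math
import random


def wasserstein_1d(a, b):
    """1-D Wasserstein-1 distance between two equal-sized samples: compare sorted values."""
    return sum(abs(x - y) for x, y in zip(sorted(a), sorted(b))) / len(a)


def sliced_wasserstein(patches_a, patches_b, num_projections=64, seed=0):
    """Average 1-D Wasserstein distance over random unit-length projection directions."""
    rng = random.Random(seed)
    dim = len(patches_a[0])
    total = 0.0
    for _ in range(num_projections):
        direction = [rng.gauss(0, 1) for _ in range(dim)]
        norm = math.sqrt(sum(d * d for d in direction))
        direction = [d / norm for d in direction]
        proj_a = [sum(p * d for p, d in zip(patch, direction)) for patch in patches_a]
        proj_b = [sum(p * d for p, d in zip(patch, direction)) for patch in patches_b]
        total += wasserstein_1d(proj_a, proj_b)
    return total / num_projections


rng = random.Random(1)
# Each "patch" is a flattened 7x7 grayscale patch (49 values) of random data.
real = [[rng.random() for _ in range(49)] for _ in range(100)]
similar = [[rng.random() for _ in range(49)] for _ in range(100)]
shifted = [[rng.random() + 0.5 for _ in range(49)] for _ in range(100)]

# Patches drawn from the same distribution should be closer than brightness-shifted ones.
print(sliced_wasserstein(real, similar) < sliced_wasserstein(real, shifted))
```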

The future¶

What is the future of GANs? More generally, what is the future of Deep Learning? Can we predict it?

I asked Ian Goodfellow in a LinkedIn message if he was surprised by how quickly Progressive GANs were able to get close to photorealistic image quality on 1024x1024 images. He replied:

I'm actually surprised at how slow it's been. Back in 2015 I thought that getting to photorealistic video was mostly going to be an engineering effort of scaling the model up and training on more data.

-Ian Goodfellow, in a LinkedIn message to me

Ian Goodfellow's background¶

Knowledge over time¶

What is the future of GANs?¶

Nobody knows!