A Dockerfile that will produce a container with all the dependencies necessary to run this notebook is available here.

Clear the theano cache before each run to avoid subtle bugs.

!rm -rf /home/jovyan/.theano/

%matplotlib inline

import datetime

from itertools import product

import logging

import pickle

from warnings import filterwarnings

from matplotlib import pyplot as plt

from matplotlib.offsetbox import AnchoredText

from matplotlib.ticker import FuncFormatter, StrMethodFormatter

import numpy as np

import pandas as pd

import scipy as sp

import seaborn as sns

from sklearn.preprocessing import LabelEncoder

from theano import shared, tensor as tt

# keep theano from complaining about compile locks for small models

(logging.getLogger('theano.gof.compilelock')

.setLevel(logging.CRITICAL))

# silence PyMC3 warnings (there aren't many)

filterwarnings(

'ignore', ".*diverging samples after tuning.*",

module='pymc3'

)

filterwarnings(

'ignore', ".*acceptance probability.*",

module='pymc3'

)

pct_formatter = StrMethodFormatter('{x:.1%}')

blue, green, *_ = sns.color_palette()

# configure pyplot for readability when rendered as a slideshow and projected

sns.set(color_codes=True)

plt.rc('figure', figsize=(8, 6))

LABELSIZE = 14

plt.rc('axes', labelsize=LABELSIZE)

plt.rc('axes', titlesize=LABELSIZE)

plt.rc('figure', titlesize=LABELSIZE)

plt.rc('legend', fontsize=LABELSIZE)

plt.rc('xtick', labelsize=LABELSIZE)

plt.rc('ytick', labelsize=LABELSIZE)

SEED = 207183 # from random.org, for reproducibility

About Me¶

Principal Data Scientist @ Monetate Labs • PyMC3 contributor¶

@AustinRochford • austinrochford.com • github.com/AustinRochford¶

austin.rochford@gmail.com • arochford@monetate.com¶

Last Two Minute Report¶

Since late in the 2014-2015 season, the NBA has issued last two minute reports. These reports give the league's assessment of the correctness of foul calls and non-calls in the last two minutes of any game where the score difference was three or fewer points at any point in the last two minutes.

These reports are notably different from play-by-play logs, in that they include information on non-calls for notable on-court interactions. This non-call information presents a unique opportunity to study the factors that impact foul calls. There is a level of subjectivity inherent in the NBA's definition of notable on-court interactions, which we attempt to mitigate later using season-specific factors.

Scraping the data¶

Loading the data¶

We download the data locally to be kind to GitHub.

%%bash

DATA_URI=https://raw.githubusercontent.com/polygraph-cool/last-two-minute-report/32f1c43dfa06c2e7652cc51ea65758007f2a1a01/output/all_games.csv

DATA_DEST=/tmp/all_games.csv

if [[ ! -e $DATA_DEST ]];

then

wget -q -O $DATA_DEST $DATA_URI

fi

We use only a subset of the columns in the source data set.

USECOLS = [

'period',

'seconds_left',

'call_type',

'committing_player',

'disadvantaged_player',

'review_decision',

'play_id',

'away',

'home',

'date',

'score_away',

'score_home',

'disadvantaged_team',

'committing_team'

]

orig_df = pd.read_csv(

'/tmp/all_games.csv',

usecols=USECOLS,

index_col='play_id',

parse_dates=['date']

)

orig_df.shape

(16300, 13)

Each row of the DataFrame represents a play and each column describes an attribute of the play:

- period is the period of the game,
- seconds_left is the number of seconds remaining in the game,
- call_type is the type of call,
- committing_player and disadvantaged_player are the names of the players involved in the play,
- review_decision is the opinion of the league reviewer on whether or not the play was called correctly:
  - review_decision = "INC" means the call was an incorrect non-call,
  - review_decision = "CNC" means the call was a correct non-call,
  - review_decision = "IC" means the call was an incorrect call,
  - review_decision = "CC" means the call was a correct call,
- away and home are the abbreviations of the teams involved in the game,
- date is the date on which the game was played,
- score_away and score_home are the scores of the away and home team during the play, respectively,
- disadvantaged_team and committing_team indicate how each team is involved in the play.

orig_df.head(n=2).T

| play_id | 20150301CLEHOU-0 | 20150301CLEHOU-1 |

|---|---|---|

| period | Q4 | Q4 |

| seconds_left | 112 | 103 |

| call_type | Foul: Shooting | Foul: Shooting |

| committing_player | Josh Smith | J.R. Smith |

| disadvantaged_player | Kevin Love | James Harden |

| review_decision | CNC | CC |

| away | CLE | CLE |

| home | HOU | HOU |

| date | 2015-03-01 00:00:00 | 2015-03-01 00:00:00 |

| score_away | 103 | 103 |

| score_home | 105 | 105 |

| disadvantaged_team | CLE | HOU |

| committing_team | HOU | CLE |
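The four review_decision codes described above combine two separate facts: whether a whistle was blown, and whether the league agreed with the officials. The sketch below makes that explicit. The names `REVIEW_DECISIONS`, `decode_review_decision`, and `foul_occurred` are hypothetical helpers for illustration and are not part of the original notebook.

```python
# Hypothetical lookup table (not from the notebook):
# code -> (whistle_blown, ruling_correct)
REVIEW_DECISIONS = {
    "CC": (True, True),     # correct call
    "IC": (True, False),    # incorrect call
    "CNC": (False, True),   # correct non-call
    "INC": (False, False),  # incorrect non-call
}


def decode_review_decision(code):
    """Split a review_decision code into (whistle_blown, ruling_correct)."""
    return REVIEW_DECISIONS[code]


def foul_occurred(code):
    """True when the league's review implies that a foul actually took place.

    A foul took place either when a whistle was blown and upheld ("CC")
    or when no whistle was blown and the league disagreed ("INC").
    """
    whistle_blown, ruling_correct = decode_review_decision(code)
    return whistle_blown == ruling_correct
```

Under this reading, "CC" and "INC" are the two codes for which a foul took place. These are the same two codes that the notebook later maps to foul_called = 1.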

Research questions¶

- How does game context impact foul calls?

- Is (not) committing and/or drawing fouls a measurable player skill?

Exploratory Data Analysis¶

First we examine the types of calls present in the data set.

orig_df.call_type.value_counts()

| call_type | count |
|---|---|
| Foul: Personal | 4736 |
| Foul: Shooting | 4201 |
| Foul: Offensive | 2846 |
| Foul: Loose Ball | 1316 |
| Turnover: Traveling | 779 |
| Instant Replay: Support Ruling | 607 |
| Foul: Defense 3 Second | 277 |
| Instant Replay: Overturn Ruling | 191 |
| Foul: Personal Take | 172 |
| Turnover: 3 Second Violation | 139 |
| Turnover: 24 Second Violation | 126 |
| Turnover: 5 Second Inbound | 99 |
| Stoppage: Out-of-Bounds | 96 |
| Violation: Lane | 84 |
| Foul: Away from Play | 82 |
| Violation: Defensive Goaltending | 65 |
| Turnover: Stepped out of Bounds | 59 |
| Violation: Kicked Ball | 58 |
| Foul: Technical | 38 |
| Turnover: Backcourt Turnover | 33 |
| Violation: Delay of Game | 32 |
| Turnover: Out of Bounds | 29 |
| Turnover: Offensive Goaltending | 26 |
| Other | 26 |
| Turnover: Palming | 24 |
| Turnover: Double Dribble | 21 |
| Foul: Inbound | 19 |
| Foul: Double Personal | 11 |
| Violation: Double Lane | 9 |
| Violation: Jump Ball | 9 |
| Foul: Clear Path | 8 |
| Turnover: Discontinue Dribble | 7 |
| Foul: Flagrant Type 1 | 6 |
| Turnover: 5 Second Violation | 6 |
| Turnover: Inbound Turnover | 6 |
| Turnover: Illegal Screen | 5 |
| Turnover: 8 Second Violation | 5 |
| Foul: Double Technical | 5 |
| Foul: Delay Technical | 5 |
| Turnover: Lane Violation | 5 |
| Turnover: Kicked Ball Violation | 4 |
| Ejection: Second Technical | 4 |
| Turnover: 10 Second Violation | 3 |
| Turnover: Lost Ball Possession | 3 |
| Turnover: Punched Ball | 2 |
| Turnover: Jump Ball Violation | 2 |
| Jump Ball | 2 |
| Turnover: 24 Second violationCC | 1 |
| Turnover: Illegal Assist | 1 |
| Other: Timeout | 1 |
| Stoppage: Other | 1 |
| Other: Jump Ball | 1 |
| Other: Held Ball | 1 |
| Turnover: Lost Ball Out of Bounds | 1 |
| Violation: Other | 1 |
| Foul: Punching | 1 |
| Instant Replay: Support ruling | 1 |
| Shot Clock | 1 |

Name: call_type, dtype: int64

The portion of call_type before the colon is the general category of the call. We count the occurrences of these categories below.

(orig_df.call_type

.str.split(':', expand=True).iloc[:, 0]

.value_counts()

.plot(kind='bar', color=blue, logy=True, title="Call types")

.set_ylabel("Frequency"));

We restrict our attention to foul calls, though other call types would be interesting to study in the future.

foul_df = orig_df[

orig_df.call_type

.fillna("UNKNOWN")

.str.startswith("Foul")

]

We count the foul call types below.

(foul_df.call_type

.str.split(': ', expand=True).iloc[:, 1]

.value_counts()

.plot(kind='bar', color=blue, logy=True, title="Foul Types")

.set_ylabel("Frequency"));

We restrict our attention to the five foul types below, which generally involve two players. This subset of fouls allows us to pursue our second research question in the most direct manner.

FOULS = [

f"Foul: {foul_type}"

for foul_type in [

"Personal",

"Shooting",

"Offensive",

"Loose Ball",

"Away from Play"

]

]

Data transformation¶

There are a number of misspelled team names in the data, which we correct.

TEAM_MAP = {

"NKY": "NYK",

"COS": "BOS",

"SAT": "SAS",

"CHi": "CHI",

"LA)": "LAC",

"AT)": "ATL",

"ARL": "ATL"

}

def correct_team_name(col):
    def _correct_team_name(df):
        return df[col].apply(lambda team_name: TEAM_MAP.get(team_name, team_name))

    return _correct_team_name

We also convert each game date to NBA season.

def date_to_season(date):
    if date >= datetime.datetime(2017, 10, 17):
        return '2017-2018'
    elif date >= datetime.datetime(2016, 10, 25):
        return '2016-2017'
    elif date >= datetime.datetime(2015, 10, 27):
        return '2015-2016'
    else:
        return '2014-2015'

We clean the data by

- restricting to plays that occurred during the last two minutes of regulation,

- imputing incorrect non-calls when review_decision is missing,

- correcting team names,

- converting game dates to seasons,

- restricting to the foul types discussed above,

- restricting to the plays that happened during the 2015-2016 and 2016-2017 regular seasons (those are the only full seasons in the data set as of November 2017),

- and dropping unneeded rows and columns.

clean_df = (foul_df.where(lambda df: df.period == "Q4")

.where(lambda df: (df.date.between(datetime.datetime(2016, 10, 25),

datetime.datetime(2017, 4, 12))

| df.date.between(datetime.datetime(2015, 10, 27),

datetime.datetime(2016, 5, 30)))

)

.assign(

review_decision=lambda df: df.review_decision.fillna("INC"),

committing_team=correct_team_name('committing_team'),

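# NOTE: the keyword below is misspelled ('disadvantged_team'), so it adds a new column and leaves 'disadvantaged_team' uncorrected; the tables below show both columns
disadvantged_team=correct_team_name('disadvantaged_team'),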

away=correct_team_name('away'),

home=correct_team_name('home'),

season=lambda df: df.date.apply(date_to_season)

)

.where(lambda df: df.call_type.isin(FOULS))

.dropna()

.drop('period', axis=1)

.assign(call_type=lambda df: (df.call_type

.str.split(': ', expand=True)

.iloc[:, 1])))

About 55% of the rows in the full data set remain.

clean_df.shape[0] / orig_df.shape[0]

0.5516564417177914

clean_df.head(n=2).T

| play_id | 20151028INDTOR-1 | 20151028INDTOR-2 |

|---|---|---|

| seconds_left | 89 | 73 |

| call_type | Shooting | Shooting |

| committing_player | Ian Mahinmi | Bismack Biyombo |

| disadvantaged_player | DeMar DeRozan | Paul George |

| review_decision | CC | IC |

| away | IND | IND |

| home | TOR | TOR |

| date | 2015-10-28 00:00:00 | 2015-10-28 00:00:00 |

| score_away | 99 | 99 |

| score_home | 106 | 106 |

| disadvantaged_team | TOR | IND |

| committing_team | IND | TOR |

| disadvantged_team | TOR | IND |

| season | 2015-2016 | 2015-2016 |

We use scikit-learn's LabelEncoder to transform categorical features (call type, player, and season) to integer ids.

call_type_enc = LabelEncoder().fit(

clean_df.call_type

)

n_call_type = call_type_enc.classes_.size

player_enc = LabelEncoder().fit(

np.concatenate((

clean_df.committing_player,

clean_df.disadvantaged_player

))

)

n_player = player_enc.classes_.size

season_enc = LabelEncoder().fit(

clean_df.season

)

n_season = season_enc.classes_.size

We transform the data by

- rounding seconds_left to the nearest second (purely for convenience),

- transforming categorical features to integer ids,

- setting foul_called equal to one if the league's review indicates that a foul occurred (a correct call, "CC", or an incorrect non-call, "INC"), or zero otherwise,

- setting score_committing and score_disadvantaged to the score of the committing and disadvantaged teams, respectively.

df = (clean_df[['seconds_left']]

.round(0)

.assign(

call_type=call_type_enc.transform(clean_df.call_type),

foul_called=1. * clean_df.review_decision.isin(['CC', 'INC']),

player_committing=player_enc.transform(clean_df.committing_player),

player_disadvantaged=player_enc.transform(clean_df.disadvantaged_player),

score_committing=clean_df.score_home.where(

clean_df.committing_team == clean_df.home,

clean_df.score_away

),

score_disadvantaged=clean_df.score_home.where(

clean_df.disadvantaged_team == clean_df.home,

clean_df.score_away

),

season=season_enc.transform(clean_df.season)

))

The resulting DataFrame is ready for analysis.

df.head(n=2).T

| play_id | 20151028INDTOR-1 | 20151028INDTOR-2 |

|---|---|---|

| seconds_left | 89.0 | 73.0 |

| call_type | 4.0 | 4.0 |

| foul_called | 1.0 | 0.0 |

| player_committing | 162.0 | 36.0 |

| player_disadvantaged | 98.0 | 358.0 |

| score_committing | 99.0 | 106.0 |

| score_disadvantaged | 106.0 | 99.0 |

| season | 0.0 | 0.0 |

Modeling¶

George Box (via Dustin Tran):

Build a model of the science¶

def make_foul_rate_yaxis(ax, label="Observed foul call rate"):
    ax.yaxis.set_major_formatter(pct_formatter)
    ax.set_ylabel(label)

    return ax

Below we examine the foul call rate by season.

make_foul_rate_yaxis(

df.pivot_table('foul_called', 'season')

.rename(index=season_enc.inverse_transform)

.rename_axis("Season")

.plot(kind='bar', rot=0, legend=False)

);

There is a pronounced difference between the foul call rate in the 2015-2016 and 2016-2017 NBA seasons. This change in foul call rates is due to a rule change between these seasons meant to cut down on hack-a-Shaq fouls.

Our first model accounts for this difference.

- Each season has a different foul call rate

We use pymc3 to specify our models. Our first model is a logistic regression with a different factor for each season.

import pymc3 as pm

with pm.Model() as base_model:
    β_season = pm.Normal('β_season', 0., 5., shape=n_season)
    p = pm.Deterministic('p', pm.math.sigmoid(β_season))

- Foul calls are like flipping a weighted coin

When building models, we will wrap each feature in a Theano shared variable in order to eventually facilitate posterior predictive sampling.

season = shared(df.season.values)

with base_model:
    y = pm.Bernoulli(
        'y', p[season],
        observed=df.foul_called.values
    )
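In symbols, the base model specified above can be sketched as follows. This is a reconstruction from the code, not the author's own notation. It assumes that the second positional argument of pm.Normal is a standard deviation, and it uses $s(i)$ as a hypothetical name for the season of play $i$.

$$
\begin{align*}
\beta^{\textrm{season}}_s & \sim N(0, 5^2) \\
p_s & = \frac{1}{1 + \exp\left(-\beta^{\textrm{season}}_s\right)} \\
y_i & \sim \textrm{Bernoulli}\left(p_{s(i)}\right)
\end{align*}
$$

Here $y_i$ is the observed value of foul_called for play $i$, so each season contributes a single foul call rate $p_s$.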

Infer the model given data¶


PyMC3 provides an accessible interface to state-of-the-art Bayesian inference algorithms. Throughout this talk, we will use PyMC3 to perform Hamiltonian Monte Carlo (HMC) inference.

Unfortunately there is not enough time in this talk to do these deep topics justice. For the curious:

- [_Probabilistic Programming and Bayesian Methods for Hackers_](https://github.com/CamDavidsonPilon/Probabilistic-Programming-and-Bayesian-Methods-for-Hackers#pymc3) is an accessible, open-source introduction to Bayesian statistics using PyMC3.

- Statistical Rethinking is an accessible introduction to Bayesian data analysis originally using Stan, whose examples have been ported to PyMC3.

- Bayesian Analysis with Python is an accessible introduction to Bayesian statistics using PyMC3.

- Bayesian Data Analysis (commonly known as BDA3) is an excellent reference on applied Bayesian statistics.

- A Conceptual Introduction to Hamiltonian Monte Carlo pairs an intuitive conceptual motivation of HMC algorithms with extensive references to the rigorous mathematics of HMC.

- The No-U-Turn Sampler: Adaptively Setting Path Lengths in Hamiltonian Monte Carlo is the seminal paper on the NUTS algorithm that is central to modern Bayesian statistics.

NJOBS = 3

SAMPLE_KWARGS = {

'draws': 1000,

'njobs': NJOBS,

'random_seed': [

SEED + i for i in range(NJOBS)

]

}

with base_model:
    base_trace = pm.sample(**SAMPLE_KWARGS)

Auto-assigning NUTS sampler... Initializing NUTS using jitter+adapt_diag... 100%|██████████| 1500/1500 [00:06<00:00, 235.48it/s]

Convergence diagnostics¶

The folk theorem [of statistical computing] is this: When you have computational problems, often there’s a problem with your model.

We rely on three diagnostics to ensure that our samples have converged to the posterior distribution:

- Energy plots: if the two distributions in the energy plot differ significantly (especially in the tails), the sampling was not very efficient.

- Bayesian fraction of missing information (BFMI): BFMI quantifies this difference with a number between zero and one. A BFMI close to (or exceeding) one is preferable, and a BFMI lower than 0.2 is indicative of efficiency issues.

- Gelman-Rubin statistics: Gelman-Rubin statistics near one are preferable, and values less than 1.1 are generally taken to indicate convergence.

For more information on energy plots and BFMI consult Robust Statistical Workflow with PyStan.
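To make the Gelman-Rubin diagnostic more concrete, here is a minimal sketch of the classic (non-split) statistic for a single scalar parameter, using only the standard library. It illustrates the idea and is not PyMC3's implementation, which may differ in details. The function name `gelman_rubin` and the simulated chains are assumptions made for illustration.

```python
import random
import statistics


def gelman_rubin(chains):
    """Classic potential scale reduction factor for equal-length chains."""
    n = len(chains[0])
    chain_means = [statistics.fmean(chain) for chain in chains]
    # W: average within-chain variance
    w = statistics.fmean([statistics.variance(chain) for chain in chains])
    # B: between-chain variance, scaled by the chain length
    b = n * statistics.variance(chain_means)
    # pooled estimate of the posterior variance
    var_hat = (n - 1) / n * w + b / n
    return (var_hat / w) ** 0.5


rng = random.Random(207183)
# three chains exploring the same distribution
mixed = [[rng.gauss(0., 1.) for _ in range(1000)] for _ in range(3)]
# three chains stuck in different regions
stuck = [[rng.gauss(mu, 1.) for _ in range(1000)] for mu in (0., 5., 10.)]

mixed_gr = gelman_rubin(mixed)
stuck_gr = gelman_rubin(stuck)
```

Chains that agree with one another give a statistic close to one, while chains centered in different places inflate the between-chain variance and push the statistic well above 1.1.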

bfmi = pm.bfmi(base_trace)

max_gr = max(np.max(gr_stats) for gr_stats in pm.gelman_rubin(base_trace).values())

CONVERGENCE_TITLE = lambda: f"BFMI = {bfmi:.2f}\nGelman-Rubin = {max_gr:.3f}"

(pm.energyplot(base_trace, legend=False, figsize=(6, 4))

.set_title(CONVERGENCE_TITLE()));

Criticize the model given data¶

We use the samples from p's posterior distribution to calculate residuals, which we use to criticize our models. These residuals allow us to assess how well our model describes the data-generation process and to discover unmodeled sources of variation.

base_trace['p']

array([[ 0.39621111, 0.30601358],

[ 0.40373111, 0.31909179],

[ 0.40255341, 0.3074904 ],

...,

[ 0.40184561, 0.30958139],

[ 0.40790953, 0.30464071],

[ 0.39905963, 0.31899429]])

base_trace['p'].shape

(3000, 2)

resid_df = (df.assign(p_hat=base_trace['p'][:, df.season].mean(axis=0))

.assign(resid=lambda df: df.foul_called - df.p_hat))

resid_df[['foul_called', 'p_hat', 'resid']].head()

| foul_called | p_hat | resid | |

|---|---|---|---|

| play_id | |||

| 20151028INDTOR-1 | 1.0 | 0.403732 | 0.596268 |

| 20151028INDTOR-2 | 0.0 | 0.403732 | -0.403732 |

| 20151028INDTOR-3 | 1.0 | 0.403732 | 0.596268 |

| 20151028INDTOR-4 | 0.0 | 0.403732 | -0.403732 |

| 20151028INDTOR-6 | 0.0 | 0.403732 | -0.403732 |

The per-season residuals are quite small, which is to be expected.

(resid_df.pivot_table('resid', 'season')

.rename(index=season_enc.inverse_transform))

| resid | |

|---|---|

| season | |

| 2015-2016 | -0.000019 |

| 2016-2017 | 0.000026 |
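One way to see why small per-season residuals are expected: the base model has one free parameter per season, so its fitted rate for a season sits close to that season's observed foul call rate, and the average residual within the season is the gap between the two. The sketch below uses made-up data and a hypothetical helper, `mean_residual_by_group`, to illustrate this.

```python
from collections import defaultdict


def mean_residual_by_group(groups, outcomes, p_hat):
    """Average of (outcome - predicted rate) within each group."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for group, outcome in zip(groups, outcomes):
        totals[group] += outcome - p_hat[group]
        counts[group] += 1
    return {group: totals[group] / counts[group] for group in totals}


# Made-up data, for illustration only
seasons = [0, 0, 0, 0, 1, 1, 1, 1]
foul_called = [1, 0, 1, 0, 0, 0, 1, 0]

# predicted rates equal to the observed per-season rates (0.5 and 0.25)
matched = mean_residual_by_group(seasons, foul_called, {0: 0.5, 1: 0.25})
# a predicted rate that misses the observed rate for season 0
biased = mean_residual_by_group(seasons, foul_called, {0: 0.4, 1: 0.25})
```

When the predicted rate matches the observed rate, the residuals within the group cancel. Any remaining average residual equals the difference between the observed and predicted rates.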

Intentional fouls¶


Anyone who has watched a close basketball game will realize that we have neglected an important factor in late game foul calls — intentional fouls. Near the end of the game, intentional fouls are used by the losing team when they are on defense to end the leading team's possession as quickly as possible.

The influence of intentional fouls is visible in the plot below: the residuals increase rapidly as the number of seconds left in the game decreases.

def make_time_axes(ax,
                   xlabel="Seconds remaining in game",
                   ylabel="Observed foul call rate"):
    ax.invert_xaxis()
    ax.set_xlabel(xlabel)

    return make_foul_rate_yaxis(ax, label=ylabel)

make_time_axes(

resid_df.pivot_table('resid', 'seconds_left')

.reset_index()

.plot('seconds_left', 'resid', kind='scatter'),

ylabel="Residual"

);

Build a model of the science, take two¶

df['trailing_committing'] = (df.score_committing

.lt(df.score_disadvantaged)

.mul(1.)

.astype(np.int64))

The following plot illustrates the fact that only the trailing team has any incentive to commit intentional fouls.

make_time_axes(

df.pivot_table('foul_called', 'seconds_left', 'trailing_committing')

.rolling(20).mean()

.rename(columns={0: "No", 1: "Yes"})

.rename_axis("Committing team is trailing", axis=1)

.plot()

);

Intentional fouls are only useful when the trailing (and committing) team is on defense. The plot below reflects this fact: shooting and personal fouls, which are almost always called against the defensive player, are called at a much higher rate than offensive fouls.

ax = (df.pivot_table('foul_called', 'call_type')

.rename(index=call_type_enc.inverse_transform)

.rename_axis("Call type", axis=0)

.plot(kind='barh', legend=False))

ax.xaxis.set_major_formatter(pct_formatter);

ax.set_xlabel("Observed foul call rate");

We continue to model the difference in foul call rates between seasons.

with pm.Model() as poss_model:
    β_season = pm.Normal('β_season', 0., 5., shape=2)

Throughout this talk, we will use hierarchical distributions to model the variation of foul call rates across different categories (in this instance, call types). For much more information on hierarchical models, consult Data Analysis Using Regression and Multilevel/Hierarchical Models.

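$$
\begin{align*}
\sigma_{\textrm{call}} & \sim \textrm{HalfNormal}(5) \\
\beta^{\textrm{call}}_c & \sim \textrm{Hierarchical-Normal}(0, \sigma^2_{\textrm{call}})
\end{align*}
$$

For sampling efficiency, we use an offset parametrization of the hierarchical normal distribution.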

def hierarchical_normal(name, shape, σ_shape=1):
    Δ = pm.Normal(
        f'Δ_{name}', 0., 1., shape=shape
    )
    σ = pm.HalfNormal(f'σ_{name}', 5., shape=σ_shape)

    return pm.Deterministic(name, Δ * σ)
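The offset parametrization relies on the fact that scaling a standard normal draw by σ gives the same distribution as drawing directly from a normal with standard deviation σ. The sketch below checks this by simulation with the standard library. The fixed value of `sigma` and the sample size are assumptions chosen for illustration; in the model, σ is itself a random variable.

```python
import random
import statistics

rng = random.Random(207183)
sigma = 2.5      # hypothetical scale, held fixed for this illustration
n_draws = 20000  # arbitrary sample size

# centered form: draw directly from Normal(0, sigma)
centered = [rng.gauss(0., sigma) for _ in range(n_draws)]
# offset form: draw a standard normal offset, then rescale by sigma
offset = [sigma * rng.gauss(0., 1.) for _ in range(n_draws)]

centered_sd = statistics.pstdev(centered)
offset_sd = statistics.pstdev(offset)
offset_mean = statistics.fmean(offset)
```

Both samples have a spread close to `sigma`. The benefit of the offset form is that the sampler works with the standard normal offsets and the scale as separate quantities.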

- Each call type has a different foul call rate

with poss_model:
    β_call = hierarchical_normal('β_call', n_call_type)

We add score difference and the number of possessions by which the committing team is trailing to the DataFrame. We assume that at most three points can be scored in a single possession (while this is not quite correct, four-point plays are rare enough that we do not account for them in our analysis).

df['score_diff'] = (df.score_disadvantaged

.sub(df.score_committing))

df['trailing_poss'] = (df.score_diff

.div(3)

.apply(np.ceil))

trailing_poss_enc = LabelEncoder().fit(df.trailing_poss)

trailing_poss = shared(

trailing_poss_enc.transform(df.trailing_poss)

)

n_trailing_poss = trailing_poss_enc.classes_.size
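As a quick illustration of the quantization above, the following sketch applies the same divide-by-three-and-round-up rule to a few score lines. The function `trailing_possessions` is a hypothetical stand-alone version of the DataFrame computation, written with `math.ceil` in place of `np.ceil`.

```python
import math


def trailing_possessions(score_committing, score_disadvantaged,
                         points_per_possession=3):
    """Possessions by which the committing team trails (negative if leading)."""
    score_diff = score_disadvantaged - score_committing
    # rounding up means a deficit of 1-3 points counts as one possession
    return math.ceil(score_diff / points_per_possession)


examples = {
    "down 1": trailing_possessions(100, 101),
    "down 3": trailing_possessions(100, 103),
    "down 4": trailing_possessions(100, 104),
    "tied": trailing_possessions(100, 100),
    "up 2": trailing_possessions(102, 100),
    "up 3": trailing_possessions(103, 100),
}
```

Because the ceiling rounds toward positive infinity, the bins are not symmetric around zero: a lead of one or two points lands in the same bin as a tie, and a lead of three points is the first to map to -1.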

The plot below shows that the foul call rate (over time) varies based on the score difference (quantized into possessions) between the disadvantaged team and the committing team.

make_time_axes(

df.pivot_table('foul_called', 'seconds_left', 'trailing_poss')

.loc[:, 1:3]

.rolling(20).mean()

.rename_axis(

"Trailing possessions\n(committing team)",

axis=1

)

.plot()

);

The plot below reflects the fact that intentional fouls are disproportionately personal fouls; the rate at which personal fouls are called increases drastically as the game nears its end.

make_time_axes(

df.pivot_table('foul_called', 'seconds_left', 'call_type')

.rolling(20).mean()

.rename(columns=call_type_enc.inverse_transform)

.rename_axis(None, axis=1)

.plot()

);

The shot clock¶

Due to the NBA's shot clock, the natural timescale of a basketball game is possessions, not seconds, remaining.

df['remaining_poss'] = (df.seconds_left

.floordiv(25)

.add(1))

remaining_poss_enc = LabelEncoder().fit(df.remaining_poss)

remaining_poss = shared(

remaining_poss_enc.transform(df.remaining_poss)

)

n_remaining_poss = remaining_poss_enc.classes_.size
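The conversion from seconds to remaining possessions can be checked by hand with a small sketch. The function `remaining_possessions` is a hypothetical stand-alone version of the computation above, using the same 25-second divisor as the notebook.

```python
def remaining_possessions(seconds_left, seconds_per_possession=25):
    """Number of possessions that could still begin, counting the current one."""
    return seconds_left // seconds_per_possession + 1


examples = {
    seconds: remaining_possessions(seconds)
    for seconds in (0, 24, 25, 89, 120)
}
```

Under this rule, anything from 0 to 24 seconds counts as one remaining possession, and the full two minutes corresponds to five.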

Below we plot the foul call rate across trailing possession/remaining possession pairs. Note that we always calculate trailing possessions (trailing_poss) from the perspective of the committing team. For instance, trailing_poss = 1 indicates that the committing team is trailing by 1-3 points, whereas negative values indicate that the committing team is leading. Because np.ceil rounds toward positive infinity, the negative bins are shifted: trailing_poss = 0 covers a tie or a lead of 1-2 points, and trailing_poss = -1 covers a lead of 3-5 points.

ax = sns.heatmap(

df.pivot_table('foul_called', 'trailing_poss', 'remaining_poss')

.rename_axis(

"Trailing possessions\n(committing team)", axis=0

)

.rename_axis("Remaining possessions", axis=1),

cmap='seismic', cbar_kws={'format': pct_formatter}

)

ax.invert_yaxis();

ax.set_title("Observed foul call rate");

The heatmap above shows that the foul call rate increases significantly when the committing team is trailing by more than the number of possessions remaining in the game. That is, teams resort to intentional fouls only when the opposing team can run out the clock and guarantee a win. (Since we have quantized the score difference and time into possessions, this conclusion is not entirely correct; it is, however, correct enough for our purposes.)

def plot_foul_diff_heatmap(*_, data=None, **kwargs):
    ax = plt.gca()
    sns.heatmap(
        data.pivot_table(
            'diff',
            'trailing_poss',
            'remaining_poss'
        ),
        cmap='seismic', robust=True,
        cbar_kws={'format': pct_formatter}
    )
    ax.invert_yaxis()
    ax.set_title("Observed foul call rate")

call_name_df = df.assign(

call_type=lambda df: call_type_enc.inverse_transform(

df.call_type.values

)

)

diff_df = (pd.merge(

call_name_df,

call_name_df.groupby('call_type')

.foul_called.mean()

.rename('avg_foul_called')

.reset_index()

)

.assign(diff=lambda df: df.foul_called - df.avg_foul_called))

Difference from call type average¶

The heatmaps below are broken out by call type, and show the difference between the foul call rate for each trailing/remaining possession combination and the overall foul call rate for the call type in question.

(sns.FacetGrid(diff_df, col='call_type', col_wrap=3, aspect=1.5)

.map_dataframe(plot_foul_diff_heatmap)

.set_axis_labels(

"Remaining possessions",

"Trailing possessions\n(committing team)"

)

.set_titles("{col_name}"));

These plots confirm that most intentional fouls are personal fouls. They also show that the three-way interaction between trailing possessions, remaining possessions, and call type is important for modeling foul call rates.

- The foul call rate changes based on the number of possessions trailing and remaining and the call type

with poss_model:
    β_poss = hierarchical_normal(
        'β_poss',
        (n_trailing_poss, n_remaining_poss, n_call_type),
        σ_shape=(1, 1, n_call_type)
    )

- The foul call rate is a combination of season, call type, and possession factors

call_type = shared(df.call_type.values)

with poss_model:
    η_game = β_season[season] \
             + β_call[call_type] \
             + β_poss[
                 trailing_poss, remaining_poss, call_type
             ]

with poss_model:
    p = pm.Deterministic('p', pm.math.sigmoid(η_game))
    y = pm.Bernoulli('y', p, observed=df.foul_called)
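To illustrate how the three factors combine for a single play, the sketch below looks up one coefficient per factor, adds them, and passes the sum through the logistic function. All coefficient values are made up for illustration, and the integer ids play the role of the label-encoded features. This is plain Python rather than the Theano indexing used in the model.

```python
import math


def sigmoid(x):
    """Logistic function mapping a real number to a probability."""
    return 1. / (1. + math.exp(-x))


# Made-up coefficients, for illustration only
beta_season = [-0.4, -0.8]            # indexed by season id
beta_call = [0.1, -0.3]               # indexed by call type id
beta_poss = [                         # indexed [trailing][remaining][call]
    [[0.0, 0.0], [0.2, -0.1]],
    [[1.5, 0.3], [0.4, 0.0]],
]


def foul_probability(season, call, trailing, remaining):
    """Foul call probability for one play from its encoded features."""
    eta_game = (beta_season[season]
                + beta_call[call]
                + beta_poss[trailing][remaining][call])
    return sigmoid(eta_game)


baseline = foul_probability(season=0, call=0, trailing=0, remaining=0)
late_and_trailing = foul_probability(season=0, call=0, trailing=1, remaining=0)
```

With these made-up numbers, the large possession coefficient for the trailing, late-game cell raises the probability well above the baseline, which is the pattern the possession factor is meant to capture.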

Infer the model given data, take two¶

with poss_model:
    poss_trace = pm.sample(**SAMPLE_KWARGS)

Auto-assigning NUTS sampler... Initializing NUTS using jitter+adapt_diag... 100%|██████████| 1500/1500 [06:34<00:00, 3.80it/s]

The BFMI and Gelman-Rubin statistics for this model indicate no problems with HMC sampling and good convergence.

bfmi = pm.bfmi(poss_trace)

max_gr = max(np.max(gr_stats) for gr_stats in pm.gelman_rubin(poss_trace).values())

(pm.energyplot(poss_trace, legend=False, figsize=(6, 4))

.set_title(CONVERGENCE_TITLE()));

Criticize the model given data, take two¶

resid_df = (df.assign(p_hat=poss_trace['p'].mean(axis=0))

.assign(resid=lambda df: df.foul_called - df.p_hat))

The following plots show that, grouped various ways, the residuals for this model are relatively well-distributed.

ax = sns.heatmap(

resid_df.pivot_table('resid', 'trailing_poss', 'remaining_poss')

.rename_axis("Trailing possessions\n(committing team)", axis=0)

.rename_axis("Remaining possessions", axis=1)

.loc[-3:3],

cmap='seismic', cbar_kws={'format': pct_formatter}

)

ax.invert_yaxis();

ax.set_title("Observed foul call rate");

N_BIN = 20

bin_ix, bins = pd.qcut(

resid_df.p_hat, N_BIN,

labels=np.arange(N_BIN),

retbins=True

)

ax = (resid_df.groupby(bins[bin_ix])

.resid.mean()

.rename_axis('p_hat', axis=0)

.reset_index()

.plot('p_hat', 'resid', kind='scatter'))

ax.xaxis.set_major_formatter(pct_formatter);

ax.set_xlabel(r"Binned $\hat{p}$");

make_foul_rate_yaxis(ax, label="Residual");

ax = (resid_df.groupby('seconds_left')

.resid.mean()

.reset_index()

.plot('seconds_left', 'resid', kind='scatter'))

make_time_axes(ax, ylabel="Residual");

Model selection¶

Now that we have two models, we can engage in model selection. We use the widely applicable information criterion (WAIC) for model selection.

MODEL_NAME_MAP = {

0: "Base",

1: "Possession"

}

comp_df = (pm.compare(

(base_trace, poss_trace),

(base_model, poss_model)

)

.rename(index=MODEL_NAME_MAP)

.loc[MODEL_NAME_MAP.values()])

Since smaller WAICs are better, the possession model clearly outperforms the base model.

comp_df

| WAIC | pWAIC | dWAIC | weight | SE | dSE | warning | |

|---|---|---|---|---|---|---|---|

| Base | 11609.8 | 1.98 | 1543.34 | 0 | 56.98 | 73.35 | 1 |

| Possession | 10066.5 | 81.92 | 0 | 1 | 87.93 | 0 | 1 |
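For readers curious about what the WAIC and pWAIC columns measure, here is a minimal sketch of the standard computation from a matrix of pointwise log-likelihoods. It is an illustration under stated assumptions, not PyMC3's implementation: the function name `waic` and the input layout are hypothetical, and library implementations may differ in details such as the variance estimator.

```python
import math
import statistics


def waic(log_lik):
    """Return (WAIC, p_WAIC) on the deviance scale.

    log_lik[s][i] is the log-likelihood of observation i under posterior
    draw s (at least two draws are required).
    """
    n_draws = len(log_lik)
    n_obs = len(log_lik[0])
    lppd = 0.
    p_waic = 0.
    for i in range(n_obs):
        column = [log_lik[s][i] for s in range(n_draws)]
        # log of the posterior-mean likelihood, computed stably
        largest = max(column)
        lppd += largest + math.log(
            sum(math.exp(ll - largest) for ll in column) / n_draws
        )
        # penalty: posterior variance of the pointwise log-likelihood
        p_waic += statistics.variance(column)
    return -2. * (lppd - p_waic), p_waic


# Made-up log-likelihoods that do not vary across draws
constant = [[math.log(0.5), math.log(0.25)] for _ in range(3)]
constant_waic, constant_penalty = waic(constant)
```

When the log-likelihood does not vary across posterior draws, the penalty term is zero and the WAIC reduces to minus twice the total log-likelihood. More flexible models accumulate a larger penalty, which is why pWAIC grows from the base model to the possession model.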

fig, ax = plt.subplots()

ax.errorbar(

np.arange(len(MODEL_NAME_MAP)), comp_df.WAIC,

yerr=comp_df.SE, fmt='o'

);

ax.set_xticks(np.arange(len(MODEL_NAME_MAP)));

ax.set_xticklabels(comp_df.index);

ax.set_xlabel("Model");

ax.set_ylabel("WAIC");

Research questions¶

- How does game context impact foul calls?

- Is (not) committing and/or drawing fouls a measurable player skill?

Build a model of the science, take three¶

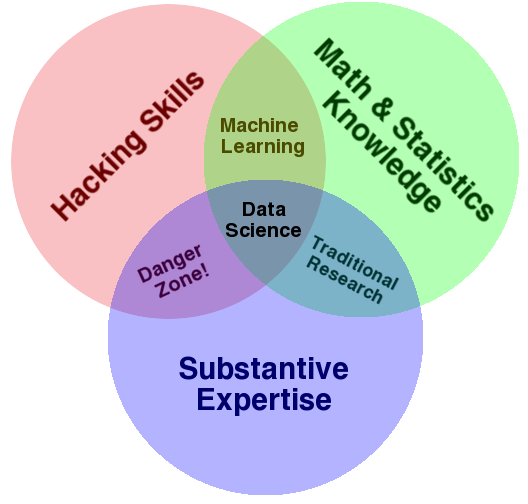

We now turn to the question of whether or not committing and/or drawing fouls is a measurable skill. We use an item-response theory (IRT) model to study this question.

Unfortunately there is not enough time in this talk to do Bayesian item-response theory justice. For the curious:

- Practical Issues in Implementing and Understanding Bayesian Ideal Point Estimation is an excellent introduction to applied Bayesian IRT models and has inspired much of this work.

- Bayesian Item Response Modeling — Theory and Applications is a comprehensive mathematical overview of Bayesian IRT modeling.

Item-response theory¶

fig, ax = plt.subplots()

ax.set_aspect('equal');

x = y = np.linspace(-3, 3, 100)

C = sp.special.expit(

np.subtract.outer(x, y)

)

poly = ax.pcolor(x, y, C, cmap='bwr')

ax.text(

-4., -3.5, "Liberal",

fontdict={'size': LABELSIZE}

);

ax.text(

3.1, -3.5, "Conservative",

fontdict={'size': LABELSIZE}

);

ax.text(

-4.85, 3.1, "Conservative",

fontdict={'size': LABELSIZE}

);

cbar = fig.colorbar(poly, ax=ax)

cbar.ax.yaxis.set_ticks(np.linspace(0, 1, 5));

cbar.ax.yaxis.set_major_formatter(pct_formatter);

ax.set_ylabel("Case ideal point");

ax.set_xlabel("Justice ideal point");

ax.set_title("Probability justice issues\nconservative opinion on case");

fig

with pm.Model() as irt_model:
    β_season = pm.Normal('β_season', 0., 5., shape=n_season)
    β_call = hierarchical_normal('β_call', n_call_type)
    β_poss = hierarchical_normal(
        'β_poss',
        (n_trailing_poss, n_remaining_poss, n_call_type),
        σ_shape=(1, 1, n_call_type)
    )

    η_game = β_season[season] \
             + β_call[call_type] \
             + β_poss[trailing_poss, remaining_poss, call_type]

player_committing = shared(df.player_committing.values)

player_disadvantaged = shared(df.player_disadvantaged.values)

n_player = player_enc.classes_.size

- Each disadvantaged player has an ideal point (per season)

with irt_model:
    θ_player = hierarchical_normal(
        'θ_player', (n_player, n_season)
    )
    θ = θ_player[player_disadvantaged, season]

- Each committing player has an ideal point (per season)

with irt_model:
    b_player = hierarchical_normal(
        'b_player', (n_player, n_season)
    )
    b = b_player[player_committing, season]

- Players affect the foul call rate through the difference in their ideal points

with irt_model:
    η_player = θ - b

- Game and player effects combine to determine the foul call rate

with irt_model:
    η = η_game + η_player

with irt_model:
    p = pm.Deterministic('p', pm.math.sigmoid(η))
    y = pm.Bernoulli(
        'y', p,
        observed=df.foul_called
    )
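The role of the two ideal points can be seen in a small numerical sketch. The function `foul_call_probability` is a hypothetical helper that mirrors η = η_game + (θ − b) for a single play, and the input values are made up for illustration.

```python
import math


def foul_call_probability(eta_game, theta, b):
    """Probability of a foul call given game context and player ideal points.

    theta: ideal point of the disadvantaged player
    b: ideal point of the committing player
    """
    eta = eta_game + (theta - b)
    return 1. / (1. + math.exp(-eta))


# Made-up values, for illustration only
evenly_matched = foul_call_probability(eta_game=0., theta=0.3, b=0.3)
skilled_drawer = foul_call_probability(eta_game=0., theta=0.8, b=0.3)
skilled_avoider = foul_call_probability(eta_game=0., theta=0.3, b=0.8)
```

Only the difference between the two ideal points matters: when they are equal, the player term vanishes and the probability is set by the game context alone. A larger θ raises the probability and a larger b lowers it.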

Infer the model given data, take three¶

with irt_model:
    irt_trace = pm.sample(**SAMPLE_KWARGS)

Auto-assigning NUTS sampler... Initializing NUTS using jitter+adapt_diag... 100%|██████████| 1500/1500 [08:45<00:00, 2.85it/s]

bfmi = pm.bfmi(irt_trace)

max_gr = max(np.max(gr_stats) for gr_stats in pm.gelman_rubin(irt_trace).values())

(pm.energyplot(irt_trace, legend=False, figsize=(6, 4))

.set_title(CONVERGENCE_TITLE()));

Criticize the model given data, take three¶

resid_df = (df.assign(p_hat=irt_trace['p'].mean(axis=0))

.assign(resid=lambda df: df.foul_called - df.p_hat))

The binned residuals for this model are more asymmetric than for the previous models, but still not too bad.

N_BIN = 50

bin_ix, bins = pd.qcut(

resid_df.p_hat, N_BIN,

labels=np.arange(N_BIN),

retbins=True

)

ax = (resid_df.groupby(bins[bin_ix])

.resid.mean()

.rename_axis('p_hat', axis=0)

.reset_index()

.plot('p_hat', 'resid', kind='scatter'))

ax.xaxis.set_major_formatter(pct_formatter);

ax.set_xlabel(r"Binned $\hat{p}$");

make_foul_rate_yaxis(ax, label="Residual");

ax = (resid_df.groupby('seconds_left')

.resid.mean()

.reset_index()

.plot('seconds_left', 'resid', kind='scatter'))

make_time_axes(ax, ylabel="Residual");

Model selection¶

The IRT model represents a marginal improvement over the possession model in terms of WAIC.

MODEL_NAME_MAP[2] = "IRT"

comp_df = (pm.compare(

(base_trace, poss_trace, irt_trace),

(base_model, poss_model, irt_model)

)

.rename(index=MODEL_NAME_MAP)

.loc[MODEL_NAME_MAP.values()])

comp_df

| | WAIC | pWAIC | dWAIC | weight | SE | dSE | warning |
|---|---|---|---|---|---|---|---|
| Base | 11609.8 | 1.98 | 1567.2 | 0 | 56.98 | 73.91 | 1 |
| Possession | 10066.5 | 81.92 | 23.86 | 0.1 | 87.93 | 10.98 | 1 |
| IRT | 10042.6 | 215.56 | 0 | 0.9 | 88.4 | 0 | 1 |

fig, ax = plt.subplots()

ax.errorbar(

np.arange(len(MODEL_NAME_MAP)), comp_df.WAIC,

yerr=comp_df.SE, fmt='o'

);

ax.set_xticks(np.arange(len(MODEL_NAME_MAP)));

ax.set_xticklabels(comp_df.index);

ax.set_xlabel("Model");

ax.set_ylabel("WAIC");
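One way to make "marginal" concrete is to express each WAIC gap in units of its standard error. The arithmetic below uses the dWAIC and dSE values from the comparison table and assumes, as I understand PyMC3's `compare` output, that dSE is the standard error of the WAIC difference relative to the top-ranked model. The variable names are hypothetical.

```python
# values copied from the comparison table above
d_waic_possession = 23.86  # WAIC gap between Possession and IRT
d_se_possession = 10.98    # standard error of that gap

d_waic_base = 1567.2       # WAIC gap between Base and IRT
d_se_base = 73.91          # standard error of that gap

z_possession = d_waic_possession / d_se_possession
z_base = d_waic_base / d_se_base

print(f"Possession vs. IRT: {z_possession:.1f} standard errors")
print(f"Base vs. IRT: {z_base:.1f} standard errors")
```

The gap between the possession and IRT models is only about two standard errors, while the base model trails by more than twenty.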

Is committing and/or drawing fouls a measurable player skill?

def varname_to_param(varname):

return varname[0]

def varname_to_player(varname):

return int(varname[3:-2])

def varname_to_season(varname):

return int(varname[-1])
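The slicing helpers above rely on a fixed name layout and on a single-digit season index. As a hedged alternative, the sketch below parses the same information with a regular expression; it assumes the flattened column names look like `θ__462_1` once `_player` has been stripped, and `parse_varname` is a hypothetical helper, not part of the notebook.

```python
import re

# assumed layout after '_player' is stripped: '<param>__<player>_<season>'
VARNAME_PATTERN = re.compile(
    r"^(?P<param>.+?)__(?P<player>\d+)_(?P<season>\d+)$"
)


def parse_varname(varname):
    """Split a flattened trace column name into (param, player, season)."""
    match = VARNAME_PATTERN.match(varname)

    if match is None:
        raise ValueError(f"unexpected variable name: {varname!r}")

    return (
        match.group('param'),
        int(match.group('player')),
        int(match.group('season'))
    )
```

Unlike positional slicing, this version keeps working if the season index ever needs more than one digit, and it fails loudly on names it does not recognize.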

irt_df = (pm.trace_to_dataframe(

irt_trace, varnames=['θ_player', 'b_player']

)

.rename(columns=lambda col: col.replace('_player', ''))

.T

.apply(

lambda s: pd.Series.describe(

s, percentiles=[0.055, 0.945]

),

axis=1

)

[['mean', '5.5%', '94.5%']]

.rename(columns={

'5.5%': 'low',

'94.5%': 'high'

})

.rename_axis('varname')

.reset_index()

.assign(

param=lambda df: df.varname.apply(varname_to_param),

player=lambda df: df.varname.apply(varname_to_player),

season=lambda df: df.varname.apply(varname_to_season)

)

.drop('varname', axis=1))

irt_df.head()

| | mean | low | high | param | player | season |
|---|---|---|---|---|---|---|
| 0 | -0.015132 | -0.312961 | 0.276028 | θ | 0 | 0 |
| 1 | -0.003946 | -0.284629 | 0.283364 | θ | 0 | 1 |
| 2 | 0.010513 | -0.277971 | 0.290268 | θ | 1 | 0 |
| 3 | 0.037012 | -0.239934 | 0.337692 | θ | 1 | 1 |
| 4 | -0.034668 | -0.311093 | 0.229465 | θ | 2 | 0 |
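The `low` and `high` columns come from the 5.5th and 94.5th percentiles, which together bracket the central 89% of the posterior draws. A small sketch of the same `describe` call on synthetic draws (a stand-in for one latent parameter, not taken from the trace) shows where the `'5.5%'` and `'94.5%'` labels come from.

```python
import numpy as np
import pandas as pd

rng = np.random.RandomState(0)

# stand-in for the posterior draws of a single latent parameter
draws = pd.Series(rng.normal(loc=0., scale=0.2, size=5000))

# describe() labels each requested percentile as a percentage string
summary = draws.describe(percentiles=[0.055, 0.945])
interval = summary[['mean', '5.5%', '94.5%']]
```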

player_irt_df = irt_df.pivot_table(

index='player',

columns=['param', 'season'],

values='mean'

)

player_irt_df.head()

| player | b (season 0) | b (season 1) | θ (season 0) | θ (season 1) |
|---|---|---|---|---|
| 0 | -0.064689 | 0.006621 | -0.015132 | -0.003946 |
| 1 | -0.006142 | 0.004387 | 0.010513 | 0.037012 |
| 2 | 0.090107 | 0.092868 | -0.034668 | -0.003519 |
| 3 | -0.022949 | -0.002456 | 0.005822 | -0.003961 |
| 4 | -0.006999 | 0.288897 | 0.068338 | 0.007508 |

The following plot shows that the committing skill appears to be somewhat larger than the disadvantaged skill. This difference seems reasonable because most fouls are committed by the player on defense; committing skill is quite likely to be correlated with defensive ability.

def plot_latent_params(df):

fig, ax = plt.subplots()

n, _ = df.shape

y = np.arange(n)

ax.errorbar(

df['mean'], y,

xerr=(df[['low', 'high']]

.sub(df['mean'], axis=0)

.abs()

.values.T),

fmt='o'

)

ax.set_yticks(y)

ax.set_yticklabels(

player_enc.inverse_transform(df.player)

)

ax.set_ylabel("Player")

return fig, ax
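The `xerr` argument in `plot_latent_params` encodes asymmetric error bars. The sketch below isolates that computation on three hypothetical posterior summaries; note that matplotlib reads a `(2, N)` array as lower errors in the first row and upper errors in the second, so the column order matters.

```python
import numpy as np
import pandas as pd

# hypothetical posterior summaries for three players
toy_df = pd.DataFrame({
    'mean': [0.10, -0.05, 0.30],
    'low': [-0.20, -0.30, -0.05],
    'high': [0.35, 0.25, 0.70]
})

# distance from the mean down to `low` and up to `high`;
# row 0 holds the lower errors, row 1 the upper errors
xerr = (toy_df[['low', 'high']]
              .sub(toy_df['mean'], axis=0)
              .abs()
              .values.T)
```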

fig, axes = plt.subplots(

ncols=2, nrows=2, sharex=True,

figsize=(16, 8)

)

(θ0_ax, θ1_ax), (b0_ax, b1_ax) = axes

bins = np.linspace(

0.9 * irt_df['mean'].min(),

1.1 * irt_df['mean'].max(),

75

)

θ0_ax.hist(

player_irt_df['θ', 0],

bins=bins, normed=True

);

θ1_ax.hist(

player_irt_df['θ', 1],

bins=bins, normed=True

);

θ0_ax.set_yticks([]);

θ0_ax.set_title(

r"$\hat{\theta}$ (" + season_enc.inverse_transform(0) + ")"

);

θ1_ax.set_yticks([]);

θ1_ax.set_title(

r"$\hat{\theta}$ (" + season_enc.inverse_transform(1) + ")"

);

b0_ax.hist(

player_irt_df['b', 0],

bins=bins, normed=True, color=green

);

b1_ax.hist(

player_irt_df['b', 1],

bins=bins, normed=True, color=green

);

b0_ax.set_xlabel(

r"$\hat{b}$ (" + season_enc.inverse_transform(0) + ")"

);

b0_ax.invert_yaxis();

b0_ax.xaxis.tick_top();

b0_ax.set_yticks([]);

b1_ax.set_xlabel(

r"$\hat{b}$ (" + season_enc.inverse_transform(1) + ")"

);

b1_ax.invert_yaxis();

b1_ax.xaxis.tick_top();

b1_ax.set_yticks([]);

fig.suptitle("Disadvantaged skill", size=18);

fig.text(0.45, 0.02, "Committing skill", size=18)

fig.tight_layout();

The latent ability parameters tend to lie in the interval [−0.2,0.2], so these skills are small, if they exist.

fig
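To get a feel for how small a latent parameter of ±0.2 is, the sketch below shifts the log-odds of a baseline foul call probability by the edges of that interval. The 25% baseline is a hypothetical value chosen only for illustration, and `sigmoid` and `logit` are defined locally rather than taken from PyMC3.

```python
import numpy as np


def sigmoid(x):
    return 1. / (1. + np.exp(-x))


def logit(p):
    return np.log(p / (1. - p))


# hypothetical baseline foul call probability, chosen only for illustration
BASELINE_P = 0.25

# shift the log-odds by the edges of the interval [-0.2, 0.2]
shifted_p = sigmoid(logit(BASELINE_P) + np.array([-0.2, 0., 0.2]))
```

Under this assumed baseline, a shift of 0.2 in log-odds moves the foul call probability by roughly three to four percentage points in either direction.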

top_bot_irt_df = (irt_df.groupby('param')

.apply(

lambda df: pd.concat((

df.nlargest(10, 'mean'),

df.nsmallest(10, 'mean')

),

axis=0, ignore_index=True

)

)

.reset_index(drop=True))

top_bot_irt_df.head()

| | mean | low | high | param | player | season |
|---|---|---|---|---|---|---|
| 0 | 0.347551 | -0.028239 | 0.758395 | b | 86 | 0 |
| 1 | 0.328016 | -0.028768 | 0.707518 | b | 23 | 0 |
| 2 | 0.288897 | -0.089046 | 0.677624 | b | 4 | 1 |
| 3 | 0.285537 | -0.084353 | 0.689011 | b | 78 | 0 |
| 4 | 0.279316 | -0.049866 | 0.634959 | b | 462 | 1 |

We now examine the top and bottom ten players in each ability, across both seasons.

The top players in terms of disadvantaged ability tend to be good scorers (Jimmy Butler, Ricky Rubio, John Wall, Andre Iguodala). The presence of DeAndre Jordan in the top ten seems to be due to the hack-a-Shaq phenomenon. In future work, it would be interesting to control for the disadvantaged player's free throw percentage in order to mitigate the influence of the hack-a-Shaq effect on the measurement of latent skill.

Interestingly, the bottom players (in terms of disadvantaged ability) include many stars (Pau Gasol, Carmelo Anthony, Kevin Durant, Kawhi Leonard). The presence of these stars in the bottom may somewhat counteract the pervasive narrative that referees favor stars in their foul calls.

fig, ax = plot_latent_params(

top_bot_irt_df[top_bot_irt_df.param == 'θ']

.sort_values('mean')

)

ax.set_xlabel(r"$\hat{\theta}$");

ax.set_title("Top and bottom ten");

The top ten players in terms of committing skill include many defensive standouts (Danny Green — twice, Gordon Hayward, Paul George).

The bottom ten players include many that are known to be defensively challenged (Ricky Rubio, James Harden, Chris Paul). Dwight Howard was, at one point, a fierce defender of the rim, but was well past his prime in 2015, when our data set begins.

fig, ax = plot_latent_params(

top_bot_irt_df[top_bot_irt_df.param == 'b']

.sort_values('mean')

)

ax.set_xlabel(r"$\hat{b}$");

ax.set_title("Top and bottom ten");

player_irt_df.head()

| player | b (season 0) | b (season 1) | θ (season 0) | θ (season 1) |
|---|---|---|---|---|
| 0 | -0.064689 | 0.006621 | -0.015132 | -0.003946 |
| 1 | -0.006142 | 0.004387 | 0.010513 | 0.037012 |
| 2 | 0.090107 | 0.092868 | -0.034668 | -0.003519 |
| 3 | -0.022949 | -0.002456 | 0.005822 | -0.003961 |
| 4 | -0.006999 | 0.288897 | 0.068338 | 0.007508 |

def p_val_to_asterisks(p_val):

if p_val < 0.0001:

return "****"

elif p_val < 0.001:

return "***"

elif p_val < 0.01:

return "**"

elif p_val < 0.05:

return "*"

else:

return ""

def plot_corr(x, y, **kwargs):

corrcoeff, p_val = sp.stats.pearsonr(x, y)

asterisks = p_val_to_asterisks(p_val)

artist = AnchoredText(

f'{corrcoeff:.2f}{asterisks}',

loc=10, frameon=False,

prop=dict(size=LABELSIZE)

)

plt.gca().add_artist(artist)

plt.grid(b=False)

PARAM_MAP = {

'θ': r"$\hat{\theta}$",

'b': r"$\hat{b}$"

}

def replace_label(label):

param, season = eval(label)

return "{param}\n({season})".format(

param=PARAM_MAP[param],

season=season_enc.inverse_transform(season)

)
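The axis labels handled by `replace_label` are the string form of a `(param, season)` column tuple. As a hedged aside, `ast.literal_eval` from the standard library parses such a string while accepting only Python literals, which makes it a safer stand-in for `eval` here. The label below is an assumed example of what the grid produces.

```python
import ast

# assumed example of an axis label: the string form of a (param, season) tuple
label = "('θ', 0)"

# literal_eval parses literals only; it will not execute arbitrary code
param, season = ast.literal_eval(label)
```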

def style_grid(grid):

for ax in grid.axes.flat:

ax.grid(False)

ax.set_xticklabels([]);

ax.set_yticklabels([]);

if ax.get_xlabel():

ax.set_xlabel(replace_label(ax.get_xlabel()))

if ax.get_ylabel():

ax.set_ylabel(replace_label(ax.get_ylabel()))

return grid

player_all_season = set(df.groupby('player_disadvantaged')

.filter(lambda df: df.season.nunique() == n_season)

.player_disadvantaged) \

& set(df.groupby('player_committing')

.filter(lambda df: df.season.nunique() == n_season)

.player_committing)
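The intent of `player_all_season` is to keep players who appear in every season both as the committing and as the disadvantaged player. The sketch below shows one way to express that on a toy call log; the players, the seasons, and the helper `players_in_all_seasons` are all hypothetical.

```python
import pandas as pd

# toy call log with hypothetical players and two seasons
toy_df = pd.DataFrame({
    'season': [0, 1, 0, 1, 0, 1],
    'player_committing': ['A', 'A', 'B', 'C', 'C', 'B'],
    'player_disadvantaged': ['B', 'C', 'A', 'A', 'B', 'D']
})
toy_n_season = toy_df.season.nunique()


def players_in_all_seasons(df, col, n_season):
    """Players whose rows in role `col` span every season."""
    seasons_per_player = df.groupby(col).season.nunique()

    return set(seasons_per_player[seasons_per_player == n_season].index)


# keep only players who qualify in both roles
toy_all_season = (
    players_in_all_seasons(toy_df, 'player_committing', toy_n_season)
    & players_in_all_seasons(toy_df, 'player_disadvantaged', toy_n_season)
)
```

In this toy log only player A is on the disadvantaged side in both seasons, so A is the only player in the intersection.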

Year-over-year consistency (players that appeared in all seasons)

In the sports analytics community, year-over-year correlation of latent parameters is the test of whether or not a skill exists. The following plots show a slight (but significant) year-over-year correlation in the committing skill, but not much correlation in the disadvantaged skill.

style_grid(sns.PairGrid(player_irt_df.loc[player_all_season], size=1.75)

.map_upper(plt.scatter, alpha=0.5)

.map_diag(plt.hist)

.map_lower(plot_corr));
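To illustrate why year-over-year correlation is used as a test for skill, the simulation below compares a persistent skill measured with noise in two seasons against pure noise. All of the numbers (player count, scales) are assumptions chosen for illustration and are unrelated to the fitted model.

```python
import numpy as np

rng = np.random.RandomState(0)
N_PLAYER = 500

# a persistent skill shared by both seasons, plus independent season noise
skill = rng.normal(scale=0.1, size=N_PLAYER)
season_0 = skill + rng.normal(scale=0.1, size=N_PLAYER)
season_1 = skill + rng.normal(scale=0.1, size=N_PLAYER)

# pure noise: no persistent skill at all
noise_0 = rng.normal(scale=0.1, size=N_PLAYER)
noise_1 = rng.normal(scale=0.1, size=N_PLAYER)

skill_corr = np.corrcoef(season_0, season_1)[0, 1]
noise_corr = np.corrcoef(noise_0, noise_1)[0, 1]
```

With equal skill and noise variance, the expected correlation is one half; without a persistent skill it is zero. A real skill measured very noisily would therefore show up as a small but nonzero correlation.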

MIN = 10

player_has_min = set(df.groupby('player_disadvantaged')

.filter(lambda df: df.season.value_counts().gt(MIN).all())

.player_disadvantaged) \

& set(df.groupby('player_committing')

.filter(lambda df: df.season.value_counts().gt(MIN).all())

.player_committing)

Year-over-year consistency (players that appeared at least ten times in all seasons)

grid = style_grid(sns.PairGrid(player_irt_df.loc[player_has_min], size=1.75)

.map_upper(plt.scatter, alpha=0.5)

.map_diag(plt.hist)

.map_lower(plot_corr))

grid.fig

Based on this data set, disadvantaged skill is not measurable, and if committing skill is measurable, its effect is quite small.

Research questions

- How does game context impact foul calls?
- Is (not) committing and/or drawing fouls a measurable player skill?

Visualize the Predictions

This section could be better documented, but I ran out of time.

with open('./irt.pickle', mode='wb') as dest:

pickle.dump(

{

'call_type_enc': call_type_enc,

'player_enc': player_enc,

'season_enc': season_enc,

'trailing_poss_enc': trailing_poss_enc,

'remaining_poss_enc': remaining_poss_enc,

'df': df,

'irt_trace': irt_trace

},

dest

)

!ls -lh ./irt.pickle

-rw-r--r-- 1 jovyan users 318M Nov 26 14:00 ./irt.pickle

import dash

import dash_html_components as html

import dash_core_components as dcc

import plotly.graph_objs as go

CALL_TYPE = 0

PLAYER_COMMITTING = 0

PLAYER_DISADVANTAGED = 1

SEASON = 1

def get_pp_data_df(call_type=CALL_TYPE,

player_committing=PLAYER_COMMITTING,

player_disadvantaged=PLAYER_DISADVANTAGED,

season=SEASON):

return (pd.DataFrame(

list(product(

np.arange(n_trailing_poss),

np.arange(n_remaining_poss)

)),

columns=[

'trailing_poss',

'remaining_poss'

]

)

.assign(

call_type=call_type,

player_committing=player_committing,

player_disadvantaged=player_disadvantaged,

season=season

))
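`get_pp_data_df` builds one row per combination of trailing and remaining possessions and then attaches the same scalar settings to every row. A toy version with hypothetical level counts makes the resulting shape easy to see.

```python
from itertools import product

import numpy as np
import pandas as pd

# hypothetical numbers of encoded levels
n_trailing_toy, n_remaining_toy = 2, 3

grid_df = (pd.DataFrame(
                list(product(
                    np.arange(n_trailing_toy),
                    np.arange(n_remaining_toy)
                )),
                columns=['trailing_poss', 'remaining_poss']
             )
             # scalar values are broadcast to every row of the grid
             .assign(call_type=0, season=1))
```

The grid has `2 * 3 = 6` rows, one for each cell of the heatmap drawn later.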

def get_irt_df(player_committing_=PLAYER_COMMITTING,

player_disadvantaged_=PLAYER_DISADVANTAGED,

season_=SEASON):

return pd.DataFrame({

'θ': irt_trace['θ_player'][:, player_disadvantaged_, season_],

'b': irt_trace['b_player'][:, player_committing_, season_]

})

def get_pp_df(call_type_=CALL_TYPE,

player_committing_=PLAYER_COMMITTING,

player_disadvantaged_=PLAYER_DISADVANTAGED,

season_=SEASON):

pp_data_df = get_pp_data_df(

call_type=call_type_,

player_committing=player_committing_,

player_disadvantaged=player_disadvantaged_,

season=season_

)

call_type.set_value(pp_data_df.call_type.values)

player_committing.set_value(pp_data_df.player_committing.values)

player_disadvantaged.set_value(pp_data_df.player_disadvantaged.values)

season.set_value(pp_data_df.season.values)

trailing_poss.set_value(pp_data_df.trailing_poss.values)

remaining_poss.set_value(pp_data_df.remaining_poss.values)

with irt_model:

pp_trace = pm.sample_ppc(irt_trace)

return pp_data_df.assign(

p_hat=pp_trace['y'].mean(axis=0)

)

def get_irt_scatter(irt_df):

return {

'data': [

go.Scatter(

x=irt_df.b,

y=irt_df.θ,

mode='markers',

marker={'opacity': 0.5},

hoverinfo='none'

)

],

'layout': go.Layout(

title="Latent parameters",

xaxis={'title': "b (committing player)"},

yaxis={'title': "θ (disadvantaged player)"}

)

}

def get_irt_hist(irt_df):

return {

'data': [

go.Histogram(

x=irt_df.θ - irt_df.b,

histnorm='probability',

hoverinfo='none'

)

],

'layout': go.Layout(

title="Latent parameter difference",

xaxis={'title': "η_player"}

)

}

def get_foul_figure(pp_df):

table = pp_df.pivot_table(

'p_hat',

'trailing_poss',

'remaining_poss'

)

return {

'data': [

go.Heatmap(

x=remaining_poss_enc.inverse_transform(

table.columns

),

y=trailing_poss_enc.inverse_transform(

table.index

),

z=table.values.tolist(),

zmin=0., zmax=0.75,

hoverinfo='z',

colorbar={'tickformat': '.1%'}

)

],

'layout': go.Layout(

title="Predicted foul call probability",

xaxis={'title': "Remaining possessions"},

yaxis={'title': "Trailing possessions"}

)

}

PLAYER_OPTIONS = [

{

'label': name,

'value': i

}

for i, name in enumerate(player_enc.classes_)

]

INPUT_TABLE = html.Table(

[

html.Tr([

html.Td(

[

html.Div("Season"),

dcc.Dropdown(

id='season-dropdown',

options=[

{

'label': season,

'value': i

}

for i, season in enumerate(season_enc.classes_)

],

value=0

)

],

style={'width': '50%'}

),

html.Td([

html.Div("Call type"),

dcc.Dropdown(

id='call-type-dropdown',

options=[

{

'label': call_type.replace("Foul: ", "", 1),

'value': i

}

for i, call_type in enumerate(call_type_enc.classes_)

],

value=0

)

])

]),

html.Tr([

html.Td([

html.Div("Disadvantaged player"),

dcc.Dropdown(

id='player-disadvantaged-dropdown',

options=PLAYER_OPTIONS,

value=1

)

]),

html.Td([

html.Div("Committing player"),

dcc.Dropdown(

id='player-committing-dropdown',

options=PLAYER_OPTIONS,

value=0

)

])

])

],

style={'width': '100%'}

)
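One thing to keep in mind when trimming a prefix such as "Foul: " from the call-type labels: `str.lstrip` treats its argument as a set of characters, not as a prefix. The sketch below shows the difference on illustrative labels (the lowercase one is hypothetical) and a small helper, `drop_prefix`, that is not part of the notebook.

```python
PREFIX = "Foul: "

# str.lstrip removes any leading characters found in the argument
stripped_ok = "Foul: Shooting".lstrip(PREFIX)     # 'S' is not in the set
stripped_bad = "Foul: loose ball".lstrip(PREFIX)  # 'l', 'o', 'o' are in the set


def drop_prefix(label, prefix=PREFIX):
    """Remove `prefix` only when `label` actually starts with it."""
    return label[len(prefix):] if label.startswith(prefix) else label
```

Labels that begin with a capital letter survive `lstrip` unchanged, which is why the problem is easy to miss.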

FOUL_HEATMAP = dcc.Graph(

id='foul-heatmap',

figure=get_foul_figure(

get_pp_df()

)

)

IRT_PARAM_SCATTER = dcc.Graph(

id='irt-param-scatter',

figure=get_irt_scatter(

get_irt_df()

)

)

IRT_DIFF_HIST = dcc.Graph(

id='irt-diff-hist',

figure=get_irt_hist(

get_irt_df()

)

)


TABLE = html.Table(

[

html.Tr([

html.Td(

[INPUT_TABLE],

style={'width': '50%'}

),

html.Td([FOUL_HEATMAP])

]),

html.Tr([

html.Td([IRT_PARAM_SCATTER]),

html.Td([IRT_DIFF_HIST])

])

],

style={'width': '75%'}

)

app = dash.Dash()

app.layout = html.Div(children=[

html.H1(

"Understanding NBA Foul Calls with Python"

),

html.Center(TABLE)

])

@app.callback(

dash.dependencies.Output(

'irt-param-scatter', 'figure'

),

[

dash.dependencies.Input(

'season-dropdown','value'

),

dash.dependencies.Input(

'player-committing-dropdown','value'

),

dash.dependencies.Input(

'player-disadvantaged-dropdown','value'

)

]

)

def update_irt_scatter(season_,

player_committing_,

player_disadvantaged_):

irt_df = get_irt_df(

player_committing_=player_committing_,

player_disadvantaged_=player_disadvantaged_,

season_=season_,

)

return get_irt_scatter(irt_df)

@app.callback(

dash.dependencies.Output(

'irt-diff-hist', 'figure'

),

[

dash.dependencies.Input(

'season-dropdown','value'

),

dash.dependencies.Input(

'player-committing-dropdown','value'

),

dash.dependencies.Input(

'player-disadvantaged-dropdown','value'

)

]

)

def update_irt_hist(season_,

player_committing_,

player_disadvantaged_):

irt_df = get_irt_df(

player_committing_=player_committing_,

player_disadvantaged_=player_disadvantaged_,

season_=season_,

)

return get_irt_hist(irt_df)

@app.callback(

dash.dependencies.Output('foul-heatmap', 'figure'),

[

dash.dependencies.Input(

'call-type-dropdown','value'

),

dash.dependencies.Input(

'season-dropdown','value'

),

dash.dependencies.Input(

'player-committing-dropdown','value'

),

dash.dependencies.Input(

'player-disadvantaged-dropdown','value'

)

]

)

def update_foul_pct_graph(call_type_,

season_,

player_committing_,

player_disadvantaged_):

pp_df = get_pp_df(

call_type_=call_type_,

player_committing_=player_committing_,

player_disadvantaged_=player_disadvantaged_,

season_=season_

)

return get_foul_figure(pp_df)

app.css.config.serve_locally = True

app.scripts.config.serve_locally = True

app.run_server(host='0.0.0.0')

* Running on http://0.0.0.0:8050/ (Press CTRL+C to quit)

The code in the following cell converts this notebook to an HTML slideshow powered by reveal.js.

%%bash

jupyter nbconvert \

--to=slides \

--reveal-prefix=https://cdnjs.cloudflare.com/ajax/libs/reveal.js/3.2.0/ \

--output=nba-fouls-pydata-nyc-2017 \

./PyData\ NYC\ 2017\ Understanding\ NBA\ Foul\ Calls\ with\ Python.ipynb

[NbConvertApp] Converting notebook ./PyData NYC 2017 Understanding NBA Foul Calls with Python.ipynb to slides [NbConvertApp] Writing 1245540 bytes to ./nba-fouls-pydata-nyc-2017.slides.html